IRIS: LLM-Assisted Static Analysis for Detecting Security Vulnerabilities

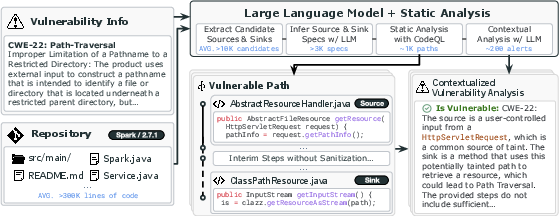

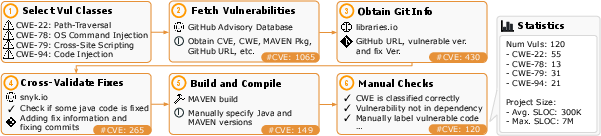

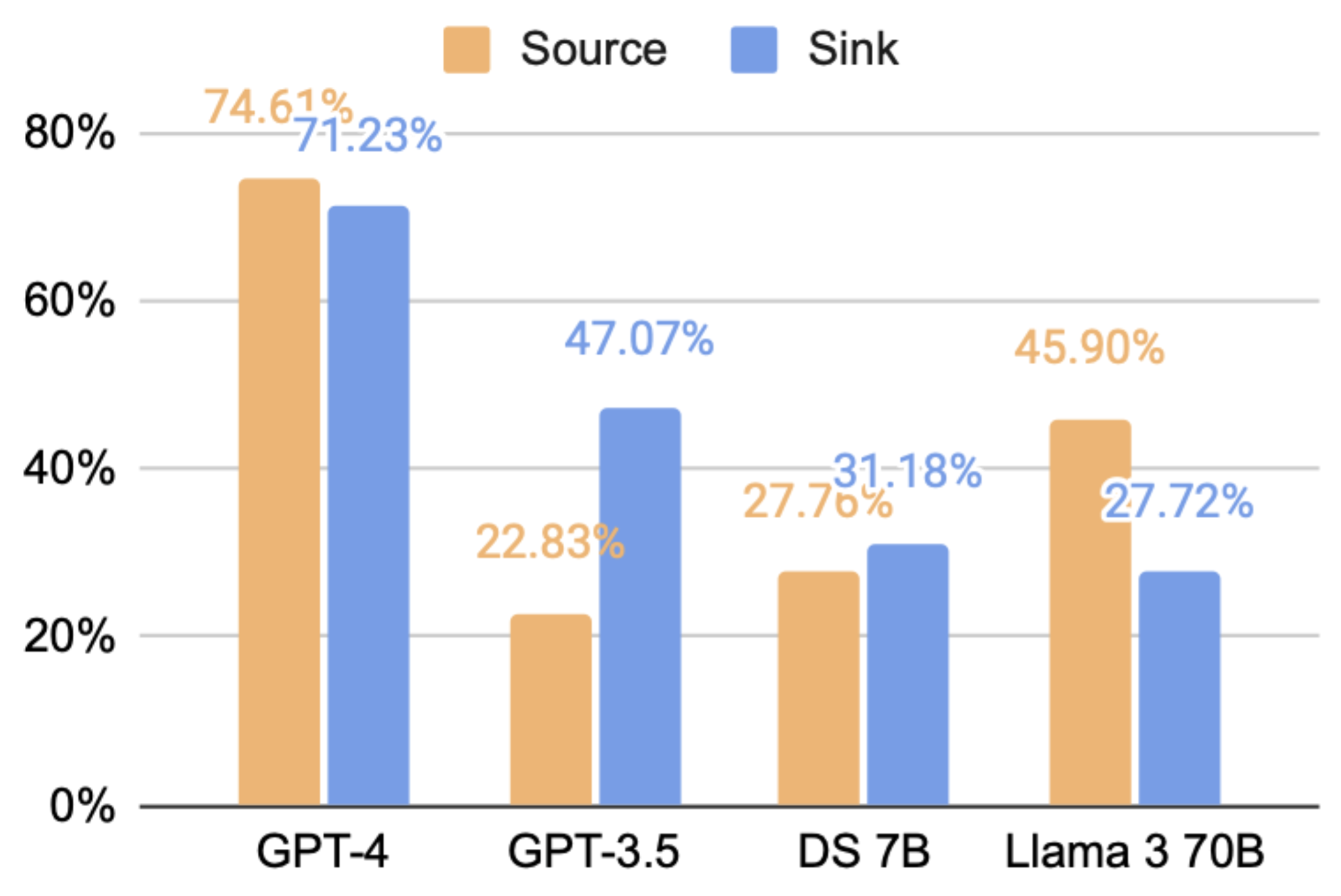

Abstract: Software is prone to security vulnerabilities. Program analysis tools to detect them have limited effectiveness in practice due to their reliance on human labeled specifications. LLMs (or LLMs) have shown impressive code generation capabilities but they cannot do complex reasoning over code to detect such vulnerabilities especially since this task requires whole-repository analysis. We propose IRIS, a neuro-symbolic approach that systematically combines LLMs with static analysis to perform whole-repository reasoning for security vulnerability detection. Specifically, IRIS leverages LLMs to infer taint specifications and perform contextual analysis, alleviating needs for human specifications and inspection. For evaluation, we curate a new dataset, CWE-Bench-Java, comprising 120 manually validated security vulnerabilities in real-world Java projects. A state-of-the-art static analysis tool CodeQL detects only 27 of these vulnerabilities whereas IRIS with GPT-4 detects 55 (+28) and improves upon CodeQL's average false discovery rate by 5% points. Furthermore, IRIS identifies 4 previously unknown vulnerabilities which cannot be found by existing tools. IRIS is available publicly at https://github.com/iris-sast/iris.

- Ql: Object-oriented queries on relational data. In European Conference on Object-Oriented Programming, 2016. URL https://api.semanticscholar.org/CorpusID:13385963.

- Improving java deserialization gadget chain mining via overriding-guided object generation. In Proceedings of the 45th International Conference on Software Engineering (ICSE), 2023. doi: 10.1109/ICSE48619.2023.00044.

- Deep learning based vulnerability detection: Are we there yet? IEEE Transactions on Software Engineering, 48:3280–3296, 2020. URL https://api.semanticscholar.org/CorpusID:221703797.

- Checker Framework, 2024. https://checkerframework.org/.

- Path-sensitive code embedding via contrastive learning for software vulnerability detection. Proceedings of the 31st ACM SIGSOFT International Symposium on Software Testing and Analysis, 2022. URL https://api.semanticscholar.org/CorpusID:250562410.

- Scalable taint specification inference with big code. In Proceedings of the 40th ACM SIGPLAN Conference on Programming Language Design and Implementation, pages 760–774, 2019.

- Code Checker, 2023. https://github.com/Ericsson/codechecker.

- CPPCheck, 2023. https://cppcheck.sourceforge.io/.

- CVE Trends, 2024. https://www.cvedetails.com.

- Vulnerability detection with code language models: How far are we? arXiv preprint arXiv:2403.18624, 2024.

- Fb Infer, 2023. https://fbinfer.com/.

- FlawFinder, 2023. URL https://dwheeler.com/flawfinder.

- M. Fu and C. Tantithamthavorn. Linevul: A transformer-based line-level vulnerability prediction. In 2022 IEEE/ACM 19th International Conference on Mining Software Repositories (MSR). IEEE, 2022.

- GitHub. Codeql, 2024a. https://codeql.github.com.

- GitHub. Github advisory database, 2024b. https://github.com/advisories.

- GitHub. Github security advisories, 2024c. https://github.com/github/advisory-database.

- J. He and M. Vechev. Large language models for code: Security hardening and adversarial testing. In Proceedings of the 2023 ACM SIGSAC Conference on Computer and Communications Security, pages 1865–1879, 2023.

- S. Heckman and L. Williams. A model building process for identifying actionable static analysis alerts. In 2009 International conference on software testing verification and validation, pages 161–170. IEEE, 2009.

- Linevd: Statement-level vulnerability detection using graph neural networks. 2022 IEEE/ACM 19th International Conference on Mining Software Repositories (MSR), pages 596–607, 2022. URL https://api.semanticscholar.org/CorpusID:247362653.

- Swe-bench: Can language models resolve real-world github issues? arXiv preprint arXiv:2310.06770, 2023.

- Why don’t software developers use static analysis tools to find bugs? In 2013 35th International Conference on Software Engineering (ICSE), pages 672–681. IEEE, 2013.

- Repair is nearly generation: Multilingual program repair with llms. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 37, pages 5131–5140, 2023.

- Taming false alarms from a domain-unaware c analyzer by a bayesian statistical post analysis. In International Static Analysis Symposium, pages 203–217. Springer, 2005.

- Detecting false alarms from automatic static analysis tools: How far are we? In Proceedings of the 44th International Conference on Software Engineering, pages 698–709, 2022.

- Understanding the effectiveness of large language models in detecting security vulnerabilities. arXiv preprint arXiv:2311.16169, 2023.

- Codamosa: Escaping coverage plateaus in test generation with pre-trained large language models. In International conference on software engineering (ICSE), 2023.

- Enhancing static analysis for practical bug detection: An llm-integrated approach. Proceedings of the ACM on Programming Languages (PACMPL), Issue OOPSLA, 2024.

- Comparison and evaluation on static application security testing (sast) tools for java. In Proceedings of the 31st ACM Joint European Software Engineering Conference and Symposium on the Foundations of Software Engineering, pages 921–933, 2023.

- Vulnerability detection with fine-grained interpretations. Proceedings of the 29th ACM Joint Meeting on European Software Engineering Conference and Symposium on the Foundations of Software Engineering, 2021. URL https://api.semanticscholar.org/CorpusID:235490574.

- Sysevr: A framework for using deep learning to detect software vulnerabilities. IEEE Transactions on Dependable and Secure Computing, 19:2244–2258, 2018. URL https://api.semanticscholar.org/CorpusID:49869471.

- Vuldeelocator: A deep learning-based fine-grained vulnerability detector. IEEE Transactions on Dependable and Secure Computing, 19:2821–2837, 2020. URL https://api.semanticscholar.org/CorpusID:210064554.

- An empirical study on the effectiveness of static c code analyzers for vulnerability detection. In Proceedings of the 31st ACM SIGSOFT International Symposium on Software Testing and Analysis, pages 544–555, 2022.

- Merlin: Specification inference for explicit information flow problems. ACM Sigplan Notices, 44(6):75–86, 2009.

- Lost in translation: A study of bugs introduced by large language models while translating code. In 2024 IEEE/ACM 46th International Conference on Software Engineering (ICSE), pages 866–866. IEEE Computer Society, 2024.

- I. A. A. Ranking. Finding patterns in static analysis alerts. In Proceedings of the 11th working conference on mining software repositories. Citeseer, 2014.

- Semgrep. The semgrep platform. https://semgrep.dev/, 2023.

- Y. Smaragdakis and M. Bravenboer. Using datalog for fast and easy program analysis. In International Datalog 2.0 Workshop, pages 245–251. Springer, 2010.

- Snyk.io, 2024. https://snyk.io.

- SonarQube, 2024. https://www.sonarsource.com/products/sonarqube.

- Dataflow analysis-inspired deep learning for efficient vulnerability detection, 2023.

- A comprehensive study of the capabilities of large language models for vulnerability detection. arXiv preprint arXiv:2403.17218, 2024.

- SWE Agent, 2024. https://swe-agent.com.

- C. S. Xia and L. Zhang. Less training, more repairing please: revisiting automated program repair via zero-shot learning. In Proceedings of the 30th ACM Joint European Software Engineering Conference and Symposium on the Foundations of Software Engineering, pages 959–971, 2022.

- Automated program repair in the era of large pre-trained language models. In Proceedings of the 45th International Conference on Software Engineering (ICSE 2023). Association for Computing Machinery, 2023.

- Fuzz4all: Universal fuzzing with large language models. In Proceedings of the IEEE/ACM 46th International Conference on Software Engineering, pages 1–13, 2024.

- Large language models for test-free fault localization. arXiv preprint arXiv:2310.01726, 2023.

- Autocoderover: Autonomous program improvement. arXiv preprint arXiv:2404.05427, 2024.

- Devign: Effective vulnerability identification by learning comprehensive program semantics via graph neural networks. In Neural Information Processing Systems, 2019. URL https://api.semanticscholar.org/CorpusID:202539112.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Practical Applications

Immediate Applications

The following applications can be deployed today using the IRIS framework (LLM-assisted static analysis), especially for Java repositories and the four CWEs studied (CWE-22, CWE-78, CWE-79, CWE-94).

- DevSecOps code scanning in CI/CD pipelines (Software)

- Use IRIS to auto-infer repository- and CWE-specific taint source/sink specs via LLMs, then run CodeQL queries to detect unsanitized dataflows.

- Integrate as a GitHub Action or Jenkins step to scan each PR/commit; publish alerts and LLM-generated explanations to developers.

- Potential workflow: “IRIS Spec Miner” step → CodeQL run → “IRIS Triage” step to filter false positives (80% reduction shown in best case).

- Assumptions/dependencies: the repository must build successfully for CodeQL; sending code to closed LLMs may require privacy controls; token cost and latency must be budgeted.

- SAST vendor augmentation (Software security products)

- Embed IRIS’s LLM-driven spec mining to keep third‑party API source/sink specifications current without manual curation.

- Ship automatically generated CodeQL packs or equivalent taint rules per CWE that update as libraries evolve (e.g., Maven ecosystem).

- Assumptions/dependencies: ongoing prompt/LLM maintenance; evaluate per-model performance and stability; legal/licensing of training/inference on customer code.

- Targeted CWE sweeps after advisories (Software, Security Operations)

- Rapidly scan internal Java codebases for specific CWE patterns when a CVE drops (e.g., path traversal in libraries handling file paths).

- Use IRIS contextual analysis to prioritize alerts and suppress benign flows.

- Assumptions/dependencies: focused on the four CWEs in the paper; static analysis cannot capture vulnerabilities that rely on runtime-only behaviors or external side effects.

- Enterprise secure code review assist (Software)

- Augment code review with IRIS alerts and LLM-generated, path-aware justifications; reviewers can request context explanations for flagged flows.

- Reduce time spent triaging false alarms; route high-confidence alerts to security owners.

- Assumptions/dependencies: developers must trust LLM verdicts; provide audit trails for decisions.

- Open-source project maintainers’ guardrails (Software)

- Run IRIS periodically on large Java repositories (hundreds of thousands to millions of lines of code) to catch CWE-specific flows missed by stock CodeQL rules.

- Use pre-merge scanning to catch regressions; publish results with reasoning to issues/PRs.

- Assumptions/dependencies: compute/time budget for scanning large repos; repository must compile; community acceptance of LLM-driven triage.

- Software supply chain risk checks (Finance, Healthcare, Energy, Government)

- Scan internally maintained Java services and third-party components for CWE-22/78/79/94, generating evidence for audits and due diligence.

- Produce actionable reports for compliance frameworks (e.g., OWASP Top 10 alignment).

- Assumptions/dependencies: mapping findings to compliance controls requires policy translation; static analysis gaps must be documented.

- Security consulting and M&A code diligence (Industry)

- Use IRIS for time-bounded vulnerability discovery across large Java portfolios; deliver reduced-noise findings with contextual evidence.

- Assumptions/dependencies: scope limited to Java and supported CWEs; codescan privacy provisions.

- Academic uses of CWE-Bench-Java (Academia)

- Benchmark LLM-assisted static analysis; reproduce IRIS results; teach secure coding and whole‑repository reasoning.

- Build course assignments around the curated dataset with 120 validated vulnerabilities and build scripts.

- Assumptions/dependencies: dataset availability; students need access to CodeQL and at least one LLM.

- Developer “daily life” safeguards (Software practice)

- Local pre-commit scan for Java projects using on‑prem/open-source LLMs (e.g., DeepSeekCoder 7B) to avoid sending code offsite.

- Lightweight triage for modified files only; post-commit job for full scans on larger repos.

- Assumptions/dependencies: memory/compute for local LLMs; models vary in detection quality (GPT‑4 highest in paper).

Long-Term Applications

The following applications require further research, engineering, scaling, or standardization before widespread deployment.

- Multi-language and cross-service support (Software, Cloud-native)

- Extend IRIS to C/C++, Python, JavaScript/TypeScript, and Android; track cross-language flows in polyglot repos and microservices.

- Build language-agnostic spec mining with framework-aware semantics (e.g., Spring, Express).

- Dependencies: robust CodeQL or equivalent for each language; cross-language dataflow models; large-context prompts for multi-service reasoning.

- Automated sanitizer inference and flow correctness (Software security research)

- Teach LLMs to identify sanitizer functions and conditions per CWE, reducing both false positives and false negatives.

- Combine IRIS with symbolic/dynamic analysis to validate flow feasibility and side effects (e.g., OS command gadgets).

- Dependencies: precise definitions of sanitization per API; hybrid analysis orchestration; higher compute cost.

- AI-assisted remediation (Software)

- Move from detection to fix suggestions: propose and validate code changes (escaping, input validation, safer APIs) with tests.

- “Detect → Explain → Patch → Verify” workflow integrated into IDEs and CI.

- Dependencies: reliable patch synthesis and safety checks; developer-in-the-loop acceptance; regression testing.

- Enterprise privacy and on-prem model deployment (Policy, Governance, Software)

- Train/fine-tune smaller, specialized security LLMs on CWE-Bench-Java and internal corpora; host models behind firewalls.

- Provide provable data handling for regulated sectors (HIPAA, PCI DSS, FISMA).

- Dependencies: high-quality fine-tuning data; model evaluation/monitoring; compliance documentation.

- Continuous, registry-backed spec mining services (Software supply chain)

- Operate a service that mines taint specs from package ecosystems (Maven, NPM, PyPI) and publishes signed spec updates.

- Integrate with SBOM pipelines to annotate dependencies with up-to-date source/sink roles per CWE.

- Dependencies: ecosystem cooperation; version tracking and semantic diffs; trust and signing infrastructure.

- Standards and policy adoption (Policy, Compliance)

- Incorporate “AI-augmented static analysis” into secure development baselines, procurement requirements, and audit checklists (e.g., NIST/OWASP guidance).

- Standardize reporting formats for LLM‑assisted findings and evidentiary reasoning.

- Dependencies: consensus on metrics and disclosure formats; validation suites; regulator buy-in.

- IDE-centric interactive vulnerability exploration (Software tooling)

- Build visualization and chat-based assistants around IRIS: navigate paths, request targeted context, query “what if” scenarios.

- Blend path graph views with natural language explanations and code snippets.

- Dependencies: UX design, latency management; large-context handling; developer adoption.

- Sector-specific secure SDLC gates (Finance, Healthcare, Energy)

- Tailor IRIS policies for regulated domains (e.g., stricter gating on CWE-78 and CWE-22 in data-processing pipelines).

- Automate risk scoring and escalation paths tied to regulatory controls.

- Dependencies: domain-specific rulepacks; mapping to control catalogs; integration into governance tooling.

- Advanced benchmarking and model distillation (Academia, Software)

- Use CWE-Bench-Java to build new benchmarks for whole-repository reasoning; distill GPT‑4 performance into smaller models with reproducible prompts.

- Study generalization across CWEs and projects; develop reliability metrics.

- Dependencies: sustained access to strong teacher models; careful prompt and dataset curation; standardized evaluation.

Global Assumptions and Dependencies That Affect Feasibility

- Buildability: Projects must compile to extract dataflow graphs for static analysis.

- Model quality and variability: Detection rates depend on the chosen LLM; GPT‑4 performed best in the paper, while open models varied.

- Privacy and IP: Using closed/cloud LLMs requires guardrails; on‑prem inference mitigates but needs hardware.

- Cost and latency: LLM calls introduce runtime and financial costs; batching and prompt optimization are needed.

- Static analysis limitations: Some vulnerabilities (e.g., OS command injection via gadget chains or external side effects) may evade static methods; hybrid approaches may be necessary.

- Scope of CWEs and specs: The paper focused on four CWEs and did not infer sanitizers; annotation-based sources (e.g., Java annotations) may need special handling.

- Documentation dependency: Spec inference benefits from README/JavaDoc quality; sparse docs can degrade labeling accuracy.

- Prompt maintenance: Templates and context-selection heuristics require ongoing tuning to avoid drift and hallucinations.

Collections

Sign up for free to add this paper to one or more collections.