AgentLens: Visual Analysis for Agent Behaviors in LLM-based Autonomous Systems

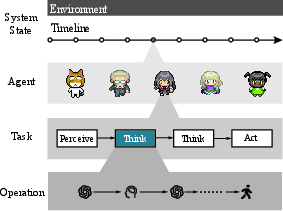

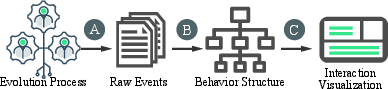

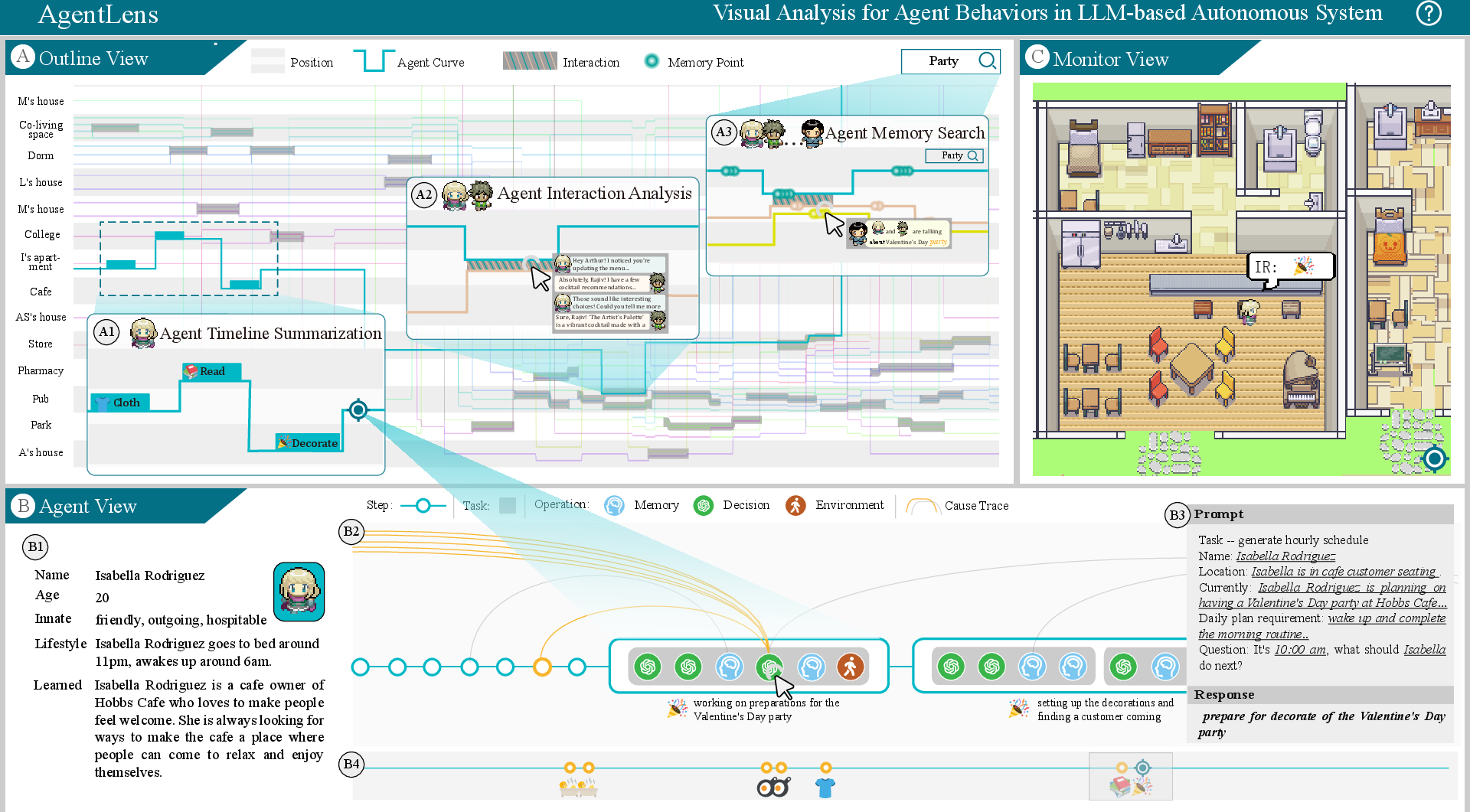

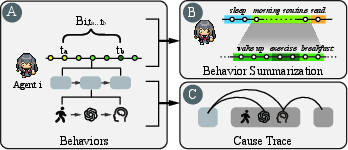

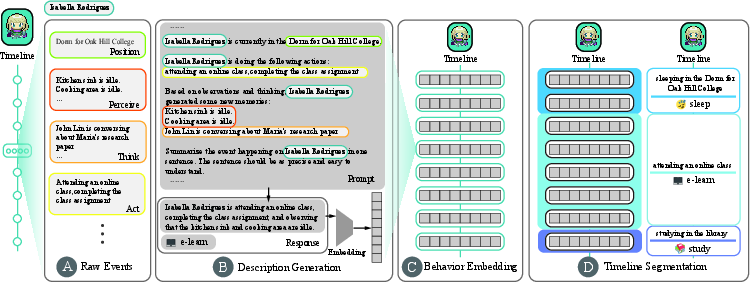

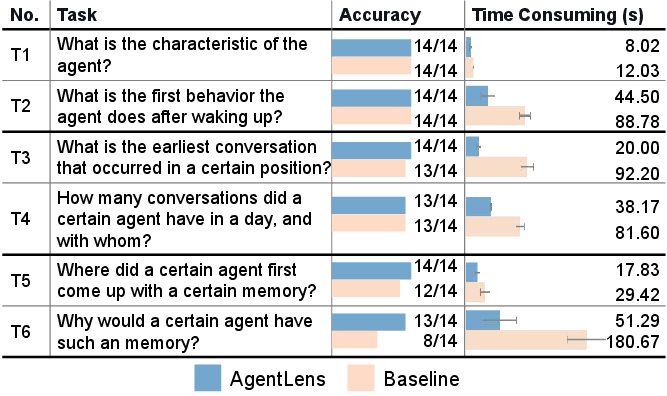

Abstract: Recently, LLM-based autonomous systems (LLMAS) have gained great popularity for their potential to simulate complicated behaviors of human societies. One of the main challenges is presenting and analyzing how events evolve dynamically within an LLMAS. In this work, we present a visualization approach for exploring the detailed statuses and behaviors of agents within an LLMAS. We propose a general pipeline that establishes a behavior structure from raw LLMAS execution events, applies a behavior summarization algorithm to construct a hierarchical summary of the entire structure along the time sequence, and uses a cause trace method to mine the causal relationships between agent behaviors. We then develop AgentLens, a visual analysis system that uses a hierarchical temporal visualization to illustrate the evolution of an LLMAS and lets users interactively investigate the details and causes of agents' behaviors. Two usage scenarios and a user study demonstrate the effectiveness and usability of AgentLens.
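The three pipeline stages named in the abstract (behavior structure, hierarchical summary over time, cause tracing) can be illustrated with a minimal Python sketch. Everything specific here is an assumption made for illustration and is not taken from the paper: the `Event` schema, the gap-based grouping rule, the fixed-window summary, and the explicit `caused_by` links. The actual summarization and cause trace methods are not specified in the abstract and likely differ.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Event:
    """One raw execution event (hypothetical schema, not from the paper)."""
    event_id: int
    agent: str
    time: int                        # simulation time step
    description: str
    caused_by: Optional[int] = None  # id of an earlier event that triggered this one


@dataclass
class Behavior:
    """A run of temporally adjacent events produced by one agent."""
    agent: str
    start: int
    end: int
    events: List[Event] = field(default_factory=list)


def build_behaviors(events: List[Event], max_gap: int = 1) -> List[Behavior]:
    """Group each agent's events into behaviors, splitting when the time gap exceeds max_gap."""
    behaviors: List[Behavior] = []
    open_by_agent: Dict[str, Behavior] = {}
    for ev in sorted(events, key=lambda e: (e.time, e.event_id)):
        current = open_by_agent.get(ev.agent)
        if current is not None and ev.time - current.end <= max_gap:
            current.events.append(ev)
            current.end = ev.time
        else:
            current = Behavior(ev.agent, ev.time, ev.time, [ev])
            open_by_agent[ev.agent] = current
            behaviors.append(current)
    return behaviors


def summarize(behaviors: List[Behavior], window: int) -> Dict[int, List[str]]:
    """Bucket behaviors into fixed time windows (a simple stand-in for a hierarchical summary)."""
    buckets: Dict[int, List[str]] = {}
    for b in behaviors:
        label = f"{b.agent}: {b.events[0].description}"
        buckets.setdefault(b.start // window, []).append(label)
    return dict(sorted(buckets.items()))


def trace_causes(events: List[Event], event_id: int) -> List[int]:
    """Follow caused_by links backwards; return event ids ordered from root cause to target."""
    by_id = {e.event_id: e for e in events}
    chain: List[int] = []
    seen = set()
    current: Optional[int] = event_id
    while current in by_id and current not in seen:  # stops on None, unknown ids, or cycles
        seen.add(current)
        chain.append(current)
        current = by_id[current].caused_by
    return chain[::-1]


# Toy event log (invented for this sketch).
events = [
    Event(1, "Alice", 0, "plans a party"),
    Event(2, "Alice", 1, "invites Bob", caused_by=1),
    Event(3, "Bob", 2, "accepts invitation", caused_by=2),
    Event(4, "Alice", 5, "decorates the cafe", caused_by=1),
]
behaviors = build_behaviors(events)
summary = summarize(behaviors, window=4)
causes = trace_causes(events, 3)
```

In this toy log, Alice's first two events merge into one behavior, her later event starts a new one, and tracing Bob's acceptance walks back through the invitation to the original plan.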
