Shampoo: Preconditioned Stochastic Tensor Optimization

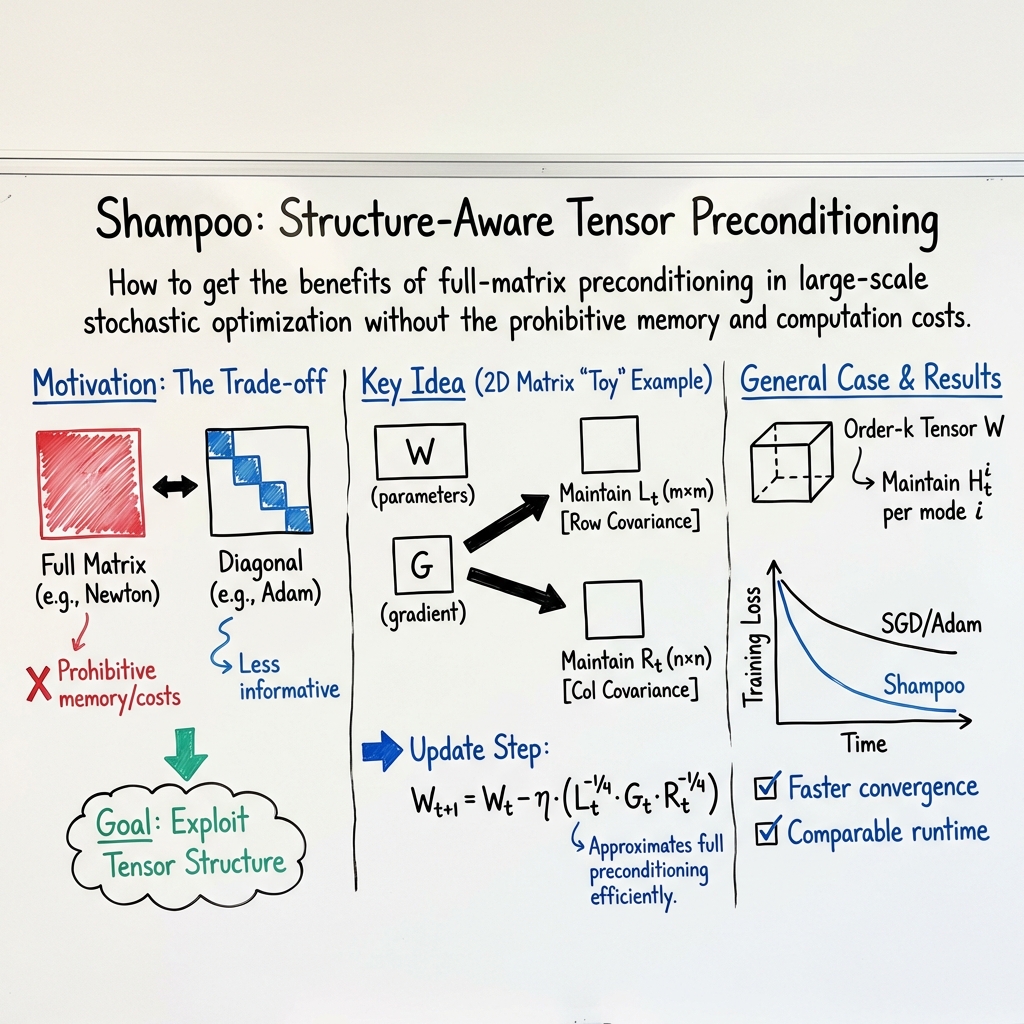

Abstract: Preconditioned gradient methods are among the most general and powerful tools in optimization. However, preconditioning requires storing and manipulating prohibitively large matrices. We describe and analyze a new structure-aware preconditioning algorithm, called Shampoo, for stochastic optimization over tensor spaces. Shampoo maintains a set of preconditioning matrices, each of which operates on a single dimension, contracting over the remaining dimensions. We establish convergence guarantees in the stochastic convex setting, the proof of which builds upon matrix trace inequalities. Our experiments with state-of-the-art deep learning models show that Shampoo is capable of converging considerably faster than commonly used optimizers. Although it involves a more complex update rule, Shampoo's runtime per step is comparable to that of simple gradient methods such as SGD, AdaGrad, and Adam.

Explain it Like I'm 14

Overview: What this paper is about

This paper introduces a new way to speed up how computers learn from data, especially in deep learning. The method is called Shampoo. It helps training go faster by taking smarter steps during learning, using the natural shape of the data and model (tensors, like multi-dimensional grids), without using too much memory or time.

Think of learning like hiking down a mountain to reach the lowest point (the best solution). Regular methods take the same kind of step in every direction. Shampoo adjusts the size of each step differently in each direction, based on what it has seen so far, so it can get to the bottom faster and more safely.

Objectives: What the authors wanted to achieve

The paper aims to:

- Build a “structure-aware” optimizer that uses the shape of the model’s parameters (matrices and higher‑dimensional tensors) to make better updates.

- Keep the method practical: low memory use and speed per step similar to simple methods like SGD, AdaGrad, or Adam.

- Provide mathematical guarantees that it will converge (reach good solutions) in a standard setting.

- Show with experiments that it works well on real deep learning models.

Approach: How Shampoo works (in simple terms)

First, a few quick ideas:

- A vector is a list of numbers. A matrix is a 2D table of numbers. A tensor is like a 3D (or more) grid of numbers—think of a stack of images or video frames.

- During training, we compute a gradient, which points in the direction to change the model to reduce error.

- Preconditioning means “reshaping” that gradient so we can move faster in easy directions and slower in difficult ones.

The problem with classic “smart” methods:

- The best preconditioning would use a huge matrix that captures how every parameter interacts with every other one. But for modern models, this matrix is gigantic—too big to store or compute with.

Shampoo’s key idea:

- Keep the tensor shape of the model’s parameters and gradients.

- Instead of one giant preconditioner, keep one small preconditioner per dimension (axis) of the tensor:

  - For a matrix (2D), it keeps two moderate-size matrices: one for rows and one for columns.

  - For a 3D or 4D tensor (like in convolutional layers), it keeps one small matrix per axis.

- At each step:

  - It updates these small matrices using the history of gradients (similar to AdaGrad’s “second moments,” which are like running sums of squared gradients).

  - It multiplies the gradient along each axis (left, right, depth, etc.) by an inverse root of the matching matrix. This “scales” the gradient properly in each direction.

This acts like a smart filter that adapts step sizes differently along each dimension, but without ever forming the massive full matrix.
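For the 2D (matrix) case, the per-step recipe above can be sketched in NumPy. This is a minimal illustration, not the authors' implementation: the function names, the `eps` starting value, and the learning rate are assumptions made for the example, while the −1/4 exponents are the ones the paper uses for matrices.

```python
import numpy as np

def sym_matrix_power(M, p):
    """Return M**p for a symmetric positive definite matrix M via eigendecomposition."""
    eigvals, eigvecs = np.linalg.eigh(M)
    return (eigvecs * eigvals**p) @ eigvecs.T

def shampoo_matrix_step(W, G, L, R, lr=0.1):
    """One Shampoo-style update for a matrix parameter W with gradient G.

    L (m x m) and R (n x n) are the running row and column statistics.
    """
    L = L + G @ G.T          # accumulate row statistics
    R = R + G.T @ G          # accumulate column statistics
    precond_grad = sym_matrix_power(L, -0.25) @ G @ sym_matrix_power(R, -0.25)
    return W - lr * precond_grad, L, R

# Toy usage: a 3x2 parameter. Starting from eps * I keeps L and R invertible
# (eps = 1e-4 is an assumed value, not taken from the paper).
m, n, eps = 3, 2, 1e-4
W = np.zeros((m, n))
L, R = eps * np.eye(m), eps * np.eye(n)
G = np.arange(6, dtype=float).reshape(m, n)  # stand-in gradient
W, L, R = shampoo_matrix_step(W, G, L, R)
```

Note that the largest objects ever built are m × m and n × n; the gradient keeps its matrix shape throughout.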

Analogy:

- Imagine you’re wearing special shoes that automatically adjust your stride length differently for uphill, downhill, and sideways movement, based on what you’ve experienced so far. Shampoo gives you those adaptive “per-direction” adjustments.

Under the hood (gently):

- The math shows that combining the per-axis preconditioners is similar to using a much larger, full preconditioner, but at a tiny fraction of the cost.

- The analysis uses a standard framework called online convex optimization and some matrix math tools. The key takeaway: the steps are provably safe and efficient.
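The link between per-axis preconditioners and one large preconditioner rests on a standard Kronecker-product identity: with row-major flattening, vec(L G Rᵀ) = (L ⊗ R) vec(G). The short check below is a sketch on random data (the sizes and matrices are arbitrary stand-ins, not values from the paper) showing that the cheap left/right multiplication and the expensive Kronecker route give the same vector.

```python
import numpy as np

rng = np.random.default_rng(0)
m, n = 3, 4
G = rng.standard_normal((m, n))

# Two small symmetric positive definite matrices standing in for per-axis preconditioners.
A = rng.standard_normal((m, m))
L = A @ A.T + np.eye(m)
B = rng.standard_normal((n, n))
R = B @ B.T + np.eye(n)

# Cheap route: multiply on the left and right; nothing larger than m x m or n x n is formed.
# R is symmetric, so R.T == R.
small = (L @ G @ R.T).reshape(-1)

# Expensive route: build the (mn x mn) Kronecker product and apply it to the flattened gradient.
big = np.kron(L, R) @ G.reshape(-1)

same = np.allclose(small, big)
```

Here `np.kron(L, R)` is already 12 × 12 for a 3 × 4 gradient; for realistic layer sizes only the cheap route is feasible.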

Main findings: What they discovered and why it matters

- Theory: Shampoo has solid convergence guarantees in the convex setting. In other words, if the problem is well-behaved (convex), its regret grows only on the order of √T over T steps, so the average error shrinks at the standard 1/√T rate.

- Efficiency: Memory and compute costs are much lower than full-matrix methods.

  - Example for a matrix with m rows and n columns:

    - Full preconditioning would need a huge mn × mn matrix.

    - Shampoo only keeps two matrices: m × m and n × n.

- Speed in practice: On modern deep learning models, Shampoo often reaches good accuracy faster (in fewer training steps) than popular methods like SGD, AdaGrad, or Adam.

- Runtime per step: Despite the smarter math, each training step takes about as long as simple methods, thanks to the compact per-axis design and efficient tensor operations.

- Ease of use: Implemented in TensorFlow; you apply it to each parameter tensor without redesigning your model. It can even switch to a simpler diagonal version when a dimension is very large to keep memory in check.
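The memory comparison in the efficiency example above is simple arithmetic, and a few lines make the gap concrete. The helper name and the example shape are illustrative assumptions; the counts follow directly from the mn × mn versus m × m plus n × n sizes stated in the list.

```python
from math import prod

def preconditioner_entries(shape):
    """Count stored entries: one full preconditioner vs. one matrix per axis."""
    full = prod(shape) ** 2                 # (n_1 * ... * n_k) squared
    per_axis = sum(n * n for n in shape)    # n_1^2 + ... + n_k^2
    return full, per_axis

full, per_axis = preconditioner_entries((1000, 1000))
# full is 10**12 entries, per_axis is 2 * 10**6 entries
```

For a 1000 × 1000 weight matrix, that is a reduction by a factor of 500,000 in stored entries.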

Implications: Why this is useful

- Faster training: You can reach good results in fewer steps, saving time and compute budget.

- Better use of structure: Modern deep networks are full of tensors; Shampoo naturally leverages this, capturing important relationships that simple, per-parameter methods miss.

- Scalable and practical: It fits into existing workflows and frameworks and keeps per-step cost low.

- Broad impact: From vision to LLMs, any training setup that uses tensors can benefit. It’s a strong middle ground between very simple methods (fast but less informed) and very smart full-matrix methods (powerful but too expensive).

In short, Shampoo brings the benefits of “smart” second-order-like optimization to large-scale deep learning by cleverly using the shape of your parameters—making training both faster and practical.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, focused list of concrete gaps and open questions that remain unresolved by the paper, organized to help guide future research.

- Non-convex theory: The analysis covers online/stochastic convex optimization, but provides no convergence guarantees (e.g., stationarity, escape-from-saddle rates) for the non-convex settings (deep networks) where Shampoo is evaluated and claimed to excel.

- Rank assumptions in bounds: Regret bounds hinge on per-mode low-rank assumptions (rank(mat_i(G_t)) ≤ r_i). In practice, gradients can be full-rank or have slowly decaying spectra. It is unclear how bounds degrade without these assumptions or whether spectrum-aware bounds (e.g., in terms of eigenvalue sums/tails) can be derived.

- Tightness vs. full AdaGrad: While the Kronecker preconditioner is shown to lower-bound a full preconditioner, there is no quantitative approximation-error bound to full-matrix AdaGrad (e.g., condition-number improvement guarantees), nor conditions characterizing when Shampoo matches or meaningfully improves upon diagonal AdaGrad.

- Projection omission: The analysis acknowledges omitting projections and replaces norm constraints with a diameter D term that can grow with T. Practical, efficient projection (or a provable substitute) in the Shampoo geometry remains unaddressed.

- Choice of exponents: The specific exponents (e.g., L_t^{-1/4}, R_t^{-1/4} for matrices and (H_t^i)^{-1/(2k)} for tensors) are analysis-driven but not justified as optimal. It is unknown whether alternative exponents (or learned/adaptive exponents) improve theory or practice.

- Accumulation strategy: Shampoo uses un-discounted second-moment sums. The impact of windowing, exponential decay, or bias-correction (as in Adam) on stability, adaptivity, and regret is unexplored theoretically and empirically.

- Numerical stability: There is no analysis of numerical conditioning, choice and scaling of ε, or behavior with near-singular preconditioners—particularly under mixed precision, large batch variance, or ill-conditioned curvature.

- Cost of matrix functions: Each step requires matrix roots/inverses (via SVD/eigendecomposition). The paper lacks:

  - Complexity bounds per step in terms of per-mode sizes and frequency of updates.

  - Practical strategies to amortize or approximate matrix powers (e.g., Newton–Schulz iterations, Krylov/Lanczos, low-rank approximations), with error-vs-speed trade-offs.

  - Guidance on how often to recompute preconditioners without hurting convergence.

- Scalability limits: Per-mode O(n_i^2) memory and O(n_i^3) compute become prohibitive for large dimensions (e.g., embeddings, large conv channels, transformer MLPs). Scalable variants (sketching, block-sparse/low-rank, hierarchical factorizations) are not developed.

- Diagonal fallback policy: The diagonal switch threshold (~1200) is heuristic. There is no principled criterion or analysis quantifying the accuracy/computation trade-off, nor adaptive schemes that tune diagonalization per layer/mode during training.

- Inter-tensor correlations: Shampoo uses per-tensor block preconditioners and ignores cross-tensor correlations. The effect on optimization efficiency and whether cross-tensor Kronecker structures (or shared preconditioners) can close this gap are open.

- Convolutional structure: Although tensors are handled, the method does not exploit convolutional weight sharing or spatial structure explicitly. It remains open whether specialized Kronecker layouts (e.g., channel×channel vs. spatial×spatial) yield better preconditioning.

- Sparse gradients: The algorithm forms dense contractions (G_t^{(i)}). How to exploit sparsity (common in NLP/recsys) for subquadratic memory/time while preserving preconditioning quality is not addressed.

- Momentum and combinations: Interactions with momentum (classical or Nesterov), weight decay, or adaptive schedules are not analyzed. Whether momentum+Shampoo yields provable or empirical gains is open.

- Learning-rate selection: There is no principled step-size tuning (global vs. per-tensor), nor analyses of sensitivity to η and ε, especially under preconditioning that changes scale.

- Distributed training: The paper does not address communication, sharding, or synchronization of per-mode preconditioners across devices. Efficient distributed Shampoo (e.g., periodic sync, compressed preconditioners) and its convergence properties remain open.

- Generalization and implicit bias: The effect of Shampoo’s geometry on generalization (e.g., margin or path-norm analogs) and its interaction with normalization layers is not investigated.

- Mode permutation/design: Preconditioners depend on tensor mode choices. There is no guidance on selecting/learning mode permutations (or grouping) to optimize preconditioning quality.

- Non-Euclidean parameterizations: Extensions to constrained or manifold-constrained parameters (e.g., orthogonal, low-rank, simplex) under Shampoo’s tensor geometry are unexplored.

- Empirical ablations: Missing systematic studies on:

  - Runtime and memory vs. SGD/Adam/K-FAC on identical hardware.

  - Sensitivity to η, ε, exponent choices, recomputation frequency, and diagonal fallback thresholds.

  - Task/domain coverage (e.g., very large language models, extreme-classification embeddings).

  - Comparative performance against K-FAC and full AdaGrad under controlled settings.

- Adversarial/non-stationary data: While framed in OCO, adaptations for drifting distributions (e.g., resetting or weighting the history) and corresponding regret bounds are not provided.

- Biases and constraints: Handling explicit constraints (norm, box, simplex) efficiently with the Shampoo geometry is not developed, beyond noting the computational burden of projections.

- Implementation portability: Only a TensorFlow prototype is mentioned; PyTorch and JAX implementations, kernel-level optimizations, and integration with mixed-precision training are left for future work.
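Several items in the list above refer to the diagonal fallback for very large dimensions. One way such a hybrid could look is sketched below. It is an assumption-laden illustration: the helper names are hypothetical, the diagonal branch simply keeps the diagonal of the full statistic, and reusing the −1/4 exponent for the diagonal case is an assumption made here, not something this summary confirms. The threshold of 1200 is the value quoted in the list.

```python
import numpy as np

def sym_matrix_power(M, p):
    """M**p for a symmetric positive definite matrix M."""
    eigvals, eigvecs = np.linalg.eigh(M)
    return (eigvecs * eigvals**p) @ eigvecs.T

def init_stat(dim, threshold, eps=1e-4):
    """Full (dim x dim) statistic for small axes, a length-dim vector for large ones."""
    return eps * np.ones(dim) if dim > threshold else eps * np.eye(dim)

def hybrid_step(W, G, L, R, lr=0.1):
    """Shampoo-style matrix step where L and/or R may be stored as diagonals (1-D arrays)."""
    # Row axis
    if L.ndim == 1:
        L = L + np.sum(G * G, axis=1)            # diagonal of G @ G.T
        G_left = (L ** -0.25)[:, None] * G
    else:
        L = L + G @ G.T
        G_left = sym_matrix_power(L, -0.25) @ G
    # Column axis
    if R.ndim == 1:
        R = R + np.sum(G * G, axis=0)            # diagonal of G.T @ G
        update = G_left * (R ** -0.25)[None, :]
    else:
        R = R + G.T @ G
        update = G_left @ sym_matrix_power(R, -0.25)
    return W - lr * update, L, R

# Toy usage: a 2000 x 8 layer. The 2000-sized axis exceeds the threshold and
# gets a diagonal statistic; the 8-sized axis keeps a full 8 x 8 matrix.
threshold = 1200
m, n = 2000, 8
rng = np.random.default_rng(0)
W = np.zeros((m, n))
L, R = init_stat(m, threshold), init_stat(n, threshold)
W, L, R = hybrid_step(W, rng.standard_normal((m, n)), L, R)
```

In this sketch the large axis costs 2000 stored numbers instead of 4,000,000, at the price of ignoring correlations between rows.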

Glossary

- AdaGrad: An adaptive gradient method that forms a preconditioner from accumulated gradient statistics to scale updates per-coordinate. "most notably AdaGrad (Duchi et al., 2011), that use the covariance matrix of the accumulated gradients to form a preconditioner."

- Block-diagonal preconditioner: A preconditioning matrix structured with independent blocks (submatrices) along the diagonal, each applied to a separate parameter block/tensor. "amounts to employing a block-diagonal preconditioner, with blocks corresponding to the different tensors in the model."

- Contraction (tensor): An operation that sums over all indices except one (or a set), producing a lower-order object; here used to produce an n_i × n_i matrix from a tensor by contracting all other modes. "The contraction of an $n_1 \times \cdots \times n_k$ tensor $A$ with itself along all but the $i$'th dimension is an $n_i \times n_i$ matrix defined as $A^{(i)} = \mathrm{mat}_i(A)\,\mathrm{mat}_i(A)^{\top}$"

- Fisher-information matrix: A matrix capturing the curvature of the log-likelihood; in deep learning it measures sensitivity of model predictions to parameters. "approximates the Fisher-information matrix of a generative model represented by a neural network."

- Frobenius norm: The square root of the sum of squares of all entries of a matrix, equivalent to the Euclidean norm of its vectorization. "the Frobenius norm is $\|A\|_F = \sqrt{\sum_{i,j} A_{ij}^2}$."

- Geometric mean (of matrices): A generalization of the scalar geometric mean to positive semidefinite matrices, with operator monotonicity under certain conditions. "In words, the (weighted) geometric mean of commuting PSD matrices is operator monotone."

- Hessian: The matrix of second derivatives of a function, used by Newton-type methods to capture local curvature. "Newton's method, which employs the local Hessian as a preconditioner"

- K-FAC: An optimization method (Kronecker-Factored Approximate Curvature) that approximates the Fisher matrix using Kronecker-factored blocks per layer. "Another recent optimization method that uses factored preconditioning is K-FAC (Martens and Grosse, 2015)"

- Kronecker product: A tensor (block) product of two matrices producing a larger block matrix, useful for structured preconditioning and vectorization identities. "The Kronecker product, denoted $A \otimes B$, is an $mm' \times nn'$ block matrix defined as,"

- Mahalanobis norm: A norm induced by a positive definite matrix that scales space by its inverse covariance, measuring distances with respect to that metric. "the Mahalanobis norm of $x \in \mathbb{R}^d$ as induced by a positive definite matrix $A \succ 0$."

- Matricization: The reshaping of a tensor into a matrix by stacking vectorized slices along a chosen mode. "The matricization operator $\mathrm{mat}_i(A)$ reshapes a tensor $A$ to a matrix by vectorizing the slices of $A$ along the $i$'th dimension and stacking them as rows of a matrix."

- Matrix trace inequalities: Inequalities involving the matrix trace (sum of diagonal elements), used to bound expressions in optimization and matrix analysis. "the proof of which builds upon matrix trace inequalities."

- Online convex optimization (OCO): A framework where a learner makes sequential decisions in a convex set and incurs losses from adversarially chosen convex functions, aiming to minimize regret. "We use Online Convex Optimization (OCO) (Shalev-Shwartz, 2012; Hazan, 2016) as our analysis framework."

- Online Mirror Descent (OMD): A first-order online optimization algorithm that uses a (possibly time-varying) regularizer/metric to adapt gradient steps. "an adaptive version of Online Mirror Descent (OMD) in the OCO setting"

- Online-to-batch conversion: A technique that converts online learning guarantees (regret) into batch generalization/convergence bounds. "an online-to-batch conversion technique (Cesa-Bianchi et al., 2004)."

- Operator-monotone (function): A function f such that A ⪯ B implies f(A) ⪯ f(B) for PSD matrices A, B; important for matrix powers. "The function $x \mapsto x^{\alpha}$ is operator-monotone for $\alpha \in [0, 1]$"

- Positive semidefinite (PSD): A symmetric matrix with nonnegative eigenvalues; denotes a nonnegative quadratic form. "the notation $A \succeq 0$ (resp. $A \succ 0$) for a matrix $A$ means that $A$ is symmetric and positive semidefinite (resp. definite), or PSD (resp. PD) in short."

- Preconditioning (preconditioner): Transforming the gradient (or variables) using a matrix to improve conditioning and convergence of optimization. "Preconditioning methods maintain a matrix, termed a preconditioner, which is used to transform (i.e., premultiply) the gradient vector before it is used to take a step."

- Quasi-Newton methods: Optimization methods that approximate the Hessian or its inverse using gradient and step information to achieve curvature-aware updates. "as well as a plethora of quasi-Newton methods (e.g., Fletcher, 2013; Lewis and Overton, 2013; Nocedal, 1980)"

- Regret (in online optimization): The difference between the learner’s cumulative loss and that of the best fixed decision in hindsight. "the learner attempts to minimize its regret, defined as the quantity"

- Singular value decomposition (SVD): A factorization A = UΣVᵀ expressing a matrix via its singular vectors and singular values, used here to compute matrix powers. "Matrix powers were computed simply by constructing a singular value decomposition (SVD) and then taking the powers of the singular values."

- Spectral norm: The largest singular value of a matrix, equivalently the operator 2-norm. "The spectral norm of a matrix $A$ is denoted $\|A\|_2$"

- Tensor–matrix product (mode-i product): Multiplying a tensor along a specific mode by a matrix, changing the size of that mode while preserving others. "denoted $A \times_i M$, for which the identity $\mathrm{mat}_i(A \times_i M) = M\,\mathrm{mat}_i(A)$ holds."

- Vectorization (flattening): Converting a matrix or tensor into a single column vector by stacking its entries in a specified order. "The vectorization (or flattening) of $A$ is the column vector"
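The tensor operations defined in this glossary (matricization, contraction, and the mode-i product) combine into the general update for order-k tensors. The NumPy sketch below is one possible rendering under stated assumptions: the function names are hypothetical, the column ordering inside `matricize` is whatever NumPy's reshape produces (the identities used here hold for any consistent ordering), and the −1/(2k) exponent is the one quoted for tensors elsewhere on this page.

```python
import numpy as np

def matricize(A, i):
    """mat_i(A): move mode i to the front and flatten the rest into columns."""
    return np.moveaxis(A, i, 0).reshape(A.shape[i], -1)

def contract(A, i):
    """A^(i) = mat_i(A) mat_i(A)^T, an n_i x n_i matrix."""
    Ai = matricize(A, i)
    return Ai @ Ai.T

def mode_product(A, M, i):
    """A x_i M: multiply mode i of A by the matrix M (shape m x n_i)."""
    out = np.tensordot(M, A, axes=(1, i))   # the new mode comes out first
    return np.moveaxis(out, 0, i)           # move it back to position i

def sym_matrix_power(M, p):
    """M**p for a symmetric positive definite matrix M."""
    eigvals, eigvecs = np.linalg.eigh(M)
    return (eigvecs * eigvals**p) @ eigvecs.T

def shampoo_tensor_step(W, G, H, lr=0.1):
    """One Shampoo-style update for an order-k tensor; H holds k per-mode statistics."""
    k = G.ndim
    H = [H_i + contract(G, i) for i, H_i in enumerate(H)]
    update = G
    for i, H_i in enumerate(H):
        update = mode_product(update, sym_matrix_power(H_i, -1.0 / (2 * k)), i)
    return W - lr * update, H

rng = np.random.default_rng(0)
A = rng.standard_normal((2, 3, 4))
M = rng.standard_normal((5, 3))
# The identity quoted above, mat_i(A x_i M) = M mat_i(A), checked for i = 1.
identity_holds = np.allclose(matricize(mode_product(A, M, 1), 1), M @ matricize(A, 1))
```

For k = 2 the two per-mode statistics are G Gᵀ and Gᵀ G and the exponent becomes −1/4, so the step reduces to the matrix case.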

Practical Applications

Immediate Applications

The following items describe concrete, deployable use cases and workflows that can be implemented now, across industry, academia, policy, and daily life.

- Training acceleration for deep learning models

- Sector: software, healthcare (medical imaging), robotics, finance (risk models), education (edtech)

- Application: Swap existing optimizers (SGD, AdaGrad, Adam) with Shampoo in TensorFlow for faster convergence of CNNs, transformers, and multi-task models without changing model architecture.

- Tools/workflows: Use Shampoo as a plug-in optimizer; retain tensor structure per layer; set diagonal fallback for large dimensions (e.g., >1200) to control memory; adopt standard learning-rate schedules; monitor epoch-time vs. epochs-to-target.

- Assumptions/dependencies: TensorFlow or similar framework with tensor contraction and SVD support; sufficient GPU/TPU memory for per-dimension preconditioners; non-convex training gains are empirical (theory given for convex); gradient statistics stable enough for second-moment accumulation.

- Cost and energy reduction in ML training

- Sector: energy, cloud/IT operations, sustainability (ESG)

- Application: Fewer training epochs to reach target accuracy reduces compute-hours and energy; integrate Shampoo into MLOps pipelines to lower training carbon footprint and cloud spend.

- Tools/workflows: Add optimizer selection to CI/CD training jobs; track power draw and time-to-metric; use diagonal fallback to fit memory budgets; leverage mixed precision to reduce SVD cost.

- Assumptions/dependencies: Comparable per-step runtime to SGD holds for typical models; energy savings require fewer total steps; accurate metering for energy and cost.

- Faster fine-tuning of pre-trained foundation models

- Sector: software, healthcare (clinical NLP), finance (document intelligence), education

- Application: Use Shampoo during fine-tuning to reach target validation scores with fewer steps, enabling quicker iteration cycles and deployment.

- Tools/workflows: Integrate Shampoo in transfer learning scripts; keep per-layer tensor preconditioners; measure convergence speed vs. baseline optimizers.

- Assumptions/dependencies: Preconditioning benefits persist in low-data fine-tuning; moderate dimension sizes or use diagonal fallback for giant layers.

- AutoML and hyperparameter tuning efficiency

- Sector: software platforms, MLOps

- Application: Include Shampoo as an optimizer choice in AutoML search spaces; reduce overall search time by quicker convergence per trial.

- Tools/workflows: Define optimizer as a hyperparameter; log regret/validation curves; early-stop more aggressively when using Shampoo’s faster trajectories.

- Assumptions/dependencies: Gains translate to a broad set of model families; tuning systems support TensorFlow; learning-rate and epsilon choices remain simple.

- Robust optimization for multi-dimensional parameters

- Sector: robotics (policy learning), recommender systems, speech/audio

- Application: Apply per-dimension preconditioning in tensor-heavy components (e.g., convolutional kernels, embeddings), improving conditioning without full matrix overhead.

- Tools/workflows: Exploit Shampoo’s left/right/tensor-mode preconditioners; avoid hand-crafted architectural-specific optimizers (e.g., K-FAC) when simplicity is preferred.

- Assumptions/dependencies: Tensor structure available; matrix powers (−1/4 for matrices, −1/(2k) for tensors) computed stably; diagonal fallbacks for extremely large modes.

- Educational and research use

- Sector: academia, education

- Application: Use Shampoo to teach structure-aware preconditioning, Kronecker-product calculus, and online convex optimization; include in coursework and reproducible benchmarks.

- Tools/workflows: Provide lab notebooks that compare SGD/AdaGrad/Adam/Shampoo; visualize trace-based bounds; demonstrate tensor matricization and contractions.

- Assumptions/dependencies: Access to Python/TensorFlow; datasets and baseline scripts; familiarity with basic linear algebra and optimization.

- MLOps-ready optimizer packaging

- Sector: software (platforms), open-source

- Application: Package Shampoo as an enterprise-ready optimizer module with default safe settings and memory-aware diagonal fallback.

- Tools/workflows: Create Keras/TensorFlow optimizer APIs; add configuration flags (epsilon, diagonal threshold, frequency of SVD updates); include telemetry.

- Assumptions/dependencies: Licensing and integration policies; internal build systems; teams trained to use the optimizer.

- On-device and resource-constrained training

- Sector: mobile/embedded, IoT

- Application: Use Shampoo’s diagonal fallback to precondition large modes while still benefiting from full preconditioning on smaller modes, enabling limited on-device training.

- Tools/workflows: Hybrid mode (full for small layers, diagonal for big ones); quantify device memory usage and per-step latency; use sparse updates where applicable.

- Assumptions/dependencies: Device supports necessary linear algebra ops; model sizes compatible with memory constraints; acceptable numerical precision on device.

- Faster iteration for applied ML teams

- Sector: industry across domains

- Application: Shorten model iteration cycles (experiments-to-insights) by achieving target metrics in fewer steps, improving developer productivity and release cadence.

- Tools/workflows: Standardize Shampoo in internal templates; track “time to target” KPI; couple with experiment tracking tools (Weights & Biases, MLflow).

- Assumptions/dependencies: Team adoption; consistent wins vs. incumbent optimizers on internal workloads.

- Compliance-friendly efficiency improvements

- Sector: policy, governance

- Application: Document training efficiency gains (less energy/compute) for ESG reports and AI governance audits.

- Tools/workflows: Maintain artifacts showing reduced epochs and energy usage with Shampoo; include optimizer choice in model cards.

- Assumptions/dependencies: Auditable metrics collection; organizational policies recognizing efficiency improvements.
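Several workflows above mention configuration flags (epsilon, diagonal threshold, frequency of SVD updates). A rough sketch of what such knobs and a periodically refreshed inverse root might look like follows. Everything here is hypothetical: `ShampooConfig`, `CachedInverseRoot`, and their defaults are invented for illustration and do not correspond to any existing library API; only the threshold of 1200 comes from this page.

```python
from dataclasses import dataclass
import numpy as np

@dataclass
class ShampooConfig:
    """Hypothetical knobs; names and defaults are illustrative, not from any library."""
    epsilon: float = 1e-4
    diagonal_threshold: int = 1200   # the threshold quoted on this page
    root_update_every: int = 10      # recompute inverse roots every N steps (assumed value)

class CachedInverseRoot:
    """Accumulates a statistic every step but refreshes its inverse root only periodically."""

    def __init__(self, dim, exponent, cfg):
        self.stat = cfg.epsilon * np.eye(dim)
        self.exponent = exponent
        self.cfg = cfg
        self.step = 0
        self.recomputations = 0
        self.root = self._compute_root()

    def _compute_root(self):
        eigvals, eigvecs = np.linalg.eigh(self.stat)
        self.recomputations += 1
        return (eigvecs * eigvals**self.exponent) @ eigvecs.T

    def update(self, gram):
        """Add this step's Gram matrix (e.g. G @ G.T) and return the possibly stale root."""
        self.stat = self.stat + gram
        self.step += 1
        if self.step % self.cfg.root_update_every == 0:
            self.root = self._compute_root()
        return self.root

# Toy usage: 20 steps with a refresh every 5 steps.
cfg = ShampooConfig(root_update_every=5)
left = CachedInverseRoot(dim=3, exponent=-0.25, cfg=cfg)
rng = np.random.default_rng(0)
for _ in range(20):
    G = rng.standard_normal((3, 2))
    root = left.update(G @ G.T)
```

The trade-off is staleness against cost: the statistic is always current, but the expensive eigendecomposition runs only once per refresh interval. How large that interval can be without hurting convergence is one of the open questions listed earlier.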

Long-Term Applications

The following items outline opportunities that require additional research, scaling work, or productization before wide deployment.

- Distributed and sharded Shampoo for large-scale training

- Sector: cloud/IT, software platforms

- Application: Design communication-efficient distributed versions that shard per-dimension preconditioners and amortize matrix power computations across devices.

- Tools/workflows: All-reduce for second-moment stats; asynchronous SVD updates; schedule-aware pipelines to overlap computation/communication.

- Assumptions/dependencies: Efficient multi-GPU/TPU primitives; numerically stable distributed matrix powers; fault tolerance.

- Hardware co-design for tensor preconditioning

- Sector: semiconductors, hardware accelerators

- Application: Add accelerator kernels for per-mode contractions and matrix powers (e.g., fast eigendecompositions/SVD), optimizing Shampoo’s critical path.

- Tools/workflows: Compiler support for tensor-mode ops; libraries with batched decompositions; mixed-precision strategies tuned for preconditioners.

- Assumptions/dependencies: Vendor support; ROI for specialized kernels; verification of numerical stability in low precision.

- Cross-tensor preconditioners capturing inter-layer correlations

- Sector: deep learning research, software

- Application: Extend Shampoo beyond block-diagonal (per-tensor) preconditioners to capture inter-tensor correlations, potentially improving convergence further.

- Tools/workflows: Structured Kronecker factorizations across layers; memory-efficient summaries of cross-layer stats; iterative low-rank updates.

- Assumptions/dependencies: Manageable memory/computation growth; effective approximations to avoid full-matrix blowup; robust numerical routines.

- Theoretical advances in non-convex settings

- Sector: academia, research

- Application: Develop convergence guarantees for non-convex objectives typical of deep networks, and investigate adaptive exponents or schedules per mode.

- Tools/workflows: New regret/proof techniques; empirical studies on saddle-point escapes; adaptivity based on curvature proxies.

- Assumptions/dependencies: Progress in non-convex theory; practical model diagnostics for curvature estimation.

- Integration into PyTorch and other ecosystems

- Sector: software, open-source

- Application: Mature PyTorch implementation with optimized linear algebra backends, making Shampoo broadly accessible.

- Tools/workflows: Torch-native SVD/eig with autograd; integration with distributed data-parallel APIs; recipe libraries for common architectures.

- Assumptions/dependencies: Community contributions; cross-framework parity tests; maintenance commitment.

- Communication-efficient second-order statistics for federated learning

- Sector: privacy, healthcare, finance

- Application: Use compact representations (sketches/low-rank summaries) of per-mode gradient covariance in federated settings to gain preconditioning benefits without excessive bandwidth.

- Tools/workflows: Secure aggregation for preconditioner stats; compression schemes; client-side diagonal/full hybrids.

- Assumptions/dependencies: Privacy constraints; acceptable client device resources; robust aggregation under heterogeneity.

- Carbon-aware training schedulers leveraging faster optimizers

- Sector: policy, energy, sustainability

- Application: Combine Shampoo with carbon-aware schedulers (train when grid is greener) and exploit fewer epochs to minimize emissions.

- Tools/workflows: Emissions forecasting integration; optimizer-aware scheduling; reporting to ESG dashboards.

- Assumptions/dependencies: Accurate carbon intensity data; orchestration systems; organizational policy adoption.

- Domain-specific optimizer variants

- Sector: robotics (real-time adaptation), healthcare (regulatory-grade models), finance (stress testing)

- Application: Tailor Shampoo’s preconditioner updates (e.g., frequency, mode-specific damping) to domain constraints like latency, safety, or compliance.

- Tools/workflows: Mode-wise update throttling; trust-region overlays; certification-ready training logs.

- Assumptions/dependencies: Domain requirements; regulator acceptance of optimizer changes; rigorous validation pipelines.

- Low-rank/approximate matrix-power routines

- Sector: software performance engineering

- Application: Replace full SVD/eigendecomposition with incremental low-rank updates or polynomial approximations to matrix powers to further reduce runtime/memory.

- Tools/workflows: Chebyshev/Padé approximants; randomized SVD; caching and reuse of factorizations across steps.

- Assumptions/dependencies: Approximation accuracy sufficient for stable training; numerical safeguards; performance gains justify complexity.

- Personalized and on-device learning at scale

- Sector: consumer tech, daily life

- Application: Enable efficient on-device personalization (keyboard, speech, recommendation) using resource-aware Shampoo variants for small fine-tunes that respect battery and memory.

- Tools/workflows: Adaptive diagonal/full mode selection; occasional full preconditioner refresh; battery-aware scheduling.

- Assumptions/dependencies: Devices capable of required ops; user privacy constraints; variability in user data and stability of gains.
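As an example of the approximate matrix-power routines mentioned in the list above, the sketch below uses a coupled Newton iteration for the inverse p-th root. This technique is not described in the paper summarized here; it is shown only as one iterative alternative to a full SVD or eigendecomposition. The function name, iteration cap, and tolerance are assumptions, and the Frobenius norm is used as a cheap upper bound on the spectral norm for the initial scaling.

```python
import numpy as np

def inverse_pth_root_newton(A, p, num_iters=100, tol=1e-12):
    """Approximate A**(-1/p) for symmetric positive definite A using only matrix products.

    Coupled Newton iteration: maintains M_k = A @ X_k**p and drives M_k to the identity,
    at which point X_k equals A**(-1/p).
    """
    n = A.shape[0]
    identity = np.eye(n)
    # Scale so that the eigenvalues of M_0 lie in (0, (p + 1) / 2].
    z = (1 + p) / (2 * np.linalg.norm(A))   # Frobenius norm bounds the spectral norm
    M = z * A
    X = z ** (1.0 / p) * identity
    for _ in range(num_iters):
        if np.max(np.abs(M - identity)) < tol:
            break
        T = ((p + 1) * identity - M) / p
        X = X @ T
        M = np.linalg.matrix_power(T, p) @ M
    return X

# Toy usage: compare against the eigendecomposition route on a small SPD matrix.
rng = np.random.default_rng(0)
B = rng.standard_normal((5, 5))
A = B @ B.T + np.eye(5)
X = inverse_pth_root_newton(A, p=4)

eigvals, eigvecs = np.linalg.eigh(A)
reference = (eigvecs * eigvals**-0.25) @ eigvecs.T
max_error = np.max(np.abs(X - reference))
```

The number of iterations needed grows with the condition number of the input, so badly conditioned statistics may call for a larger damping term or more iterations than this toy example uses.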
