- The paper introduces FuseMoE's modular architecture leveraging a novel Laplace gating mechanism to enhance fleximodal data fusion.

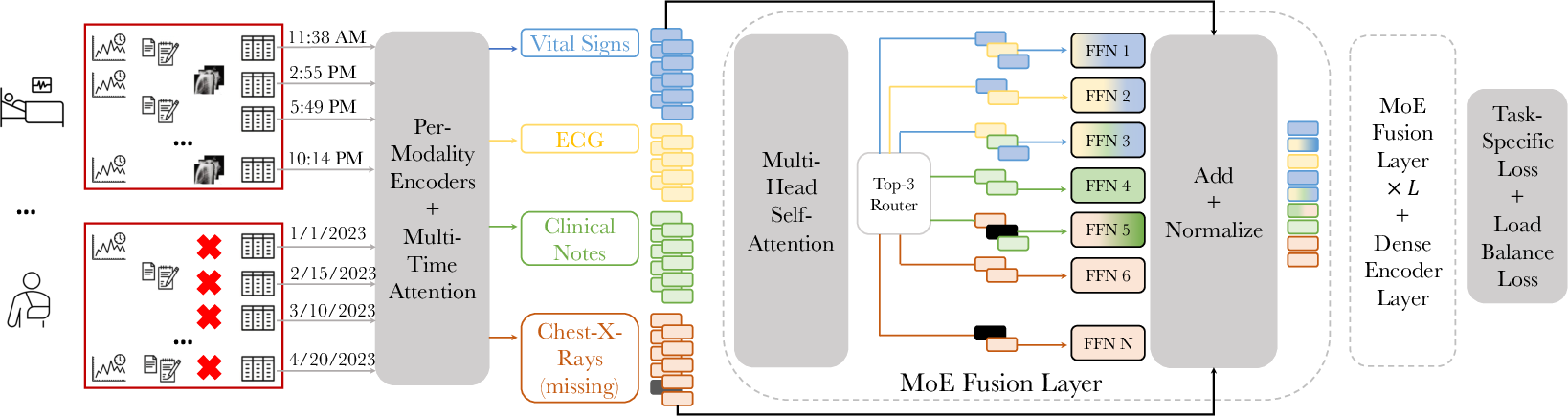

- It utilizes a discretized multi-time attention (mTAND) module to convert irregular temporal samples into consistent representations.

- Empirical validations on clinical datasets such as MIMIC-IV demonstrate improved prediction accuracy for in-hospital mortality and length-of-stay.

Introduction

"FuseMoE: Mixture-of-Experts Transformers for Fleximodal Fusion" addresses the challenges faced by machine learning models in handling multimodal data characterized by missing elements and temporal irregularity. In this context, a novel framework named FuseMoE is introduced, which incorporates a mixture-of-experts paradigm. This approach is particularly suitable for scenarios with diverse modalities that may include missing components and irregularly sampled trajectories. The work presents a unique gating function designed to manage these complexities effectively.

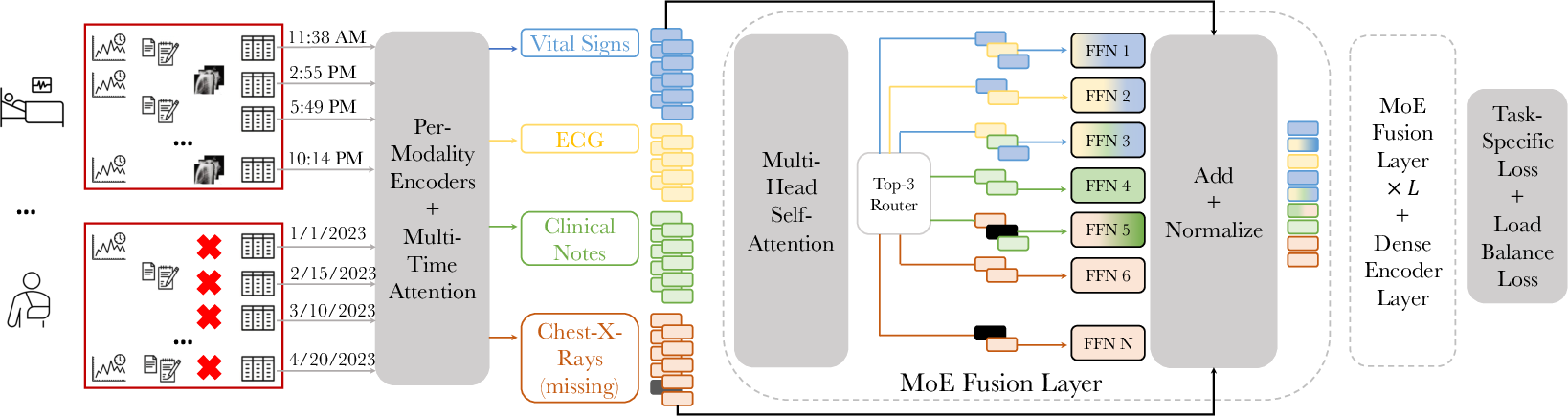

The Architecture: FuseMoE Framework

The core innovation of FuseMoE is a modular architecture for fusing FlexiModal data, meaning inputs that arrive as an arbitrary combination of modalities, some of which may be missing or irregularly sampled.

Figure 1: Addressing the challenge of FlexiModal Data in clinical scenarios, showcasing FuseMoE's capability to handle any combination of modalities including those with missing elements.

Sparse MoE Backbone

FuseMoE's backbone relies on sparsely gated Mixture-of-Experts layers, in which each input is routed to a small subset of experts so that different experts can specialize in different tasks and modality partitions. Instead of the classical Softmax gate, the model uses a novel Laplace gating function, which the paper associates with faster convergence and more accurate predictions. The gate scores each expert by the negative Euclidean distance between the input and the gating weights and keeps the K highest-scoring experts:

$$h_l(x) = \operatorname{Top-K}\left(-\lVert W - x \rVert_2\right)$$
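The gating rule above can be sketched in a few lines of plain Python. This is a minimal illustration rather than the authors' implementation: the function name `laplace_gate`, the representation of `W` as one weight vector per expert, and the softmax normalisation over the selected scores are all assumptions made for the example.

```python
import math

def laplace_gate(x, expert_weights, k):
    """Return (expert_index, gate_weight) pairs for the top-k experts.

    Each expert is scored by the negative Euclidean distance between the
    input x and that expert's weight vector, so nearer experts score higher.
    """
    scores = [-math.dist(w, x) for w in expert_weights]
    # Indices of the k highest-scoring (nearest) experts.
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    # Assumption: normalise the selected scores with a softmax.
    m = max(scores[i] for i in top)
    exps = [math.exp(scores[i] - m) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

x = [1.0, 0.0]                               # hypothetical token embedding
W = [[1.0, 0.0], [0.0, 1.0], [5.0, 5.0]]     # hypothetical expert weights
routes = laplace_gate(x, W, k=2)
```

Here the token coincides with the first expert's weight vector, so that expert receives the largest gate weight, and the distant third expert is not selected at all.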

Modality and Irregularity Encoder

To address irregular temporal sampling, FuseMoE employs a discretized multi-time attention (mTAND) module. The module uses temporal embeddings to map irregularly timed observations onto a consistent representation that can be fused with other data types.
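The idea of attending from fixed reference times to irregular observation times can be illustrated with a simplified, single-head sketch. It is not the mTAND module itself: the real module learns its time embeddings and uses multiple heads, whereas this example assumes a fixed sinusoidal embedding, and the names `time_embedding` and `discretize` are hypothetical.

```python
import math

def time_embedding(t, dim=8):
    """Fixed sinusoidal embedding of a scalar time (an assumption; mTAND learns its own)."""
    emb = []
    for j in range(dim // 2):
        freq = 1.0 / (10.0 ** (2 * j / dim))
        emb.extend([math.sin(freq * t), math.cos(freq * t)])
    return emb

def discretize(times, values, ref_times, dim=8):
    """Interpolate irregular (times, values) onto ref_times with time attention."""
    keys = [time_embedding(t, dim) for t in times]
    out = []
    for r in ref_times:
        query = time_embedding(r, dim)
        # Scaled dot-product similarity between the reference time and each observation time.
        logits = [sum(a * b for a, b in zip(query, key)) / math.sqrt(dim) for key in keys]
        m = max(logits)
        weights = [math.exp(l - m) for l in logits]
        z = sum(weights)
        # Attention-weighted average of the observed values.
        out.append(sum(w * v for w, v in zip(weights, values)) / z)
    return out

obs_times = [0.0, 0.7, 3.1, 4.0]      # irregular sampling times (made-up data)
obs_values = [1.0, 2.0, 0.5, 0.0]
ref = [0.0, 1.0, 2.0, 3.0, 4.0]       # regular grid of reference times
regular = discretize(obs_times, obs_values, ref)
```

Whatever the spacing of the observations, the output has one value per reference time, which is what allows the series to be combined with other modalities downstream.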

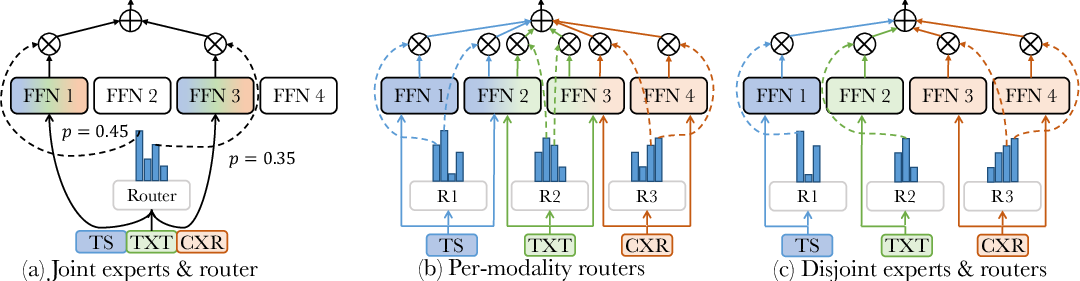

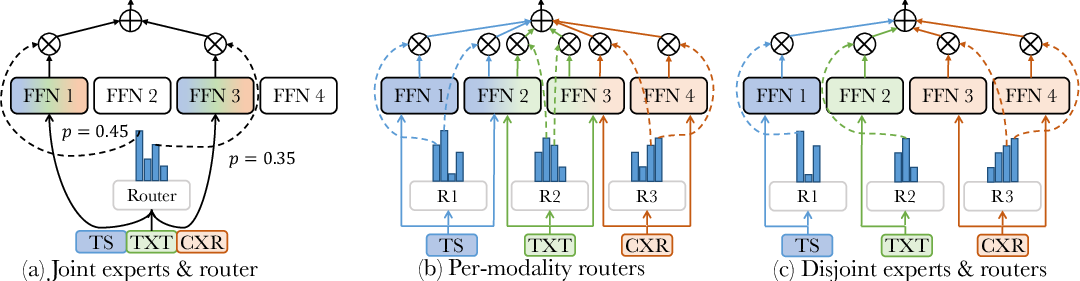

MoE Fusion Layer and Router Design

Figure 2: Top-K router designs for multimodal fusion, showcasing the adaptability of FuseMoE to utilize modality-specific processing structures for improved performance.

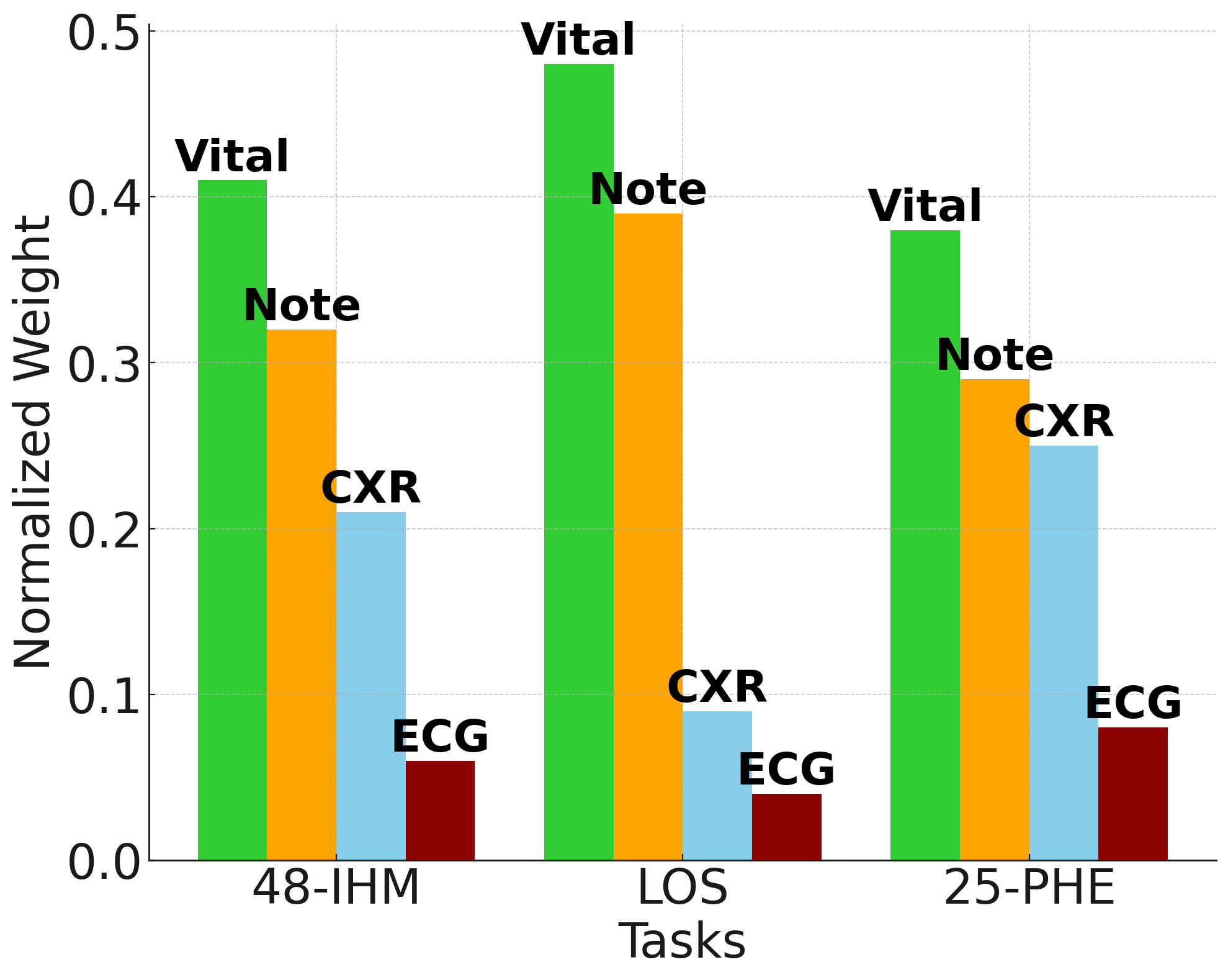

FuseMoE supports multiple router configurations, including a joint router shared across modalities and modality-specific routers. These options let the model scale to an arbitrary number of modalities while handling missing data through dynamic embedding adjustments.
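The difference between a joint router and modality-specific routers can be sketched as follows. This is an illustrative toy, not the paper's code: the names `top_k_experts` and `route`, the distance-based scoring, and the choice to simply skip missing modalities are assumptions made for the example.

```python
import math

def top_k_experts(x, centers, k):
    """Indices of the k experts whose centers are nearest to x."""
    order = sorted(range(len(centers)), key=lambda i: math.dist(centers[i], x))
    return order[:k]

def route(sample, routers, k=1, joint=None):
    """Route each available modality embedding to its top-k experts.

    sample:  dict of modality name -> embedding, or None if the modality is missing
    routers: dict of modality name -> expert centers (modality-specific routers)
    joint:   optional shared expert centers; if given, every modality uses them
    """
    assignments = {}
    for name, emb in sample.items():
        if emb is None:
            continue  # assumption: a missing modality is skipped
        centers = joint if joint is not None else routers[name]
        assignments[name] = top_k_experts(emb, centers, k)
    return assignments

# Hypothetical patient record with one missing modality.
sample = {"vitals": [0.1, 0.9], "notes": [0.8, 0.2], "cxr": None}
routers = {
    "vitals": [[0.0, 1.0], [1.0, 0.0]],
    "notes": [[0.0, 1.0], [1.0, 0.0]],
    "cxr": [[0.0, 0.0]],
}
per_modality = route(sample, routers, k=1)
shared = route(sample, routers, k=2, joint=[[0.0, 1.0], [1.0, 0.0], [0.5, 0.5]])
```

With modality-specific routers each modality selects from its own expert pool, while the joint configuration lets all modalities compete for the same experts. In both cases the missing modality produces no routing decision.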

Theoretical Justifications

The theoretical contributions of the paper establish the convergence behavior of the Laplace gating mechanism and the conditions under which it outperforms Softmax gating. The analysis indicates that Laplace gating yields more favorable parameter estimation rates, particularly in the Gaussian mixture-of-experts setting.
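For orientation, one common way to write a Gaussian mixture-of-experts model with a Laplace gate is shown below. The notation is an assumption for illustration and may differ from the paper's: $k$ is the number of experts, $W_i$ is the gating parameter of expert $i$, and $a_i$, $b_i$, $\sigma_i$ are hypothetical names for that expert's regression weights, bias, and variance parameter.

$$p(y \mid x) = \sum_{i=1}^{k} \frac{\exp\left(-\lVert W_i - x \rVert_2\right)}{\sum_{j=1}^{k} \exp\left(-\lVert W_j - x \rVert_2\right)} \, \mathcal{N}\left(y \mid a_i^{\top} x + b_i, \sigma_i\right)$$

Under this formulation, a parameter estimation rate describes how quickly estimates of $W_i$, $a_i$, $b_i$, and $\sigma_i$ approach their true values as the sample size grows.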

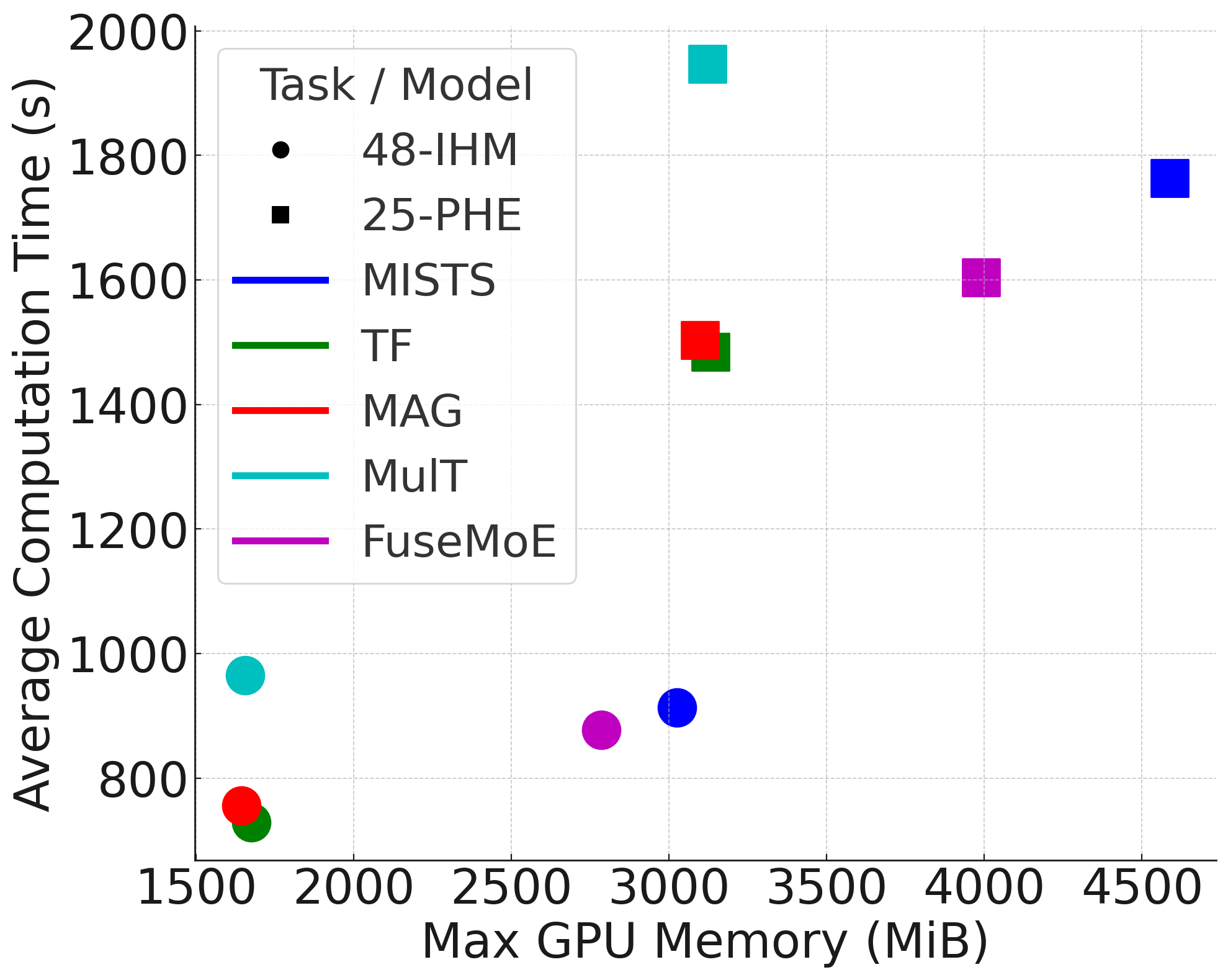

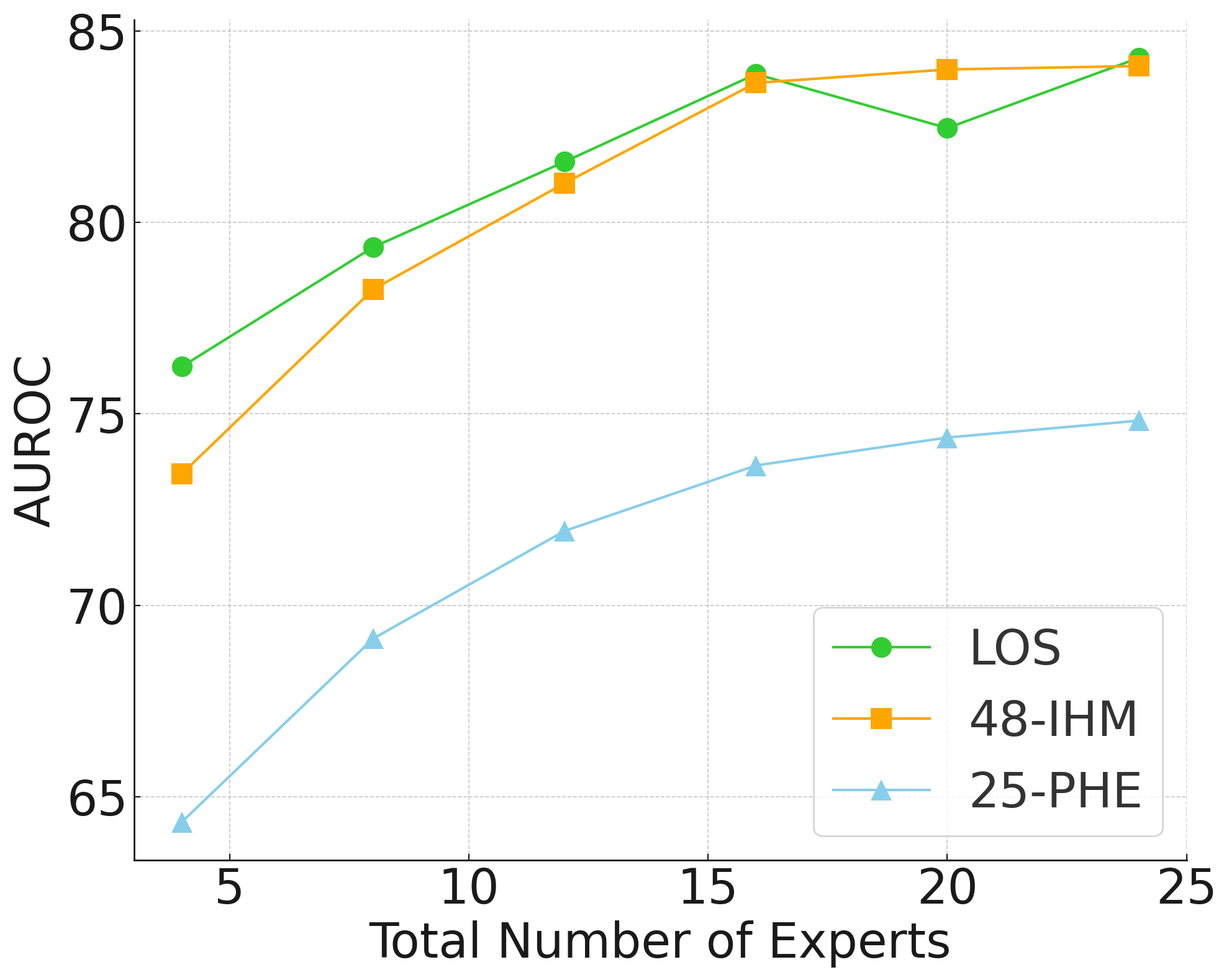

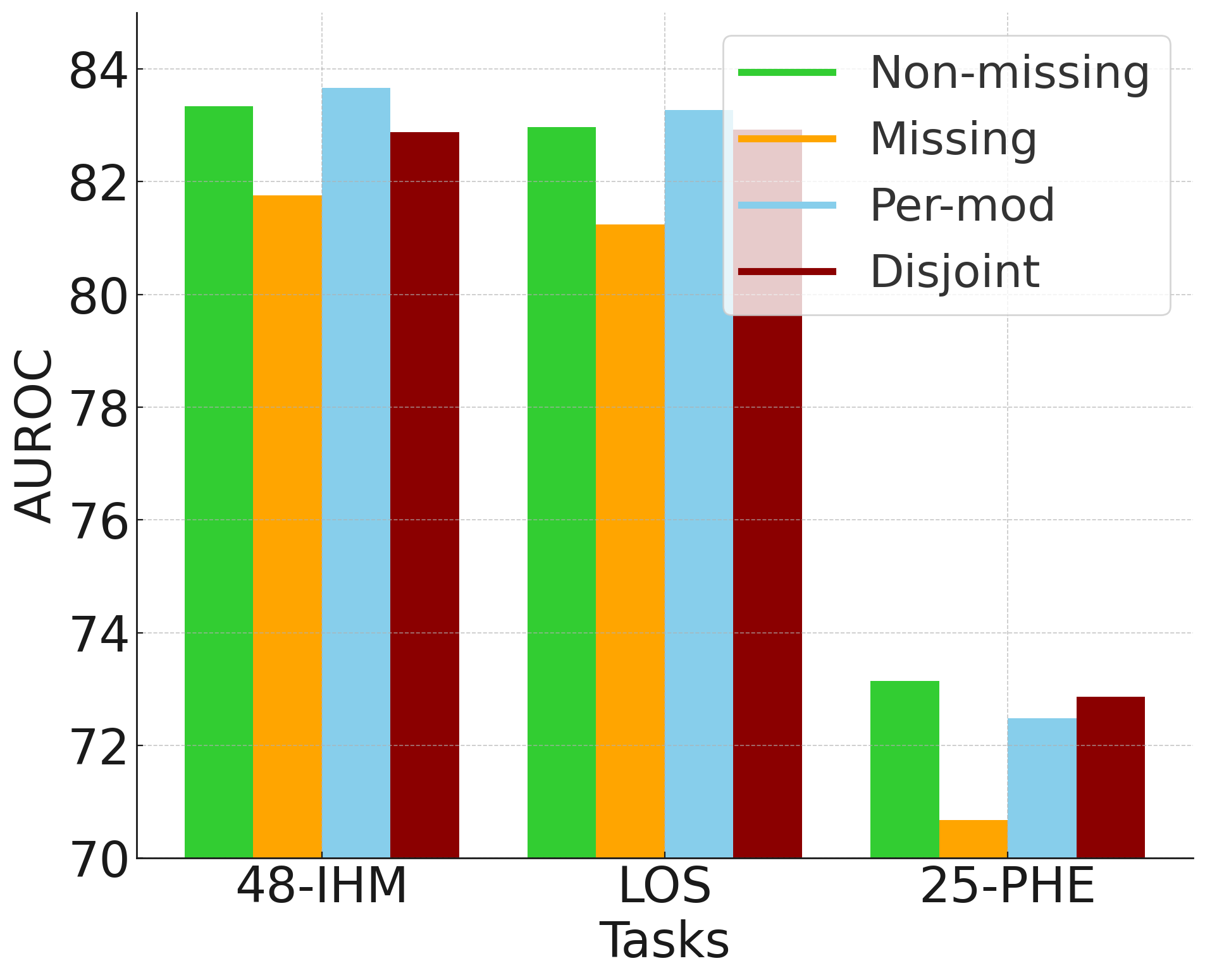

Empirical Evaluation: Clinical Validations

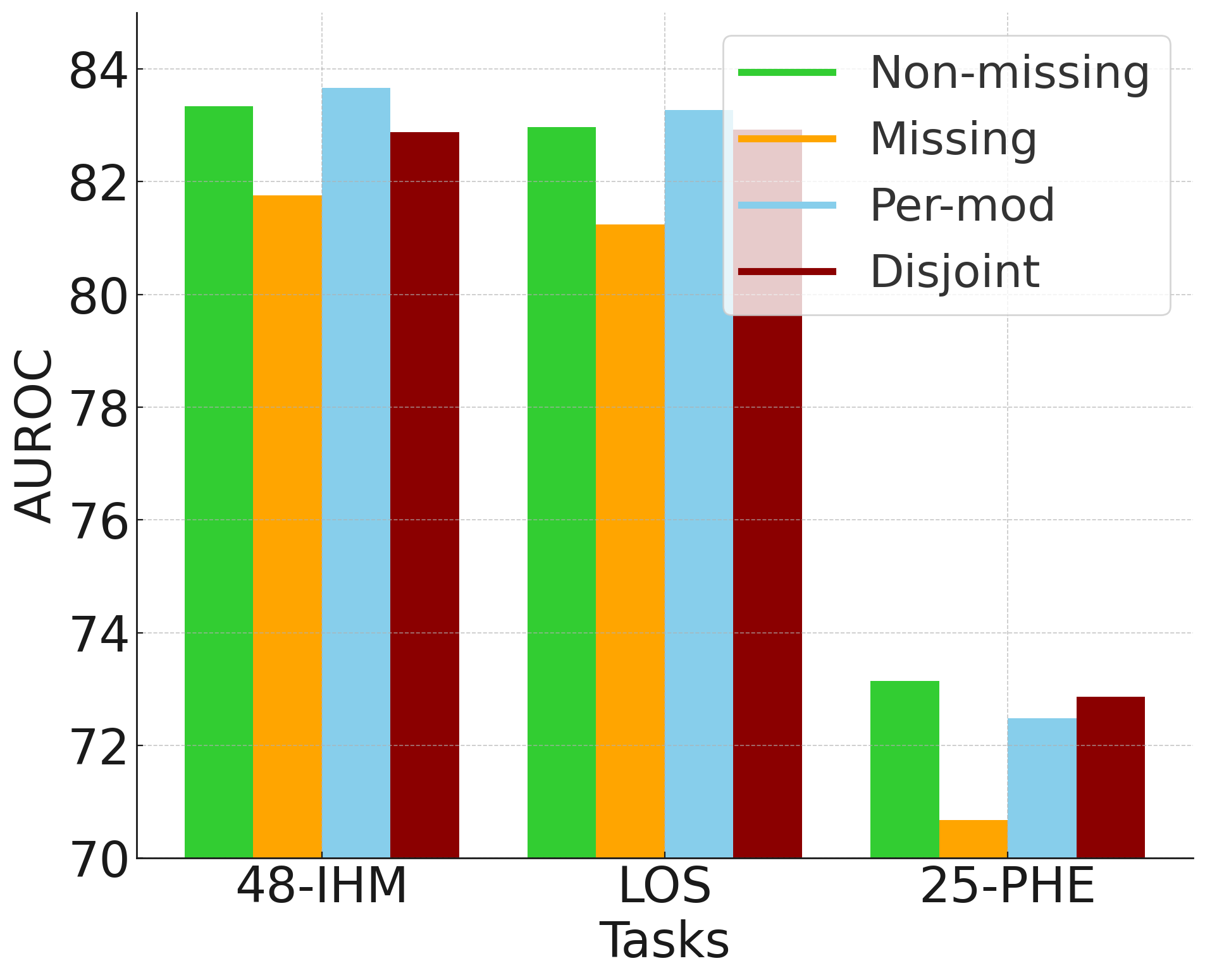

In experiments on clinical datasets such as MIMIC-IV, FuseMoE improves prediction accuracy on several tasks, including in-hospital mortality (48-IHM) and length-of-stay (LOS) prediction, while handling data with varying degrees of missingness.

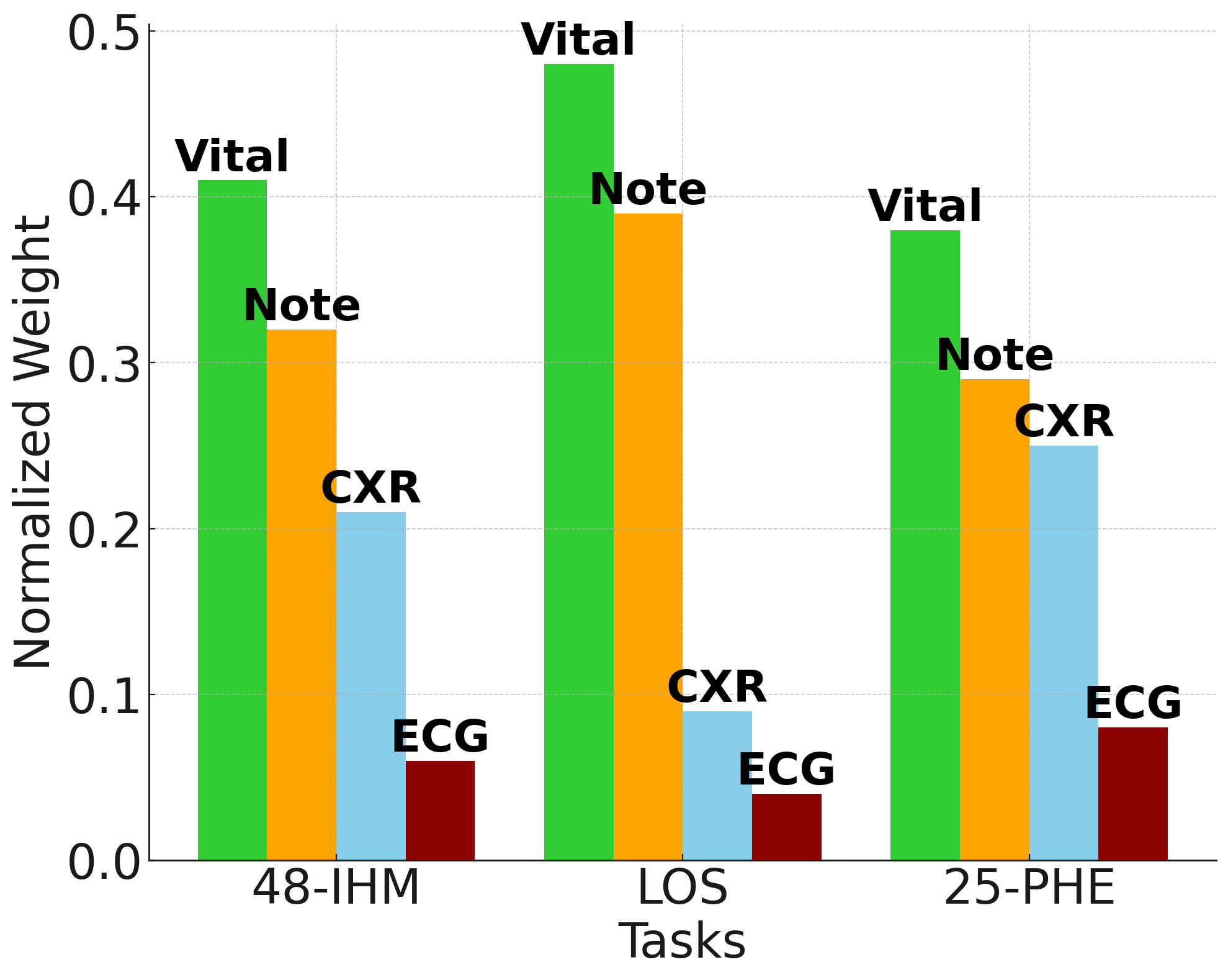

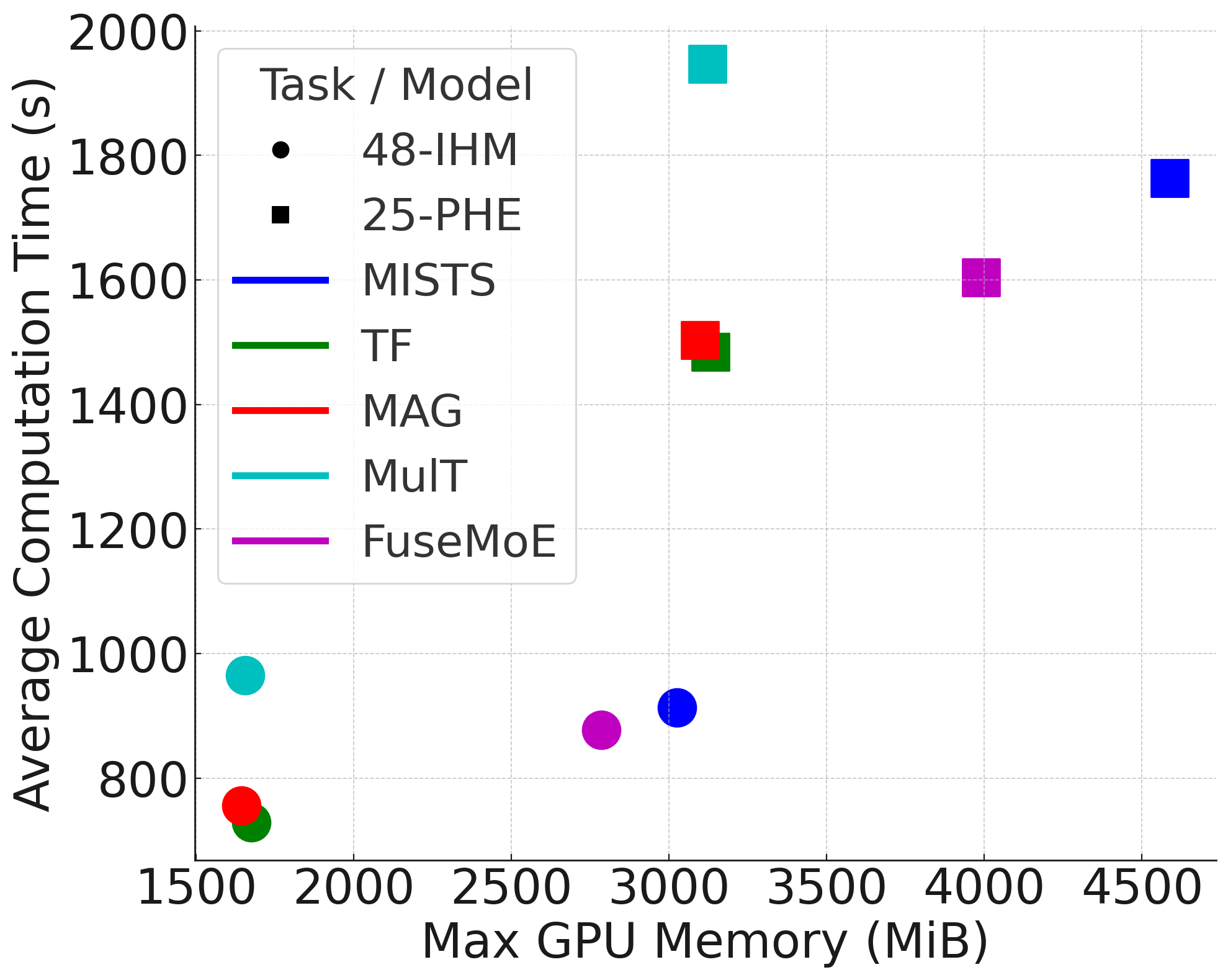

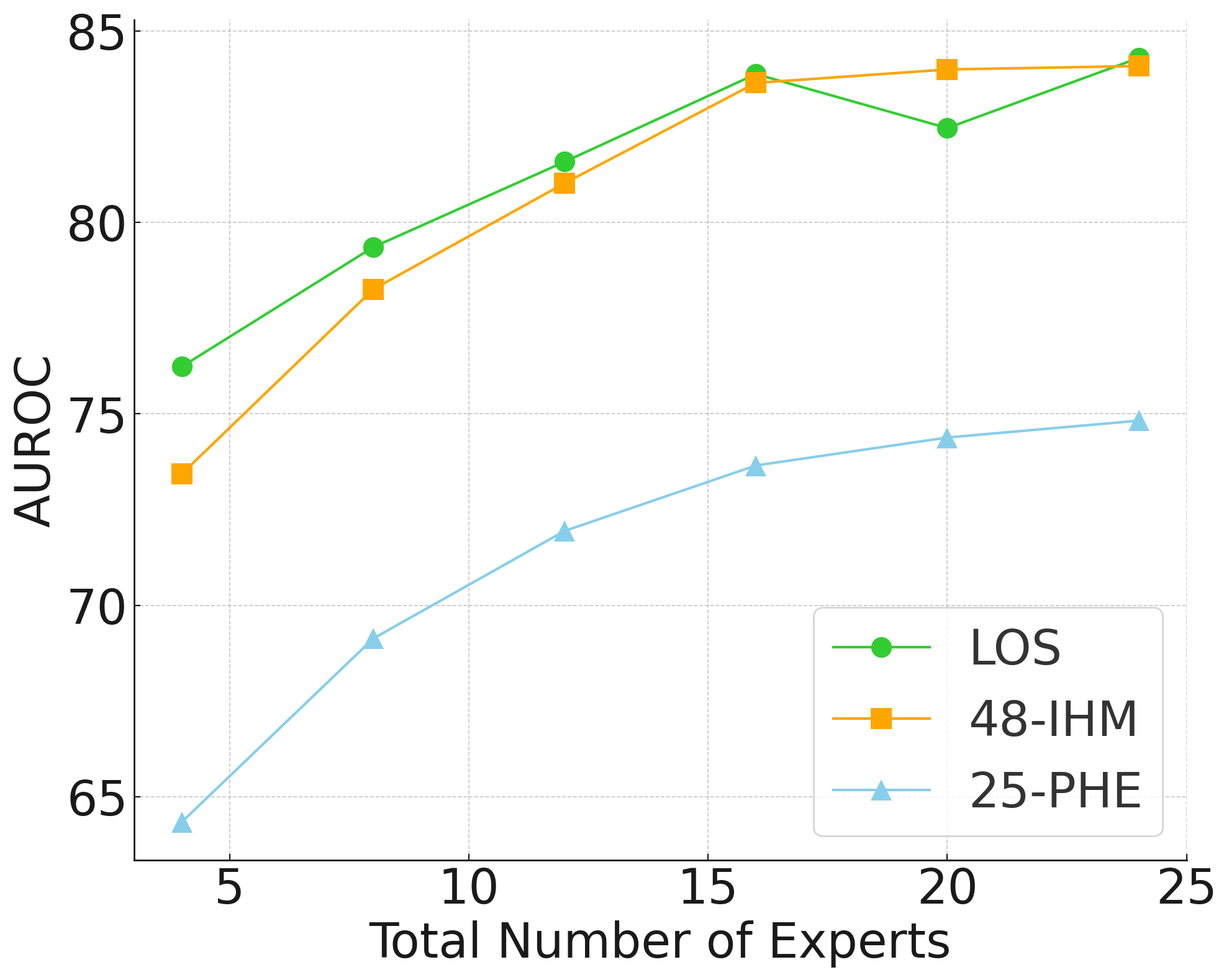

Figure 3: Results of ablation studies showing computational efficiency and the enhancement brought by employing per-modality routers and entropy regularization in managing missing modalities.

Conclusion

FuseMoE stands as a significant advancement in the fusion of multimodal data, addressing key challenges in managing data irregularities and missing modalities with a theoretically robust and empirically validated approach. Future explorations could focus on expanding the scope of modality encoders and exploring additional critical application domains.

This model not only enhances predictive accuracy in clinical settings but also sets a foundation for further innovations in other domains requiring flexible data fusion strategies.