- The paper introduces Infinigen, a procedural system that generates infinite, photorealistic natural scenes with high geometric fidelity via modular asset generators.

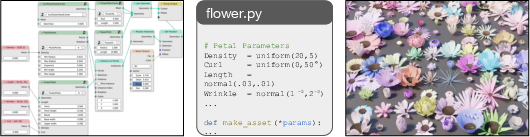

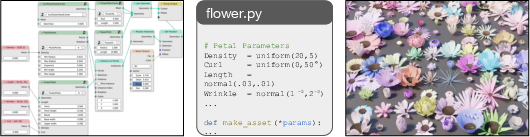

- It employs Blender integration and a node transpiler to convert artist node graphs into procedural Python code, enhancing asset diversity and control.

- Quantitative benchmarks show Infinigen-trained models achieve lower stereo matching errors on natural scenes, validating its domain-specific adaptability.

Infinite Photorealistic Worlds using Procedural Generation: Infinigen System

Introduction and Motivation

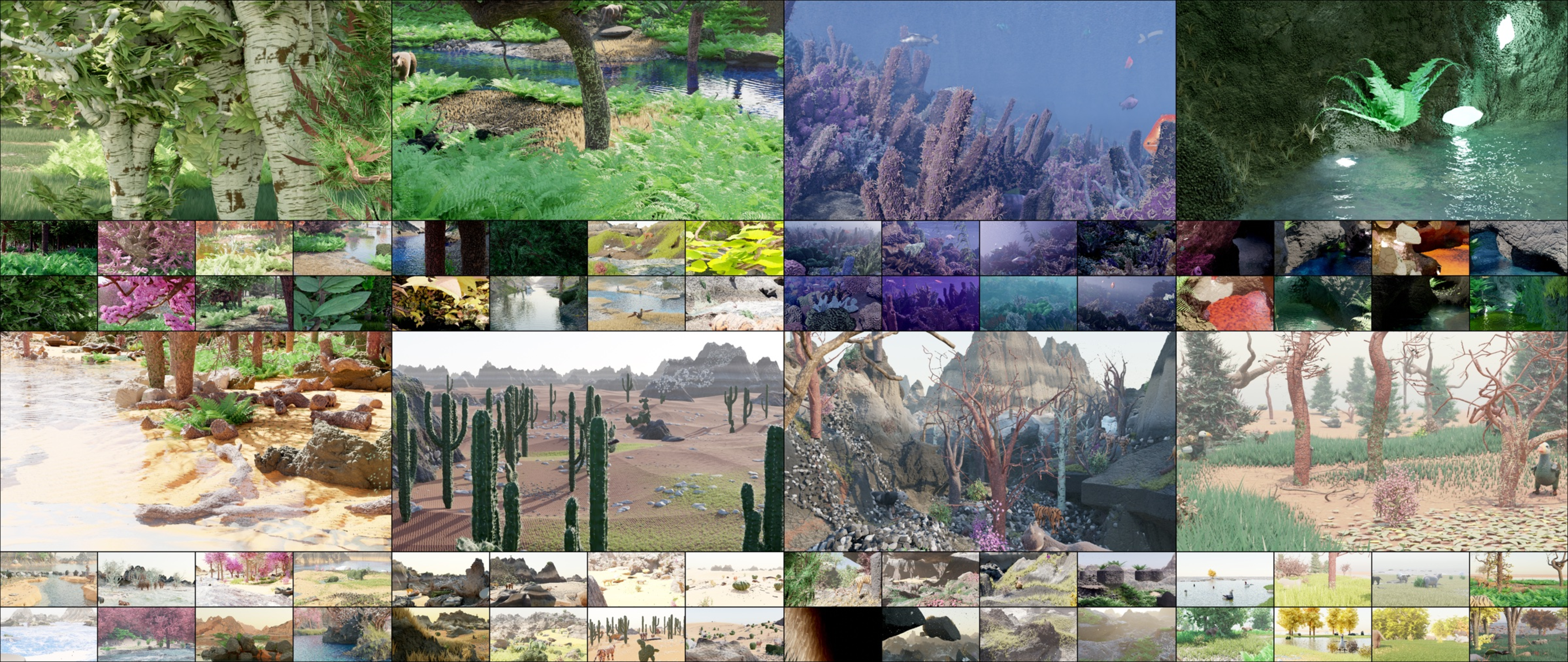

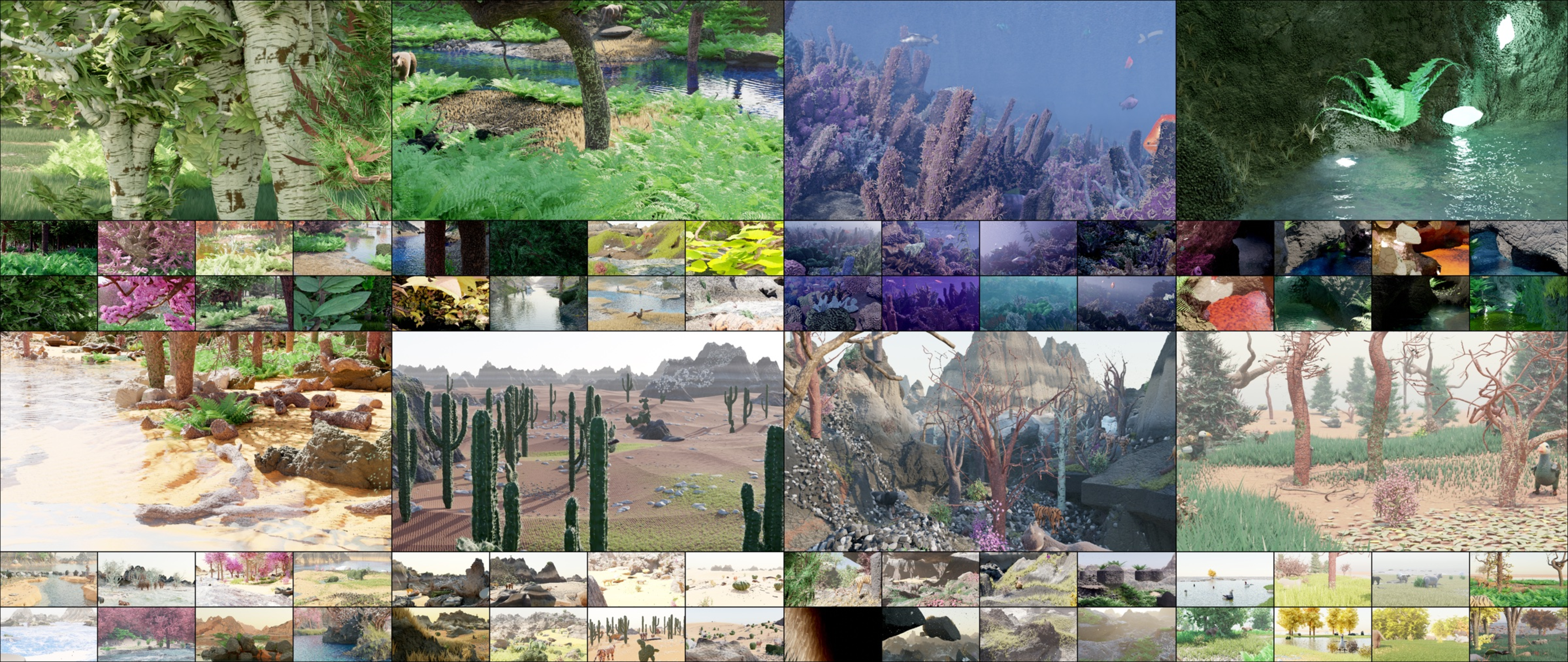

The Infinigen system presents a fully procedural approach to generating photorealistic 3D scenes covering a wide spectrum of natural world content—terrains, plants, animals, and environmental phenomena. This work addresses persistent limitations in synthetic dataset diversity and realism, particularly for natural scenes, in computer vision research. Existing sources overwhelmingly rely on finite asset libraries and frequently focus on urban or indoor environments, constraining model generalization. Infinigen distinguishes itself through comprehensive proceduralism, enabling infinite variation at geometric, material, and semantic levels.

Figure 1: Random, non-cherry-picked images of generated natural scenes illustrating scene diversity and photorealism.

System Architecture and Procedural Methodology

Blender Integration and Node Transpilation

Infinigen builds on Blender's open-source ecosystem for both geometric and node-based asset specification. Core primitives (geometry, shaders, particles) are manipulated through Blender's Python API, and assets can be specified either graphically or programmatically. A notable contribution is the Node Transpiler, a tool that converts artist-composed node graphs into procedural Python code. The generated code allows randomization not only of input parameters but also of graph structure, which supports community extension and modular asset control.

Figure 2: Artist-friendly node graphs are automatically transpiled to procedural Python code to generate assets, bridging artist and technical workflows.
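The idea of transpiling a node graph into code can be illustrated with a toy example. The sketch below is not Infinigen's Node Transpiler and does not use Blender's API; the graph format, the `OPS` table, and the `transpile` function are all hypothetical names chosen for illustration. It walks a small dependency graph and emits a Python function whose constant nodes become keyword arguments, which is what makes them easy to randomize afterwards.

```python
# Hypothetical toy graph format: node name -> (operation, list of inputs).
# An input is either the name of another node or a numeric literal.
GRAPH = {
    "noise_scale": ("value", [2.5]),
    "height": ("value", [1.0]),
    "scaled": ("multiply", ["noise_scale", "height"]),
    "offset": ("add", ["scaled", 0.5]),
}

# Binary operations supported by this toy transpiler.
OPS = {"add": "+", "multiply": "*"}


def transpile(graph, output, func_name="build_asset"):
    """Emit Python source for a function that recomputes `output`."""
    lines, params, emitted = [], [], set()

    def visit(name):
        if name in emitted:
            return
        op, inputs = graph[name]
        for arg in inputs:
            if isinstance(arg, str):
                visit(arg)  # emit dependencies before the node itself
        if op == "value":
            # Constant nodes become keyword parameters with their
            # original value as the default.
            params.append(f"{name}={inputs[0]!r}")
        else:
            lhs, rhs = (a if isinstance(a, str) else repr(a) for a in inputs)
            lines.append(f"    {name} = {lhs} {OPS[op]} {rhs}")
        emitted.add(name)

    visit(output)
    header = f"def {func_name}({', '.join(params)}):"
    return "\n".join([header, *lines, f"    return {output}"])
```

Calling `transpile(GRAPH, "offset")` returns the source of a function `build_asset(noise_scale=2.5, height=1.0)`. Executing that source and calling the function with different keyword values is the toy equivalent of randomizing a transpiled asset's input parameters.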

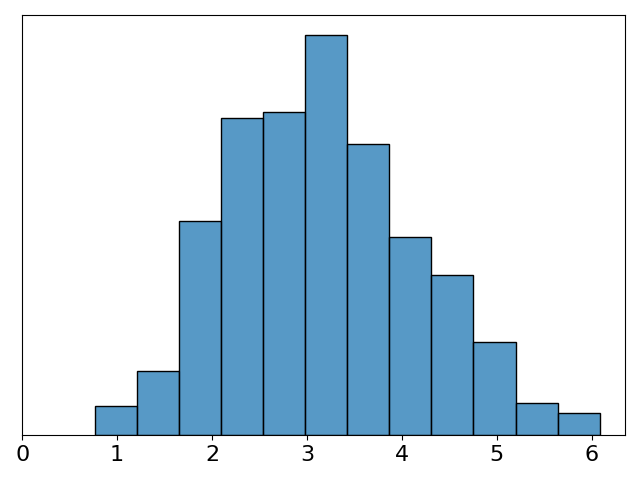

Asset Generators and Diversity Quantification

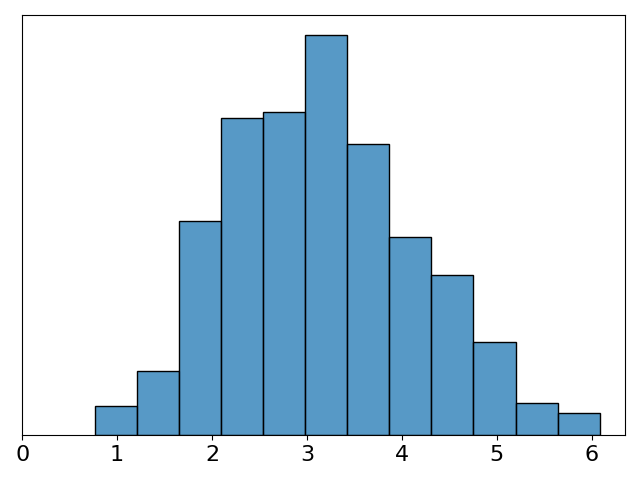

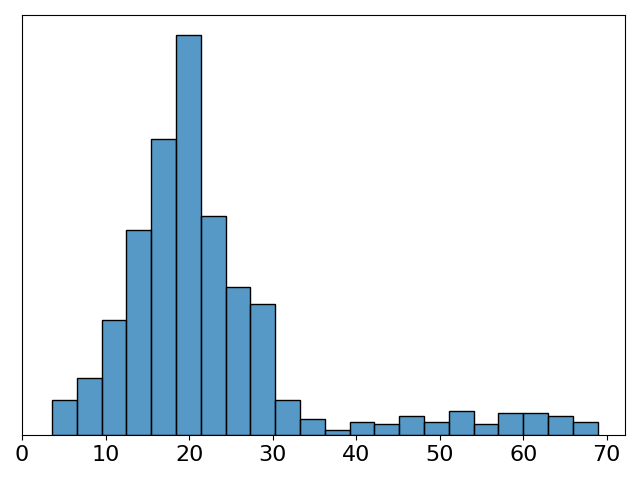

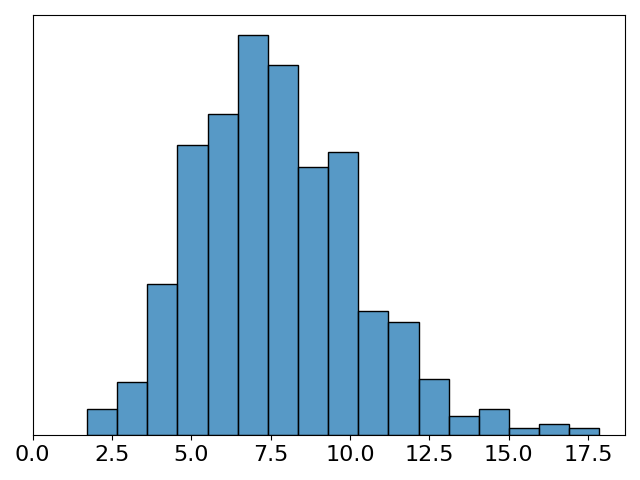

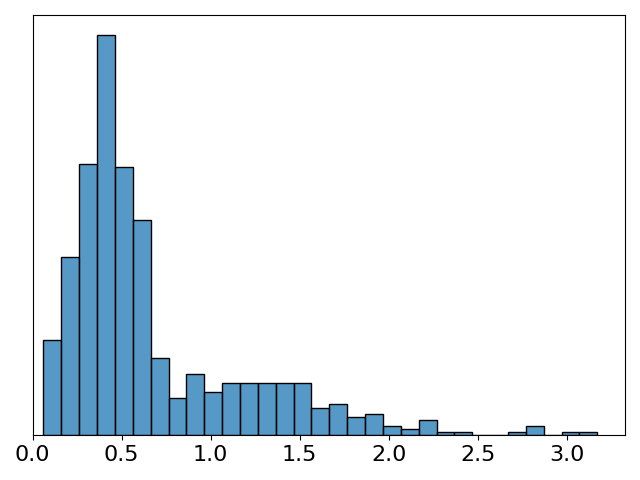

Infinigen organizes asset generation into probabilistic subsystems specialized for terrain, materials, weather, rocks, flora, fauna, and compositional scatter. Each generator exposes a set of high-level, human-interpretable parameters, counted as degrees of freedom (DOF). For example, terrain generators may parametrize elevation profiles, erosion rates, and noise scales, while tree generators expose growth height, branching morphology, and bark and leaf parameters. Internal (microscopic) randomness further increases diversity beyond what these named parameters capture.

To quantify this, the system provides 182 generators with a total of 1070 named, independently tunable procedural parameters. Terrain and weather are often simulation-based (e.g., SDF evaluation via marching cubes, FLIP fluid, particle systems), so their internal complexity exceeds what the explicit DOF count reflects.
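A minimal sketch of what a generator with named, interpretable parameters might look like is given below. `TreeParams`, `TreeGenerator`, the parameter names, and the sampling ranges are all assumptions made for illustration; they are not taken from Infinigen's code. The point is only that a seed deterministically selects a set of high-level parameters, so the same seed reproduces the same asset description.

```python
import random
from dataclasses import dataclass


@dataclass
class TreeParams:
    # Hypothetical high-level parameters, one field per degree of freedom.
    height_m: float
    branch_angle_deg: float
    n_branch_levels: int


class TreeGenerator:
    """Hypothetical generator: a seed deterministically picks the parameters."""

    def __init__(self, seed: int):
        # A private RNG keeps this generator independent of global state.
        self.rng = random.Random(seed)

    def sample_params(self) -> TreeParams:
        return TreeParams(
            height_m=self.rng.uniform(2.0, 30.0),
            branch_angle_deg=self.rng.gauss(35.0, 10.0),
            n_branch_levels=self.rng.randint(2, 6),
        )
```

Two generators built with the same seed return equal `TreeParams`, while different seeds will generally give different trees. A user who wants control rather than randomness could construct `TreeParams` directly.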

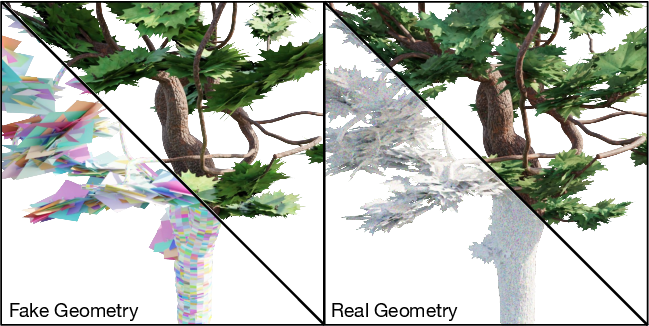

Geometric Fidelity and Ground Truth Extraction

Real vs. Illusory Geometry

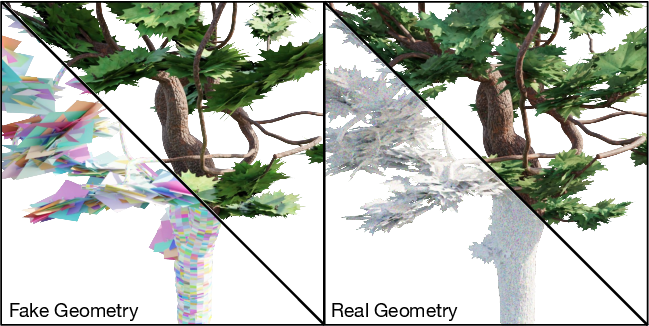

A distinguishing feature of Infinigen is its commitment to geometric realism. Unlike game asset design, where bump/displacement mapping, alpha textures, and low-poly meshes fake surface detail, every visible detail in Infinigen is physically realized as mesh geometry.

Figure 3: Comparison of conventional real-time optimized assets using shading tricks versus Infinigen assets with explicit high-resolution geometry.

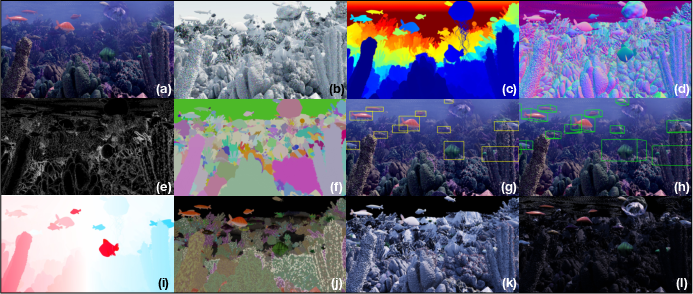

Ground Truth Rendering

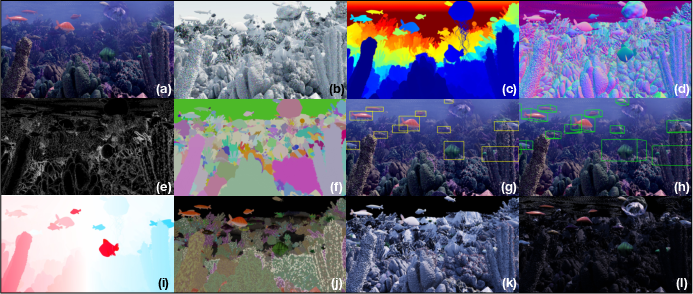

The system enables direct extraction of comprehensive ground truth from scene geometry rather than renderer byproducts—yielding exact depth, surface normals, occlusion boundaries, instance segmentation masks, and 2D/3D bounding boxes. This approach eliminates artifacts and ambiguities arising from volumetric rendering effects, translucency, and post-process blurring common in prior pipelines.

Figure 4: Ground truth outputs extracted directly from mesh: depth, normals, segmentation, bounding boxes, optical flow, and material parameters.
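To make "ground truth from geometry" concrete, the sketch below computes two of the listed quantities, a surface normal and a depth value, directly from triangle vertices. It is a generic geometric illustration in plain Python under simple assumptions (a pinhole camera described by a position and a unit forward vector); the function names are hypothetical and this is not Infinigen's extraction code.

```python
import math


def sub(a, b):
    """Component-wise difference of two 3D points."""
    return tuple(x - y for x, y in zip(a, b))


def cross(a, b):
    """Cross product of two 3D vectors."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def face_normal(v0, v1, v2):
    """Unit normal of a triangle with counter-clockwise vertex order."""
    n = cross(sub(v1, v0), sub(v2, v0))
    length = math.sqrt(sum(c * c for c in n))
    return tuple(c / length for c in n)


def point_depth(point, cam_pos, cam_forward):
    """Depth of a point: its offset from the camera projected onto the
    camera's unit forward axis (assumed to be normalized by the caller)."""
    offset = sub(point, cam_pos)
    return sum(o * f for o, f in zip(offset, cam_forward))
```

Because both values come from vertex positions alone, they are unaffected by translucency, volumetrics, or post-process blur, which is the property the paragraph above attributes to mesh-based ground truth.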

Coverage: Natural Assets and Scene Complexity

Vegetation and Terrain

Procedural vegetation generators span small plants, flowers, leaves, mushrooms, cacti, ferns, and extensive tree species, parametrized via growth algorithms (random walks, space colonization), shape morphing, and domain-specific simulation. Terrain composition utilizes physical simulation and fractal noise, supporting explicit modeling of elements such as caves (L-systems), eroded rocks, boulders, tiled landscapes, fluid dynamics (FLIP for water/lava), and volumetric clouds.

Figure 5: Random, non-cherry-picked terrain-only scenes sampled across mountains, arctic, underwater, caves, and other biomes.
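Fractal noise, mentioned above as one ingredient of terrain composition, is commonly built by summing several octaves of a smooth noise function at increasing frequency and decreasing amplitude. The sketch below shows that general technique (fractional Brownian motion over value noise) in plain Python. It is an illustration only: the hashing constants, function names, and default octave settings are assumptions, not Infinigen's terrain implementation.

```python
import math
import random


def lattice(ix, iy, seed):
    """Deterministic pseudo-random value in [0, 1) at an integer grid point."""
    key = (ix * 73856093) ^ (iy * 19349663) ^ (seed * 83492791)
    return random.Random(key).random()


def lerp(a, b, t):
    return a + (b - a) * t


def smoothstep(t):
    return t * t * (3.0 - 2.0 * t)


def value_noise(x, y, seed=0):
    """Smoothly interpolate the lattice values around (x, y)."""
    x0, y0 = math.floor(x), math.floor(y)
    tx, ty = smoothstep(x - x0), smoothstep(y - y0)
    row0 = lerp(lattice(x0, y0, seed), lattice(x0 + 1, y0, seed), tx)
    row1 = lerp(lattice(x0, y0 + 1, seed), lattice(x0 + 1, y0 + 1, seed), tx)
    return lerp(row0, row1, ty)


def fbm(x, y, seed=0, octaves=5, lacunarity=2.0, gain=0.5):
    """Sum octaves of value noise; the result is normalized to [0, 1]."""
    total, norm, amplitude, frequency = 0.0, 0.0, 1.0, 1.0
    for i in range(octaves):
        total += amplitude * value_noise(x * frequency, y * frequency, seed + i)
        norm += amplitude
        amplitude *= gain          # each octave contributes less height
        frequency *= lacunarity    # and adds finer detail
    return total / norm
```

Sampling `fbm` on a regular grid gives a height field. Parameters such as `octaves` and `gain` play the role of the "noise scales" that a terrain generator might expose.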

Fauna and Creature Generation

Creatures are parameterized by genome trees encoding anatomical topology, part parameters, attachment, and rigging. Mesh synthesis uses NURBS and node-graph tools. Animation rigs are procedurally generated, and physical simulation is available for skin and hair articulation. The pipeline supports both realistic and combinatorial organism synthesis for maximal diversity.

Figure 6: Automated genome-to-mesh pipeline for creature generation across classes—with sample carnivores, herbivores, birds, beetles, and fish.
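One way to picture a genome tree is as a recursive data structure in which each node is a body part with its own parameters and an attachment to its parent. The sketch below is a hypothetical, simplified encoding written for illustration; the field names (`params`, `attach_at`) and the example quadruped are assumptions and do not reproduce Infinigen's actual genome format.

```python
from dataclasses import dataclass, field


@dataclass
class PartNode:
    """One anatomical part in a hypothetical creature genome tree."""
    name: str
    params: dict                      # part-specific shape parameters
    attach_at: float = 0.0            # normalized position along the parent
    children: list = field(default_factory=list)


def count_parts(node: PartNode) -> int:
    """Total number of parts in the subtree rooted at `node`."""
    return 1 + sum(count_parts(child) for child in node.children)


def part_paths(node: PartNode, prefix: str = "") -> list:
    """List every part as a slash-separated path from the root."""
    path = f"{prefix}/{node.name}" if prefix else node.name
    paths = [path]
    for child in node.children:
        paths.extend(part_paths(child, path))
    return paths


# A hand-written example genome: body with head, tail, and four legs.
quadruped = PartNode("body", {"length": 1.2, "radius": 0.3}, children=[
    PartNode("head", {"size": 0.25}, attach_at=1.0),
    PartNode("tail", {"length": 0.6}, attach_at=0.0),
    *[PartNode(f"leg_{i}", {"length": 0.7}, attach_at=a)
      for i, a in enumerate([0.2, 0.2, 0.8, 0.8])],
])
```

Swapping subtrees between genomes, or resampling the `params` of individual nodes, is the kind of operation that would yield the realistic and combinatorial variation described above.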

Scene Composition and Scalability

Compositional Scatters

Dense scene composition is achieved by procedural scattering—populating surfaces densely with compatible assets (logs, leaves, rocks), reflecting realistic ecological ground cover.

Figure 7: Dense surface coverage transforms terrain into realistic forest floors, seafloors, grasslands through procedural asset scattering.

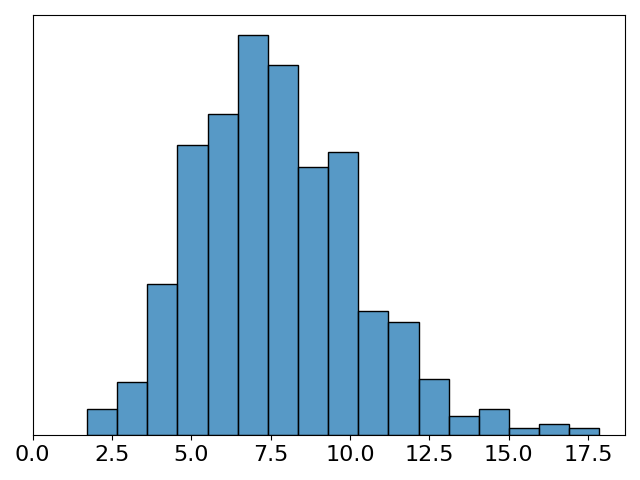

Dynamic Resolution Scaling

Resolution scaling evaluates procedural asset geometry at adaptive resolution depending on camera distance, ensuring mesh face size matches pixel granularity—optimizing memory and maintaining visual detail.

Figure 8: Visualization of triangle size scaling with camera distance; consistent image quality is maintained by dynamic mesh resolution adjustment.
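The relationship between camera distance and mesh face size can be worked out from basic perspective geometry. For a pinhole camera with horizontal field of view θ and an image W pixels wide, one pixel at distance d covers roughly 2·d·tan(θ/2)/W world units. The sketch below applies that formula; the function name and the `pixels_per_face` knob are assumptions for illustration, and the exact criterion Infinigen uses may differ.

```python
import math


def target_edge_length(distance, fov_deg, image_width_px, pixels_per_face=1.0):
    """World-space edge length whose projection spans `pixels_per_face` pixels.

    Assumes a pinhole camera and a surface roughly facing the camera.
    """
    pixel_footprint = (
        2.0 * distance * math.tan(math.radians(fov_deg) / 2.0) / image_width_px
    )
    return pixel_footprint * pixels_per_face
```

With a 90° field of view and a 1000-pixel-wide image, a surface 10 units away gets a target edge length of about 0.02 units, and doubling the distance doubles the allowed edge length. Distant geometry can therefore be evaluated far more coarsely without a visible change in the image.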

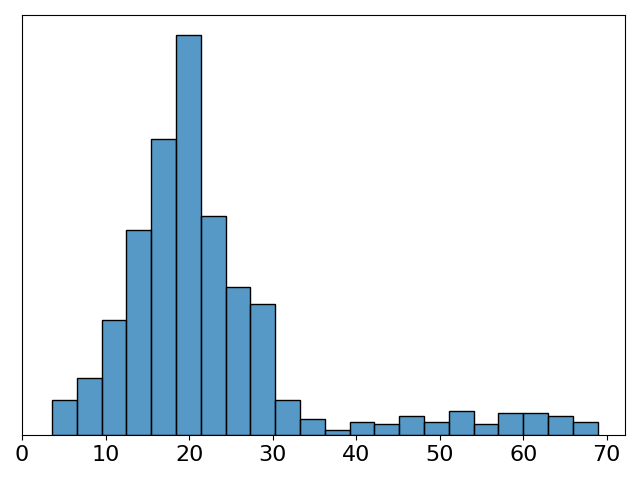

Full Pipeline and Resource Profiling

Scene composition involves sampling ground surfaces for asset placement (Poisson-disc sampling, procedural masks), selecting camera poses that match “creature” perspectives, and rendering with Cycles, Blender's physically based path tracer. The data generation pipeline is engineered for parallelism but is resource-intensive: on the benchmark hardware, generating one 1080p stereo pair takes 3.5 hours, and a scene contains roughly 16M polygons.
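Poisson-disc sampling places points so that no two are closer than a chosen minimum distance, which avoids both clumping and grid-like regularity. The sketch below uses naive dart throwing (rejection sampling) on a flat rectangle, chosen for brevity rather than speed. Production code would more likely use a grid-accelerated method such as Bridson's algorithm and sample on the terrain surface. The function name and parameters are hypothetical.

```python
import math
import random


def poisson_disc(width, height, min_dist, seed=0, max_attempts=2000):
    """Dart-throwing Poisson-disc sampling on a width x height rectangle.

    Each candidate is accepted only if it lies at least `min_dist` from
    every previously accepted point. Cost is O(attempts * points).
    """
    rng = random.Random(seed)
    points = []
    for _ in range(max_attempts):
        candidate = (rng.uniform(0.0, width), rng.uniform(0.0, height))
        if all(math.dist(candidate, p) >= min_dist for p in points):
            points.append(candidate)
    return points
```

A procedural mask could be layered on top by rejecting candidates where a mask function (for example, a slope or moisture test) returns false.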

Figure 9: Data pipeline: layout, asset generation, material assignment, displacement, and photorealistic rendering.

Figure 10: Resource requirements profile for wall time, memory, CPU, GPU, and mesh complexity per rendered stereo image.

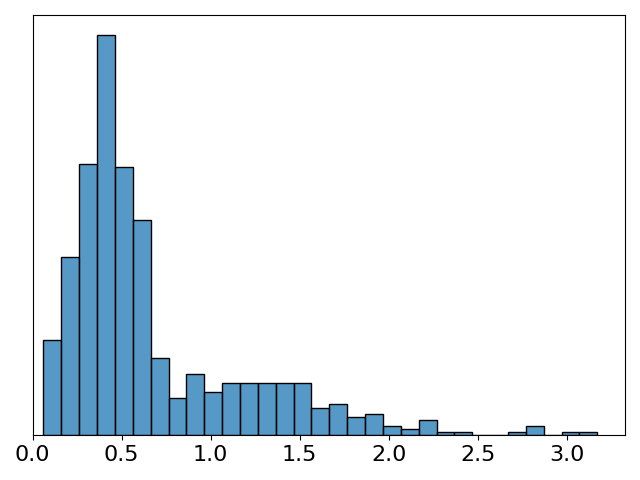

Experimental Validation

RAFT-Stereo models trained exclusively on 30K Infinigen image pairs were compared against models trained on leading synthetic datasets (SceneFlow, FallingThings, TartanAir, Li et al., HR-VS, Sintel, InStereo2K) on the Middlebury stereo matching validation suite. Infinigen-trained models exhibited the lowest error on natural object scenes (e.g., Jadeplant), validating domain-specific generalization. However, performance is reduced on indoor scenes, reflecting a domain shift: natural scenes contain few planar or textureless surfaces.

Empirical results:

- Bad 3.0 error on Middlebury Jadeplant: Infinigen 35.2% vs. SceneFlow 41.3%, HR-VS 43.2%.

- Average error competitive across benchmarks, outperforming SceneFlow on selected categories, but lagging on planar/indoor domains.

- Qualitative evaluation on real-world stereo photographs (captured with ZED 2 camera) confirms strong zero-shot transfer of models trained solely on Infinigen synthetic data to real natural environments.
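The Bad 3.0 metric quoted above is the percentage of pixels whose predicted disparity differs from the ground truth by more than 3.0 pixels. A minimal sketch of that computation over flat lists of disparities follows; the function name and the use of `None` to mark pixels without ground truth are assumptions for illustration.

```python
def bad_pixel_rate(pred, gt, threshold=3.0):
    """Percentage of valid pixels with absolute disparity error > threshold.

    `pred` and `gt` are equal-length sequences of disparities in pixels;
    entries of `gt` that are None are treated as invalid and skipped.
    """
    errors = [abs(p - g) for p, g in zip(pred, gt) if g is not None]
    if not errors:
        raise ValueError("no valid ground-truth pixels")
    bad = sum(1 for e in errors if e > threshold)
    return 100.0 * bad / len(errors)
```

For example, predictions `[10, 20, 30, 40]` against ground truth `[10.5, 24.0, 30.0, 47.0]` have errors of 0.5, 4, 0, and 7 pixels, so two of the four pixels are "bad" and the rate is 50%.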

Accessibility, Extensibility, and Open Science

Infinigen is released as fully open source under a BSD license, with asset, generator, and pipeline code available. Node transpilation enables artist contributions, lowering the technical barrier to iterative enhancement and community-wide collaboration. The modular design and explicit parameter exposure allow for rapid adaptation to new tasks, environmental types, and annotation formats.

Implications and Prospects

Infinigen substantially expands the scope of procedural dataset generation, breaking the reliance on finite asset libraries and the artificial scene bias prevalent in existing resources. Its capacity for infinite sampling of natural world content enables comprehensive model pretraining for vision tasks sensitive to ecological diversity and geometric complexity. The approach is extensible to embodied AI simulation, robotics, content creation, and digital twin modeling.

On the theoretical front, the explicit modeling of natural world priors and physical plausibility is a step toward closing gaps in how well foundation models cover natural, ecological imagery. Practically, the system moves dataset creation away from manual curation bottlenecks and licensing restrictions, broadening access to training data with tunable coverage.

Future extensions should target increased simulation realism (soft body physics, more advanced weather/lighting models), fine-grained biotic interaction modeling, and automated real/synthetic domain adaptation. Integration with learnable generative models for procedural rule induction may further enhance asset realism and diversity. Large-scale experiments on transfer learning, downstream embodied tasks, and joint 2D/3D perception will provide additional validation and guidance for ongoing development.

Conclusion

The Infinigen system provides a scalable, fully procedural method for generating diverse, photorealistic synthetic datasets reflecting nature’s complexity. Its mesh-centric approach ensures physical plausibility and accurate ground truth, facilitating improved generalization for models in underrepresented domains. The architecture, open-source philosophy, and documented performance suggest Infinigen could play an important role in narrowing the synthetic-to-real data gap for computer vision and broader AI applications.