- The paper introduces simultaneous adjoint updates and unrolling intermediate states to accurately estimate gradients in DEQs.

- It shows that mitigating gradient obfuscation is necessary for reliable white-box evaluation of adversarial robustness.

- Experiments on CIFAR-10 show that DEQs can achieve adversarial robustness competitive with that of conventional deep networks.

Adversarial Robustness of Deep Equilibrium Models

Introduction

Deep Equilibrium Models (DEQs) depart from conventional deep architectures: rather than propagating an input through many stacked layers, they compute the fixed point of a single layer. This implicit layering brings notable memory savings, allowing DEQs to perform competitively in applications such as language modeling and image classification while using O(1) memory with respect to depth. Despite these advantages, DEQs have been shown to be vulnerable to adversarial attacks, which poses significant risks for applications that demand robustness. These concerns have motivated research into certifying robustness, especially for monotone DEQs, but empirical studies of general DEQs remain sparse. This paper examines the robustness of general DEQs and proposes strategies for estimating intermediate gradients, which enable thorough white-box adversarial evaluation and the design of robust defenses.
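As a minimal sketch of the forward pass described above, the toy code below finds the equilibrium z* = f(z*, x) of a single scalar layer f(z, x) = tanh(w*z + u*x + b). The weights, the scalar state, and the use of plain fixed-point iteration (instead of a quasi-Newton solver such as Broyden's method) are illustrative assumptions. Only the current iterate is kept, which is why memory does not grow with the number of iterations.

```python
import math


def f(z, x, w=0.5, u=1.0, b=0.1):
    """Toy single-layer map f(z, x) = tanh(w*z + u*x + b); weights are hypothetical."""
    return math.tanh(w * z + u * x + b)


def deq_forward(x, tol=1e-10, max_iter=200):
    """Find z* with z* = f(z*, x) by plain fixed-point iteration.

    Only the current iterate is stored, so memory stays constant no matter
    how many iterations (the "effective depth") the solver needs.
    Returns the equilibrium estimate and the number of iterations used.
    """
    z = 0.0
    for i in range(max_iter):
        z_next = f(z, x)
        if abs(z_next - z) < tol:
            return z_next, i + 1
        z = z_next
    return z, max_iter
```

With w = 0.5 the map is a contraction in z (its slope is at most 0.5), so the iteration is guaranteed to converge here; general DEQs have no such guarantee.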

Challenges in Robust DEQ Training

A central challenge in adversarially robust training of DEQs is the convergence behavior of the black-box solvers used to find equilibrium states. Unlike monotone DEQs, general DEQs have no guarantee of convergence or of consistency between the forward and backward passes, which can obfuscate gradients when inputs are perturbed. Because the forward and backward passes are computed independently, intermediate states are excluded from the gradient computation and remain hidden from standard attack methods. In addition, adversarial training, which is already accompanied by a drop in standard accuracy, makes the training process unstable and often breaks the fixed-point structure.
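To make the decoupling of the two passes concrete, the sketch below (same hypothetical toy layer) computes the exact gradient of a loss L(z*) with respect to x via the implicit function theorem. The backward pass solves its own fixed point g = (df/dz)*g + dL/dz* and uses only z*, never the forward iterates, which is why intermediate states drop out of the gradient. The loss L = z*^2 used in testing and all constants are assumptions for illustration.

```python
import math

W, U, B = 0.5, 1.0, 0.1  # hypothetical toy weights


def f(z, x):
    """Toy layer f(z, x) = tanh(W*z + U*x + B)."""
    return math.tanh(W * z + U * x + B)


def solve_fixed_point(x, tol=1e-12, max_iter=500):
    """Forward pass: plain fixed-point iteration to the equilibrium z*."""
    z = 0.0
    for _ in range(max_iter):
        z_next = f(z, x)
        if abs(z_next - z) < tol:
            return z_next
        z = z_next
    return z


def implicit_grad(x, dloss_dz, tol=1e-12, max_iter=500):
    """dL/dx at the equilibrium via the implicit function theorem.

    The backward pass solves the adjoint fixed point
    g = (df/dz) * g + dL/dz*, then returns g * df/dx.
    Only z* enters; the forward intermediate states are never used.
    """
    z_star = solve_fixed_point(x)
    s = 1.0 - math.tanh(W * z_star + U * x + B) ** 2  # tanh' at the equilibrium
    df_dz, df_dx = W * s, U * s
    g = 0.0
    for _ in range(max_iter):
        g_next = df_dz * g + dloss_dz(z_star)
        converged = abs(g_next - g) < tol
        g = g_next
        if converged:
            break
    return g * df_dx
```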

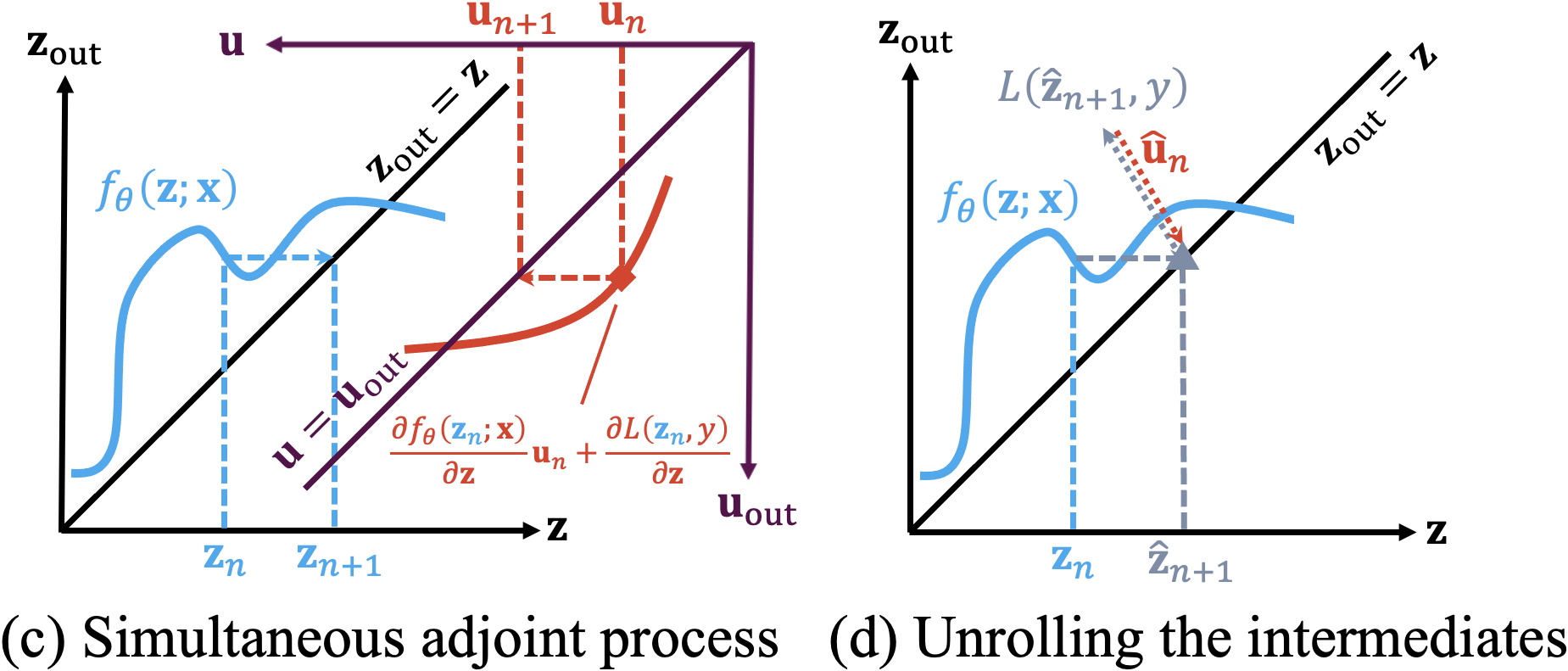

Figure 1: Challenges in benchmarking the adversarial robustness of DEQs: gradient obfuscation and convergence instability.

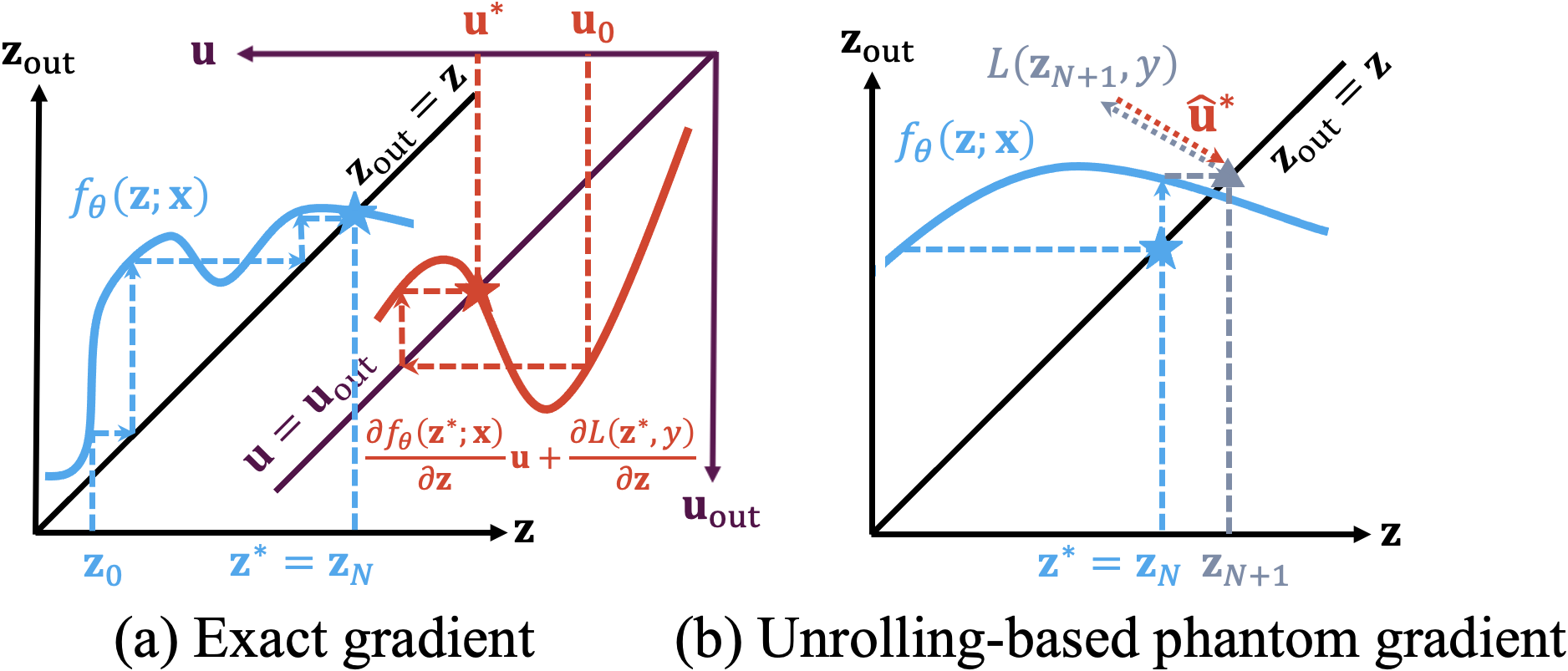

To counteract gradient obfuscation and evaluate robustness in a fully white-box setting, the paper develops two methods for estimating intermediate gradients:

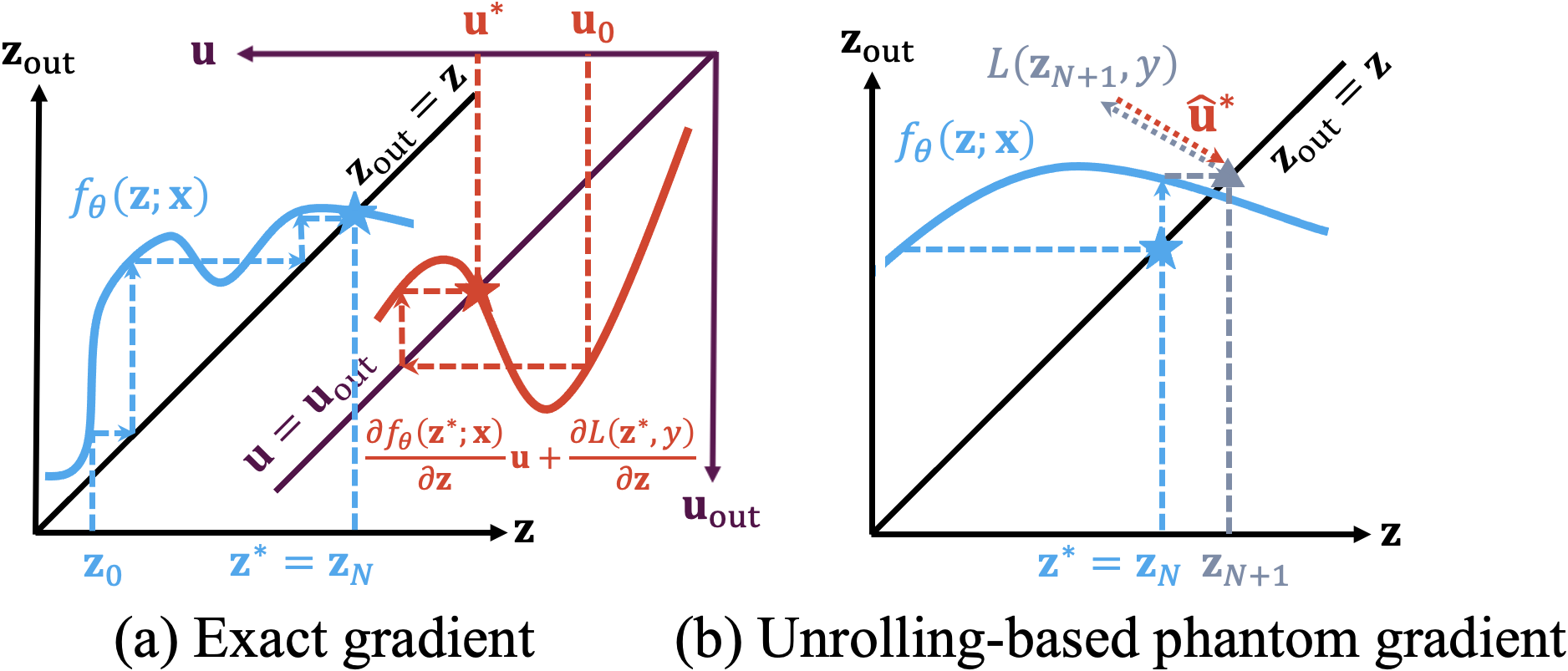

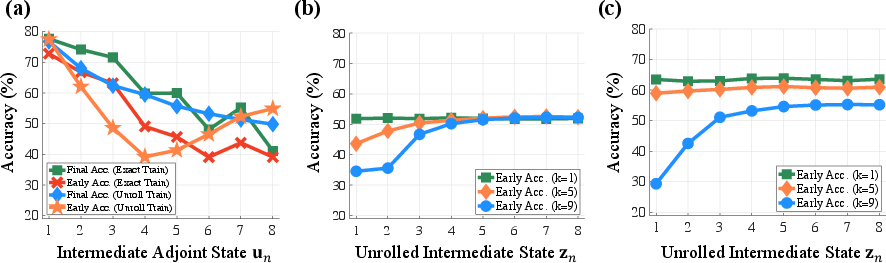

- Simultaneous Adjoint Updates: Inspired by the adjoint method for neural ODEs, this approach updates the adjoint state alongside the forward solver, using low-rank approximations of the inverse Jacobian so that the adjoint estimate stays aligned with the ongoing forward computation. As illustrated in Figure 2, it yields gradients for intermediate states without requiring the adjoint state to converge.

Figure 2: The proposed intermediate gradients for DEQs, illustrating the simultaneous adjoint process and its computational complexity.

- Unrolling Intermediate States: Unrolling a variable number of solver iterations from an intermediate state builds a computational graph through which automatic differentiation can backpropagate. This gives an efficient surrogate gradient that accounts for how the solver's outputs feed back into subsequent iterations.
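The simultaneous adjoint idea can be illustrated with a simplified scalar sketch: the adjoint state is updated in lock-step with the forward iterations, so a gradient estimate is available for every intermediate state rather than only at the final equilibrium. The paper's version uses the solver's low-rank inverse-Jacobian approximation; here, as an assumption for readability, both the forward and adjoint updates are plain fixed-point steps on a hypothetical toy layer f(z, x) = tanh(W*z + U*x + B).

```python
import math

W, U, B = 0.5, 1.0, 0.1  # hypothetical toy weights


def simultaneous_adjoint(x, dloss_dz, n_steps=30):
    """Run forward and adjoint iterations in lock-step (simplified sketch).

    Forward: z_{n+1} = f(z_n, x).
    Adjoint: g_{n+1} = (df/dz at z_n) * g_n + dL/dz(z_n).
    Returns the intermediate states and, for each one, the gradient
    estimate g_{n+1} * df/dx (Jacobians taken at the previous iterate).
    """
    z, g = 0.0, 0.0
    states, grads = [], []
    for _ in range(n_steps):
        s = 1.0 - math.tanh(W * z + U * x + B) ** 2  # tanh' at the current iterate
        df_dz, df_dx = W * s, U * s
        g = df_dz * g + dloss_dz(z)                   # adjoint update
        z = math.tanh(W * z + U * x + B)              # forward update
        states.append(z)
        grads.append(g * df_dx)
    return states, grads
```

As the forward iterate converges, the last estimate approaches the implicit gradient at z*; the earlier entries are gradient estimates for intermediate states that a standard backward pass would ignore.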

Together, these methods provide reliable gradients for white-box evaluation, which is essential for designing effective defenses against adversarial perturbations.
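The unrolling approach can be sketched on the same kind of hypothetical toy layer: run k solver steps without tracking gradients, then differentiate through m further steps, so the surrogate gradient reflects how each step's output feeds into the next. Because x is a scalar here, the derivative is accumulated in forward mode; reverse-mode automatic differentiation through the same m steps would produce the same value. The parameter names k and m and the toy layer are illustrative assumptions, not the paper's configuration.

```python
import math

W, U, B = 0.5, 1.0, 0.1  # hypothetical toy weights


def f_and_partials(z, x):
    """Return f(z, x) = tanh(W*z + U*x + B) and its partials df/dz, df/dx."""
    y = math.tanh(W * z + U * x + B)
    s = 1.0 - y * y
    return y, W * s, U * s


def unrolled_grad(x, k, m, dloss_dz):
    """Surrogate dL/dx for the state reached after k + m solver steps.

    The state after the first k steps is treated as a constant (like a
    detached tensor); only the last m steps are differentiated.
    Returns (surrogate gradient, final state).
    """
    z = 0.0
    for _ in range(k):              # forward only, no derivative tracking
        z, _, _ = f_and_partials(z, x)
    dz_dx = 0.0                     # gradient is cut at the intermediate state z_k
    for _ in range(m):              # differentiate through m unrolled steps
        z_next, df_dz, df_dx = f_and_partials(z, x)
        dz_dx = df_dz * dz_dx + df_dx
        z = z_next
    return dloss_dz(z) * dz_dx, z
```

With m = 1 the surrogate keeps only the direct term df/dx; increasing m adds the feedback terms and moves the estimate toward the exact implicit gradient.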

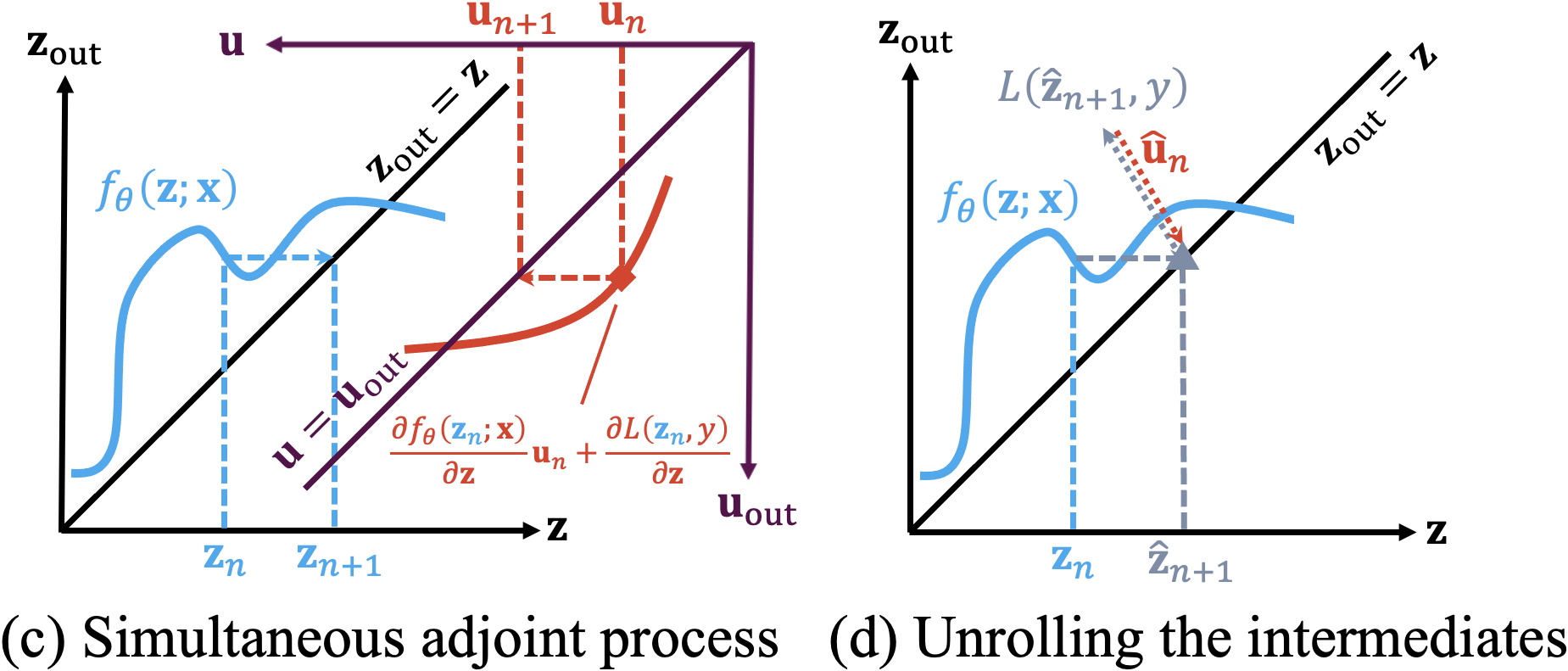

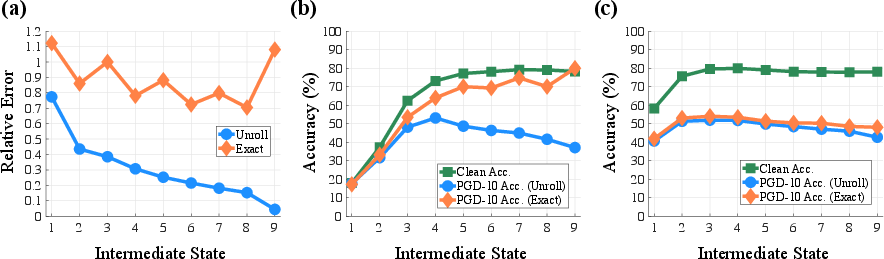

Figure 3: Ablation study of robustness under unrolled gradient estimates taken at different intermediate states.

Evaluation and Results

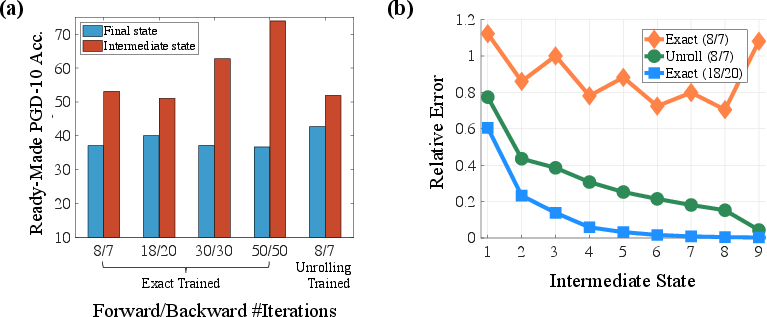

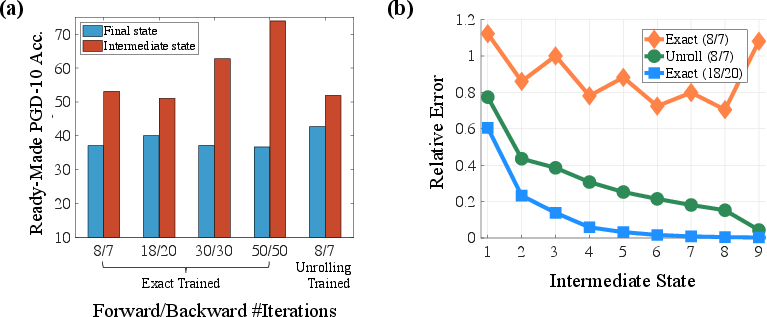

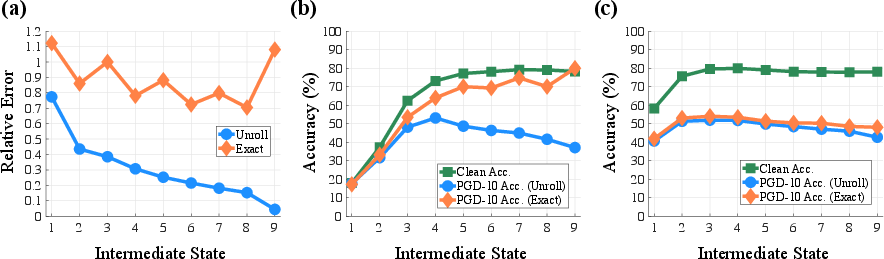

Experiments use CIFAR-10, with DEQs whose parameter counts are comparable to ResNet-18 and WideResNet-34-10 for fair comparison. Adversarial training generates adversarial examples with PGD, using either exact or phantom gradients. The results show stark differences: the robustness that accumulates across intermediate states points to latent gradient obfuscation, while carefully constructed white-box attacks substantially reduce the robustness of states that appear robust under standard attacks. Attack outcomes also differ significantly across DEQs trained in different ways.
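The PGD step used to generate adversarial examples can be sketched as below. It is written against a generic gradient callback, so that in the DEQ setting grad_fn could return an exact, phantom, or intermediate-state gradient estimate. The callback interface, the L-infinity threat model, inputs scaled to [0, 1], and the values eps = 8/255, alpha = 2/255, and 10 steps are assumptions (common CIFAR-10 choices), not settings stated here.

```python
def sign(v):
    """Return +1, 0, or -1 according to the sign of v."""
    return (v > 0) - (v < 0)


def pgd_linf(x0, grad_fn, eps=8 / 255, alpha=2 / 255, steps=10):
    """L-infinity PGD ascent on the loss, starting at the clean input x0.

    grad_fn(x) returns dL/dx as a list (hypothetical interface). Each step
    moves by alpha * sign(grad), then projects back onto the eps-ball
    around x0 and onto the valid input range [0, 1]. No random start.
    """
    x = list(x0)
    for _ in range(steps):
        g = grad_fn(x)
        x = [xi + alpha * sign(gi) for xi, gi in zip(x, g)]
        x = [min(max(xi, x0i - eps), x0i + eps) for xi, x0i in zip(x, x0)]
        x = [min(max(xi, 0.0), 1.0) for xi in x]
    return x
```

With a constant gradient such as [1, -1, 0.5] and x0 = [0.5, 0.5, 0.5], each coordinate ends at the edge of the eps-ball in the direction of the gradient's sign. If grad_fn returns an obfuscated gradient, the same loop takes uninformative steps, which is why the choice of gradient estimate matters for evaluation.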

Direct comparisons with deep networks show that DEQs achieve competitive adversarial robustness. The results also inform defense strategies based on early exit from the solver and on aggregating an ensemble of intermediate states.
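The two defense ideas can be illustrated with a minimal sketch that assumes per-state logits are already available (for example, from a classifier head applied to each intermediate state, which is a hypothetical setup here): early exit predicts from a chosen intermediate state, and ensembling averages class probabilities across several states. The function names and the probability-averaging rule are illustrative assumptions.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def early_exit_predict(state_logits, k):
    """Predict from the k-th intermediate state (0-based) instead of the last one."""
    logits = state_logits[k]
    return max(range(len(logits)), key=logits.__getitem__)


def ensemble_predict(state_logits, indices):
    """Average class probabilities over the selected intermediate states.

    Returns (predicted class, averaged probabilities).
    """
    probs = [softmax(state_logits[i]) for i in indices]
    avg = [sum(col) / len(probs) for col in zip(*probs)]
    return max(range(len(avg)), key=avg.__getitem__), avg
```

For state_logits = [[2.0, 0.0], [0.0, 1.0], [0.0, 1.0]], early exit at state 0 predicts class 0, while ensembling all three states predicts class 1.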

Figure 4: Performance discrepancies of robust DEQs highlight the pitfalls of standard attack assumptions.

Conclusion

DEQs have inherently appealing computational properties, but evaluating their robustness requires care. By estimating intermediate gradients, this paper shows how evaluations of DEQs can avoid gradient obfuscation pitfalls, and it presents strategies both for accelerating adversarial training and for stabilizing defenses. Future work may refine model architectures and computational heuristics to fully exploit the properties of DEQs in sensitive domains, with the aim of reliable, scalable, and secure deployment in potentially adversarial environments.