nuts-flow/ml: data pre-processing for deep learning

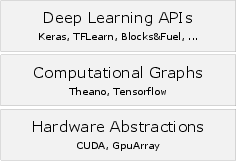

Abstract: Data preprocessing is a fundamental part of any machine learning application and frequently the most time-consuming aspect when developing a machine learning solution. Preprocessing for deep learning is characterized by pipelines that lazily load data and perform data transformation, augmentation, batching and logging. Many of these functions are common across applications but require different arrangements for training, testing or inference. Here we introduce a novel software framework named nuts-flow/ml that encapsulates common preprocessing operations as components, which can be flexibly arranged to rapidly construct efficient preprocessing pipelines for deep learning.
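As a rough illustration of the kind of pipeline the abstract describes, the sketch below chains plain Python generators so that loading, augmentation and batching happen lazily, one sample at a time. It does not use the nuts-flow/ml API: `load_samples`, `augment`, `batch` and `build_pipeline` are hypothetical names, and the data is synthetic.

```python
import random
from itertools import islice


def load_samples(n):
    """Lazily yield (features, label) pairs; stands in for reading from disk."""
    for i in range(n):
        yield [float(i), float(i) + 1.0], i % 2


def augment(samples, rng):
    """Add a small random offset to each sample's features (toy augmentation)."""
    for features, label in samples:
        noise = rng.uniform(-0.1, 0.1)
        yield [x + noise for x in features], label


def batch(samples, size):
    """Group consecutive samples into lists of at most `size` items."""
    it = iter(samples)
    while True:
        chunk = list(islice(it, size))
        if not chunk:
            return
        yield chunk


def build_pipeline(n, batch_size, seed=0):
    """Arrange the stages; nothing runs until the result is iterated."""
    rng = random.Random(seed)  # local RNG so runs with the same seed match
    return batch(augment(load_samples(n), rng), batch_size)


batches = list(build_pipeline(n=5, batch_size=2))
```

Because each stage is an independent generator, a test or inference pipeline could reuse `load_samples` and `batch` and simply leave out `augment`, which is the kind of rearrangement the abstract refers to.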

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The paper introduces nuts-flow/ml and demonstrates basic image-focused preprocessing pipelines, but it leaves several aspects unaddressed. The following list identifies concrete gaps and open questions that future work could tackle:

- Lack of empirical evaluation: no benchmarks comparing nuts-flow/ml throughput, latency, memory footprint, or GPU utilization against alternatives (e.g., Keras ImageDataGenerator, TensorFlow tf.data, Fuel, TFLearn DataFlow).

- Concurrency model is underspecified: the framework relies on chained iterators without a workflow engine; how to systematically overlap CPU preprocessing with GPU training, implement prefetch/worker pools, and manage inter-stage backpressure is not detailed.

- DAG-structured pipelines: branching/merging, multi-input/multi-output flows, and synchronization across multiple streams are not supported, which limits multi-task or multi-modal preprocessing.

- Scalability and distributed execution: there is no design or evidence for scaling across machines (e.g., sharded reads, distributed samplers, fault tolerance), despite claims that components can be integrated with Spark/Dask.

- Reproducibility controls: the paper does not specify global random seeding, deterministic replay of augmentations, or consistent train/validation/test splitting across runs and epochs (especially under parallelism).

- Data modality coverage: beyond images, there is no implemented support for audio, video, text, or variable-length sequence handling (e.g., padding, bucketing, collation).

- GPU-accelerated preprocessing: transformations/augmentations appear CPU-bound; there is no exploration of GPU-side ops or integration with libraries like NVIDIA DALI, Kornia, or tf.image to reduce CPU bottlenecks.

- Data source integration: support is shown for CSV/Pandas and local files only; connectors for databases, cloud object stores (e.g., S3/GCS), compressed archives, and streaming inputs are absent.

- Memory management and backpressure: prefetching and caching are mentioned, but no configuration options or policies are given for queue sizing, spill-to-disk, or memory-mapped I/O, nor any mechanism to prevent out-of-memory conditions.

- Robustness and fault tolerance: behavior on corrupt files and transient I/O errors is not specified, nor are policies for retries, sample skipping, and error handling (despite a mention of exception-handling nuts).

- Advanced sampling strategies: only basic stratification with up/down-sampling is provided; class-balanced batchers, per-epoch shuffling, distributed shuffling, hard-example mining, or curriculum-based sampling are not addressed.

- Patch extraction details: although patching is claimed, there is no description or evaluation of strategies (ROI-based sampling, overlap/stride control, class-balanced patching, and patch-to-image reconstruction for segmentation).

- Handling heterogeneous or dynamic shapes: dynamic batching, padding/truncation policies, bucketing by size, and custom collate functions are not discussed.

- Framework interoperability: wrappers exist for Keras/Lasagne only; there is no pathway for seamless use with PyTorch DataLoader, TensorFlow tf.data, JAX/Flax, or ONNX Runtime.

- Monitoring and observability: no built-in profiling or stage-level metrics (throughput, latency breakdowns), nor integrations with TensorBoard, MLflow, or Prometheus for pipeline health and performance monitoring.

- Debuggability and visualization: utilities to inspect and visualize samples at intermediate stages, render augmentation distributions, or visualize pipeline structures are not described.

- API stability and extensibility: there is no specification for plugin discovery, versioning, or backward compatibility of nuts, which complicates third-party extensions and long-term maintenance.

- Deterministic synchronized augmentations: while synchronized augmentation across multiple images (e.g., image+mask) is mentioned, guarantees, APIs, and seeding semantics for strict determinism are not detailed.

- Long-running/interruptible workflows: checkpointing and resumption of pipeline state (e.g., sample cursors, RNG state) and recovery after interruptions are not considered.

- Security and privacy: strategies for on-the-fly anonymization, PII redaction, or secure data handling are not discussed.

- Cross-platform I/O considerations: there is no evaluation of async I/O, filesystem performance differences (Linux vs. Windows), or optimal settings for high-throughput reading.

- Testing and quality metrics: although “well tested” is stated, there are no test coverage metrics, performance regression tests, or validations across corner cases (e.g., massive datasets, highly imbalanced classes).

- Developer productivity claims: the assertion of improved readability/maintainability is not supported by user studies or metrics (e.g., time-to-pipeline, error rates, learnability).

- Performance trade-offs of Python generators: potential overhead of per-sample Python iteration vs. vectorized/batched ops is not quantified; guidance on when to batch-transform for efficiency is missing.
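To make the concurrency and backpressure gaps in the list above more concrete, here is a minimal sketch of one way a chained-iterator pipeline could overlap preprocessing with consumption: a background thread fills a bounded queue, and the bound itself provides backpressure. This is an assumption about how such a stage might look, not something the paper or nuts-flow/ml specifies; `prefetch` and `buffer_size` are hypothetical names.

```python
import queue
import threading

_DONE = object()  # sentinel marking the end of the stream


def prefetch(iterable, buffer_size=2):
    """Iterate `iterable` in a background thread, buffering at most
    `buffer_size` items ahead of the consumer."""
    buf = queue.Queue(maxsize=buffer_size)

    def producer():
        try:
            for item in iterable:
                # put() blocks while the queue is full, so a slow consumer
                # throttles the producer instead of exhausting memory.
                buf.put((item, None))
        except Exception as exc:
            buf.put((None, exc))  # forward the error to the consumer
        else:
            buf.put((_DONE, None))

    # Daemon thread: if the consumer stops early, the blocked producer
    # does not keep the process alive (a simplification for this sketch).
    threading.Thread(target=producer, daemon=True).start()

    while True:
        item, error = buf.get()
        if error is not None:
            raise error
        if item is _DONE:
            return
        yield item


squares = (x * x for x in range(5))  # stands in for a preprocessing stage
result = list(prefetch(squares, buffer_size=2))
```

With Python threads, this overlap mainly helps when the upstream stage spends its time in I/O or in native code that releases the global interpreter lock; CPU-bound pure-Python transforms would need worker processes instead, which raises the queue sizing and memory questions noted in the list.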
