Weak-to-Strong Generalization: Eliciting Strong Capabilities With Weak Supervision

This presentation explores an approach to AI alignment that addresses a fundamental challenge: how to supervise superhuman models when human evaluators can no longer provide reliable feedback. The paper demonstrates that strong models like GPT-4 can generalize beyond weak supervision from smaller models, recovering much of their capability even when trained on imperfect labels. Through experiments across natural language processing, chess puzzles, and reward modeling, the researchers reveal both the promise and limitations of weak-to-strong generalization. They also propose techniques such as an auxiliary confidence loss, which lets a strong model disagree with its weak supervisor's errors and recover much of the gap to ground-truth performance on NLP tasks.

Script

How do you train an AI system that's smarter than you? When models surpass human capabilities, our ability to provide reliable feedback breaks down, creating a fundamental alignment challenge for the future of artificial intelligence.

Building on this tension, the researchers from OpenAI identify a critical gap in current alignment techniques.

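The limitation is stark: reinforcement learning from human feedback becomes unreliable precisely when we need it most, once models produce outputs that humans can no longer evaluate reliably. This paper investigates whether weaker models can effectively supervise stronger ones. When the strong model ends up outperforming its weak supervisor, the authors call the phenomenon weak-to-strong generalization.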

To test this hypothesis, the authors designed experiments across multiple domains.

Across natural language processing benchmarks, chess puzzles, and reward modeling, they trained strong models using labels from weaker supervisors, then measured how much of the gap between the weak supervisor and a strong model trained on ground-truth labels was recovered. The setup creates a controlled analog of the superhuman alignment problem.
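One way to make this measurement concrete is the fraction of the performance gap recovered: how far the weakly supervised strong model climbs from the weak supervisor's score toward the score of the same strong model trained on ground-truth labels. The sketch below is a minimal illustration; the function name and the accuracy values are hypothetical and are not results from the paper.

```python
def performance_gap_recovered(weak: float,
                              weak_to_strong: float,
                              strong_ceiling: float) -> float:
    """Fraction of the weak-to-ceiling gap closed by the weakly supervised strong model.

    weak:            score of the weak supervisor
    weak_to_strong:  score of the strong model trained on the weak supervisor's labels
    strong_ceiling:  score of the strong model trained on ground-truth labels
    """
    gap = strong_ceiling - weak
    if gap <= 0:
        raise ValueError("strong_ceiling must exceed weak for the ratio to be meaningful")
    return (weak_to_strong - weak) / gap


# Hypothetical accuracies, chosen only to illustrate the arithmetic.
pgr = performance_gap_recovered(weak=0.60, weak_to_strong=0.72, strong_ceiling=0.80)
print(f"gap recovered: {pgr:.2f}")
```

A value of 1 means the weakly supervised model matches the ground-truth ceiling, and a value of 0 means it does no better than its supervisor.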

The results reveal both encouraging possibilities and important limitations.

The encouraging news is that weak-to-strong generalization does occur naturally: strong models trained naively on weak labels tend to outperform their weak supervisors. However, the persistent gap between weak-supervised and ground-truth performance indicates that naive approaches leave capability on the table, particularly in complex reward modeling scenarios.

Crucially, the authors demonstrate that strategic interventions make a substantial difference. By adding an auxiliary confidence loss that encourages the strong model to trust its own confident predictions even when they contradict the weak supervisor, they recovered a large share of the capability gap in natural language tasks: a GPT-2-level supervisor elicited close to GPT-3.5-level performance from GPT-4.
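The confidence-loss idea can be sketched for a single binary example as a weighted mix of two cross-entropy terms: one against the weak supervisor's label and one against the strong model's own hardened prediction. This is a simplified sketch under stated assumptions, not the authors' implementation: the function names, the fixed `threshold`, and the fixed `alpha` are all hypothetical choices made for illustration, and in a real training loop the hardened target would be treated as a constant rather than differentiated through.

```python
import math


def binary_cross_entropy(target: float, pred: float, eps: float = 1e-12) -> float:
    """Cross-entropy between a target probability and a predicted probability."""
    pred = min(max(pred, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(target * math.log(pred) + (1.0 - target) * math.log(1.0 - pred))


def confidence_loss(strong_prob: float,
                    weak_label: float,
                    alpha: float = 0.5,
                    threshold: float = 0.5) -> float:
    """Mix the weak-label loss with a self-training term on the hardened prediction.

    strong_prob: strong model's predicted probability of the positive class
    weak_label:  label (or soft label) supplied by the weak supervisor
    alpha:       weight on the strong model's own hardened prediction (assumed fixed here)
    threshold:   cut-off used to harden the strong model's prediction (assumed fixed here)
    """
    hardened = 1.0 if strong_prob > threshold else 0.0
    weak_term = binary_cross_entropy(weak_label, strong_prob)
    self_term = binary_cross_entropy(hardened, strong_prob)
    return (1.0 - alpha) * weak_term + alpha * self_term


# Hypothetical case: the strong model is confident (0.9) but the weak label says 0.
plain = confidence_loss(strong_prob=0.9, weak_label=0.0, alpha=0.0)
mixed = confidence_loss(strong_prob=0.9, weak_label=0.0, alpha=0.5)
print(f"weak-label loss only: {plain:.3f}, with confidence term: {mixed:.3f}")
```

With `alpha` at 0 the loss reduces to ordinary training on weak labels. Raising `alpha` lowers the penalty for confidently disagreeing with the supervisor, which is the behavior the narration describes.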

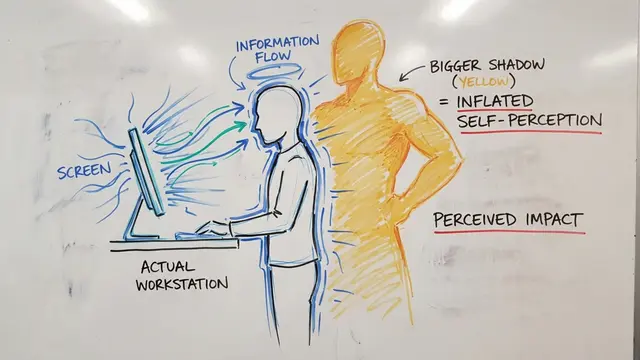

This visualization reveals a critical challenge in the training process: strong models tend to overfit to the imperfect labels provided by weak supervisors. The curves show accuracy over training time across different model size gaps, demonstrating that without intervention, models learn the supervisor's mistakes rather than transcending them.

These findings carry important implications for AI safety research. The study offers an empirical testbed for aligning systems that exceed our evaluation capabilities, while highlighting the work still needed to make weak-to-strong generalization robust and reliable.

Weak-to-strong generalization offers a promising empirical foundation for the superalignment challenge, showing that even imperfect guidance can help capable models realize their potential. Visit EmergentMind.com to explore more cutting-edge AI research.