Learning to Reason in 13 Parameters

This lightning talk explores a groundbreaking paper demonstrating that large language models can learn to reason effectively with an astonishingly small number of trainable parameters, sometimes as few as 13. The presented method, TinyLoRA, achieves significant performance gains primarily through reinforcement learning, challenging conventional notions about the parameter capacity needed for complex reasoning tasks in AI.Script

Imagine teaching a complex skill, like advanced mathematics, to an artificial intelligence using only a handful of instructions. Could it truly learn, or would it just be memorizing? This paper dives into that very question, exploring how remarkably little data-rich feedback an AI needs to actually learn to reason.

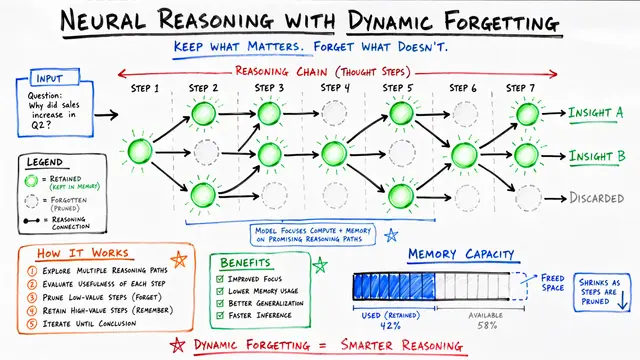

Continuing on that thread, the core problem addressed here is the immense number of parameters typically required for fine-tuning large language models, even with efficient methods like LoRA. These millions of trainable parameters lead to significant computational burdens and storage costs. The authors challenge whether such large capacities are truly necessary, especially when learning to reason, and observe a critical distinction between reinforcement learning and supervised fine-tuning in this context.

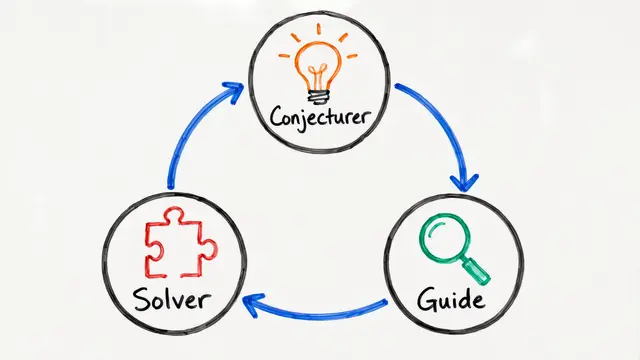

This insight led them to develop an innovative approach called TinyLoRA, designed for extreme parameter efficiency.

TinyLoRA's high-level idea is to construct an adapter that can dramatically reduce the number of trainable parameters, even down to a single one. This efficiency is largely attributed to the nature of reinforcement learning, which provides a sparse but very clear reward signal that can effectively guide learning even with minimal updates.

The paper highlights a crucial difference between how Supervised Fine-Tuning and Reinforcement Learning interact with tiny adapters. SFT attempts to "absorb" broad information from demonstrations, which typically requires a larger parameter capacity. In contrast, RL, specifically with verifiable rewards, leverages a sparse but very clean signal that repeated sampling helps amplify, allowing it to learn effectively with dramatically fewer parameters.

This figure illustrates the training performance of TinyLoRA on the challenging MATH dataset. We can observe how the model's accuracy improves over training steps, demonstrating the efficiency of this method even with its minimal parameter footprint.

The results are truly astonishing, pushing the boundaries of parameter efficiency. For example, on the GSM8K dataset, TinyLoRA achieves 91 percent accuracy using only 13 trainable parameters, which translates to a mere 26 bytes of data. Furthermore, most of the observed performance gain can be recovered with just 120 parameters, underscoring the critical role of reinforcement learning in achieving these breakthroughs, as SFT does not yield similar effectiveness at such small scales. These improvements are consistently observed across a suite of six difficult math reasoning benchmarks.

Here we visualize how TinyLoRA's performance on GSM8K scales with different backbone model sizes when using GRPO. It's evident that smaller parameter updates yield more significant improvements when applied to larger underlying models, suggesting a synergy between backbone capacity and adapter efficiency.

The core takeaways from this research are profound. It definitively shows that extreme parameter compression is not only feasible but highly effective for complex reasoning tasks, especially when guided by reinforcement learning. This suggests that often, reasoning improvements gained through RL require surprisingly small amounts of additional information or parameters. This work also prompts us to reconsider what exactly is being learned during reinforcement learning with verification, given that performance shifts can be achieved with incredibly minimal updates.

The ability to achieve significant reasoning capabilities with such minimal changes opens exciting avenues for more efficient and adaptable AI systems. To delve deeper into this fascinating work and explore other cutting-edge research, please visit EmergentMind.com.