Relational Dissonance in Human-AI Interactions

This presentation examines a study on how anthropomorphic AI systems create unexpected relational tensions in professional knowledge work. Through workshops with qualitative researchers, the authors identify a phenomenon they call relational dissonance, in which users explicitly frame AI as a tool yet interact with it as if it were a collaborator or partner. The findings reveal dynamic, shifting relational configurations that challenge common assumptions about human-computer interaction and call for new frameworks of relational transparency in AI design.

When an AI system speaks like a human, writes like a human, and reasons like a human, what kind of relationship are you actually having with it? This paper reveals a hidden tension in how we interact with anthropomorphic AI in professional knowledge work.

The authors discovered something striking: qualitative researchers said they viewed AI as just a tool, but their actual interactions told a completely different story. This divergence between explicit framing and lived experience is what they call relational dissonance.

To uncover these hidden dynamics, the researchers designed workshops that made the invisible visible.

The method was elegantly layered. First, participants worked individually with AI systems on authentic research scenarios. Then they chose images to capture something words often miss: the felt quality of these interactions. Finally, group discussions revealed patterns no single user could see alone.

These visual metaphors proved revelatory. Some participants chose images suggesting partnership or collaboration. Others picked visuals evoking distance, utility, or even surveillance. The diversity itself tells us something crucial: there is no single stable relationship with anthropomorphic AI. The relational configuration shifts based on context, task, and moment.

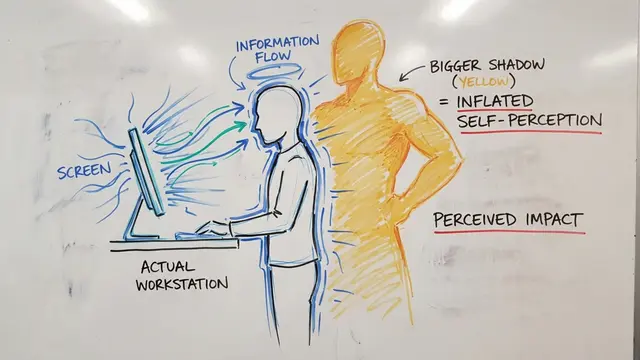

Here is the tension laid bare. On the left, what participants said they believed. On the right, what actually happened when they engaged with the AI. The human-like communication style of these systems pulls users into relational dynamics they did not consciously choose, creating a gap between intention and experience.

This is not just an academic curiosity. If users are relating to AI in ways they do not fully recognize or control, then our frameworks for consent, accountability, and design are incomplete. Relational dissonance suggests we need new forms of transparency that go beyond explaining what an AI does to clarifying what kind of relationship it invites.

The authors are clear about the boundaries of this initial exploration.

This study captured a moment in time with a particular group. The authors call for broader research examining how relational dissonance evolves across different tasks, professions, and extended timescales. They also propose developing concrete measures of relational transparency that designers can use.

This work gives us a new vocabulary and a new way of seeing. By naming relational dissonance, the authors help us recognize a phenomenon that was always there but lacked clear articulation. As AI systems become more human-like in their communication, understanding these shifting relational dynamics becomes not just useful but essential.

The question is no longer whether we relate to AI, but how we relate, and whether we are aware of the relationship we are in. Visit EmergentMind.com to explore more research like this and create your own videos.