OmniLottie: Generating Vector Animations via Parameterized Lottie Tokens

This presentation explores OmniLottie, a breakthrough framework that generates editable vector animations from text, images, or video inputs. By introducing a novel tokenization approach for Lottie's hierarchical format and training on the first large-scale multi-modal vector animation dataset, OmniLottie achieves success rates of 88 to 93 percent while dramatically outperforming existing language models and optimization methods. The work demonstrates how format-aware representation learning unlocks reliable, one-shot synthesis of resolution-independent animations with full editability.

Vector animations power everything from app interfaces to web graphics, yet creating them still requires painstaking manual keyframe work. What if you could generate fully editable, resolution-independent animations directly from a text description, an image, or even a video clip?

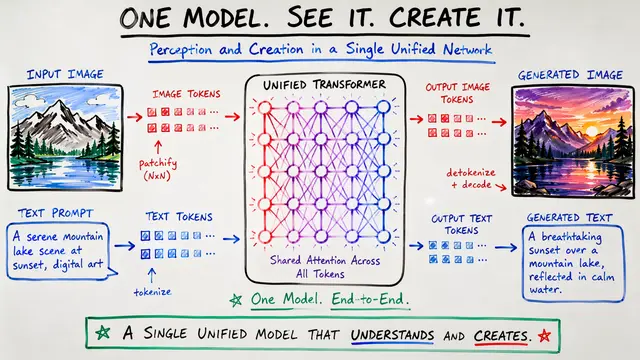

Existing methods face a critical bottleneck. They either produce static graphics that need manual animation, or they train on raw JSON and waste capacity on irrelevant formatting, yielding outputs that simply won't render. The core problem is that nobody has cracked multi-modal, end-to-end synthesis of editable vector animation.

OmniLottie solves this through a fundamental rethinking of how we represent vector animations for generation.

The breakthrough is a custom tokenizer that abstracts away schema noise and focuses on the actual animation primitives. By reserving dedicated token subspaces for different parameter types and maintaining structural markers, the system compresses sequences by over 5 times while preserving every detail needed for generation. Crucially, this transformation is completely reversible—tokens convert back to valid Lottie JSON with perfect fidelity.
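
As a rough illustration of the subspace idea, here is a minimal Python sketch. It is not the authors' tokenizer: the structural marker names, the three parameter types, their value ranges, and the 256-bin resolution are all assumptions made for this example. It shows only how structural markers and typed parameters can occupy disjoint id ranges so that any token id can be mapped back to its type and value.

```python
from dataclasses import dataclass

# Hypothetical structural markers; the real marker set is not given in the source.
STRUCT_TOKENS = ["<layer>", "</layer>", "<shape>", "</shape>", "<keyframe>", "</keyframe>"]
NUM_BINS = 256  # assumed number of ids reserved per parameter type


@dataclass(frozen=True)
class Subspace:
    name: str
    offset: int  # first token id belonging to this subspace
    lo: float    # smallest representable value (assumed)
    hi: float    # largest representable value (assumed)


def build_subspaces():
    # Assumed parameter types and ranges, chosen only for illustration.
    specs = [("time", 0.0, 300.0), ("coord", -512.0, 512.0), ("color", 0.0, 1.0)]
    offset = len(STRUCT_TOKENS)  # parameter ids start after the structural markers
    subspaces = {}
    for name, lo, hi in specs:
        subspaces[name] = Subspace(name, offset, lo, hi)
        offset += NUM_BINS
    return subspaces


SUBSPACES = build_subspaces()


def encode_value(kind, value):
    """Map a parameter value to a token id inside the subspace for `kind`."""
    s = SUBSPACES[kind]
    clamped = min(max(value, s.lo), s.hi)
    bin_index = round((clamped - s.lo) / (s.hi - s.lo) * (NUM_BINS - 1))
    return s.offset + bin_index


def decode_token(token_id):
    """Map a token id back to (type, value) or ("struct", marker)."""
    if 0 <= token_id < len(STRUCT_TOKENS):
        return ("struct", STRUCT_TOKENS[token_id])
    for s in SUBSPACES.values():
        if s.offset <= token_id < s.offset + NUM_BINS:
            bin_index = token_id - s.offset
            return (s.name, s.lo + bin_index / (NUM_BINS - 1) * (s.hi - s.lo))
    raise ValueError(f"unknown token id {token_id}")


kind, value = decode_token(encode_value("coord", 100.0))
print(kind, round(value, 2))
```

Note that the uniform binning used here recovers a value only to within half a bin width. The source describes its own mapping as fully reversible, so the actual scheme must differ from this simplification in ways the narration does not spell out.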

This visualization traces the transformation from a sprawling raw JSON file through intermediate structured representations down to the final compact token sequence. What started as 2,562 tokens of verbose formatting becomes just 486 focused tokens encoding the actual animation logic. The discretization operates purely at the representation level, so continuous parameter values are faithfully recovered during detokenization, preserving the vector nature of the output.
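
The two token counts quoted above can be checked against the "over 5 times" compression claim with a few lines of Python. The variable names are just labels for this example.

```python
raw_tokens = 2562      # token count of the raw JSON, as quoted above
compact_tokens = 486   # token count after parameterized tokenization, as quoted above

ratio = raw_tokens / compact_tokens
reduction = 1 - compact_tokens / raw_tokens

print(f"{ratio:.2f}x shorter, {reduction:.1%} fewer tokens")
# prints "5.27x shorter, 81.0% fewer tokens"
```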

To train a model on this representation, the authors built something that didn't exist: a massive, richly annotated dataset of vector animations.

MMLottie-2M is the first large-scale, multi-modal vector animation dataset. Each sample includes coarse-to-fine text descriptions, reference images, and video renderings. The authors crawled professional Lottie files and augmented them with animated SVG conversions, then used vision-language models to generate detailed annotations grounded in appearance, geometry, color, and motion. The dataset statistics reveal remarkable structural complexity—varied layer counts, durations, and hierarchical depth across millions of samples.
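
To make the shape of one such sample concrete, here is a hypothetical Python record. The class name, field names, and file paths are assumptions for illustration and do not reflect the dataset's actual schema. The only parts taken from the Lottie format itself are the top-level `layers`, `fr` (frame rate), `ip` (in point), and `op` (out point) fields, from which layer count and duration can be derived.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AnimationSample:
    """Hypothetical container for one multi-modal sample (not the real schema)."""
    lottie: dict          # parsed Lottie JSON
    captions: List[str]   # text descriptions, ordered coarse to fine
    image_path: str       # reference image (assumed to be stored as a path)
    video_path: str       # rendered video (assumed to be stored as a path)

    @property
    def layer_count(self) -> int:
        return len(self.lottie.get("layers", []))

    @property
    def duration_seconds(self) -> float:
        # Lottie stores the in and out points in frames and the rate in frames per second.
        frames = self.lottie["op"] - self.lottie["ip"]
        return frames / self.lottie["fr"]


sample = AnimationSample(
    lottie={"fr": 30, "ip": 0, "op": 60, "w": 512, "h": 512, "layers": [{}, {}]},
    captions=["a bouncing ball", "a red ball bounces twice and settles at the center"],
    image_path="sample_0001.png",
    video_path="sample_0001.mp4",
)
print(sample.layer_count, sample.duration_seconds)  # prints "2 2.0"
```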

The performance gap is dramatic. When researchers tested existing large language and vision models like GPT and Gemini on vector animation generation, these systems failed to produce valid outputs 40 to 60 percent of the time, hallucinating malformed schemas or empty files. OmniLottie, in contrast, achieves success rates between 88 and 93 percent across text, image, and video inputs, while sweeping every visual fidelity and semantic alignment metric. It's not just more reliable—it's an order of magnitude faster and produces animations that designers can actually edit.
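
A success rate of this kind can be thought of as the fraction of generated outputs that pass a validity check. The sketch below is a simplified stand-in, not the evaluation protocol used in the work: it treats an output as successful if it parses as JSON, carries a set of top-level Lottie fields assumed here to be required, and has at least one layer. A real evaluation would presumably also require that the file renders.

```python
import json

# Top-level Lottie fields assumed to be required for this illustrative check.
REQUIRED_KEYS = {"fr", "ip", "op", "w", "h", "layers"}


def is_plausible_lottie(text: str) -> bool:
    """Return True if `text` parses as JSON and looks like a non-empty Lottie file."""
    try:
        doc = json.loads(text)
    except json.JSONDecodeError:
        return False  # malformed output
    if not isinstance(doc, dict) or not REQUIRED_KEYS.issubset(doc):
        return False  # wrong shape or missing fields
    layers = doc["layers"]
    return isinstance(layers, list) and len(layers) > 0  # reject empty animations


def success_rate(outputs) -> float:
    """Fraction of outputs that pass the plausibility check."""
    if not outputs:
        return 0.0
    return sum(is_plausible_lottie(o) for o in outputs) / len(outputs)


outputs = [
    '{"fr": 30, "ip": 0, "op": 60, "w": 512, "h": 512, "layers": [{"ty": 4}]}',
    '{"fr": 30, "ip": 0, "op": 60, "w": 512, "h": 512, "layers": []}',  # empty file
    '{"fr": 30, "layers": [',                                            # malformed
]
print(f"{success_rate(outputs):.2f}")  # prints "0.33"
```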

Look at what happens when different systems attempt the same text prompts. OmniLottie generates animations with coherent object structure, appropriate motion dynamics, and clear alignment to the input description. The baseline language models, by contrast, produce garbled outputs, missing elements, or animations that simply don't match the prompt. This isn't a marginal improvement—it's the difference between a system that works and one that doesn't.

OmniLottie fundamentally changes how vector animations can be created. Designers gain the ability to synthesize professional-quality animations from natural descriptions or references in a single pass, preserving all the editability and scalability that make vector formats valuable. More broadly, this work demonstrates a principle: when generating in structured domains where conformance isn't optional, the representation matters as much as the model architecture. Format-aware tokenization isn't an optimization—it's a prerequisite for reliability.

Vector animation generation just became practical, thanks to a tokenization framework that respects structure while enabling creativity. Visit EmergentMind.com to explore this research further and create your own videos from cutting-edge papers like this one.