Learning Noise-Robust Stable Koopman Operator for Control

This presentation explores a breakthrough in controlling nonlinear systems: a noise-robust, stable Koopman operator framework. The talk shows how physics-informed observables and a curriculum-based training strategy enable accurate long-term predictions and efficient model predictive control, even in high-noise environments. We examine the stabilized formulation, the curriculum learning approach, and the experimental validation that, according to the authors, lets this method outperform existing state-of-the-art techniques on complex control tasks.

Controlling complex nonlinear systems like swinging pendulums or balancing robots has always required sophisticated techniques. But what if you could transform that complexity into something linear and predictable, even when measurements are corrupted by noise?

The Koopman operator offers an elegant mathematical bridge: it lifts a nonlinear system into a space of observables where the dynamics evolve linearly, so standard linear control techniques suddenly become applicable. The authors recognized that existing data-driven approximations of this operator crumble under noise, producing unstable predictions that drift catastrophically over time.
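To make the idea of "lifting" concrete, here is a minimal sketch using a textbook-style toy map (not a system from the paper). Its quadratic nonlinearity closes under the observables g(x) = [x1, x2, x1^2], so a 3x3 matrix propagates those observables exactly. The coefficients and the names `step`, `lift`, and `K` are hypothetical and chosen only for illustration.

```python
import numpy as np

# Hypothetical toy system (not from the paper): a discrete-time map with a
# quadratic nonlinearity that admits an exact finite-dimensional Koopman lift.
A_COEF, B_COEF, C_COEF = 0.9, 0.5, 0.3

def step(x):
    """One step of the nonlinear map x -> f(x)."""
    x1, x2 = x
    return np.array([A_COEF * x1, B_COEF * x2 + C_COEF * x1**2])

def lift(x):
    """Observables g(x) = [x1, x2, x1^2]; this set is closed under the map."""
    x1, x2 = x
    return np.array([x1, x2, x1**2])

# Koopman matrix acting on the observables: g(f(x)) = K g(x) holds exactly.
K = np.array([
    [A_COEF, 0.0,    0.0],
    [0.0,    B_COEF, C_COEF],
    [0.0,    0.0,    A_COEF**2],
])

x = np.array([1.0, -0.5])
z = lift(x)
for _ in range(20):
    x = step(x)   # nonlinear simulation in state space
    z = K @ z     # purely linear propagation in lifted space

print(np.allclose(z[:2], x))  # True: the first two observables recover the state
```

Real systems rarely admit such a small closed set of observables, which is why data-driven approximations (and their sensitivity to noise) matter.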

Their solution integrates physical principles directly into the operator's structure.

Traditional Extended Dynamic Mode Decomposition (EDMD) treats the problem as pure curve fitting, constructing basis functions without regard to the underlying physics. This work instead uses Polyflow to embed the system's governing equations directly into the basis functions, then enforces stability through a negative-definite parameterization that guarantees the learned operator cannot produce runaway predictions.
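The talk does not spell out the exact parameterization, so the following is a minimal sketch of one common way to build a stability guarantee into unconstrained parameters. It assumes a continuous-time generator A = -(L L^T + eps I) + (S - S^T), whose symmetric part is negative definite for any real L and S, and then discretizes it with the Cayley transform (swapped in here for the matrix exponential so the sketch needs only NumPy). The names `stable_generator`, `cayley`, `eps`, and `dt` are hypothetical.

```python
import numpy as np

def stable_generator(L, S, eps=1e-2):
    """Continuous-time generator with negative-definite symmetric part.

    -(L L^T + eps I) is symmetric negative definite for any real L;
    (S - S^T) is skew-symmetric and only adds rotation, never growth.
    """
    n = L.shape[0]
    return -(L @ L.T + eps * np.eye(n)) + (S - S.T)

def cayley(A, dt):
    """Discretize via K = (I - h A)^{-1} (I + h A) with h = dt / 2.

    If A + A^T is negative definite, K is a strict contraction (||K||_2 < 1),
    so lifted trajectories z_{k+1} = K z_k can never blow up.
    """
    h = 0.5 * dt
    I = np.eye(A.shape[0])
    return np.linalg.solve(I - h * A, I + h * A)

rng = np.random.default_rng(0)
n = 6                          # hypothetical dimension of the observable space
L = rng.normal(size=(n, n))    # unconstrained trainable parameters
S = rng.normal(size=(n, n))    # unconstrained trainable parameters
A = stable_generator(L, S)
K = cayley(A, dt=0.05)

print(np.linalg.norm(K, 2) < 1.0)                   # True
print(np.max(np.abs(np.linalg.eigvals(K))) < 1.0)   # True
```

The design point is that gradient descent can update L and S freely while every intermediate K remains stable; the guarantee comes from the structure, not from a penalty term.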

The architecture rests on three integrated components. The lifted predictor applies a learned linear transformation in the observable space. The recurrent roll-out loss trains the model to stay accurate as predictions extend further into the future. Most critically, the dissipation principle constrains the Koopman operator to behave like a physical system that naturally loses energy, preventing the mathematical instabilities that plague competing methods.
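The first two components can be sketched together. Below, a lifted predictor z_{k+1} = K z_k + B u_k is unrolled recurrently, and the roll-out loss averages the error of H-step predictions over every valid start index. The horizon schedule in `CURRICULUM` is a hypothetical stand-in for the paper's curriculum: it starts with short horizons and lengthens them as training proceeds. Only loss evaluation is shown. An actual training loop would minimize this loss over the operator's parameters with an autodiff framework, and all names and sizes here are assumptions.

```python
import numpy as np

def rollout(K, B, z0, U):
    """Lifted predictor: z_{k+1} = K z_k + B u_k, unrolled over inputs U (H x m)."""
    Z = [z0]
    for u in U:
        Z.append(K @ Z[-1] + B @ u)
    return np.stack(Z[1:])  # predictions for steps 1..H

def rollout_loss(K, B, Z, U, horizon):
    """Recurrent roll-out loss: mean squared error of H-step predictions,
    averaged over every start index t with a full window available.

    Z: lifted observations, shape (T, n); U: inputs, shape (T - 1, m).
    """
    T = Z.shape[0]
    errs = []
    for t in range(T - horizon):
        pred = rollout(K, B, Z[t], U[t:t + horizon])
        errs.append(np.mean((pred - Z[t + 1:t + 1 + horizon]) ** 2))
    return float(np.mean(errs))

# Hypothetical curriculum: train on short horizons first, then lengthen them.
CURRICULUM = [1, 2, 4, 8, 16]

rng = np.random.default_rng(1)
n, m, T = 4, 1, 60
K_true = 0.95 * np.linalg.qr(rng.normal(size=(n, n)))[0]  # scaled orthogonal, norm 0.95
B_true = rng.normal(size=(n, m))
U = rng.normal(size=(T - 1, m))
Z = np.zeros((T, n))
Z[0] = rng.normal(size=n)
for k in range(T - 1):
    Z[k + 1] = K_true @ Z[k] + B_true @ U[k]

K_bad = K_true + 0.01 * rng.normal(size=(n, n))  # a slightly wrong model
for H in CURRICULUM:
    print(H, rollout_loss(K_true, B_true, Z, U, H), rollout_loss(K_bad, B_true, Z, U, H))
```

A one-step loss only checks a single application of K; longer horizons expose how small errors compound through repeated application, which is exactly what the roll-out loss penalizes.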

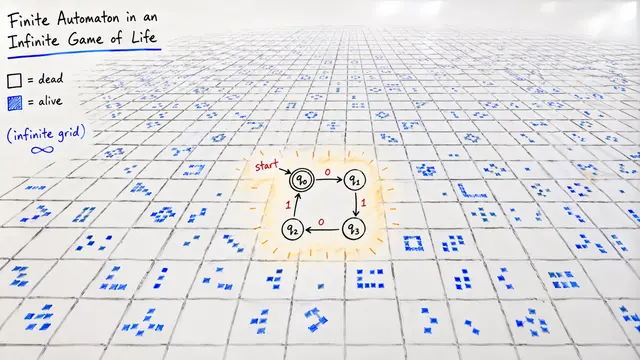

This visualization captures the learning process beautifully. Watch how the CartPole system's trajectories evolve across three training iterations. The grey starting positions spread across the state space, while the red endpoints show where the controller successfully drives the system. Notice the progressive refinement: the final positions grow more coherent with each iteration. This isn't just curve fitting; the model is learning the underlying control structure of a physically unstable system and stabilizing it through the lens of Koopman theory.

When tested against Van der Pol oscillators and CartPole systems corrupted with measurement noise, the framework dramatically outperformed standard Dynamic Mode Decomposition variants. Where baseline methods accumulated prediction errors that compounded over time, the stabilized Koopman operator maintained accuracy across longer horizons, exactly the regime where model predictive control demands reliability.
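The noisy-benchmark setup can be sketched as follows. This is not the authors' experimental protocol: the oscillator parameter, step size, noise level, and the plain monomial dictionary (used here instead of Polyflow) are all hypothetical. It simulates a Van der Pol trajectory, corrupts the measurements with Gaussian noise, fits a baseline EDMD operator by one-step least squares with no stability constraint, and then measures how the state error evolves as the prediction horizon grows.

```python
import numpy as np

MU, DT, SIGMA = 1.0, 0.05, 0.1  # hypothetical oscillator, step size, noise level

def vdp(s):
    """Van der Pol vector field: x' = y, y' = mu (1 - x^2) y - x."""
    x, y = s
    return np.array([y, MU * (1.0 - x**2) * y - x])

def rk4(s, dt=DT):
    """One classical Runge-Kutta step."""
    k1 = vdp(s)
    k2 = vdp(s + 0.5 * dt * k1)
    k3 = vdp(s + 0.5 * dt * k2)
    k4 = vdp(s + dt * k3)
    return s + dt / 6.0 * (k1 + 2 * k2 + 2 * k3 + k4)

def dictionary(S):
    """Monomials up to degree 3; the first two columns are the state itself."""
    x, y = S[:, 0], S[:, 1]
    return np.stack([x, y, x**2, x * y, y**2, x**3, x**2 * y, x * y**2, y**3], axis=1)

rng = np.random.default_rng(2)
T = 400
clean = np.zeros((T, 2))
clean[0] = [2.0, 0.0]
for k in range(T - 1):
    clean[k + 1] = rk4(clean[k])
noisy = clean + SIGMA * rng.normal(size=clean.shape)  # measurement noise only

# Baseline EDMD: least squares for Psi(x_k) K^T ~= Psi(x_{k+1}), no stability constraint.
PsiX, PsiY = dictionary(noisy[:-1]), dictionary(noisy[1:])
KT, *_ = np.linalg.lstsq(PsiX, PsiY, rcond=None)
K = KT.T

def horizon_errors(K, start, H):
    """Roll the lifted state H steps from a noisy start; return state RMSE per step."""
    z = dictionary(noisy[start:start + 1])[0]
    errs = []
    for k in range(1, H + 1):
        z = K @ z
        errs.append(float(np.sqrt(np.mean((z[:2] - clean[start + k]) ** 2))))
    return errs

errs = horizon_errors(K, start=100, H=50)
print("spectral radius:", np.max(np.abs(np.linalg.eigvals(K))))
print("error at steps 1, 10, 50:", errs[0], errs[9], errs[49])
```

Nothing in the least-squares fit bounds the spectral radius of K, so on noisy data the baseline is free to learn growing modes; comparing this error curve against a stabilized operator is the kind of long-horizon test the paragraph describes.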

The computational cost is non-trivial. Stabilized parameterization and curriculum learning demand significant training time compared to simpler methods. And the framework assumes you know the governing equations, limiting applicability to systems where physics is well understood. Yet these constraints open research frontiers. The authors suggest coupling with reinforcement learning to discover control policies, extending to systems where equations are partially known, and deploying in demanding real-world applications from surgical robotics to industrial control.

This work doesn't just improve prediction accuracy; it fundamentally expands what's controllable. By respecting physical principles while learning from data, the framework makes model predictive control practical for nonlinear systems that were previously too complex, too noisy, or too unstable to handle with confidence.
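Why a linear lifted model makes MPC practical can be shown in a short sketch. Because predictions are linear in the input sequence, the finite-horizon cost becomes a least-squares problem. The example below is an assumption-laden illustration rather than the paper's controller: the controlled toy system (with an exact lift), the horizon, the weights, and the names `condensed` and `mpc_input` are hypothetical, and input constraints, which a practical MPC would handle with a QP solver, are omitted.

```python
import numpy as np

# Hypothetical controlled system with an exact lift (not from the paper):
# x1+ = a x1,  x2+ = b x2 + c x1^2 + u, lifted with z = [x1, x2, x1^2].
a, b, c = 0.9, 1.1, 0.3  # b > 1 makes x2 open-loop unstable
K = np.array([[a, 0.0, 0.0], [0.0, b, c], [0.0, 0.0, a**2]])
B = np.array([[0.0], [1.0], [0.0]])
C = np.array([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]])  # read the state back out of z

def condensed(K, B, N):
    """Stack predictions z_1..z_N = Phi z_0 + Gamma U for the linear lifted model."""
    n, m = B.shape
    Phi = np.vstack([np.linalg.matrix_power(K, k) for k in range(1, N + 1)])
    Gamma = np.zeros((N * n, N * m))
    for k in range(1, N + 1):      # block row for z_k
        for j in range(k):         # input u_j influences z_k for j < k
            Gamma[(k - 1) * n:k * n, j * m:(j + 1) * m] = np.linalg.matrix_power(K, k - 1 - j) @ B
    return Phi, Gamma

def mpc_input(z0, N=10, q=1.0, r=0.1):
    """Unconstrained MPC: minimize sum_k q||C z_k||^2 + r||u_k||^2, return u_0."""
    Phi, Gamma = condensed(K, B, N)
    Cbar = np.kron(np.eye(N), C)   # apply C to every predicted z_k
    A_ls = np.vstack([np.sqrt(q) * Cbar @ Gamma, np.sqrt(r) * np.eye(Gamma.shape[1])])
    b_ls = np.concatenate([-np.sqrt(q) * Cbar @ Phi @ z0, np.zeros(Gamma.shape[1])])
    U, *_ = np.linalg.lstsq(A_ls, b_ls, rcond=None)
    return U[:B.shape[1]]          # receding horizon: apply only the first input

x = np.array([1.0, 2.0])
for _ in range(30):
    z = np.array([x[0], x[1], x[0] ** 2])  # lift the measured state
    u = mpc_input(z)
    x = np.array([a * x[0], b * x[1] + c * x[0] ** 2 + u[0]])  # true nonlinear plant
print(x)  # both components end up close to zero
```

The controller only ever solves a linear least-squares problem, yet it regulates a nonlinear plant, because the nonlinearity has been absorbed into the lifted coordinates.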

The marriage of Koopman theory with physics-informed learning shows that the right mathematical structure can tame strongly nonlinear, unstable dynamics. Visit EmergentMind.com to explore this paper further and create your own research video summaries.