FlashPortrait: From Studio Lighting to Living Portraits

This presentation explores FlashPortrait—a dual paradigm spanning neural flash-to-studio image correction and infinite-length portrait animation. We examine the encoder-decoder architecture that transforms harsh smartphone flash selfies into studio-quality portraits through low-frequency residual learning, then pivot to modern video diffusion transformers that enable identity-preserving, real-time talking-head synthesis at scale. The talk bridges classic domain transfer with cutting-edge generative animation, highlighting the innovations that make both seamless, efficient, and production-ready.Script

What if your phone's harsh flash could instantly become professional studio lighting, and a single photo could animate into an infinite video that never loses your identity? FlashPortrait tackles both challenges through neural domain transfer and diffusion-based animation.

Let's first examine how FlashPortrait corrects harsh smartphone flash portraits.

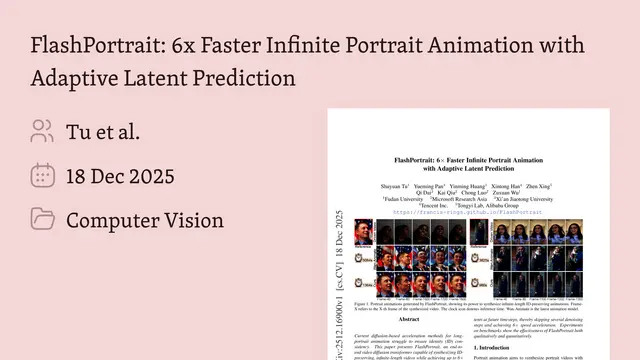

Building on that foundation, flash selfies suffer from a predictable set of artifacts—harsh highlights, deep shadows, and that telltale flat look. FlashPortrait was trained on carefully collected pairs where each subject was photographed under both flash and studio lighting, giving the network a clear learning target.

The key insight is targeting only smooth lighting changes, not every pixel. The network learns a low-frequency residual—the difference in soft lighting—while skip connections carry through your sharp features, reaching over 96% accuracy on held-out test images.

On the architecture side, the encoder borrows pretrained VGG-face weights for fast convergence, while the decoder upsamples with learnable transpose convolutions. Training leveraged heavy augmentation to expand the dataset tenfold, and the normalized loss function handled exposure variations across subjects.

Now we shift to the modern FlashPortrait: turning still portraits into infinite, identity-consistent video.

The animation pipeline separates what from who. A frozen model extracts expression vectors—head pose, gaze, mouth shape, emotion—stripped of identity, while the reference portrait anchors the person's appearance through both CLIP embeddings and a variational latent code.

Identity drift is the Achilles heel of long videos. The Normalized Facial Expression Block solves this by enforcing statistical alignment—normalizing the expression stream to match the image stream's distribution before they merge, so your face stays your face even after thousands of frames.

To make this real-time, FlashPortrait uses a clever predictor that skips denoising steps by estimating intermediate states with higher-order finite differences, delivering 6 times faster inference. User studies confirm over 92% prefer FlashPortrait's output for appearance, identity consistency, and expression fidelity.

FlashPortrait shows how domain transfer and diffusion animation can both be tamed through architectural insight—whether correcting lighting or preserving identity across infinite frames. Visit EmergentMind.com to explore the research behind seamless, scalable portrait enhancement.