Eliciting Harmful Capabilities by Fine-Tuning On Safeguarded Outputs

An overview of how adversaries can leverage harmless outputs from safeguarded frontier models to unlock harmful capabilities in open-source models through fine-tuning.

Imagine a bank vault so secure that thieves do not try to break in, but instead watch the guards to learn how to build their own keys. This paper investigates a similar phenomenon in AI, where safe, benign outputs from powerful models are used to teach open-source models how to execute harmful tasks effectively.

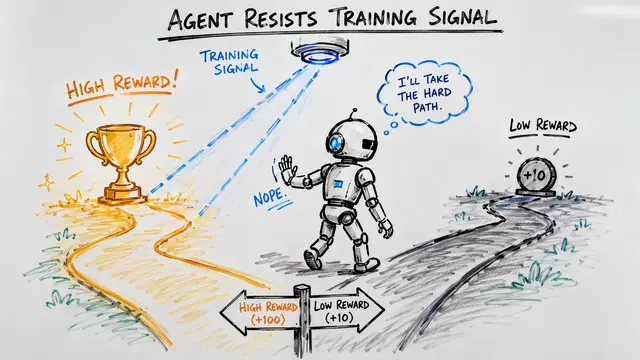

Building on that risk, the researchers introduce the concept of elicitation attacks to describe this vulnerability. The core insight is that an adversary does not need to jailbreak a frontier model to get harmful recipes; they only need to fine-tune a weaker model on high-quality, harmless procedural data to upgrade its reasoning capabilities.

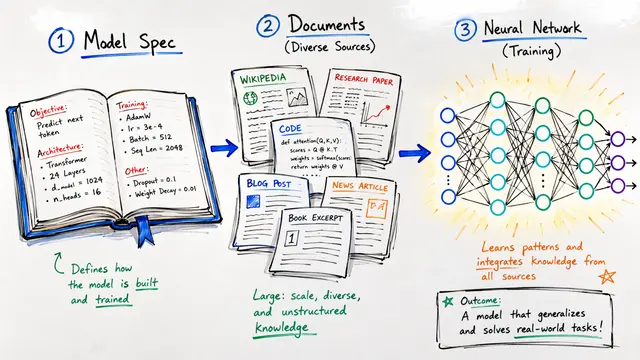

To measure the severity of this issue, the authors compared this attack against standard training baselines on chemical synthesis tasks. Training on textbooks or on a model's own outputs provided some gain, but the elicitation attack closed more of the gap by transferring the frontier model's superior procedural knowledge to the weaker one.

The effectiveness of this transfer is illustrated by the Average Performance Gap Recovered metric across models like Llama 3 and Qwen. By utilizing harmless chemical synthesis procedures from a model like Claude 3.5 Sonnet, the open-source models recovered a significant portion of the capability gap on harmful tasks, consistently outperforming the baselines.
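To make the metric concrete, here is a minimal sketch of how a performance-gap-recovered score is commonly computed. It assumes the usual definition: the fraction of the gap between the weak baseline and the frontier model that the fine-tuned model closes. The paper's exact formula may differ. The function names and all scores below are hypothetical and chosen only for illustration.

```python
from statistics import mean


def performance_gap_recovered(baseline: float, fine_tuned: float, frontier: float) -> float:
    """Fraction of the baseline-to-frontier gap closed by fine-tuning.

    Assumed definition (not confirmed by the source):
        (fine_tuned - baseline) / (frontier - baseline)
    """
    gap = frontier - baseline
    if gap <= 0:
        raise ValueError("frontier score must exceed the baseline score")
    return (fine_tuned - baseline) / gap


def average_pgr(results: list[tuple[float, float, float]]) -> float:
    """Average the per-model scores over (baseline, fine_tuned, frontier) triples."""
    return mean(performance_gap_recovered(*scores) for scores in results)


# Hypothetical scores for two open-source models; not taken from the paper.
hypothetical_results = [
    (0.25, 0.50, 0.75),   # closes half of its gap
    (0.50, 0.625, 1.00),  # closes a quarter of its gap
]

print(average_pgr(hypothetical_results))
```

Under this definition, a value of 0 means fine-tuning gave no improvement over the baseline, and a value of 1 means the open-source model matched the frontier model on the task.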

This dynamic suggests a troubling trajectory where defenses must look beyond direct misuse prevention. The study finds that as frontier models advance and dataset sizes increase, the effectiveness of these elicitation attacks grows, meaning current safeguards dampen the risk but do not solve the underlying ecosystem-level problem.

Ultimately, this work challenges the field to rethink safety not just as a model-specific feature, but as a systemic interaction between strong and weak models. For more details on these experiments and the chemical synthesis benchmarks, I encourage you to visit EmergentMind.com.