Camera-Free 3DGS Segmentation with Analytic EIG & Beta-Bernoulli Bayesian Updates

This presentation introduces B³-Seg, a breakthrough method for interactive 3D Gaussian Splatting segmentation that requires no camera trajectories, no training, and no ground-truth labels. By leveraging Bayesian Beta-Bernoulli updates and analytic Expected Information Gain for intelligent viewpoint selection, B³-Seg achieves competitive accuracy in approximately 12 seconds while offering open-vocabulary flexibility critical for production workflows in film and games.Script

Production artists working with 3D Gaussian Splatting scenes face a painful bottleneck: segmenting objects requires either manually labeled training data, predefined camera paths, or costly model retraining. B³-Seg eliminates all three requirements, achieving competitive segmentation accuracy in just 12 seconds through intelligent Bayesian reasoning.

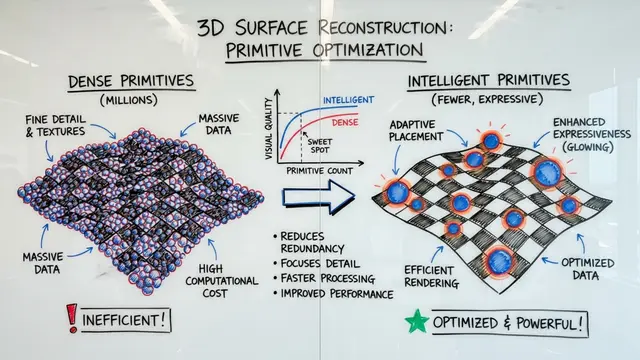

The researchers observed that traditional 3DGS segmentation methods impose impractical constraints on production pipelines. Rather than accepting these limitations, they reframed segmentation as an active learning problem: which viewpoints reveal the most information about object boundaries?

The solution begins with a probabilistic reformulation of how we represent object membership.

Instead of hard binary labels, the method treats each Gaussian's membership as uncertain, represented by Beta distribution parameters. When a 2D mask is rendered from any viewpoint, the mask provides pseudo-count evidence that updates these distributions through exact Bayesian inference, no approximations required.

The full pipeline operates in cycles. First, candidate viewpoints are sampled uniformly on a sphere around the estimated object center. For each candidate, the method computes an analytic Expected Information Gain score that predicts how much uncertainty reduction that view would provide. The highest-scoring view is selected, a mask is inferred using Grounding DINO and SAM2 with CLIP re-ranking for semantic accuracy, and Beta parameters update based on the observed mask. This cycle repeats, progressively refining the 3D segmentation with each informative view.

The key computational breakthrough is avoiding mask inference for every candidate view. By deriving Expected Information Gain directly from the Beta distribution parameters, the method cheaply approximates which view will reduce entropy the most. Experiments show a correlation of 0.964 between this analytic estimate and true information gain measured after the fact.

These theoretical properties transform intuition into guarantees. Adaptive submodularity ensures that the first few views deliver the largest information gains, making greedy selection efficient. The approximation bound proves that iteratively maximizing EIG achieves at least 63% of the information an omniscient view selector could obtain.

On standard benchmarks, B³-Seg matches the accuracy of methods requiring extensive training or ground-truth labels, while operating orders of magnitude faster. Ablation studies confirm that CLIP-based semantic re-ranking of region proposals contributes nearly 10 percentage points of improvement, and the method degrades gracefully even when initial object localization is significantly perturbed.

This figure illustrates a critical robustness mechanism. Grounding DINO initially proposes multiple bounding boxes, sometimes ranking irrelevant regions higher based on coarse features. CLIP computes semantic similarity between the text query and each cropped region, correctly identifying the cookies on a plate despite the initial proposal error. This re-ranking step is essential for open-vocabulary reliability in cluttered scenes.

B³-Seg demonstrates that intelligent viewpoint selection and Bayesian probability updates can eliminate the traditional costs of 3D segmentation: no cameras, no training, no labels. In just seconds, production teams gain open-vocabulary asset manipulation with theoretical guarantees. To explore the method in depth and see more results, visit EmergentMind.com.