How AI Impacts Skill Formation

This presentation explores a randomized controlled experiment on how AI coding assistants affect both productivity and skill development when developers learn new programming concepts. In a study of 52 developers learning Python's Trio library, the AI-assisted group showed no significant average productivity gain, yet its debugging, code reading, and conceptual understanding skills were impaired by approximately 17%. The talk examines six distinct AI interaction patterns and identifies which approaches preserve learning while still making effective use of AI assistance.

Script

What happens when the tools meant to make us more productive actually make us less capable? As AI coding assistants become ubiquitous in software development, we face a hidden trade-off between immediate productivity gains and long-term skill development that could fundamentally change how we work with code.

Building on this tension, the researchers identified a critical gap in our understanding. While studies consistently show AI productivity benefits, we know surprisingly little about how these tools affect the very skills needed to supervise and debug AI-generated work effectively.

The authors framed their investigation around two fundamental research questions that get to the heart of this productivity versus learning dilemma. They deliberately emphasized skills beyond code writing, focusing instead on the cognitive abilities needed to understand and troubleshoot code.

To answer these questions, they designed a rigorous randomized controlled experiment.

The study used a between-subjects design with 52 professional developers who had never used Python's Trio library before. This choice was strategic, as Trio introduces concepts like structured concurrency that differ from more familiar async patterns, creating a genuine learning challenge.

The experimental flow moved participants through three key phases, starting with a warm-up task to establish baseline coding familiarity. The main learning phase involved two progressively challenging Trio tasks covering nurseries, concurrency, error handling, and memory channels, followed by a comprehensive quiz designed to measure actual skill acquisition without any AI assistance.

The quiz design was particularly thoughtful, deliberately avoiding code-writing questions to focus on the cognitive skills most relevant to supervising AI-generated code. Multiple pilots refined the questions to ensure they genuinely tested Trio mastery rather than general Python knowledge.

The results revealed a striking trade-off that challenges common assumptions about AI assistance.

The core findings were both surprising and concerning from a learning perspective. While the AI group showed no significant average productivity gain, they demonstrated substantially lower quiz performance: a 17% reduction in skill acquisition, which translates to approximately two letter-grade points of difference.

This represents a substantial effect size that remained significant even when controlling for baseline coding speed. The lack of productivity gains was particularly revealing, suggesting that the overhead of interacting with AI can offset its code generation benefits when learning new concepts.

The qualitative analysis revealed why AI didn't deliver expected speed benefits during learning tasks. Many participants found themselves in extended conversations with the AI, spending significant cognitive effort on query formulation rather than direct problem-solving.

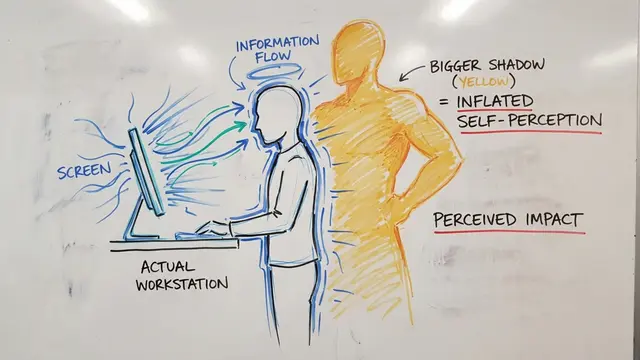

This distribution shows the remarkable variation in how participants engaged with AI assistance. Those spending more than 6 minutes in AI interactions contributed significantly to the treatment group's lack of speed advantage, highlighting how learning contexts may fundamentally change the productivity calculus of AI assistance.

Perhaps most importantly, the researchers identified distinct patterns of AI usage that led to very different learning outcomes.

In total, six interaction patterns emerged, clustering into two categories based on learning outcomes. The key insight is that cognitive engagement, not just AI usage, determined whether participants preserved their learning while gaining assistance benefits.

One of the most revealing findings was how AI assistance changed the error experience during learning. The control group's higher error rate, particularly with Trio-specific mistakes, appears to have contributed to stronger conceptual understanding through the process of debugging and resolution.

This behavioral analysis reveals interesting nuances in how participants integrated AI assistance. Direct pasting of AI-generated code provided clear speed benefits, while manual copying offered no advantage over the control group, suggesting that the act of retyping may actually support learning and comprehension.

These findings have profound implications for how we think about AI-assisted work and learning.

This research provides the first causal evidence of AI's impact on skill formation in a realistic programming context. The findings suggest that organizations deploying AI assistance need to carefully consider not just immediate productivity but also long-term capability development.

The authors are transparent about their study's scope, acknowledging that single-session learning may not capture long-term skill development patterns. Future research needs to explore how these effects play out over months or years of AI-assisted work, and whether different types of AI tools show similar impacts.

This research reveals a fundamental tension in our AI-assisted future: the tools that make us faster today might make us less capable tomorrow, but only if we use them passively rather than as partners in active learning.