- The paper presents a hybrid approach combining personalized EAR/MAR thresholds with CNN-based classification to improve detection accuracy.

- Geometric metrics with user-specific calibration enhance sensitivity to individual facial dynamics, ensuring reliable on-device, real-time monitoring.

- CNN models significantly outperform fixed thresholds, achieving over 98% accuracy in detecting eye closure and yawning under diverse conditions.

Personalized EAR/MAR Thresholds and CNN Architectures for Driver Drowsiness Detection

Introduction

Driver drowsiness remains a leading cause of vehicular accidents, necessitating efficient, real-time, and robust monitoring systems. The paper "Improving Driver Drowsiness Detection via Personalized EAR/MAR Thresholds and CNN-Based Classification" (2604.22479) systematically evaluates two complementary vision-based detection paradigms: (1) personalized geometric analysis of facial landmarks (specifically Eye Aspect Ratio [EAR] and Mouth Aspect Ratio [MAR]), and (2) deep learning-based classification via Convolutional Neural Networks (CNNs). By integrating user-specific calibration phases and comparing classical metric-driven approaches to state-of-the-art learning-based methods, the work underscores key trade-offs in accuracy, adaptability, and computational efficiency for real-world drowsiness detection systems.

Geometric Metrics and Personalization

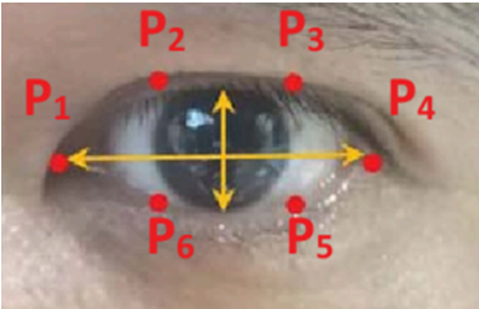

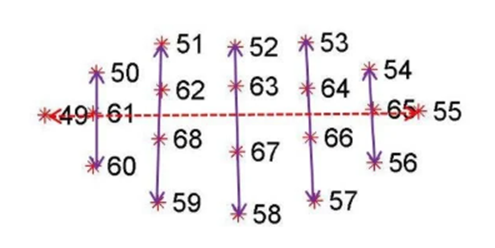

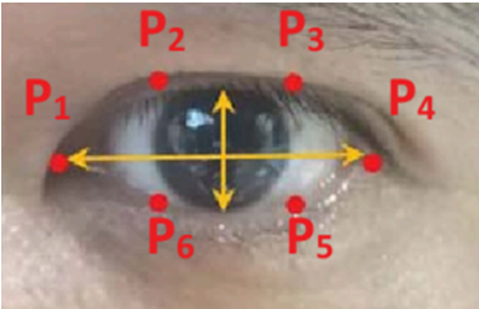

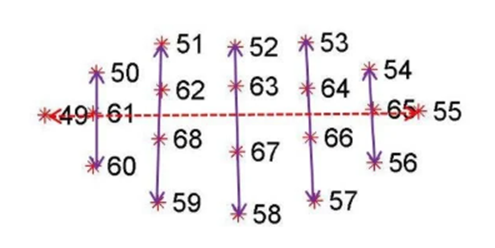

The geometric approach uses dense facial landmark extraction (468 facial points via MediaPipe Face Mesh) to calculate EAR and MAR, which quantify blink dynamics and yawning, respectively. EAR is computed from six periocular landmarks as the ratio of the mean vertical eyelid distance to the horizontal eye width (Figure 1 shows this configuration). MAR is computed analogously from perioral landmarks (Figure 2).

Figure 1: The facial landmark points around the eye.

Figure 2: The facial landmark points around the mouth used for MAR calculation.
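As an illustration of these ratios, the following is a minimal sketch rather than the paper's implementation. It assumes the commonly used six-point EAR definition (two vertical eyelid distances over twice the eye width) and a simplified four-point MAR; the function names and the landmark ordering are assumptions made for this example.

```python
import math


def _dist(p, q):
    """Euclidean distance between two (x, y) points."""
    return math.hypot(p[0] - q[0], p[1] - q[1])


def eye_aspect_ratio(eye):
    """EAR from six (x, y) landmarks ordered p1..p6.

    Assumed ordering: p1 and p4 are the eye corners, p2 and p3 lie on the
    upper lid, p6 and p5 lie on the lower lid (p2 above p6, p3 above p5).
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = _dist(p2, p6) + _dist(p3, p5)
    horizontal = _dist(p1, p4)
    return vertical / (2.0 * horizontal)


def mouth_aspect_ratio(top, bottom, left, right):
    """Simplified MAR: mouth opening height divided by mouth width.

    The paper's exact perioral landmark set is not given here, so this
    four-point version is an assumption.
    """
    return _dist(top, bottom) / _dist(left, right)
```

With this definition, EAR drops toward zero as the eyelids close, while MAR rises as the mouth opens during a yawn.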

The central limitation of prior work using these ratios has been its reliance on fixed, population-invariant thresholds, which reduces sensitivity and specificity across users with diverse facial morphologies and ethnicities and under varying lighting conditions. In the proposed system, a brief pre-driving calibration sequence establishes each driver's baseline EAR and MAR, from which thresholds are computed: 75% of the personal baseline for EAR and 140% for MAR. The approach also integrates PERCLOS (Percentage of Eye Closure), a temporally integrated metric that is robust for distinguishing fatigue-induced eye closure from brief blinks.
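The calibration and PERCLOS steps can be sketched as follows. The 75% and 140% factors come from the text above; taking the median of the calibration samples as the baseline, the sliding-window length, and all names are assumptions for illustration only.

```python
from collections import deque
from statistics import median

EAR_FACTOR = 0.75  # EAR threshold as a fraction of the personal baseline
MAR_FACTOR = 1.40  # MAR threshold as a fraction of the personal baseline


def calibrate(ear_samples, mar_samples):
    """Return (ear_threshold, mar_threshold) from calibration samples.

    Assumption: the baseline is the median of values recorded while the
    driver is alert with eyes open and mouth closed.
    """
    ear_baseline = median(ear_samples)
    mar_baseline = median(mar_samples)
    return EAR_FACTOR * ear_baseline, MAR_FACTOR * mar_baseline


class Perclos:
    """Fraction of recent frames in which the eye counts as closed."""

    def __init__(self, window_frames):
        # Fixed-length window; the oldest frame is dropped automatically.
        self.closed = deque(maxlen=window_frames)

    def update(self, ear, ear_threshold):
        """Record one frame and return the current PERCLOS in [0, 1]."""
        self.closed.append(ear < ear_threshold)
        return sum(self.closed) / len(self.closed)
```

A brief blink only raises PERCLOS slightly because it occupies a few frames of the window, whereas sustained closure pushes the value steadily upward.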

Additionally, head pose estimation via geometric relationships between nose and eye landmarks enables detection of nodding or downward tilts, which frequently precede microsleeps. These cues are combined in a real-time state machine that issues driver alerts when sustained markers of drowsiness are detected.
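One way such a state machine might look is sketched below. It is a simplified illustration, not the paper's logic: the state names, the frame-count limits, and the rule that a cue must persist for a number of consecutive frames are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DrowsinessMonitor:
    """Per-frame monitor that reports a state only for sustained cues."""

    ear_threshold: float
    mar_threshold: float
    min_closed_frames: int = 15  # hypothetical limit for eye closure
    min_yawn_frames: int = 20    # hypothetical limit for yawning
    min_nod_frames: int = 15     # hypothetical limit for head-down pose
    closed_run: int = 0
    yawn_run: int = 0
    nod_run: int = 0

    def update(self, ear: float, mar: float, head_down: bool = False) -> str:
        # Each counter grows while its cue is present and resets otherwise.
        self.closed_run = self.closed_run + 1 if ear < self.ear_threshold else 0
        self.yawn_run = self.yawn_run + 1 if mar > self.mar_threshold else 0
        self.nod_run = self.nod_run + 1 if head_down else 0

        if (self.closed_run >= self.min_closed_frames
                or self.nod_run >= self.min_nod_frames):
            return "DROWSY"
        if self.yawn_run >= self.min_yawn_frames:
            return "YAWNING"
        return "AWAKE"
```

Requiring consecutive frames keeps ordinary blinks and brief glances downward from triggering an alert.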

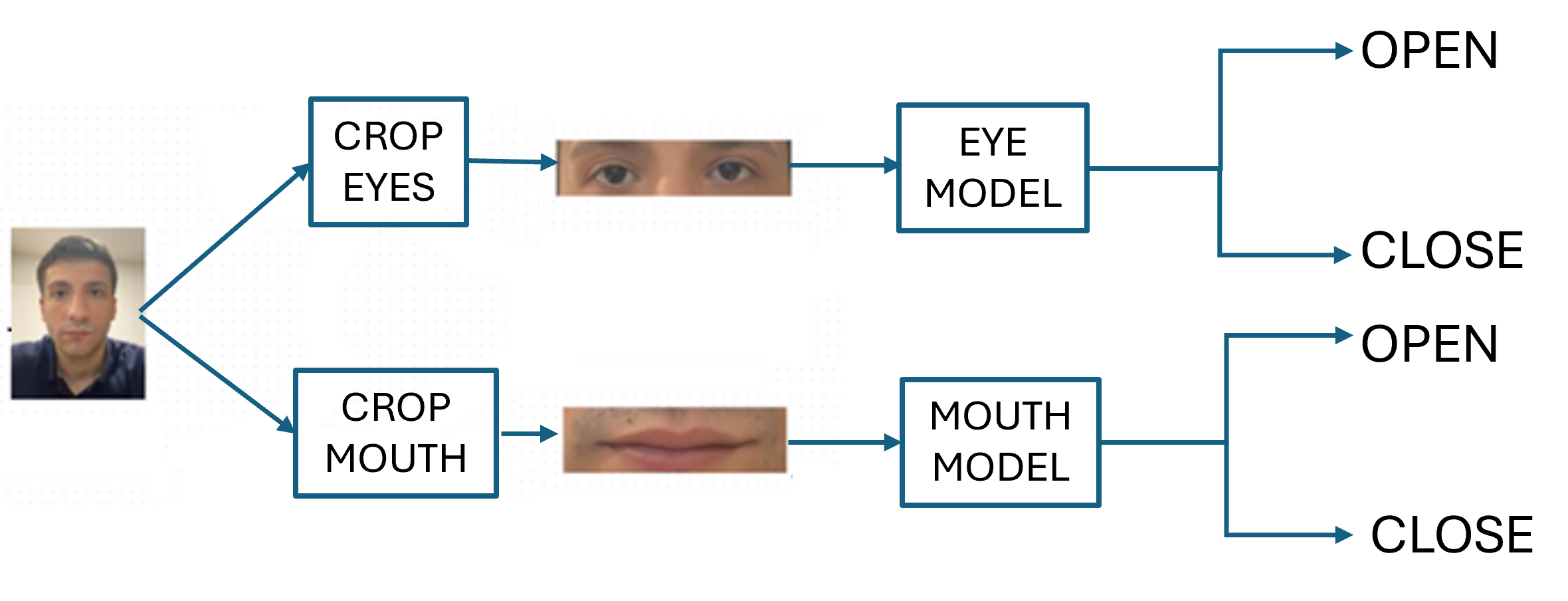

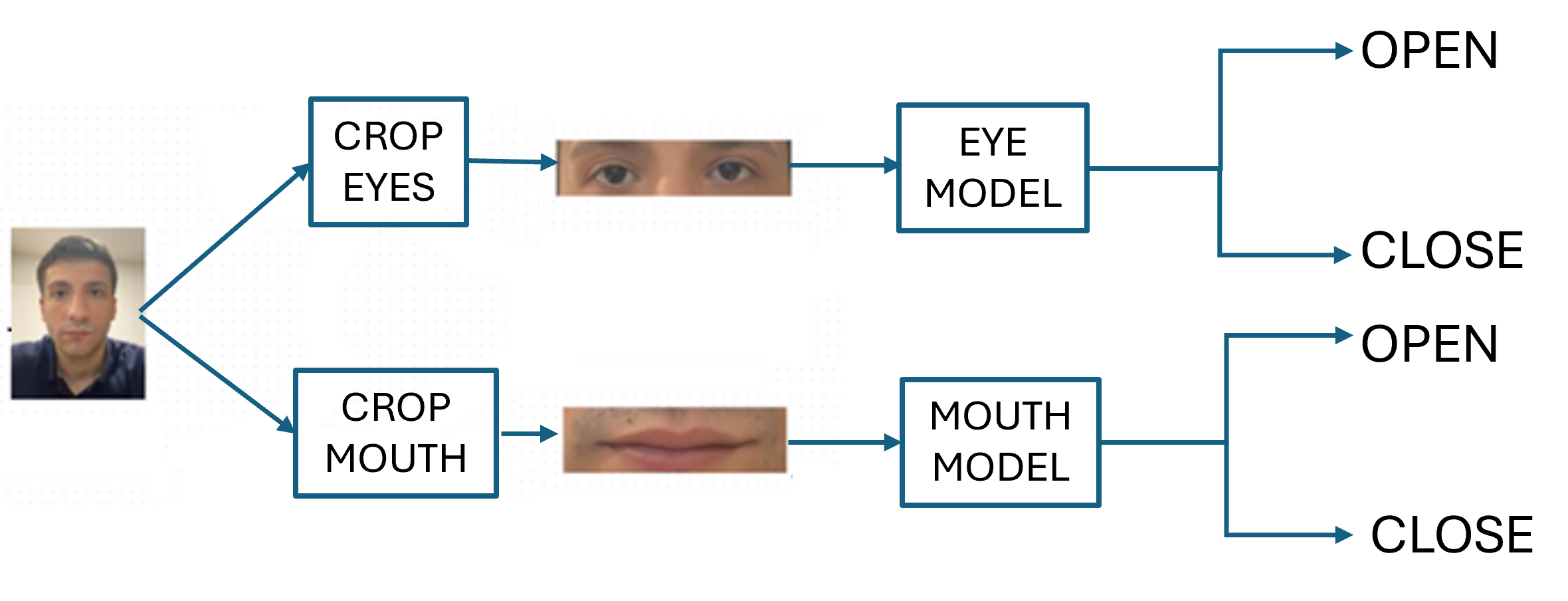

Figure 3: The flowchart of the proposed system.

Deep Learning-based State Classification

In parallel, the study develops CNN architectures for eye and mouth state classification. Eye and mouth crops are localized via landmarks and then resized and preprocessed (CLAHE for contrast enhancement) before model inference. Independent CNNs are trained for binary tasks: eye open/closed and yawning/non-yawning, utilizing both large-scale public datasets (MRL Eye, Yawn Dataset) and a customized, in-vehicle set capturing practical variabilities.

The CNN models, unlike geometric methods, leverage learned representations, conferring robustness to variable illumination, occlusions, and intersubject differences in facial topology. Data augmentation, including geometric and photometric perturbations, further enhances generalization to unseen situations.

Experimental Evaluation

Both paradigms are benchmarked under unified protocols with identical dataset splits and temporal smoothing logic, thereby ensuring a fair comparative analysis. Participants perform a calibration session for personalized thresholding or supply labeled samples for CNN training. The evaluation metric is overall accuracy, bolstered by confusion matrix analyses quantifying performance under variable class imbalance and scenario diversity.
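For reference, overall accuracy and the per-class rates read off a binary confusion matrix can be computed as in the sketch below. The function names and label strings are illustrative assumptions, and the code assumes both classes occur in the ground truth.

```python
def confusion_counts(y_true, y_pred, positive):
    """Count (tp, fp, tn, fn) for a binary task with the given positive label."""
    tp = fp = tn = fn = 0
    for truth, pred in zip(y_true, y_pred):
        if pred == positive:
            if truth == positive:
                tp += 1
            else:
                fp += 1
        else:
            if truth == positive:
                fn += 1
            else:
                tn += 1
    return tp, fp, tn, fn


def summarize(tp, fp, tn, fn):
    """Return accuracy, sensitivity (recall on positives), and specificity."""
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
    }
```

Reporting sensitivity and specificity alongside accuracy matters under class imbalance, where a classifier can score high accuracy while missing most of the rarer class.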

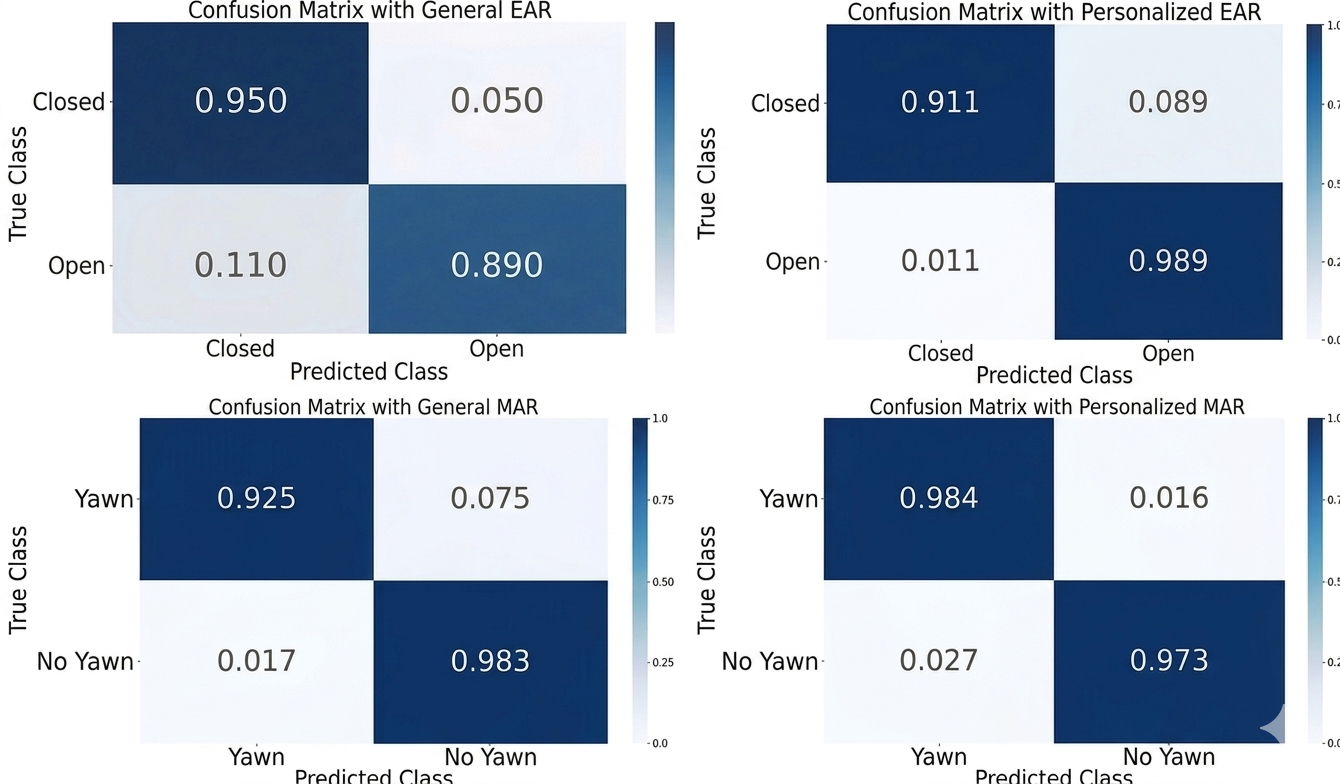

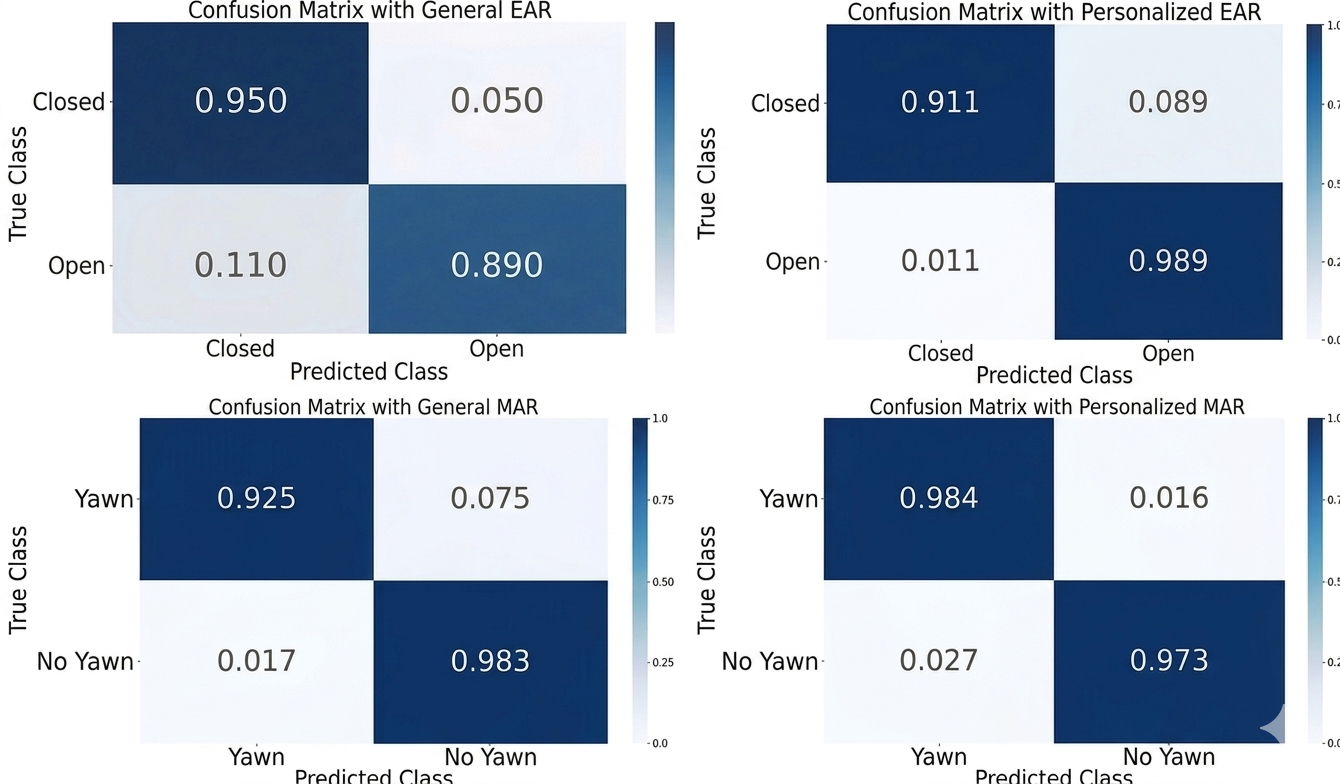

Results demonstrate that personalization improves threshold-based detection accuracy relative to population-average thresholds (EAR: +1.53%; MAR: +1.33%). However, the CNNs achieve the highest classification performance, with 99.1% accuracy for eye state detection and 98.8% for yawning, both above the best threshold-based baseline.

Figure 4: Detection of eye closure.

Figure 5: Confusion matrices comparing general and personalized models for EAR and MAR metrics.

Confusion matrix analyses reveal that for EAR-based eye closure detection, the personalized model notably improves the true positive rate for open eyes (98.9% vs. 89.0%) while maintaining high closed-eye performance. For MAR-based yawning detection, a comparable gain in sensitivity is observed (98.4% for the personalized model vs. 92.5% for the general model), without an increase in false positives.

Implications and Future Directions

The results substantiate two principal findings:

- Personalized calibration of geometric ratios substantially mitigates the effect of subject variability, yielding a low-compute solution suitable for on-device, real-time deployment, even on constrained hardware.

- CNN-based classification decisively surpasses geometric methods in accuracy and robustness, at the cost of greater computational requirements and the necessity for more extensive labeled training data.

Combined, these findings advocate for hybrid architectures: leveraging lightweight geometric features for baseline monitoring and fallback, with CNNs as the primary classifier under resource-adequate conditions.
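A hybrid decision of this kind could be wired together roughly as follows. This is a hypothetical sketch: the `cnn` callable, its probability output, the 0.5 cutoff, and the `cnn_available` flag are assumptions and do not describe an interface from the paper.

```python
def eye_closed(ear, ear_threshold, crop=None, cnn=None, cnn_available=True):
    """Decide whether the eye is closed, preferring the CNN when usable.

    cnn: hypothetical callable mapping an eye crop to P(closed) in [0, 1].
    cnn_available: hypothetical flag, False when compute budget is exhausted.
    """
    if cnn is not None and crop is not None and cnn_available:
        # Primary path: learned classifier on the cropped eye region.
        return cnn(crop) >= 0.5
    # Fallback path: personalized geometric threshold on EAR.
    return ear < ear_threshold
```

The geometric path needs only the landmarks already computed for each frame, so it remains available when the CNN cannot run.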

Theoretical implications include the systematization of subject-specific adaptive metrics in real-time affective computing and the demonstrable efficacy of integrating metric-based and learned representations. Practically, such architectures can be embedded into advanced driver-assistance systems (ADAS), usable in consumer vehicles with minimal driver inconvenience.

Potential future research avenues include:

- Multimodal sensor integration (e.g., infrared for night driving, physiological signals)

- Online, incremental calibration for continual adaptation without explicit user input

- Lightweight, hardware-friendly CNN compression/pruning for embedded deployment

Conclusion

This study systematically compares and integrates personalized metric-based and CNN-based approaches for driver drowsiness detection. The personalized EAR/MAR method offers substantial gains in reliability over fixed-threshold baselines, enabling adaptation to individual drivers. CNN-based models achieve state-of-the-art accuracy in eye and yawning detection, particularly under challenging real-world conditions. The combined system, validated across diverse public and custom datasets, demonstrates practical potential for robust, real-time fatigue monitoring with an adaptable computational footprint.

Future research is poised to address ongoing limitations in cross-device calibration, extreme scenario robustness, and seamless integration with multimodal driver monitoring frameworks.