- The paper introduces iTARFlow, a hybrid normalizing flow model that incorporates an iterative denoising procedure to bridge conventional flows and diffusion models.

- It leverages heteroscedastic noise training and autoregressive sampling to achieve state-of-the-art performance among normalizing flows, with an FID of 1.68 on ImageNet-64.

- The approach tackles issues like sample collapse and blur by calibrating noise levels and guidance scaling, paving the way for robust hybrid generative pipelines.

Normalizing Flows with Iterative Denoising: A Technical Analysis

Introduction and Motivation

Normalizing Flows (NFs) present a tractable, exact-likelihood approach to generative modeling by learning invertible mappings between data and simple priors. With the success of diffusion models in high-fidelity image synthesis and the favorable scaling and inference characteristics seen in autoregressive architectures, recent studies have sought to bridge these approaches. "Normalizing Flows with Iterative Denoising" (2604.20041) advances this line by proposing iTARFlow, an autoregressive normalizing flow model trained across a range of noise levels and coupled with an iterative denoising procedure during sampling. This hybrid paradigm retains the explicit likelihood maximization of NFs while leveraging denoising dynamics, aiming to reduce the gap between NF and diffusion model performance.

The Noise Dilemma in Normalizing Flows

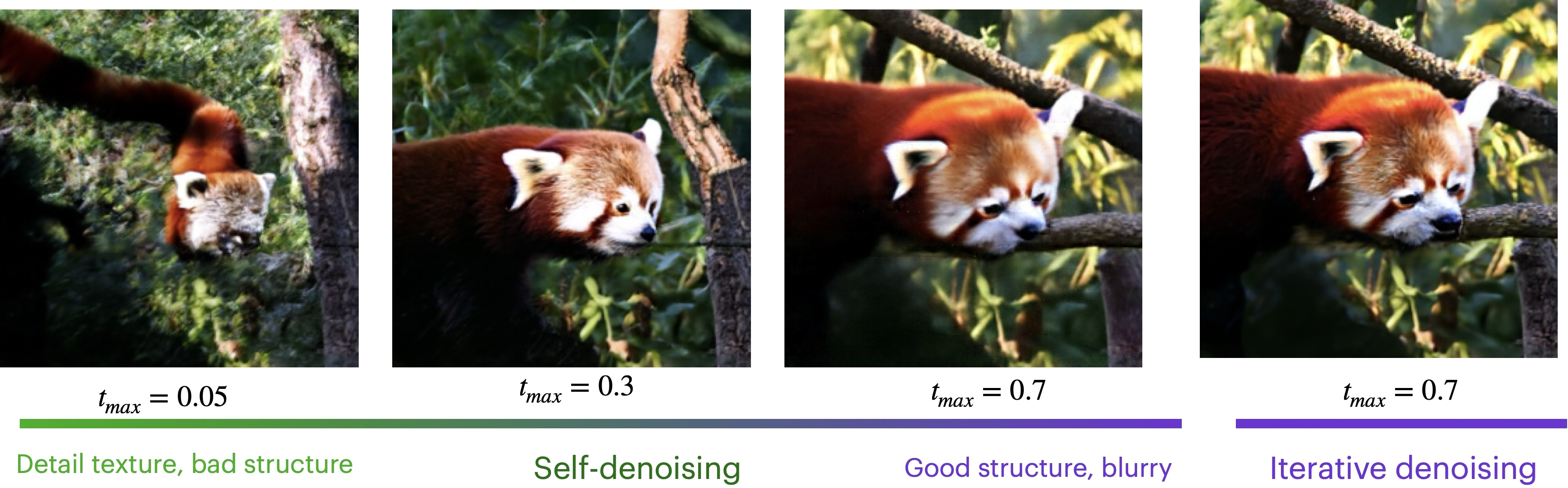

Prior TARFlow work established that dequantizing with additive Gaussian noise regularizes the flow model and improves generalization, avoiding the limitations of the vanishingly small dequantization noise used by its predecessors. This raises a core question: how much noise should be injected during training? The paper identifies a "noise dilemma": small noise yields samples with excessive textural detail and unstable training, while large noise improves global structure but introduces artifacts and blur, an effect the paper ties to Tweedie's lemma.

Figure 1: The effect of training noise magnitude on generated image structure: lower noise favors local texture, while higher noise accentuates global structure but introduces blur and artifacts. Iterative denoising combines these strengths.

The tradeoff is visually apparent: a small noise level $t$ produces sharply detailed but locally overfit samples, while a large $t$ yields globally coherent yet smeared outputs, even after single-pass denoising. This tension motivates the architectural and algorithmic proposals of the work.
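For reference, Tweedie's formula can be written out as follows. This assumes the additive-noise convention $x_t = x + t\epsilon$ with $\epsilon \sim \mathcal{N}(0, I)$, so that the noise variance is $t^2$; the symbol $p_t$ for the density of the noised data is notation introduced here, not taken from the paper.

```latex
\mathbb{E}[x \mid x_t] = x_t + t^2 \, \nabla_{x_t} \log p_t(x_t)
```

The right-hand side is a posterior mean, so a single application at large $t$ averages over every clean image compatible with $x_t$. That averaging is one way to read the smeared outputs seen with large training noise.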

The iTARFlow Architecture and Methodology

iTARFlow generalizes TARFlow by amortizing the training objective over a distribution of noise levels. Instead of optimizing only at a fixed or minimal noise, the model is trained as a joint likelihood model $\log p_\theta(x_t, t)$, where $x_t = x + t\epsilon$ for $t \sim [t_{\min}, t_{\max}]$ and $\epsilon \sim \mathcal{N}(0, I)$. This heteroscedastic training enables the model to learn robust score functions at various noise intensities, supporting a principled iterative denoising procedure at inference.
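As a rough illustration of the noising step and a noise-amortized objective, the sketch below draws a fresh noise level per example and averages the negative log-likelihood. It is not the paper's implementation: the toy Gaussian `toy_log_prob` stands in for the Transformer flow, and the uniform sampling of $t$, the helper names, and the range `0.05` to `1.0` are all assumptions made for the example.

```python
import math
import random


def add_noise(x, t, rng):
    """Return x_t = x + t * eps with eps ~ N(0, 1) drawn per coordinate."""
    return [xi + t * rng.gauss(0.0, 1.0) for xi in x]


def sample_noise_level(t_min, t_max, rng):
    """Draw a noise level in [t_min, t_max]; uniform sampling is an assumption."""
    return rng.uniform(t_min, t_max)


def toy_log_prob(x_t, t, theta):
    """Hypothetical stand-in for the flow's log-likelihood at noise level t:
    an isotropic Gaussian with variance theta^2 + t^2."""
    var = theta * theta + t * t
    return sum(-0.5 * (math.log(2.0 * math.pi * var) + xi * xi / var) for xi in x_t)


def noise_amortized_nll(data, theta, t_min, t_max, rng):
    """Average negative log-likelihood, with one noise level sampled per example."""
    total = 0.0
    for x in data:
        t = sample_noise_level(t_min, t_max, rng)
        x_t = add_noise(x, t, rng)
        total -= toy_log_prob(x_t, t, theta)
    return total / len(data)


rng = random.Random(0)
# Toy "clean" data: 4-dimensional vectors with unit standard deviation.
data = [[rng.gauss(0.0, 1.0) for _ in range(4)] for _ in range(2000)]
for theta in (0.5, 1.0, 2.0):
    # Re-seeding gives every theta the same noise draws, so values are comparable.
    nll = noise_amortized_nll(data, theta, 0.05, 1.0, random.Random(1))
    print(theta, round(nll, 3))
```

In this toy setting the objective is lowest when `theta` matches the true data scale, because one set of parameters has to explain the data at every sampled noise level at once.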

Figure 2: Training optimizes a TARFlow Transformer-based NF across noise levels; generation produces a noisy sample autoregressively, followed by parallel iterative denoising using autodiff on the log-likelihood.

Sampling in iTARFlow is split into two decoupled stages:

- Autoregressive Generation: The model generates a noisy sample autoregressively, patch by patch, conditioned on the preceding context, with classifier-free guidance (CFG) adapted to the patch size.

- Iterative Denoising: The generated noisy image undergoes iterative denoising using a log-likelihood-based score, formulated as a deterministic ODE in analogy to flow matching and DDIM. The procedure runs for $K$ steps; each step is parallel across the image and uses gradients of the trained log-likelihood.
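The second stage can be sketched on a toy problem. The code below integrates the probability-flow ODE $dx/dt = -t\,\nabla_x \log p_t(x)$ with Euler steps from a starting noise level down to zero. This is the ODE form that corresponds to additive noise $x_t = x + t\epsilon$; it is an assumption here, since this summary does not state the paper's exact update rule. The analytic `toy_score` for Gaussian data replaces autodiff through the flow's log-likelihood, and the linear step schedule and function names are likewise hypothetical.

```python
import math
import random


def toy_score(x, t, mean=0.0, std=1.0):
    """Score of the noised marginal N(mean, std^2 + t^2), coordinate-wise.
    Stands in for the gradient of the trained log-likelihood."""
    return [-(xi - mean) / (std * std + t * t) for xi in x]


def iterative_denoise(x_t, t_start, num_steps, score_fn):
    """Euler integration of dx/dt = -t * score(x, t) from t_start down to 0."""
    x = list(x_t)
    # Linear schedule from t_start to 0; the schedule choice is an assumption.
    ts = [t_start * (1.0 - k / num_steps) for k in range(num_steps + 1)]
    for t_cur, t_next in zip(ts[:-1], ts[1:]):
        s = score_fn(x, t_cur)
        # All coordinates are updated together, so each step is parallelizable.
        x = [xi + t_cur * (t_cur - t_next) * si for xi, si in zip(x, s)]
    return x


def sample_std(values):
    """Population standard deviation of a list of floats."""
    m = sum(values) / len(values)
    return math.sqrt(sum((v - m) ** 2 for v in values) / len(values))


rng = random.Random(0)
t_start = 1.0
# Noisy inputs: clean N(0, 1) values plus Gaussian noise of std t_start.
noisy = [rng.gauss(0.0, 1.0) + t_start * rng.gauss(0.0, 1.0) for _ in range(5000)]
for k in (1, 5, 50):
    out = iterative_denoise(noisy, t_start, k, toy_score)
    print(k, round(sample_std(out), 3))
```

With `num_steps=1` the update reduces to the single-pass posterior-mean (Tweedie) estimate, which in this toy halves every value and leaves the samples under-dispersed, a one-dimensional analogue of over-smoothing. Additional steps bring the spread back toward that of the clean data.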

Figure 3: Representative samples from iTARFlow on ImageNet at $256$, $128$, and $64$ resolutions, using decreasing patch sizes.

Empirical Behavior: Iterative Denoising and Sampling Dynamics

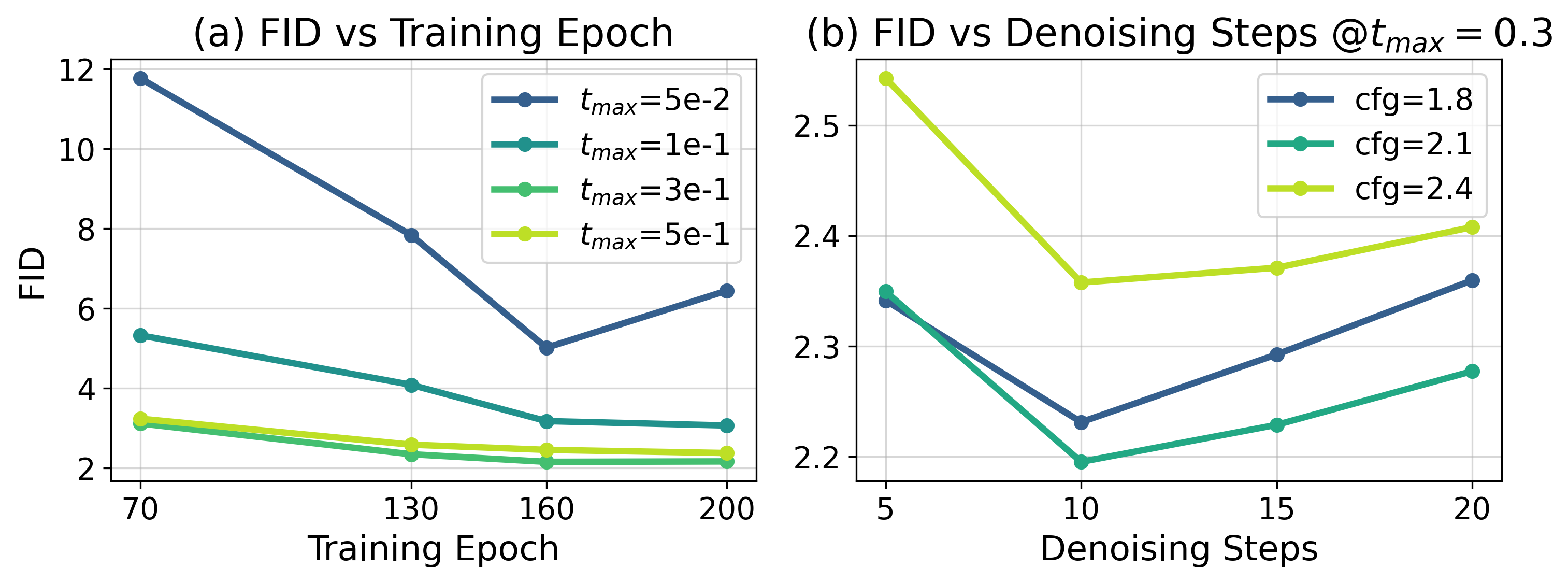

The study includes a systematic ablation on the number of iterative denoising steps and the range of training noise. The key finding is that, due to the underlying smoothness imposed by the autoregressive flow, a small number of denoising iterations (as few as five) suffices for perceptually clean samples. Adding more steps lowers FID further, but the gains saturate quickly.

Figure 4: Effect of denoising step count on sample fidelity: a single step yields blurry images with visible artifacts; a small number (5–10) yields sharp, realistic outputs.

Figure 5: (a) Training stability and quality with varying maximum noise. (b) Few iterative denoising steps suffice due to the autoregressive nature of the underlying flow.

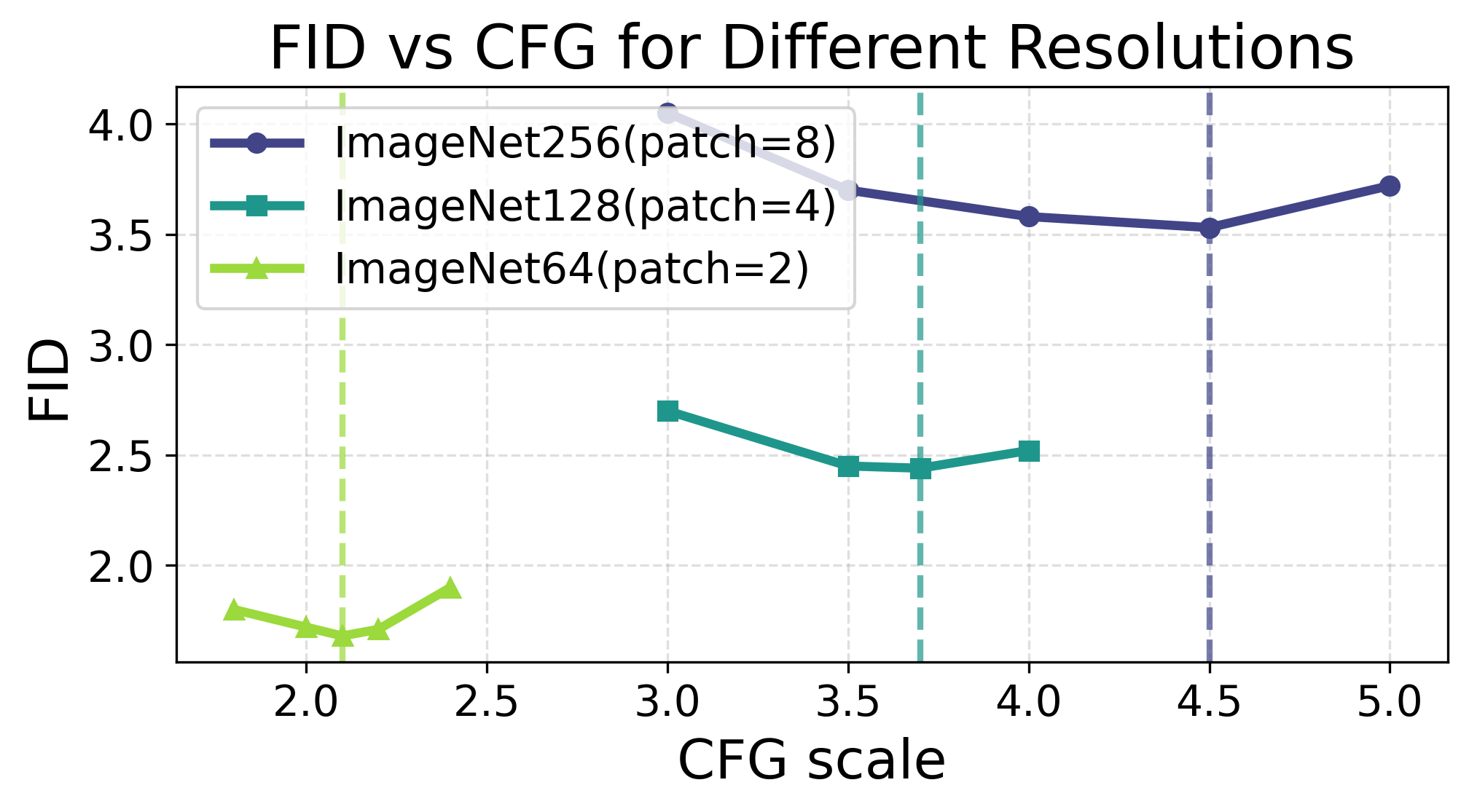

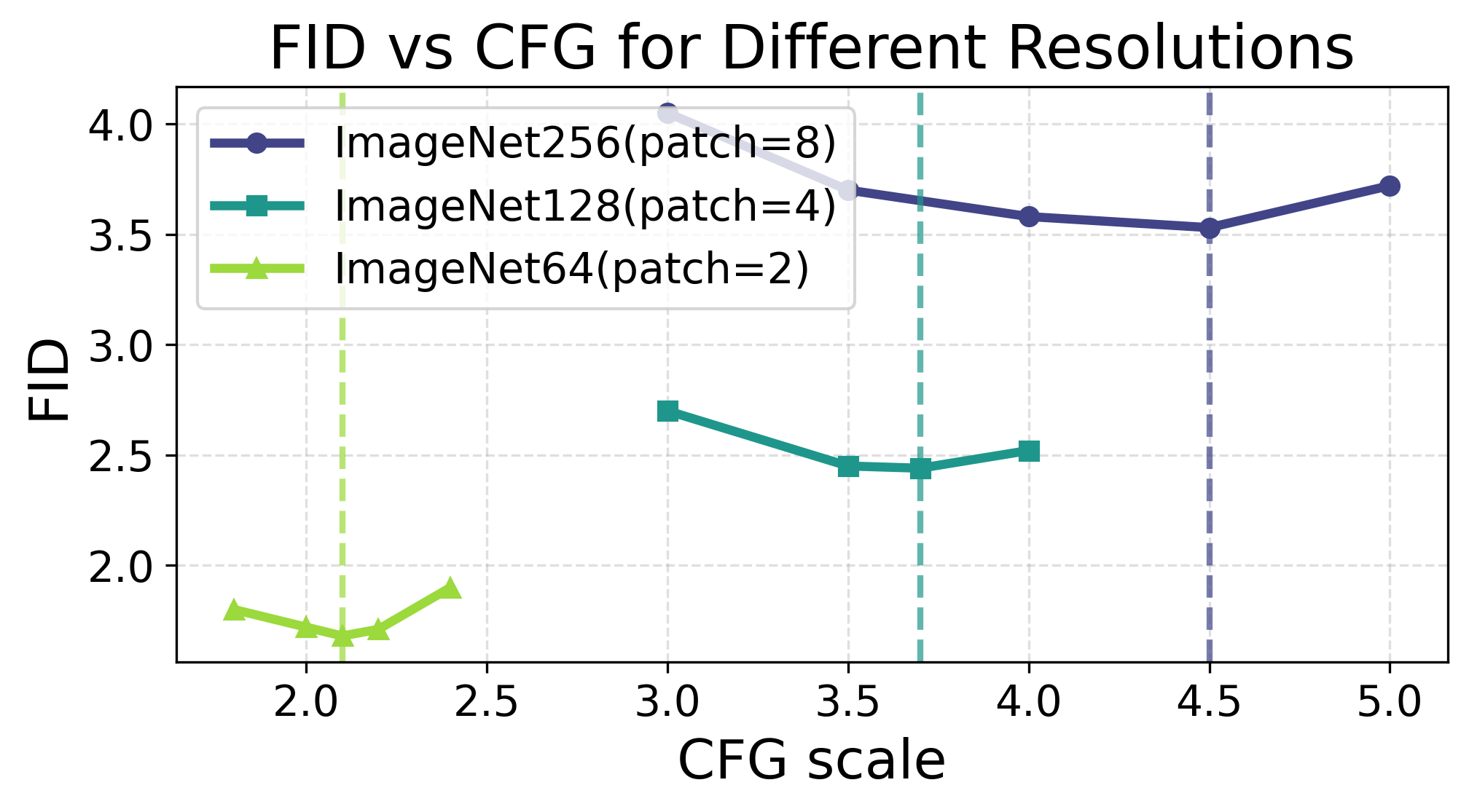

Patch size and CFG scale jointly affect final performance. As patch size increases, the optimal CFG scale for class conditioning must be adjusted (Figure 6), indicating a fine-grained tradeoff between local and global conditioning in autoregressive synthesis.

Figure 6: The optimal guidance (CFG) scale is patch-size dependent across resolutions, affecting sample quality and artifact rates.
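To make the role of the guidance scale concrete, here is the generic extrapolation form of classifier-free guidance as a minimal sketch. Which quantities iTARFlow guides, and the scale values it uses per patch size, are not given in this summary; the function name `cfg_combine` and the example vectors are purely illustrative.

```python
def cfg_combine(cond, uncond, scale):
    """Generic CFG rule: uncond + scale * (cond - uncond).

    scale = 0 returns the unconditional prediction, scale = 1 the conditional
    one, and scale > 1 extrapolates past the conditional prediction.
    """
    return [u + scale * (c - u) for c, u in zip(cond, uncond)]


cond_pred = [1.0, 2.0]    # illustrative class-conditional prediction
uncond_pred = [0.0, 4.0]  # illustrative unconditional prediction
for scale in (0.0, 1.0, 2.0):
    print(scale, cfg_combine(cond_pred, uncond_pred, scale))
```

Some papers write the same rule as `(1 + w) * cond - w * uncond`, which corresponds to `scale = 1 + w`, so reported scale values are only comparable once the convention is known.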

Quantitative Evaluation and Comparative Results

iTARFlow achieves state-of-the-art performance for normalizing flows on class-conditional ImageNet generation. On ImageNet-64, iTARFlow reports an FID of 1.68 with 770M parameters, which is on par with or better than several strong diffusion baselines at similar scale and a substantial improvement over the prior TARFlow (2.66 FID with 880M parameters). On ImageNet-128 and ImageNet-256, iTARFlow significantly narrows the gap to leading diffusion models and autoregressive transformers. The NF-specific artifacts of prior versions are no longer observed, apart from the two failure modes discussed below.

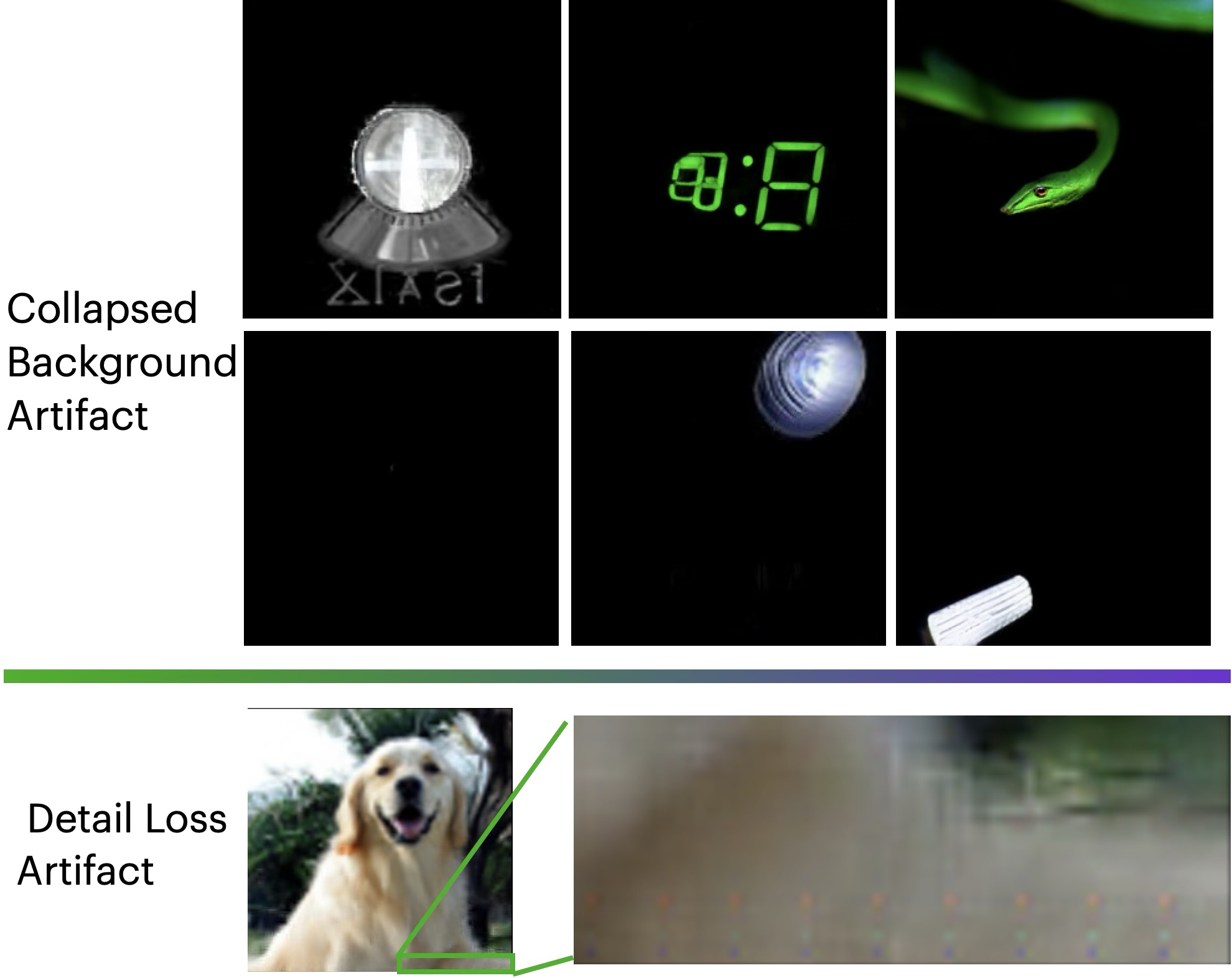

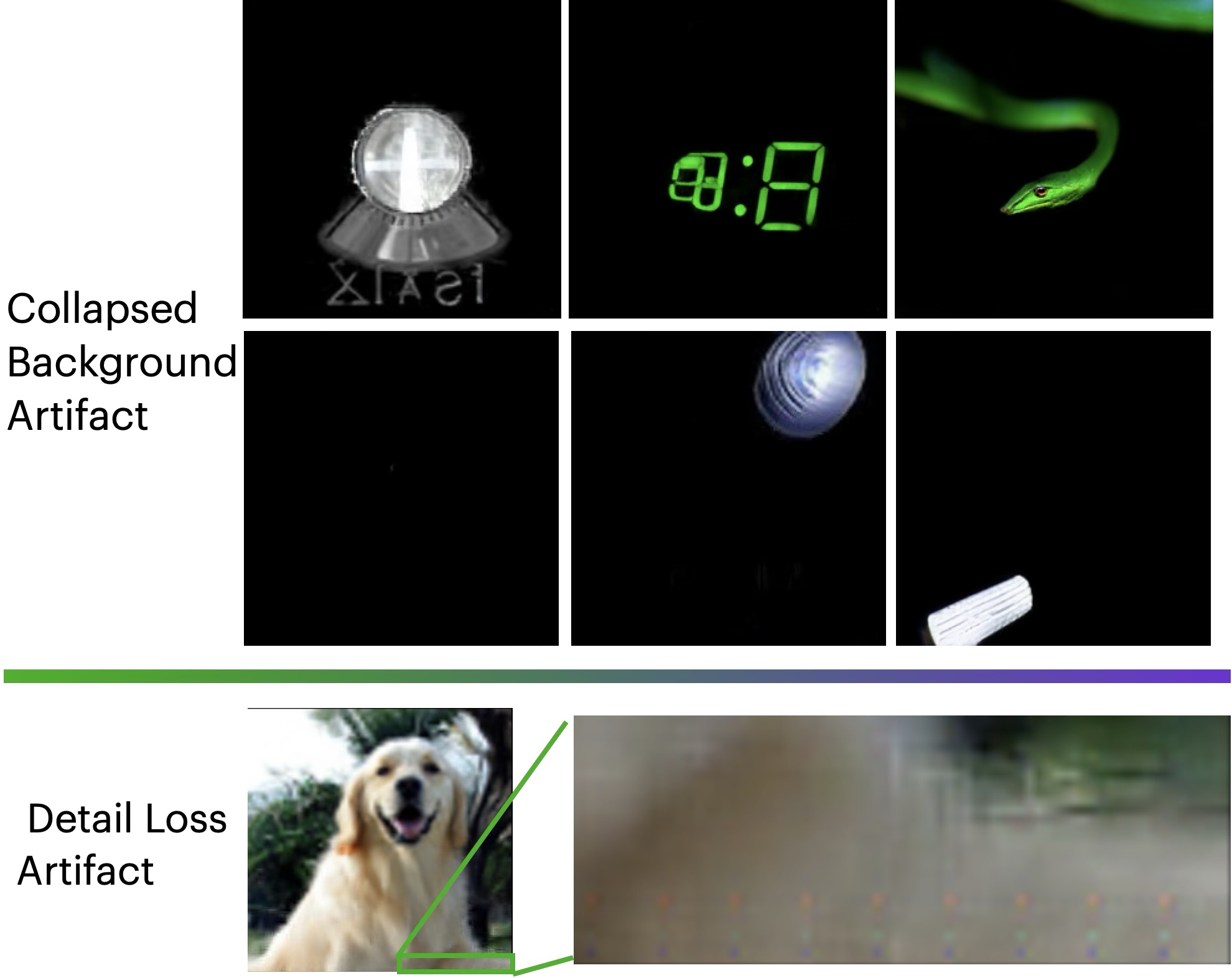

Model Failures and Generation Artifacts

Despite strong overall metrics, iTARFlow samples occasionally exhibit two failure modes: (1) collapsed backgrounds, where the entire image takes a uniform color (often black), and (2) spatially structured low-frequency blur that consistently appears in specific image regions, particularly the initial (bottom-right) patch. The blur arises because the first patch is generated without any preceding context, and the error then propagates through the rest of the autoregressive synthesis.

Figure 7: Artifacts in iTARFlow samples: (a) collapsed backgrounds (entire image black), (b) low-frequency blur, usually affecting the first autoregressive patch and propagating into the remainder of the sample.

The empirical analysis attributes collapse to CFG mis-calibration and blur to poor density alignment of the first autoregressive token. The latter is unsurprising given the high per-patch dimensionality and the absence of contextual information at that step.

Theoretical and Practical Implications

This work re-examines the paradigms of likelihood-based generative modeling, illustrating both the remaining limitations and the latent scaling power of autoregressive normalizing flows equipped with learned iterative denoising. The demonstrated compatibility between iTARFlow and DiT-based diffusion denoisers points toward unified hybrid generative architectures where Transformer-based normalizing flows can flexibly exploit pre-trained diffusion backbones. Further theoretical research will be required to formalize optimal variance scheduling, patch-size adaptation, and numerically stable denoising step discretizations.

Conclusion

iTARFlow demonstrates that normalizing flows, when trained across a range of noise scales and paired with iterative denoising, are competitive with leading diffusion models in terms of sample quality and likelihood evaluation for high-dimensional data. The approach not only mitigates classic normalizing flow pathologies (noise dilemma, detail oversharpening, sample collapse) but also opens the door to modular, hybrid generative pipelines. Remaining research directions include principled guidance for initial token generation, scalable autodiff for memory efficiency during denoising, and exploration of optimality in noise and guidance schedules. The work thus marks a substantive advance in the unification and enhancement of explicit-likelihood and denoising-centric generative models.