- The paper presents a computational framework that uses 20 visual and auditory features to quantify Message Sensation Value (MSV) and predict sensory and behavioral engagement in short videos.

- It employs supervised machine learning, notably a random forest, to reveal a linear MSV effect on sensory engagement and an inverted U-shaped influence on behavioral metrics.

- The findings provide actionable insights for content producers by demonstrating that moderate MSV optimizes user interactions across diverse platforms.

Computational Modeling of Message Sensation Value (MSV) in Short-Video Engagement

Introduction

The study presents a computational framework for modeling Message Sensation Value (MSV) from multimodal features in short videos. It addresses limitations of prior work, which relied on manual feature annotation and on handcrafted, domain-specific indices constructed for long-form content. Using a set of 20 visual and auditory features, the authors develop and validate a supervised machine learning pipeline for assessing how MSV predicts both sensory engagement (perceived MSV, PMSV) and behavioral engagement across multiple platforms and demographics. The work adds empirical evidence to theoretical debates by showing that MSV predicts sensory engagement linearly, whereas its effect on behavioral engagement is best characterized by an inverted U-shaped function, with moderate MSV maximizing user interactions.

Methodology

A total of 1,200 Instagram Reels videos on eight societal topics formed the core dataset. Visual features were extracted with OpenCV, the Google Cloud Video Intelligence API, and Face++, quantifying luminance, color properties, visual complexity, temporal shot structure, and facial attributes and expressions. Auditory features (loudness, tempo, brightness) were extracted with Librosa. Ground truth for perceived MSV (PMSV) was collected via large-scale human annotation (n=1,007 U.S. adults), in which annotators rated sensory arousal, dramatic impact, and novelty.
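To make the low-level visual features concrete, the following is a minimal sketch of three of them (luminance, color warmth, and shot boundaries) in plain Python. It is not the authors' extraction code, which relied on OpenCV and cloud APIs. The frame representation, the function names, the warmth index, and the cut threshold are all assumptions chosen for illustration. The Rec. 709 weights are applied directly to gamma-encoded channel values, so the result approximates luminance rather than measuring it exactly.

```python
from statistics import mean

# Assumption: a frame is a flat list of (R, G, B) tuples with 0-255 channels.
Frame = list[tuple[int, int, int]]


def mean_luminance(frame):
    """Average Rec. 709 weighted brightness of a frame, scaled to 0-1."""
    return mean((0.2126 * r + 0.7152 * g + 0.0722 * b) / 255 for r, g, b in frame)


def color_warmth(frame):
    """Hypothetical warmth index: mean of (R - B) / 255, ranging from -1 to 1."""
    return mean((r - b) / 255 for r, g, b in frame)


def shot_boundaries(frames, threshold=0.3):
    """Indices where mean luminance jumps by more than `threshold`.

    A crude stand-in for a real shot detector; the threshold is illustrative.
    """
    lum = [mean_luminance(f) for f in frames]
    return [i for i in range(1, len(lum)) if abs(lum[i] - lum[i - 1]) > threshold]
```

In this sketch, a shot count could be taken as `len(shot_boundaries(frames)) + 1`. A production pipeline would decode real video frames and use a more reliable cut detector.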

Supervised regression approaches (linear, random forest, XGBoost, SVM, etc.) were trained on five feature sets, with model selection based on mean squared error (MSE) and the Akaike information criterion (AIC) over train-test splits and k-fold cross-validation. Engagement metrics included not only sensory ratings but also aggregate behavioral data (views, likes, and comments). The best-performing model was a random forest using 11 core audiovisual features, without facial expression attributes.
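The selection procedure described above can be sketched as a comparison of cross-validated MSE across candidate models. The sketch below uses only the standard library, so two simple stand-in models (a mean baseline and a one-predictor least-squares line) take the place of the random forest, XGBoost, and SVM regressors named in the summary. All function names, the fold count, and the seed are assumptions, and the AIC criterion is not covered here.

```python
import random
from statistics import mean


def kfold_indices(n, k, seed=0):
    """Shuffle 0..n-1 and split the indices into k nearly equal folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def fit_mean(xs, ys):
    """Baseline model: always predict the training mean."""
    m = mean(ys)
    return lambda x: m


def fit_line(xs, ys):
    """Ordinary least squares with a single predictor."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return lambda x: my + slope * (x - mx)


def cv_mse(fit, xs, ys, k=5):
    """Mean held-out squared error, averaged over k folds."""
    fold_errors = []
    for fold in kfold_indices(len(xs), k):
        held = set(fold)
        train = [i for i in range(len(xs)) if i not in held]
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        fold_errors.append(mean((model(xs[i]) - ys[i]) ** 2 for i in fold))
    return mean(fold_errors)


def select_model(candidates, xs, ys, k=5):
    """Return the name of the candidate with the lowest cross-validated MSE."""
    scores = {name: cv_mse(fit, xs, ys, k) for name, fit in candidates.items()}
    return min(scores, key=scores.get), scores
```

Any fitting function that takes training data and returns a predictor can be added to `candidates`, which is how a tree ensemble from an external library would slot into the same comparison.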

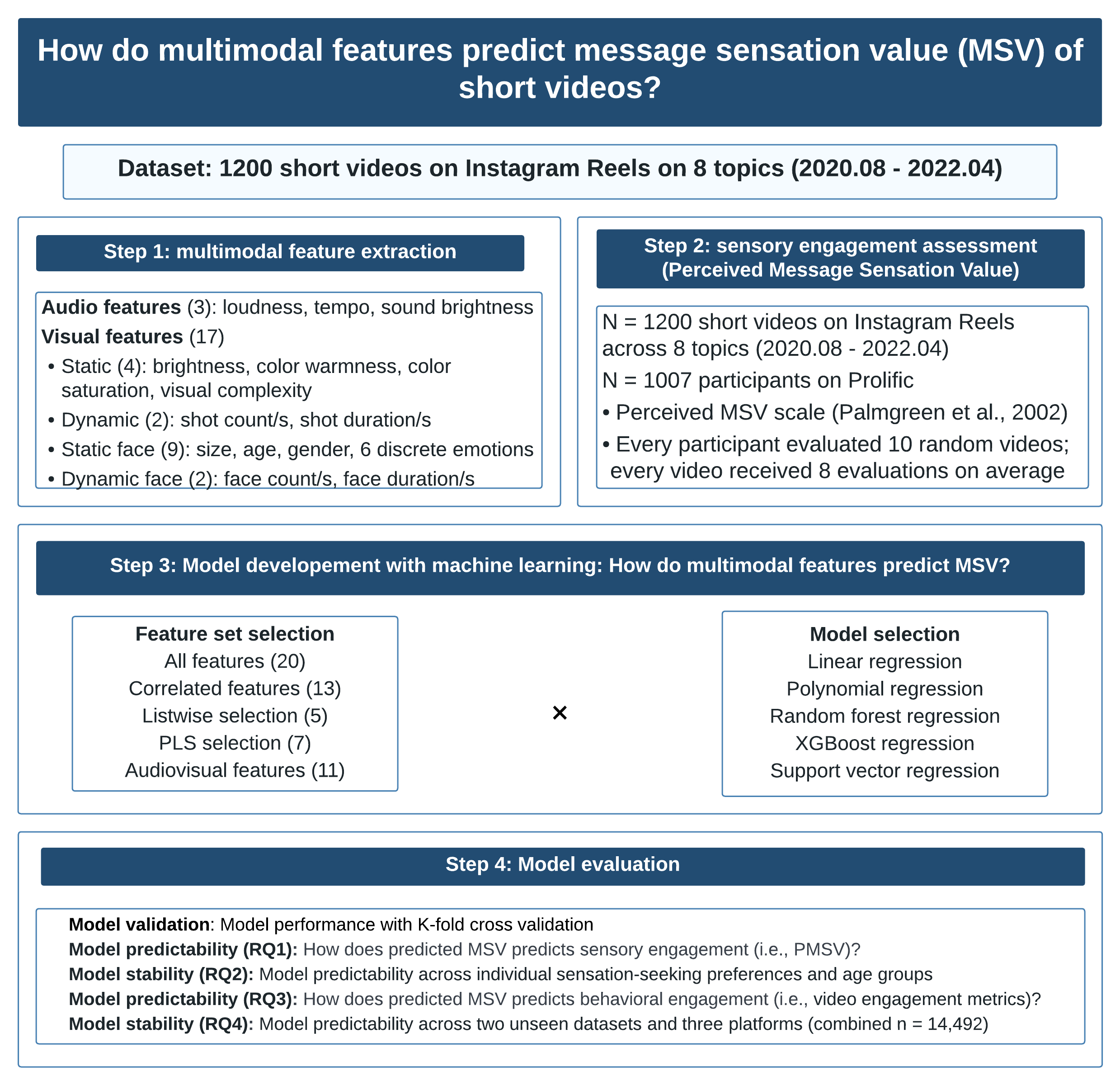

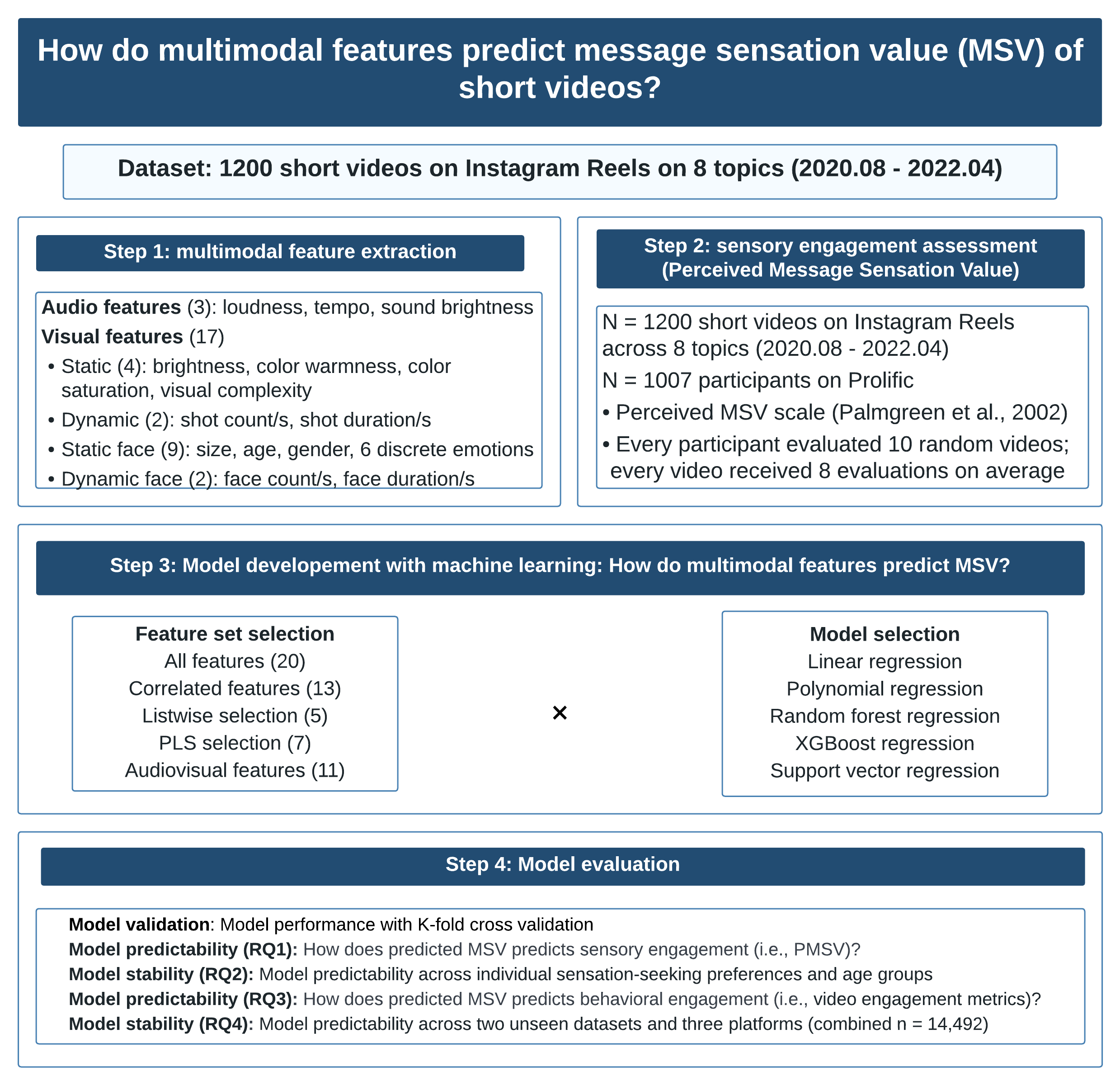

A schematic overview of procedures is presented below.

Figure 1: Flow diagram capturing feature extraction, annotation, model development, and evaluation steps in the computational MSV pipeline.

Results

Sensory Engagement Prediction

The computational MSV score showed a strong linear association with PMSV (b≈1.57, p<.001), and this relationship was robust across age and sensation-seeking strata. Higher MSV values corresponded to stronger sensory responses in every group (younger and older viewers; high and low sensation-seekers), with no evidence of population-specific moderation.
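One simple way to inspect whether a linear effect holds across strata is to fit the same least-squares line within each group and compare the slopes. The sketch below does this in plain Python. It is a simplified stand-in for a formal moderation test (which would typically use an interaction term), and the function names and the `(group, msv, pmsv)` row layout are assumptions.

```python
from statistics import mean


def ols(xs, ys):
    """Return (intercept, slope) of the least-squares line y = a + b * x."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return my - b * mx, b


def slopes_by_group(rows):
    """rows: iterable of (group, msv, pmsv) tuples. Returns {group: slope}."""
    grouped = {}
    for group, msv, pmsv in rows:
        xs, ys = grouped.setdefault(group, ([], []))
        xs.append(msv)
        ys.append(pmsv)
    return {g: ols(xs, ys)[1] for g, (xs, ys) in grouped.items()}
```

Similar slopes across groups are consistent with an absence of moderation, although they do not by themselves establish it.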

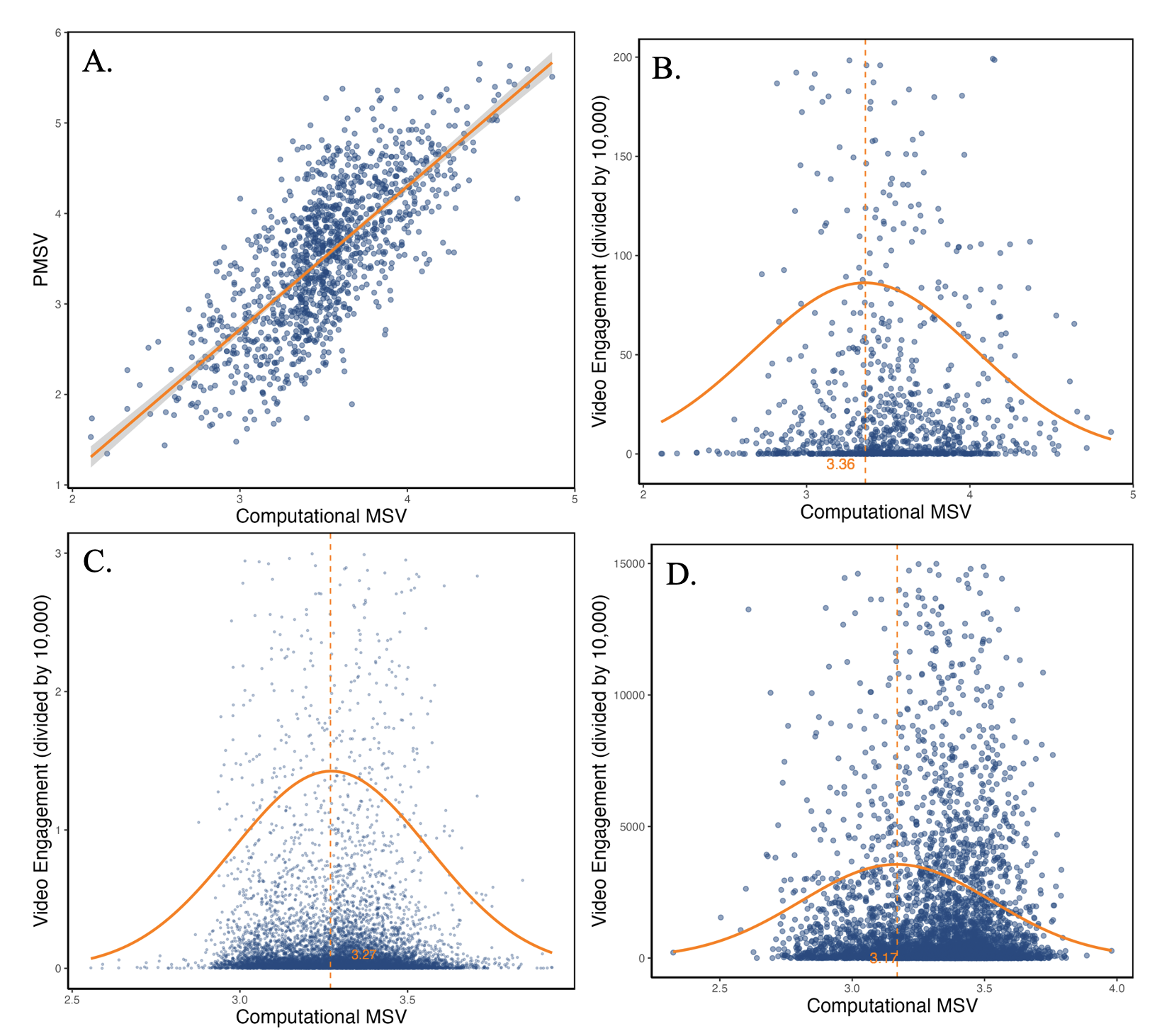

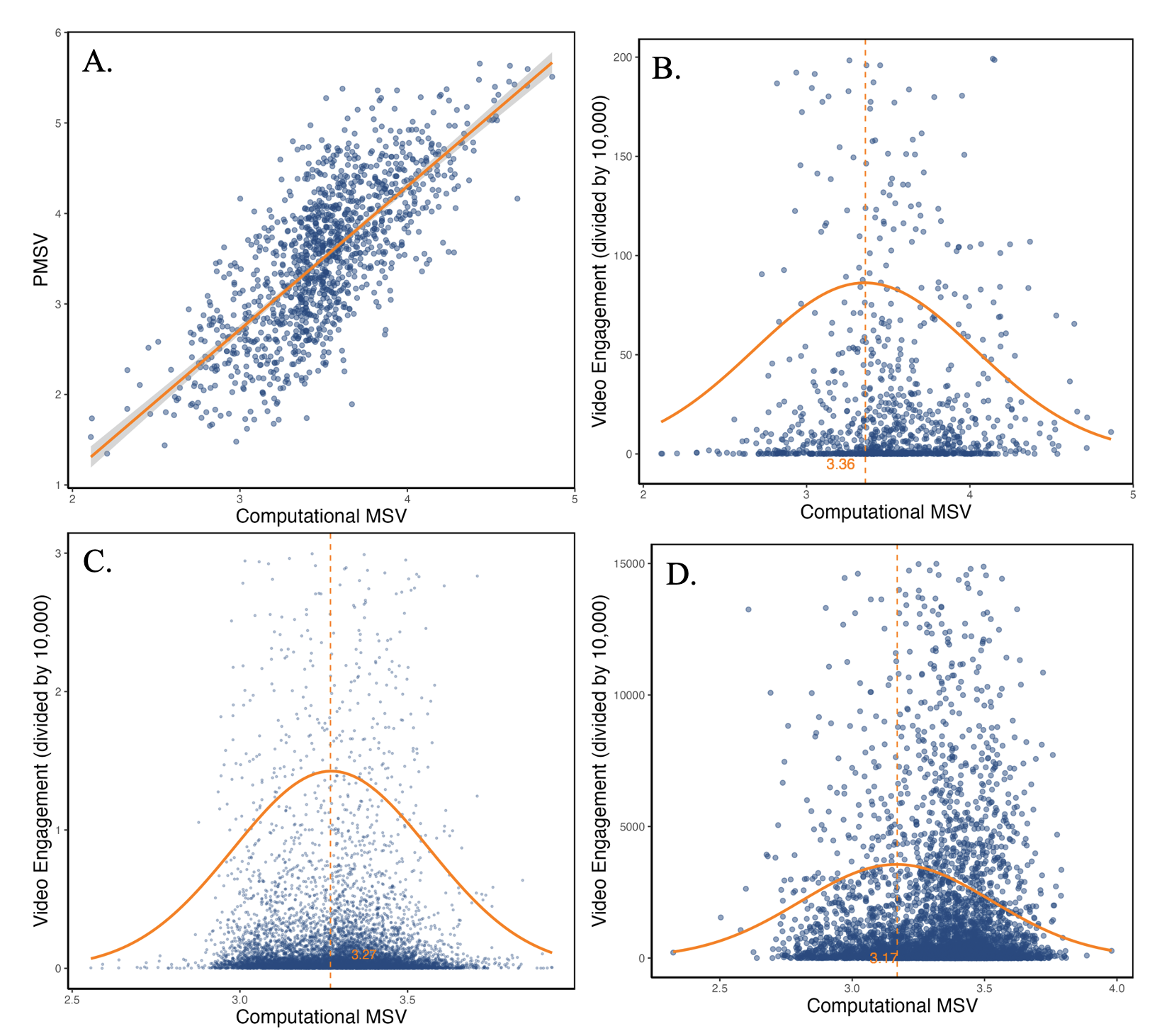

The following figure visualizes key associations:

Figure 2: Panel A illustrates a direct linear association between computational MSV and PMSV; Panel B shows the inverted U-shaped relationship between MSV and behavioral engagement, highlighting an optimal MSV (≈3.36); Panels C and D show the generalization of this effect in science-related and children’s video datasets.

Behavioral Engagement and Inverted U-Shape

For behavioral engagement, polynomial regression analyses revealed a robust inverted U-shaped relationship: moderate MSV consistently maximized behavioral interaction rates (likes, shares, comments), while both low and high MSV levels reduced engagement. The MSV value that maximized behavioral activity was approximately 3.36 in the training dataset, and approximately 3.27 and 3.17 in the external science and kids datasets. This pattern held across all platforms and content types examined, indicating that the model is stable as a generalized predictor.
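The optimum of an inverted U follows directly from a quadratic fit. If engagement is modeled as y = b0 + b1·x + b2·x² with b2 < 0, the curve peaks at x* = −b1 / (2·b2). The sketch below fits that quadratic by solving the least-squares normal equations in plain Python and returns the peak. It is a minimal illustration under the assumption of a second-degree polynomial; the summary does not state the exact polynomial specification or covariates the authors used, and the function names are hypothetical.

```python
def fit_quadratic(xs, ys):
    """Least-squares fit of y = b0 + b1*x + b2*x**2 via the normal equations."""
    # Power sums S[p] = sum(x**p) and moments T[p] = sum(y * x**p).
    S = [sum(x ** p for x in xs) for p in range(5)]
    T = [sum(y * x ** p for x, y in zip(xs, ys)) for p in range(3)]
    # Augmented 3x3 system: row i encodes sum_j S[i + j] * b_j = T[i].
    A = [[S[i + j] for j in range(3)] + [T[i]] for i in range(3)]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(3):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * c for a, c in zip(A[r], A[col])]
    return [A[i][3] / A[i][i] for i in range(3)]


def optimal_msv(b0, b1, b2):
    """Vertex of the fitted parabola; it is a maximum only when b2 < 0."""
    if b2 >= 0:
        raise ValueError("no interior maximum: curve is not an inverted U")
    return -b1 / (2 * b2)
```

Checking the sign of the quadratic coefficient before reporting a peak matters: with a non-negative b2 the vertex is a minimum (or does not exist), so the "optimal" value would be meaningless.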

Model Generalizability

Applying the model to two large external datasets (science and kids' videos on TikTok, Instagram, and YouTube Shorts; total N=14,492) replicated the central findings, including the critical inverted U-shaped MSV–engagement relationship. The model thus appears robust across content domains and platforms.

Theoretical and Practical Implications

The coexistence of a linear MSV–sensory engagement effect and an inverted U-shaped MSV–behavioral engagement effect provides data-driven constraints for theories that previously framed MSV either as universally attention-enhancing (the Activation Model of Information Exposure, AMIE, and the Limited Capacity Model of Motivated Mediated Message Processing, LC4MP) or as a distractor (the Elaboration Likelihood Model, ELM). The results point to the need for multi-stage cognitive models in which MSV plays distinct roles: the initial sensory response increases monotonically with MSV, while action-oriented behavioral engagement is triggered most effectively at moderate MSV.

On the practical side, the research offers a scalable, objective tool for real-time, automated MSV quantification in short video contexts, obviating the need for labor-intensive annotation. Content producers can optimize for moderate MSV to maximize engagement, balancing sensational elements to avoid overstimulation that would otherwise suppress behavioral response.

Limitations and Future Research

This work is limited by its focus on low-level perceptual features (e.g., shot count, color warmth); high-level semantic and compositional attributes, as well as platform- or culture-specific idiosyncrasies, may further refine MSV models. Experimental work is needed to establish the causal mechanisms underlying the observed inverted-U engagement curve. Future research should also leverage foundation multimodal models such as Veo and Sora for feature extraction, improve reproducibility via open-source tools, and examine algorithmically mediated feed environments to untangle content–algorithm interaction effects.

Conclusion

The computational model of MSV presented here is a validated, theory-driven pipeline for predicting how the sensory properties of short video content drive both immediate affective engagement and downstream behavioral interaction. Critically, the research clarifies that maximizing behavioral engagement requires moderating sensational features to avoid both under- and overstimulation. These insights offer actionable guidance for short-form content design and lay the groundwork for further theoretical integration, domain expansion, and data-driven content optimization.