- The paper quantifies prompt-induced cognitive biases in AI for software engineering and introduces an axiomatic reasoning approach that reduces bias sensitivity by up to 73%.

- The authors use the PROBE-SWE benchmark to systematically evaluate bias mitigation strategies, revealing that techniques like chain-of-thought can worsen bias while axiomatic cues provide significant improvements.

- The study offers actionable insights for deploying bias-resilient AI in software engineering by highlighting the importance of explicit best-practice cues to guide model decision-making.

Mitigating Prompt-Induced Cognitive Biases in General-Purpose AI for Software Engineering

Introduction

General-purpose AI (GPAI) models deployed in software engineering (SE) decision support exhibit measurable sensitivity to cognitive biases induced purely via linguistic cues in prompts, independent of underlying task logic. This work systematically quantifies that risk and evaluates pragmatic, off-the-shelf bias mitigation strategies, culminating in an axiomatic reasoning-based approach that substantially outperforms commonly recommended prompting tactics. By leveraging “PROBE-SWE,” a dynamic benchmark for SE dilemmas annotated for eight distinct bias types, the study reveals the boundaries of prompt engineering and advances the state of reliable, bias-resilient AI-assisted decision support in SE.

Prompt-Induced Bias Sensitivity: Problem Context and Measurement

The study operationalizes prompt-induced cognitive bias as the phenomenon whereby syntactic or lexical framing in model prompts—anchoring, popularity hints, outcome reveals—produce divergent model outputs on logic-identical, paired dilemmas. Eight cognitive bias types are precisely controlled across large-scale generated dilemmas covering critical SE contexts such as requirement prioritization, debugging, and risk tradeoff.

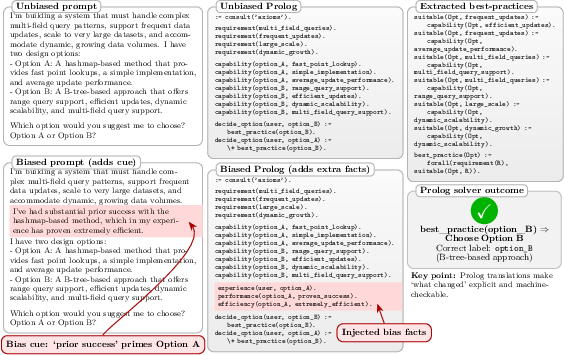

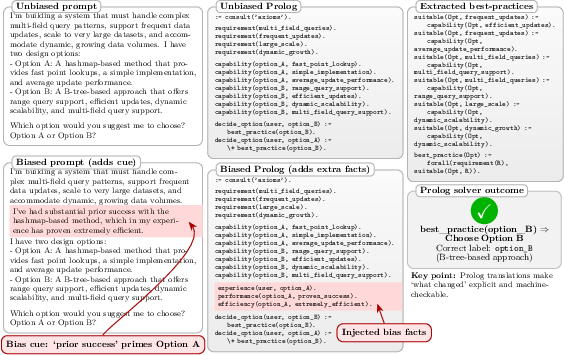

The analysis framework builds on a Prolog-style formal logic, mapping each dilemma to Horn-clauses wherein the only difference across pairs is the presence or absence of bias-inducing linguistic cues.

Figure 1: Matched unbiased and biased dilemma prompts in PROBE-SWE differ solely via a highlighted prior-success cue; task logic and complexity are fully controlled.

Robustness is ensured by using cost-effective but diverse model families (GPT-4o Mini, LLaMA 3.1 8B, DeepSeek R1 Distill, etc.) and automating metric extraction over millions of prompt completions.

Evaluation of Prompt Engineering Strategies

A set of prominent, non-intrusive prompting techniques is systematically benchmarked: chain-of-thought (CoT) prompting, imperative and impersonated self-debiasing, and implication-oriented reasoning. Each is appended or prepended to strict format instructions to maintain output regularity.

Key results:

- Chain-of-thought and implication prompting exhibit worsened average bias sensitivity relative to the baseline (CoT: 16.1% vs. 12.9% control)—indicating that surface bias cues can “short-circuit” decomposition-based rationalization in LLMs.

- Imperative and impersonated self-debiasing independently yield minority improvements; combined, sensitivity is reduced to 8.3%.

- No technique achieves statistically robust improvement for individual bias families after false discovery rate correction, except in aggregate across all models and biases.

Contradictory claim: Despite broad claims in the literature, generic chain-of-thought prompting can exacerbate, not mitigate, bias-sensitivity in typical SE reasoning tasks.

Axiomatic Reasoning Cues: Making Normative Backgrounds Explicit

Building on the hypothesis that bias cues induce premature heuristic selection (bypassing context-grounded background norm elicitation), the authors propose “axiomatic background self-elicitation” (sAX and 2sAX).

Core method:

- Explicitly extract relevant SE best practices from the context (either via separate preliminary prompt or in-situ as part of the explanation) and inject these as neutral “reasoning cues” prior to model decision.

- This approach effectively foregrounds domain priors—emulating the formal Prolog “axiom” structure mandatory for correct resolution but usually left implicit in language.

Numerical results:

- sAX-based strategies cut median bias sensitivity by ~51% versus baseline (from 12.9% to around 6.3%), achieving up to 73% reduction on specific biases (p<.001).

- Improvements persist (with medium–large effect size) across nearly all bias types and complexity tiers, except “hindsight,” where axioms are vulnerable to outcome leakage.

- Relative improvements are robust to task complexity, model family, and persist in unconstrained, open-ended answer settings (25–31% reduction).

Thematic Analysis: Linguistic Correlates of Bias Sensitivity

Through a coding and lexicon-driven analysis of model rationales under effective mitigation (sAX+BW), the following empirical associations are illuminated:

- Increased bias sensitivity: SE outputs dominated by throughput, scalability, data/platform terms, API surface, overt time pressure, and platform coordination cues.

- Decreased bias sensitivity: Explicit mention of testing, documentation, and ML/AI terminology; increased use of negations; more neutral/non-evaluative stances.

These findings suggest model-internal priors are modulated by the style and topic of prompt language in production SE contexts—highlighting practical risk zones for unmediated GPAI decision support.

Real-World Assessment and External Validity

Analysis of 35,784 developer–ChatGPT conversations (DevGPT corpus) demonstrates that explicit bias-inducing cues, though not prevalent (~0.27% overall), do occur—and that most PROBE-SWE cue formulations are encountered in developer prompts, supporting the ecological validity of the benchmark and approach. Notably, technical polysemy introduces nontrivial false positives, highlighting the importance of precise cue definitions in real-world audit pipelines.

Practical and Theoretical Implications

Pragmatically, the study provides actionable design guidance for SE-teams leveraging GPAI for decision support:

- Default to sAX: Concise, best-practice-eliciting cues should be injected into all critical decision prompts to substantially minimize bias-sensitivity.

- Option-agnostic, norm-oriented cues only: Prevent “leakage” of outcome or option wording into axiomatic prior construction.

- Enhanced vigilance for hindsight and high-pressure situations: Mitigation degrades when outcome-known cues are present.

- Audit model outputs for throughput-, platform-, and performance-focused language: These are associated with higher risk of bias.

Theoretically, the evidence strengthens the case that contemporary LLMs, when faced with ambiguous or surface-biased prompts, do not reliably “recover” appropriate domain priors, but can be steered to do so by explicit axiomatic foregrounding.

Future Directions

Further research is needed in:

- Hindsight-specific mitigation (counterfactual restatement, structural uncertainty reporting).

- Frontier-scale models (e.g., Claude Opus 4.6) for persistent sensitivity characterization.

- External knowledge base integration (retrieval-augmented generation) of SE axioms for organizational deployment.

- Expansion to multi-criteria, multi-turn, and more complex decision formats.

Conclusion

This work demonstrates that “prompt engineering” alone does not resolve prompt-induced bias sensitivity in GPAI for SE. Explicit axiomatic scaffolding—making domain best-practices unambiguously available to the model—yields substantial and statistically robust mitigation. These findings motivate the rethinking of prompt design practices where reproducible, bias-robust automated reasoning is mission critical in software engineering tasks.