- The paper demonstrates that fine-tuned TSFMs, such as ChronosX and Moirai, achieve lower CRPS and improved calibration compared to task-specific models.

- The paper shows that advanced feature engineering with models like NHITS+QRA can offer competitive forecasting performance at significantly reduced computational costs.

- The paper indicates that incorporating exogenous features and cross-market adaptation yields incremental benefits, underscoring the performance-efficiency trade-off in forecasting.

Introduction

The ongoing transition toward renewables-driven power systems has introduced significant volatility and uncertainty into electricity markets, substantially altering dispatch, market-clearing, and operational paradigms. Probabilistic electricity price forecasting (PEPF), that is, the modeling and prediction of full predictive distributions for day-ahead prices, has become fundamental for risk-aware decision making. This paper systematically benchmarks state-of-the-art task-specific deep learning models against Time Series Foundation Models (TSFMs), focusing on the German-Luxembourg (DE-LU) bidding zone, which is highly interconnected and data-rich. The study spans five axes: model performance, calibration, input feature group analysis, adaptation regimes, and computational efficiency.

Figure 1: Overview of the evaluated models, dataset splits, feature modalities, and experimental blocks (baselines, hyperparameter tuning, feature selection, x-shot adaptation).

Experimental Design

The evaluation protocol comprises four main experimental blocks:

- Model Comparison: Establishes baselines with classical and deep learning models (NHITS+QRA, Normalizing Flow) and compares them to TSFMs (Moirai, ChronosX) under both zero-shot and fine-tuned conditions.

- Hyperparameter Optimization: For task-specific models, multi-objective tuning (over CRPS, ECE, and ES) is performed to approximate Pareto-optimal configurations at multiple model scales.

- Feature Selection: Forward selection evaluates the incremental benefit of including market, supply, and cross-border features, contrasted with minimal calendar-based covariates.

- Cross-Market Transfer ("x-shot"): Zero-shot, one-shot, and few-shot scenarios probe the marginal value of market-specific adaptation in DE-LU after cross-border pretraining.
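The notion of Pareto-optimality used in the hyperparameter optimization block can be illustrated with a small filter over trial results. This is a minimal sketch, not the paper's tuning code: the function names, the dictionary layout, and the metric values are all hypothetical, and lower is assumed to be better for CRPS, ECE, and ES.

```python
from typing import Dict, List, Sequence


def dominates(a: Dict[str, float], b: Dict[str, float], keys: Sequence[str]) -> bool:
    """True if `a` is no worse than `b` on every metric and strictly better on one.

    All metrics are treated as "lower is better".
    """
    return all(a[k] <= b[k] for k in keys) and any(a[k] < b[k] for k in keys)


def pareto_front(trials: List[dict], keys: Sequence[str] = ("crps", "ece", "es")) -> List[dict]:
    """Keep only the trials that no other trial dominates."""
    return [
        t for t in trials
        if not any(dominates(other, t, keys) for other in trials if other is not t)
    ]


# Made-up illustrative values, NOT results from the paper.
trials = [
    {"name": "a", "crps": 16.9, "ece": 0.030, "es": 95.0},
    {"name": "b", "crps": 17.4, "ece": 0.020, "es": 97.0},
    {"name": "c", "crps": 17.5, "ece": 0.035, "es": 98.0},  # worse than "a" everywhere
]
front = pareto_front(trials)
print([t["name"] for t in front])
```

Trials "a" and "b" trade CRPS against ECE, so neither dominates the other and both stay on the front, while "c" is dropped.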

The datasets include DE-LU and 14 adjacent European zones, with 2018–2022 for training, 2023 for validation, and 2024 for test. The input horizon is fixed at 168 hours and the prediction target is 24 hourly prices.
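The split and windowing described above can be sketched as follows. The sketch assumes a gap-free hourly series in a fixed-offset time zone (so every day has 24 hours), one forecast origin per day, and assignment of each window to a split by the year of its first target hour; none of these details are confirmed by the summary, and `split_of` and `make_windows` are hypothetical helper names.

```python
from datetime import datetime, timedelta

CONTEXT_HOURS = 168  # one week of hourly history as model input
HORIZON_HOURS = 24   # one day of hourly prices as prediction target


def split_of(ts: datetime):
    """Map a timestamp to train/val/test using the year ranges from the text."""
    if 2018 <= ts.year <= 2022:
        return "train"
    if ts.year == 2023:
        return "val"
    if ts.year == 2024:
        return "test"
    return None


def make_windows(timestamps, prices, context=CONTEXT_HOURS, horizon=HORIZON_HOURS):
    """Yield (split, context_prices, target_prices), advancing one day at a time."""
    for start in range(context, len(prices) - horizon + 1, 24):
        yield (
            split_of(timestamps[start]),
            prices[start - context:start],
            prices[start:start + horizon],
        )


# Tiny synthetic series that crosses the 2022/2023 boundary.
t0 = datetime(2022, 12, 20)
timestamps = [t0 + timedelta(hours=h) for h in range(24 * 20)]
prices = [float(h) for h in range(24 * 20)]  # placeholder values, not real prices
for split, ctx, tgt in make_windows(timestamps, prices):
    print(split, len(ctx), len(tgt))
```

Note that windows near a split boundary draw their context from the preceding year; whether the paper handles boundaries this way is not stated.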

Figure 2: Annual average day-ahead spot prices across European bidding zones in 2024, illustrating substantial spatial variation and cross-border correlation structures.

Model Architectures

Task-Specific Methods

- NHITS+QRA: NHITS, a deep, hierarchical forecaster, outputs deterministic trajectories which are post-processed using Quantile Regression Averaging (QRA) to produce calibrated, monotonic quantile estimates. Calibration is enhanced by leveraging stochastic weight realizations via SWAG or MC dropout.

- Transformer-Conditioned Normalizing Flow (NF): A Transformer encoder summarizes the lagged prices and exogenous features, outputting context vectors conditioning masked autoregressive flows (MAF) to parametrize a flexible, high-dimensional density for 24-hour price vectors.
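Quantile regression, the building block of QRA, fits each quantile level by minimizing the pinball (quantile) loss. Because independently fitted levels can cross, sorting the predicted quantiles is one common way to restore monotonicity. The sketch below shows only these two ingredients; the summary does not say how the paper enforces monotonicity, so the sorting step is an assumption, and both function names are hypothetical.

```python
def pinball_loss(y: float, q_pred: float, tau: float) -> float:
    """Pinball loss of predicting quantile `q_pred` at level `tau` when `y` is observed."""
    diff = y - q_pred
    # Under-prediction (diff > 0) is weighted by tau, over-prediction by (1 - tau).
    return max(tau * diff, (tau - 1.0) * diff)


def sort_quantiles(q_preds):
    """Rearrange predicted quantiles (ordered by level) so they are non-decreasing."""
    return sorted(q_preds)


# For the 0.9 quantile, under-predicting by 2 costs far more than over-predicting by 2.
print(pinball_loss(10.0, 8.0, 0.9))
print(pinball_loss(10.0, 12.0, 0.9))

# Crossing quantiles for levels 0.1, 0.5, 0.9 are repaired by sorting.
print(sort_quantiles([42.0, 40.5, 55.0]))
```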

Foundation Models

- Moirai: An encoder-only Transformer with variable patching scales to accommodate diverse temporal resolutions, simultaneously attending across autoregressive and exogenous (calendar, market, supply) variables, and outputting probabilistic mixture distributions.

- ChronosX: An autoregressive, quantized forecasting Transformer built on T5; ChronosX leverages adapters to incorporate exogenous features during fine-tuning, providing a bridge from language-modeling paradigms to regression with complete probabilistic generative capabilities.

Results

TSFMs exhibit robust zero-shot performance: Moirai (Base) achieves a CRPS of 16.63, ChronosX (Large) 15.76. These results consistently outperform classical baselines (e.g., Same-Hour/28d empirical with CRPS ≈ 19.9).
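For readers unfamiliar with the metric, CRPS for a single target can be estimated from forecast samples as E|X - y| - 0.5 E|X - X'|, where X and X' are independent draws from the predictive distribution. The sketch below implements this standard sample-based estimator; how the paper aggregates CRPS over hours and days is not stated in the summary, and `crps_from_samples` is a hypothetical name.

```python
def crps_from_samples(samples, y: float) -> float:
    """Sample-based CRPS estimate: E|X - y| - 0.5 * E|X - X'| (lower is better)."""
    n = len(samples)
    # Average distance between forecast samples and the observation.
    term_obs = sum(abs(x - y) for x in samples) / n
    # Average pairwise distance between samples (rewards sharpness when subtracted).
    term_spread = sum(abs(a - b) for a in samples for b in samples) / (n * n)
    return term_obs - 0.5 * term_spread


# With a single sample the estimate reduces to the absolute error.
print(crps_from_samples([5.0], 7.0))
# Two samples bracketing the observation.
print(crps_from_samples([0.0, 2.0], 1.0))
```

The pairwise term makes this O(n²) in the number of samples, which is fine for a sketch but worth replacing with a sorted-sample formulation for large ensembles.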

Fine-tuning Gains

Fine-tuned TSFMs deliver the strongest performance: for DE-LU, ChronosX (Large) achieves 13.63 and Moirai (Base) 14.22 CRPS. However, well-configured NHITS+QRA is highly competitive, reaching 16.87 (after tuning) and 15.98 (with feature augmentation), and for certain feature combinations even matches or slightly surpasses TSFMs (see Table below).

| Model | Size | Test CRPS (Fine-Tuned) |
| --- | --- | --- |
| ChronosX | Large | 13.63 |
| Moirai | Base | 14.22 |
| NHITS+QRA | Tiny | 16.87 (15.98 with exogenous features) |
| Normalizing Flow | Small | 24.23 |

Notably, increasing model size for TSFMs shows diminishing returns, and even small NHITS+QRA configurations are competitive in CRPS and ES.
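The energy score (ES) generalizes CRPS to multivariate targets such as the 24-dimensional daily price vector by replacing absolute differences with Euclidean norms. A minimal sample-based sketch follows; the exact estimator and any scaling used in the paper are not given in the summary, and `energy_score` is a hypothetical name.

```python
import math


def energy_score(samples, y) -> float:
    """Sample-based energy score: E||X - y|| - 0.5 * E||X - X'|| (lower is better).

    `samples` is a list of forecast vectors; `y` is the observed vector.
    """
    n = len(samples)
    term_obs = sum(math.dist(x, y) for x in samples) / n
    term_spread = sum(math.dist(a, b) for a in samples for b in samples) / (n * n)
    return term_obs - 0.5 * term_spread


# Degenerate forecast at the origin, observation at (3, 4): the score is the distance.
print(energy_score([[0.0, 0.0], [0.0, 0.0]], [3.0, 4.0]))
# In one dimension the energy score coincides with the sample-based CRPS.
print(energy_score([[0.0], [2.0]], [1.0]))
```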

NHITS+QRA demonstrates sensitivity to feature selection: exogenous market and supply variables incrementally and significantly lower CRPS, down to 15.98. Conversely, for TSFMs, adding covariates occasionally degrades CRPS, emphasizing their limited marginal value beyond large-scale pretraining in this context.

Cross-Market Adaptation (x-shot)

- NF: Marked improvement as more DE-LU specific samples are incorporated; CRPS drops from 22.92 (zero-shot) to 19.97 (few-shot).

- NHITS+QRA: Stable CRPS across x-shot regimes (≈13.5–13.6), implying strong baseline generalization with minimal dependence on cross-market adaptation.

- Moirai: CRPS changes by < 0.4 points across adaptation regimes, remaining above fine-tuned reference levels, indicating little adaptation gain.

This result underscores that for large, interconnected markets (like DE-LU), data-limited downstream adaptation may not confer significant gains for TSFMs.

Distributional and Calibration Properties

TSFMs (especially fine-tuned ChronosX and Moirai) consistently achieve the best calibration metrics (ECE), but NHITS+QRA with SWAG remains competitive, producing well-calibrated predictive intervals. Both families capture relevant distributional features such as spikes, heavy tails, and regime shifts, but TSFMs provide tighter calibration and sharper intervals.
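One common way to quantify calibration for quantile forecasts is to compare each nominal level with the empirical frequency at which observations fall at or below the predicted quantile, then average the absolute gaps. The sketch below uses that definition as an assumption; the summary does not spell out the paper's exact ECE formula, and `quantile_calibration_error` is a hypothetical name.

```python
def quantile_calibration_error(quantile_preds, observations, levels) -> float:
    """Mean absolute gap between nominal quantile levels and empirical coverage.

    quantile_preds[i][j] is the predicted quantile at levels[j] for observation i.
    A perfectly calibrated forecaster scores 0.
    """
    n = len(observations)
    gaps = []
    for j, tau in enumerate(levels):
        # Fraction of observations at or below the predicted tau-quantile.
        hits = sum(1 for i in range(n) if observations[i] <= quantile_preds[i][j])
        gaps.append(abs(hits / n - tau))
    return sum(gaps) / len(levels)


# Median forecasts of 1.0: half the observations fall below, so the error is zero.
print(quantile_calibration_error([[1.0]] * 4, [0.0, 0.0, 2.0, 2.0], [0.5]))
# Three of four observations fall below the predicted median: gap of 0.25.
print(quantile_calibration_error([[1.0]] * 4, [0.0, 0.0, 0.0, 2.0], [0.5]))
```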

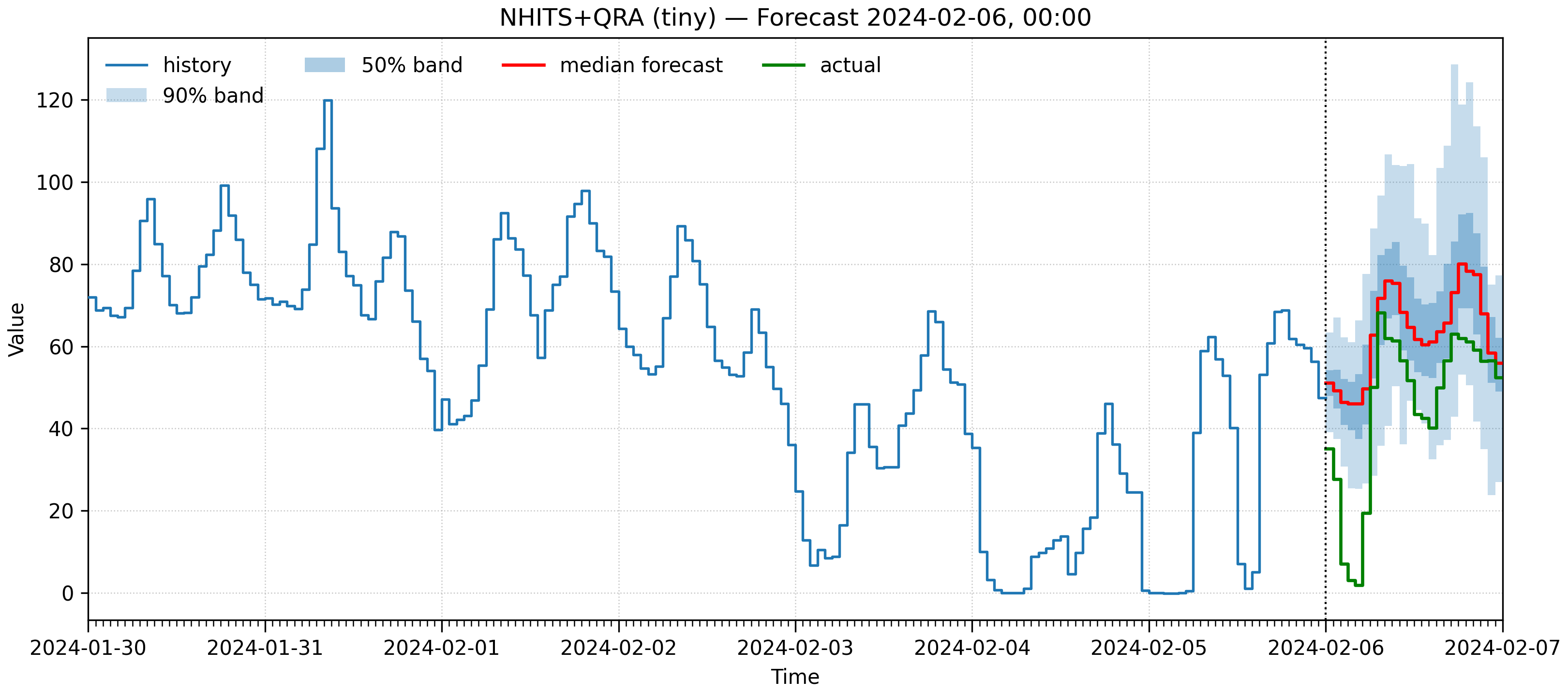

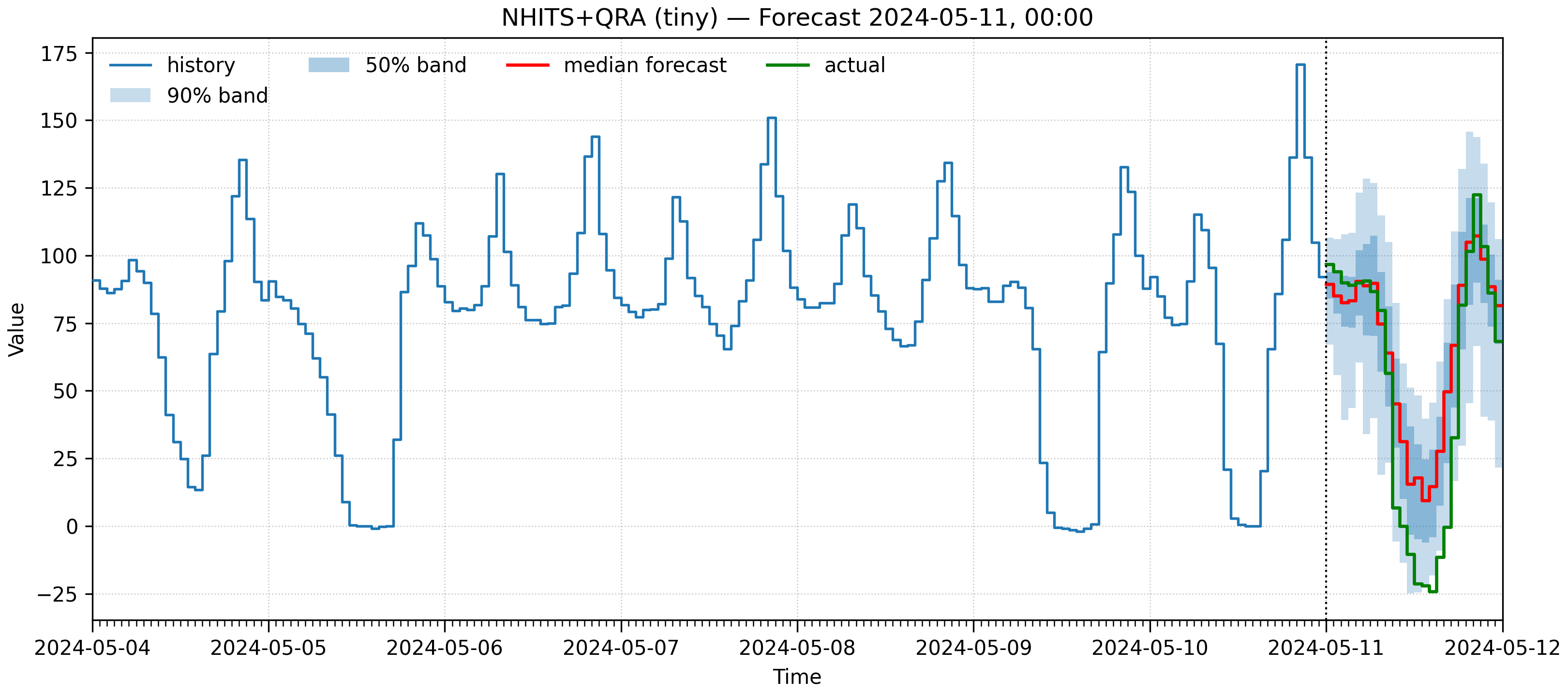

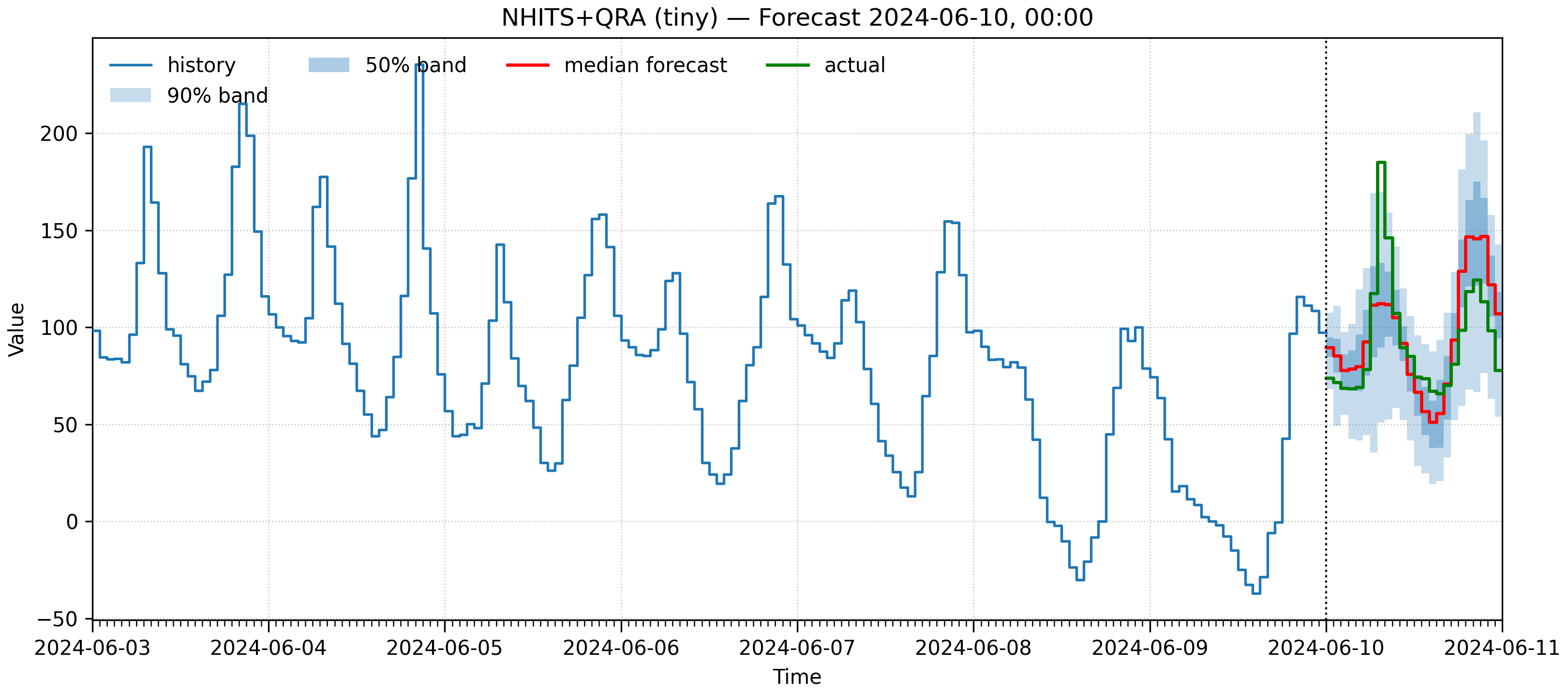

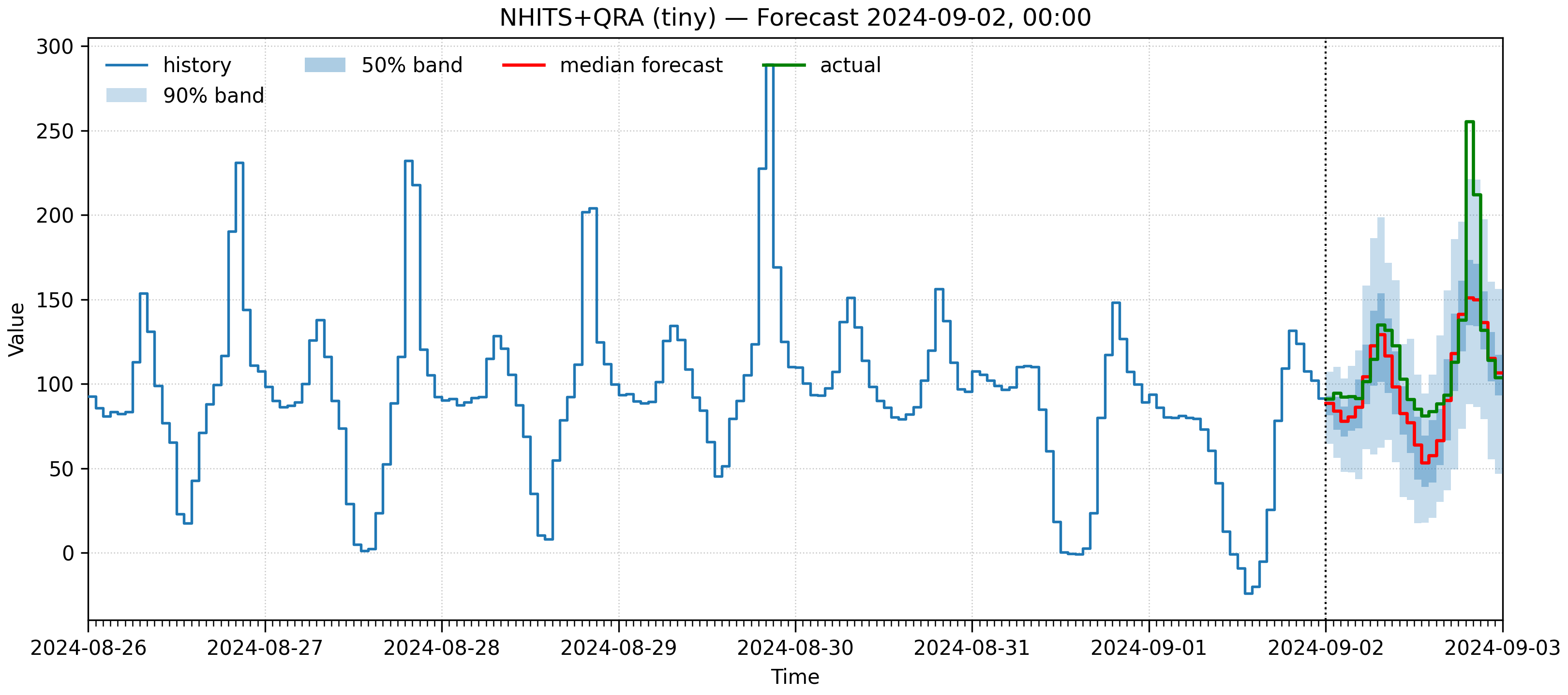

Figure 3: NHITS+QRA fan charts for few-shot cross-border experiments, visualizing probabilistic interval coverage and adaptivity to observed price distributions.

Computational and Practical Considerations

Computational footprint varies widely:

- TSFMs require substantially higher storage, inference time, and device memory—especially in large-scale, full fine-tuning scenarios.

- NHITS+QRA is efficient, requiring an order of magnitude less compute/energy for similar performance.

- For high-frequency, privacy-sensitive, or resource-constrained applications, tuned task-specific models are more pragmatic.

Additional advantages of non-TSFM approaches include easier control over end-to-end data pipelines (potentially providing enhanced privacy and reduced risk of systemic data leakage).

Implications and Forward Directions

From a theoretical perspective, these findings argue that the inductive bias and transferability of TSFMs—while enabling strong baseline performance and superior calibration—do not categorically outperform smaller, tuned models, especially when domain knowledge is encoded via feature engineering or post-processing ensembles (as in QRA). In operational terms, the marginal value of TSFM adoption must be justified against substantially increased resource and infrastructure costs.

For future work, several directions stand out:

- Loss Function Innovation: Exploring novel calibration-focused or scenario-weighted losses to further improve distributional robustness.

- Cross-domain Pretraining: Curating domain-specific corpora for TSFM pretraining, including coherent representations of market coupling, regulatory events, and renewables-induced nonstationarities.

- Feature Leveraging in Foundation Models: Designing TSFM architectures or adaptation schemes that fully capitalize on detailed, structured exogenous drivers.

- Next-Generation TSFMs: Investigating models such as Chronos-2, Moirai 2.0, and Moirai-MoE, which promise improved covariate-handling and more efficient scaling properties.

Conclusion

On the challenging task of day-ahead probabilistic electricity price forecasting, TSFMs (Moirai, ChronosX) consistently match or slightly surpass strong task-specific deep learners (NHITS+QRA, NF) in CRPS, energy score, and interval calibration—but with markedly higher computational requirements. The marginal performance advantage of TSFMs must therefore be balanced against efficiency, especially when conventional models, augmented with advanced quantile post-processing and careful feature selection, remain highly competitive. The work highlights the critical need for careful task-model matching, explicit cost-performance assessment, and continuous methodological innovation to meet the evolving requirements of renewable-integrated power markets.

(Referenced paper: "Assessing the Performance-Efficiency Trade-off of Foundation Models in Probabilistic Electricity Price Forecasting" (2604.14739))