Lectures on AI for Mathematics

Abstract: This book provides a comprehensive and accessible introduction to the emerging field of AI for mathematics. It covers the core principles and diverse applications of using artificial intelligence to advance mathematical research. Through clear explanations, the text explores how AI can discover hidden mathematical patterns, assist in proving complicated theorems, and even construct counterexamples to challenge conjectures.

Summary

- The paper presents a comprehensive framework that connects AI methods, including deep learning, automated theorem proving (ATP), and reinforcement learning (RL), with core mathematical tasks such as discovery, proof, and counterexample construction.

- It details the use of deep neural networks, transformer-based models, and reinforcement learning to uncover novel patterns in domains like knot theory and combinatorics.

- The research emphasizes human-AI collaboration, reshaping mathematical epistemology by integrating automated verification and generative techniques into traditional mathematical practices.

Lectures on AI for Mathematics: An Expert Synthesis

Introduction and Scope

"Lectures on AI for Mathematics" (2604.11504) presents a comprehensive, technically rigorous framework connecting AI methods to core problems in mathematical research. The monograph systematically addresses how advances in machine learning (ML), deep neural architectures, reinforcement learning (RL), automated theorem proving (ATP), and generative models both support and transform discovery, proof, and refutation in mathematics. The authors take a problem-centric approach, consistently analyzing how specific mathematical challenges map to distinct AI techniques and methodologies.

Mathematical Practice Reframed by AI

Mathematics, as defined in the text, comprises cyclically interacting modes: discovery of patterns, formal proof, and counterexample construction. AI's strengths differ in each mode:

- Discovery: AI leverages high-dimensional statistical pattern recognition to discern non-intuitive relationships in mathematical data, as demonstrated in knot theory and algorithmic optimization.

- Proof: ATP and neuro-symbolic systems combine large-scale formal libraries (e.g., Mathlib for Lean) with heuristic guidance from large language models (LLMs) to automate and accelerate formal deduction.

- Counterexample generation: Reinforcement learning and evolutionary methods efficiently search vast, combinatorial spaces, finding explicit objects that refute conjectures more systematically than manual methods.

This structural analysis demonstrates that AI is not merely a computational accelerator but constitutes a new methodological layer for mathematical epistemology.

Core Enabling Technologies

Automated Theorem Proving and Formalized Mathematical Libraries

ATP has progressed from brute-force enumeration to integration with proof assistants (Coq, Isabelle, Lean) and interactive theorem proving, where a small trusted kernel checks every inference step and thereby guarantees logical soundness. The emergence of large, machine-verifiable mathematical libraries enables data-driven approaches and underpins hybrid systems in which neural models suggest tactics or proof paths that are then filtered and certified by the formal kernel.
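As a minimal illustration of a kernel-checked proof (a sketch, not an example taken from the monograph), the Lean 4 snippet below states commutativity of addition on the natural numbers and closes the goal with the core lemma `Nat.add_comm`. The theorem name `add_comm_sketch` is hypothetical. In a hybrid system of the kind described above, a neural model would propose the tactic or proof term, and the proof would be accepted only if Lean's kernel type-checks it.

```lean
-- Hypothetical theorem name; `Nat.add_comm` is a lemma from Lean 4 core.
theorem add_comm_sketch (a b : Nat) : a + b = b + a := by
  exact Nat.add_comm a b
```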

Deep Neural Networks and Representation Learning

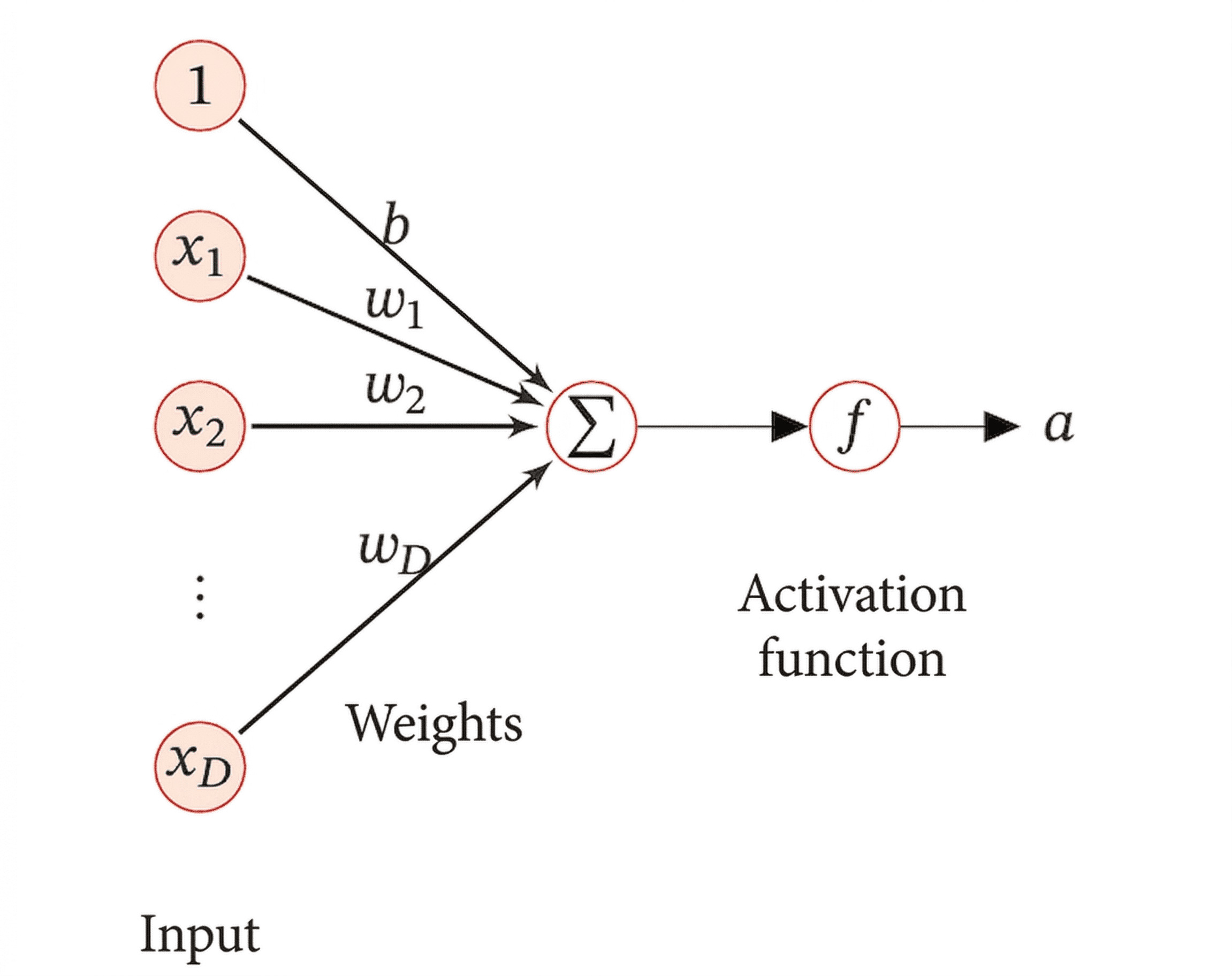

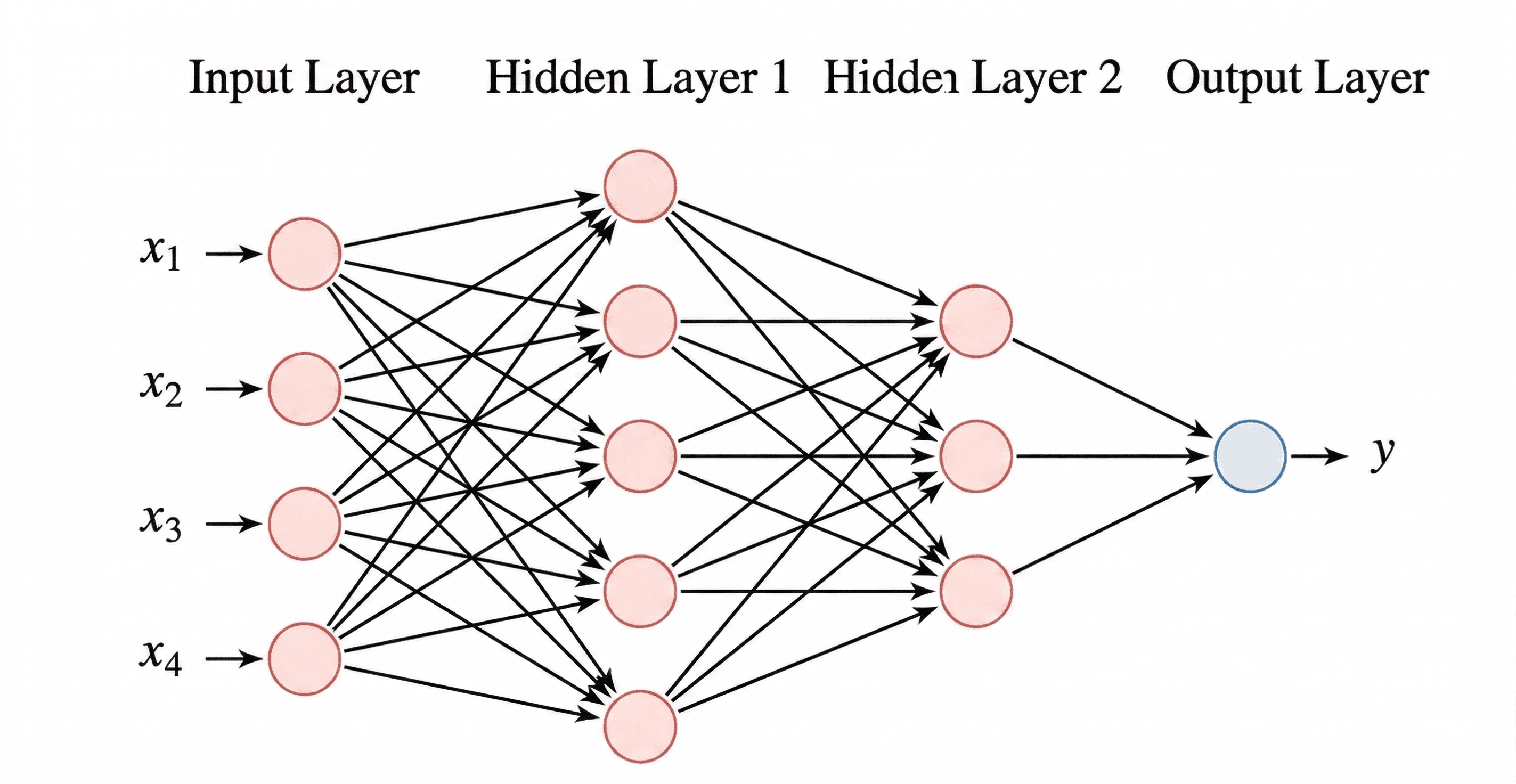

Deep networks, especially transformers, serve as universal nonlinear function approximators and encode mathematical structures as vectors, supporting both discovery and metrics-based problem mapping. Models such as AlphaTensor and AlphaEvolve (see below) leverage the compositionality and high expressivity of these architectures.

Figure 1: Artificial neuron structure—the atomic computational unit underpinning deep learning approaches to pattern extraction and function approximation.

Figure 2: Feedforward neural network architecture enables multivariate nonlinear regression and classification—essential for modeling complex mathematical object-to-invariant mappings.
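The neuron of Figure 1 and the feedforward network of Figure 2 can be sketched in a few lines of dependency-free Python. This is a minimal illustration under stated assumptions: the ReLU activation, the layer sizes, and the random weights are illustrative choices, not details from the monograph.

```python
import random

def relu(z):
    return max(0.0, z)

def neuron(x, w, b):
    """Single artificial neuron: weighted sum of inputs plus bias, then ReLU."""
    return relu(sum(wi * xi for wi, xi in zip(w, x)) + b)

def feedforward(x, layers):
    """Forward pass through a list of (W, b) layers.

    W is a list of weight rows, one per unit. Hidden layers apply ReLU;
    the final layer is left linear, as is usual for regression outputs.
    """
    h = x
    for i, (W, b) in enumerate(layers):
        z = [sum(wij * hj for wij, hj in zip(row, h)) + bi
             for row, bi in zip(W, b)]
        h = z if i == len(layers) - 1 else [relu(v) for v in z]
    return h

rng = random.Random(0)
# Illustrative sizes: 3 input features -> 4 hidden units -> 1 output.
layers = [
    ([[rng.gauss(0, 1) for _ in range(3)] for _ in range(4)], [0.0] * 4),
    ([[rng.gauss(0, 1) for _ in range(4)]], [0.0]),
]
y = feedforward([0.5, -1.0, 2.0], layers)
```

In an object-to-invariant setting, the input vector would hold numerical features of a mathematical object and the output would be the predicted invariant; training (omitted here) would adjust the weights by gradient descent.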

Reinforcement Learning and Combinatorial Construction

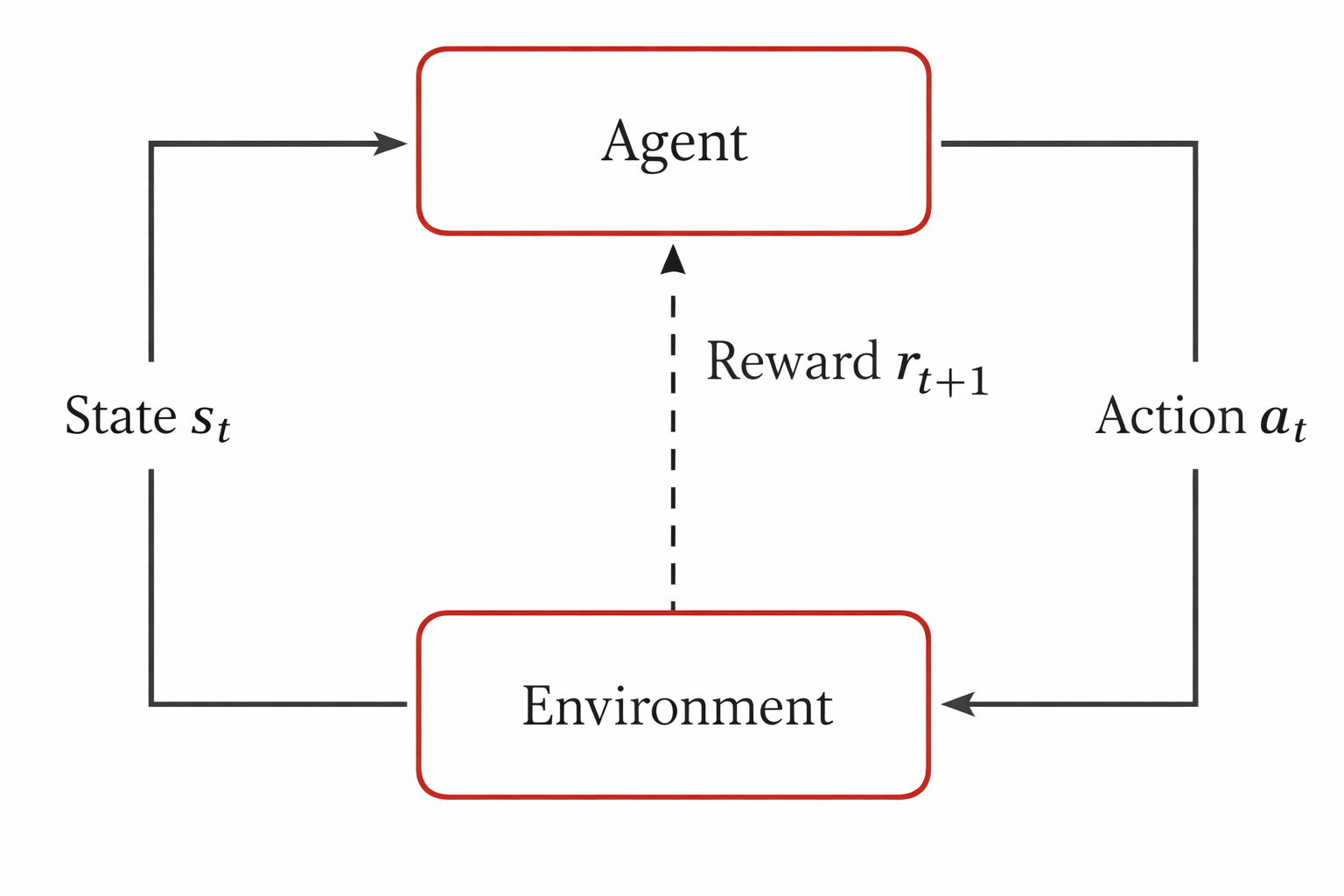

RL methods recast mathematical object construction as sequential decision processes in massive action-state spaces.

Figure 3: Standard agent-environment RL interaction loop—a key abstraction for algorithmic and structural search in combinatorics and function space.

Policy search, Monte Carlo tree search (MCTS), policy gradients, and program-evolution methods are now used to find combinatorial counterexamples and improved constructions that had eluded manual search.
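The agent-environment loop of Figure 3, applied to object construction, might look as follows. This is a toy sketch: the class `ConstructionEnv`, the terminal reward (number of ones), and the random policy are all hypothetical placeholders for a real mathematical objective and a learned policy.

```python
import random

class ConstructionEnv:
    """Toy environment: build a 0/1 sequence one entry at a time.

    The state is the partial sequence, an action appends 0 or 1, and a
    terminal reward scores the finished object (here the number of ones,
    a placeholder for a real mathematical objective).
    """

    def __init__(self, length):
        self.length = length
        self.state = []

    def reset(self):
        self.state = []
        return tuple(self.state)

    def step(self, action):
        self.state.append(action)
        done = len(self.state) == self.length
        reward = float(sum(self.state)) if done else 0.0
        return tuple(self.state), reward, done

def run_episode(env, policy):
    """Standard interaction loop: observe the state, act, receive a reward."""
    state, done, total = env.reset(), False, 0.0
    while not done:
        action = policy(state)
        state, reward, done = env.step(action)
        total += reward
    return state, total

rng = random.Random(0)

def random_policy(state):
    """Untrained baseline policy: ignore the state and pick 0 or 1 uniformly."""
    return rng.randint(0, 1)

final_state, episode_return = run_episode(ConstructionEnv(8), random_policy)
```

A learning method would replace `random_policy` with a parameterized policy and update it from the returns of many episodes.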

Generative Models and LLMs

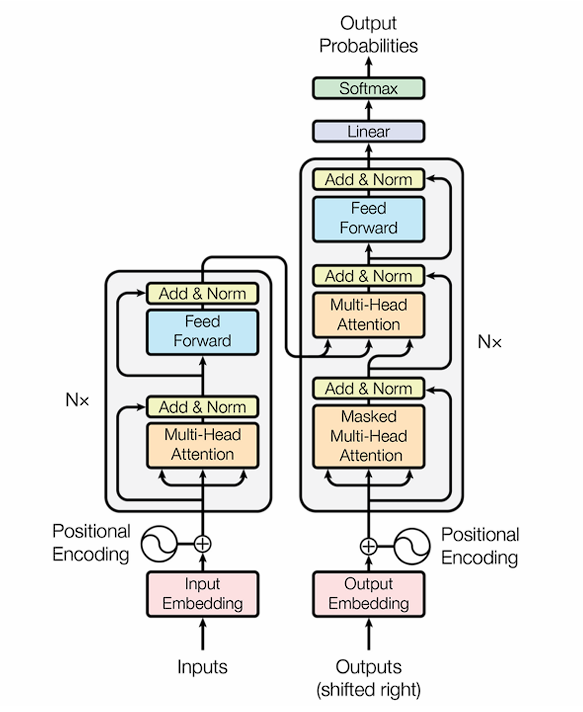

Generative adversarial networks (GANs) and especially transformer-based LLMs (e.g., GPT, Gemini) supply powerful prior knowledge and in-context reasoning abilities. They bootstrap code generation, synthesis, and meta-heuristic search in highly structured scientific domains. Fine-tuning, reinforcement learning from human feedback (RLHF), direct preference optimization (DPO), and group comparative methods adapt these models for mathematical reasoning, while extract-evaluate loops, in which candidate answers are extracted from model output and checked automatically, keep the results verifiable.

Figure 4: Transformer architecture—the backbone of modern language modeling and mathematical text reasoning pipelines.
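The core operation of the transformer in Figure 4 is scaled dot-product attention, softmax(QKᵀ/√d_k)V. The dependency-free sketch below computes it for a few toy token vectors; the input values are illustrative assumptions, and multi-head projections, masking, and the feedforward sublayers are omitted.

```python
import math

def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V.

    Q, K, V are lists of row vectors; returns (outputs, weights).
    """
    d_k = len(K[0])
    scores = [
        [sum(q * k for q, k in zip(qi, kj)) / math.sqrt(d_k) for kj in K]
        for qi in Q
    ]
    weights = [softmax(row) for row in scores]
    outputs = [
        [sum(w * vj[c] for w, vj in zip(wi, V)) for c in range(len(V[0]))]
        for wi in weights
    ]
    return outputs, weights

# Three toy token vectors of dimension 2 (self-attention: Q = K = V).
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, attn = attention(X, X, X)
```

Each row of `attn` is a probability distribution over the input tokens, so every output vector is a weighted average of the value vectors.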

Exemplary AI-Driven Mathematical Discoveries

The text devotes substantial attention to high-impact technical case studies:

1. Structural Pattern Discovery in Knot Theory

By encoding geometric and algebraic knot invariants as high-dimensional vectors and training deep neural networks on them, DeepMind (2021) found a statistical relationship between hyperbolic invariants (such as cusp geometry) and the signature, an algebraic invariant; this pattern had not been anticipated by intuition. Saliency analysis isolated the most relevant input features, guiding mathematicians to define a new composite invariant (the natural slope) and to conjecture a precise relationship, which was later proved as a theorem.
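The saliency step can be illustrated with a small sketch: given a trained predictor, estimate how strongly the output reacts to each input feature and rank the features accordingly. Everything below is an assumption for illustration. The function `predict` is a hypothetical stand-in for a trained network, the data are synthetic rather than real knot invariants, and central finite differences replace the gradients a deep learning framework would compute.

```python
import random

def predict(x):
    """Hypothetical stand-in for a trained network mapping invariants to a target.

    By construction it depends only on features 0 and 2.
    """
    return 3.0 * x[0] - 2.0 * x[2] + 0.5 * x[0] * x[2]

def saliency(model, data, eps=1e-5):
    """Mean absolute partial derivative of the model output per input feature,
    estimated with central finite differences."""
    n_features = len(data[0])
    totals = [0.0] * n_features
    for x in data:
        for j in range(n_features):
            hi = list(x)
            lo = list(x)
            hi[j] += eps
            lo[j] -= eps
            totals[j] += abs(model(hi) - model(lo)) / (2 * eps)
    return [t / len(data) for t in totals]

rng = random.Random(0)
# Synthetic inputs with 4 features; not real knot data.
data = [[rng.uniform(-1, 1) for _ in range(4)] for _ in range(100)]
scores = saliency(predict, data)
# Feature indices ordered from most to least salient.
ranking = sorted(range(4), key=lambda j: -scores[j])
```

Features with near-zero scores can be dropped, which focuses attention on the few inputs that drive the prediction.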

2. Algorithm Discovery Via RL and LLM Evolution

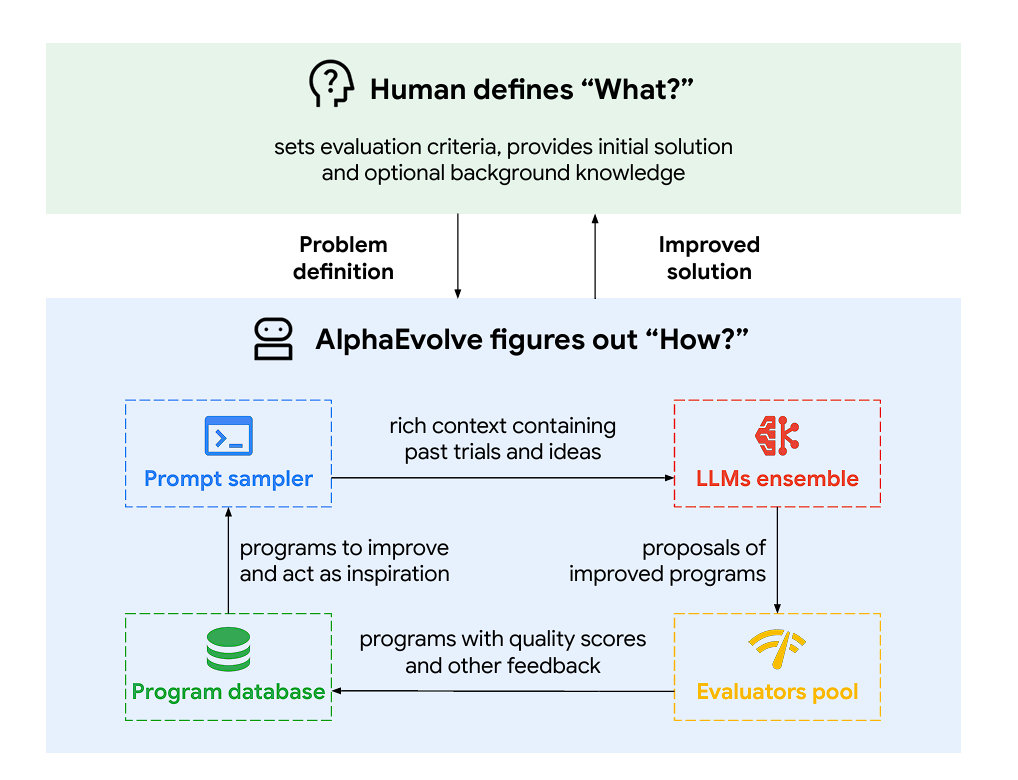

AlphaTensor formalized the search for minimal-rank matrix multiplication algorithms as a single-player game, using Monte Carlo tree search (MCTS) and neural policy/value networks to discover explicit tensor decompositions that improved on records unbroken for decades, both over finite fields and over ℝ/ℂ. AlphaEvolve generalized this to algorithmic meta-search: it evolves code-level heuristics for algorithm discovery through LLM-guided cycles of mutation, selection, and evaluation.
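To make the link between matrix multiplication algorithms and tensor decompositions concrete, the sketch below checks that Strassen's classical algorithm is a rank-7 decomposition of the 2×2 matrix multiplication tensor. It illustrates the kind of object being searched for and is not AlphaTensor's own code; the function names and the row-major indexing convention are choices made here.

```python
from itertools import product

# Factor matrices of Strassen's algorithm; matrix entries are indexed in
# row-major order [11, 12, 21, 22]. Row r of U and V gives the linear
# combinations of A and B entries multiplied in product M_r; row r of W
# gives the coefficient of M_r in each entry of C.
U = [[1, 0, 0, 1], [0, 0, 1, 1], [1, 0, 0, 0], [0, 0, 0, 1],
     [1, 1, 0, 0], [-1, 0, 1, 0], [0, 1, 0, -1]]
V = [[1, 0, 0, 1], [1, 0, 0, 0], [0, 1, 0, -1], [-1, 0, 1, 0],
     [0, 0, 0, 1], [1, 1, 0, 0], [0, 0, 1, 1]]
W = [[1, 0, 0, 1], [0, 0, 1, -1], [0, 1, 0, 1], [1, 0, 1, 0],
     [-1, 1, 0, 0], [0, 0, 0, 1], [1, 0, 0, 0]]

def matmul_tensor(n):
    """Tensor T with T[a][b][c] = 1 iff A-entry a times B-entry b
    contributes to C-entry c in an n x n matrix product."""
    size = n * n
    T = [[[0] * size for _ in range(size)] for _ in range(size)]
    for i, j, k in product(range(n), repeat=3):
        T[i * n + j][j * n + k][i * n + k] = 1
    return T

def reconstruct(U, V, W):
    """Sum of the rank-one terms U[r] (x) V[r] (x) W[r]."""
    size = len(U[0])
    return [[[sum(u[a] * v[b] * w[c] for u, v, w in zip(U, V, W))
              for c in range(size)] for b in range(size)] for a in range(size)]

rank = len(U)  # 7 multiplications instead of the naive 8
is_valid = reconstruct(U, V, W) == matmul_tensor(2)
```

Each rank-one term corresponds to one scalar multiplication, so finding a decomposition with fewer terms yields an algorithm with fewer multiplications.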

Figure 5: AlphaEvolve workflow—LLM-driven iterative program evolution guided by performance-based selection and automated verification.
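The mutation-selection-evaluation cycle of Figure 5 can be sketched as a small evolutionary loop. This is a toy under loud assumptions: `propose` is a hypothetical stand-in for the LLM call (it perturbs an integer tuple at random instead of editing program source), and `evaluate` scores candidates against an arbitrary toy target rather than a real benchmark.

```python
import random

def evaluate(candidate):
    """Automated evaluator: higher is better. Toy objective standing in for
    a real benchmark (here, closeness to the point (3, -1, 2))."""
    target = (3, -1, 2)
    return -sum((c - t) ** 2 for c, t in zip(candidate, target))

def propose(parent, rng):
    """Hypothetical stand-in for the LLM mutation step: perturb one entry.
    A real system would ask a language model to edit program source code."""
    child = list(parent)
    idx = rng.randrange(len(child))
    child[idx] += rng.choice([-1, 1])
    return tuple(child)

def evolve(generations=200, population_size=8, seed=0):
    rng = random.Random(seed)
    population = [(0, 0, 0)]
    history = []
    for _ in range(generations):
        parent = rng.choice(population)
        population.append(propose(parent, rng))
        # Selection: keep only the best-scoring distinct candidates.
        population = sorted(set(population), key=evaluate, reverse=True)
        population = population[:population_size]
        history.append(evaluate(population[0]))
    return population[0], history

best, history = evolve()
```

Because the best candidate always survives selection, the best score in `history` never decreases from one generation to the next.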

The combination of the two paradigms demonstrates both focused, domain-specific performance (AlphaTensor) and broader, highly flexible innovation pipelines (AlphaEvolve).

3. Automated Theorem Proving at Olympiad and IMO Level

State-of-the-art neuro-symbolic systems (AlphaProof, AlphaGeometry) combine unsupervised pretraining, large-scale synthetic problem generation, formal verification, and test-time RL. Together they have reached silver-medal-level performance on the International Mathematical Olympiad (IMO): AlphaProof's proofs are formally verified in Lean, while AlphaGeometry checks its deductions with a symbolic engine, and both surpass previous ATP approaches.

4. Counterexample Construction in Combinatorics

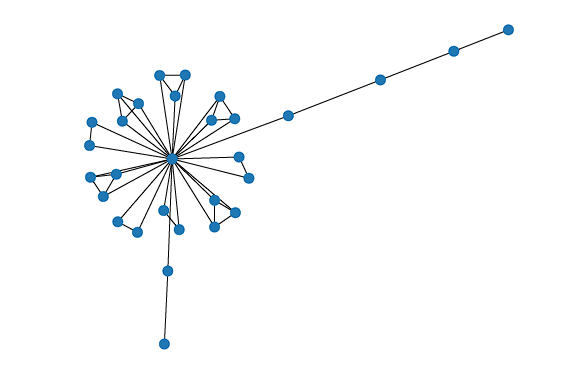

RL and cross-entropy-based construction frameworks, such as that of Wagner, automate the search for counterexamples to extremal combinatorial conjectures. Policy models are iteratively improved based on reward signals sensitive to progressive violation or satisfaction of mathematical properties, enabling discovery of non-obvious structural counterexamples in graph theory, extremal set systems, and related domains.

Figure 6: RL-based construction of combinatorial counterexamples; the agent adaptively narrows the search space based on reward feedback.

PatternBoost further iterates between local search and global generative modeling, allowing sampling and refinement of constructions that capture emergent structural archetypes (e.g., bipartiteness in extremal graph problems).
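The cross-entropy idea behind such construction frameworks can be sketched on a small extremal problem: search for triangle-free graphs on 6 vertices with many edges. This is a simplified illustration, not Wagner's implementation. The policy here is an independent Bernoulli probability per edge instead of a neural network, and the reward (edges minus a triangle penalty) and all hyperparameters are assumptions chosen for the example.

```python
import random
from itertools import combinations

N = 6
EDGES = list(combinations(range(N), 2))  # 15 possible edges

def score(bits, penalty=2):
    """Reward: number of edges minus a penalty per triangle."""
    present = {e for e, b in zip(EDGES, bits) if b}
    triangles = sum(
        1 for a, b, c in combinations(range(N), 3)
        if (a, b) in present and (a, c) in present and (b, c) in present
    )
    return len(present) - penalty * triangles

def cross_entropy_search(iterations=40, samples=200, elite_frac=0.1,
                         lr=0.7, seed=0):
    rng = random.Random(seed)
    probs = [0.5] * len(EDGES)  # independent Bernoulli policy, one per edge
    best_bits, best_score = None, float("-inf")
    for _ in range(iterations):
        batch = [[int(rng.random() < p) for p in probs] for _ in range(samples)]
        batch.sort(key=score, reverse=True)
        elite = batch[: max(1, int(elite_frac * samples))]
        if score(elite[0]) > best_score:
            best_bits, best_score = elite[0], score(elite[0])
        # Move the policy toward the empirical edge frequencies of the elite set.
        for i in range(len(probs)):
            freq = sum(g[i] for g in elite) / len(elite)
            probs[i] = (1 - lr) * probs[i] + lr * freq
    return best_bits, best_score

best_bits, best_score = cross_entropy_search()
```

By Mantel's theorem, a triangle-free graph on 6 vertices has at most 9 edges, attained by the complete bipartite graph K_{3,3}, so no sample can score above 9. In a counterexample search, the reward would instead measure how far a construction is from violating the conjectured bound.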

Numerical Computation and PDEs: Physics-Informed Neural Networks

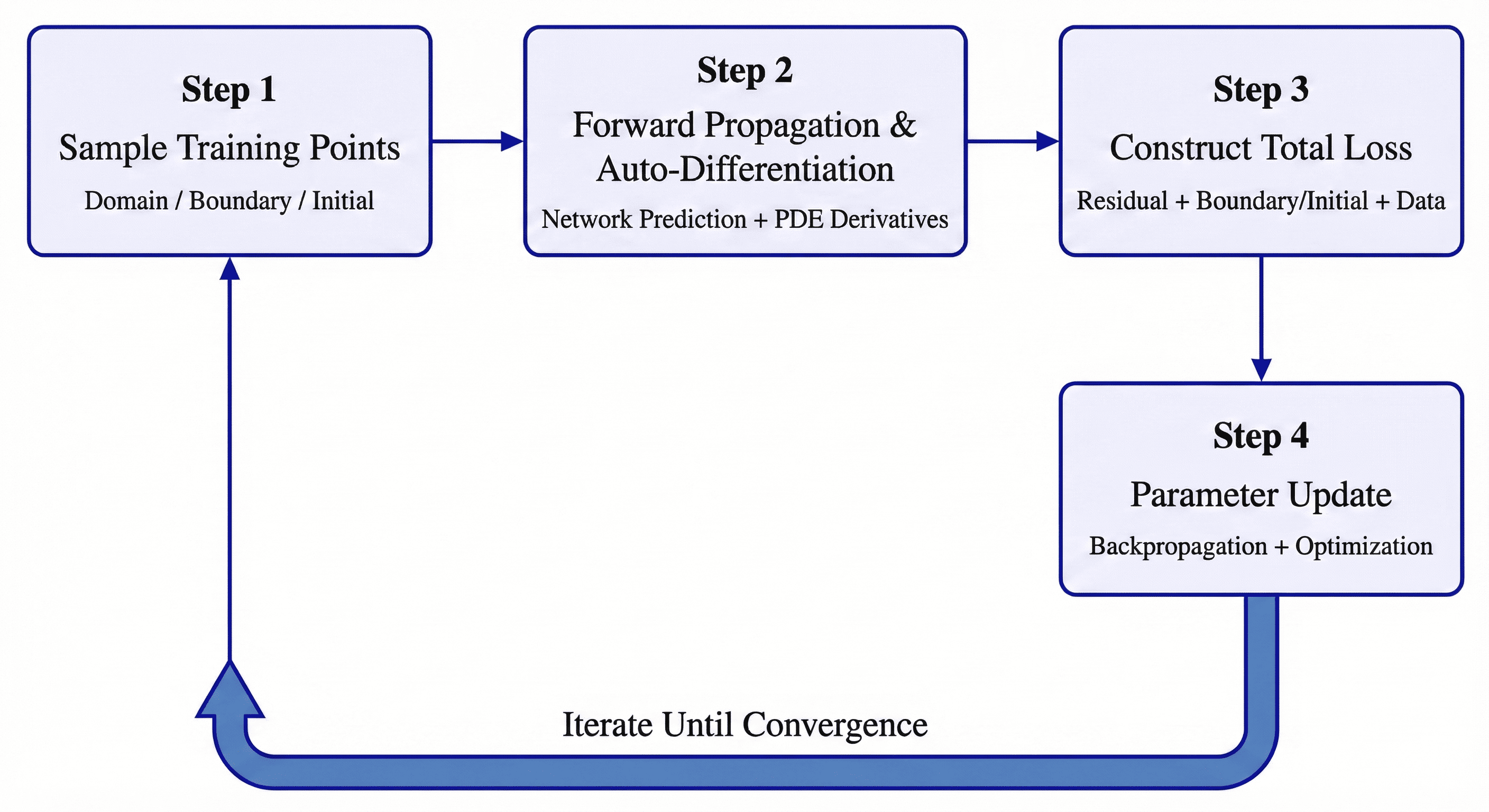

Recent advances extend deep learning to scientific computation via physics-informed neural networks (PINNs), which encode PDEs as soft constraints in the loss function of a neural network. By leveraging automatic differentiation, they permit mesh-free approximation of solutions and can mitigate the curse of dimensionality that limits traditional finite difference (FDM) and finite element (FEM) methods in high dimensions.

Figure 7: Training process of Physics-Informed Neural Networks—PDE residual and boundary constraints form the composite loss landscape optimized via gradient-based learning.
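The composite loss in Figure 7 can be written out in a generic form. The notation is an assumption introduced here, not the monograph's: $u_\theta$ is the network, the PDE is $\mathcal{N}[u] = f$ on a domain $\Omega$ with boundary condition $\mathcal{B}[u] = g$ on $\partial\Omega$, the $x_i \in \Omega$ and $y_j \in \partial\Omega$ are collocation points, and $\lambda > 0$ weights the boundary term.

```latex
\mathcal{L}(\theta)
  = \frac{1}{N_r} \sum_{i=1}^{N_r}
      \bigl| \mathcal{N}[u_\theta](x_i) - f(x_i) \bigr|^2
  + \lambda \, \frac{1}{N_b} \sum_{j=1}^{N_b}
      \bigl| \mathcal{B}[u_\theta](y_j) - g(y_j) \bigr|^2
```

The derivatives inside $\mathcal{N}[u_\theta]$ are obtained by automatic differentiation with respect to the network inputs, which is why no mesh is required.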

Epistemological and Practical Implications

Unification via Cross-Domain Integration

Large models—both language and generative—enable cross-field knowledge retrieval, analogy, and pattern transfer. By indexing thousands of mathematical papers and equations, AI can propose structurally relevant connections across disparate branches, accelerating integrative insights and meta-theorem formation.

Challenges and Controversies

The text acknowledges substantive open problems:

- Attribution and coauthorship: As AI systems propose or independently discover central mathematical results, the community must develop new norms for credit assignment, intellectual property, and peer recognition.

- Verification and reproducibility: The complexity of AI-produced proofs and constructions demands disclosure of code, training runs, and hyperparameters to sustain trust and reproducibility.

- Creativity in mathematics: The work rigorously identifies conceptual abstraction, analogical transfer, and theory-building as hallmarks of mathematical creativity still largely inaccessible to present AI systems.

- Mathematical education: The traditional focus on procedural competence must shift towards cultivating problem-formulation, critical evaluation, and human-AI collaborative research skills.

Future Perspectives

The monograph foresees:

- Increased methodological innovation at the intersection of formal methods, RL, and generative modeling—shifting from tool-based to paradigm-level transformation in the mathematical sciences.

- Human-AI co-discovery, where AI acts as an epistemic collaborator, probing vast, high-complexity spaces, and humans focus on conceptual purification, abstraction, and contextualization of results.

- Potential emergence of AI-generated mathematics—new frameworks, definitions, and theoretical constructs, some perhaps only subsequently understood by human mathematicians.

Conclusion

"Lectures on AI for Mathematics" (2604.11504) constitutes a foundational text delineating the technical frontier in AI-driven mathematical discovery, proof, and refutation. Its synthesis of neural, symbolic, and evolutionary paradigms, grounded in explicit mapping of AI methodologies to mathematical epistemic tasks, establishes a roadmap for methodological transformation in mathematics. The research challenges the community to reconsider both the cognitive division of labor and the criteria for creativity in mathematics, as AI accelerates and reshapes the very logic of mathematical knowledge production.
