Artificial Intelligence and the Structure of Mathematics

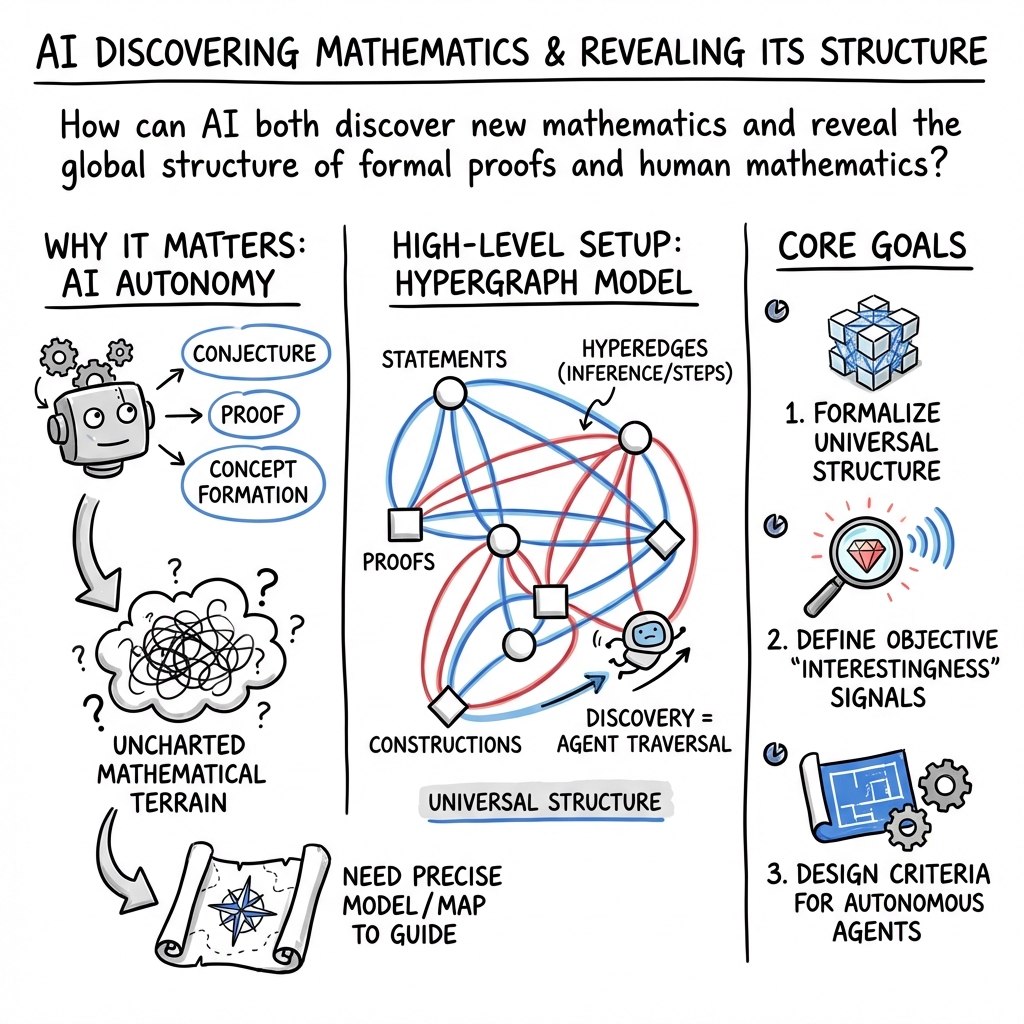

Abstract: Recent progress in AI is unlocking transformative capabilities for mathematics. There is great hope that AI will help solve major open problems and autonomously discover new mathematical concepts. In this essay, we further consider how AI may open a grand perspective on mathematics by forging a new route, complementary to mathematical logic, to understanding the global structure of formal proofs. We begin by providing a sketch of the formal structure of mathematics in terms of universal proof and structural hypergraphs and discuss questions this raises about the foundational structure of mathematics. We then outline the main ingredients and provide a set of criteria to be satisfied for AI models capable of automated mathematical discovery. As we send AI agents to traverse Platonic mathematical worlds, we expect they will teach us about the nature of mathematics: both as a whole, and the small ribbons conducive to human understanding. Perhaps they will shed light on the old question: "Is mathematics discovered or invented?" Can we grok the terrain of these Platonic worlds?

Explain it Like I'm 14

A simple guide to “Artificial Intelligence and the Structure of Mathematics”

What this paper is about

This essay asks a big question: how can AI help us explore and understand the entire “world” of mathematics? The authors propose a new way to map math using networks (like webs) that show how ideas and proofs connect. With this map, they discuss how to build AI “math explorers” that can discover new ideas, explain them, and work alongside humans. They also ask what makes the small slice of math humans actually do special, compared to all the math that could be done.

The main goals and questions

The authors set out to do three things:

- Design a clear “map” of mathematics that a computer can navigate (not just a list of facts, but how those facts connect).

- Describe what a good AI “mathematician” should be able to do.

- Ask deep questions about math itself: What is the shape of all possible proofs? Why does human math seem to be a thin, understandable slice of a much bigger, wilder world? Is math discovered (already “out there”) or invented?

How they approach the problem (with simple analogies)

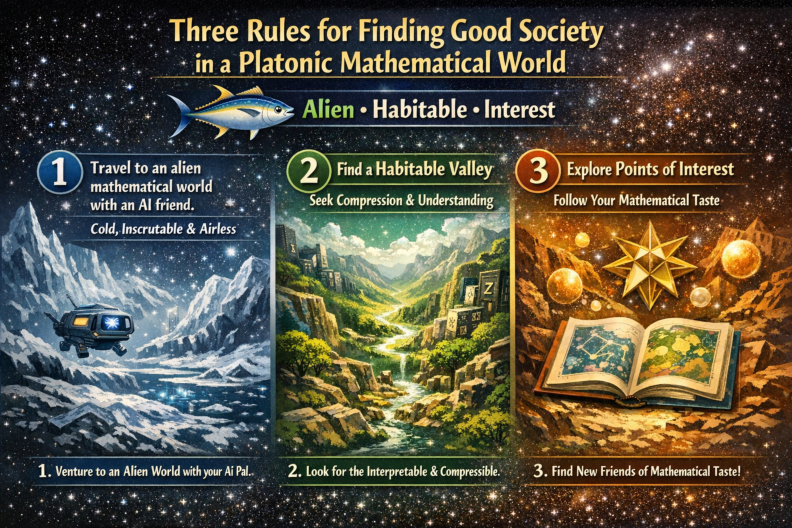

Think of mathematics like a vast planet we’ve never fully explored. The authors:

- Build a “map” using a network called a hypergraph:

- A graph is a map of dots (ideas) connected by lines (relationships). A hypergraph is similar, but one “connection” can link many dots at once (like a recipe that uses many ingredients to make one dish).

- A node is a statement or a proof. A hyperedge is a rule or step that turns some statements into a new statement (like “if A is true and B is true, then the combined statement ‘A and B’ is true”).

- Define two big networks:

- The universal proof hypergraph (U): the web of every statement that can be proven from a chosen set of basic rules (axioms).

- The structural hypergraph (S): a bigger web that includes not only true/false statements but also definitions, functions, and proofs as objects you can build and reuse.

- Treat proofs as objects:

- Instead of just marking “this is proven,” they make the proof itself a thing in the network. That way, AI can combine, reuse, and compare proofs directly.

- Use abstraction to compress ideas:

- Abstraction is like turning a long recipe into a named shortcut. Instead of repeating the whole recipe every time, you write “make cupcakes,” and everyone knows what that means. In math, this could be turning a long proof into a single, reusable theorem, or a long formula into a named function.

- Use simplification rules (canonicalization):

- Some steps are routine and should always simplify the same way (like reducing 2+2 to 4). Marking these rules helps an AI avoid wasting time on easy parts.

- Add “scores” for complexity and efficiency:

- They define ways to measure how long or complex a proof or definition is, and how much it helps compress the map. This can help AIs decide what’s “interesting” or worthwhile.
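
As a rough illustration of the map described above, the following Python sketch stores statements as nodes and inference steps as directed hyperedges with ordered inputs and a single output. The names (`Hyperedge`, `and_intro`, `modus_ponens`, `expand`) and the tuple encoding of formulas are assumptions made for this example, not the paper's notation.

```python
from dataclasses import dataclass
from itertools import product

# Formulas are encoded as nested tuples (an assumption of this sketch):
#   "A"              an atomic statement
#   ("and", p, q)    conjunction
#   ("imp", p, q)    implication p => q


@dataclass(frozen=True)
class Hyperedge:
    rule: str        # the "color" of the edge, e.g. "and_intro"
    inputs: tuple    # ordered input statements
    output: object   # the statement the rule produces


def and_intro(p, q):
    """From p and q, conclude ("and", p, q)."""
    return Hyperedge("and_intro", (p, q), ("and", p, q))


def modus_ponens(p, imp):
    """From p and ("imp", p, q), conclude q; return None if the shapes do not match."""
    if isinstance(imp, tuple) and imp[0] == "imp" and imp[1] == p:
        return Hyperedge("modus_ponens", (p, imp), imp[2])
    return None


def expand(nodes):
    """Apply every rule once to every ordered pair of known statements."""
    edges = set()
    for p, q in product(nodes, repeat=2):
        edges.add(and_intro(p, q))
        mp = modus_ponens(p, q)
        if mp is not None:
            edges.add(mp)
    new_nodes = set(nodes) | {e.output for e in edges}
    return new_nodes, edges


axioms = {"A", ("imp", "A", "B")}
layer1, edges1 = expand(axioms)
print("B" in layer1)   # True: B follows from A and A => B by modus ponens
print(len(layer1))     # 7: the 2 axioms, 4 conjunctions, and B
```

Calling `expand` repeatedly on its own output builds the map layer by layer, which is the naive construction whose rapid growth is discussed in the next section.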

They also note that there isn’t just one “math world.” Different starting rules (axioms) create different “Platonic worlds” of math. Some statements are true in one world and unprovable in another. The authors ask how these worlds compare and how an AI should navigate them.

Key ideas and takeaways

Here are the main insights the paper offers:

- The map of all proofs grows incredibly fast:

- If you try to build all possible proofs layer by layer, the number of statements can grow doubly exponentially, which is far faster than ordinary exponential growth. This shows why an AI (or human) can’t just brute-force search math.

- Abstraction is essential:

- Turning long arguments into reusable building blocks makes reasoning faster and clearer. It also helps AIs avoid getting lost.

- Proofs should be first-class objects:

- If proofs are things you can build, measure, and combine, AIs can reuse them intelligently.

- Routine simplification should be automatic:

- Marking some steps as “just simplify this way” helps keep searches focused on the hard parts.

- We can score usefulness:

- By measuring the “cost” of a proof or the “compression” it gives to the overall map, AIs can rank results and decide what’s worth reporting.

- There are many mathematical “worlds”:

- Different rules lead to different universes of math. Some facts are “absolute” (true across many worlds), while others depend on the world you choose. Comparing these worlds matters for AI design.

- What a good AI math agent should do:

- The paper proposes clear criteria for an autonomous mathematical discovery (AMD) agent. In short, a strong AI mathematician should:

- Work in an open-ended language (able to create new theorems, proofs, and concepts).

- Produce proofs we can check.

- Tell what’s genuinely new (not easy from what it already knows).

- Propose and prove its own theorems.

- Invent useful definitions and data types.

- Pick a few best results and explain why they matter (e.g., they compress the map or open paths to more results).

- Run in a loop, adding discoveries and making steady progress without running out of steam.
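
The growth claim in the first item can be checked on a toy model. The model here is an assumption of this sketch and not necessarily the paper's own example: the only rule forms the conjunction of any ordered pair of known statements. If a layer holds N statements, the next holds the original atoms plus N² conjunctions, so the number of digits roughly doubles at every step.

```python
def layer_sizes(num_atoms, depth):
    """Closed-form recurrence: N_{k+1} = N_0 + N_k ** 2."""
    sizes = [num_atoms]
    for _ in range(depth):
        sizes.append(num_atoms + sizes[-1] ** 2)
    return sizes


def enumerate_layers(atoms, depth):
    """Brute-force check: actually build each layer and count distinct formulas."""
    known = set(atoms)
    counts = [len(known)]
    for _ in range(depth):
        known = known | {("and", p, q) for p in known for q in known}
        counts.append(len(known))
    return counts


print(enumerate_layers(["A", "B"], 3))   # small enough to enumerate explicitly
for k, n in enumerate(layer_sizes(2, 6)):
    print(k, n.bit_length())             # bit length roughly doubles per layer
```

Starting from two atoms, the first layers contain 2, 6, 38, 1446, and 2090918 statements. Explicit enumeration is already impractical a couple of layers later, which is the motivation for abstraction and canonicalization.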

Why this matters

- For AI: This framework helps design AI that doesn’t drown in the sea of possible proofs, but instead develops taste, strategy, and judgment—much like a good human mathematician.

- For humans: It offers tools to understand why some math feels “natural” or “beautiful” and how to keep humans and AIs working together efficiently.

- For philosophy: It gives a new way to explore the old question “Is math discovered or invented?” by watching how AI navigates different “worlds” of math and what it finds compelling.

What could happen next

- A new field: The authors foresee a discipline they call “computational metamathematics,” where we study math itself using AI, formal proof systems, and these hypergraph maps.

- Better AI copilots for math: With these tools, AI could discover lemmas, suggest definitions, sketch research programs, and help prove hard theorems—while explaining its choices.

- Human–AI partnership: Because the math “universe” is unimaginably large, there will always be directions for humans to explore, especially those that reward insight and creativity. AIs can handle the heavy lifting; humans can guide the vision.

In short, the paper lays out a blueprint: a way to map mathematics so that AI can explore it wisely, share important discoveries, and help us understand both the structure of math and our special human ribbon within it.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise list of what remains missing, uncertain, or unexplored in the paper, phrased to guide actionable research.

Foundations and hypergraph representation

- Lack of a fully rigorous, executable specification of the universal proof hypergraph U and the structural hypergraph S for a concrete foundation (e.g., Lean/Coq’s dependent type theory), including:

- Precise node/edge schemas for terms, types, propositions, and proof objects.

- A complete, finite set of construction and deduction hyperedge “colors” with input/output typing rules.

- Explicit handling of binding and α/β/η equivalences (e.g., via de Bruijn indices or nominal techniques) to prevent variable capture.

- No formal treatment of definitional equality and canonicalization in the hypergraph:

- How to mark “orientation” of rewrite rules (definitional equalities) to ensure normalization, confluence, and termination.

- How canonicalization interacts with proof search and complexity metrics.

- Proof objects are posited but not concretely mapped to ITP internals:

- A principled translation from Lean/Coq/Mizar/Isabelle proof terms to the proof hypergraph (including rewrite/definitional reduction).

- Criteria for proof identity/equivalence (when are two proof nodes “the same”?), and integration with homotopy type theory if used.

- Treatment of non-terminating or non-computable constructions is deferred:

- Systematic representation of partiality and general recursion (beyond “fuel parameters”), and its consequences for S and U.

- How to represent classical existence/choice principles and non-constructive arguments within a strongly-normalizing core.
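
The binding issue mentioned above can be made concrete. The sketch below converts named lambda terms to de Bruijn indices, so that terms differing only in the names of bound variables receive identical representations. The term encoding and the function names are assumptions made for this illustration.

```python
# Named terms (encoding assumed for this sketch):
#   ("var", name) | ("lam", name, body) | ("app", fn, arg)


def to_de_bruijn(term, bound=()):
    """Replace each bound variable by the number of binders between it and its own binder."""
    kind = term[0]
    if kind == "var":
        name = term[1]
        if name in bound:
            return ("idx", bound.index(name))   # 0 refers to the innermost binder
        return ("free", name)                   # free variables keep their names
    if kind == "lam":
        _, name, body = term
        return ("lam", to_de_bruijn(body, (name,) + bound))
    if kind == "app":
        _, fn, arg = term
        return ("app", to_de_bruijn(fn, bound), to_de_bruijn(arg, bound))
    raise ValueError(f"unknown term kind: {kind!r}")


def alpha_equivalent(s, t):
    """Two terms are alpha-equivalent iff their de Bruijn forms coincide."""
    return to_de_bruijn(s) == to_de_bruijn(t)


k1 = ("lam", "x", ("lam", "y", ("var", "x")))   # \x. \y. x
k2 = ("lam", "a", ("lam", "b", ("var", "a")))   # \a. \b. a
print(alpha_equivalent(k1, k2))                 # True: same term up to renaming

free_y = ("lam", "x", ("var", "y"))             # \x. y  (y is free)
ident = ("lam", "y", ("var", "y"))              # \y. y
print(alpha_equivalent(free_y, ident))          # False: renaming x to y would capture y
```

In a hypergraph setting, using the de Bruijn form as the node key would give each α-equivalence class a single node. β and η equivalences need separate treatment.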

Growth, complexity, and “geometry” of mathematics

- Doubly exponential growth is argued by a toy example, but no empirical or theoretical bounds for realistic foundations:

- Measurement of growth rates in formal libraries (e.g., Mathlib) as a function of depth and available abstractions.

- Models of branching factors under different abstraction policies and definitional equalities.

- Complexity measures are underspecified and unvalidated:

- Concrete instantiations for c(e), c′(s), and l(s), with sensitivity analyses and foundation-independence tests.

- Relationship to Kolmogorov complexity and proof-length lower bounds (only promised, not delivered here).

- Algorithms to compute or approximate m(s) for large subgraphs with performance guarantees.

- “Geometry of math” in hypergraphs is proposed but not defined:

- Formal analogs of distance, curvature, and scaling limits for directed, ordered, colored hypergraphs with n-ary edges.

- Well-posed coarse invariants of proof/structural hypergraphs that are robust across foundations.

- Conditions under which manifold-like or metric-like structures can emerge from hypergraph limits.

- Neighborhood-based proof search is not operationalized:

- Algorithms for computing or bounding N(s,d) ∩ U efficiently, including pruning/heuristics and canonicalization-aware expansions.

- Learning-guided selection of instantiations to keep U_t locally finite without losing relevant proofs.
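
For the neighborhood question, one plausible reading of N(s, d) is the set of nodes within d hyperedge steps of s. The sketch below computes that ball by breadth-first search. The undirected notion of distance (two nodes are one step apart when they share a hyperedge) and the edge encoding are assumptions of this example. The paper's precise definition may differ.

```python
from collections import deque


def ball(hyperedges, center, radius):
    """Return {node: distance} for all nodes within `radius` steps of `center`.

    Each hyperedge is a tuple of node labels (inputs followed by the output).
    """
    incident = {}
    for edge in hyperedges:
        for node in edge:
            incident.setdefault(node, []).append(edge)

    dist = {center: 0}
    queue = deque([center])
    while queue:
        node = queue.popleft()
        if dist[node] == radius:
            continue                      # do not expand past the requested radius
        for edge in incident.get(node, []):
            for other in edge:
                if other not in dist:
                    dist[other] = dist[node] + 1
                    queue.append(other)
    return dist


edges = [
    ("A", "A=>B", "B"),      # modus ponens
    ("B", "B=>C", "C"),      # modus ponens
    ("C", "D", "C&D"),       # conjunction introduction
]
print(sorted(ball(edges, "A", 1)))   # ['A', 'A=>B', 'B']
print(ball(edges, "A", 2)["C"])      # 2
```

A practical version would generate hyperedges lazily instead of storing them, since the full edge set is exactly what cannot be enumerated.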

Human mathematics ribbon H and interestingness

- No precise definition or extraction procedure for the “human mathematics” subgraph H:

- Operational criteria to distinguish H from U using structural/compressibility/centrality measures.

- Tests for the claimed “polynomial growth ribbon” hypothesis beyond toy models.

- Methods to align “taste” and “interestingness” with measurable hypergraph features (e.g., compression of proof networks, connectivity gains).

- Interestingness and abstraction utility are gestured at but not grounded:

- Explicit formulas for “efficiency E(Ṗ)” and “abstraction utility U(A),” with benchmarks and ablation studies.

- Robustness of rankings to choice of cost functions and definitional equalities.

Autonomy and agentic discovery (AMD)

- Criteria for AMD are proposed but lack operationalization and tests:

- Concrete evaluation protocols, datasets, and scoring functions for each criterion (1)–(10) in the figure.

- Definition of “independent criteria” in §S: tests are referenced but not specified here.

- Learning to abstract and select definitions is under-specified:

- Formal objective functions and optimization strategies for abstraction choice under branching-factor vs proof-length trade-offs.

- Curriculum and meta-learning strategies to build reusable abstractions without destabilizing search.

- Modeling contingent development C_t lacks constructive dynamics:

- Explicit state/action/reward formulations for RL (or other) agents acting on S/U with computational budget constraints.

- Mechanisms for conjecturing, prioritizing, and closing feedback loops (publish-select-update) with resource guarantees.

- Handling conjectures, independence, and axiom changes is not operationalized:

- Policies for when an AMD agent proposes new axioms vs. seeks proofs, and safeguards against inconsistency.

- Systematic treatment of conditional theorems and how they inhabit S beyond U.

- Long-horizon autonomy and open-endedness remain unproven:

- The paper requires that closed-loop iterations keep yielding discoveries, but provides no theoretical guarantees or empirical evidence.

Cross-foundation transfer and absoluteness

- Coarse equivalence across foundations is not quantitatively defined:

- Metrics for “coarse similarity” of proof hypergraphs (e.g., distortion functions) and algorithms to translate proofs/theorems with controlled blow-ups.

- Cataloging domains where translation blow-ups are polynomial vs. superrecursive, with predictors and costs.

- “Foundation-independent” invariants are only suggested:

- Identification and computation of invariants of statements/proofs that persist across PA/ZF/ZFC/DTT/HoTT/category-theoretic foundations.

- Benchmarks for cross-foundation transfer learning and portability of abstractions.

Tooling, data, and empirical validation

- No pipeline to extract S/U from existing formal corpora:

- End-to-end tooling to parse Mathlib/Coq stdlib into hypergraphs, annotate edges (construction/deduction/canonicalization), and compute metrics.

- Datasets of (statement, proof-graph, complexity) triples for training and evaluation.

- Lack of empirical studies validating claims and measures:

- Experiments correlating complexity/abstraction/interestingness metrics with human judgments and citation/impact proxies.

- Controlled studies comparing AMD systems and human baselines on shared tasks with transparent metrics.

- Informal-to-formal and natural language aspects are not integrated:

- Methods to incorporate heuristic reasoning, diagrammatic/semantic content, and informal proofs into the hypergraph framework with verifiable links.

Scope and breadth of mathematics

- Limited coverage of mathematical domains beyond arithmetic/toy examples:

- Case studies for algebra, topology, analysis, category theory, and probability, showing how S/U and canonicalization scale.

- Representation of isomorphism classes, quotient structures, and univalence-like principles when appropriate.

- Equality vs isomorphism beyond simple quotients:

- Practical methods for rewriting across isomorphic structures with guarantees (e.g., transport theorems, coercions) and tractable search.

- Multi-agent and collaborative discovery dynamics are absent:

- Models for division of labor, specialization, and communication across agents operating on shared S/U with resource accounting.

Safety, governance, and selection

- Governance of “what is worth reporting” remains vague:

- Formal selection mechanisms (e.g., Pareto fronts over compression, generality, surprise, transfer utility) and human-in-the-loop protocols.

- Safeguards against trivial, vacuous, or misaligned “discoveries” that game the proposed metrics.

- Robustness to metric and foundation choices:

- Sensitivity analyses showing stability of rankings and discovery pipelines under changes in cost models and foundational settings.
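
The Pareto-front selection mentioned above can be sketched in a few lines. The candidate names and score values below are invented for illustration, and the three score axes (compression, generality, surprise) are placeholders for whatever metrics a real system would compute.

```python
def dominates(a, b):
    """True if score tuple a is at least as good as b everywhere and strictly better somewhere."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(candidates):
    """Keep the candidates that no other candidate dominates (higher scores are better)."""
    return {
        name: scores
        for name, scores in candidates.items()
        if not any(dominates(other, scores) for other in candidates.values())
    }


# Hypothetical scores: (compression, generality, surprise)
candidates = {
    "lemma_a": (0.9, 0.2, 0.1),
    "lemma_b": (0.5, 0.5, 0.5),
    "lemma_c": (0.4, 0.4, 0.5),   # dominated by lemma_b
    "lemma_d": (0.1, 0.9, 0.3),
}
print(sorted(pareto_front(candidates)))   # ['lemma_a', 'lemma_b', 'lemma_d']
```

A Pareto front avoids committing to one weighting of the metrics, but it does not by itself guard against results that game a single axis. That safeguard would need a separate check.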

Practical Applications

Immediate Applications

Below are practical use cases that can be deployed now, leveraging the paper’s frameworks (universal and structural hypergraphs, proof objects, abstraction, canonicalization) and its criteria for automated mathematical discovery (AMD). Each item notes sector(s), the tools/workflows likely to emerge, and assumptions/dependencies affecting feasibility.

- AI-assisted formalization and research copilots

- Sectors: academia, software (formal methods), scientific computing

- What: Embed AI theorem-proving assistants (Lean, Coq/Rocq) into the research workflow to draft, check, and compress proofs; use the paper’s AMD criteria to triage outputs and select “discoveries.”

- Tools/workflows: Lean/Coq integration, proof-object tracking, proof-complexity scoring (m(s)), efficiency metrics (E(Ṗ)), abstraction utility (U(A)), novelty detection relative to the current corpus 𝒞_t.

- Assumptions/dependencies: Access to formal libraries (Mathlib), reliable proof-object generation, scalable proof-complexity estimates, human-in-the-loop evaluation.

- Formal verification pipelines for safety-critical systems

- Sectors: software, robotics, healthcare (medical devices), automotive, energy, aerospace

- What: Use proof-object hypergraphs to verify properties of code and systems; apply canonicalization and definitional equality to reduce proof search; model equivalences via quotient types (e.g., protocol equivalence, semantics-preserving transformations).

- Tools/workflows: Type-theory-backed verification (Lean/Coq), proof-object provenance, canonicalization engines for arithmetic/logic, “projection” and “congruence” hyperedges for quotient-based reasoning.

- Assumptions/dependencies: Formal specifications exist; strong normalization holds for the modeled functions; domain experts maintain abstraction layers; compute budgets for proof search.

- Novelty detection and research portfolio management

- Sectors: academia, R&D organizations, IP management

- What: Rank candidate results by proof complexity (m(s)) relative to the current corpus, flag redundancies, compress proof graphs to reveal “high-yield” lemmas and definitions; select a small number of proposals as genuine discoveries per AMD criteria.

- Tools/workflows: Corpus-aware novelty scoring, proof-graph compression dashboards, “interestingness” ranking via E(Ṗ) or U(A).

- Assumptions/dependencies: Access to organizational proof/knowledge graphs, agreed thresholds for “new vs trivial,” scalable graph analytics.

- Compiler and CAS (computer algebra system) optimization via canonicalization

- Sectors: software engineering, data science, HPC

- What: Integrate canonicalization rules (e.g., arithmetic normalization, definitional equality) to simplify expressions deterministically, reduce search branching factors, and stabilize symbolic computation.

- Tools/workflows: Canonicalization passes informed by structural hypergraph rules; definitional equality preferences; rewrite systems with cost/reward heuristics.

- Assumptions/dependencies: Formal semantics of transformations; performance validation; careful handling of variable capture and scoping.

- Knowledge graph/hypergraph construction and dependency mapping

- Sectors: enterprise knowledge management, research organizations

- What: Build structural hypergraphs of concepts, definitions, and results; visualize prerequisite paths and local neighborhoods N(s, d) to target learning, refactoring, or proof automation.

- Tools/workflows: Concept dependency graphs, local ball expansions N(s, d), abstraction nodes to hide proof details while preserving applicability, novelty and redundancy detectors.

- Assumptions/dependencies: Content formalization, consistent symbol tables across teams, ontology alignment.

- Adaptive math education and assessment

- Sectors: education, edtech

- What: Map curricular concepts as structural hypergraphs; estimate problem difficulty via proof and expression complexity; generate tailored exercises and explanations; automatically track student progression along the “human mathematics ribbon.”

- Tools/workflows: Lean-backed problem generators, concept graphs with depth/complexity annotations, adaptive tutors that surface abstractions and canonical examples.

- Assumptions/dependencies: Availability of formalized curricular content; teachers’ acceptance; ethical data collection and student modeling.

- Policy and governance frameworks for AI math systems

- Sectors: policy, standards bodies, research governance

- What: Adopt the paper’s AMD criteria as evaluation standards (open-ended language, verifiable proofs, novelty detection, program generation, closed-loop growth); require proof objects for claims; mandate independent validation tests.

- Tools/workflows: Capability benchmarks (e.g., FrontierMath, Putnam-level sets), audit trails for formal/informal proofs, reproducibility protocols.

- Assumptions/dependencies: Regulator buy-in; agreed-upon test suites; cross-institutional interoperability of proof formats.

- Security and cryptography assurance

- Sectors: cybersecurity, fintech

- What: Formally verify cryptographic protocols and properties; use quotient-type modeling for equivalence classes (e.g., indistinguishability) and canonicalization for normalized reasoning about attacks.

- Tools/workflows: Type-theory proof pipelines, equivalence proof objects, protocol property libraries, automated congruence checks.

- Assumptions/dependencies: High-quality formal models of protocols; efficient proof search; secure integration.
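
Several of the items above rely on canonicalization. As a minimal sketch of the idea, the following Python function simplifies sums deterministically, so that routine steps such as reducing 2+2 to 4 never need to be searched for. The expression encoding and the particular rules are assumptions chosen for this example.

```python
# Expressions (encoding assumed for this sketch):
#   an int, a variable name (str), or ("add", left, right)


def canonicalize(expr):
    """Simplify bottom-up; each rule either shrinks the expression or fixes operand order."""
    if not isinstance(expr, tuple):
        return expr
    _, left, right = expr
    left, right = canonicalize(left), canonicalize(right)
    if isinstance(left, int) and isinstance(right, int):
        return left + right                      # constant folding: 2 + 2 -> 4
    if left == 0:
        return right                             # 0 + x -> x
    if right == 0:
        return left                              # x + 0 -> x
    # Orient the commutative sum so its operands always appear in the same order.
    left, right = sorted((left, right), key=repr)
    return ("add", left, right)


print(canonicalize(("add", 2, 2)))                              # 4
print(canonicalize(("add", "x", 0)))                            # x
print(canonicalize(("add", "y", "x")) == canonicalize(("add", "x", "y")))   # True
```

This sketch does not flatten nested sums, so it is not a complete normal form for addition: for example, (x + 1) + 1 is left unfolded. A full canonicalizer would also need the termination and confluence arguments that the knowledge-gaps list asks for.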

Long-Term Applications

These applications are feasible with further research, scaling, and maturation of formalization, AMD agents, and hypergraph analytics. Each item notes sectors, potential tools/workflows, and key dependencies.

- Closed-loop autonomous mathematical discovery (AMD agents)

- Sectors: academia, software tooling, cross-disciplinary science

- What: Agents that propose definitions, conjectures, theorems; prove them; select “discoveries” with reasons; iterate to unbounded growth of knowledge; generate research programs (not just proofs).

- Tools/workflows: Reinforcement-learning-based proof/conjecture loops, taste/interestingness models rooted in graph compression and abstraction utility, discovery ranking and reporting.

- Assumptions/dependencies: Scalable proof search; robust abstraction management; reliable novelty detection; compute resources; safeguards for correctness.

- Computational metamathematics discipline and infrastructure

- Sectors: academia, standards bodies, software

- What: Establish repositories of universal proof hypergraphs (across axiom systems), cross-foundation translation tools, and foundation-independent measures for “coarse geometry” of math; comparative analytics for formal systems.

- Tools/workflows: Multi-foundation translators (PA ↔ ZF/ZFC/type theory), hypergraph similarity metrics, curvature-like invariants for hypergraphs, dashboards for growth and efficiency.

- Assumptions/dependencies: Agreement on benchmark corpora and metrics; tractable cross-system translations (often nontrivial and possibly non-recursive in higher arithmetics); sustained community effort.

- Foundation-independent “geometry of mathematics”

- Sectors: academia (math, CS theory), AI research

- What: Develop hypergraph analogs of geometric/combinatorial notions (e.g., Gromov-style curvature), scaling limits, and coarse-equivalence tools; seek universality classes across logical foundations.

- Tools/workflows: Hypergraph geometric toolkits, manifold-like approximations, large-scale graph limit computations, sector-wide visualization platforms.

- Assumptions/dependencies: New theory and algorithms; large-scale formalization; consensus on measures; compute at scale.

- Domain-general formal reasoning in law, policy, compliance

- Sectors: legal, public policy, finance

- What: Formalize regulations/contracts in structural hypergraphs; automate compliance auditing via proof objects; canonicalize contractual expressions; compute decision consistency.

- Tools/workflows: Legal type systems, equivalence and quotient modeling for clauses, compliance proof generators, audit dashboards.

- Assumptions/dependencies: Standardization of legal semantics; institutional willingness to adopt formal proofs; handling ambiguity and interpretation.

- Healthcare decision support with formal guarantees

- Sectors: healthcare, biomedical research

- What: Formalize clinical guidelines and causal models; use proof objects to ensure consistency and safety; detect contradictions and gaps; canonicalize data transformations.

- Tools/workflows: Medical guideline type systems, equivalence classes for treatments/outcomes, formal safety proofs, explainable recommendation engines.

- Assumptions/dependencies: High-quality formalizations; interoperability with EHRs; rigorous validation; regulatory approval.

- Proof-centric engineering design synthesis

- Sectors: robotics, aerospace, energy systems, automotive

- What: Generate designs along with proofs of safety, performance, and compliance; automate abstraction of reusable modules; verify system-level properties compositionally via structural hypergraphs.

- Tools/workflows: Compositional proof pipelines, module libraries with proof objects, automated congruence checks for design equivalence, canonicalization of constraints.

- Assumptions/dependencies: Formal models of physical systems; component marketplaces with proofs; scalable compositional verification.

- Next-generation programming languages with embedded proof objects

- Sectors: software engineering, formal methods

- What: Type-theory-first languages making proofs first-class; automatic lambda abstraction and function synthesis; integrated canonicalization for deterministic semantics.

- Tools/workflows: IDEs that surface proof objects, proof-aware compilers, structural hypergraph libraries for codebases, integrated abstraction-taste models to refactor APIs.

- Assumptions/dependencies: Developer adoption; performance parity; robust tooling; education for teams.

- IP, credit, and publication ecosystems for AI-discovered results

- Sectors: academia, publishing, IP law

- What: Standards for attributing discoveries, certifying proofs, managing novelty in proof graphs, and curating research programs initiated by AMD agents.

- Tools/workflows: Proof registries, novelty thresholds using m(s), reproducibility services, multi-party validation networks.

- Assumptions/dependencies: Policy frameworks; interoperable proof formats; incentives for adoption.

- Cross-disciplinary scientific discovery

- Sectors: physics, materials, biology, economics

- What: Agents explore structural hypergraphs of theories/models; propose new mathematical structures and mappings; compress complex derivations; expose re-usable abstractions to humans.

- Tools/workflows: Unified formalization layers across domains, cross-domain abstraction discovery, proof compression services, human-in-the-loop curation.

- Assumptions/dependencies: Deep formalization of scientific models; robust translation between informal and formal statements; significant compute.

Common assumptions and dependencies across applications

- Formalization depth: Success depends on how completely domains can be represented in dependent type theory (or compatible formalisms).

- Compute and scalability: Hypergraphs grow doubly exponentially in naive expansions; practical implementations need canonicalization, abstraction, and prioritization heuristics.

- Standards and interoperability: Shared proof-object formats, cross-foundation translators, and governance for validation and reproducibility.

- Human-AI collaboration: Taste judgments, research-program design, and ethical oversight remain essential, especially for closed-loop discovery agents.

- Correctness guarantees: Strong normalization and definitional equality choices must be carefully engineered to avoid subtle errors (e.g., variable capture, non-termination).

- Regulatory acceptance: In healthcare, law, and finance, formal verification practices must align with compliance frameworks and be interpretable.

Glossary

- absoluteness: A property indicating that certain statements have the same truth value across different foundational systems or that provability transfers between them. "This question touches on absoluteness and on the efficiency of rewriting results in one system to another, which can vary from polynomial to non-recursive."

- acyclic hypergraph: A hypergraph with no directed cycles, used here to model deduction structures where inferences don’t loop back. "A formal mathematical system determines the structure of a directed, ordered, colored, acyclic hypergraph (henceforth ``hypergraph'')."

- canonicalization: The process of transforming expressions into a standard or normal form to simplify equivalence and computation. "One way to represent canonicalization is by assigning a ``rank'' or ``energy'' to statements, and always following hyperedges which reduce the rank."

- coarse geometry: A field studying large-scale geometric properties that are invariant under coarse (large-scale) equivalences, invoked as an analogy for comparing proof structures. "Our use of the word \"coarse\" is a nod to the subject of coarse geometry \cite{wikipedia_coarse_structure} a concept well explored by M. Gromov and his school."

- colimit: A categorical construction describing a universal object that coherently “glues together” a directed system; here, it captures building an infinite structure from finite stages. "Our universal hypergraph is then the colimit of as ."

- Curry-Howard correspondence: The isomorphism between propositions and types and between proofs and programs in type theory. "This is the Curry-Howard correspondence."

- definitional equality: An equality decided by the syntactic rules of reduction/normalization (by definition), not requiring an explicit proof. "Another way, called definitional equality, is to mark the hyperedges with this information and prefer one side of a construction to the other."

- dependent type theory: A type-theoretic foundation where types can depend on values, enabling expressive definitions like vectors of length n. "we follow dependent type theory as used in the Coq/Rocq and Lean theorem proving languages, in the version given in Chapter 1 of \cite{aczel2013homotopy}."

- directed hyperedge: A generalized edge connecting multiple inputs to multiple outputs in a directed manner, representing inference steps. "A directed hyperedge of type (sometimes called a directed hyperarc) within the hypergraph is a list of vertices, divided into ``input'' vertices and ``output'' vertices, the deductive consequences of the input."

- Finsler generalization: A generalization of Riemannian geometry allowing norms that need not come from inner products. "The richest expression of geometry is Riemannian geometry (or possibly its Finsler generalization)."

- first order logic: A logical system allowing quantification over individuals but not over predicates or functions. "a concrete example to keep in mind is first order logic and Peano arithmetic (PA)."

- Goodstein's theorem: A statement about sequences of natural numbers that is true but unprovable in Peano Arithmetic, illustrating independence phenomena. "For example, there are well-known theorems of set theory (ZF) like Goodstein's theorem, which cannot be proven (according to the Kirby--Harrington theorem) within PA."

- homotopy type theory: A variant of type theory incorporating homotopical ideas, where equality proofs themselves have structure. "This is the starting point for homotopy type theory and related developments."

- inductive closure: The result of repeatedly applying construction rules to an initial set until no new elements are produced, yielding the minimal fixed point. "We define the inductive closure $\mbox{Cl}_{T_E}G_0$ to be the union of the ."

- Kleene fixed point theorem: A result guaranteeing the existence and uniqueness of least fixed points for certain monotone operators, used to justify inductive constructions. "It is also the least fixed point of the operator and is unique by the Kleene fixed point theorem given the finiteness assumptions."

- Kolmogorov complexity: The length of the shortest program producing a given object; used here as a comparator for proof/definition length measures. "In \S \ref{ss:efficiency} we will compare these definitions with Kolmogorov complexity."

- Modus ponens: A fundamental inference rule allowing one to derive B from A and A ⇒ B. "Another example is modus ponens: if two previous vertices are and , respectively, then we can add a hyperedge with these inputs and outputting ."

- Peirce arrow: The logical NOR operator, known to be functionally complete. "In Boolean logic, for example, NAND (Sheffer stroke) and NOR (Peirce arrow) are each universal."

- proof hypergraph: A hypergraph whose nodes represent proof objects and whose structure captures how proofs are constructed. "the graph of our earlier discussion is the ``shadow'' of a universal hypergraph of proofs $P\subset S$."

- proof objects: Explicit entities inhabiting proposition-types in type theory, representing concrete proofs. "In terms of proof objects, a proof of is simply a pair of proofs, of and of ."

- quotient type: A type formed by identifying elements of a type under an equivalence relation, yielding classes as new elements. "Given a type and an equivalence relation , we introduce a new node in representing the quotient type ."

- Riemannian geometry: The study of smooth manifolds with smoothly varying inner products on tangent spaces, cited as a canonical geometric framework. "The richest expression of geometry is Riemannian geometry (or possibly its Finsler generalization)."

- Sheffer stroke: The logical NAND operator, functionally complete by itself. "In Boolean logic, for example, NAND (Sheffer stroke) and NOR (Peirce arrow) are each universal."

- signature-axiom class: A set-theoretic way to package a structure by specifying its carrier, operations, and axioms (à la Bourbaki). "In set theoretic foundations this would be a signature-axiom class, as in Bourbaki."

- strong normalization: A property ensuring that all well-typed computations (reductions) terminate. "In our specific framework (dependent type theory), we avoid this problem by enforcing strong normalization: all functions which follow the typing rules and thus are defined in will terminate."

- structural hypergraph: A hypergraph encoding the compositional structure of expressions (terms, types, propositions) rather than just their logical entailments. "In this way, the set of all statements becomes the set of nodes of what we will call the {\bf structural} hypergraph "

- universality classes: Groupings of systems that share the same large-scale (coarse) behavior despite microscopic differences. "Are there just a few universality classes of mathematical systems in terms of the \"coarse geometry\" of their proof hypergraphs?"

- universal hypergraph: The hypergraph containing all provable statements (or proofs) within a given formal system. "The vertices (nodes) of the universal hypergraph are the subset of all provable propositions in ."

- variable capture: An unintended binding of a variable during substitution, causing semantic errors in formal manipulation. "Then there is a danger of misinterpretation (variable capture)."

- Zermelo-Fraenkel (ZF) set theory: A foundational system for mathematics based on axioms about sets, used here to discuss truth and provability across systems. "there is an accepted simplest model within Zermelo-Fraenkel (ZF) set theory"

- ZFC: Zermelo-Fraenkel set theory with the Axiom of Choice, often a stronger framework for proving statements than ZF alone. "Any statement in (first order) PA which is provable in ZFC is also provable in ZF,"
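
A few of the entries above (proof objects, modus ponens, the Curry-Howard correspondence, definitional equality) can be illustrated together in Lean 4. This is a minimal sketch written for this summary, not code taken from the paper.

```lean
-- Under Curry-Howard, a proposition is a type and a proof is a term of that type.

-- Proof objects: a proof of A ∧ B is a pair, a proof of A together with a proof of B.
example (A B : Prop) (ha : A) (hb : B) : A ∧ B := ⟨ha, hb⟩

-- Modus ponens is function application: a proof of A → B applied to a proof of A.
example (A B : Prop) (ha : A) (hab : A → B) : B := hab ha

-- Definitional equality: 2 + 2 reduces to 4 by computation, so `rfl` suffices.
example : 2 + 2 = 4 := rfl
```

In each case the term after `:=` is the proof object, which is the kind of entity the structural hypergraph treats as a node in its own right.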
