- The paper surveys AI for Mathematics (AI4Math), covering both problem-specific and general-purpose models that enhance mathematical discovery.

- It demonstrates progress in ML-guided intuition, reinforcement learning-based example generation, and hybrid neuro-symbolic formal reasoning.

- It outlines challenges such as formal data scarcity, semantic fidelity in autoformalization, and the gap between exam-level and research-level reasoning.

AI for Mathematics: Progress, Challenges, and Prospects

Overview and Motivation

The integration of artificial intelligence, particularly modern machine learning, with mathematical research has led to the emergence of AI for Mathematics (AI4Math) as a vibrant interdisciplinary field. AI4Math is characterized both by the application of ML and deep learning methods to mathematical discovery, reasoning, and formalization, and by the use of mathematics as a stringent testbed for developing general-purpose reasoning capabilities in AI. The field encompasses two primary modeling strategies: problem-specific modeling, which creates bespoke architectures for targeted mathematical domains, and general-purpose modeling, which develops foundation models and agents equipped for broad, rigorous mathematical workflows. This essay synthesizes the key advances, limitations, and prospects in AI4Math as reviewed in "AI for Mathematics: Progress, Challenges, and Prospects" (2601.13209).

Problem-Specific Modeling

Recent progress in problem-specific modeling leverages ML for three main goals: guiding mathematical intuition, constructing examples/counterexamples, and performing formal reasoning in well-defined domains.

Machine Learning-Guided Mathematical Intuition

ML models have substantially accelerated the conjecture-generation loop in mathematics by revealing hidden patterns in high-dimensional mathematical data that guide expert intuition. Frameworks established by Davies et al. and contemporaries operationalized a cycle in which structure learning and attribution analyses yield refined conjectures, which are then either proved or iteratively improved by humans and models jointly [davies2021advancing, dong2024machine]. Notably, such AI-driven intuition has resulted in rigorous discoveries, such as new relationships between algebraic and geometric invariants in knot theory and lower-bound theorems in arithmetic geometry.
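The fit-then-conjecture cycle can be illustrated on a toy scale. The sketch below is not the pipeline of the cited works: an exact linear least-squares fit stands in for the learned model, and reading off the fitted coefficients stands in for attribution analysis. The data are vertex, edge, and face counts of seven familiar convex polyhedra; the names `POLYHEDRA` and `fit_linear` are illustrative.

```python
from fractions import Fraction

# (vertices, edges, faces) for seven well-known convex polyhedra.
POLYHEDRA = {
    "tetrahedron": (4, 6, 4),
    "cube": (8, 12, 6),
    "octahedron": (6, 12, 8),
    "dodecahedron": (20, 30, 12),
    "icosahedron": (12, 30, 20),
    "triangular prism": (6, 9, 5),
    "square pyramid": (5, 8, 5),
}

def fit_linear(rows, targets):
    """Exact least-squares fit of targets ~ rows @ w via the normal equations."""
    n = len(rows[0])
    # Augmented matrix [X^T X | X^T y], kept in exact rational arithmetic.
    aug = [
        [Fraction(sum(r[i] * r[j] for r in rows)) for j in range(n)]
        + [Fraction(sum(r[i] * t for r, t in zip(rows, targets)))]
        for i in range(n)
    ]
    # Gauss-Jordan elimination; X^T X is positive definite for this data,
    # so every pivot is nonzero and no row swaps are needed.
    for col in range(n):
        pivot = aug[col][col]
        aug[col] = [v / pivot for v in aug[col]]
        for row in range(n):
            if row != col:
                factor = aug[row][col]
                aug[row] = [v - factor * p for v, p in zip(aug[row], aug[col])]
    return [aug[i][n] for i in range(n)]

# Step 1: fit faces as a linear function of (vertices, edges, 1).
rows = [(v, e, 1) for v, e, _ in POLYHEDRA.values()]
faces = [f for _, _, f in POLYHEDRA.values()]
weights = fit_linear(rows, faces)

# Step 2: the coefficients suggest the conjecture F = E - V + 2,
# which is then checked against every example before attempting a proof.
conjecture_holds = all(f == e - v + 2 for v, e, f in POLYHEDRA.values())
```

Here the fitted coefficients are exactly -1, 1, and 2, recovering Euler's polyhedron formula as a candidate statement. In the research setting the relationship is nonlinear and high-dimensional, so the final step, a human proof, is where the rigor comes from.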

Constructive Approaches via RL and Data-Driven Methods

RL-based approaches and search techniques have successfully generated explicit examples and counterexamples, e.g., for graph-theoretic conjectures and singularity resolution, often surpassing human-derived or classical algorithmic constructions [wagner2021constructions, berczi2023ml, charton2024patternboost]. These models encode mathematical objects as sequences amenable to modern policy optimization or transformer prediction, enabling automated discovery of objects that violate long-standing conjectures or illustrate unanticipated phenomena.
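A minimal sketch of the sequence-encoding idea, under stated assumptions: a graph on six vertices is encoded as a bit string over its 15 possible edges, and a tabular cross-entropy method (one simple policy-search technique, used here in place of the neural policies of the cited works) searches for a triangle-free graph with many edges. The scoring rule and all hyperparameters are illustrative choices.

```python
import itertools
import random

N = 6
EDGES = list(itertools.combinations(range(N), 2))  # 15 possible edges

def score(bits):
    """Edge count for triangle-free graphs; negative triangle count otherwise."""
    present = {e for e, b in zip(EDGES, bits) if b}
    triangles = sum(
        1
        for a, b, c in itertools.combinations(range(N), 3)
        if (a, b) in present and (a, c) in present and (b, c) in present
    )
    return len(present) if triangles == 0 else -triangles

def cross_entropy_search(rounds=40, population=200, elite=20, seed=0):
    rng = random.Random(seed)
    probs = [0.5] * len(EDGES)  # independent Bernoulli "policy", one per edge
    best_bits, best_score = None, float("-inf")
    for _ in range(rounds):
        samples = [[int(rng.random() < p) for p in probs] for _ in range(population)]
        samples.sort(key=score, reverse=True)
        top = samples[:elite]
        if score(top[0]) > best_score:
            best_bits, best_score = top[0], score(top[0])
        # Move each edge probability toward its frequency among the elite,
        # clipped away from 0 and 1 so exploration never stops entirely.
        for i in range(len(probs)):
            freq = sum(s[i] for s in top) / elite
            probs[i] = min(0.95, max(0.05, 0.7 * freq + 0.3 * probs[i]))
    return best_bits, best_score

best_bits, best_score = cross_entropy_search()
```

By Mantel's theorem no triangle-free graph on six vertices has more than 9 edges, so `best_score` can never exceed 9. To hunt for a counterexample instead, `score` would be replaced by the amount by which a candidate violates the conjectured inequality.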

In closed domains such as Euclidean geometry, state-of-the-art systems such as AlphaGeometry and its successor AlphaGeometry2 combine deductive symbolic engines with LLM-based proposal modules for auxiliary constructions, achieving performance at or above the gold-medal level of the International Mathematical Olympiad (IMO) [trinh2024solving, chervonyi2025gold]. Purely heuristic approaches, as in HAGeo, achieve similar results through systematic auxiliary construction rules. These advances reflect the fruitfulness of hybridizing neural and symbolic reasoning tailored to the structure of the mathematical domain.
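The division of labor between a symbolic engine and a proposal module can be sketched as follows. This is a toy illustration, not the architecture of any cited system: facts are plain strings, the deductive engine is forward chaining over Horn rules, and `scripted_proposer` is a hypothetical stand-in for a learned model that suggests auxiliary constructions.

```python
def deductive_closure(facts, rules):
    """Forward-chain Horn rules (premises -> conclusion) to a fixed point."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if conclusion not in known and premises <= known:
                known.add(conclusion)
                changed = True
    return known

def prove(goal, facts, rules, propose, max_constructions=3):
    """Alternate symbolic deduction with proposed auxiliary constructions."""
    known = deductive_closure(facts, rules)
    added = []
    while goal not in known and len(added) < max_constructions:
        aux = propose(known, goal)
        if aux is None:  # the proposer has nothing left to suggest
            break
        added.append(aux)
        known = deductive_closure(known | {aux}, rules)
    return goal in known, added

# Toy problem: in triangle ABC with AB = AC, show the base angles are equal.
RULES = [
    (frozenset({"AB = AC", "M is the midpoint of BC"}),
     "triangles ABM and ACM are congruent"),
    (frozenset({"triangles ABM and ACM are congruent"}),
     "angle ABC = angle ACB"),
]

def scripted_proposer(known, goal):
    # Hypothetical stand-in for a learned proposal module.
    for candidate in ["M is the midpoint of BC"]:
        if candidate not in known:
            return candidate
    return None

proved, constructions = prove(
    "angle ABC = angle ACB", {"AB = AC"}, RULES, scripted_proposer
)
stuck, _ = prove("angle ABC = angle ACB", {"AB = AC"}, RULES, lambda k, g: None)
```

Without the auxiliary midpoint the deductive engine alone stalls (`stuck` is `False`), while a single proposed construction lets it reach the goal. Every step that enters the final proof is still derived symbolically, so a poor proposal costs search time without affecting soundness.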

General-Purpose Modeling

General-purpose modeling in AI4Math introduces foundation models—primarily LLMs—that serve as general operators across a suite of mathematical reasoning tasks.

Foundation Models and LLM Scaling

Modern LLMs distinguish themselves from classical ML by learning operators over task distributions, enabling cross-domain task transfer, long-context coherence, and emergent reasoning capabilities. Tokenization and next-token prediction objectives provide a unifying framework for modeling mathematical reasoning as coherent, step-wise text generation.
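For reference, the standard next-token prediction objective can be written as follows, where the symbols are notation introduced here rather than taken from the paper: $x_1, \dots, x_T$ is a tokenized sequence (for example, a problem followed by its step-wise solution) and $p_\theta$ is the model's conditional distribution over the next token.

```latex
\mathcal{L}(\theta) = -\sum_{t=1}^{T} \log p_\theta\left(x_t \mid x_{<t}\right)
```

Minimizing this loss rewards assigning high probability to each observed token given its prefix, which is why a derivation is modeled as step-wise text generation rather than as a separately verified object.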

Despite strong performance in undergraduate- and some graduate-level mathematics, a gap persists between current LLMs and genuine research-level mathematical reasoning, due to the stochastic nature of next-token generation and the long-tail distribution of advanced mathematical knowledge.

Empirical Assessment of LLM Mathematical Abilities

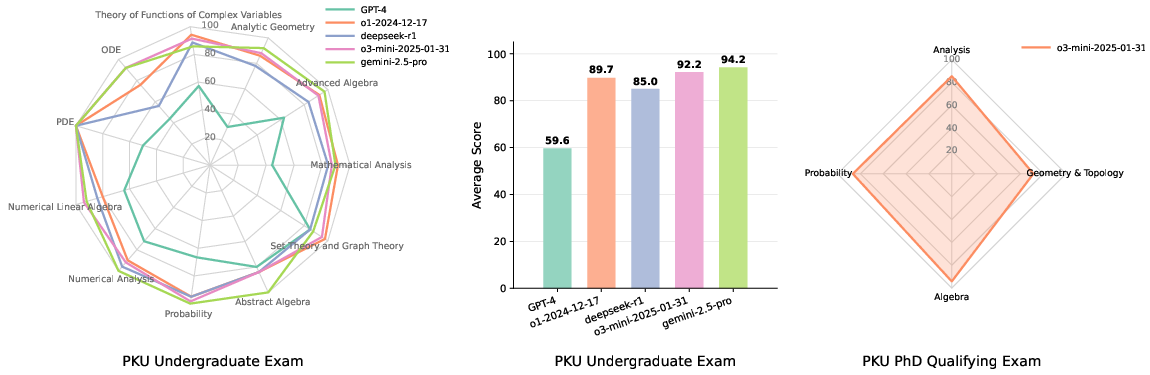

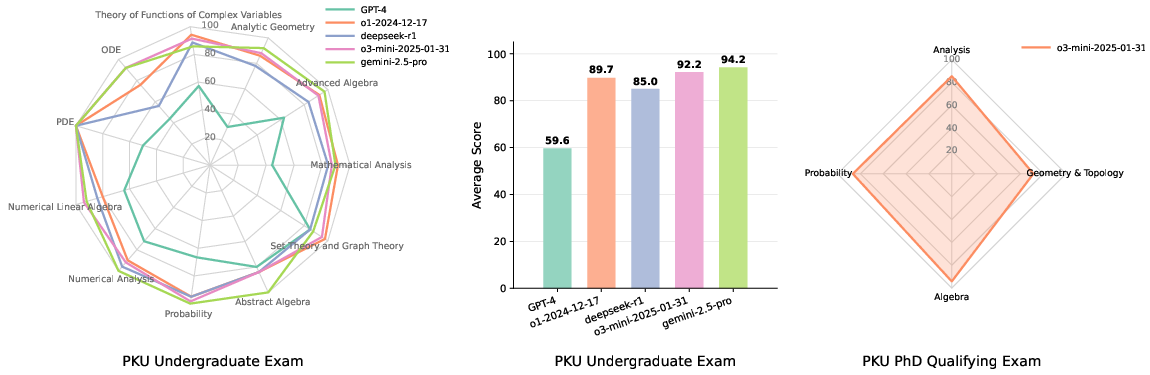

Extensive benchmarking, summarized in Figure 1, shows top models achieving >90% normalized scores on undergraduate mathematics exams and strong results (average 84.4) on PhD qualifying exams, with algebraic domains being notably more tractable for current systems than geometric-topological ones.

Figure 1: Performance of five LLMs across PKU exams, demonstrating strong performance on undergraduate and substantial competence on PhD qualifying exams, particularly in algebra.

Nevertheless, these models do not yet replicate the open-ended explorative and rigorous capabilities needed for mathematical research, as evidenced by their lower scores on challenging research-level benchmarks.

The critical bottleneck in scaling LLM-based formal reasoning is the lack of high-quality formal data and verifiable feedback. The mathematical formalization movement, with significant milestones such as the completion of the Liquid Tensor Experiment in Lean, both verifies the correctness of results and catalyzes the development of interactive environments and libraries (e.g., mathlib4).

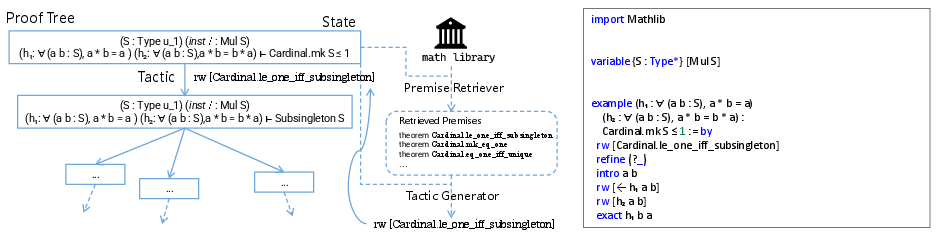

Autoformalization has undergone a paradigm shift, moving from classical seq2seq models to LLM-driven translation using few-shot prompting, synthetic data aligned via LLM-informalization, and agentic systems capable of decomposing and recursively synthesizing missing definitions [wu2022autoformalization, gao2025herald, liu2025rethinking, wang2025aria]. New evaluation metrics based on logical equivalence or structured semantic consistency further close the formalization-verification loop.
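As a small illustration of what an autoformalization system is asked to produce, the block below pairs an informal statement with one possible Lean 4 formalization. It is a hand-written sketch that assumes a recent Mathlib (for `Even` and the `omega` tactic); the theorem name is chosen for illustration and is not taken from any cited system.

```lean
import Mathlib

-- Informal statement: "The sum of two even natural numbers is even."
theorem sum_of_evens_is_even (a b : ℕ) (ha : Even a) (hb : Even b) :
    Even (a + b) := by
  obtain ⟨m, hm⟩ := ha   -- hm : a = m + m
  obtain ⟨n, hn⟩ := hb   -- hn : b = n + n
  exact ⟨m + n, by omega⟩
```

Even here the translation involves choices the informal sentence leaves open, such as the number type and the definition of evenness. The proof assistant checks the proof but cannot check that the statement matches the author's intent.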

Automated Theorem Proving (ATP)

ATP has increasingly benefited from deep learning, which has given rise to several methodologically distinct paradigms.

Mathematical information retrieval (IR) serves not only as an aid to human navigation but also as a core component of ATP and agentic workflows. Major challenges include bridging the gap between surface form and deep semantic matching for theorems, formulas, and structured proof objects, especially given the combinatorics of mathematical equivalence and the scale of formal libraries.

Recent tools (LeanSearch, LeanExplore, hybrid neural-symbolic retrieval strategies) offer multimodal and cross-representation matching, improving both the retrieval of relevant premises for ATP and user-level search productivity [gao-etal-2024-semantic-search, asher2025leanexplore].
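The surface-form problem is easy to reproduce with a baseline retriever. The sketch below ranks lemmas by bag-of-words cosine similarity; it is not how the cited tools work. The lemma names exist in Lean's core library, but the one-line informal descriptions in `CORPUS` are written here for illustration.

```python
import math
import re
from collections import Counter

# Illustrative mini-corpus: lemma names paired with hand-written descriptions.
CORPUS = {
    "Nat.add_comm": "addition of natural numbers is commutative",
    "Nat.mul_comm": "multiplication of natural numbers is commutative",
    "Nat.le_antisymm": "two natural numbers that bound each other are equal",
}

def embed(text):
    """Bag-of-words vector: lowercase word counts."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(u, v):
    dot = sum(u[w] * v[w] for w in u if w in v)
    norm = math.sqrt(sum(c * c for c in u.values())) * math.sqrt(
        sum(c * c for c in v.values())
    )
    return dot / norm if norm else 0.0

def search(query, corpus, k=2):
    """Return the k lemma names whose descriptions best match the query."""
    q = embed(query)
    ranked = sorted(
        corpus, key=lambda name: cosine(q, embed(corpus[name])), reverse=True
    )
    return ranked[:k]

results = search("commutative multiplication of naturals", CORPUS)
miss = cosine(embed("naturals"), embed(CORPUS["Nat.le_antisymm"]))
```

The query retrieves `Nat.mul_comm` first, but the word "naturals" contributes nothing because it never matches "natural" on the surface (`miss` is `0.0`). Learned embeddings and cross-representation matching aim to close exactly this kind of gap.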

LLM-Based Agents for Discovery

LLM-based agentic approaches (notably FunSearch, AlphaEvolve, and open analogues) demonstrate utility for constructive mathematical discovery, especially when the search space is quantifiable via evaluable code. These agents iteratively improve candidate constructions, achieving new records in extremal combinatorics and similar fields [romera2024mathematical, novikov2025alphaevolve].
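The evaluate-and-improve loop can be sketched in a few lines. This is a simplification and not the method of the cited systems: random mutation stands in for an LLM proposing new candidates, and the search acts on constructions directly rather than on programs that generate them. The target problem, finding a large subset of {0, ..., n-1} with no three-term arithmetic progression, is chosen because its score is trivially computable.

```python
import random

def evaluate(candidate):
    """Score = set size if it has no 3-term arithmetic progression, else -1."""
    s = set(candidate)
    for a in s:
        for b in s:
            if a < b and (2 * b - a) in s:
                return -1
    return len(s)

def mutate(candidate, n, rng):
    """Toggle membership of one random element (stand-in for an LLM proposal)."""
    child = set(candidate)
    child.symmetric_difference_update({rng.randrange(n)})
    return frozenset(child)

def evolve(n=20, generations=2000, pool_size=10, seed=0):
    rng = random.Random(seed)
    pool = [frozenset()]  # start from the empty (trivially valid) construction
    for _ in range(generations):
        parent = rng.choice(pool)
        child = mutate(parent, n, rng)
        if evaluate(child) >= 0:  # keep only candidates the evaluator accepts
            pool.append(child)
            pool = sorted(set(pool), key=evaluate, reverse=True)[:pool_size]
    return max(pool, key=evaluate)

best = evolve()
```

The key design point is that `evaluate` is cheap and exact, so every candidate in the pool is a verified construction regardless of how unreliable the proposal step is.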

Challenges and Open Problems

Despite rapid and multi-pronged progress, several fundamental challenges remain:

- Formal Data Scarcity: The formal reasoning abilities of LLMs still lag behind their natural-language reasoning abilities, primarily due to limited high-quality, verifiable annotated data.

- Semantic Fidelity in Autoformalization: Ensuring that formalizations accurately capture the full semantic intent of original informal statements is an unresolved challenge, necessitating improved evaluation and grounding.

- Research-Level Reasoning: Transitioning from exam-solving proficiency to open-ended, research-level reasoning and discovery requires new workflow designs, robust agentic routines, and active integration with mathematical expertise and community infrastructure.

- Tooling and Cultural Shift: The transition to AI-assisted mathematical research will only be realized with accessible and robust tools, as well as a shift in mindset regarding the use of generative, high-variance candidate generators and human verification regimes.
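The semantic-fidelity challenge in the list above can be made concrete with a well-known pitfall, shown here as a hand-written sketch assuming a recent Mathlib: a formalization can type-check and even be provable while stating something different from the informal claim. The informal identity "(a - b) + b = a" holds over the integers, but subtraction on Lean's natural numbers truncates at zero.

```lean
import Mathlib

-- Faithful formalization over ℤ: the identity holds for all a and b.
example (a b : ℤ) : (a - b) + b = a := by omega

-- Over ℕ, subtraction truncates at 0, so the same-looking statement
-- fails in general: with a = 2 and b = 3 the left-hand side is 3, not 2.
example : (2 - 3 : ℕ) + 3 = 3 := by decide
```

A translation that silently picks ℕ would therefore change the meaning of the statement, which is why evaluation based on logical equivalence or semantic consistency matters more than successful compilation.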

Implications and Prospects

Practical implications include the integration of ATP and autoformalization tools into mathematicians’ workflows, significantly increasing discovery pace and reducing verification overhead. Theoretically, mathematics stands as the leading testbed for general reasoning in AI, offering uniquely rigorous training and feedback loops. The ongoing expansion of agentic architectures opens up the possibility of AI systems not just verifying but contributing nontrivially to core mathematical research, provided the gap in formal data and semantic evaluation is addressed.

Longer term, as LLMs become increasingly sophisticated, the distinction between computational assistant and research collaborator will blur. This will amplify the focus on not merely achieving formal correctness, but developing models and agents that can suggest new techniques, analogies, and avenues of research—functions that presently define mathematical insight and creativity.

Conclusion

AI for Mathematics has evolved rapidly from niche symbolic approaches to a field characterized by hybrid neuro-symbolic architectures, foundation models, and agentic systems equipped for rigorous discovery and verification. Despite outstanding challenges in formal data scaling, semantic evaluation, and agent orchestration, the trajectory is clear: the rigorous, hierarchical, and formalizable nature of mathematics makes it an ideal domain for advancing the frontiers of general AI reasoning. Meaningful integration into research-level mathematics will require not only algorithmic and architectural advances but also active and ongoing collaboration with the mathematical community.