- The paper presents a novel decoupled framework where the core AI engine is isolated from delivery interfaces, enabling integration into heterogeneous workflows.

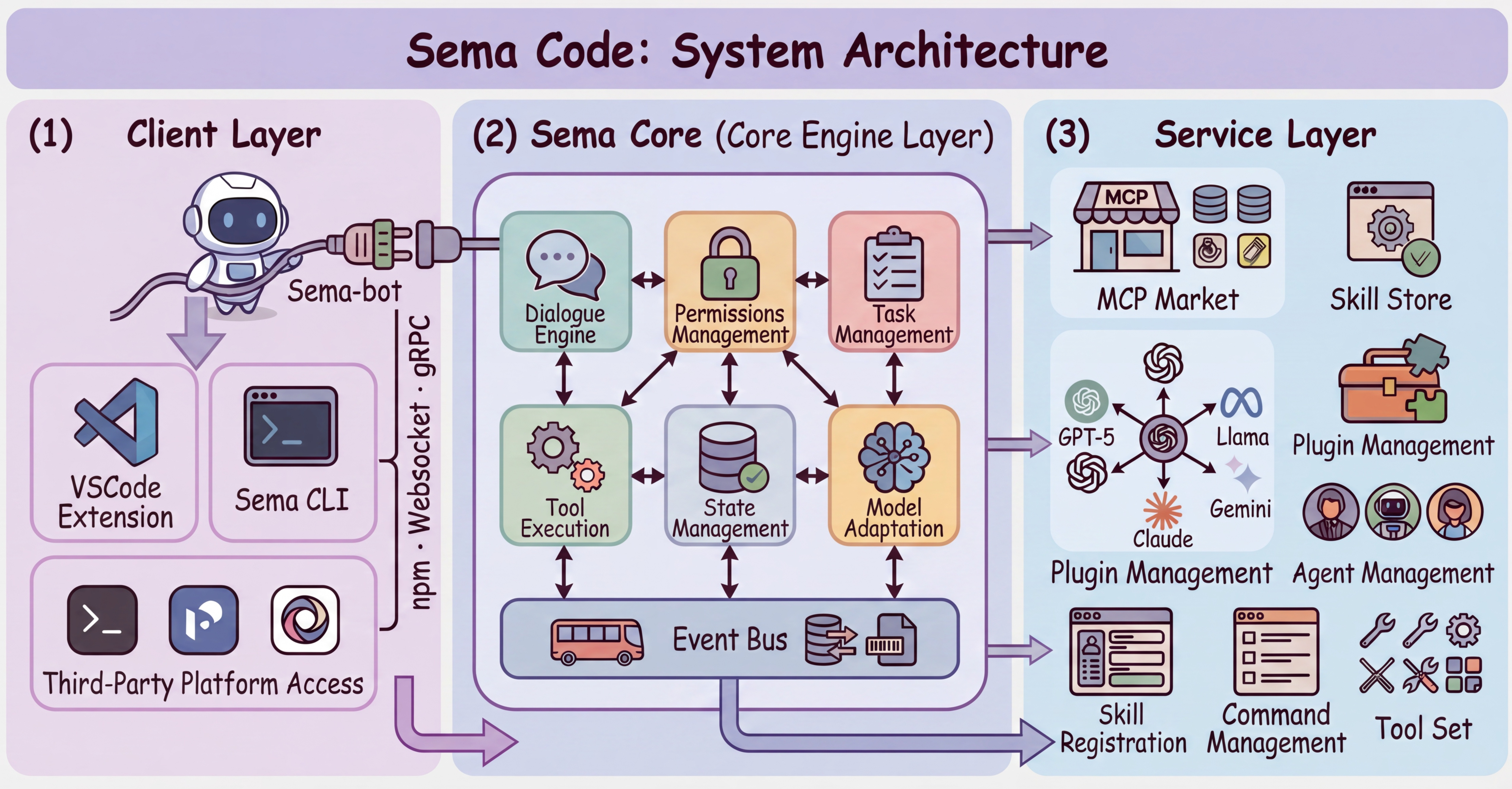

- The methodology employs a three-layer architecture with distinct client, core, and service layers, ensuring multi-tenant isolation and efficient context management.

- Key results include robust event-driven APIs, background task handling, and secure multi-model adaptation, paving the way for flexible, production-grade AI code assistants.

Sema Code: A Framework for Decoupled, Programmable AI Coding Agent Infrastructure

Motivation and Context

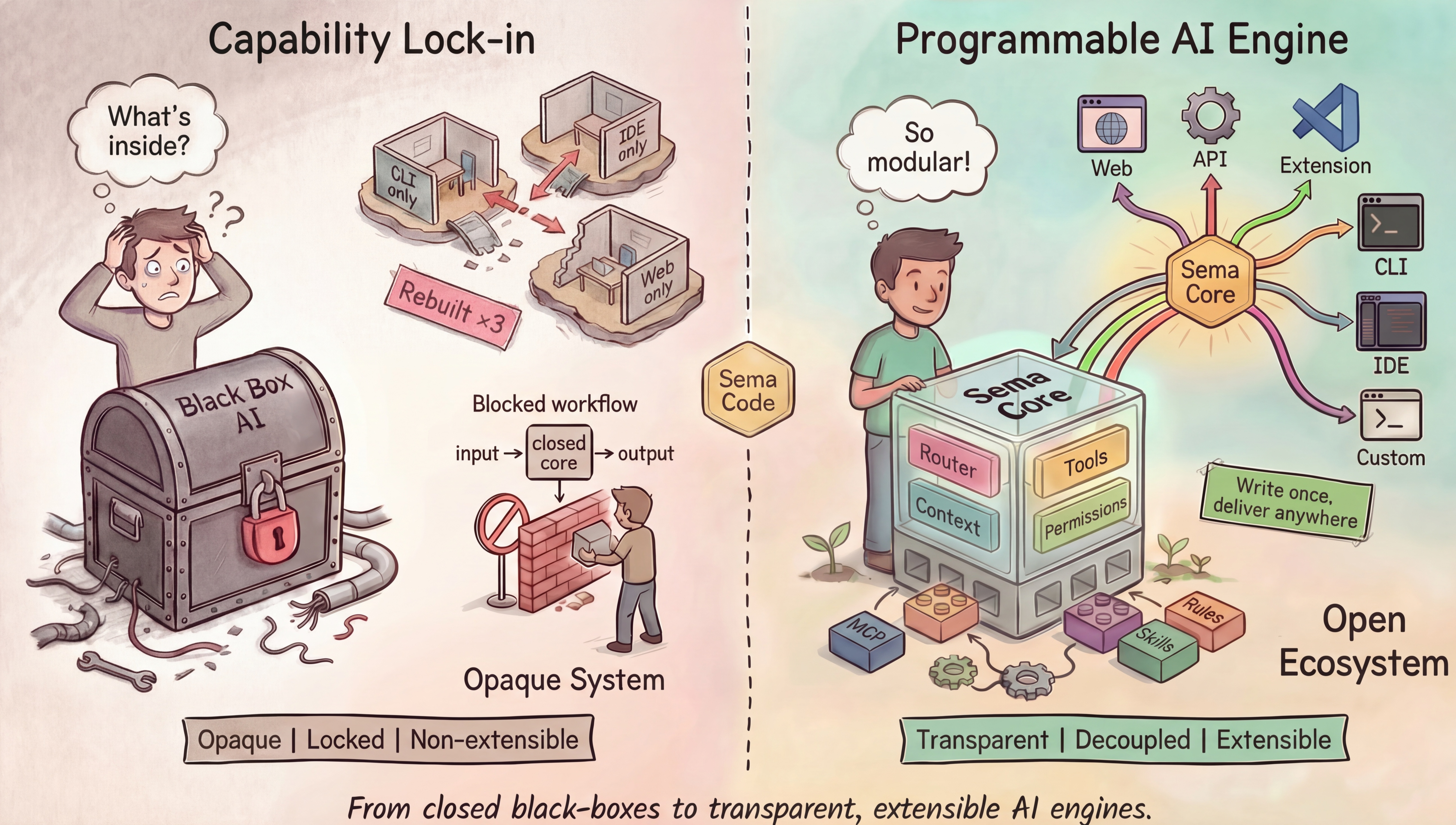

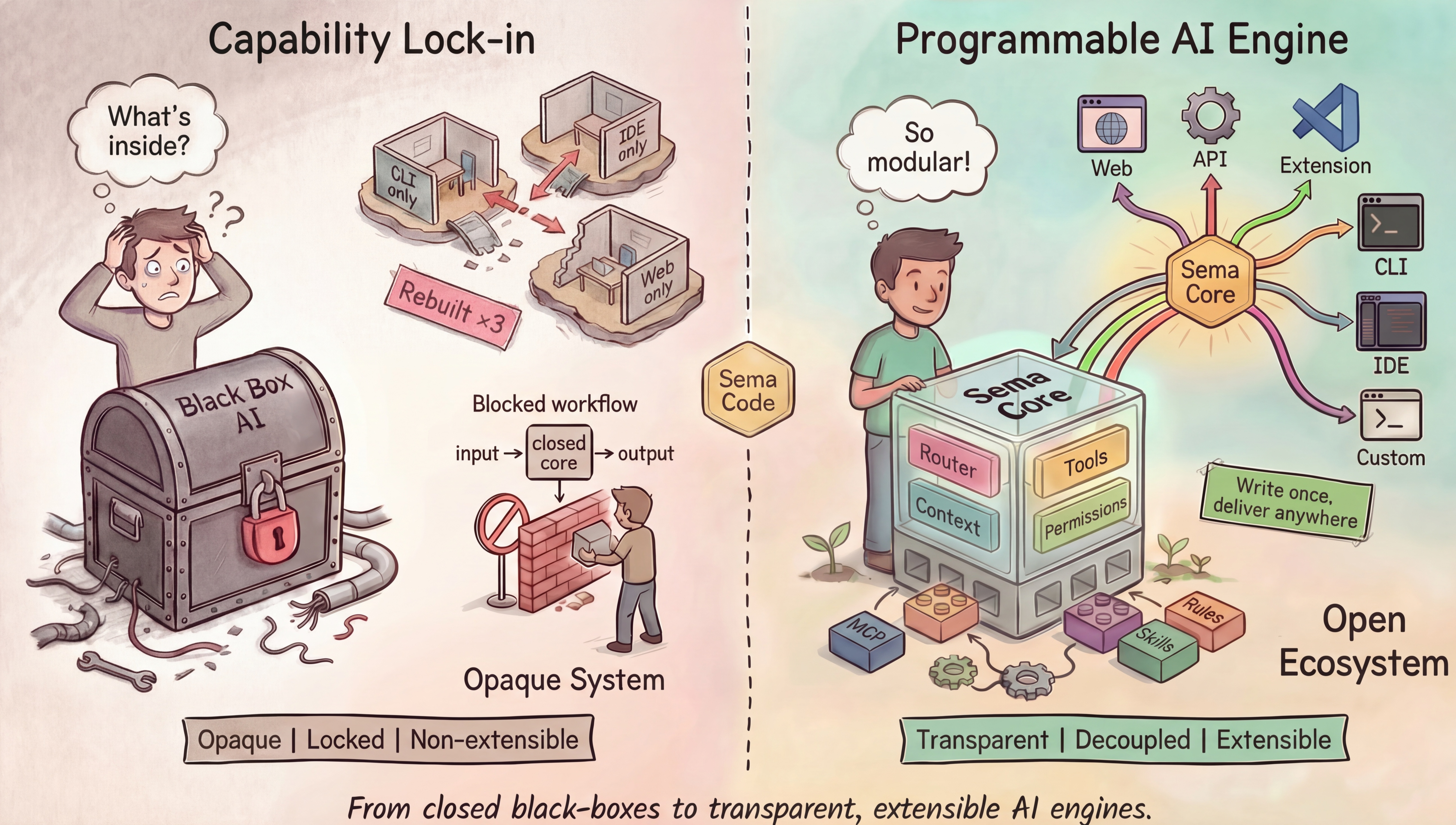

Current AI coding agents and assistants, such as Copilot, Claude Code, and Cursor, are architecturally monolithic, with tightly coupled frontends and core reasoning engines. This coupling yields significant limitations for enterprises seeking to integrate agentic code intelligence into heterogeneous workflows, both for backend automation and for cross-platform delivery. Mainstream systems either lock the agent into a CLI, IDE, or web product, or, in the case of research frameworks, neglect multi-tenant safety, permission management, and cross-language API stability.

Sema Code addresses this architectural bottleneck by introducing a framework that fully decouples the core agent engine from any delivery form. The central argument is that AI agent capabilities, like database engines, should be infrastructure: embeddable, programmable, and extensible from any client environment. This claim is grounded in an explicit architectural shift away from opaque product lock-in towards reusable infrastructure.

Figure 1: Architectural comparison between traditional, monolithic AI systems and the proposed Sema Code framework, illustrating the shift from opaque capability lock-in to a transparent, decoupled, and extensible AI engine.

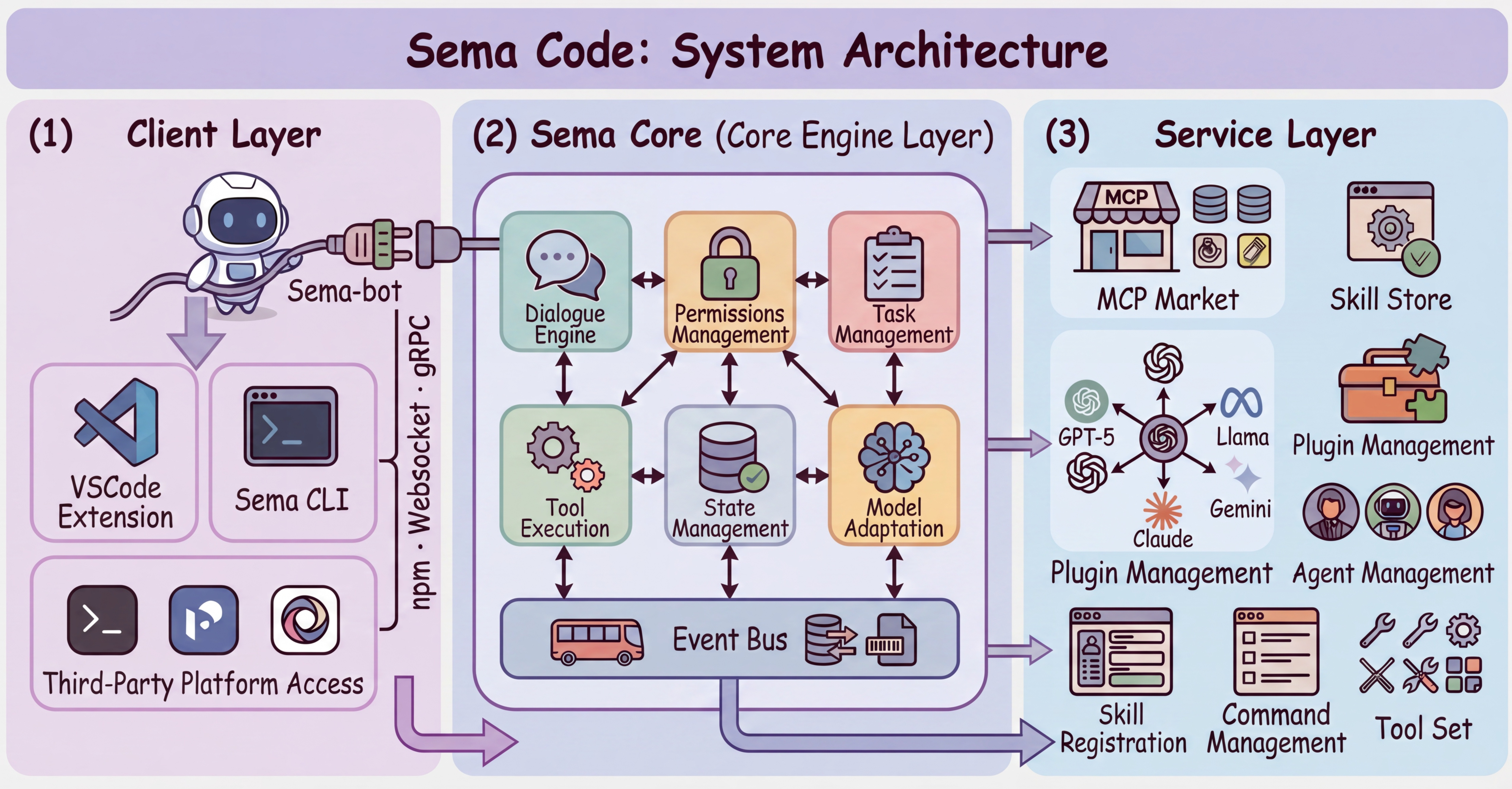

Architecture: Three-Layer Separation

Sema Code's architecture is formalized as a three-layer separation model: client, core engine, and service. The core engine contains all reasoning, tool orchestration, and state management; it is distributed as a UI-free npm package, devoid of any frontend or presentation code. Clients, such as IDE plugins or bots, interact with the core exclusively via a typed, event-driven streaming API over native JS, WebSocket, or gRPC interfaces. The design decision to eschew RPC in favor of event streams is justified by the need to expose heterogeneous, interleaved outputs (text, tool results, permission requests) with minimal coupling.

Figure 2: Sema Code three-layer architecture, with a UI-free core engine layer powering diverse client environments solely via event-driven APIs.

This explicit separation enables Sema Core to power not just a VSCode extension but also a multi-channel agent named SemaClaw (supporting platforms like Telegram and Feishu), both running the same unmodified engine binary.

Engine Mechanisms: Multi-Tenancy, Isolation, and Context Management

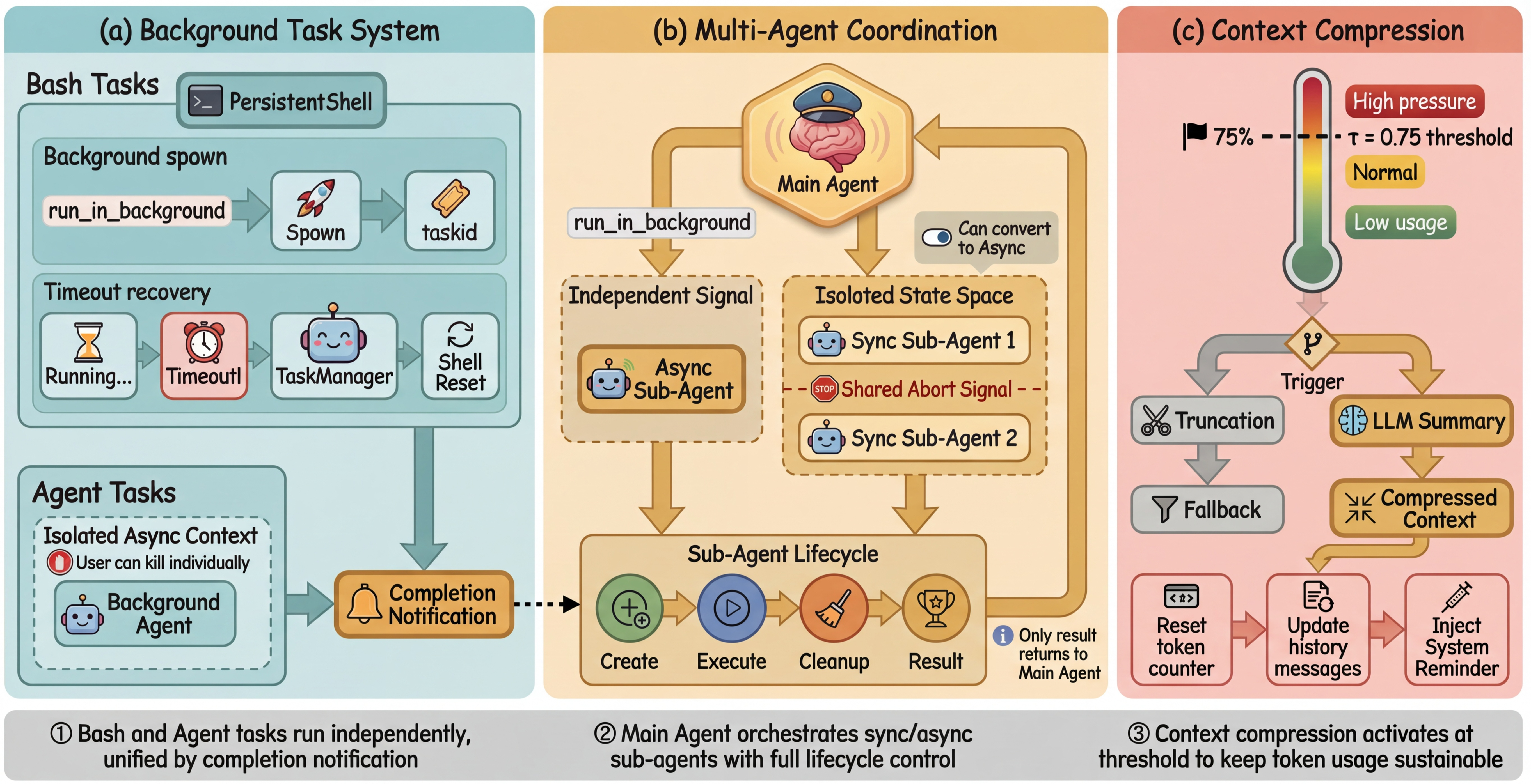

Transforming an AI code agent into a shared, embeddable system introduces significant challenges:

- Multi-Tenant State Isolation: Separation of per-user state spaces is guaranteed using Node.js AsyncLocalStorage, eliminating state bleed and cross-tenant interference without the cost and latency of process isolation. Additionally, a hierarchical state mechanism separates agent-local state (conversation, task list, file access) from session-global controls (edit permissions, global abort signals).

- FIFO Input Queuing and Session Reconstruction: To handle concurrent input and overlapping commands (e.g., bursty message traffic in bot deployments), the engine queues messages, performs semantic batching, and triggers clean session reconstruction with robust handling of mid-execution context switches.

- Adaptive Context Compression: Context window exhaustion in LLMs is mitigated via dual-path strategies. The preferred path utilizes usage metadata for O(1) monitoring and triggers LLM-driven semantic summarization, preserving critical history. A deterministic truncation fallback activates on summarization failure, always respecting message role and structure constraints.

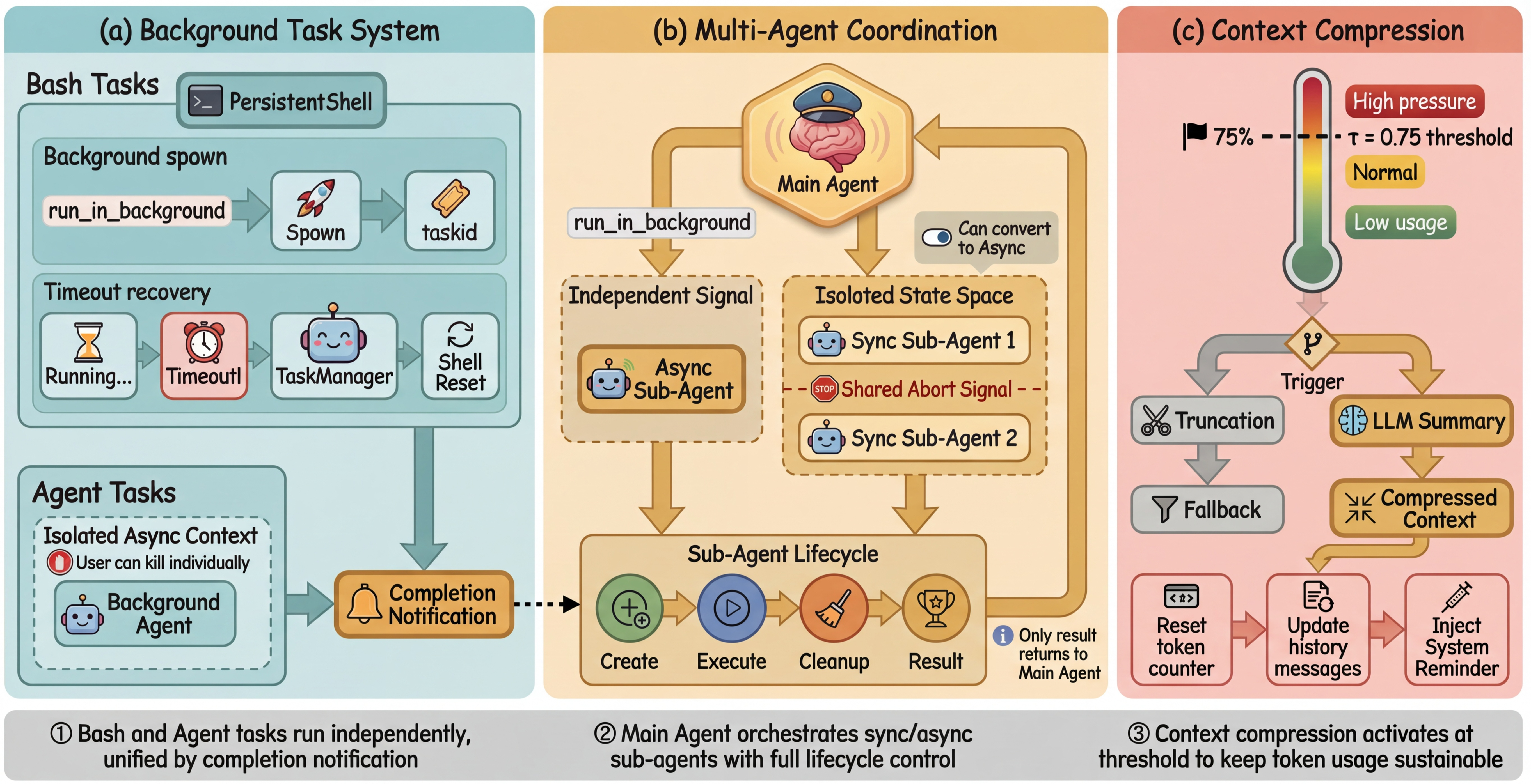

Multi-Agent Runtime and Scheduling

Sema Code's agent runtime is architected for concurrent, observable multi-agent execution:

- Multi-Agent Collaborative Scheduling: Tasks can be delegated from main agents to sub-agents, with strictly isolated state spaces and controlled lifecycle. Sub-agents lack delegation rights, bounding call depth and resource consumption.

- Interrupt Propagation: A centralized abort controller ensures coordinated and immediate termination across agent hierarchies, with fine-grained control at multiple execution phases.

- Intelligent Todo Process Management: Task lists are deterministically tracked and rendered, eliminating false progress feedback and UI flicker caused by LLM text rephrasing.

- Background Task Execution: Long-running bash or agent processes are offloaded into a background manager, decoupling execution from active dialog threads and enforcing bounded resource retention and robust recovery from overrun or timeout cases.

Figure 3: Agent runtime overview, highlighting background execution management, coordinated multi-agent scheduling, and context compression mechanisms.

Security, Permissions, and Ecosystem Integration

Security in LLM-based code agents is non-trivial, especially with autonomous shell/system command invocation. Sema Code implements:

- Four-Layer Asynchronous Permission Model: Separate policy and evaluation for file edits, shell commands, Skill invocations, and MCP service calls, each with tailored risk/authorization semantics and both static and LLM-assisted injection risk analysis. Authorization requests are issued via asynchronous event protocol, harmonized across client presentations (dialogs, messaging buttons).

- Three-Tier Marketplace Ecosystem: The architecture distinguishes among MCP services (infrastructure-level integration), Skills (agent behavior modification), and Plugins (lifecycle/workflow hooks), eschewing the one-size-fits-all plugin design. All extensions are discoverable and installable via a curated registry (ClaWHub).

- Multi-Model Adaptation Layer: Native support for Anthropic and OpenAI APIs, with normalization of proprietary features to a unified event protocol. This enables seamless dynamic switching across models such as Code Llama, DeepSeek-Coder, and commercial LLMs, maintaining API and agentic behavior invariance.

Deployment Validation and Limitations

The deployment validation is performed with two orthogonal integration forms:

- VSCode Extension: Exercises single-user, interactive workloads with intensive use of context compression and background execution. All engine logic is invoked directly through the npm package, with no CLI or subprocess isolation.

- SemaClaw Agent Platform: Implements multi-channel, multi-tenant operation and tests concurrent session management, session isolation, and asynchronous input handling. Permission prompts and user feedback paths adapt to the messaging paradigm.

Both deployments required zero modifications to the core engine source and only layered client-specific logic atop the public streaming API, verifying the architectural claim of delivery-form independence.

Limitations noted include lack of validation at very large scale (hundreds of users/processes), unaddressed issues in horizontal scaling and distributed stateful synchronization, and possible summarization errors in semantic context compression for code-dense or highly technical sessions. Cross-language streaming interfaces have not yet been formally benchmarked for throughput or adversarial failure cases.

Implications and Future Directions

Sema Code's decoupled infrastructure model directly challenges the prevailing product-locked paradigm of AI coding agents. If adopted, it would facilitate deeper integration of code intelligence into arbitrary infrastructure: from automated refactoring bots in CI/CD, to custom IDE environments, to conversational assistants for audit and compliance.

The explicit architectural emphasis on event-driven isolation, fine-grained permission, and extension via open marketplaces marks a transition in agentic frameworks from research-oriented proof-of-concept toolchains toward robust, production-grade infrastructure.

Future development is projected in several directions: vector-based fine-grained retrieval for context recall, more sophisticated multi-agent communication via state/message-passing (beyond delegation), and distributed scheduling/topology-aware scaling for cloud/cluster deployment.

Conclusion

Sema Code presents a rigorous, systematically engineered framework for embedding AI code agent capabilities as direct, decoupled infrastructure—eliminating product lock-in and enabling programmable extension across diverse delivery platforms. This approach is underpinned by formal isolation, concurrent execution, efficient context management, robust security permissioning, and open extensibility. The validation across disparate product forms affirms its feasibility and positions the architecture as a future baseline for scalable, secure agentic code intelligence in heterogeneous engineering ecosystems (2604.11045).