- The paper demonstrates that the shift from manual code generation to orchestration and verification drives significant productivity gains and system reliability.

- It highlights rigorous multi-layer verification methods and multi-agent cooperation as essential to address semantic errors and emergent risks in AI-generated code.

- The research underscores the need to revamp education, toolchains, and workflows to equip engineers for roles centered on design, oversight, and ethical accountability.

Rethinking Software Engineering for Agentic AI Systems

Introduction

The maturation and integration of large language models (LLMs) and agentic AI systems have transformed software engineering. Code generation, once the rate-limiting and value-defining activity, is now abundant and synthesized at scale, which challenges the foundational premises of process models, educational structures, and role identity within the discipline. In response, this paper redefines the core competencies and practices of software engineering around orchestration, verification, and human-AI collaboration, synthesizing contemporary studies and empirical results to articulate the nature, scope, and requirements of this transition (2604.10599).

From Scarcity to Abundance: Shifting Artifacts and Bottlenecks

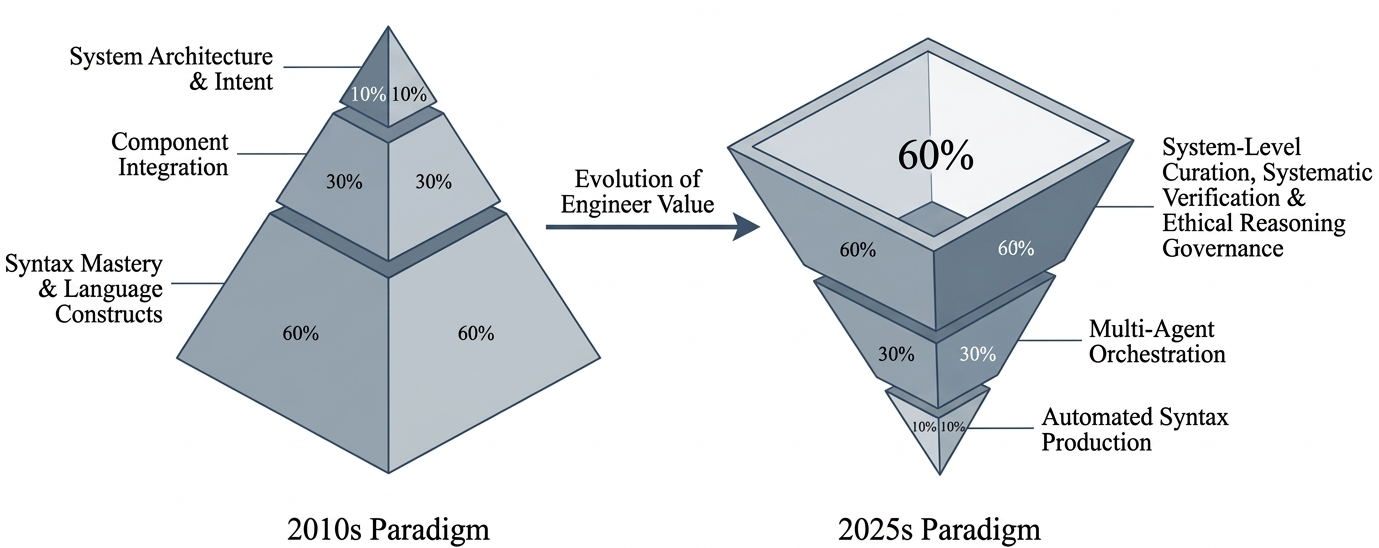

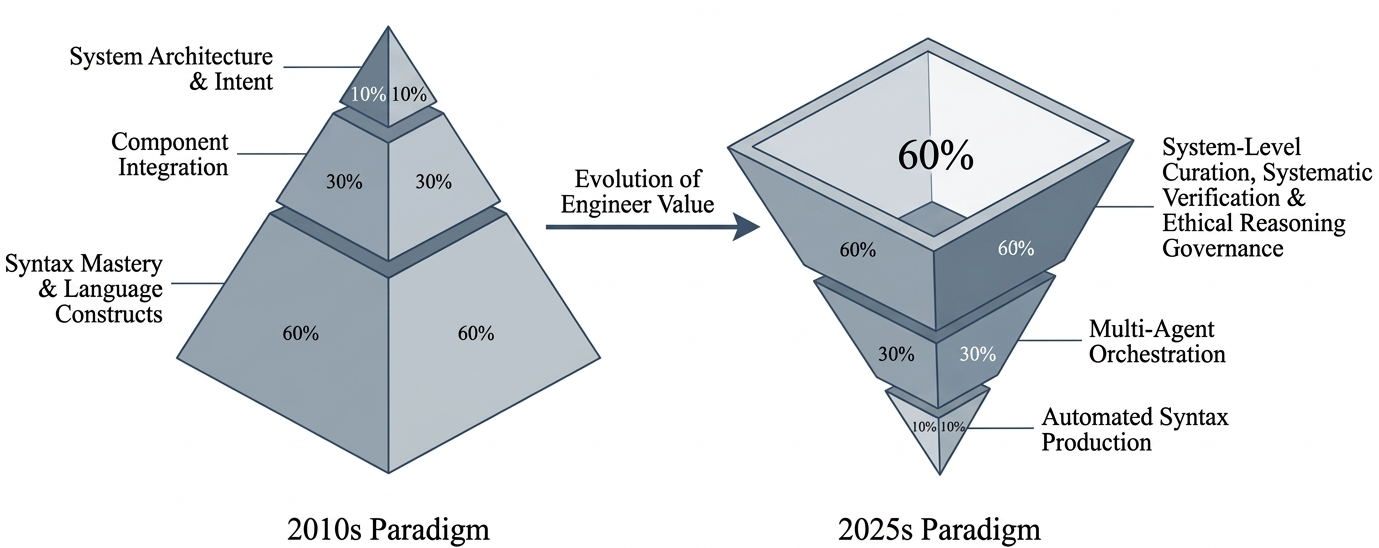

Traditionally, software engineering privileged manual code authorship, with metrics (e.g., lines of code, pull requests) aligned with incremental human labor. LLMs such as GPT-4 and Gemini, alongside specialized agentic systems, render this model obsolete: code is commoditized, and the labor of software engineering migrates from production to orchestration. The paper calls this shift the "Inversion of the Engineering Value" (Figure 1), in which software engineers are recentered from code writers to designers and verifiers of complex sociotechnical systems.

Figure 1: The Inversion of the Engineering Value.

Empirical studies confirm that AI-augmented development boosts productivity, with median reductions in task completion time of up to 30.7% observed, but the gain does not grow monotonically with code generation capability. Maintainability and system reliability increasingly depend on robust process infrastructure centered on verification and oversight rather than on raw generation (2604.10599).

Verification-First Lifecycles: The New Bottleneck

As the code generation bottleneck is eclipsed, verification emerges as the new locus of engineering challenge and value. LLM outputs are frequently syntactically plausible but semantically flawed, vulnerable to hallucination, specification drift, and alignment errors. Studies emphasize that standalone LLM code must never be deployed without rigorous multi-layer verification—including static analysis, dynamic testing, and, increasingly, formal methods.
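The layering described above can be illustrated with a minimal sketch. It is not the paper's pipeline: the function names, the deny-list, and the test cases are all assumptions made for illustration, and a formal-methods layer is omitted. The sketch runs a static layer (parsing plus a check for deny-listed calls) before a dynamic layer (executing the code against expected outputs), and rejects the artifact at the first layer that reports a finding.

```python
import ast

# Hypothetical deny-list; a real project would rely on a dedicated static analyzer.
FORBIDDEN_CALLS = {"eval", "exec", "compile", "__import__"}


def static_layer(source: str) -> list[str]:
    """Layer 1: parse the code and flag direct calls to deny-listed built-ins."""
    try:
        tree = ast.parse(source)
    except SyntaxError as err:
        return [f"syntax error: {err.msg}"]
    findings = []
    for node in ast.walk(tree):
        if (isinstance(node, ast.Call)
                and isinstance(node.func, ast.Name)
                and node.func.id in FORBIDDEN_CALLS):
            findings.append(f"forbidden call: {node.func.id}")
    return findings


def dynamic_layer(source: str, func_name: str, cases) -> list[str]:
    """Layer 2: run the code and compare results with expected values.

    Note: exec() is NOT a sandbox. Untrusted code needs real isolation.
    """
    namespace: dict = {}
    exec(source, namespace)
    func = namespace.get(func_name)
    if not callable(func):
        return [f"missing function: {func_name}"]
    findings = []
    for args, expected in cases:
        try:
            actual = func(*args)
        except Exception as err:
            findings.append(f"{func_name}{args} raised {type(err).__name__}")
            continue
        if actual != expected:
            findings.append(
                f"{func_name}{args} returned {actual!r}, expected {expected!r}"
            )
    return findings


def verify(source: str, func_name: str, cases):
    """Run the layers in order and stop at the first one that reports findings."""
    findings = static_layer(source)
    if findings:
        return False, findings
    findings = dynamic_layer(source, func_name, cases)
    return not findings, findings


# Syntactically plausible but semantically wrong: subtracts instead of adding.
generated = "def add(a, b):\n    return a - b\n"
ok, report = verify(generated, "add", [((2, 3), 5), ((0, 0), 0)])
print(ok, report)
```

The example artifact passes the static layer and fails only when executed, which is the "syntactically plausible but semantically flawed" case the paragraph describes.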

Hybrid pipelines, in which LLMs operate as assistants to traditional verification tools and are themselves subject to verification, demonstrate increased coverage and interpretability, especially in safety- and security-critical domains. For instance, LLM-driven assertion suggestion coupled with human review yields net improvements in hardware verification workflows (2604.10599). Notably, empirical work demonstrates that concordance among multiple independently configured AI agents (multi-agent cross-verification) outperforms any single model at error detection, though it also introduces new demands for orchestration and capability assessment.
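One way to picture multi-agent cross-verification is as a quorum over independent verdicts. The sketch below is an illustration under stated assumptions, not the method evaluated in the paper: `cross_verify`, the `quorum` parameter, and the three stand-in "agents" (plain string checks in place of real model calls) are hypothetical.

```python
from typing import Callable

# A verifier returns True when it considers the artifact acceptable.
Verifier = Callable[[str], bool]


def cross_verify(artifact: str, verifiers: dict[str, Verifier], quorum: float = 1.0):
    """Collect one verdict per verifier and accept only if the share of
    accepting verdicts reaches `quorum`. Dissenters are returned so that a
    human reviewer can inspect the disagreement."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    verdicts = {name: bool(check(artifact)) for name, check in verifiers.items()}
    accept_share = sum(verdicts.values()) / len(verdicts)
    dissenters = sorted(name for name, ok in verdicts.items() if not ok)
    return accept_share >= quorum, dissenters


# Stand-ins for independently configured agents (hypothetical checks).
verifiers = {
    "style_agent": lambda code: "TODO" not in code,
    "security_agent": lambda code: "eval(" not in code,
    "spec_agent": lambda code: "def parse" in code,
}

artifact = "def parse(text):\n    return eval(text)\n"
accepted, dissenters = cross_verify(artifact, verifiers)
print(accepted, dissenters)
```

With the default unanimous quorum, a single dissenting agent blocks acceptance. Lowering `quorum` trades error detection for throughput, which is one of the orchestration decisions the paragraph alludes to.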

Orchestration and Human-AI Collaboration

Agentic AI systems decentralize code generation and transform the engineer’s responsibility to strategic orchestration: decomposing tasks, specifying high-level intent, and managing the interplay of autonomous and human agents. No single agent configuration prevails universally; orchestration must be adaptive across tasks, domains, and project phases.

Studies of multi-agent communities (e.g., MOSAICO) corroborate that specialized cooperating agents coordinated by a central oversight process consistently achieve higher aggregate reliability than monolithic or uncoordinated LLM deployments. Human-AI collaboration further extends capability: when hybrid workflows are properly instantiated, performance on complex tasks increases by a factor of three over independent human or AI efforts (2604.10599).

Reconceptualizing Core Competencies

The professional profile of the software engineer is restructured around four core competencies:

- Intent Articulation and Architectural Control: Engineers must precisely specify system architecture, quality constraints, and stakeholder objectives, translating them into actionable forms for AI agents—requiring fluency beyond language syntax into formal specification and constraint engineering.

- Systematic Verification: Engineers are responsible for constructing robust hybrid test and analysis pipelines capable of mediating AI-specific failure modes at both unit and integration levels. Downstream maintainability is empirically linked more to process discipline than to the sophistication of the generative model.

- Multi-Agent Orchestration: Effective workflows demand the design, composition, and coordination of ensembles of specialized AI agents. Engineers must negotiate inter-agent communication, resolve conflicts, and ensure convergence while monitoring for emergent errors and performance degradation.

- Human Judgment and Accountability: Human oversight is irreducible, encompassing business logic interpretation, ethical decision-making, and assumption of liability for system behavior. Professional practice is shifting toward roles of system stewardship, aligning system outputs with societal norms and regulatory frameworks.

Education

Curricula must pivot from language-level training to architectural reasoning, verification literacy, and prompt engineering. Early-career engineers require exposure to AI-mediated workflows and assessment mechanisms that emphasize reasoning, oral defense, and evidence-based critique. The "AI drag" effect—wherein inexperienced engineers without orchestration fluency are hampered by AI assistance—necessitates rethinking both instructional modalities and evaluation criteria (2604.10599).

Tools

The future software toolchain is characterized by orchestrative and verification-first platforms, integrating agent management, prompt versioning, provenance tracking, and real-time auditability. Verification infrastructure (ephemeral testing, static/dynamic analysis, formal proof assistants) must be tightly integrated and AI-augmented. Automated continuous integration/deployment (CI/CD) pipelines with embedded governance become central for systemic reliability.
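Provenance tracking and auditability can be sketched as an append-only log in which each record stores the hash of its predecessor. This is an illustrative assumption rather than a format proposed in the paper: the record fields, the `AuditLog` class, and the agent and version identifiers are hypothetical.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


def sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class ProvenanceRecord:
    agent_id: str        # which agent produced the artifact
    prompt_version: str  # version label of the prompt that was used
    prompt_hash: str
    artifact_hash: str
    prev_hash: str       # hash of the previous record, "" for the first


def record_hash(record: ProvenanceRecord) -> str:
    # sort_keys makes the serialization, and therefore the hash, deterministic.
    return sha256(json.dumps(asdict(record), sort_keys=True))


class AuditLog:
    """Append-only log; each record is chained to the one before it."""

    def __init__(self) -> None:
        self.records: list[ProvenanceRecord] = []
        self.head = ""  # hash of the latest record

    def append(self, agent_id: str, prompt_version: str,
               prompt: str, artifact: str) -> ProvenanceRecord:
        record = ProvenanceRecord(
            agent_id, prompt_version, sha256(prompt), sha256(artifact), self.head
        )
        self.records.append(record)
        self.head = record_hash(record)
        return record

    def is_intact(self) -> bool:
        """Recompute the chain and compare it with the stored head."""
        prev = ""
        for record in self.records:
            if record.prev_hash != prev:
                return False
            prev = record_hash(record)
        return prev == self.head


log = AuditLog()
log.append("codegen-agent", "v1", "Write a parser.", "def parse(t): ...")
log.append("review-agent", "v3", "Review the parser.", "LGTM")
print(log.is_intact())

# Rewriting an earlier record breaks the chain.
log.records[0] = replace(log.records[0], artifact_hash=sha256("tampered"))
print(log.is_intact())
```

Storing hashes rather than raw prompts keeps the log compact, but the prompts and artifacts then have to be retained elsewhere to make the record useful in an audit.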

Processes

Process models must operationalize verification-first, human-in-the-loop lifecycles, transitioning from code-centric metrics toward outcome-oriented measures such as decision velocity and system-level reliability. Specification-driven development—whereby humans author high-fidelity requirements and delegate implementation to agents—replaces code-centric cycles. Formal oversight gates and handoff protocols are required to prevent “accountability collapse”.
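A formal oversight gate can be reduced to a small rule: a change is released only if verification has passed and at least one named human, not an agent, has approved it. The sketch below illustrates that rule under stated assumptions. `ChangeRequest`, `release_gate`, and all identifiers are hypothetical and do not come from the paper.

```python
from dataclasses import dataclass, field


@dataclass
class ChangeRequest:
    spec_id: str               # the human-authored requirement being implemented
    produced_by: str           # identifier of the agent that wrote the change
    verification_passed: bool  # outcome of the verification pipeline
    approvals: list[str] = field(default_factory=list)  # reviewer identifiers


class GateError(Exception):
    """Raised when a change may not proceed past the oversight gate."""


def release_gate(change: ChangeRequest, agent_ids: set[str]) -> str:
    """Return the accountable human approver, or raise GateError.

    Approvals that come from known agents do not count, so an agent cannot
    sign off on its own or another agent's work.
    """
    if not change.verification_passed:
        raise GateError("verification has not passed")
    humans = [name for name in change.approvals if name not in agent_ids]
    if not humans:
        raise GateError("no accountable human approver")
    return humans[0]


agents = {"codegen-agent", "review-agent"}
change = ChangeRequest("SPEC-12", "codegen-agent", True, ["review-agent"])
try:
    release_gate(change, agents)
except GateError as err:
    print(f"blocked: {err}")

change.approvals.append("alice")
print(release_gate(change, agents))
```

Recording the approver's name at the gate is what ties a released change back to a person, which is the property the paragraph says is lost under accountability collapse.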

Professional Practice

Roles evolve toward specialization in AI orchestration and verification (e.g., AI Workflow Engineer, PromptOps Specialist). Performance is measured by the ability to sustain velocity and reliability when code is abundant and disposable. Continuous upskilling, interdisciplinary collaboration, and governance aligned with societal standards and liability regimes are necessary for responsible engineering practice.

Implications and Open Problems

This reconceptualization of software engineering foregrounds several open challenges:

- Development of open standards for prompt provenance, agent declaration, and audit logs.

- Longitudinal studies on curriculum interventions and workforce adaptation.

- Empirical benchmarking of orchestration-centric versus authorship-centric workflows in industrial contexts.

- Research on hybrid integration of probabilistic LLM reasoning and formal verification for scalable, trustworthy systems.

Further, the relationship between automation and skill atrophy requires systematic monitoring, particularly the risk that undertrained engineers become mere curators of AI outputs rather than effective system designers and verifiers.

Conclusion

LLM- and agent-driven code generation necessitates a foundational reorientation of software engineering: from craft-centric authorship toward the design, validation, and governance of AI-mediated systems. The discipline’s focus now centers on intent articulation, verification, orchestration, and accountable human oversight. Future research is required to extend standards, curricula, and empirical evaluations to support this transition.

By instituting coordinated transformation across education, tools, processes, and governance, the discipline can realize both the productivity gains and reliability imperatives of agentic AI, sustaining a human-centered but AI-augmented practice of resilient and accountable software engineering (2604.10599).