- The paper presents an epistemologically-informed LLM dialogue system (CausaDisco) that significantly improves engagement and conceptual understanding.

- It combines a mixed-method formative study (N=26) with a controlled within-subjects study (N=36), reporting 67% more dialogue turns with CausaDisco, significantly higher ratings for logical coherence (p<0.01), and positive usability feedback.

- The framework integrates Aristotle’s Four Causes in prompt engineering to provide metacognitive support and scaffold personalized, multidimensional learning.

Introduction

The integration of LLMs into educational workflows has transformed self-directed learning by enabling scalable, personalized, dialogue-driven exploration. Despite these advances, there remains a persistent gap between potential and practice: many learners struggle to engage in productive, meaningful conversations, leading to increased cognitive load and superficial engagement. "Enhanced Self-Learning with Epistemologically-Informed LLM Dialogue" (2604.10545) addresses this limitation by systematically embedding epistemological frameworks into LLM-mediated learning, operationalized via prompt engineering and interaction design.

The research begins with a formative study (N=26) that triangulates four data sources (dialogue logs, quizzes, surveys, and semi-structured interviews) to characterize how learners interact with LLMs during open-ended sensemaking tasks.

Figure 1: The formative study data pipeline and analysis workflow, culminating in the derivation of epistemologically-driven design requirements.

The mixed-method data feed a six-stage analytical pipeline that examines interaction behaviors, epistemological schemas, and observed user challenges. The resulting taxonomy identifies four archetypal interaction patterns along two dimensions, interactivity and reflective-mindedness: proactive, validation-seeking, content-focused, and receptive.

Figure 2: The structured analytic development mapping data to behavioral and epistemological profile categories.

A 2x2 matrix visualizes these interaction types, showing substantial behavioral and mental model variance among self-learners.

Figure 3: Matrix mapping of interaction patterns by AI interactivity and reflective-mindedness, grounding subsequent system design.

Notably, participants report substantial challenges in question formulation, response clarity, and achieving deep conceptual understanding, which points to inherent limits on the efficacy of freeform LLM dialogue for diverse cohorts.

Figure 4: Participant-reported challenges, highlighting key pain points obstructing productive self-study with LLMs.

CausaDisco: Epistemological Prompt Engineering in Interactive Systems

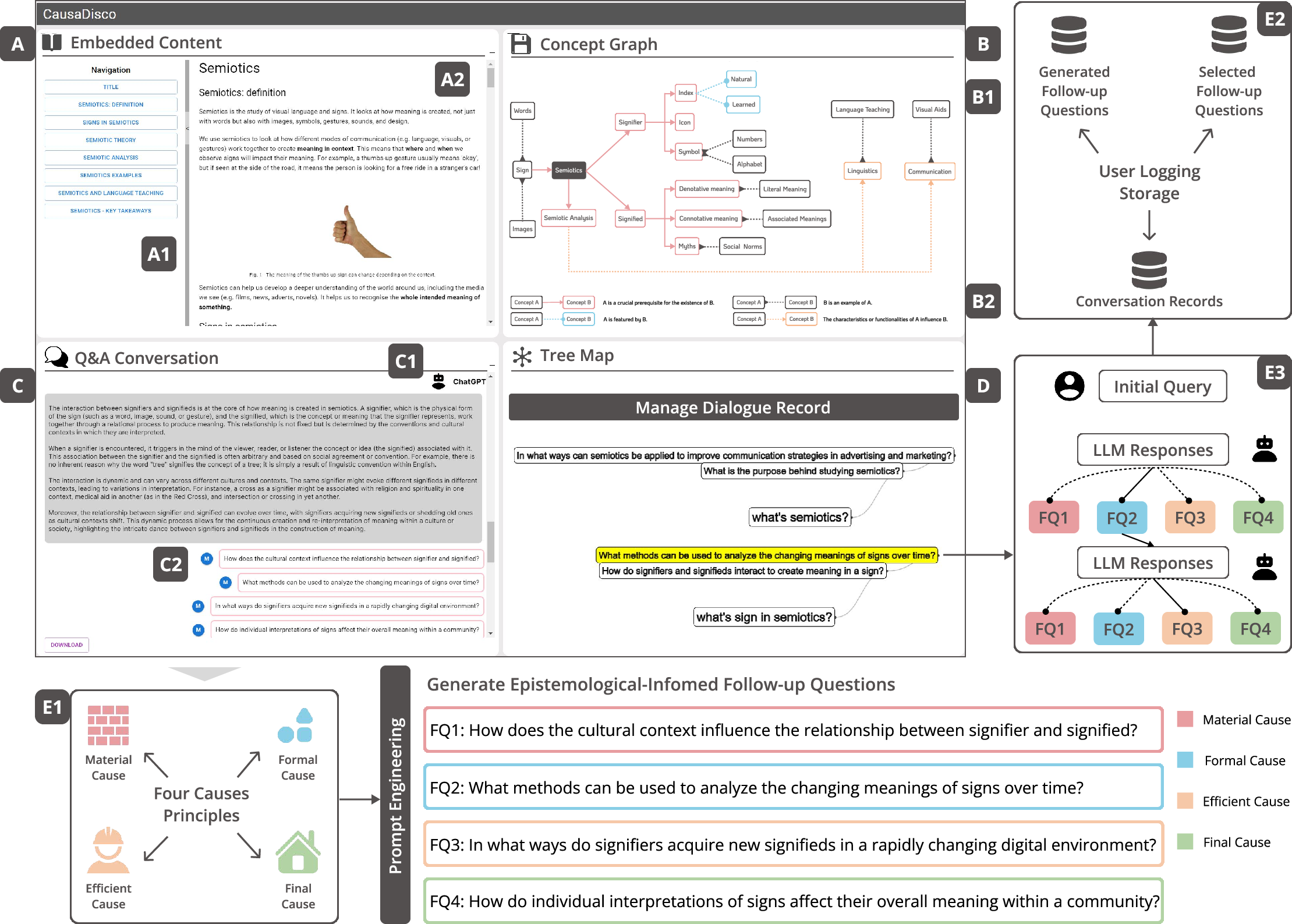

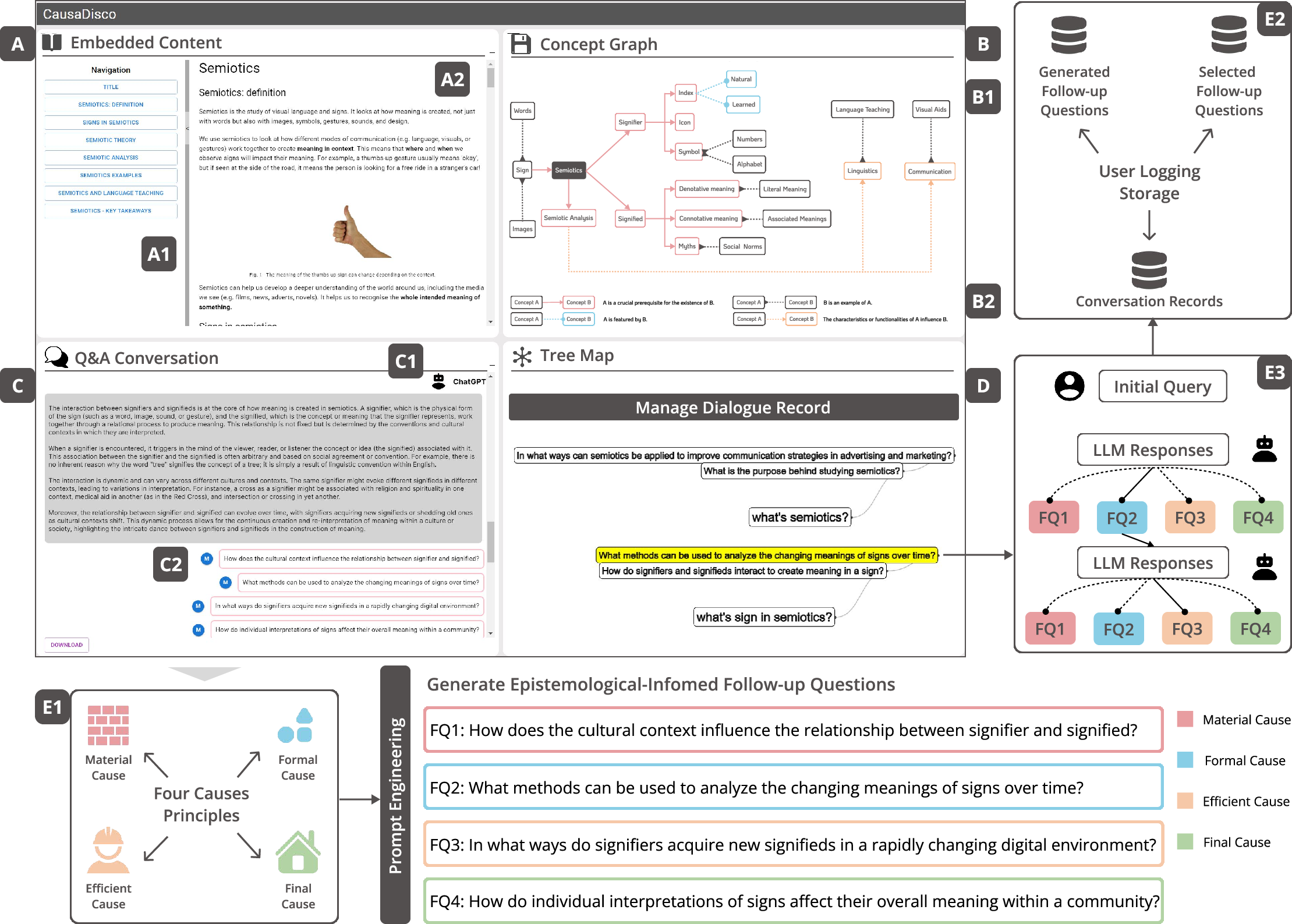

Building on the derived taxonomy and challenge landscape, the authors introduce CausaDisco, an interactive system integrating Aristotle’s Four Causes (material, formal, efficient, final) as an epistemic framework for prompt engineering. The system architecture and frontend feature suite are directly informed by user-centric and epistemological analyses.

Figure 5: System overview illustrating component views and the epistemology-driven query generation mechanism.

CausaDisco operationalizes five design requirements: (1) built-in information validation, (2) automated, structured onboarding via follow-up queries, (3) scaffolding of engagement for exploratory multi-turn dialogue, (4) systematic exposure to multidimensional perspectives, and (5) metacognitive support through visualization of the user’s interaction history.
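Requirement (5), metacognitive support from the interaction history, can be illustrated with a small tally of which causes a learner has explored so far. This is a minimal sketch under assumptions: the summary does not describe CausaDisco's internal data model, so the `history` format (one cause label per chosen follow-up) and the function names `coverage_summary` and `unexplored` are hypothetical.

```python
from collections import Counter

# The four causes named in the text.
CAUSES = ("material", "formal", "efficient", "final")

def coverage_summary(history):
    """Return the share of explored follow-ups per cause.

    `history` is an assumed format: a list of cause labels, one per
    follow-up question the learner selected. Unknown labels are ignored.
    """
    counts = Counter(label for label in history if label in CAUSES)
    total = sum(counts.values())
    return {c: (counts[c] / total if total else 0.0) for c in CAUSES}

def unexplored(history):
    """List the causes the learner has not touched yet, in canonical order."""
    seen = set(history)
    return [c for c in CAUSES if c not in seen]

if __name__ == "__main__":
    session = ["material", "material", "final", "formal"]
    print(coverage_summary(session))
    print(unexplored(session))
```

A view built on such a summary could show a learner which perspectives remain untouched, which is one plausible reading of "visualization of the user's interaction history".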

The Conversational View uses LLM prompt engineering to automatically generate contextually relevant follow-up questions mapped to the Four Causes, moving interaction from shallow Q&A to epistemic exploration. This epistemological grounding is intended to act as cognitive scaffolding, reducing cognitive load for non-experts and promoting multidimensional engagement.
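The idea of mapping follow-up questions to the Four Causes can be sketched as a prompt template. The guiding questions, the prompt wording, and the function name `build_followup_prompt` below are illustrative assumptions, not the prompts used by CausaDisco; the sketch only assembles a string and does not call any LLM API.

```python
# Illustrative paraphrases of the four causes; the wording is an assumption.
FOUR_CAUSES = {
    "material": "What is it made of, or what does it consist of?",
    "formal": "What structure, pattern, or definition makes it what it is?",
    "efficient": "What process or agent brings it about?",
    "final": "What purpose or end does it serve?",
}

def build_followup_prompt(topic: str, last_answer: str) -> str:
    """Assemble one instruction asking an LLM for a follow-up question per cause."""
    lines = [
        f"The learner is studying: {topic}",
        f"Previous answer shown to the learner: {last_answer}",
        "Propose one short follow-up question for each perspective below.",
    ]
    for cause, guide in FOUR_CAUSES.items():
        lines.append(f"- {cause} cause ({guide})")
    return "\n".join(lines)

if __name__ == "__main__":
    print(build_followup_prompt(
        "photosynthesis",
        "Plants convert light energy into chemical energy.",
    ))
```

Keeping the causes in a single dictionary means the same template can produce one suggested question per perspective on every turn, so the learner sees all four dimensions rather than only the one they would have asked about unprompted.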

Evaluation: Controlled Study and Empirical Analysis

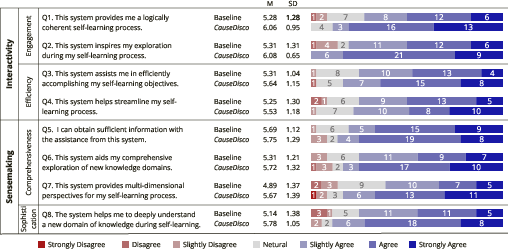

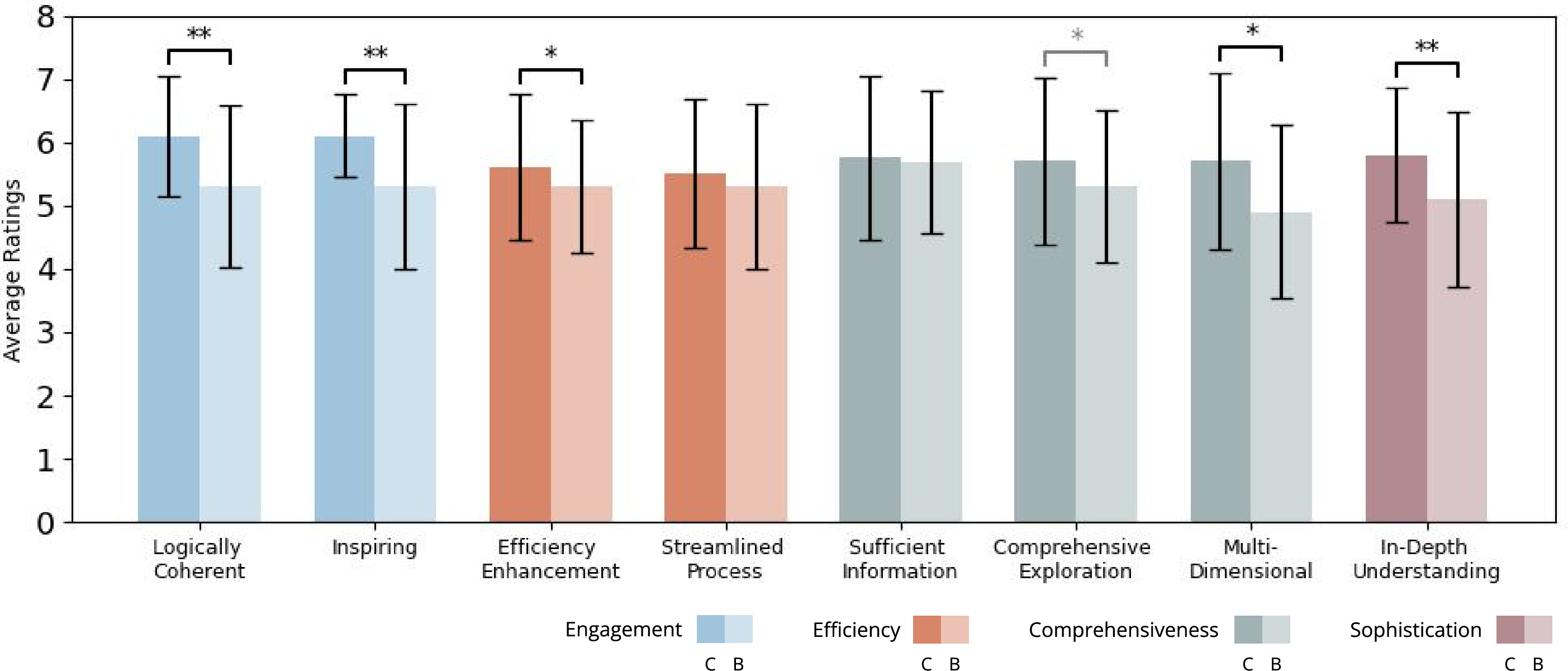

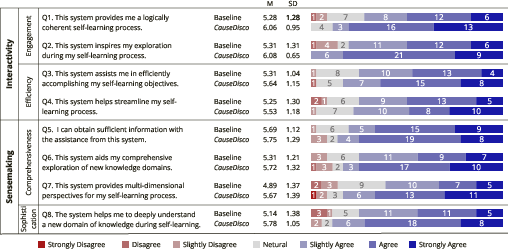

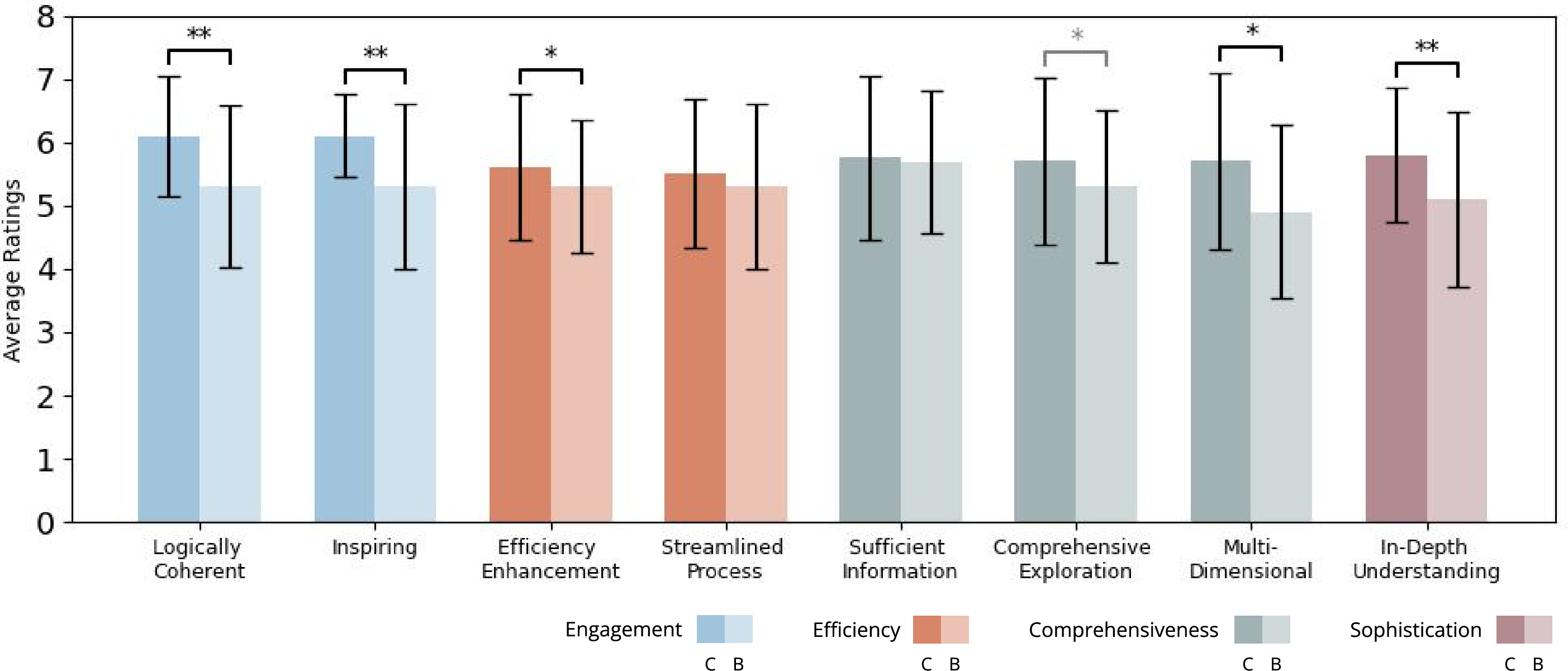

A controlled within-subjects study (N=36) evaluates CausaDisco against baseline tools. Key measured constructs include engagement, efficiency, comprehensiveness, sophistication, usability, and design quality. The experimental workflow enforces proper randomization and counterbalancing to mitigate ordering and learning effects.
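Counterbalancing in a within-subjects design can be sketched as follows. This assumes two conditions (CausaDisco and a single baseline) and an even split of presentation orders; the summary does not state the exact assignment procedure, so `assign_orders` and its parameters are hypothetical.

```python
import random

def assign_orders(participant_ids, conditions=("CausaDisco", "baseline"), seed=0):
    """Randomly assign each participant one of the two condition orders.

    Participants are shuffled first (randomization), then alternated between
    the two possible orders (counterbalancing), so that each order is used
    by half of the participants when the group size is even.
    """
    rng = random.Random(seed)  # fixed seed so the assignment is reproducible
    ids = list(participant_ids)
    rng.shuffle(ids)
    orders = [tuple(conditions), tuple(reversed(conditions))]
    return {pid: orders[i % 2] for i, pid in enumerate(ids)}

if __name__ == "__main__":
    plan = assign_orders(range(1, 37))  # 36 participants, as in the study
    first_causadisco = sum(1 for order in plan.values() if order[0] == "CausaDisco")
    print(first_causadisco, "participants start with CausaDisco")
```

With 36 participants, this scheme gives 18 participants each order, so any practice or fatigue effect is spread equally over both conditions.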

Statistical analysis shows significantly higher ratings for CausaDisco in logical coherence (p<0.01), inspiration to explore (p<0.01), and engagement, along with evidence at the p<0.05 level for improvements in efficiency and multidimensionality of information acquisition. Participants also conducted 67% more dialogue turns using CausaDisco and self-reported greater depth in their conceptual understanding.
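For readers who want to see how a figure such as "67% more dialogue turns" is computed, here is a minimal sketch of a relative increase. The turn counts in the example are made-up illustrative values, not data from the paper, and `relative_increase` is a hypothetical helper name.

```python
def relative_increase(treatment_mean: float, baseline_mean: float) -> float:
    """Relative increase of the treatment over the baseline, as a fraction."""
    if baseline_mean <= 0:
        raise ValueError("baseline_mean must be positive")
    return (treatment_mean - baseline_mean) / baseline_mean

if __name__ == "__main__":
    # Hypothetical means chosen only for illustration (not from the study).
    baseline_turns = 12.0
    system_turns = 20.0
    print(f"{relative_increase(system_turns, baseline_turns):.0%} more turns")
```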

Figure 6: Subjective participant ratings demonstrating that CausaDisco enhances interactivity and sensemaking compared to baseline.

Figure 7: Comparative ratings across engagement, efficiency, comprehensiveness, and sophistication, substantiating the system’s epistemic design hypothesis.

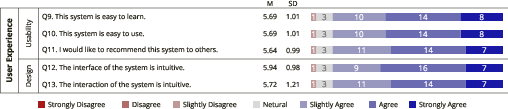

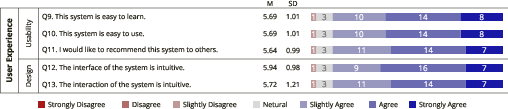

Usability and design acceptance are also strong: a majority of participants report ease of use, rapid learnability, and intent to recommend.

Figure 8: Usability and design metrics, indicating positive reception of CausaDisco's epistemologically-grounded interaction model.

Design and Theoretical Implications

The results have both practical and theoretical implications. Practically, the findings suggest that epistemologically framed prompt engineering mitigates several key user challenges in LLM-mediated self-learning, especially for less proactive or LLM-naive learners. Theoretically, the work grounds human-AI collaborative learning in epistemology, providing a domain-agnostic pathway for cognitive scaffolding and sensemaking.

The research also identifies necessary directions for future system expansion: dynamic adaptation of epistemological scaffolds per learner profile and disciplinary context, deeper personalization through user-modeling, and extension of metacognitive supports. The Four Causes framework, while broadly applicable, may require weighting and adaptation for domain-specific resonance, especially in STEM contexts where certain causal dimensions are less relevant.
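One way to picture the suggested weighting of the Four Causes per domain is a ranking that decides which perspectives to surface first. This is a speculative sketch of a future direction, not something the paper implements; the weights, the example values, and the function `rank_causes` are all hypothetical.

```python
# Equal weights by default; a domain profile may override any of them.
DEFAULT_WEIGHTS = {"material": 1.0, "formal": 1.0, "efficient": 1.0, "final": 1.0}

def rank_causes(domain_weights=None, top_k=4):
    """Order the causes by weight (highest first) and keep the top_k.

    Only the four known causes are considered. Ties keep the canonical
    order because Python's sort is stable; causes with a weight of zero
    or less are dropped.
    """
    overrides = {c: w for c, w in (domain_weights or {}).items() if c in DEFAULT_WEIGHTS}
    weights = {**DEFAULT_WEIGHTS, **overrides}
    ranked = sorted(weights, key=lambda cause: -weights[cause])
    return [c for c in ranked if weights[c] > 0][:top_k]

if __name__ == "__main__":
    # Hypothetical profile that emphasizes mechanism over purpose.
    print(rank_causes({"efficient": 1.5, "final": 0.2}, top_k=3))
```

A profile like the one in the example would surface mechanism-oriented questions first and push purpose-oriented ones down, which matches the observation that some causal dimensions may matter less in certain STEM contexts.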

Conclusion

"Enhanced Self-Learning with Epistemologically-Informed LLM Dialogue" provides a rigorous empirical and design framework for integrating epistemological theory into LLM-based self-learning environments. Key empirical results include statistically significant improvements in engagement and sensemaking quality via epistemic prompt engineering. The work underscores the necessity of moving beyond one-size-fits-all LLM tools and towards inclusive, cognitively-aligned educational agents. This epistemological grounding is expected to inform future developments in personalized AI-driven education, suggesting a principled path for augmenting both the efficacy and inclusivity of LLM-mediated self-directed learning experiences (2604.10545).