- The paper reframes diffusion models by unifying them under Langevin dynamics, demonstrating that VP, VE-Karras, and flow matching are fundamentally equivalent.

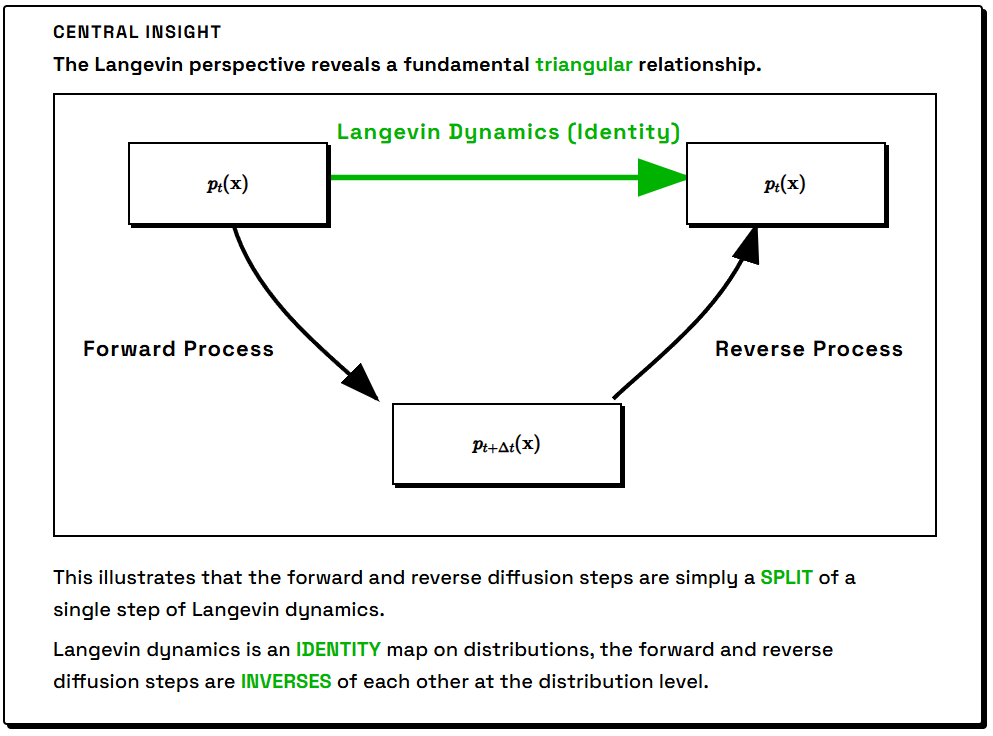

- It details the construction of forward and reverse diffusion processes as splits of Langevin dynamics, clarifying the role of Gaussian noising and denoising.

- The analysis establishes maximum likelihood foundations of different parameterizations, informing loss function design and model selection for generative tasks.

A Unified Langevin Perspective on Diffusion Models: Concepts, Equivalences, and Training

Introduction

"Rethinking the Diffusion Model from a Langevin Perspective" (2604.10465) advances the theoretical understanding of diffusion-based generative modeling by systematically reframing diffusion models within the unifying framework of Langevin dynamics. The work scrutinizes prevailing perspectives—VAE, score-based, and flow-based—and demonstrates their equivalence under maximum likelihood through the lens of Langevin dynamics, which acts as an identity map over distributions. This approach elucidates the construction of forward and reverse diffusion processes, clarifies the relation of ODE and SDE variants, and dispels misconceptions regarding the simplicity or superiority of flow-matching formalisms.

Langevin Dynamics as the Foundational Identity

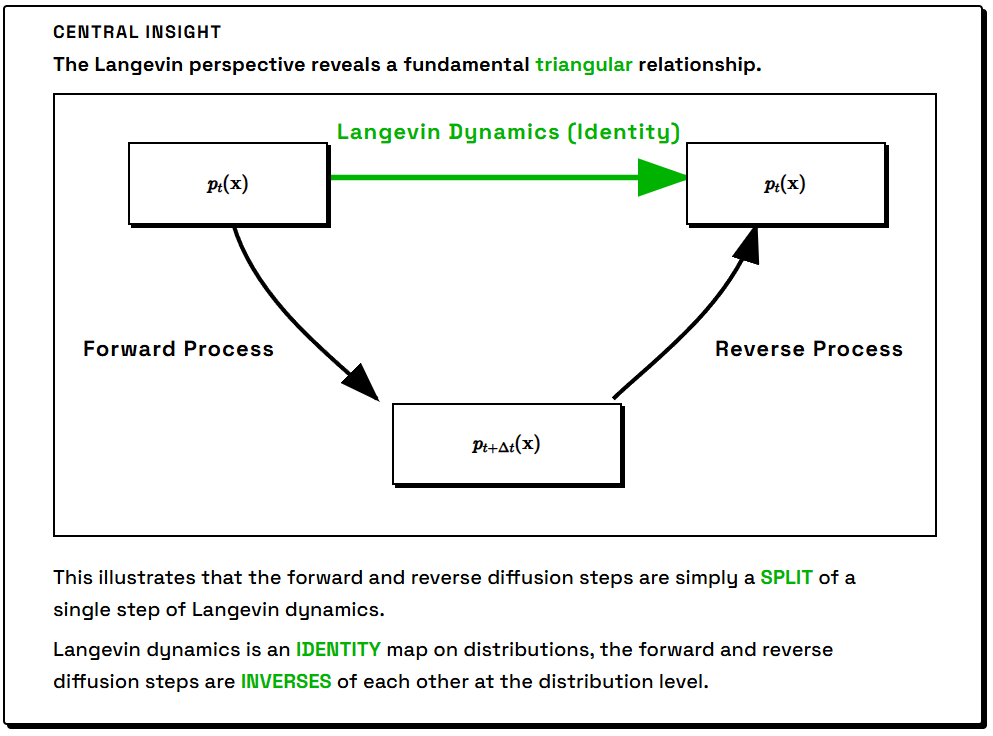

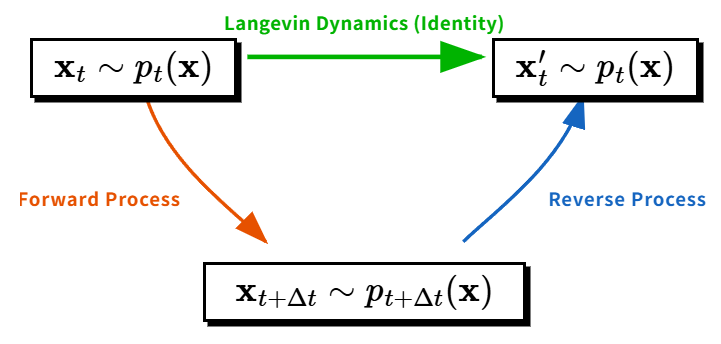

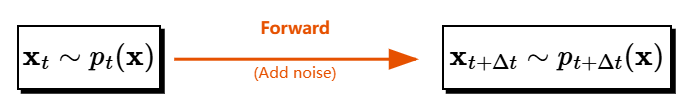

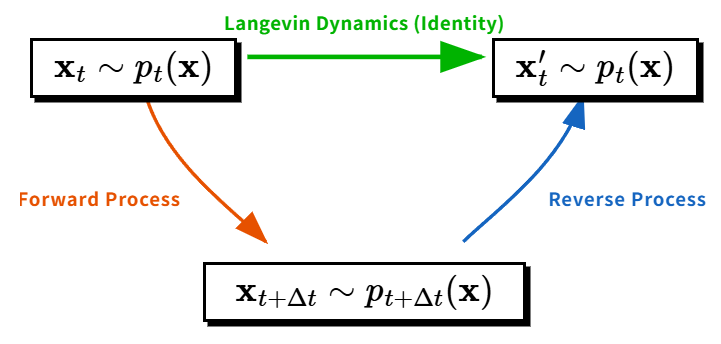

Langevin dynamics underlies most generative diffusion frameworks because it has a prescribed stationary distribution: running Langevin dynamics on samples from p(x) leaves their distribution unchanged and, given enough time, yields an approximately independent sample from the same density. This 'identity' action on distributions provides the conceptual foundation for generative processes that can be decomposed into a forward (noising) and reverse (denoising) trajectory.
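The invariance property can be checked numerically. The following is a minimal sketch, not taken from the paper: it assumes a one-dimensional standard normal target (so the score is simply -x) and uses the unadjusted Langevin discretization. The function names, step size, and sample count are illustrative choices.

```python
import numpy as np

rng = np.random.default_rng(0)

def score_std_normal(x):
    """Score of N(0, 1): d/dx log p(x) = -x (assumed toy target)."""
    return -x

def langevin(x, score, step, n_steps, rng):
    """Unadjusted Langevin: x <- x + step * score(x) + sqrt(2 * step) * noise."""
    for _ in range(n_steps):
        noise = rng.standard_normal(x.shape)
        x = x + step * score(x) + np.sqrt(2.0 * step) * noise
    return x

# Start from samples that are already distributed as the target p = N(0, 1).
x_start = rng.standard_normal(20_000)
x_end = langevin(x_start, score_std_normal, step=0.05, n_steps=200, rng=rng)

var_end = x_end.var()                      # should stay close to 1
corr = np.corrcoef(x_start, x_end)[0, 1]   # should be close to 0

print(f"variance after Langevin: {var_end:.3f}")
print(f"correlation with start:  {corr:.3f}")
```

The output samples keep (approximately) the same distribution while becoming nearly uncorrelated with the inputs. The discrete step introduces a small bias: for this Gaussian target the stationary variance of the discretized chain is 1 / (1 - step / 2) rather than exactly 1.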

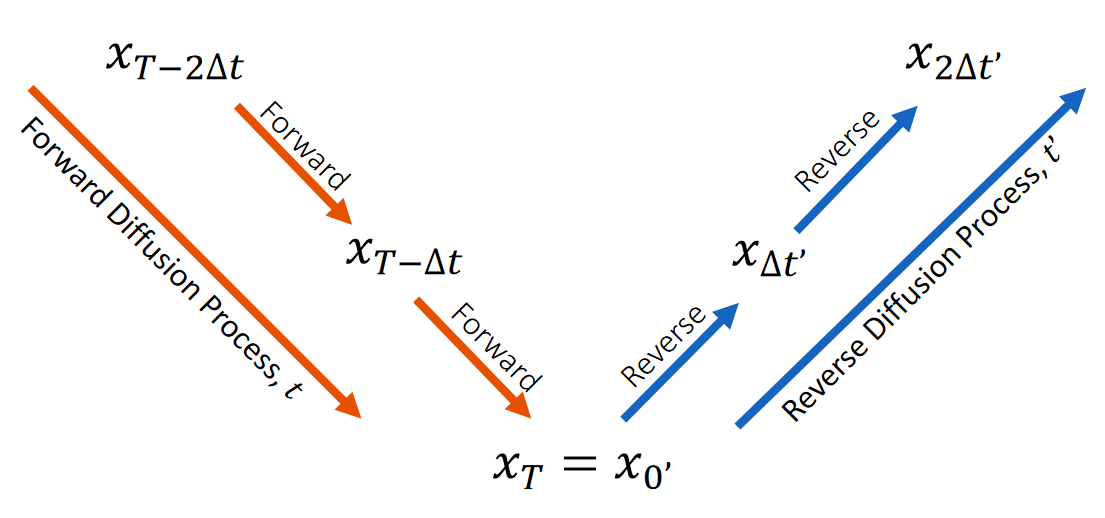

Figure 1: Central organizing principle—viewing forward/reverse processes as a split of Langevin dynamics (identity operation).

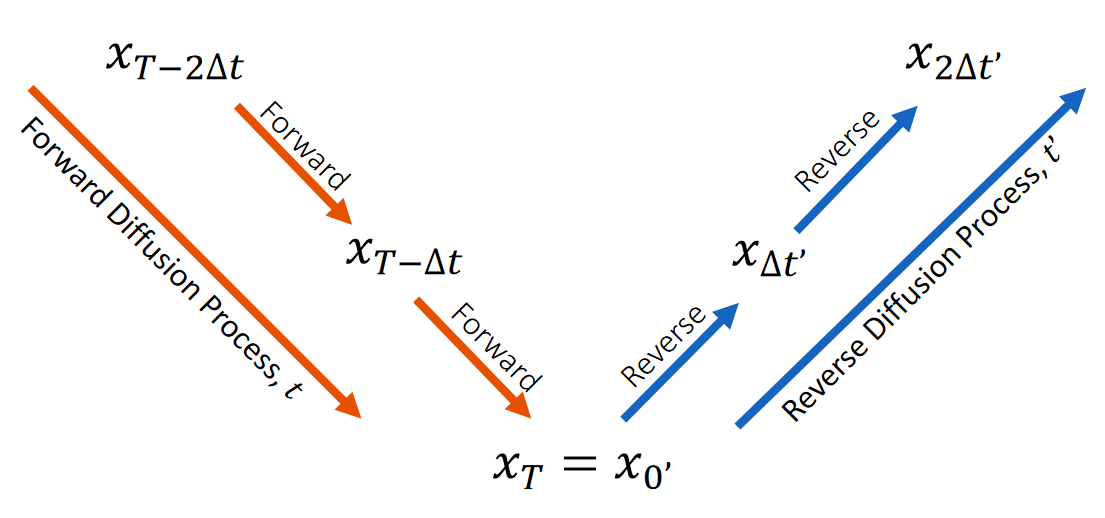

Forward and Reverse Diffusion: Splitting the Identity

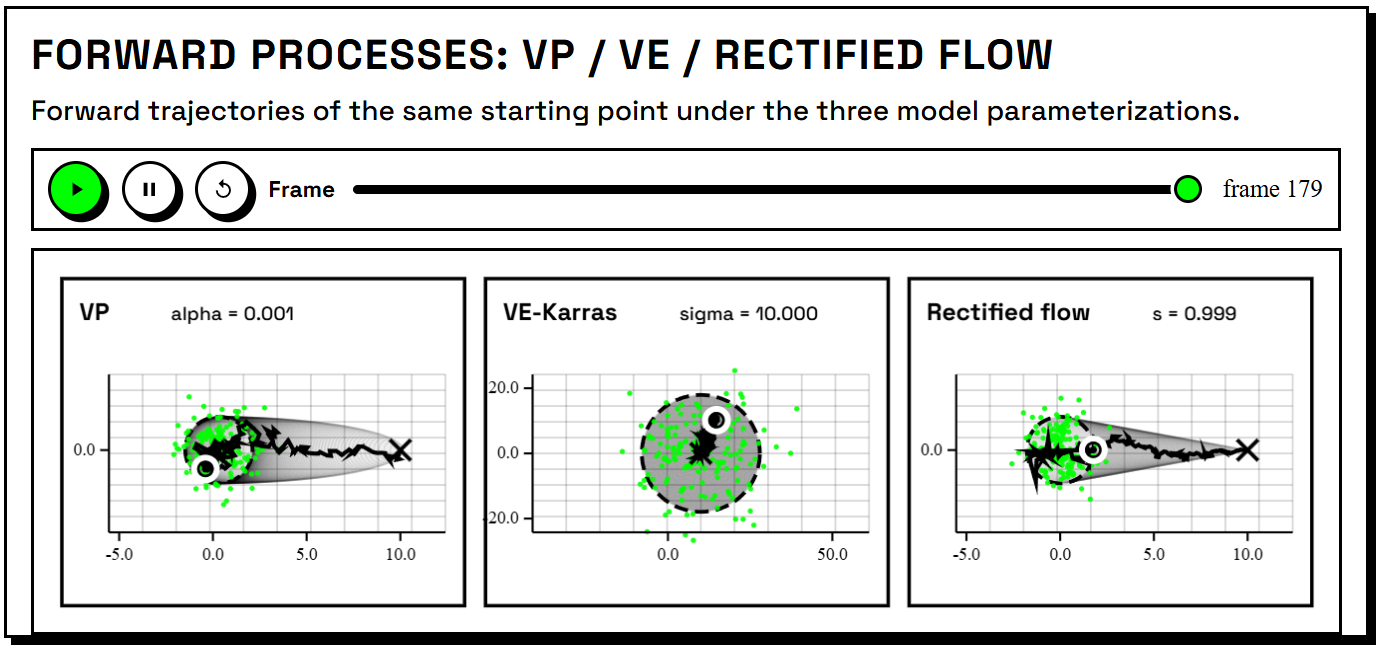

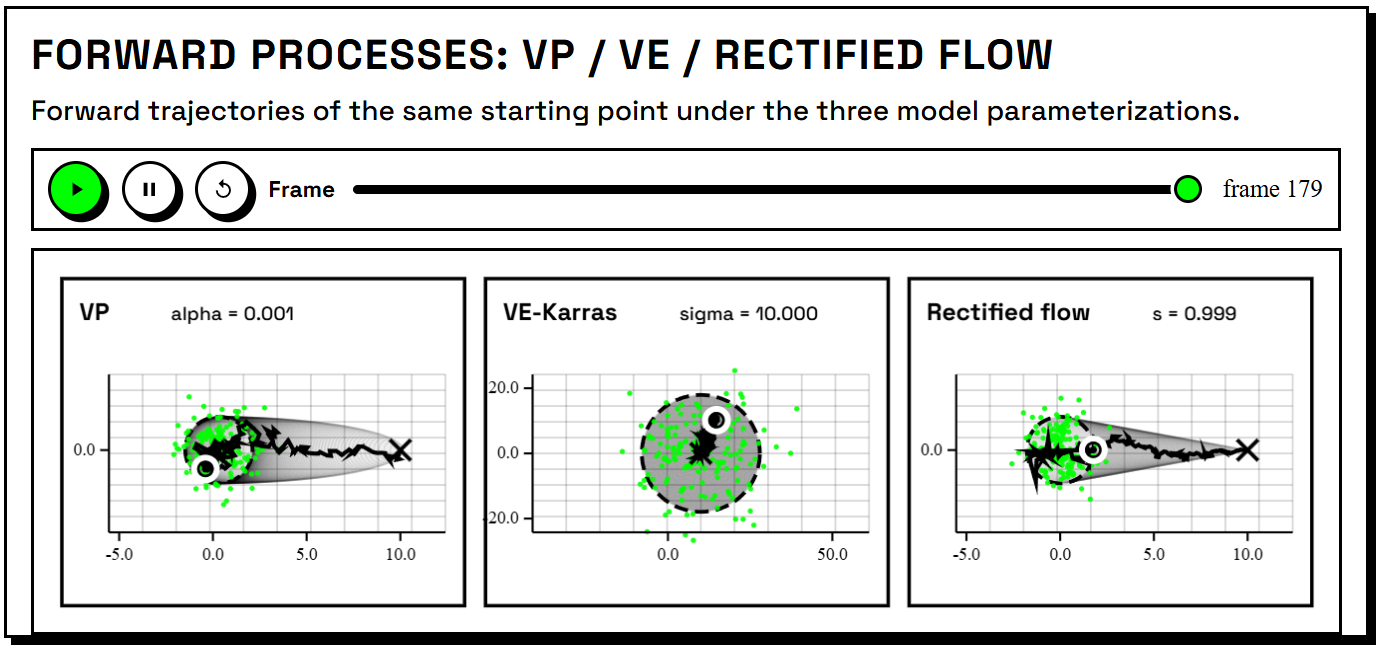

The forward process is cast as an Itô SDE, parameterized variously in VP, VE-Karras, and rectified-flow forms. Each form defines its own noising schedule, but all are shown to be reparameterizations of the same underlying transformation. The reverse process is then derived as the conjugate operation that, when composed with the forward process, reconstructs the original Langevin dynamics.

In practice, diffusion models differ in their choice of forward SDE.

Figure 3: Overview of forward processes: VP (Ornstein–Uhlenbeck drift), VE-Karras (variance-exploding), and rectified-flow, highlighting their respective sample trajectories.

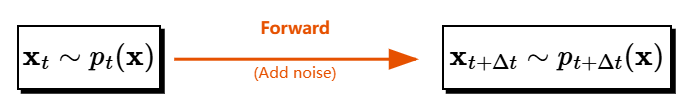

A forward diffusion step with increment Δt can be visualized as pushing the data closer to a Gaussian reference, with gradual addition of noise.

Figure 2: Each forward diffusion step adds Gaussian noise, moving the sample distribution toward a Gaussian.
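As a rough illustration of the three forward processes, the sketch below samples their Gaussian marginals in closed form. It is not the paper's code: the constant-beta VP schedule, the toy bimodal data, and the function names are assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)
x0 = rng.choice([-2.0, 2.0], size=50_000)   # toy bimodal "data" (assumption)
eps = rng.standard_normal(x0.shape)         # shared Gaussian noise

def vp_sample(x0, eps, t, beta=10.0):
    """VP marginal for dx = -0.5*beta*x dt + sqrt(beta) dW with constant beta."""
    alpha_bar = np.exp(-beta * t)
    return np.sqrt(alpha_bar) * x0 + np.sqrt(1.0 - alpha_bar) * eps

def ve_sample(x0, eps, sigma):
    """VE-Karras marginal: the data is kept and noise of scale sigma is added."""
    return x0 + sigma * eps

def rf_sample(x0, eps, t):
    """Rectified-flow marginal: linear interpolation between data and noise."""
    return (1.0 - t) * x0 + t * eps

x_vp = vp_sample(x0, eps, t=1.0)
x_ve = ve_sample(x0, eps, sigma=80.0)
x_rf = rf_sample(x0, eps, t=1.0)

print("VP variance at t=1:    ", x_vp.var())
print("VE variance at sigma=80:", x_ve.var())
print("RF variance at t=1:    ", x_rf.var())
```

VP and rectified flow end near a unit Gaussian, while VE keeps the data scale and lets the noise variance grow. The paths differ only in how the data term and the noise term are scaled at each time.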

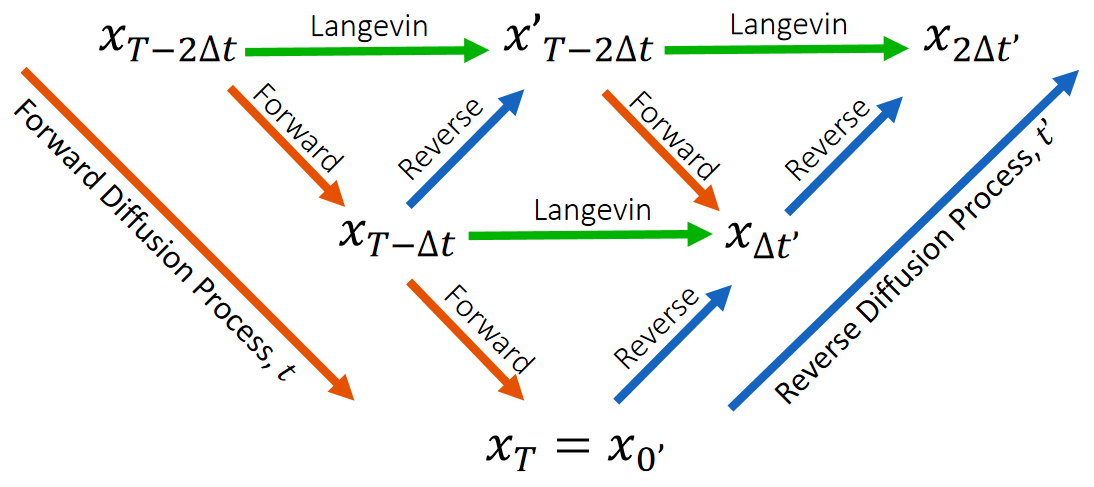

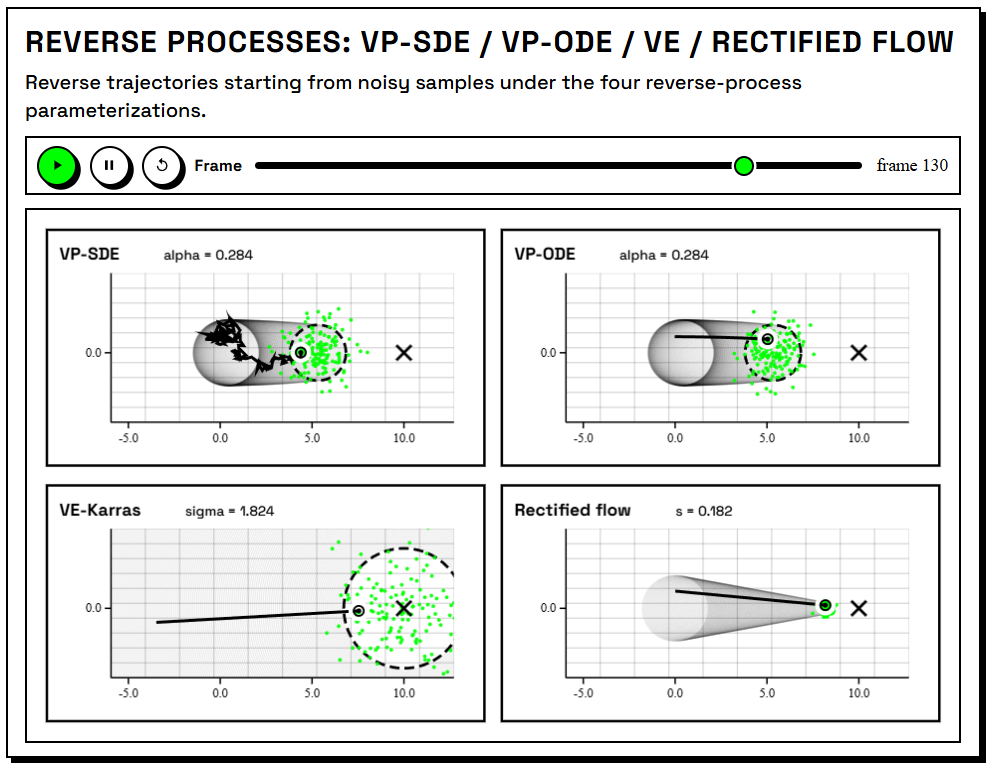

The reverse process is constructed such that composing a forward and corresponding reverse step forms a legitimate Langevin update on the intermediate distribution. The Langevin split for each paradigm is tabulated in the paper, clarifying the relationships among parameterizations.

Figure 4: The composition of forward and reverse processes reconstructs Langevin dynamics.
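The split can be made concrete with one Euler-Maruyama step of each process. The derivation below is a sketch using the standard forward SDE and its standard reverse-time counterpart; the symbols f, g, and the step size η are notation assumed here, and the paper's tabulated splits may be written differently.

```latex
% Forward SDE: dx = f(x,t)\,dt + g(t)\,dW. One forward step from t to t+\Delta t:
x' = x + f(x,t)\,\Delta t + g(t)\sqrt{\Delta t}\,\varepsilon_1 ,
\qquad \varepsilon_1 \sim \mathcal{N}(0, I)

% One reverse step from t+\Delta t back to t (standard reverse-time SDE):
x'' = x' + \bigl[-f(x',t) + g(t)^2\,\nabla_x \log p_t(x')\bigr]\Delta t
      + g(t)\sqrt{\Delta t}\,\varepsilon_2 ,
\qquad \varepsilon_2 \sim \mathcal{N}(0, I)

% Composition: the drift terms f cancel to leading order and the two
% independent noises add in variance, leaving
x'' = x + g(t)^2\,\nabla_x \log p_t(x)\,\Delta t
      + \sqrt{2\,g(t)^2\,\Delta t}\;\varepsilon + o(\Delta t),
\qquad \varepsilon \sim \mathcal{N}(0, I)

% With \eta = g(t)^2\,\Delta t this is one Langevin step on p_t:
x'' \approx x + \eta\,\nabla_x \log p_t(x) + \sqrt{2\eta}\;\varepsilon
```

The composed update targets the intermediate density p_t, which is why it leaves that density unchanged.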

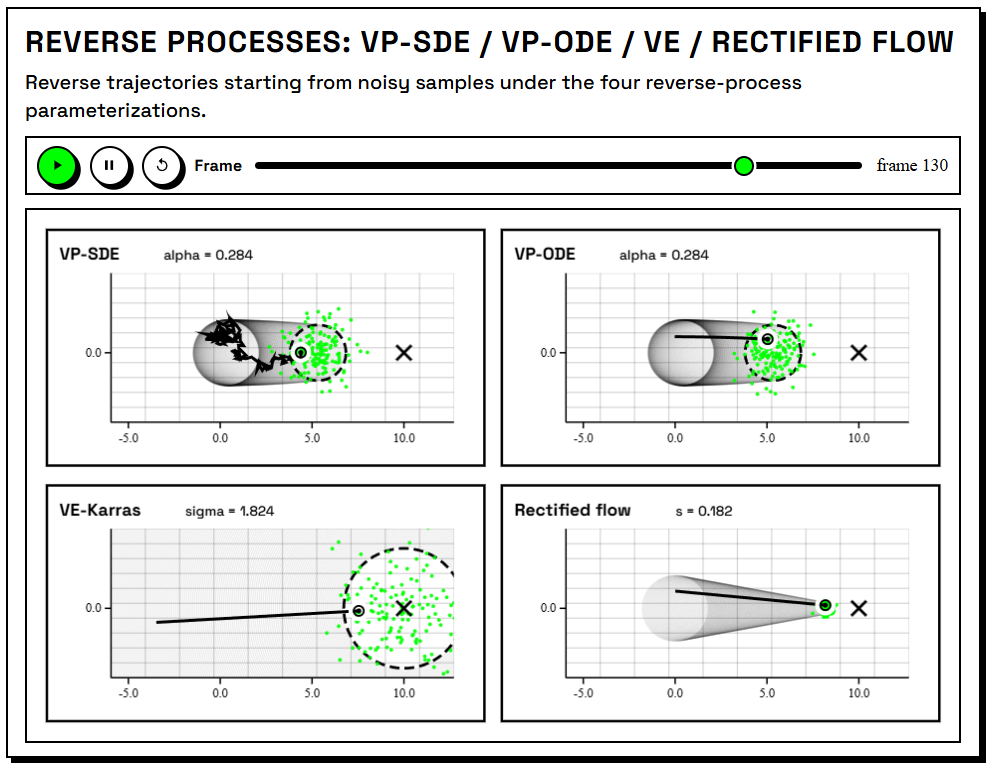

Reverse trajectories under different reparameterizations exhibit distinct geometric behaviors. Flow-based parameterizations emphasize approximately straight-line interpolation in sample space, but this straightness is a local property, not a global one.

Figure 5: Reverse diffusion trajectories under VP, VE-Karras, and rectified-flow parameterizations; straight lines occur only in degenerate (one-point) cases.
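The claim about straight lines can be probed on a toy problem. The sketch below assumes one-dimensional data supported on a finite set of points, for which the marginal rectified-flow velocity has a closed form, and integrates the reverse ODE with Euler steps. The datasets, starting point, and function names are illustrative and not from the paper.

```python
import numpy as np

def posterior_mean_x0(x, t, points):
    """E[x0 | x_t = x] for data uniform on `points`, with x_t = (1 - t) x0 + t eps."""
    points = np.asarray(points, dtype=float)
    logw = -((x - (1.0 - t) * points) ** 2) / (2.0 * t**2)
    w = np.exp(logw - logw.max())            # stabilized posterior weights
    return float((w * points).sum() / w.sum())

def velocity(x, t, points):
    """Marginal velocity E[eps - x0 | x_t = x], which equals (x - E[x0 | x]) / t."""
    return (x - posterior_mean_x0(x, t, points)) / t

def reverse_velocities(x1, points, n_steps=1000, t_min=0.02):
    """Euler-integrate dx/dt = v(x, t) from t = 1 down to t_min."""
    ts = np.linspace(1.0, t_min, n_steps + 1)
    x, vs = x1, []
    for t, t_next in zip(ts[:-1], ts[1:]):
        v = velocity(x, t, points)
        vs.append(v)
        x = x + (t_next - t) * v             # t_next < t, so this steps backward
    return np.array(vs), x

v_one, x_one = reverse_velocities(0.3, points=[1.0])         # one-point data
v_two, x_two = reverse_velocities(0.3, points=[-1.0, 1.0])   # two-point data

print("one-point velocity spread:", np.ptp(v_one))
print("two-point velocity spread:", np.ptp(v_two))
```

With a single data point the velocity is constant along the trajectory, so the path is a straight line in (t, x). With two points the velocity changes along the path, so the trajectory bends even though each conditional interpolation is linear.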

Forward–Reverse Duality: Theoretical Guarantees

An explicit correspondence is established: the evolution of the forward and reverse processes yields KL contraction and ensures that, over a sufficiently long time horizon, the generative process initiated from noise recovers the true data distribution in theory. This formalizes why diffusion models, unlike conventional VAEs, can perfectly invert the forward map (absent parameterization or learning error), supporting an exact prior–posterior pairing for the entire trajectory.

Subsequent figures illustrate the interplay of these dual processes, emphasizing time reversal between forward and reverse clocks.

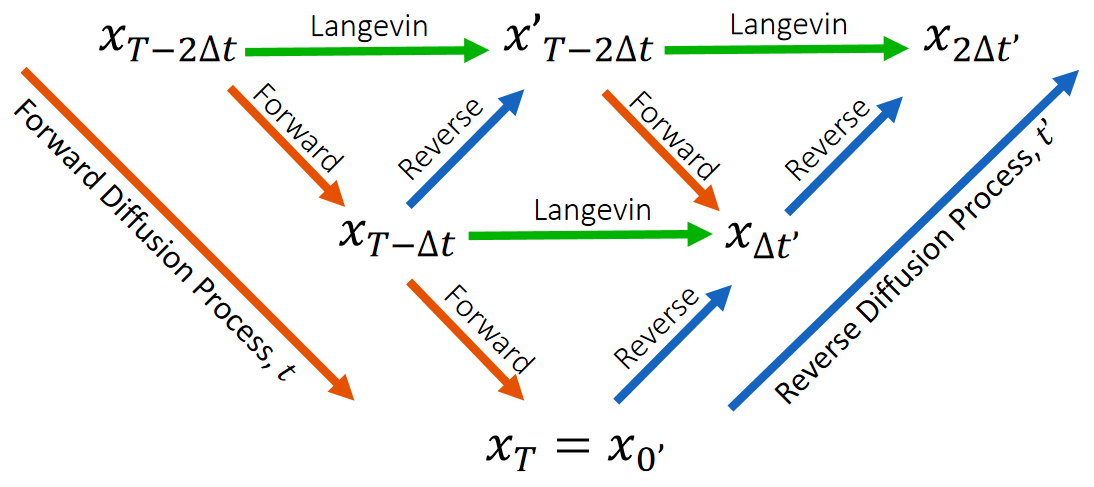

Figure 6: Partial forward–reverse cycle: forward (green) and reverse (blue) steps are coupled via their time indices.

Figure 7: Horizontal rows represent Langevin steps matching forward and reverse process samples with identical probability densities.

Maximum Likelihood, Parameterization Equivalences, and Losses

The training objective is derived by differentiating the KL divergence with respect to time along the forward diffusion trajectory. This yields a family of objectives, namely denoising score matching (DSM), marginal score matching (SM), and flow matching, that differ only in parameterization and are equivalent under maximum likelihood. In every case the target is the score function of the noised data distribution at a given time.
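For orientation, the standard form of this time-derivative identity is sketched below. It assumes two densities p_t (data) and q_t (model) evolved under the same forward SDE with diffusion coefficient g(t); the paper's own statement and notation may differ.

```latex
% Time derivative of the KL divergence along a shared forward SDE:
\frac{d}{dt}\,\mathrm{KL}\!\left(p_t \,\|\, q_t\right)
  = -\frac{g(t)^2}{2}\;
    \mathbb{E}_{x \sim p_t}\!\left[
      \bigl\| \nabla_x \log p_t(x) - \nabla_x \log q_t(x) \bigr\|^2
    \right]

% Integrating from 0 to T gives a likelihood bound as a weighted score-matching loss:
\mathrm{KL}\!\left(p_0 \,\|\, q_0\right)
  = \mathrm{KL}\!\left(p_T \,\|\, q_T\right)
  + \frac{1}{2}\int_0^T g(t)^2\;
    \mathbb{E}_{p_t}\!\left[
      \bigl\| \nabla_x \log p_t - \nabla_x \log q_t \bigr\|^2
    \right] dt

% Marginal and denoising score matching differ only by a constant C_t
% that does not depend on the model s_\theta:
\mathbb{E}_{p_t}\!\left[\bigl\| s_\theta(x_t,t) - \nabla \log p_t(x_t) \bigr\|^2\right]
  = \mathbb{E}_{p_0(x_0)\,p_t(x_t \mid x_0)}\!\left[
      \bigl\| s_\theta(x_t,t) - \nabla \log p_t(x_t \mid x_0) \bigr\|^2
    \right] + C_t
```

The right-hand side of the first line is non-positive, which is the KL contraction property, and the integrand is exactly a squared-score error weighted by g(t)^2.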

The paper specifies conversion rules between the native outputs of VP, VE, and rectified-flow models (score, noise, and velocity field, respectively), showing that all represent the same underlying object.
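The conversions follow from writing the noised sample as x_t = alpha * x0 + sigma * eps. The sketch below is an illustration under that assumption, checked against a Gaussian toy dataset where every quantity has a closed form. The helper names are hypothetical and the paper's conversion table may use different conventions.

```python
import numpy as np

def eps_from_score(score, sigma):
    """Noise prediction from score: eps = -sigma * score."""
    return -sigma * score

def x0_from_eps(x, eps, alpha, sigma):
    """Denoised estimate from noise prediction: x0 = (x - sigma * eps) / alpha."""
    return (x - sigma * eps) / alpha

def velocity_from_eps(x, eps, alpha, sigma, d_alpha, d_sigma):
    """Velocity v = alpha' * x0 + sigma' * eps, with x0 recovered from eps."""
    return d_alpha * x0_from_eps(x, eps, alpha, sigma) + d_sigma * eps

# Toy check: x0 ~ N(0, s2) on the rectified-flow path alpha = 1 - t, sigma = t.
s2, t = 4.0, 0.3
alpha, sigma = 1.0 - t, t
var_t = alpha**2 * s2 + sigma**2          # variance of x_t
x = np.linspace(-3.0, 3.0, 7)

true_score = -x / var_t                   # grad log p_t(x)
true_eps = sigma * x / var_t              # E[eps | x_t = x]
true_x0 = alpha * s2 * x / var_t          # E[x0 | x_t = x]
true_v = true_eps - true_x0               # E[eps - x0 | x_t = x]

eps_hat = eps_from_score(true_score, sigma)
x0_hat = x0_from_eps(x, eps_hat, alpha, sigma)
v_hat = velocity_from_eps(x, eps_hat, alpha, sigma, d_alpha=-1.0, d_sigma=1.0)

print(np.allclose(eps_hat, true_eps), np.allclose(x0_hat, true_x0), np.allclose(v_hat, true_v))
```

Starting from the score alone, the noise, denoised sample, and velocity are all recovered by affine maps whose coefficients depend only on the schedule.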

Key losses across parameterizations—including the widely used epsilon prediction and velocity matching—are algebraic reformulations of the same squared-score objective weighted over noise levels. The conclusion is that flow matching, denoising, and score matching are not fundamentally distinct; the perceived simplicity of flow matching arises from local geometric properties, not theoretical advantage, and cannot be generalized as a global property over complex data manifolds.
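The algebra behind this equivalence is short. The sketch below assumes x_t = α_t x_0 + σ_t ε with the standard relation s_θ = -ε_θ / σ_t between score and noise predictions; the weights shown follow from that assumption and are not copied from the paper.

```latex
% Conditional score of the Gaussian perturbation kernel:
\nabla_{x_t} \log p_t(x_t \mid x_0) = -\frac{\varepsilon}{\sigma_t}

% Score loss versus epsilon loss (same error, reweighted by 1/\sigma_t^2):
\bigl\| s_\theta(x_t,t) - \nabla_{x_t}\log p_t(x_t \mid x_0) \bigr\|^2
  = \frac{1}{\sigma_t^2}\,\bigl\| \varepsilon_\theta(x_t,t) - \varepsilon \bigr\|^2

% Velocity target and prediction, with x_0 eliminated via x_0 = (x_t - \sigma_t\varepsilon)/\alpha_t:
v = \dot\alpha_t\,x_0 + \dot\sigma_t\,\varepsilon,
\qquad
v_\theta - v = \Bigl(\dot\sigma_t - \frac{\dot\alpha_t\,\sigma_t}{\alpha_t}\Bigr)
               \bigl(\varepsilon_\theta - \varepsilon\bigr)

% Rectified flow, \alpha_t = 1 - t,\ \sigma_t = t:
\bigl\| v_\theta - v \bigr\|^2
  = \frac{1}{(1-t)^2}\,\bigl\| \varepsilon_\theta - \varepsilon \bigr\|^2
```

Each loss is the same squared error on the noise prediction multiplied by a time-dependent weight, so the parameterizations differ only in how they weight noise levels.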

Practical and Theoretical Implications

- Unified Analytical Framework: All SDE-based and ODE-based diffusion models can be regarded as alternative splittings of Langevin dynamics, simplifying comparative analyses and bridging the terminology across the literature.

- Loss Function Engineering: Deriving losses from first principles clarifies the effect of weighting on the optimization landscape, supporting informed choices in model design and training heuristics.

- Parameterization Choice: Conversions between outputs allow for hybrid or adaptive approaches to match the problem structure without sacrificing theoretical guarantees.

- Score Estimation Universality: Since all routes converge to the estimation of time-dependent scores, advances in score estimator architectures or initialization strategies will have cross-cutting benefits.

Future Directions

Potential extensions include leveraging the Langevin unification for innovation in conditional sampling, inpainting, or fast and exact inference variants (e.g., training-free inpainting, as in related work), as well as systematic study of discretization error and geometric properties of the generative path in high-dimensional state spaces. The equivalence between parameterizations suggests that progress in one approach (e.g., improved velocity networks for flow matching) can be ported to classic score-based models seamlessly, accelerating advancements across the field.

Conclusion

This work refines the conceptual and technical toolkit for diffusion-based generative modeling by systematizing the theory around the simple yet powerful framework of Langevin dynamics. Through this lens, the superficial diversity of architectures, parameterizations, and objectives collapses to a set of equivalent representations, all targeting maximum likelihood estimation of time-evolving (or time-reversed) score fields (2604.10465). This perspective strengthens cross-model interpretability, facilitates theoretical analysis, and provides clarity for future research and informed model selection.