- The paper demonstrates a significant increase in lexical complexity and a decline in readability in cybersecurity papers following the adoption of generative AI.

- It employs detailed syntactic and lexical metrics as well as marker word frequency analysis to capture evolving writing styles over 25 years.

- It highlights varied, delayed conference policy responses regarding AI usage, emphasizing challenges in standardizing disclosure and ensuring accessible scientific communication.

The Impact of Generative AI on Writing Style in Top-Tier Cybersecurity Papers

Introduction

The release of ChatGPT in late 2022 marked an inflection point in the use of generative AI (GenAI) for academic writing, particularly in highly competitive domains such as cybersecurity. This study systematically examines the linguistic and policy consequences of GenAI adoption across 25 years of publications in four A* cybersecurity conferences. The analysis uses syntactic and lexical metrics, together with the frequency of curated marker words, to quantify how writing norms have changed. The findings reveal pronounced shifts in writing style after 2022 and considerable variation in how conferences have responded to GenAI.

Policy Evolution in A* Cybersecurity Conferences

Although the community quickly took up large language models (LLMs) for manuscript preparation, formal policy adoption by top conferences lagged. The study documents that conference guidelines concerning GenAI were non-existent in most venues until 2025, at which point NDSS enacted explicit policies. Other flagship venues (USENIX Security, IEEE S&P, ACM CCS) followed suit in 2026, with varying stipulations regarding author responsibility, disclosure of GenAI usage, acceptability of AI-assisted grammar correction, and environmental impact reporting.

All four conferences require authors to take full responsibility for AI-assisted content. However, structured declaration sections and environmental disclosures are mandated only by a subset of venues, notably IEEE S&P. The lack of policy standardization creates ambiguity for authors who submit to multiple venues and impedes the establishment of consistent community norms.

Quantitative Analysis of Linguistic Shifts

Lexical Complexity and Readability

Analyzing the text of all accepted papers from 2000 to 2025, the study finds that the release and subsequent widespread adoption of GenAI tools coincide with measurable increases in lexical complexity and a reduction in readability.

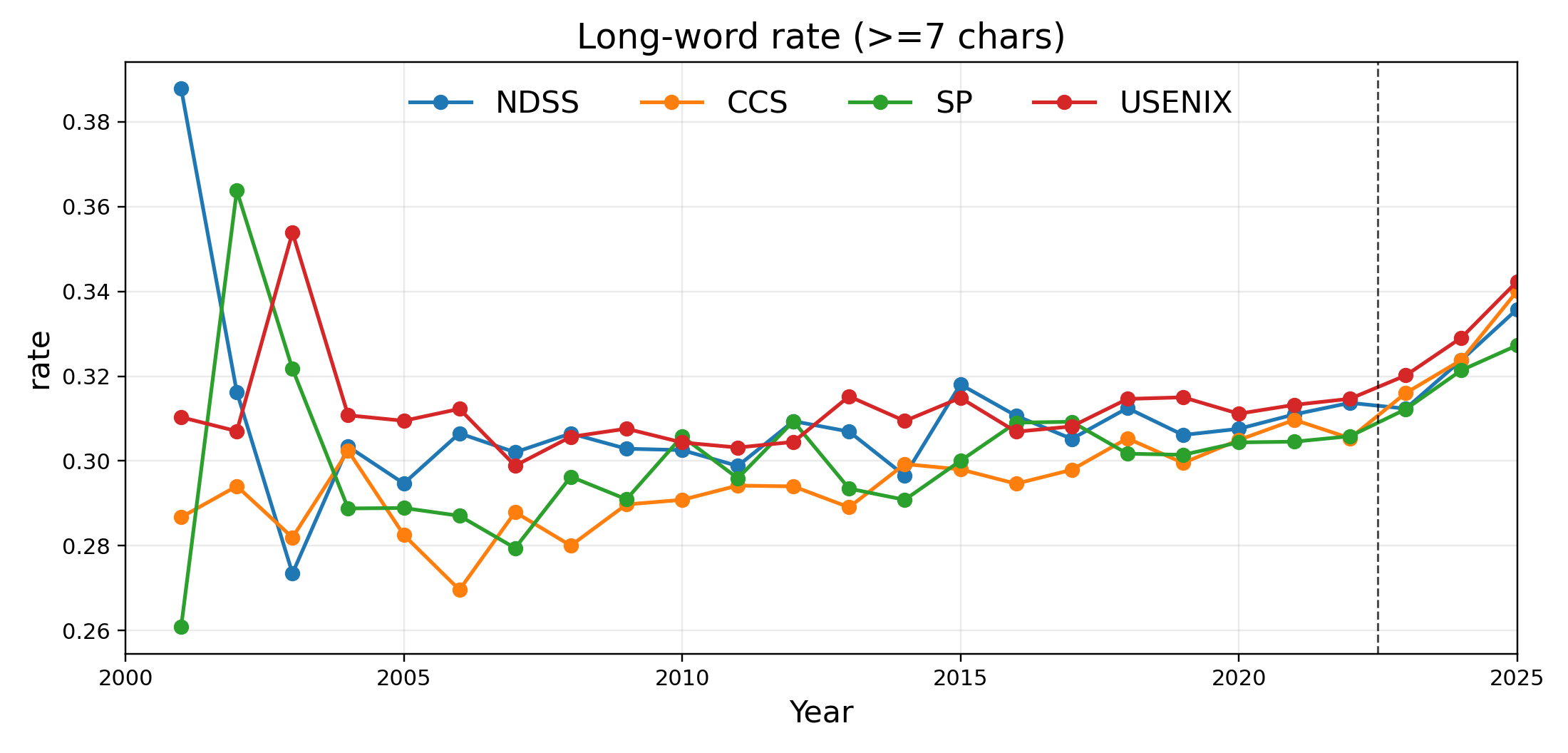

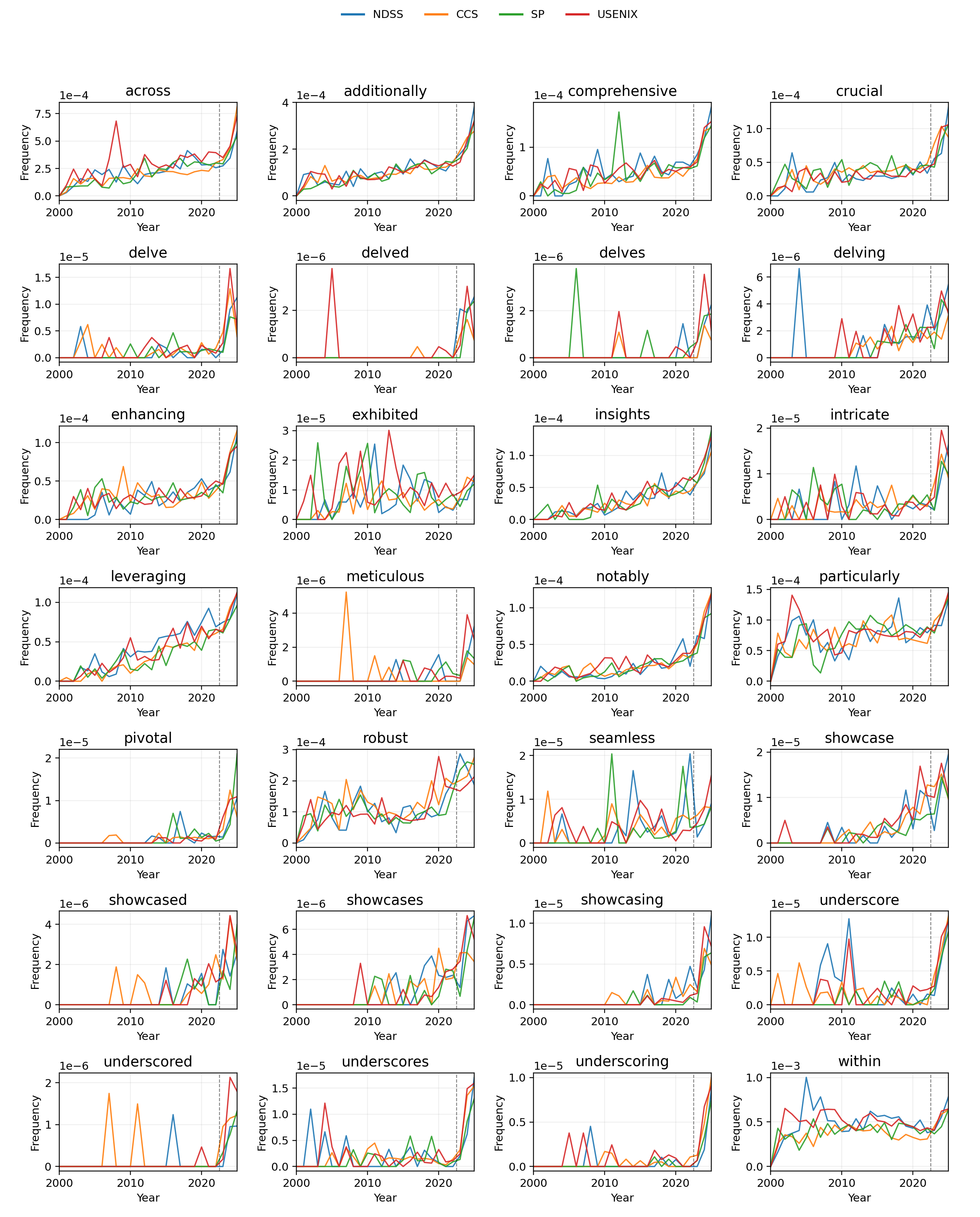

The proportion of long words (≥7 characters) rises markedly starting in 2022 and continues upward through 2025.

Figure 1: The average percentage of words in a paper consisting of at least 7 characters across all venues.

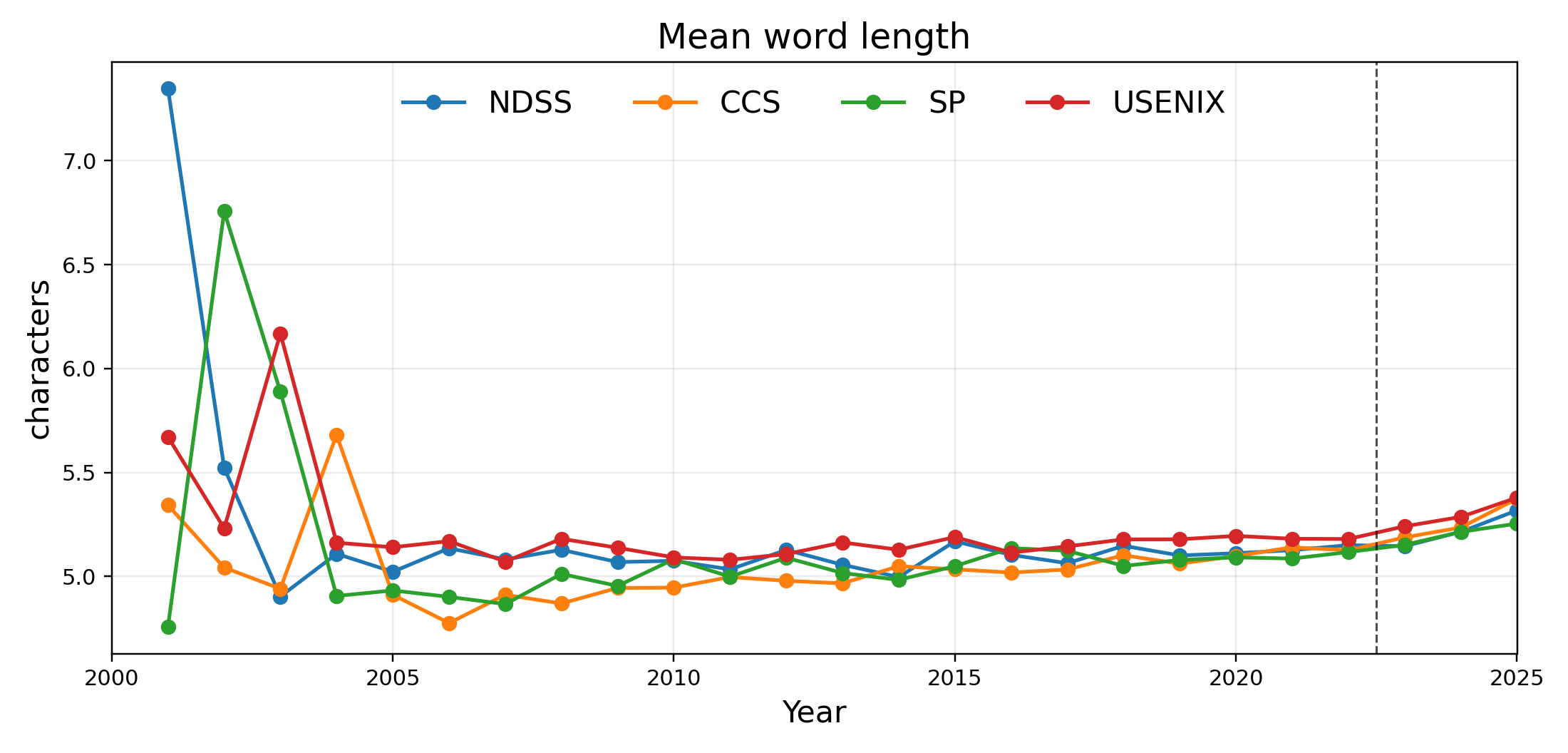

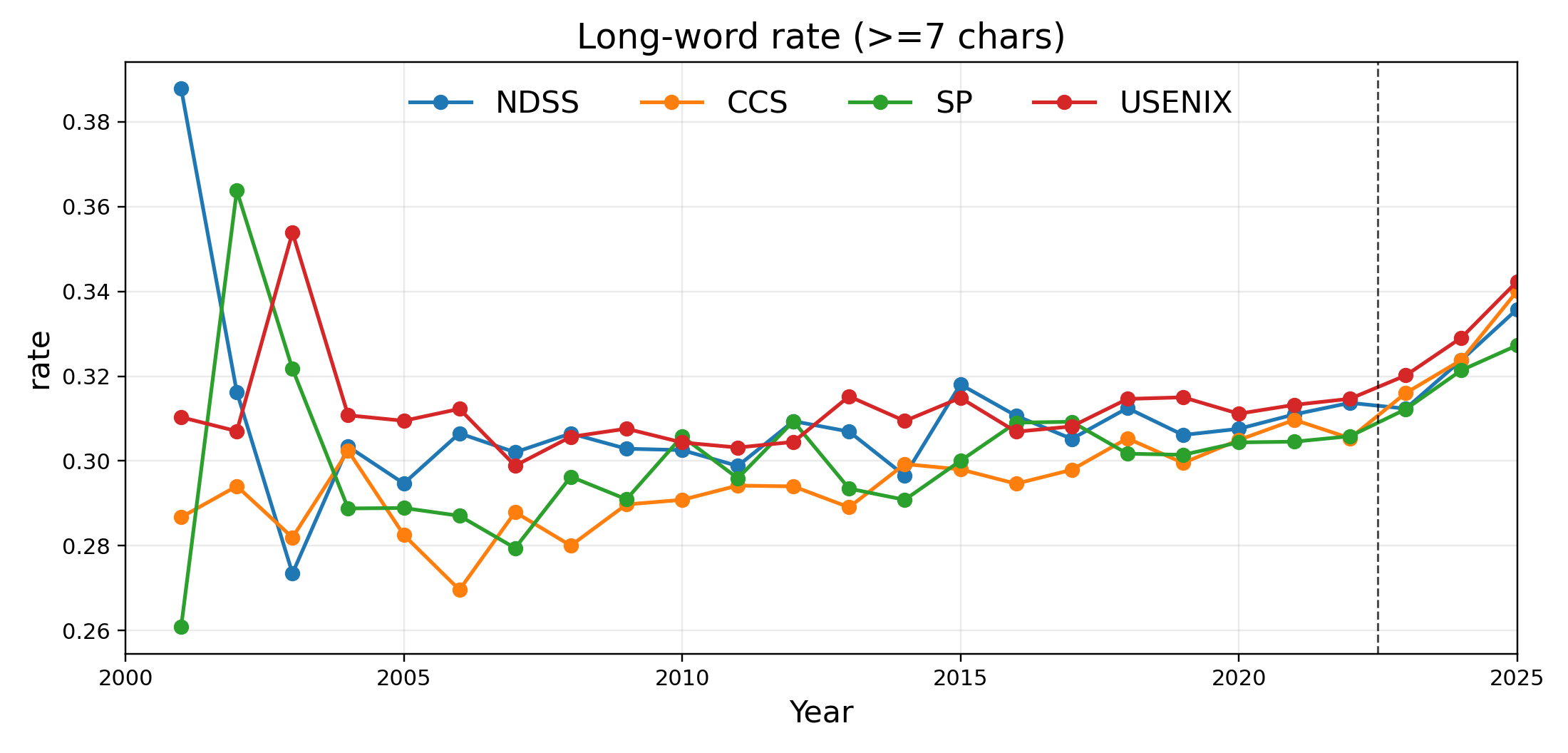

The mean word length across all tokens shows a similar rise after 2022.

Figure 2: The mean length of words averaged over all papers by year, showing an increase after 2022.
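The two lexical metrics above are simple to compute per paper. The following is a minimal sketch, assuming a plain alphabetic tokenizer; the function names and the tokenization rule are illustrative choices, not the study's actual pipeline, which is not described here.

```python
import re

# Assumption: a "word" is a run of ASCII letters. The study's real
# tokenizer is not specified, so this is only an approximation.
WORD_RE = re.compile(r"[A-Za-z]+")


def tokenize(text):
    """Return the list of alphabetic tokens in the text."""
    return WORD_RE.findall(text)


def long_word_share(text, min_len=7):
    """Percentage of words with at least `min_len` characters."""
    words = tokenize(text)
    if not words:
        return 0.0
    long_words = sum(1 for w in words if len(w) >= min_len)
    return 100.0 * long_words / len(words)


def mean_word_length(text):
    """Average number of characters per word."""
    words = tokenize(text)
    if not words:
        return 0.0
    return sum(len(w) for w in words) / len(words)
```

For the sentence "We delve into enhancing security", two of the five words have at least 7 characters, so `long_word_share` gives 40 percent and `mean_word_length` gives 5.6. Averaging these per-paper values by publication year would yield curves of the kind shown in Figures 1 and 2.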

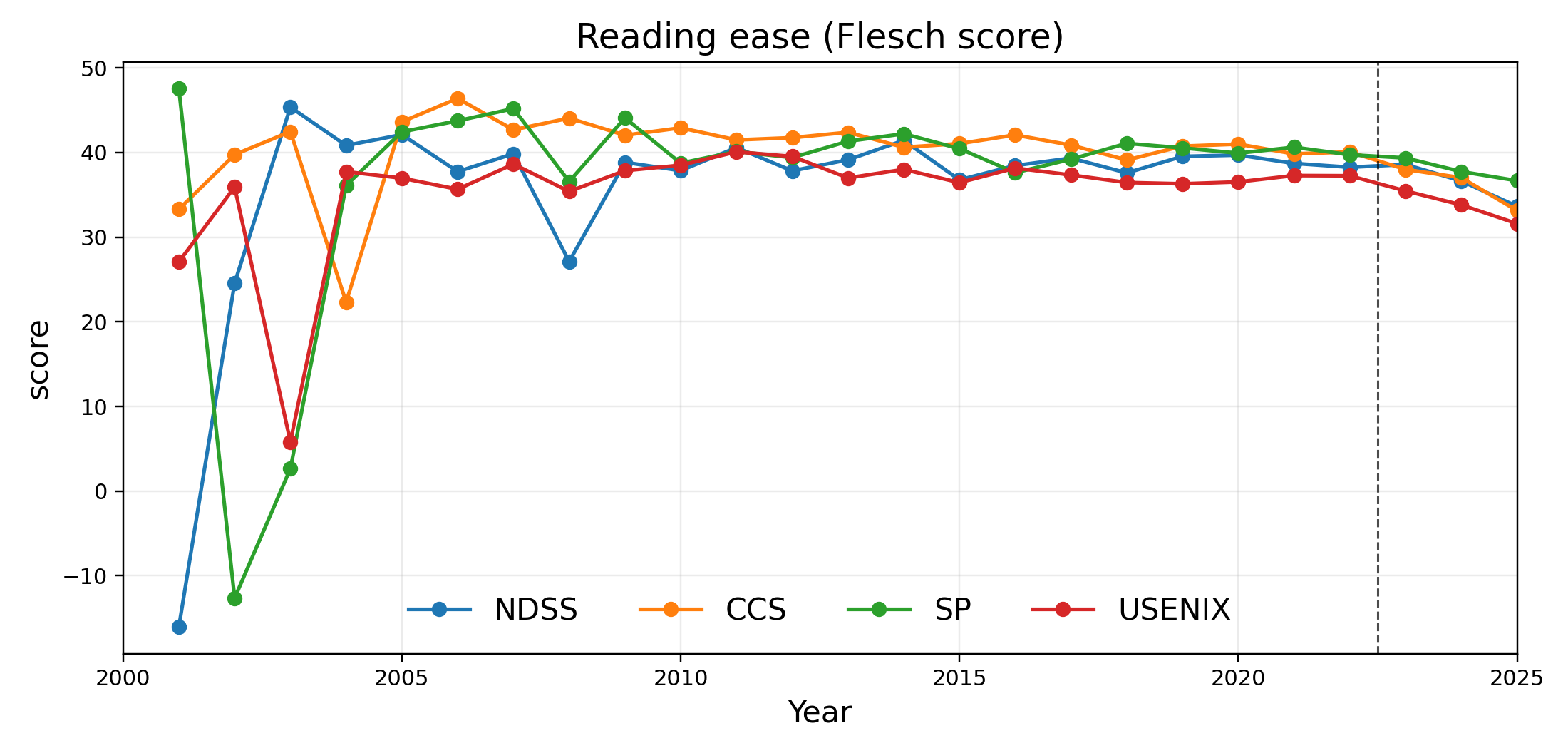

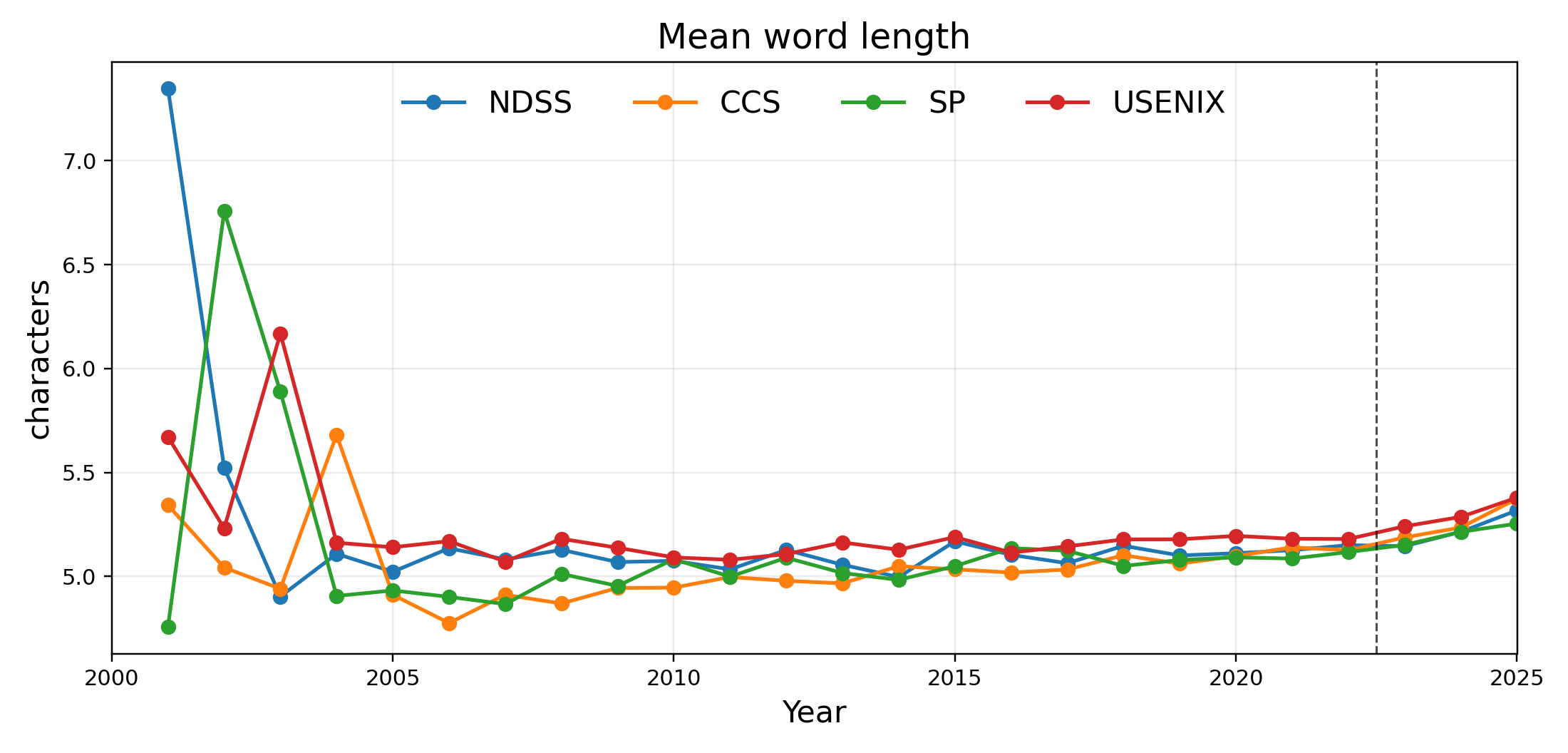

Perhaps most consequentially, the Flesch Reading Ease scores for cybersecurity papers show a sustained decline after 2022, suggesting that the increased lexical complexity is translating into less accessible, more technical prose.

Figure 3: The average Flesch reading ease for papers by year, showing a decrease after 2022.
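For reference, the standard Flesch Reading Ease formula is 206.835 − 1.015 × (words per sentence) − 84.6 × (syllables per word), so longer sentences and longer words both lower the score. The sketch below applies that formula with a deliberately crude syllable counter (vowel groups, minus a trailing silent "e") and a naive sentence splitter. Both heuristics are assumptions made for illustration, and the tooling used in the study may count differently.

```python
import re


def count_syllables(word):
    """Rough syllable estimate: count vowel groups, drop a silent final 'e'.

    This is a heuristic, not a dictionary lookup, and will miscount
    some words.
    """
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_reading_ease(text):
    """Flesch Reading Ease; higher scores mean easier text."""
    # Assumption: sentences end at '.', '!' or '?'.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z]+", text)
    if not sentences or not words:
        raise ValueError("text needs at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word
```

Because the syllables-per-word term carries the largest coefficient, a shift toward longer vocabulary of the kind reported above is enough to pull the score down even when sentence length stays constant.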

Marker Words and LLM Stylistics

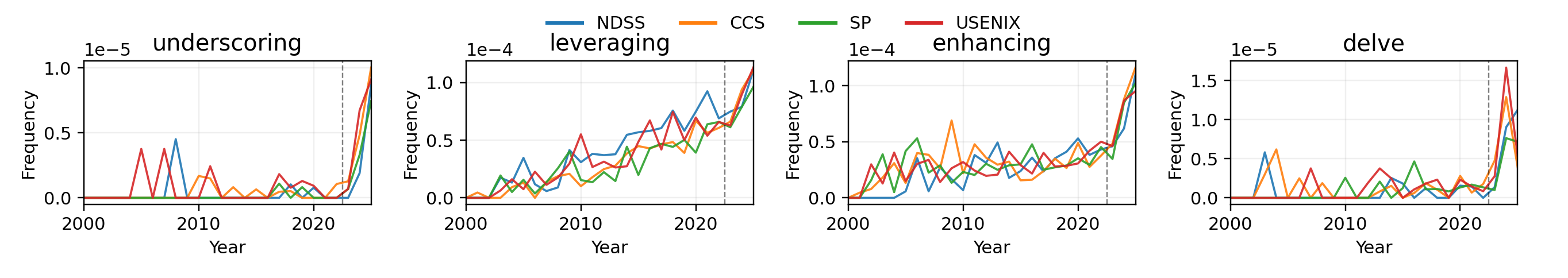

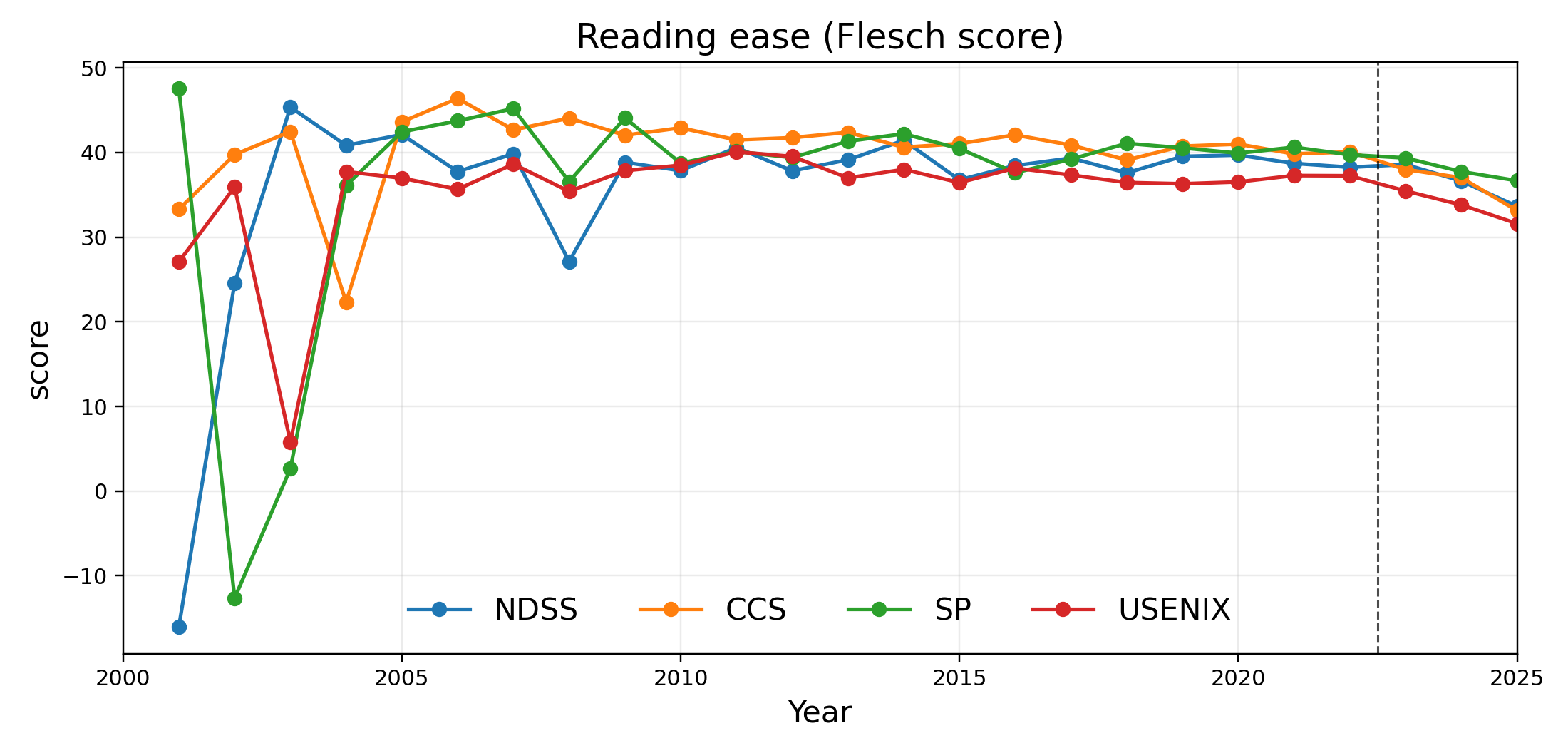

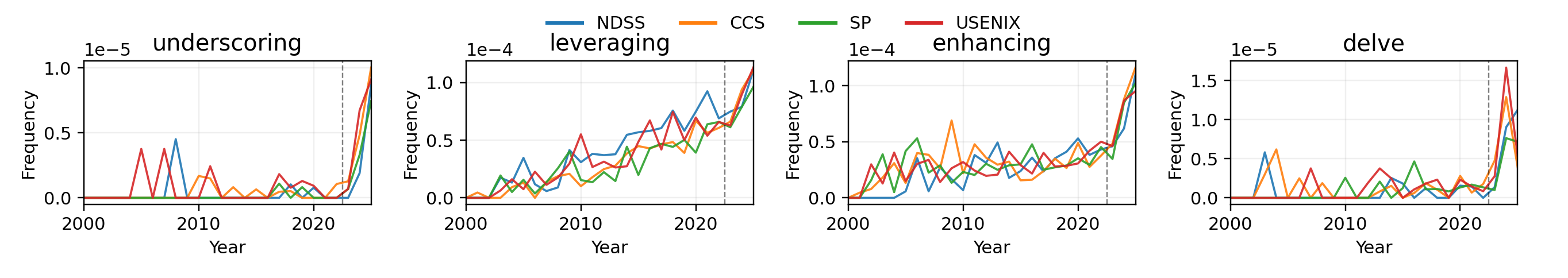

The study leverages marker words previously identified as characteristic of GenAI-assisted text, tracking their frequency over time. Terms such as "enhancing," "delve," and "underscoring" show sharp, discontinuous increases in usage following the release of ChatGPT.

Figure 4: Frequency trends for four words likely influenced by GenAI, showing sharp increases after 2022.
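Marker-word tracking of this kind amounts to counting occurrences per year and normalizing by the total number of words published that year. The sketch below is one way to do it, under stated assumptions: the `MARKERS` tuple contains only the words named in this summary rather than the study's full list, matching is on exact lowercase tokens with no lemmatization (so "delves" would not count as "delve"), and the rate is expressed per 10,000 words as an arbitrary choice.

```python
import re
from collections import Counter, defaultdict

# Assumption: illustrative subset; the study tracks a larger curated list.
MARKERS = ("enhancing", "delve", "underscoring", "leveraging")


def marker_rates_by_year(papers, markers=MARKERS, per=10_000):
    """Compute marker-word rates per `per` words for each year.

    `papers` is an iterable of (year, text) pairs.
    Returns {year: {marker: rate}} with years in ascending order.
    """
    total_tokens = Counter()
    hits = defaultdict(Counter)
    for year, text in papers:
        tokens = re.findall(r"[a-z]+", text.lower())
        total_tokens[year] += len(tokens)
        counts = Counter(tokens)
        for marker in markers:
            hits[year][marker] += counts[marker]
    return {
        year: {
            m: (per * hits[year][m] / total_tokens[year]) if total_tokens[year] else 0.0
            for m in markers
        }
        for year in sorted(total_tokens)
    }
```

Normalizing by yearly token count matters because the number and length of accepted papers change over time; raw counts alone would rise even if writing style stayed the same.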

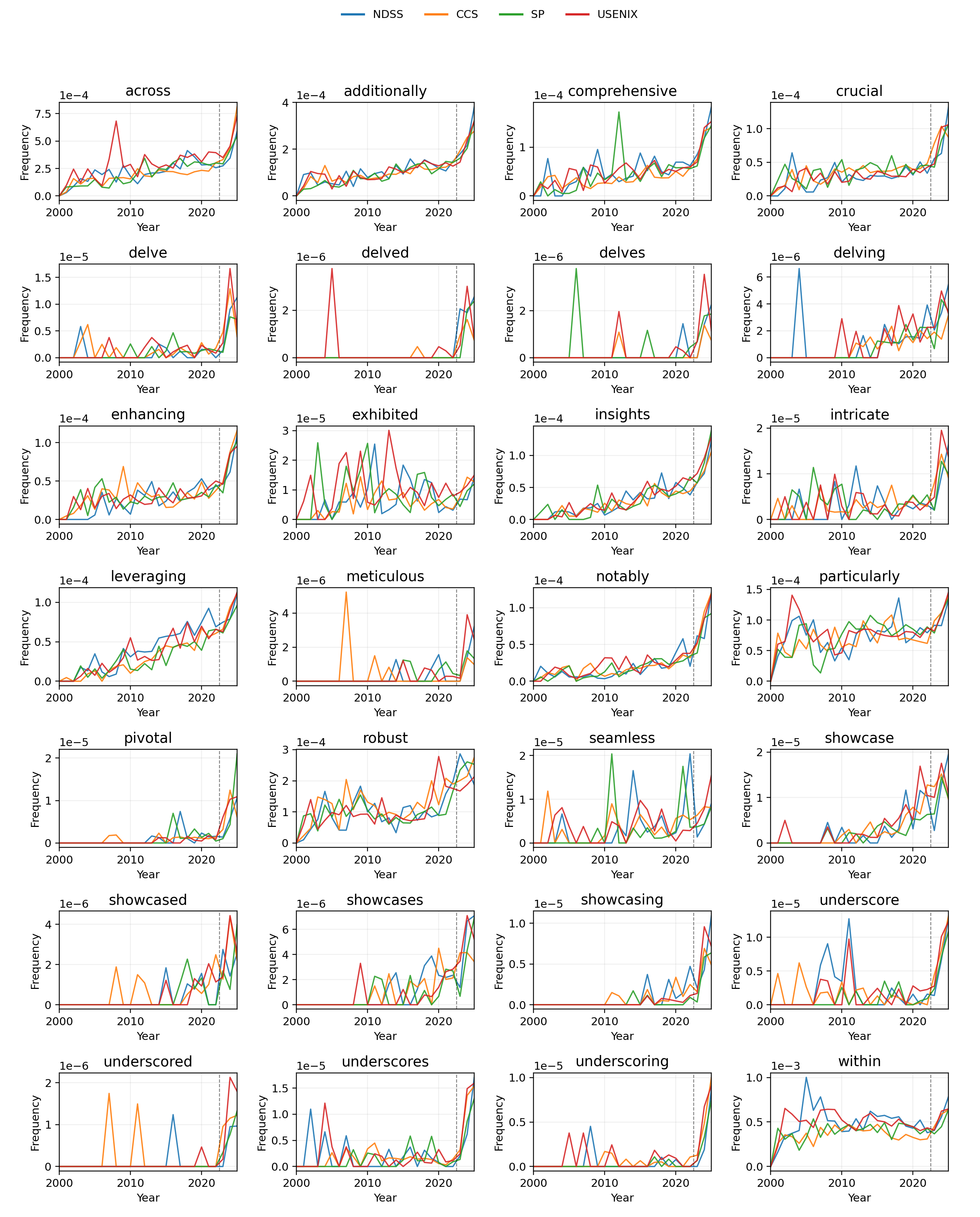

The full set of marker vocabulary shows consistent rises across nearly all tracked terms, though the specific trajectories vary between venues and appear to shift with major LLM releases (e.g., GPT-4.5).

Figure 5: Full set of tracked marker words showing longitudinal frequency trends.

Notably, some words such as "leveraging" were already trending upward before 2022, which complicates attributing their growth to GenAI alone. In contrast, "underscoring" and "enhancing" correlate more clearly with AI adoption because their baseline was relatively stable prior to 2022.
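One way to make the baseline argument concrete is to fit a linear trend to a word's pre-2022 yearly rates and measure how far later years sit above the extrapolated line. This is an illustrative sketch rather than the study's method; the function names and the default `cutoff` of 2022 are assumptions.

```python
def linear_fit(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def excess_over_trend(rates, cutoff=2022):
    """Observed rate minus the extrapolated pre-cutoff trend.

    `rates` maps year -> frequency of one marker word.
    Returns {year: excess} for every year >= cutoff.
    """
    pre = sorted((y, r) for y, r in rates.items() if y < cutoff)
    if len(pre) < 2:
        raise ValueError("need at least two pre-cutoff years to fit a trend")
    slope, intercept = linear_fit([y for y, _ in pre], [r for _, r in pre])
    return {
        y: r - (slope * y + intercept)
        for y, r in sorted(rates.items())
        if y >= cutoff
    }
```

A word with a flat baseline that jumps after the cutoff produces a large positive excess, while a word that simply continues its earlier growth produces an excess near zero, which is the distinction drawn between "underscoring" and "leveraging".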

Theoretical and Practical Implications

The findings indicate that generative AI is no longer merely an assistive tool for non-native writers but is actively altering the stylistic baseline of scientific prose in cybersecurity. While increased polish and technicality may benefit peer review and help authors reach a global audience, the measurable increase in lexical complexity and the decrease in readability suggest a cost for readers, particularly those outside the immediate sub-discipline or those with limited technical expertise.

The divergence in conference policies regarding GenAI raises serious questions about equitable research communication and about how to weigh AI's contribution against human authorship. Uniform policy frameworks and continued community monitoring are needed to strike a sustainable balance.

From a methodological perspective, the identified marker words and style metrics provide a basis for automated detection of GenAI-influenced writing, an area already under active investigation in related work (Geng et al., 2024, 2604.09316).

Speculation on Future Directions

Ongoing developments in LLM capabilities (e.g., GPT-4.5 and beyond) portend further stylistic shifts, possibly with new marker vocabulary and emergent idiomatic patterns. The prospect of highly adept, style-mimicking AI raises the bar for effective detection and disclosure mechanisms, making continuous linguistic surveillance and policy revision imperative.

Additionally, the observed reduction in reading ease underscores the risk that GenAI may unintentionally create barriers to entry, restricting knowledge dissemination if community best practices do not keep accessibility at the forefront.

Both for research reproducibility and for the integrity of scientific communication, it is essential that communities not only track but actively curate the role of generative models in scholarly writing.

Conclusion

This longitudinal analysis demonstrates that the adoption of generative AI, particularly post-2022, has imparted quantifiable stylistic changes to publications in top-tier cybersecurity conferences. These changes include a measurable rise in lexical complexity, lower readability scores, and surging use of characteristic marker words. The evolving policy ecosystem remains patchwork and reactive. As LLMs become ever more capable, the cybersecurity community must proactively address both the opportunities and the challenges posed by GenAI, ensuring that scientific communication remains clear, equitable, and accountable.