- The paper demonstrates that LLMs can catalyze design workflows by adopting diverse roles—from an information source to a full producer—during paired design sessions.

- The paper employs a mixed-method analysis using prompt timeline visualization and thematic coding to reveal distinct collaboration patterns and address context drift.

- The paper highlights practical implications for tool design by advocating for mechanisms that support divergent ideation, context tracking, and trust calibration in collaborative settings.

Examining the Role of LLMs in Collaborative Software Design

Introduction

Large language models (LLMs) have fundamentally transformed individual coding and development tasks, yet their function in group-based, human-centric activities such as collaborative software design remains underexplored. "The Role of LLMs in Collaborative Software Design" (2604.09120) presents an empirical investigation that addresses this gap by analyzing how 18 pairs of software professionals co-designed an application with optional LLM assistance. Through qualitative and quantitative analysis of interaction logs, session recordings, and interviews, the study shows how LLMs are appropriated across varying collaboration patterns and roles, and what this implies for design workflows and human initiative.

Study Design and Methodology

The authors ran a controlled remote laboratory study, tasking 36 participants (organized into 18 pairs) with designing a university campus bicycle parking application. Participants had professional experience with software design and varied levels of prior LLM exposure. The study supported ecological validity by permitting natural tool selection and offering both shared and separate LLM interfaces (backed by ChatGPT 3.5). The dataset included screen/audio session recordings, chat logs, design artifacts, and post-task interviews.

The authors' analytic pipeline comprised abductive coding of conversation logs, video analysis of real-time collaboration, and inductive thematic analysis of participant perspectives. This multilevel, mixed-method approach enabled characterization of interaction patterns, the types of assistance requested from the LLM, and socio-cognitive effects in the design process.

Patterns of LLM Use in Collaborative Design

The introduction of an LLM catalyzed diverse joint use patterns:

- Shared Instance Use: Analogous to pair programming; one participant acted as "driver." Promoted mutual awareness, coordinated prompt construction, and synchronized design artifact scrutiny.

- Parallel Use with Separate Instances: Each designer instantiated and prompted their own LLM. This enabled independent exploration but introduced a risk of "context drift," which manifested as inconsistencies between parallel design threads.

- Dynamic Transitions: Some pairs shifted interaction patterns mid-task, contingent on subtasks or as a response to collaboration breakdowns.

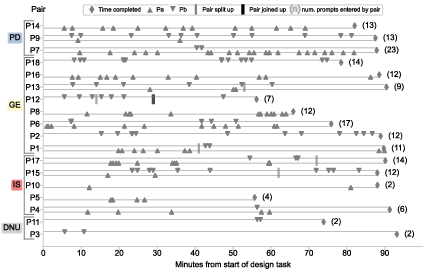

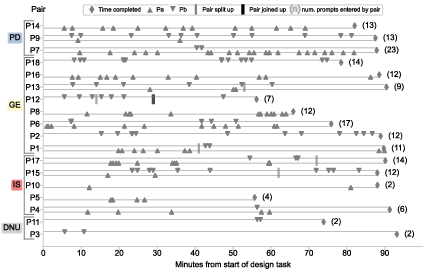

These patterns are visualized in the prompt entry timeline, which reveals the heterogeneity of LLM interaction across pairs, task phases, and subtask assignments.

Figure 1: Timeline of prompt entry by each partner in all pairs, grouped and annotated by LLM reliance (DNU=no role, IS=information source, GE=generator, PD=producer).
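To make the idea of a prompt entry timeline concrete, the following is a minimal sketch of how prompt logs might be binned into one text row per partner. The record layout (`PromptEvent`), the five-minute bin width, the 60-minute session length, and the sample data are all assumptions made for illustration; this summary does not describe the paper's actual log format or plotting method.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptEvent:
    """One prompt sent to the LLM (hypothetical log record)."""
    pair_id: str
    partner: str   # "A" or "B" within the pair
    minute: float  # minutes since session start


def text_timeline(events, session_minutes=60, bin_minutes=5):
    """Return {(pair_id, partner): row}; '#' marks a bin with at least one prompt."""
    n_bins = -(-session_minutes // bin_minutes)  # ceiling division
    rows = defaultdict(lambda: ["."] * n_bins)
    for e in events:
        # Clamp late events into the final bin.
        idx = min(int(e.minute // bin_minutes), n_bins - 1)
        rows[(e.pair_id, e.partner)][idx] = "#"
    return {key: "".join(cells) for key, cells in sorted(rows.items())}


# Illustrative data only, not taken from the study.
events = [
    PromptEvent("P1", "A", 2.0),
    PromptEvent("P1", "A", 14.5),
    PromptEvent("P1", "B", 31.0),
    PromptEvent("P2", "A", 58.0),
]

timeline = text_timeline(events)
for (pair, partner), row in timeline.items():
    print(f"{pair}-{partner} {row}")
```

In a layout like this, rows with prompts from only one partner would be consistent with a shared instance operated by a single "driver," while activity from both partners would be consistent with parallel use; the role labels themselves (DNU, IS, GE, PD) came from the authors' qualitative coding rather than from any such automatic rule.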

Analysis revealed four archetypal roles assigned to the LLM:

- No Role: Two pairs effectively excluded the LLM due to perceived trust deficits or sufficient team expertise.

- Information Source: The LLM functioned as an on-demand knowledge retrieval or search device; prompts elicited focused facts (e.g., technology APIs), mirroring web search behaviors.

- Generator: The LLM was tasked with artifact instantiation (e.g., UML class diagrams, API definitions). These outputs served as seeds for further human-led refinement and discussion.

- Producer: For three pairs, the LLM directly generated comprehensive sections of the design or even orchestrated the entire design solution, with humans relegated to prompt engineering and evaluative oversight.

Assignment of these roles was not determined by seniority or LLM experience; rather, it reflected joint negotiation, comfort with GenAI, and evolving perceptions of LLM utility during the session.

Interpretation and Negotiation of LLM Output

Regardless of the LLM's role, human designers remained the locus of critical reasoning:

- Scrutiny and Reflection: Designers rigorously critiqued LLM suggestions by checking them against the product requirements document (PRD), mapping outputs to their own mental models, and employing visual tools for validation.

- Anchoring and Fixation: Initial LLM outputs often anchored design trajectories, occasionally curtailing exploration of alternatives and inducing early convergence—a phenomenon previously noted as design fixation [NERONI2019180, wadinambiarachchiEffectsGenerativeAI2024].

- Selective Adoption and Iteration: LLM-generated artifacts were typically not adopted unconditionally. Partial integration, deletion, or iterative prompting to refine, correct, or explore alternatives were routine.

- Trust Mediation: Perceived LLM hallucinations or superficial reasoning prompted conservative incorporation strategies and reinforced the necessity of human review [zhangHallucinations2025, wangInvestigatingDesigningTrust2023].

Cognitive and Socio-Technical Implications

Participant interviews surfaced nuanced implications:

- Trust and Verification: Participants highlighted the ongoing need to verify LLM outputs independently because of the models' tendency to hallucinate, which supports the case for interface features that facilitate credibility assessment.

- Human Partnership Value: Designers valued the dialogical aspect of human partners, especially for context elaboration, critical questioning, and compensating for knowledge gaps unaddressed by LLM prompting.

- Confidence and Comfort: LLMs contributed to users’ confidence via “sanity checks,” increased perceived coverage, and support in addressing design “unknowns.”

- Task-Scenario Suitability: LLMs were seen as better suited to early-stage ideation, greenfield solutions, and routine artifact generation than to deep, context-specific reasoning.

The study enumerates actionable implications for next-generation AI-assisted design environments:

- Facilitate Divergence and Merging: Tools should enable forking and merging of design contexts to support parallel exploration and later synthesis, mitigating anchoring and context-drift effects.

- Contextual Awareness: Mechanisms to track and highlight evolving conversational context across team and LLM instances are critical for maintaining synchronization.

- Bias Mitigation: Interfaces or agentic interventions that explicitly challenge early convergence should be explored to support divergent thinking and counter premature fixation.
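The fork/merge and context-tracking ideas above can be sketched as a small data structure. This is a hypothetical illustration, not a design proposed by the paper: `DesignContext`, `merge`, and the sample decisions are invented names and data, and the merge rule is a plain three-way merge borrowed from version control.

```python
from dataclasses import dataclass, field


@dataclass
class DesignContext:
    """Design decisions plus the prompts that led to them (hypothetical)."""
    decisions: dict = field(default_factory=dict)  # topic -> chosen option
    prompts: list = field(default_factory=list)

    def fork(self):
        """Copy the context so one designer can explore independently."""
        return DesignContext(dict(self.decisions), list(self.prompts))

    def decide(self, topic, option, prompt):
        self.decisions[topic] = option
        self.prompts.append(prompt)


def merge(base, left, right):
    """Three-way merge returning (merged_decisions, conflicts).

    A conflict is a topic that both forks changed, relative to base, to
    different options: a spot where the threads have drifted apart.
    """
    merged, conflicts = dict(base.decisions), {}
    for topic in sorted(set(left.decisions) | set(right.decisions)):
        base_v = base.decisions.get(topic)
        left_v = left.decisions.get(topic, base_v)
        right_v = right.decisions.get(topic, base_v)
        if left_v == right_v:
            merged[topic] = left_v
        elif left_v == base_v:      # only the right fork changed it
            merged[topic] = right_v
        elif right_v == base_v:     # only the left fork changed it
            merged[topic] = left_v
        else:
            conflicts[topic] = (left_v, right_v)
    return merged, conflicts


# Illustrative session: two designers fork a shared context.
base = DesignContext()
base.decide("storage", "PostgreSQL", "Which database fits reservations?")
a, b = base.fork(), base.fork()
a.decide("auth", "university SSO", "How should students log in?")
a.decide("map", "Leaflet", "Suggest a map library.")
b.decide("map", "Google Maps", "Which map API should we use?")

merged, conflicts = merge(base, a, b)
print(merged)     # {'storage': 'PostgreSQL', 'auth': 'university SSO'}
print(conflicts)  # {'map': ('Leaflet', 'Google Maps')}
```

Surfacing conflicts explicitly, rather than silently picking one side, reflects the study's observation that humans negotiate divergent results; a real tool would also need to handle removed decisions and free-form artifacts, which this sketch ignores.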

The findings underscore that human-led reflection, initiative, and negotiation remain central; the LLM operates as a catalytic, not substitutive, actor in design.

The results extend prior work that observed LLMs' primary utility as code accelerators or routine artifact generators [barkeGroundedCopilotHow2023, khojahCodeGenerationObservational2024, liangLargeScaleSurveyUsability2024]. Unlike individual use, collaborative design gives rise to a richer set of LLM roles and demands more coordination, alignment, and negotiation. The study also adds nuance to the understanding of trust dynamics, self-efficacy, and the preservation of human agency, themes echoed in recent work on UX and GenAI adoption [liUserExperienceDesign2024, lambiaseCulturalAdoptionLLM2025, kim2025exploring].

From a theoretical standpoint, the LLM is not merely a tool or passive actor, but a boundary object that shapes, constrains, and catalyzes design discourse [star1989institutional]. The research demonstrates that human designers retain epistemic authority, using LLMs as dynamic resources in a fundamentally co-creative process.

Conclusion

This study provides a comprehensive, fine-grained characterization of how LLMs are enrolled in collaborative software design: as silent specters, search engines, artifact generators, and even as full design producers. Despite the broad spectrum of roles and collaboration modalities, human designers keep epistemic control, critically filtering, transforming, and negotiating LLM suggestions. Robust LLM integration into collaborative settings thus requires explicitly supporting divergent ideation, context management, and interface affordances for trust calibration. Further work should pursue longitudinal, in-situ studies and meta-analyses linking collaboration patterns to design quality and creativity.