- The paper presents Gaussian Wrapping, which endows each 3D Gaussian with a learnable orientation to enable robust, closed-form surface extraction.

- It leverages analytic normal and vacancy fields derived from oriented Gaussians, improving mesh fidelity and handling thin structures effectively.

- The method demonstrates state-of-the-art performance on benchmarks, offering efficient, compact, and watertight mesh recovery with reduced artifacts.

High-Fidelity Surface Reconstruction from 3D Gaussian Splatting via Oriented Gaussians

Introduction and Motivation

The paper "From Blobs to Spokes: High-Fidelity Surface Reconstruction via Oriented Gaussians" (2604.07337) proposes a principled extension to 3D Gaussian Splatting (3DGS) for explicit, high-fidelity, watertight mesh recovery from multi-view imagery. While 3DGS enables real-time, high-quality novel view synthesis by representing scenes with rasterized 3D Gaussian primitives, it lacks a geometric field (e.g., SDF, occupancy) necessary for robust, unbiased surface extraction. Existing methods either fuse heuristic depth maps or co-train an implicit branch, resulting in ad-hoc extraction, smoothing, or geometric erosion, and typically fail to reconstruct thin structures or complete background geometry robustly.

The authors address this disconnect between a rasterization-oriented formulation and explicit geometry recovery by introducing Gaussian Wrapping: a framework in which each 3D Gaussian is endowed not only with isotropic opacity but also with a learnable orientation (normal), which makes it possible to define a reciprocal oriented attenuation field. This modification bridges the gap, in theory and in practice, between 3DGS and the Objects as Volumes (OaV) stochastic geometry framework, allowing closed-form, differentiable computation of occupancy (vacancy) and normal fields as well as principled surface extraction.

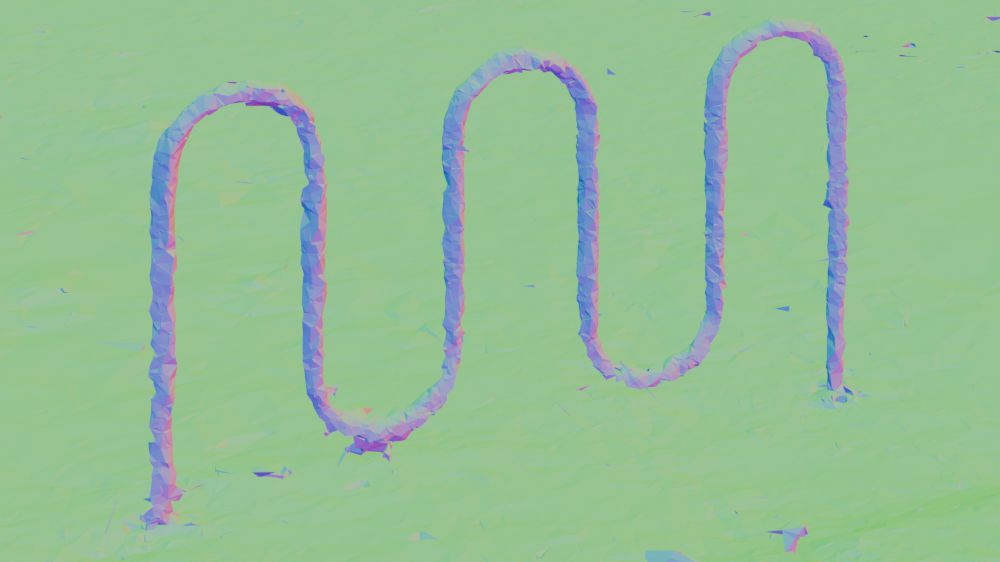

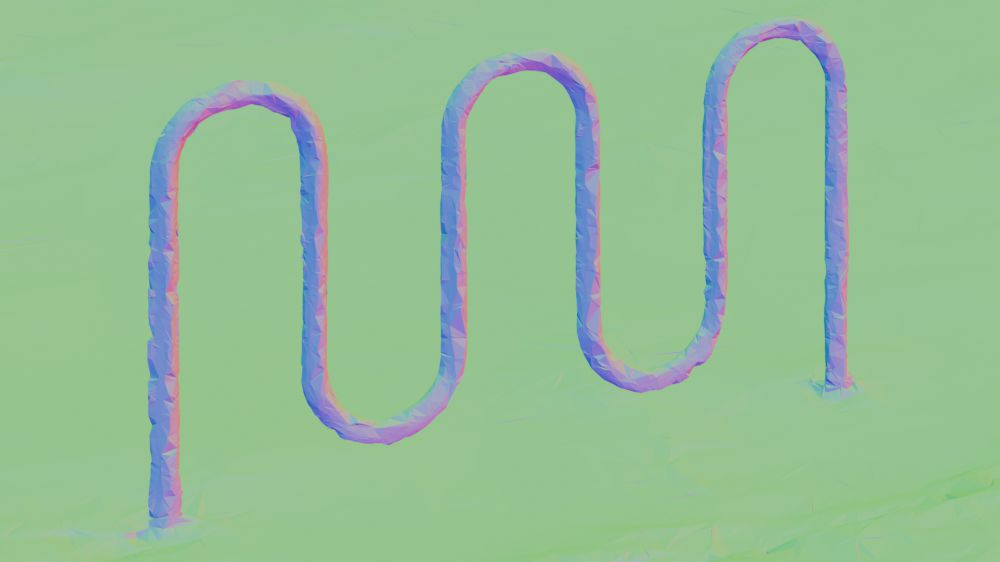

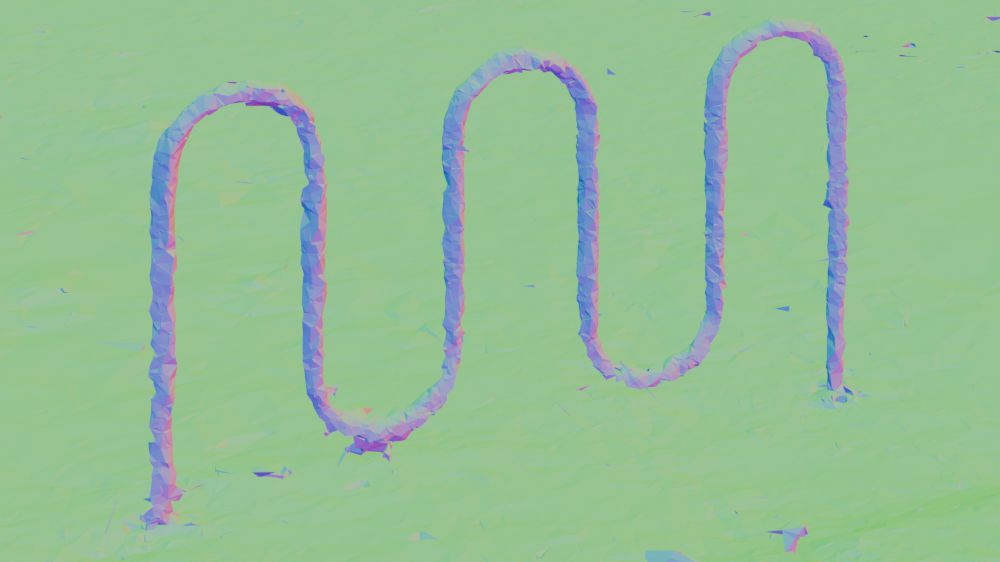

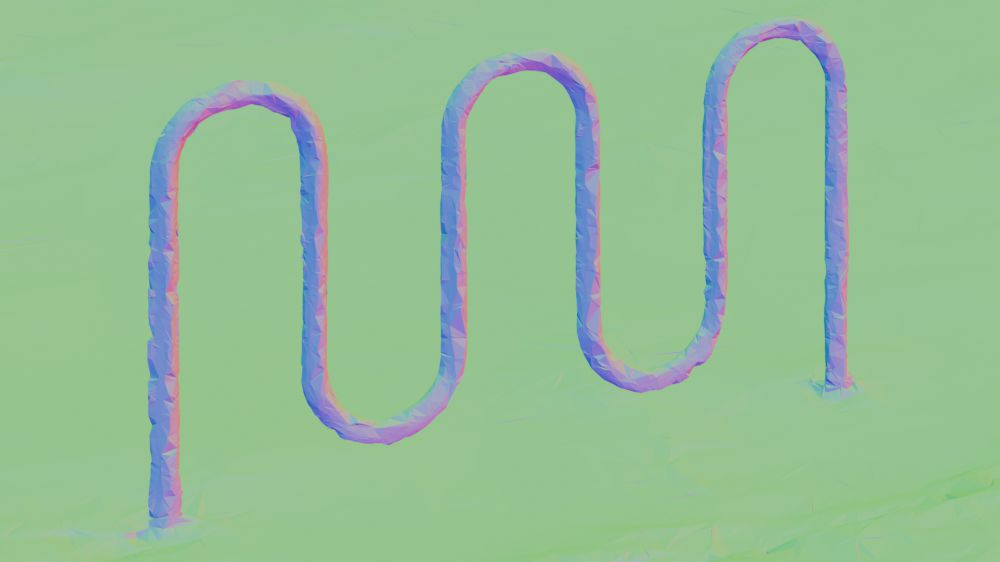

Figure 1: High-fidelity surface meshes (with and without texture) reconstructed from multiview RGB, including thin structures (spokes) and compact backgrounds, via the proposed Gaussian Wrapping method.

The work sits at the confluence of neural rendering, implicit and explicit representations, and stochastic geometry. It builds on the OaV formalism, which connects attenuation-based rendering to stochastic occupancy and surface normal fields via reciprocal transport assumptions. Unlike SDF-based renderers (e.g., NeuS, VolSDF) that are theoretically amenable to explicit mesh extraction but computationally demanding and biased towards over-smoothing, 3DGS is a particle-based rasterizer with no global density field, lacking a direct means to define and extract boundary surfaces. Previous hybrid solutions either regularize Gaussians ad hoc, fuse depth heuristics, or jointly optimize an implicit (MLP) branch, typically falling short in mesh fidelity, watertightness, and coverage of fine or out-of-distribution geometry.

The core theoretical advance here is to view 3D Gaussians as oriented surface elements. Each primitive is parameterized not only by position and scale but by a learned outward normal. The attenuation (extinction) field generated by this ensemble becomes reciprocal and, crucially, enables closed-form expressions for vacancy and normal fields:

$$V(x) = \nabla \log v(x) = \sum_{i=1}^{N} \nabla \log\bigl(1 - G_i(x)\bigr), \qquad N(x) = \frac{V(x)}{\lVert V(x) \rVert}.$$

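Here v(x) is the vacancy, which the sum of logarithms implies is the product of the per-Gaussian terms 1 − G_i(x), and N(x) is the unit normal field obtained by normalizing V(x). These analytic gradients are valid in the OaV sense wherever Gaussians are incident on the true object boundary, enabling both geometry-aware training and robust isosurface-based extraction.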
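As a rough illustration of how this closed-form field can be evaluated, the NumPy sketch below computes v(x), V(x), and N(x) at a single query point. It assumes a plain opacity-weighted Gaussian, `G_i(x) = alpha_i * exp(-0.5 * d^T S_i d)` with `d = x - mu_i`, as a stand-in for the paper's oriented primitive; the function name, argument names, and this exact form of `G_i` are assumptions made for the example, not the authors' implementation.

```python
import numpy as np

def vacancy_and_normal(x, mu, sigma_inv, alpha, eps=1e-8):
    """Evaluate v(x), V(x) = grad log v(x) and N(x) = V / ||V|| at one point.

    Assumed (hypothetical) primitive: G_i(x) = alpha_i * exp(-0.5 * d_i^T S_i d_i),
    where d_i = x - mu_i and S_i is the symmetric inverse covariance.
    """
    d = x[None, :] - mu                                       # (N, 3) offsets to centers
    Sd = np.einsum("nij,nj->ni", sigma_inv, d)                # (N, 3) S_i d_i
    G = alpha * np.exp(-0.5 * np.einsum("ni,ni->n", d, Sd))   # (N,) per-Gaussian terms
    G = np.clip(G, 0.0, 1.0 - eps)                            # keep log(1 - G) finite
    v = np.prod(1.0 - G)                                      # vacancy
    # grad log(1 - G_i) = -grad G_i / (1 - G_i) = G_i * S_i d_i / (1 - G_i)
    V = np.sum((G / (1.0 - G))[:, None] * Sd, axis=0)
    N = V / max(np.linalg.norm(V), eps)                       # unit normal field
    return v, V, N

# One isotropic Gaussian at the origin, queried on the +x axis.
mu = np.zeros((1, 3))
sigma_inv = np.eye(3)[None]
alpha = np.array([0.9])
v, V, N = vacancy_and_normal(np.array([1.0, 0.0, 0.0]), mu, sigma_inv, alpha)
```

With this sign convention V(x) points toward increasing vacancy, that is, away from the Gaussian's center, so N(x) behaves like an outward normal for an isolated primitive.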

Figure 2: The proposed pipeline—wrapping the scene boundary with oriented Gaussians, evaluating the normal and vacancy fields, and leveraging them for mesh extraction via pivot-based and adaptive methods.

Gaussian Wrapping: Methodology

Orientation and Densification

A novel set of losses enables Gaussians to "wrap" the underlying surface:

- Normal Alignment Loss: The oriented normal parameter for each Gaussian is supervised to align with the image-space gradient of the rendered depth, through an energy that penalizes the discrepancy between splatted normals and geometric gradients.

- Densification: Areas with high normal inconsistency (gaps in the wrapping shell) are targeted for duplication; Gaussians in these regions are cloned with flipped normals, effectively filling holes in the mesh hypothesis and ensuring coverage.
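The normal alignment term can be sketched in a few lines. The example below derives normals from a depth map by finite differences of back-projected points and compares them with rendered normals through a cosine penalty. The pinhole intrinsics (`fx`, `fy`, `cx`, `cy`), the function names, and the `1 - cos` form of the penalty are assumptions for illustration; the paper's exact energy may differ, and a training loop would use an autodiff framework rather than plain NumPy.

```python
import numpy as np

def normals_from_depth(depth, fx, fy, cx, cy):
    """Camera-space normals from finite differences of back-projected depth.

    Returns an (H-1, W-1, 3) array of unit normals oriented toward the camera.
    """
    H, W = depth.shape
    u, v = np.meshgrid(np.arange(W), np.arange(H))            # pixel grid, shape (H, W)
    pts = np.stack([(u - cx) / fx * depth,
                    (v - cy) / fy * depth,
                    depth], axis=-1)                          # (H, W, 3) 3D points
    du = pts[:-1, 1:] - pts[:-1, :-1]                         # step along image x
    dv = pts[1:, :-1] - pts[:-1, :-1]                         # step along image y
    n = np.cross(dv, du)                                      # -z for a fronto-parallel plane
    return n / np.maximum(np.linalg.norm(n, axis=-1, keepdims=True), 1e-8)

def normal_alignment_loss(rendered_normals, depth_normals):
    """Mean (1 - cosine) between two unit-normal maps of equal shape."""
    cos = np.sum(rendered_normals * depth_normals, axis=-1)
    return float(np.mean(1.0 - cos))

# A flat, fronto-parallel depth map yields normals facing the camera.
depth = np.full((4, 5), 2.0)
n_depth = normals_from_depth(depth, fx=100.0, fy=100.0, cx=2.0, cy=2.0)
loss_aligned = normal_alignment_loss(n_depth, n_depth)
loss_flipped = normal_alignment_loss(-n_depth, n_depth)
```

The penalty is zero when the two normal maps agree and reaches 2 when they are exactly opposed, which is the kind of inconsistency the densification step above is described as targeting.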

Field Evaluation and Mesh Extraction

The model leverages the analytic, closed-form vector field V(x) for both normal supervision and mesh extraction.

- Pivot-Based Marching Tetrahedra: For each Gaussian, two pivots are generated along the normal—one at the center, one at a set distance along the normal—enabling surface crossing detection even for thin structures. Marching Tetrahedra is run on the Delaunay triangulation of these pivots, providing a lightweight, watertight mesh.

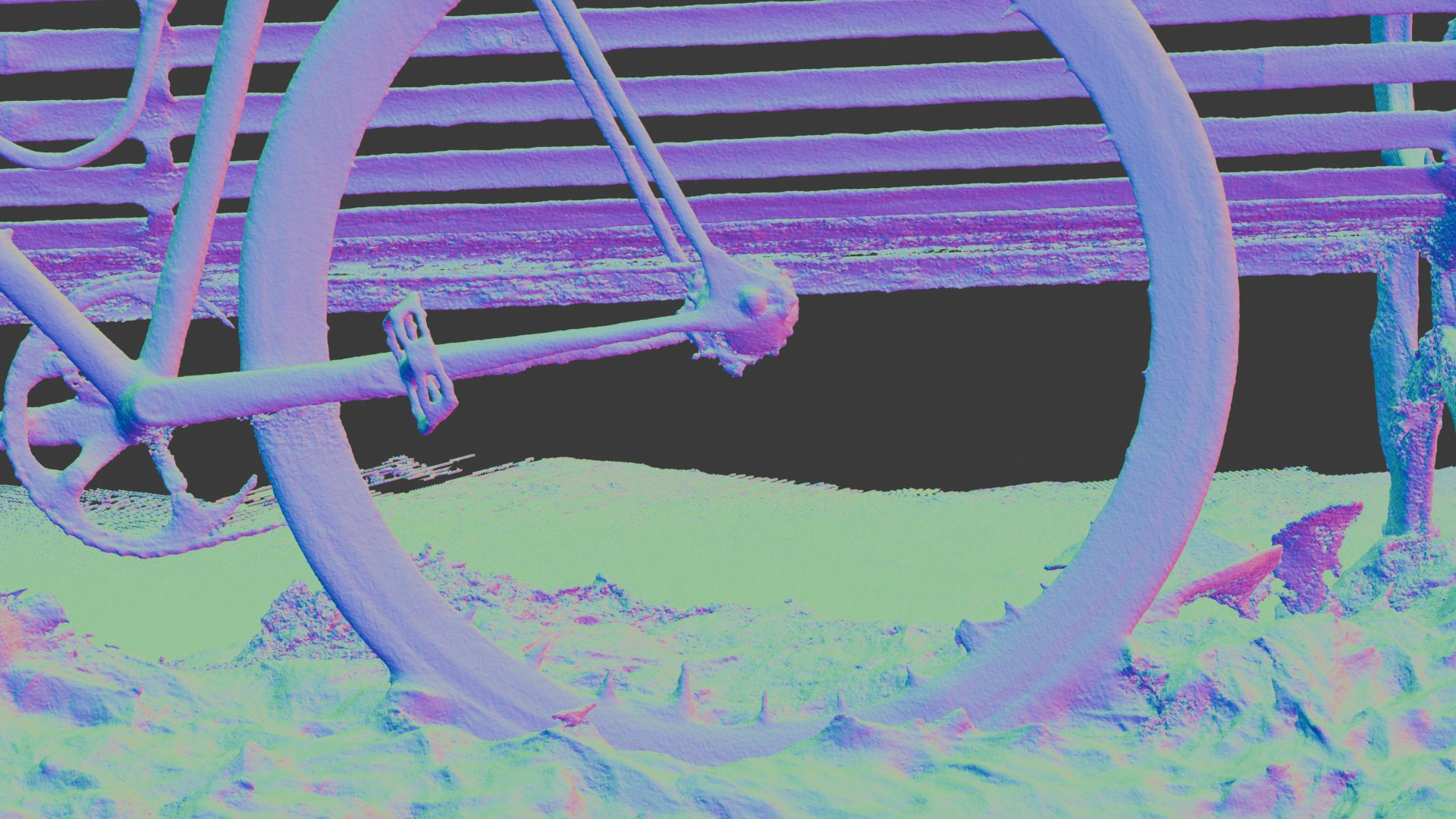

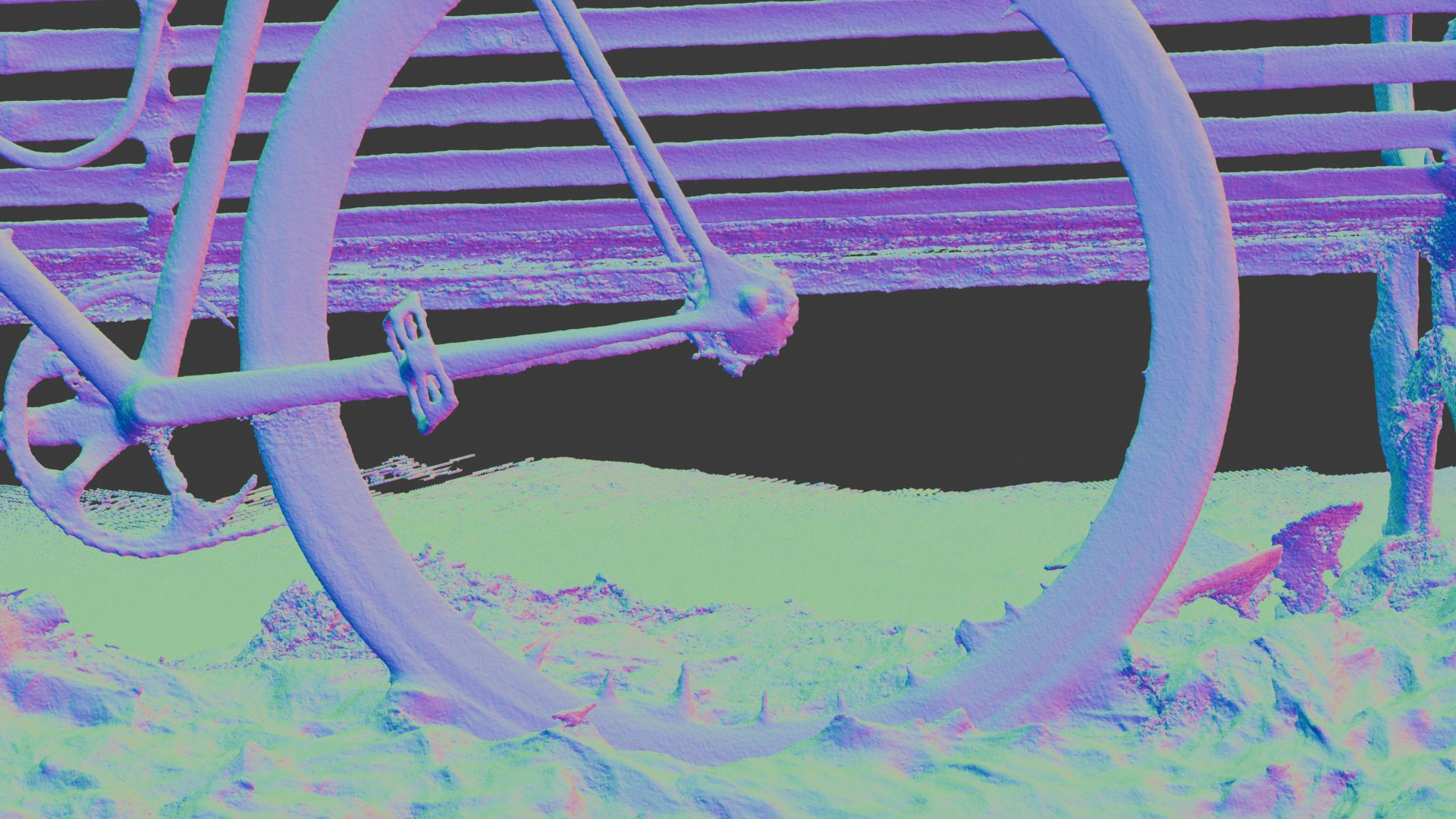

Figure 3: Vertex generation for mesh extraction from the oriented Gaussian distribution using the pivot-based approach.
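A minimal sketch of the pivot construction, under stated assumptions: each Gaussian contributes its center and one point displaced by a fixed `offset` along its unit normal, and a surface vertex is placed on an edge by linear interpolation of the field values at its endpoints, as in standard Marching Tetrahedra. The function names, the `offset` parameter, and the 0.5 iso-level are illustrative choices, and the Delaunay step is omitted (it could be computed with, for example, `scipy.spatial.Delaunay`).

```python
import numpy as np

def make_pivots(centers, normals, offset):
    """Two pivots per Gaussian: its center and a point `offset` along its unit normal."""
    n = normals / np.linalg.norm(normals, axis=1, keepdims=True)
    return np.concatenate([centers, centers + offset * n], axis=0)   # (2N, 3)

def edge_crossing(p0, p1, f0, f1, iso=0.5):
    """Iso-crossing on the edge (p0, p1) by linear interpolation, or None if absent."""
    if (f0 - iso) * (f1 - iso) > 0:
        return None                      # both endpoints on the same side of the level set
    if f0 == f1:
        return 0.5 * (p0 + p1)           # both endpoints lie exactly on the level set
    t = (iso - f0) / (f1 - f0)
    return p0 + t * (p1 - p0)

# One Gaussian at the origin with an (unnormalized) normal along +z.
centers = np.array([[0.0, 0.0, 0.0]])
normals = np.array([[0.0, 0.0, 2.0]])
pivots = make_pivots(centers, normals, offset=0.1)
# Hypothetical field values at the two pivots, straddling the 0.5 level.
vertex = edge_crossing(pivots[0], pivots[1], f0=0.2, f1=0.8)
```

Because the two pivots of a Gaussian sit on either side of the wrapped boundary, the edge between them can register a crossing even when the structure is thinner than the spacing between neighboring Gaussians.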

- Primal Adaptive Meshing: To decouple mesh resolution from the underlying particle distribution and accommodate arbitrarily fine features (thin wires, background edges), an adaptive pipeline is used. Vertices are sampled on the boundary, projected iteratively to the v(x)=0.5 isosurface via a Newton update along the analytic normal direction, and tetrahedralized with robust in/out classification—enabling region-of-interest refinement and minimizing discretization artifacts.
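The projection step can be illustrated with a small Newton iteration. The sketch below works on log v(x) rather than v(x), so the update direction is exactly V(x) = ∇ log v(x); it assumes isotropic, unoriented Gaussians and a starting point already near the surface. Both the primitive and the log-domain formulation are assumptions made for the example, not necessarily the authors' update rule.

```python
import numpy as np

def log_vacancy_and_grad(x, mu, sigma, alpha):
    """log v(x) and V(x) = grad log v(x) for isotropic Gaussians (assumed form)."""
    d = x[None, :] - mu                                        # (N, 3)
    G = alpha * np.exp(-0.5 * np.sum(d * d, axis=1) / sigma**2)
    G = np.minimum(G, 1.0 - 1e-8)                              # keep log(1 - G) finite
    log_v = np.sum(np.log1p(-G))
    V = np.sum((G / (1.0 - G) / sigma**2)[:, None] * d, axis=0)
    return log_v, V

def project_to_isosurface(x, mu, sigma, alpha, level=0.5, iters=20, tol=1e-10):
    """Newton steps along V(x) until log v(x) = log(level)."""
    x = np.asarray(x, dtype=float).copy()
    for _ in range(iters):
        log_v, V = log_vacancy_and_grad(x, mu, sigma, alpha)
        g = log_v - np.log(level)                              # residual to the level set
        vv = V @ V
        if abs(g) < tol or vv < 1e-16:                         # converged or flat field
            break
        x = x - g * V / vv                                     # Newton update along V
    return x

# One isotropic Gaussian; start slightly outside the v = 0.5 level set.
mu = np.zeros((1, 3))
sigma = np.array([1.0])
alpha = np.array([0.9])
x_surf = project_to_isosurface([1.3, 0.2, 0.0], mu, sigma, alpha)
```

For this single Gaussian the level set is a sphere of radius sqrt(2 ln 1.8), so the result can be checked analytically. Plain Newton steps can overshoot when started far from the surface, so a practical version would likely clamp the step length.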

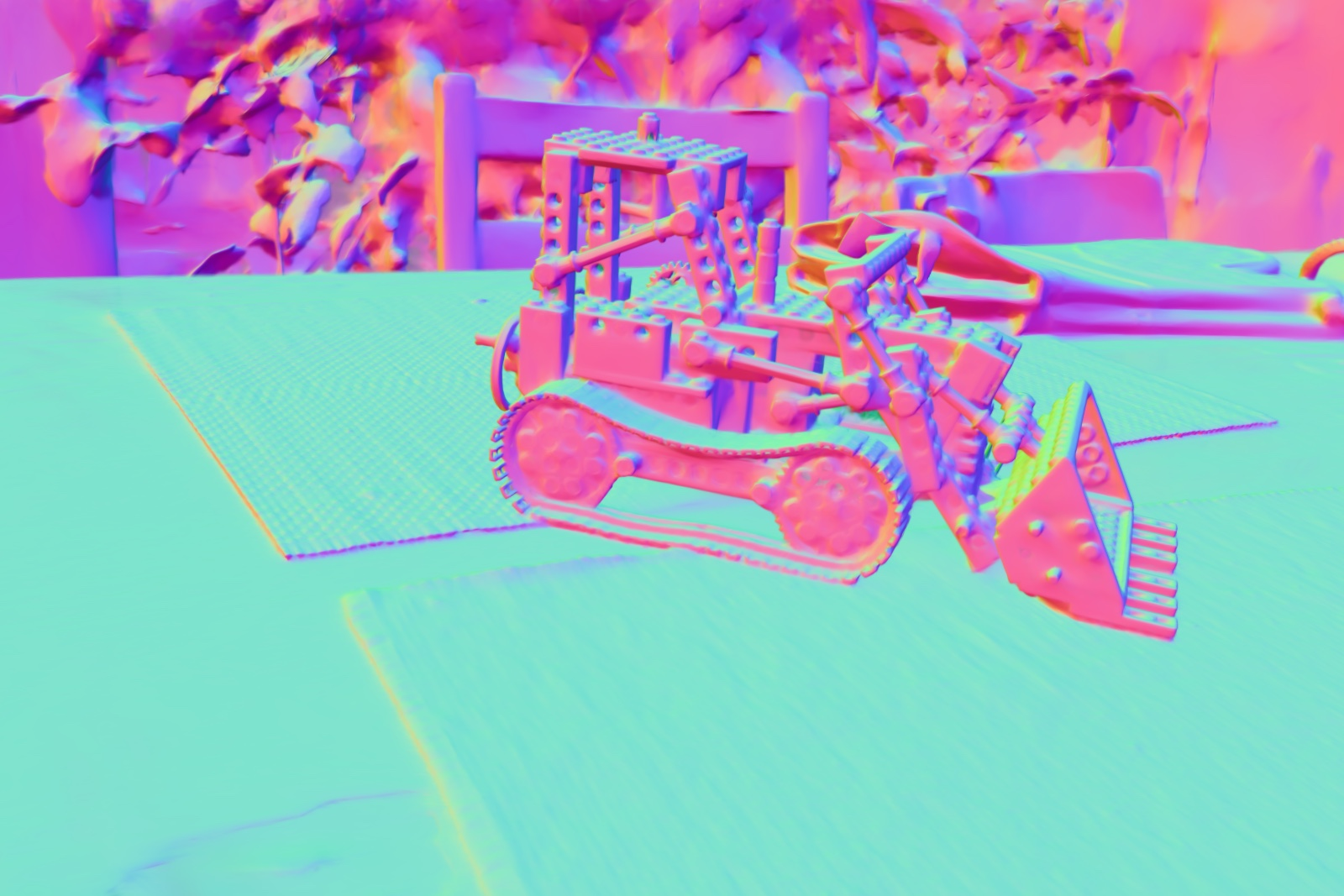

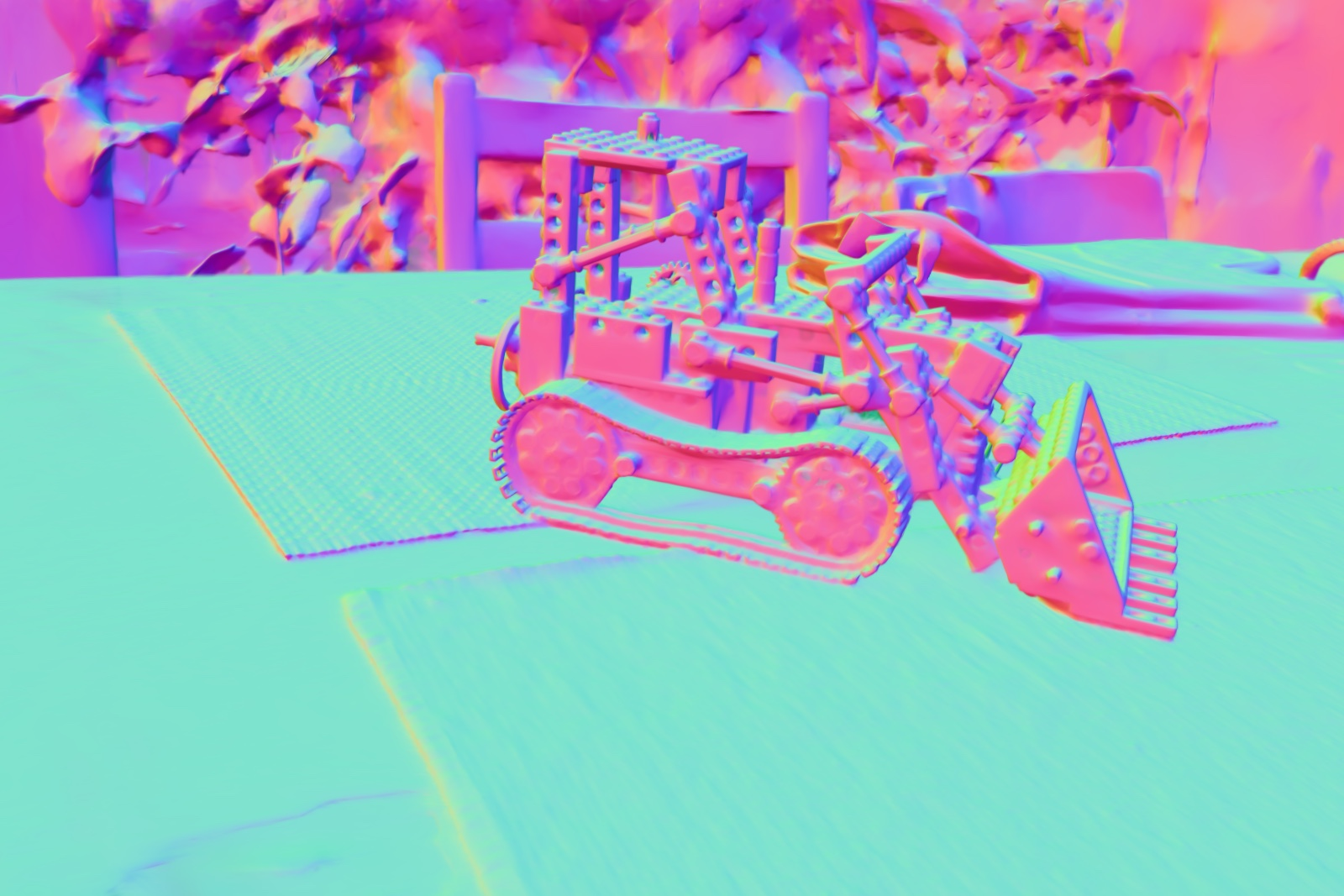

Figure 4: Qualitative comparison on challenging scenes—Gaussian Wrapping reconstructs finer features and thin structures while achieving robust coverage.

Empirical Results

Quantitative Benchmarks

Extensive evaluation is performed on DTU, Tanks and Temples (T&T), and MipNeRF-360 datasets. Both traditional mesh-based and novel view synthesis metrics are reported, with further investigation into evaluation protocol bias (vertex vs. uniformly sampled or virtual-scan-based surface statistics).

Key findings:

- State-of-the-art Mean F1 Scores: On T&T under the unbiased uniform and virtual scanning protocols (which mitigate mesh-density and ground-truth acquisition biases), Gaussian Wrapping achieves superior mean F1 scores among scene-scale methods. The method outperforms previous approaches on completeness, thin structure recovery, and mesh compactness.

- Mesh-Based Rendering (MBR) and NVS: The approach sets new benchmarks in MBR metrics, especially on challenging outdoor (MipNeRF-360) scenes, indicating high-quality, complete geometric coverage that also generalizes to realistic rendering.
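To make the evaluation protocol concrete, here is a hedged sketch of a distance-thresholded F1 score computed on points sampled uniformly by area from a mesh, rather than on mesh vertices. The threshold `tau`, the brute-force nearest-neighbor search, and the function names are assumptions for illustration; the benchmark's official tooling and the paper's virtual-scan protocol are not reproduced here.

```python
import numpy as np

def sample_surface_uniform(vertices, faces, n, rng):
    """Area-weighted uniform samples on a triangle mesh."""
    tri = vertices[faces]                                      # (F, 3, 3)
    areas = 0.5 * np.linalg.norm(
        np.cross(tri[:, 1] - tri[:, 0], tri[:, 2] - tri[:, 0]), axis=1)
    idx = rng.choice(len(faces), size=n, p=areas / areas.sum())
    r1, r2 = np.sqrt(rng.random(n)), rng.random(n)
    w0, w1, w2 = 1.0 - r1, r1 * (1.0 - r2), r1 * r2            # uniform barycentric weights
    return (w0[:, None] * tri[idx, 0]
            + w1[:, None] * tri[idx, 1]
            + w2[:, None] * tri[idx, 2])

def nearest_distances(a, b):
    """Distance from each point of a (M, 3) to its nearest point in b (K, 3)."""
    diff = a[:, None, :] - b[None, :, :]                       # brute force, O(M * K)
    return np.sqrt(np.min(np.sum(diff**2, axis=-1), axis=1))

def f1_score(pred, gt, tau):
    """Precision, recall and F1 at distance threshold tau."""
    precision = float(np.mean(nearest_distances(pred, gt) < tau))
    recall = float(np.mean(nearest_distances(gt, pred) < tau))
    if precision + recall == 0.0:
        return precision, recall, 0.0
    return precision, recall, 2.0 * precision * recall / (precision + recall)

# Sample a single triangle, then score a toy prediction against a toy ground truth.
rng = np.random.default_rng(0)
vertices = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0]])
faces = np.array([[0, 1, 2]])
samples = sample_surface_uniform(vertices, faces, 1000, rng)
p, r, f = f1_score(np.array([[0.0, 0.0, 0.0], [10.0, 0.0, 0.0]]),
                   np.array([[0.0, 0.0, 0.0]]), tau=0.1)
```

Sampling by area removes the dependence on how densely a method tessellates its output, which is the mesh-density bias that vertex-based statistics are said to introduce.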

Ablations

The ablation studies show that the normal field loss and oriented densification are critical for mesh fidelity, reducing artifacts and capturing sharp, high-frequency geometry while maintaining or improving all mesh, image, and rendering-based metrics.

Figure 5: Normal field loss ablation—without it, large-scale artifacts and missing geometry occur in pivot-based extraction.

Generalization and Drop-In Use

The authors demonstrate that Gaussian Wrapping can be used as a regularizer on top of other Gaussian-based meshing methods (e.g., RaDe-GS), yielding large improvements in both F1 metrics and qualitative geometry, confirming the generality and plug-and-play nature of the orientation and densification mechanisms.

Figure 6: Applying the Normal Field supervision and densification losses to competing methods (here RaDe-GS) immediately improves surface faithfulness and completeness.

Discussion and Implications

Theoretical Impact

By establishing an analytic, closed-form connection between oriented discrete elements (Gaussians) and the stochastic geometric fields (vacancy, normal) at the heart of mesh extraction, the authors close a longstanding gap in particle-based scene modeling—reconciling the rendering and geometry domains both formally and practically. The reciprocal attenuation derivation is validated empirically and can serve as the foundation for further generalization to other primitives or volume/surface hybrid representations.

Practical Significance

- Efficiency: The method produces watertight meshes with substantial compression (significantly fewer pivots/vertices than competing works) and handles complex backgrounds in unbounded scenes.

- Robustness in Real Scenes: The pipeline is robust to ground-truth scanning artifacts (e.g., missing geometry in occluded regions), and the unified occupancy formulation leads to less bias and quantitative/qualitative improvement across benchmarks and evaluation protocols.

- Extensibility: Because the orientation mechanism requires only a small number of additional learnable parameters, and the losses are scale- and method-agnostic, these advances can be incorporated into arbitrary 3DGS-style approaches, implicit-explicit hybrids, or even future analytic particle fields or differentiable rendering engines.

Limitations and Future Work

The current sampling strategy for adaptive meshing is uniform and may underperform in highly detailed or low-sample-density regions. Guiding vertex/primitive selection by curvature or by properties of the analytic vector field could allow even sparser, more efficient, and curvature-adaptive meshes. Generalization of the analytic attenuation field to more complex or accurate volumetric rendering models (e.g., EVER) is anticipated as a next step.

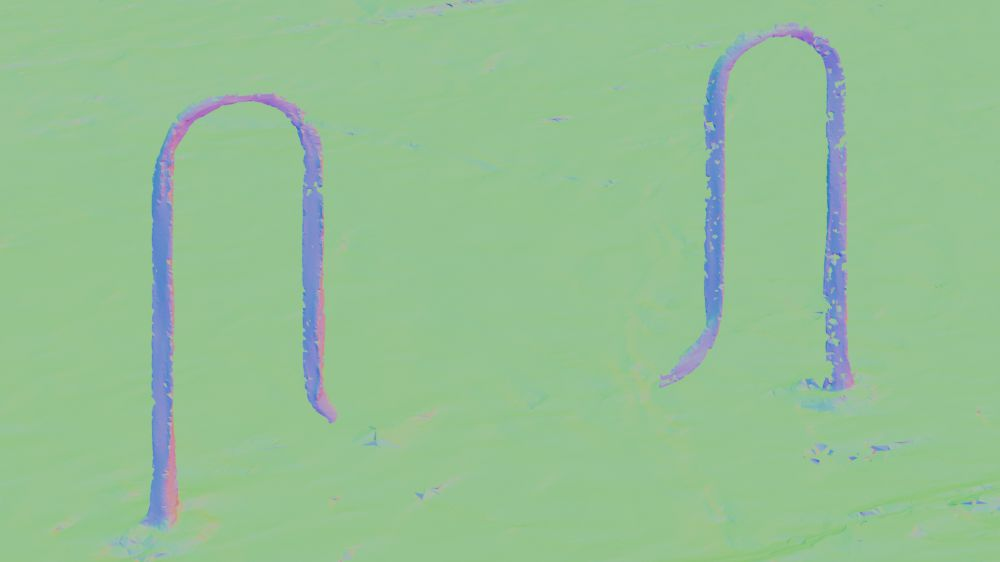

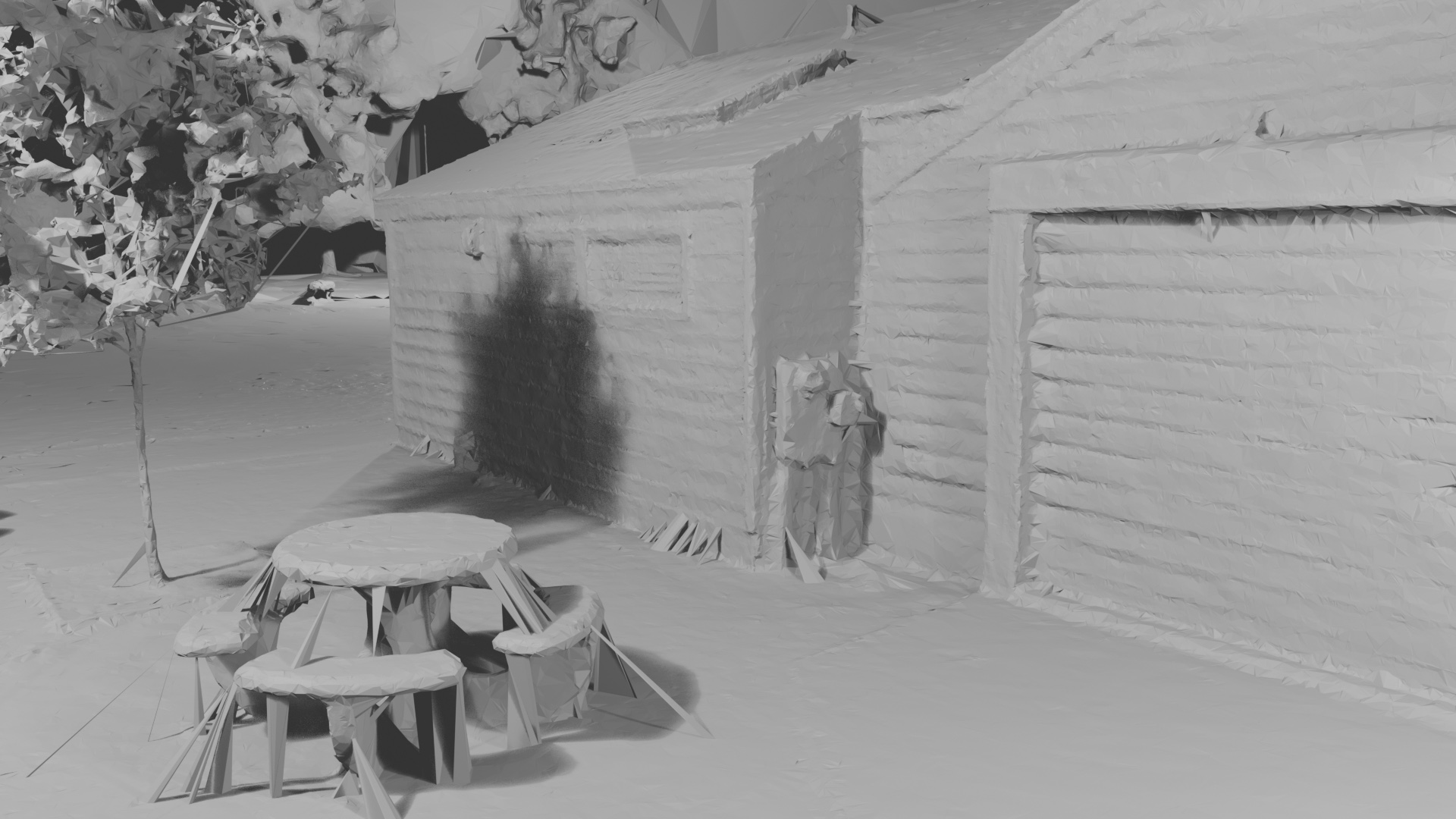

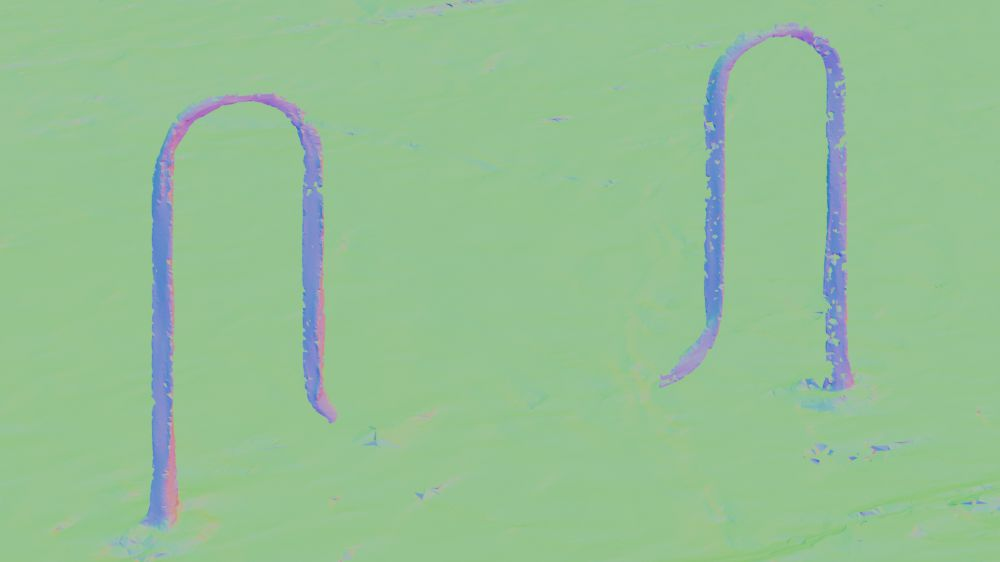

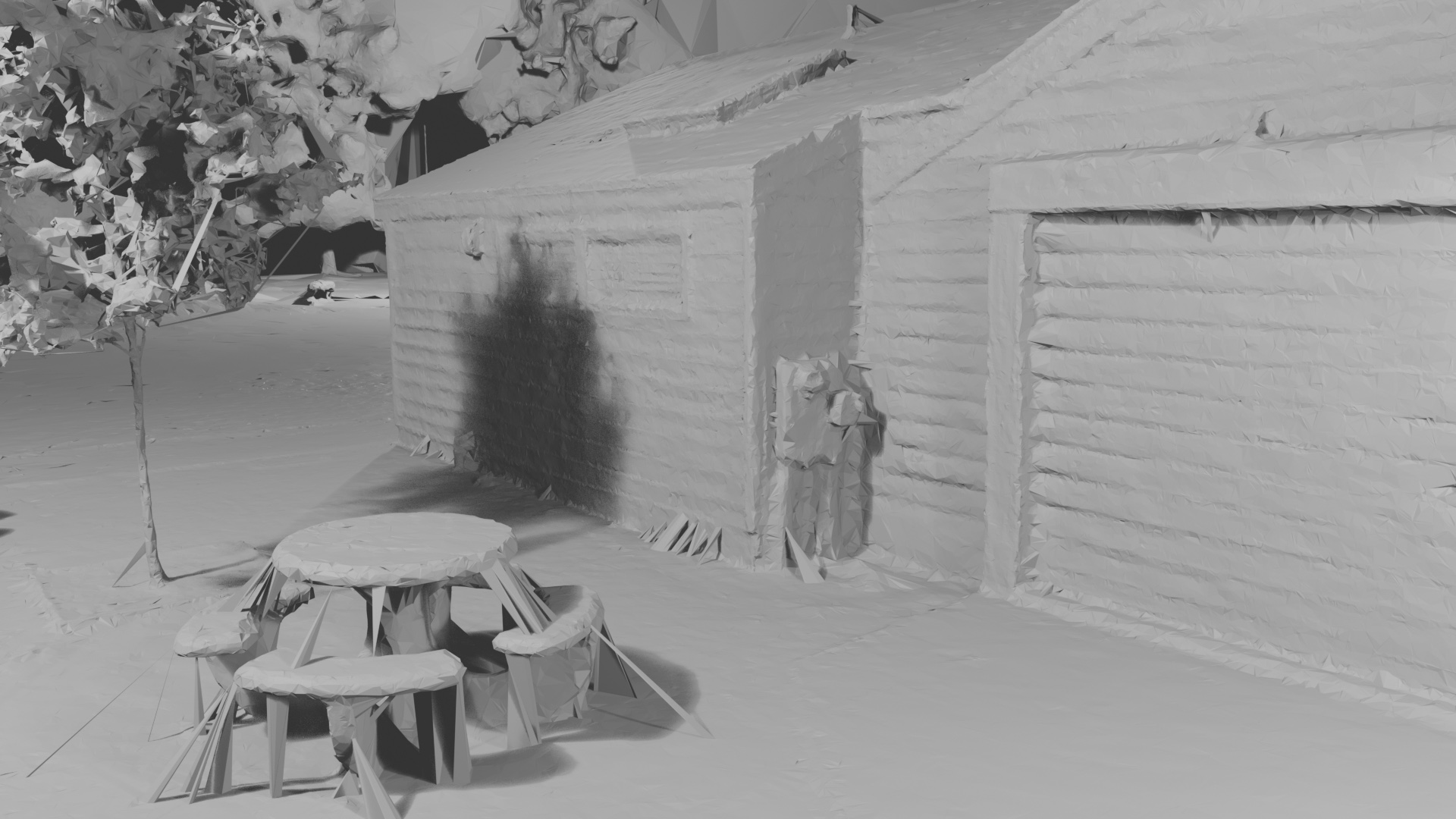

Figure 7: Visualization of the analytic normal field on complex real scenes—showing the field's alignment with the underlying surface geometry and its use in both supervision and extraction.

Conclusion

Gaussian Wrapping advances explicit surface recovery from multi-view imagery with Gaussian Splatting representations. By reinterpreting scene primitives as oriented probabilistic surface elements and deriving reciprocal, closed-form geometric fields, the framework efficiently extracts high-fidelity, watertight, and compact surfaces, bridging a persistent gap between real-time particle renderers and robust, bias-minimized geometry pipelines. The architecture-agnostic nature of the analytic fields and regularizers promises broad applicability in future multi-view and neural rendering pipelines.