- The paper demonstrates that participatory design methods can identify safe, context-sensitive GenAI features that enhance learners' agency and employment aspirations.

- The paper reveals how GenAI serves as a peer, mentor, and employability guide, and examines the privacy and surveillance risks that this use carries in restrictive environments.

- The paper highlights the need for stepwise, localized scaffolding in GenAI systems to ensure pedagogical integrity and mitigate contextual risks.

Study Motivation and Context

This paper investigates the requirements and effectiveness of accountable GenAI learning companions in gender-restrictive, surveilled settings using remote participatory design (PD) with women in Afghanistan who are banned from formal education (2604.07253). It addresses the confluence of educational exclusion, structural privacy risks, household constraints, and the absence of peer learning communities, with GenAI increasingly filling gaps as a tutor, peer, and employability guide. The study analyzes lived experiences and design imaginaries of 20 participants (from a survey of n=140) to probe critical questions around contextual safety, surveillance, pedagogical fit, and agency in constrained informal learning environments.

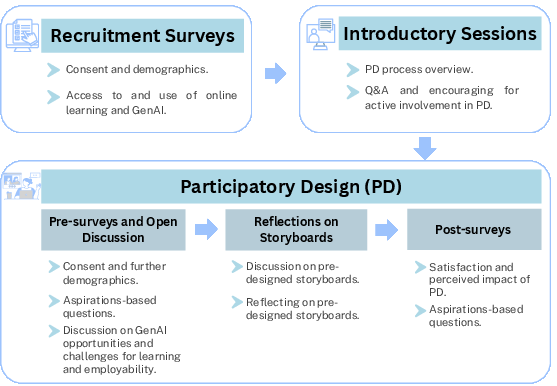

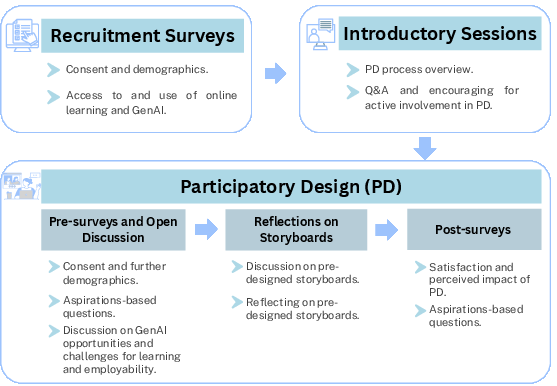

Figure 1: Overview of the study design: recruitment, PD familiarization, open discussions, storyboard reflections, and evaluation surveys.

Methodology: Participatory Design under Constraint

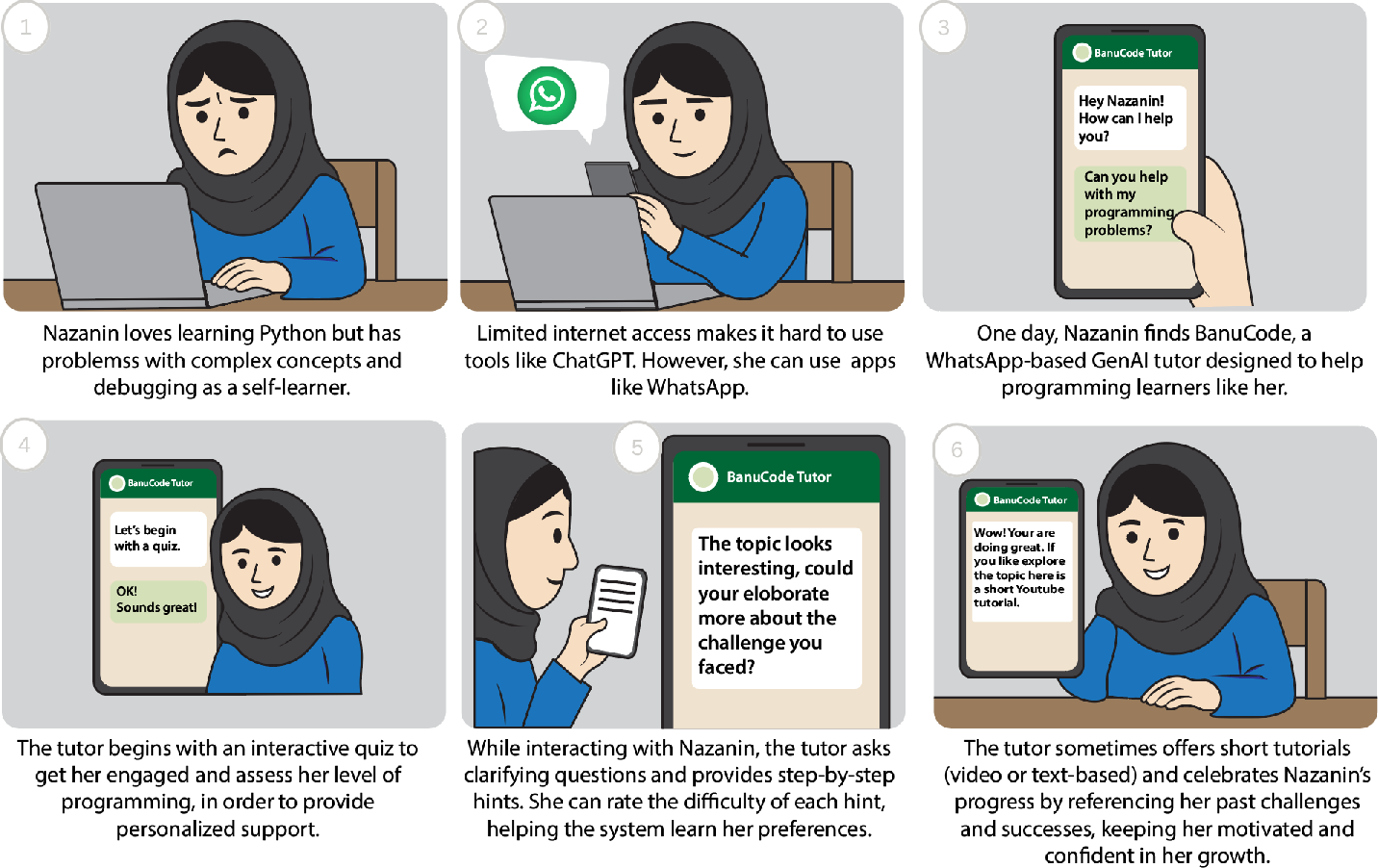

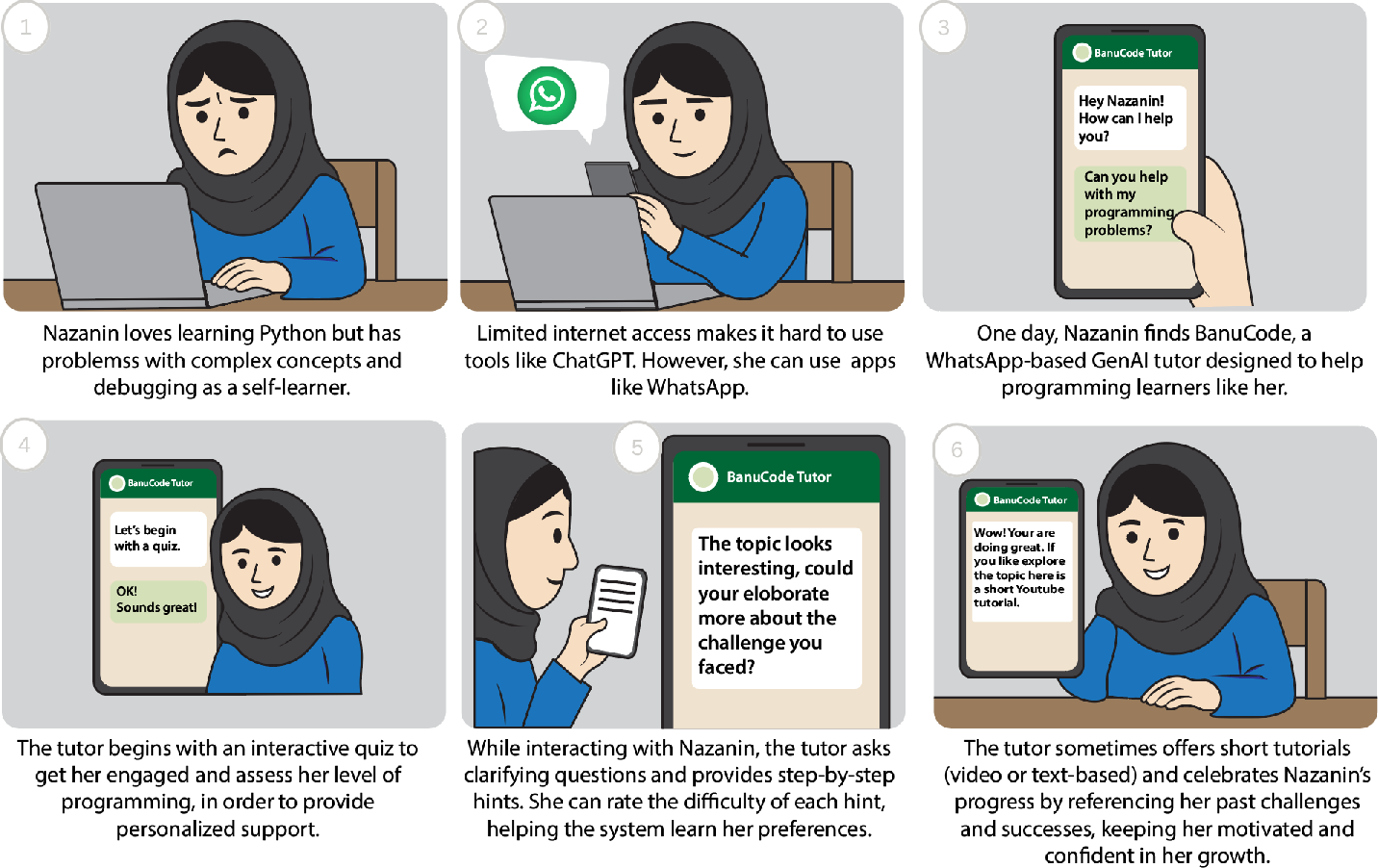

The authors run remote PD in five small-group sessions, supplemented by surveys and by translated scenarios and storyboards. Sessions elicit both qualitative and quantitative data: open-ended discussions, critical feedback on pre-designed storyboards, and aspiration scales administered before and after the sessions. The design methodology is intentionally situated: sessions are co-facilitated by researchers with lived experience of educational restriction, in order to build trust, mitigate power dynamics, and foreground agency. Data analysis involves thematic coding (Cohen’s κ=0.72) and statistical evaluation (paired t-tests, with effect sizes reported as percentile ranks).

Figure 2: Example pre-designed storyboard used in participatory design sessions.
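The inter-coder agreement statistic reported in the methodology, Cohen's κ, compares the observed agreement between two coders with the agreement expected by chance from their marginal code frequencies. The sketch below is a minimal illustration of that standard formula; the function name and the example labels are hypothetical and are not taken from the paper's data or analysis scripts.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who each assign one code per item."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(rater_a)

    # Observed agreement: share of items that received the same code.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Chance agreement: product of the raters' marginal proportions,
    # summed over every code used by either rater.
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    codes = count_a.keys() | count_b.keys()
    p_e = sum(count_a[c] * count_b[c] for c in codes) / (n * n)

    if p_e == 1.0:
        raise ValueError("kappa is undefined when both raters use a single code")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical codes for four excerpts (illustrative only).
coder_1 = ["privacy", "privacy", "peer", "peer"]
coder_2 = ["privacy", "privacy", "peer", "privacy"]
kappa = cohens_kappa(coder_1, coder_2)
```

In this toy example the coders agree on three of four excerpts (observed agreement 0.75) while chance agreement is 0.5, so κ is 0.5. The value of 0.72 reported for the study indicates agreement well above chance by the same measure.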

Key Findings: GenAI as Community Substitute and Risk-Laden Companion

GenAI as Peer, Mentor, and Employability Guide

Participants report intensive GenAI use, not primarily for information retrieval but for scaffolded companionship. The "always-on" agent compensates for missing peer networks by providing stepwise guidance, feedback, and personalized employability support (role-play for interviews, freelancer-client simulation, soft skills practice). Notably, GenAI is perceived as more effective when it enables longitudinal interaction and career-oriented task scaffolding, aligning with broader trends in EdTech [chen2024gptutor].

Companionship Constraints: Privacy, Surveillance, Context

Privacy emerges as a non-negotiable precondition, intricately bound to gendered risks (shared devices, household/authority monitoring, threat of exposure). Surveillance is not abstract: even trivial trace retention (history, registration) can trigger familial or societal harm. Contextual mismatch is pronounced. Existing GenAI output often lacks linguistic, infrastructural, and social fit: code solutions requiring high-end hardware, advice misaligned with local norms, and English-only materials that create employability tension. Participants highlight the risk of direct-answer modes undermining learning integrity and critical thinking, leading to an illusory sense of progress.

Numerical Outcomes: Aspirational Gains

Engagement in PD sessions is associated with statistically significant increases in aspirations, perceived agency, and perceived avenues for learning and employment. Aspiration scores rise from M=31.5 (pre-PD) to M=34.8 (post-PD), p=0.01. The agency and avenue subscales also increase significantly (p=0.03 and p=0.01, respectively), with post-PD medians falling at the 77th to 80th percentile of the pre-PD distributions.
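To make the two statistics above concrete, the following sketch computes a paired t statistic from pre/post scores and the percentile rank of a post-session value within the pre-session distribution. It is an illustration under stated assumptions: the helper names and the example scores are hypothetical, the tie-handling rule in `percentile_rank` (ties count as half) is one common convention and may differ from the paper's, and the p-value step is omitted because it requires a t-distribution CDF that the Python standard library does not provide.

```python
import math
import statistics


def paired_t_statistic(pre, post):
    """Return (t, degrees of freedom) for a paired-samples t-test."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need two equal-length samples with at least 2 pairs")
    diffs = [b - a for a, b in zip(pre, post)]
    n = len(diffs)
    sd_diff = statistics.stdev(diffs)  # sample SD of the differences
    if sd_diff == 0:
        raise ValueError("t is undefined when all differences are identical")
    t = statistics.mean(diffs) / (sd_diff / math.sqrt(n))
    return t, n - 1


def percentile_rank(reference, value):
    """Percent of reference scores below `value`, counting ties as half."""
    below = sum(x < value for x in reference)
    equal = sum(x == value for x in reference)
    return 100.0 * (below + 0.5 * equal) / len(reference)


# Hypothetical scores for four participants (not the study's data).
pre_scores = [1, 2, 3, 4]
post_scores = [2, 4, 4, 6]
t_value, dof = paired_t_statistic(pre_scores, post_scores)
rank = percentile_rank(pre_scores, statistics.median(post_scores))
```

Read this way, a post-PD median at the 77th percentile of the pre-PD distribution means that the typical participant after the sessions scores higher than roughly three quarters of participants did beforehand.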

Design Directions for Accountable GenAI

Drawing on participant proposals, the paper synthesizes actionable design directions:

- AI-Facilitated Virtual Learning Spaces: GenAI should enable anonymous, level-based group interactions as partial community substitutes, supporting collaborative practice and peer mentoring without registration or identity linkage.

- Safety-First Interaction and Trace Control: Minimize device traces, enable rapid deletion, and guarantee anonymous or temporary access. System outputs must be boundary-aware, avoiding guidance that is risky in the local context. Privacy is not only backend compliance but also interaction-level assurance.

- Pedagogical Integrity through Stepwise Reasoning: Support should prioritize reasoning and intermediate steps, suppressing direct-answer modes that foster over-reliance and the cognitive crutch effects observed in prior research [bassner2025lessstress, barcaui2025cognitivecrutch].

- Microlearning and Infrastructure Sensitivity: Assistance must be fragmentable, resumable, bilingual, fit for low-spec devices, and operable with intermittent connectivity. Localization is not merely translation but pragmatic actionability under household and infrastructure constraints.

- Employability Alignment: Scaffolding should span realistic career opportunities (remote freelancing, skill development, client communications) rather than generic advice detached from local feasibility.
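As one possible reading of the trace-control direction above, the sketch below keeps a conversation only in memory, identifies it by a random token rather than by any account, clears it after a period of inactivity, and supports immediate deletion. The class name, the 15-minute default, and the injectable clock are all assumptions made for illustration; the paper does not specify an implementation, and a real system would also need to address browser history, operating-system caches, and server-side logs.

```python
import secrets
import time


class EphemeralSession:
    """Hypothetical in-memory chat session with no identity linkage."""

    def __init__(self, ttl_seconds=900.0, clock=time.monotonic):
        # Random token, not derived from any user or device attribute.
        self.session_id = secrets.token_urlsafe(16)
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested
        self._last_active = clock()
        self._messages = []  # never written to disk in this sketch

    def add(self, text):
        """Store a message, first clearing history if the session went idle."""
        self._expire_if_idle()
        self._messages.append(text)
        self._last_active = self._clock()

    def history(self):
        """Return a copy of the current history (empty after expiry)."""
        self._expire_if_idle()
        return list(self._messages)

    def wipe(self):
        """Rapid deletion: drop all stored messages immediately."""
        self._messages.clear()

    def _expire_if_idle(self):
        if self._clock() - self._last_active > self._ttl:
            self.wipe()
```

Clearing a Python list does not guarantee that the underlying memory is overwritten, so this only illustrates the interaction-level behavior (no registration, no persistent history, one-step deletion), not a hardened security mechanism.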

Implications and Theoretical Significance

The study reframes the evaluation of educational GenAI in high-risk contexts, shifting from output quality to "warranted reliance": whether learners can use GenAI safely, meaningfully, and in ways that fit their context. Accountability is construed primarily as exposure control; harm arises not only from unreliable output but also from persistent traces, contextual misalignment, and interactional opacity. PD is shown to positively affect how participants envision their futures, functioning not merely as requirements elicitation but as an agency-building intervention. The work expands the theoretical scope of accountability from transparency and fairness to situated exposure, learner control, and participatory aspiration formation.

Future Directions

Practically, these findings call for GenAI systems that foreground anonymous, safety-first, and context-adaptive interaction modalities, with built-in controls for exposure minimization in compromised environments. Pedagogically, stepwise, reasoning-centric support should replace direct-answer paradigms. Further research is needed on persistent agency outcomes and broader scaling in varied gender-restrictive or politically unstable contexts. Theoretically, the field should continue to refine definitions of accountability and warranted reliance in EdTech, particularly under extreme infrastructural and sociocultural constraint.

Conclusion

This paper provides a substantive account of how women excluded from formal education employ GenAI as a learning companion, confronting privacy, surveillance, pedagogical, and contextual risks. The PD process not only surfaces nuanced design requirements but is associated with marked increases in educational and employment aspirations. The findings underscore that accountable and safe GenAI must enable warranted reliance, foregrounding exposure control, user agency, and situated support over output-centric evaluation. This reframing has broad implications for the design and assessment of GenAI in socio-politically constrained learning environments.