- The paper introduces PoM as a linear complexity alternative to self-attention, using a polynomial mixer to aggregate token context efficiently.

- It employs a two-stage process with shared state construction and token-specific gating, ensuring permutation equivariance and universal sequence representation.

- Experimental evaluations across NLP, vision, OCR, and geospatial tasks show 2–4× speedups and memory efficiency without compromising performance.

PoM: Linear-Time Attention Replacement with the Polynomial Mixer

Introduction and Motivation

The "PoM: A Linear-Time Replacement for Attention with the Polynomial Mixer" (2604.06129) presents the Polynomial Mixer (PoM), a novel sequence mixing module that achieves linear complexity with respect to sequence length and is proposed as a direct substitute for the self-attention mechanism in Transformer architectures. This development addresses the longstanding computational bottleneck of Multi-Head Attention (MHA), whose quadratic time and memory complexity in sequence length n significantly restricts scalability across modalities—especially in vision and geospatial tasks, where input sequences scale rapidly with resolution and coverage.

The PoM design is inspired by representation learning with high-order moments and polynomial features, leveraging these concepts to aggregate token context efficiently. The paper extensively validates PoM in five disparate real-world domains—ranging from generative modeling to semantic segmentation—demonstrating that the replacement of attention with PoM does not compromise performance relative to quadratic-cost MHA. Instead, PoM yields substantial speed and memory efficiency gains, especially at long sequence lengths, a regime where even highly optimized attention implementations like FlashAttention begin to lag.

Polynomial Mixer Architecture

PoM introduces a two-stage aggregation-query token mixing process. Given an input tensor X∈Rd×n of n tokens, each with feature dimension d, the process is as follows:

- Shared State Construction: Each input token is projected to a higher-dimensional space and then transformed via a degree-k polynomial, parameterized by learnable coefficients. The result is aggregated over the sequence to form a single contextual state vector H(X)∈RD.

- Token-Specific Extraction: Each token produces a gating vector, which multiplicatively gates H(X) to extract relevant context and projects this back to the original dimension.

The module ensures all operations are linear in both computation and memory with respect to sequence length n. The effective expressivity of PoM derives from the polynomial expansion, capable of capturing complex token interactions.

Permutation Equivariance and Universality

The PoM construction is formally shown to be permutation equivariant, a critical property for sequence models. The authors further prove—mirroring the universal approximation guarantees for MHA—that Transformers constructed with PoM (termed PolyMorphers) are universal sequence-to-sequence approximators. This hinges on the contextual mapping property and the inclusion of positional encoding.

Adapting PoM to Sequence Causality

PoM naturally extends to handle causal and arbitrary attention masks. By defining time-step-dependent or block-causal state representations, PoM can process causal or structured dependencies efficiently, supporting both parallel training and recursive inference with O(1) complexity per token.

Computational Analysis

A detailed comparison of computational costs highlights the transition point beyond which PoM becomes decisively more efficient than attention:

- For typical Transformer settings (e.g., d=512–X∈Rd×n0, X∈Rd×n1, X∈Rd×n2), PoM outpaces MHA as soon as X∈Rd×n3 (which is typically at modest sequence lengths, e.g., few thousand tokens).

- Compared to highly engineered solutions such as FlashAttention, PoM's PyTorch-based implementation is already faster for long-context applications, despite not using custom kernels.

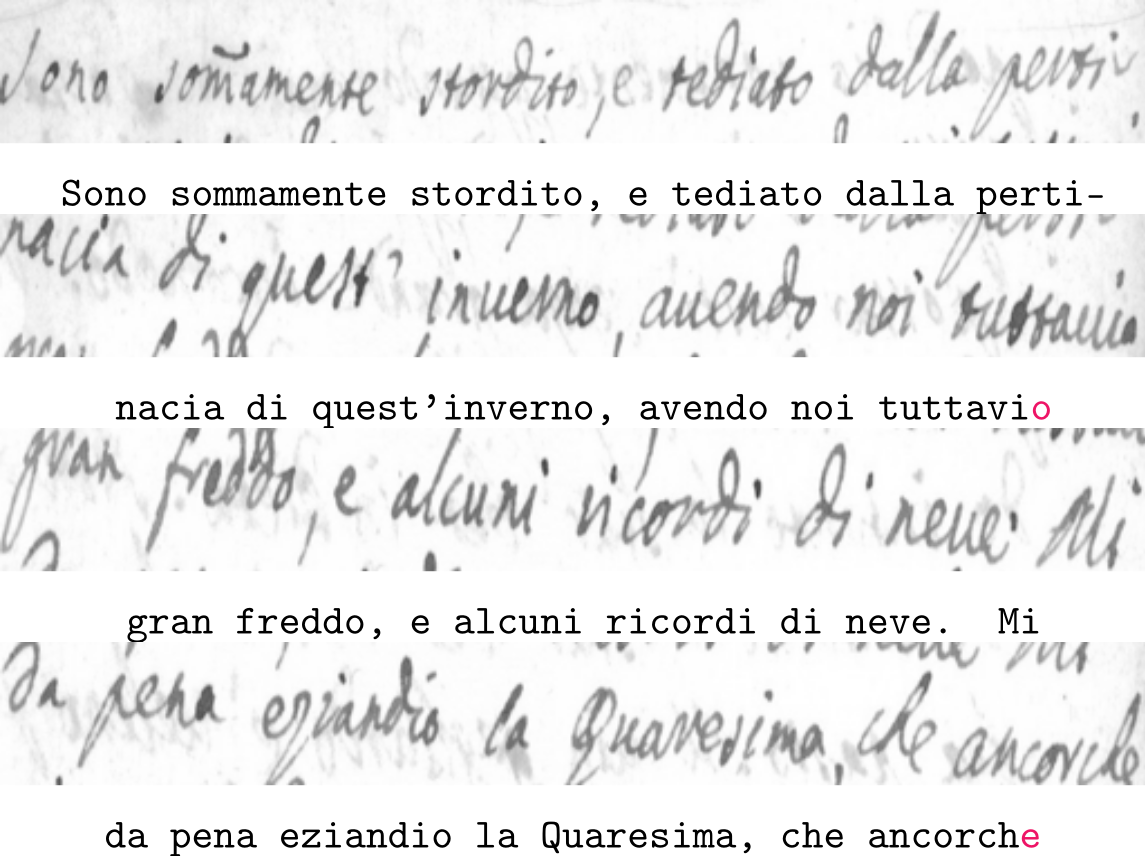

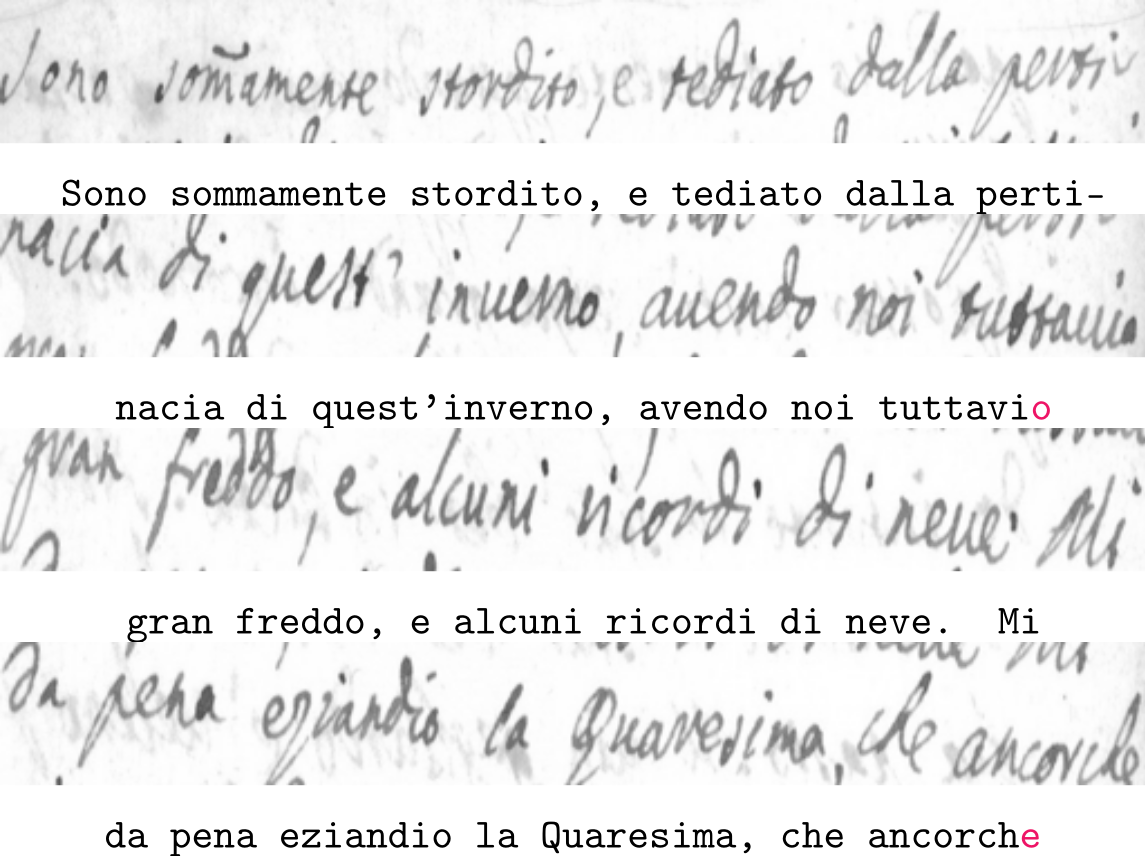

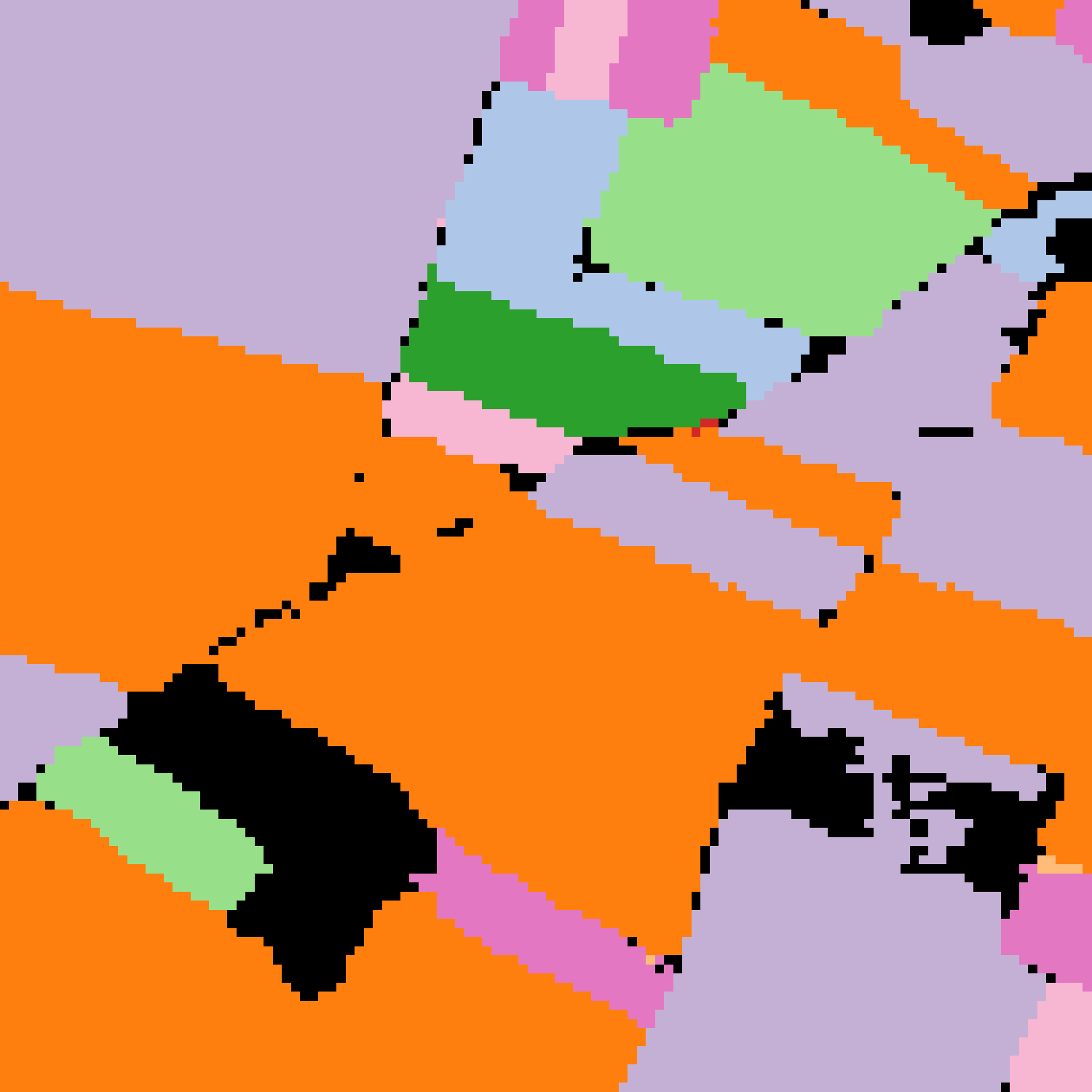

Figure 2: Evaluation across NLP, OCR, 3D segmentation, and Earth observation, demonstrating PoM’s retention of performance and speed advantage compared to MHA and hybrid models.

Experimental Evaluation Across Domains

The authors conduct a systematic empirical analysis of PoM in five diverse tasks: natural language modeling, image generation, OCR, point cloud segmentation, and remote sensing time series segmentation.

Natural Language Processing

GPT2 variants with PoM blocks achieve validation and downstream benchmark scores nearly matching standard MHA counterparts. Hybrid architectures (combining PoM and local attention) completely close the remaining performance gap, occasionally surpassing the vanilla Transformer. PoM-based models exhibit throughput improvements exceeding X∈Rd×n4 versus Transformer baselines at long sequences, outpacing Mamba and maintaining stable accuracy.

Optical Character Recognition

Replacing self-attention in state-of-the-art handwritten text recognition models with PoM blocks yields competitive error rates (CER, WER) on both single- and multi-line tasks. The throughput improvements are more pronounced as the number of aggregated input sequences (lines) increases, highlighting the efficiency gain in more demanding, long-context regimes.

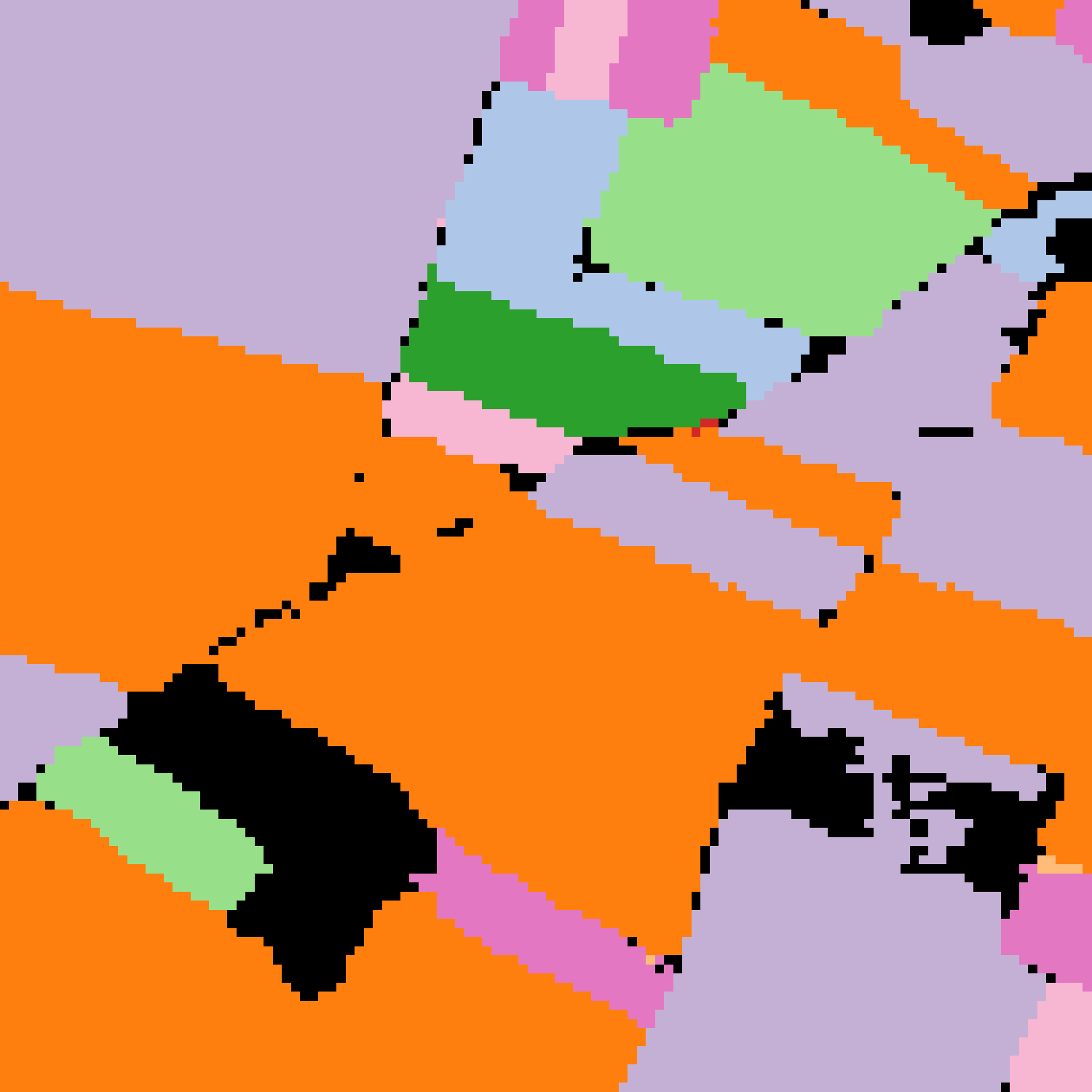

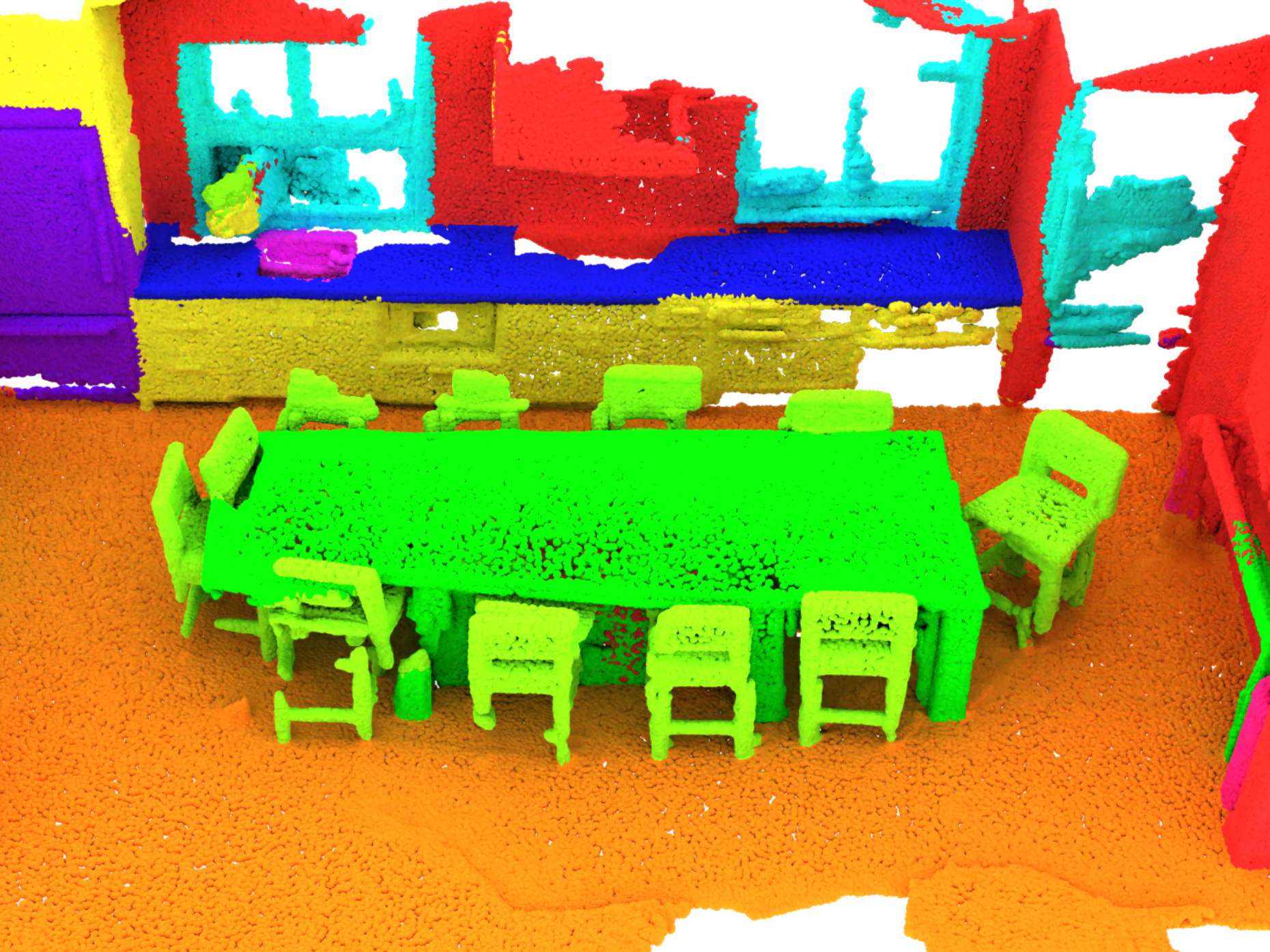

Earth Observation and 3D Point Cloud Segmentation

In dense, high-dimensional modalities (e.g., crop classification from satellite time series, point cloud semantic segmentation), PoM matches or slightly trails MHA in accuracy (mean IoU, OA), but inference throughput improves over X∈Rd×n5, enabling real-time or large-scale inference in scenarios where MHA would face computational or memory barriers.

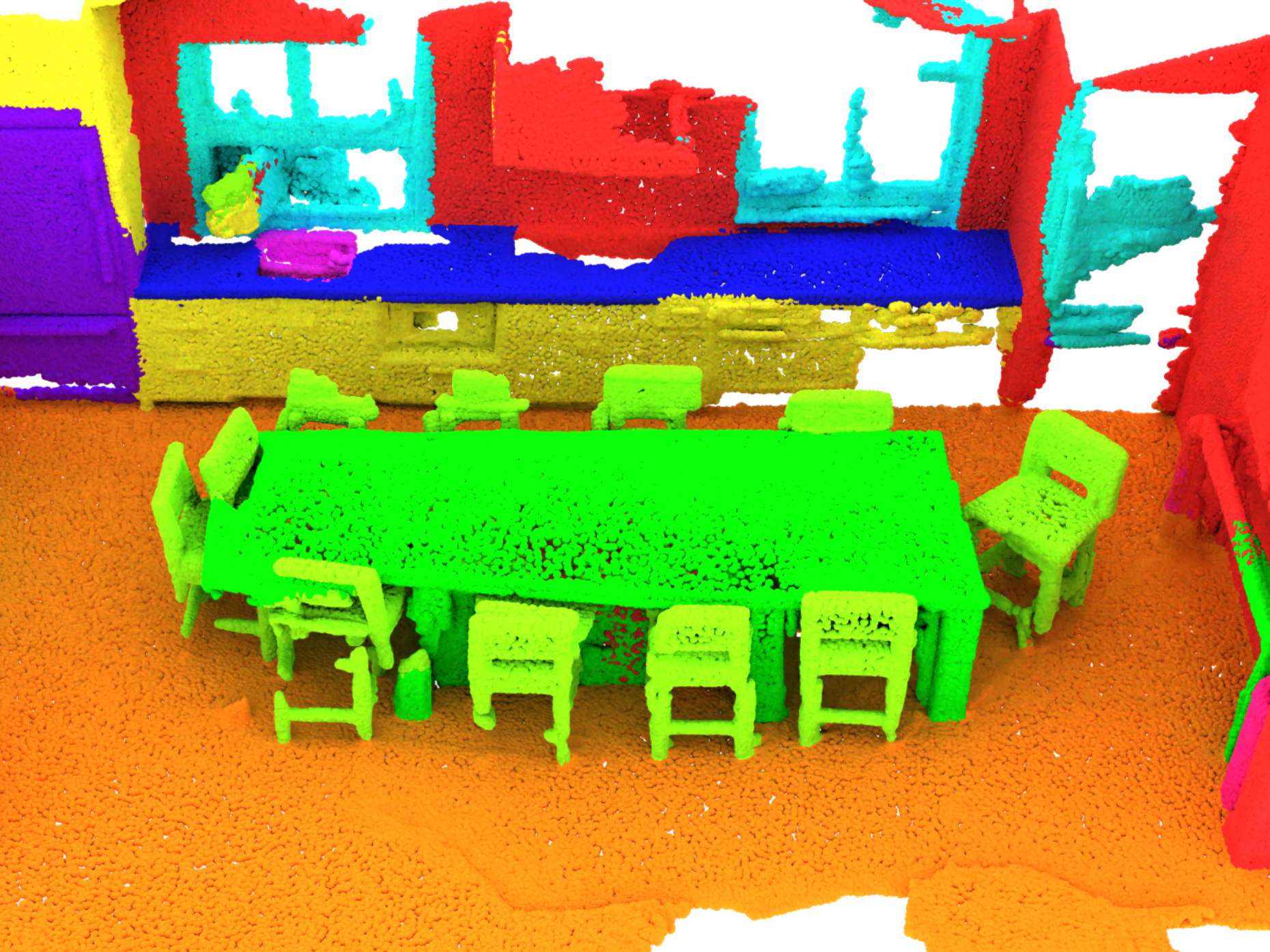

Image Generation

PoM substitutes for attention in scalable transformer-based diffusion models (e.g., SiT, DiT) trained on ImageNet for class-conditional synthesis. The replacement leaves FID scores unchanged, while drastically reducing computational latency per image as resolution increases.

Figure 1: Qualitative sample diversity for class-conditional image generation using SiPoM-XL/2, underscoring preservation of synthesis quality over PoM.

Discussion of Results and Claims

A salient claim—validated by extensive ablation and cross-domain evaluation—is that PoM yields negligible or no performance penalty relative to MHA for most practical settings when appropriately parameterized. The hybrid scheme, where local attention layers alternate with PoM blocks, demonstrates that global context provided by PoM can be complemented with fine-grained local modeling.

Numerical results reported underscore throughput gains:

- In English Wikipedia-scale language modeling, PoM-based architectures can process sequences up to X∈Rd×n6k tokens at up to X∈Rd×n7k tokens/s (see Table 2).

- PoM delivers X∈Rd×n8–X∈Rd×n9 speedup over FlashAttention at high resolutions in image generation.

- For satellite time series, throughput increases from n0 to n1 kmn2/s with PoM versus attention-based models.

Importantly, these improvements are achieved using high-level implementations, implying the potential for even greater acceleration with custom kernels.

Implications and Future Directions

Practically, PoM's linear complexity enables real-time processing and direct modeling of longer sequences—including high-resolution visual content, extended text, or complex multimodal data—without imposing arbitrary restrictions. Theoretically, PoM retains the universality of Transformers for sequence modeling within a more efficient computational envelope.

Key implications include:

- The architectural bottleneck imposed by quadratic self-attention can be circumvented without sacrificing model expressivity or accuracy, provided mixing operations are designed to preserve sequence context and permutation properties.

- PoM unlocks architecturally feasible training and deployment for long-context and high-dimensional tasks, which will likely facilitate new instantiations of multimodal and generative AI models.

Looking ahead, further optimization—particularly of hardware-aware kernels—will reduce overhead and extend PoM's advantage in all regimes. Exploration of PoM within large-scale pretraining and autoregressive modeling pipelines, as well as its hybridization with other efficient architectures (e.g., SSMs, convolutional modules), represents promising avenues.

Conclusion

The Polynomial Mixer establishes a new efficiency-accuracy frontier in sequence modeling, matching self-attention's empirical capabilities with dramatically lower resource requirements. The generality with which PoM can substitute for MHA across NLP, vision, geospatial, and generative modeling tasks, without specialized tuning or compromise, argues strongly for its adoption in future efficient Transformer frameworks. The modularity and formal guarantees of PoM suggest its seamless integration within existing architectures, providing a scalable path forward for the next generation of AI systems.