Mathematical methods and human thought in the age of AI

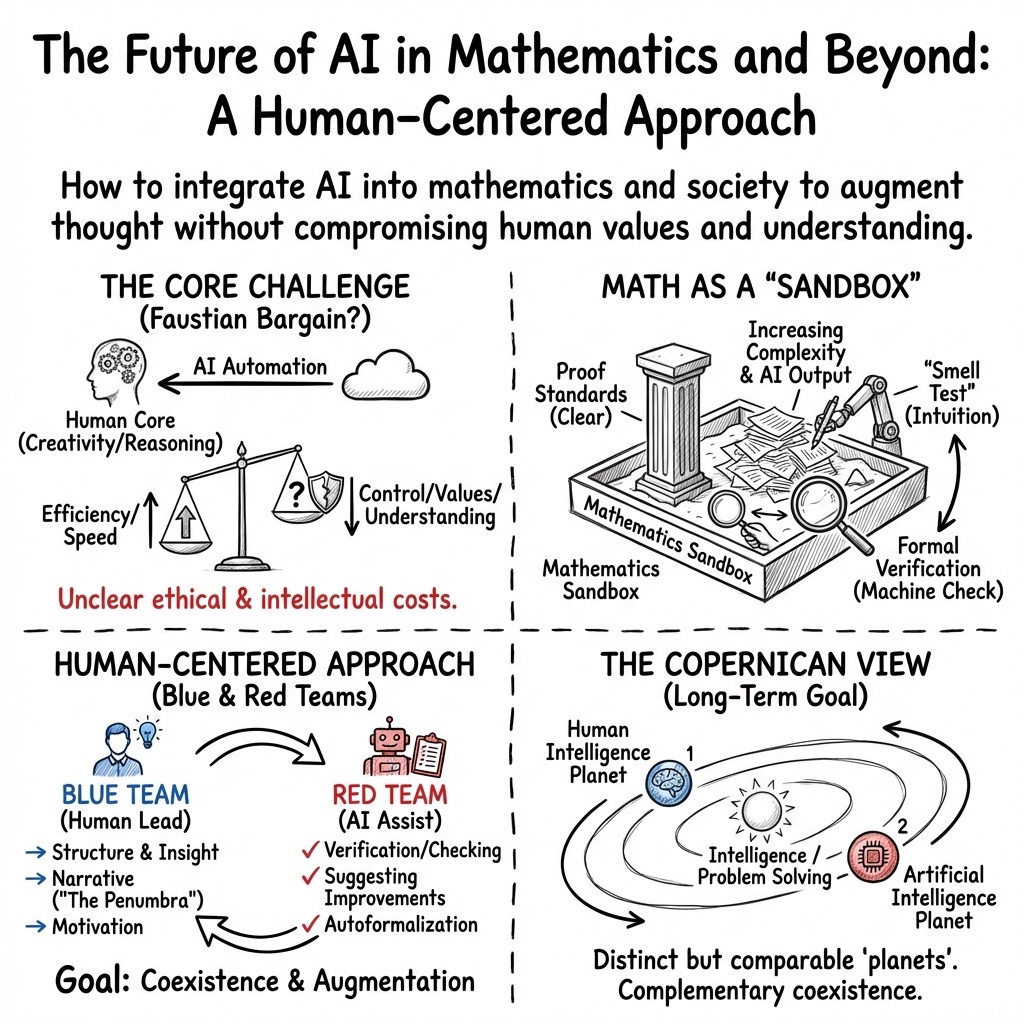

Abstract: AI is the name popularly given to a broad spectrum of computer tools designed to perform increasingly complex cognitive tasks, including many that used to solely be the province of humans. As these tools become exponentially sophisticated and pervasive, the justifications for their rapid development and integration into society are frequently called into question, particularly as they consume finite resources and pose existential risks to the livelihoods of those skilled individuals they appear to replace. In this paper, we consider the rapidly evolving impact of AI on the traditional questions of philosophy with an emphasis on its application in mathematics and on the broader real-world outcomes of its more general use. We assert that artificial intelligence is a natural evolution of human tools developed throughout history to facilitate the creation, organization, and dissemination of ideas, and argue that it is paramount that the development and application of AI remain fundamentally human-centered. With an eye toward innovating solutions to meet human needs, enhancing the human quality of life and expanding the capacity for human thought and understanding, we propose a pathway to integrating AI into our most challenging and intellectually rigorous fields to the benefit of all humankind.

Explain it Like I'm 14

Overview

This paper asks a big question: As AI gets better and spreads everywhere, how should we use it so it helps people rather than harms them? The authors focus on mathematics as a clear example, because math has strong rules for what counts as “true.” They argue that AI is another step in the long history of human tools, but it must stay human-centered—designed to support human goals, understanding, and quality of life.

Key Objectives and Questions

The paper aims to explore a few simple but important questions:

- Why are AI tools being built and adopted so quickly, and who actually benefits from them?

- How will AI change the way we do math, especially proof and understanding?

- What should count as real “intelligence,” “creativity,” and “knowledge” when machines can generate convincing work?

- How can we trust AI-made math, give proper credit, and handle issues like data and ownership?

- What are the economic, social, and environmental costs of AI, and how do we balance them against the benefits?

Methods and Approach

This isn’t an experiment-heavy paper. Instead, the authors use:

- History and analogies: They compare AI to past technologies like the printing press and the Industrial Revolution. These tools changed how we work and share ideas, but they didn’t create ideas by themselves. Modern AI differs because it can generate the form that ideas take (text, images, or even math proofs).

- Thought experiments and classic tests: They mention ideas like the “Turing test” (can a machine talk like a human?) and the “Chinese room” (does following rules to speak a language mean true understanding?).

- Real examples from math:

  - “Proof assistants” (like Lean) are computer programs that check every step of a math proof, like a super strict robot grader.

  - “Autoformalization” is software that tries to turn human-written math into the precise language computers can check.

  - Famous computer-aided proofs (like the four-color theorem) show how tech can help solve tough problems, but also raise new questions about trust and verification.

  - The “smell test” is a mathematician’s gut feeling about whether a proof makes sense, a kind of intuition that AI doesn’t easily capture.

- Practical experiences: The authors work in math and art and have used AI tools themselves. They discuss specific risks (like “citogenesis,” where something gets cited so often by tools that it appears true even if it started as weakly supported or mistaken).
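To give a feel for what a proof assistant does, here is a minimal Lean 4 sketch. It is an illustration only, not an example from the paper, and the theorem names (`two_plus_two`, `my_add_comm`) are hypothetical. Lean accepts each theorem only if the proof after `:=` really establishes the statement before it.

```lean
-- A tiny statement with a proof: both sides compute to the same number,
-- so `rfl` ("this holds by computation") is accepted.
theorem two_plus_two : 2 + 2 = 4 := rfl

-- Reusing a library lemma: addition of natural numbers is commutative.
theorem my_add_comm (a b : Nat) : a + b = b + a := Nat.add_comm a b

-- A false claim does not get through. Uncommenting the next line
-- makes Lean report an error instead of accepting the "proof".
-- theorem bad : 2 + 2 = 5 := rfl
```

The check is strict but narrow: Lean confirms that the proof matches the statement as written, and says nothing about whether the statement is interesting or explained well.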

Main Findings and Why They Matter

Here are the paper’s key messages and why they’re important:

- AI can create convincing outputs without deep understanding. For example, a chatbot might write a “good-looking” proof that passes a computer check but doesn’t explain the big idea. This matters because humans learn from stories, motives, and insight—not just from correct answers.

- Formal checking helps, but it’s not enough. A proof assistant can certify a statement—but it doesn’t guarantee the statement matches the original intent. Even small misreadings of definitions (like whether “natural numbers” include 0) can flip a result.

- Math will adapt standards. Just like researchers learned to trust computer-aided proofs over time, the math community can build new rules for verifying AI-generated work—such as sharing code, separating computer parts from human reasoning, and making tests repeatable.

- There’s a risk of a flood of shallow work. If AI can mass-produce endless “technically correct” results, we might get lots of papers that don’t add real understanding or connect to bigger ideas. That could waste attention and weaken trust.

- Ethics and credit need new rules. Who owns AI-generated content? Who is responsible for errors? How do we properly cite work when hundreds of hidden contributors (training data, tool builders, users) are involved?

- The costs are huge and must be counted. AI uses a lot of money, energy, water, and skilled labor. If a small number of companies gain while many people lose jobs or opportunities, the social damage can be deep.

These points matter because they shape how we will think, learn, and work. If we get it wrong, we risk losing trust, fairness, and meaning. If we get it right, AI can amplify human abilities and speed up discovery.
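The point about definitions can be made concrete with a short Lean 4 sketch. It is an illustration under stated assumptions rather than the paper's own formalization: it uses core Lean's `Nat`, which includes 0, and the name `FermatIntended` is hypothetical. Read literally with that convention, "no natural number solutions" to a Fermat-type equation is false, because 0 is allowed.

```lean
-- With 0 counted as a natural number, the equation a^3 + b^3 = c^3
-- has a trivial solution: 0^3 + 1^3 = 1^3.
example : ∃ a b c : Nat, a ^ 3 + b ^ 3 = c ^ 3 :=
  ⟨0, 1, 1, by decide⟩

-- The statement a mathematician usually intends requires positive values.
-- This only *states* the claim; no proof is given here.
def FermatIntended : Prop :=
  ∀ n a b c : Nat, n > 2 → a > 0 → b > 0 → c > 0 → a ^ n + b ^ n ≠ c ^ n
```

A proof assistant would certify whichever version was typed in, so a human still has to confirm that the formal statement says what was meant.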

Implications and Potential Impact

The authors suggest a human-centered path forward:

- Keep people at the center. Build and deploy AI to meet real human needs, improve quality of life, and expand understanding—not just to cut costs or chase hype.

- Strengthen trust. Use tools like proof assistants carefully, and pair them with clear explanations that show insight and motivation, not just correctness.

- Set clear standards. Require transparency about AI use, fair credit and citation practices, and new norms for responsibility when AI contributes to research.

- Value understanding, not just answers. Encourage mathematicians (and other researchers) to share the “why”—the intuition, experiments, and failed paths that teach the field—not just polished final results.

- Measure and manage costs. Count the environmental and social impacts, and make sure benefits reach many people, not just a few.

In simple terms: AI can be a powerful helper and idea generator. But to make it truly good for everyone, we need rules, wisdom, and care. We should use AI to boost human thought—not replace it—and build systems that protect fairness, learning, and trust.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Based on the paper, the following unresolved issues merit focused research and concrete action:

- An operational, measurable definition of “human-centered AI” in research settings, including explicit objectives, success metrics, and governance rules.

- Concrete metrics and evaluation protocols for “insight” or “explanatory depth” in mathematical work beyond formal correctness (e.g., generalizability, narrative clarity, transfer to new problems).

- Benchmarks, datasets, and error taxonomies for autoformalization that capture semantic fidelity (e.g., conventions like whether 0 is in ℕ) and guard against mis-specification.

- Supply-chain security and provenance frameworks for formal mathematical libraries (e.g., Mathlib): auditing, version signing, adversarial tests, rollback plans for compromised definitions.

- Standards to represent the “penumbra” of a proof (motivation, heuristics, metamathematics) in machine-readable, citable form alongside formally verified core proofs.

- Publication and peer-review guidelines for AI-generated or AI-assisted mathematics (disclosure requirements, replicability artifacts, model/version documentation, dataset usage).

- Authorship, credit, and contribution protocols when AI tools, engineers, training data curators, and researchers all materially shape results; criteria for when a model is a “tool” vs. a “contributor.”

- Liability and accountability regimes for errors in AI-assisted research (apportioning responsibility among authors, institutions, and tool providers; insurance/indemnification models).

- Citation and provenance standards to prevent “citogenesis” loops (source-of-truth tagging, confidence scores, decontamination of training sets, bibliographic lineage graphs).

- Responsible integration of AI-generated conjectures and “deep research” outputs: thresholds for publication, evidentiary labels, versioning, and replication practices before community uptake.

- Empirical, longitudinal studies on how AI use in coursework and research affects mathematical intuition, transfer, and long-term skill development; evidence-based curricular interventions.

- Role design and incentives for an emerging division of labor in mathematics (formalizers, toolsmiths, conjecture curators, integrators), and how career structures should adapt.

- Community mechanisms to triage and curate a potential flood of technically correct but low-value results (prioritization criteria, topic taxonomies, meta-reviews, community-driven “value signals”).

- Accepted definitions and tests for “understanding,” “creativity,” and “explanatory depth” tailored to mathematical practice, replacing outdated benchmarks (e.g., Turing-style tests).

- Model-selection guidance for researchers (risk–benefit checklists, domain constraints, data-governance fit, reproducibility and environmental budgets) to decide when AI is appropriate at all.

- Intellectual property and licensing frameworks for training data and outputs in scientific contexts (compensation to rights holders, opt-in/opt-out registries, derivative rights for proofs).

- Fair-use boundaries in training and deployment: whether and how public interest or “magnitude of benefit” justifies broader use of copyrighted materials; governance and oversight mechanisms.

- Standardized AI impact assessments for research deployments that quantify distributional effects, well-being, opportunity loss (e.g., displaced entry-level roles), and externalities—not just monetary ROI.

- Environmental accounting and targets: agreed life-cycle assessment (energy, water, e-waste), reporting requirements, and criteria for models to at least offset their own footprint.

- Technical pathways to align data-driven models with physical-law constraints (physics-informed, neuro-symbolic hybrids), plus benchmarks to test truth-seeking beyond pattern-matching.

- Methods and incentives to capture and share the research process (failed attempts, heuristics, exploratory code) at scale, including credit for negative results and process artifacts.

- Benchmarks and leaderboards for autoformalization and proof generation that reward readability, modularity, and pedagogical value—not only correctness and brevity.

- Detection and mitigation of dataset contamination and feedback loops in research assistants/search tools (audits, watermarking, citation hygiene, active decontamination).

- Equity interventions to address compute/tool access gaps for students and researchers globally (public compute pools, open models, shared formal libraries, training programs).

- Pedagogical standards and assessment designs resilient to LLM outsourcing (e.g., oral defenses, construct-and-critique tasks, proof reconstruction from perturbed premises).

- Governance models to avoid concentration of AI infrastructure power (public or cooperative clouds, antitrust guardrails, shared community infrastructure for formal verification).

- Practical ethical checklists that operationalize “benefit to humanity” for each AI use case (stakeholder mapping, harm forecasting, red-teaming, sunset clauses, continuous monitoring).

- Lifecycle strategies for decommissioning/retrofitting AI infrastructure to minimize stranded environmental and social costs.

- Empirical validation of the “mathematics-as-sandbox” claim: case studies that test whether norms and tools proven in math translate to other disciplines without unintended harms.

- Research on how trust and the informal “smell test” evolve in a mixed human–AI literature, and what new reputational signals (e.g., curated badges, provenance scores) effectively guide attention.
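One of the gaps above mentions "bibliographic lineage graphs" as a way to catch citogenesis loops. The following Python sketch shows one possible reading of that idea: treat citations as a directed graph and search for a cycle with a depth-first traversal. The function name `find_citation_cycle` and the example source names are hypothetical, and a real system would also need reliable citation data, which this sketch simply assumes.

```python
from typing import Dict, List, Optional


def find_citation_cycle(cites: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one circular citation chain if any exists, else None.

    `cites[x]` is the list of sources that x cites (hypothetical data model).
    """
    UNSEEN, IN_PROGRESS, DONE = 0, 1, 2
    state: Dict[str, int] = {}
    path: List[str] = []  # current chain of citations being followed

    def visit(node: str) -> Optional[List[str]]:
        state[node] = IN_PROGRESS
        path.append(node)
        for target in cites.get(node, []):
            target_state = state.get(target, UNSEEN)
            if target_state == IN_PROGRESS:
                # We reached a source that is already on the current chain.
                return path[path.index(target):] + [target]
            if target_state == UNSEEN:
                found = visit(target)
                if found is not None:
                    return found
        path.pop()
        state[node] = DONE
        return None

    for node in list(cites):
        if state.get(node, UNSEEN) == UNSEEN:
            found = visit(node)
            if found is not None:
                return found
    return None


# Hypothetical example: an AI summary, a wiki article, and a news story
# that end up citing one another, plus an independent primary source.
example_graph = {
    "ai_summary": ["wiki_article"],
    "wiki_article": ["news_story"],
    "news_story": ["ai_summary"],
    "primary_paper": [],
}
loop = find_citation_cycle(example_graph)
```

A detected loop is only a warning sign. Deciding whether the claim inside the loop is actually supported still requires tracing it back to a primary source.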

Practical Applications

Immediate Applications

Below are actionable uses that can be piloted or deployed now, with sector links, likely tools/workflows, and key dependencies or assumptions.

- AI-augmented formal verification for research and safety-critical software

- Sectors: academia (mathematics, CS), software, robotics, aerospace, finance (risk), hardware design

- What: Use LLMs to draft proof sketches/specifications and delegate machine-checkable parts to proof assistants; isolate computational lemmas; publish replicable code and “checksums”

- Tools/workflows: Lean/Mathlib, Coq/Rocq, Isabelle, Dafny/TLA+, CI pipelines (GitHub Actions), Overleaf or IDE plugins; structured “formal core + human narrative” submissions

- Assumptions/dependencies: Adequate library coverage; team skill with proof assistants; journal/regulator acceptance; compute cost for checking; governance for dependency updates

- AI-assisted literature review with provenance guardrails

- Sectors: academia, corporate R&D

- What: Employ “deep research” agents to surface obscure results, require human vetting, log prompts and sources, and prevent feedback loops (citogenesis) by excluding one’s summaries from the tool’s future training/index

- Tools/workflows: Elicit, Scite, Semantic Scholar, Zotero plugins; provenance tags and confidence scores; internal review checklists

- Assumptions/dependencies: Team capacity for verification; tool access; institutional norms for citing AI-assisted searches

- AI disclosure and citation practices for scholarly communication

- Sectors: academia, publishing, policy

- What: Require AI Contribution Statements (model, version, prompts, scope of assistance), cite models as tools, and deposit prompts/system settings with submissions

- Tools/workflows: Journal policy templates; submission portals with AI-use fields; ORCID/CRediT extensions for AI involvement

- Assumptions/dependencies: Publisher buy-in; community norms; legal/IP guidance on prompt and model disclosure

- Classroom assessment redesign to preserve learning while permitting AI

- Sectors: education (secondary, tertiary)

- What: Structure assignments around oral defenses, process logs, randomized variants, and “smell test” critiques of AI-generated solutions; allow constrained AI use with reflection

- Tools/workflows: LMS rubrics, viva-style checkpoints, version-controlled notebooks; AI-use reflection forms

- Assumptions/dependencies: Instructor training; departmental policies; academic integrity enforcement

- Reproducibility and supply-chain security for formal libraries and computational artifacts

- Sectors: academia, industry (software/hardware)

- What: Pin versions and lock dependencies for formal libraries; sign artifacts; provide checksums and result replication scripts for computational parts

- Tools/workflows: Nix/Guix, Sigstore/code signing, reproducible builds, artifact DOIs, provenance SBOMs

- Assumptions/dependencies: Upstream library cooperation; CI infrastructure; reviewer competence with reproducibility workflows

- Human-centered deployment checklists for AI rollouts

- Sectors: industry, public sector

- What: Pre-deployment assessment that maps intended beneficiaries, quantifies human benefit vs harm, requires human-in-the-loop for consequential decisions, and documents energy/water use

- Tools/workflows: Risk–benefit templates, Responsible AI checklists, socio-technical impact assessment, CodeCarbon/ML emissions trackers, carbon-aware scheduling

- Assumptions/dependencies: Executive sponsorship; access to operations data; incentives aligned to human-centered KPIs

- Environmental reporting for model training and operations

- Sectors: industry, regulators, sustainability

- What: Report energy, carbon, and water per training run and per 1,000 inferences; include site-level mitigations and grid mix

- Tools/workflows: Metering integrations, standardized reporting schemas, third-party audits

- Assumptions/dependencies: Data center cooperation; accepted accounting standards; customer/regulatory demand

- At-work “AI-use boundary” and data-protection policies

- Sectors: industry, government

- What: Define allowed tasks for AI (e.g., drafting, summarization) and prohibited tasks (e.g., confidential data ingestion) with mandatory human oversight for customer-facing outputs

- Tools/workflows: Policy rollouts, data-loss prevention, model access control, logging and review

- Assumptions/dependencies: Legal/privacy frameworks; security tooling; employee enablement

- Dual-artifact publishing: formal proof + expository penumbra

- Sectors: academia (mathematics, theoretical CS)

- What: Require a machine-checked formal core alongside a human-readable narrative that explains motivation, heuristics, and generalization

- Tools/workflows: Journal and conference guidelines; artifact track; repository submission (e.g., Zenodo)

- Assumptions/dependencies: Editorial standards; community incentives for exposition; availability of proof assistants

- AI-assisted peer-review triage (not replacement)

- Sectors: academia, publishing

- What: LLM-based pre-scan to surface common red flags (e.g., flawed citations, internal contradictions) and summarize for reviewers; reviewers remain decision-makers

- Tools/workflows: Reviewer dashboards; “bad smell” checklists encoded as prompts; audit logs

- Assumptions/dependencies: Data privacy; reviewer buy-in; careful calibration to avoid bias

- Personal research hygiene for AI users

- Sectors: daily life, knowledge work

- What: Use AI for brainstorming/translation/summarization with explicit source tracking and mandatory cross-checking against primary sources before reuse

- Tools/workflows: Note-taking apps with citation fields, browser extensions for source verification, reading checklists

- Assumptions/dependencies: User discipline; tool usability; access to primary literature
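The reproducibility item above suggests publishing checksums alongside computational artifacts. As a minimal sketch of what that could look like, the Python below records a SHA-256 digest for every file in an artifact directory and later reports any file that is missing or has changed. The function names (`sha256_of`, `build_manifest`, `verify_manifest`) are hypothetical, and the sketch only detects changes: it does not sign anything or pin dependency versions.

```python
import hashlib
from pathlib import Path
from typing import Dict, List


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root: Path) -> Dict[str, str]:
    """Map each file under `root` (by relative POSIX path) to its digest."""
    return {
        p.relative_to(root).as_posix(): sha256_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def verify_manifest(root: Path, manifest: Dict[str, str]) -> List[str]:
    """Return relative paths that are missing or whose content changed."""
    problems = []
    for rel, expected in manifest.items():
        target = root / rel
        if not target.is_file() or sha256_of(target) != expected:
            problems.append(rel)
    return problems
```

An author would publish the output of `build_manifest` with the paper, and a reviewer would call `verify_manifest` on a downloaded copy. An empty list means every listed file matches.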

Long-Term Applications

The following require advances in research, scaling, standardization, or cultural adoption before broad deployment.

- End-to-end autoformalized research pipelines (“proof notebooks”)

- Sectors: academia (math, CS), regulated software/hardware

- What: Authoring environments that convert informal math/specs to formal proofs, verify them, and link artifacts to narrative automatically

- Tools/workflows: Autoformalization LLMs tightly coupled to Lean/Coq/Rocq; LaTeX/Overleaf integration; literate-programming style “proof notebooks”

- Assumptions/dependencies: Major gains in autoformalization; broader formal library coverage; community training and standards

- AI co-researchers for conjecturing with metamathematical annotations

- Sectors: academia

- What: Systems that propose conjectures, provide experimental evidence, suggest proof strategies, and label axiomatic dependencies (reverse mathematics)

- Tools/workflows: Symbolic–neural hybrids; search over proof graphs; integrated experiment modules

- Assumptions/dependencies: Reliable conjecture ranking; norms for citing unverified AI-generated conjectures; datasets of heuristics and failed attempts

- Standards bodies for formal verification artifacts across industries

- Sectors: policy, aerospace, medical devices, automotive, finance

- What: Regulatory acceptance pathways for proofs/specs machine-verified to agreed standards; certification of formal libraries and toolchains

- Tools/workflows: ISO/IEC-style specifications; conformance test suites; third-party certification labs

- Assumptions/dependencies: Cross-industry consensus; liability frameworks; secure supply-chain governance for formal ecosystems

- Provenance and anti-citogenesis infrastructure

- Sectors: academia, media, search platforms

- What: Web-scale provenance graphs linking claims to primary sources and detecting AI-induced feedback loops; “do-not-train-on” tagging for derivative summaries

- Tools/workflows: Content-signing, Knowledge Graphs, cryptographic attestations, crawler rules

- Assumptions/dependencies: Platform cooperation; open standards for provenance; incentives to prefer primary sources

- Secure governance for formal math/software libraries

- Sectors: academia, open-source foundations, industry

- What: Risk management for “hacks” via subtle definition changes; mandatory reviews, code signing, and reproducibility enforcement for canonical libraries (e.g., Mathlib)

- Tools/workflows: Maintainer councils, secure CI, reproducible builds, incident response

- Assumptions/dependencies: Sustained funding; stewardship agreements; contributor vetting

- Value–impact accounting embedded in AI deployment and policy

- Sectors: industry, regulators, public sector

- What: Tie approvals, incentives, or procurement eligibility to quantified human-benefit metrics (access, well-being, safety) and documented harms/mitigations

- Tools/workflows: Socioeconomic impact models; third-party audits; longitudinal monitoring

- Assumptions/dependencies: Agreement on metrics; data availability; political will

- Workforce transition and new professional roles

- Sectors: education, industry, government

- What: Training pipelines for “AI proof engineers,” “AI provenance officers,” and human-in-the-loop supervisors; credentialing and apprenticeships

- Tools/workflows: Micro-credentials, professional standards, funded reskilling programs

- Assumptions/dependencies: Employer demand; public/private funding; standardized competencies

- Environmental self-offsetting AI deployments

- Sectors: energy, logistics, industry

- What: Require large models to demonstrably reduce emissions/water/energy in operations beyond their own footprint (e.g., grid optimization, routing, HVAC control)

- Tools/workflows: Measurement and verification protocols; causal impact evaluation

- Assumptions/dependencies: Robust baselines; access to operational levers; trustworthy auditing

- Cross-disciplinary journals and datasets for mathematical heuristics

- Sectors: academia, publishing

- What: Outlets and repositories that capture motivation, failed attempts, and exploratory data alongside proofs to train future AI and inform human intuition

- Tools/workflows: Structured lab notebooks; standardized schema for heuristics; open datasets

- Assumptions/dependencies: Cultural acceptance of publishing negative/heuristic results; funding for curation

- Consumer-facing provenance and AI-use indicators

- Sectors: daily life, media platforms, education

- What: Tools that surface provenance trails and AI involvement for articles, images, and claims; user alerts for likely feedback loops or weak sourcing

- Tools/workflows: Browser integrations, platform-native labels, source graphs

- Assumptions/dependencies: Reliable detection methods; platform cooperation; UX that supports comprehension

- Attention-allocation and trust scoring for research discovery

- Sectors: academia, funding agencies

- What: Systems that help triage the growing volume of AI-assisted papers by combining formal correctness, author reputation, empirical support, and narrative value

- Tools/workflows: Multifactor ranking algorithms; community-curated lists; reviewer marketplaces

- Assumptions/dependencies: Agreement on scoring dimensions; safeguards against gaming; openness of metadata

Each application reflects the paper’s core themes: center human benefit, combine formal verification with transparent narrative, guard against provenance failures and “odorless” but unhelpful outputs, and build governance and educational practices that preserve understanding alongside automation.
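The environmental reporting and self-offsetting items above come down to simple accounting, which the following Python sketch illustrates. Everything in it is an assumption made for illustration: the class and function names (`Footprint`, `per_thousand`, `net_carbon_kg`) are hypothetical and the figures are made up, not measurements from the paper or from any real system.

```python
from dataclasses import dataclass


@dataclass
class Footprint:
    """Resource use over some reporting period (hypothetical schema)."""
    energy_kwh: float
    carbon_kg: float
    water_l: float

    def per_thousand(self, inference_count: int) -> "Footprint":
        """Normalize the totals to a 'per 1,000 inferences' figure."""
        if inference_count <= 0:
            raise ValueError("inference_count must be positive")
        return Footprint(
            energy_kwh=self.energy_kwh * 1000 / inference_count,
            carbon_kg=self.carbon_kg * 1000 / inference_count,
            water_l=self.water_l * 1000 / inference_count,
        )


def net_carbon_kg(training: Footprint, operations: Footprint,
                  avoided_carbon_kg: float) -> float:
    """Carbon emitted by the model minus carbon it demonstrably avoided.

    A negative result means the deployment more than offsets itself.
    """
    return training.carbon_kg + operations.carbon_kg - avoided_carbon_kg


# Made-up figures, for illustration only.
training_run = Footprint(energy_kwh=800.0, carbon_kg=300.0, water_l=1500.0)
monthly_ops = Footprint(energy_kwh=50.0, carbon_kg=20.0, water_l=100.0)

ops_per_thousand = monthly_ops.per_thousand(inference_count=10_000)
net = net_carbon_kg(training_run, monthly_ops, avoided_carbon_kg=400.0)
```

The hard part in practice is not the arithmetic but the inputs: the `avoided_carbon_kg` figure depends on a trustworthy baseline, which is exactly the dependency the list above flags.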

Glossary

- AI effect: The tendency for tasks once achieved by AI to no longer be seen as markers of intelligence. "The ``AI effect'' also was recognized around this time; for instance, the ability to perform well at chess was considered a good measure of intelligence until the advent of chess engines which could ``mindlessly'' outperform chess masters through mechanical exploration of game trees, at which point the ``chess test'' for intelligence became largely abandoned."

- AlphaProof: An AI system that generates formally verified mathematical proofs. "Consider for instance the proofs generated by AlphaProof \cite{alpha} to problems in the 2024 International Mathematical Olympiad, which contained numerous redundant or inexplicable steps but nevertheless were formally verified to be correct solutions."

- autoformalization: Automatic conversion of informal mathematical text into a formal proof language. "for instance by integrating AI tools to achieve partial (or possibly even complete) ``autoformalization'' \cite{wu}."

- automated theorem prover: Software that attempts to prove theorems automatically via formal logic. "to the more traditional good-old fashioned AI (GOFAI) (such as automated theorem provers or chess engines), which can solve narrow ranges of problems by applying precise mathematical rules."

- axiom of choice: A set-theoretic principle asserting the existence of choice functions for families of nonempty sets. "which seeks to understand which axioms of mathematics (e.g., the axiom of choice, or the law of the excluded middle) are actually needed to establish a given result."

- Bourbaki era: A period influenced by the Nicolas Bourbaki collective emphasizing unified, abstract, and rigorous exposition in mathematics. "the ``Bourbaki era'' \cite{mashaal} of having a central authority prescribe the orthodox practice of mathematics is already decades in the past"

- Chinese room: Searle’s thought experiment arguing that symbol manipulation alone doesn’t constitute understanding. "Searle's ``Chinese room'' thought-experiment \cite{searle}, regarding the question of whether a mechanical device programmed to converse in Chinese truly understands the language, dates back to 1980."

- citogenesis: A circular citation phenomenon where repeated referencing creates false credibility. "AI is also on the verge of creating potentially widespread circular citation loops, a process humorously dubbed ``citogenesis'' by Randall Munroe"

- deepfakes: Synthetic media that convincingly imitates real images, audio, or video. "a flood of deepfakes and slop has followed, sloshing through our digital third spaces."

- diffusion model: A generative model that learns to reverse a noise process to synthesize data such as images. "or diffusion models that can generate images and other media"

- Fair Use: A U.S. legal doctrine permitting limited use of copyrighted material without permission under certain conditions. "So far, much of the accumulation of training data for the LLMs has been argued (by their developers) as falling under the ``Fair Use'' doctrine."

- Fermat's last theorem: The statement that no nonzero integers satisfy $a^n + b^n = c^n$ for $n > 2$. "For instance, Fermat's last theorem asserts that for any natural number $n$ greater than $2$, there are no natural number solutions to the equation $a^n + b^n = c^n$;"

- first-order logic: A formal logical system that quantifies over individuals but not over predicates or functions. "Such interpretations and impressions of the mathematical text generally are not captured in the official frameworks of rigorous mathematics, such as first-order logic or set theory;"

- formal proof assistant: Software that mechanically checks proofs written in a precise formal language. "through the more widespread deployment of formal proof assistants (such as Lean or Rocq) which can automatically check the validity of a mathematical argument if it is written in a certain precise computer language \cite{toffoli}."

- formal verification: Mathematically proving that a system or statement satisfies a formal specification. "Firstly, formal verification only certifies that a formalized argument establishes a formal mathematical statement, but does not rule out errors in translation between the formal statement and the original intended statement."

- four color theorem: The theorem that any planar map can be colored using at most four colors so adjacent regions differ. "Large computer-assisted proofs, such as the proof of the four color theorem \cite{appel} or the Kepler conjecture \cite{kepler}, were initially quite controversial, being impractical to fully check by hand;"

- fourth paradigm: The data-intensive paradigm of scientific discovery emphasizing large-scale data analysis. "the ``fourth paradigm'' of data-driven mathematics \cite{fourth} could conceivably be so successful as to crowd out the more traditional paradigms of empirical evidence, theoretical reasoning, and computational numerics"

- GOFAI: Good-old-fashioned AI; symbolic, rule-based approaches to AI. "to the more traditional good-old fashioned AI (GOFAI) (such as automated theorem provers or chess engines), which can solve narrow ranges of problems by applying precise mathematical rules."

- Kepler conjecture: The statement about the densest packing of equal spheres in three-dimensional space. "Large computer-assisted proofs, such as the proof of the four color theorem \cite{appel} or the Kepler conjecture \cite{kepler}, were initially quite controversial"

- LLM: A machine learning model trained on vast text corpora to predict and generate text. "such as LLMs that can process complex text"

- law of the excluded middle: The logical principle that for any proposition P, either P or not P is true. "which seeks to understand which axioms of mathematics (e.g., the axiom of choice, or the law of the excluded middle) are actually needed to establish a given result."

- Lean: An interactive theorem prover used for formalizing and verifying mathematical proofs. "through the more widespread deployment of formal proof assistants (such as Lean or Rocq) which can automatically check the validity of a mathematical argument if it is written in a certain precise computer language \cite{toffoli}."

- Mathlib: A large community-driven library of formalized mathematics for Lean. "Lean's Mathlib project"

- metamathematics: The study of mathematics itself using mathematical methods, especially properties of formal systems. "allowing for the metamathematics of a result to be rigorously discussed and explored simultaneously with the mathematical result itself."

- Peano Axioms: A collection of axioms intended to formally characterize the natural numbers. "A typical example was the claim by Nelson \cite{nelson} in 2011 that the Peano Axioms were logically inconsistent;"

- prisoner's dilemma: A game-theoretic scenario where individual rational choices can lead to worse collective outcomes. "The ``prisoner's dilemma'' of such competition has pressured many individuals and organizations to experimentally adopt these tools as hastily as possible"

- reverse mathematics: A program studying which axioms are necessary to prove particular theorems. "One example of such metamathematics is the reverse mathematics (see, e.g, \cite{stillwell}) of a theorem, which seeks to understand which axioms of mathematics (e.g., the axiom of choice, or the law of the excluded middle) are actually needed to establish a given result."

- Rocq: A proof assistant (the rebranded successor to Coq) used for formal verification of mathematics. "through the more widespread deployment of formal proof assistants (such as Lean or Rocq) which can automatically check the validity of a mathematical argument if it is written in a certain precise computer language \cite{toffoli}."

- Turing test: A test of machine intelligence based on indistinguishability from human conversation. "The famed ``Turing test'' of whether an AI could converse in a manner indistinguishable from humans has similarly been effectively passed by modern LLMs (see, e.g., \cite{turing}), relinquishing its former status as a ``gold standard'' for artificial intelligence."
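Several glossary entries (reverse mathematics, the axiom of choice, the law of the excluded middle) concern which axioms a result depends on. As a small illustration, and not something taken from the paper, Lean 4's `#print axioms` command reports the axioms a given theorem relies on. The theorem names below are hypothetical.

```lean
-- The law of the excluded middle, taken from Lean's classical library.
theorem em_example (p : Prop) : p ∨ ¬p := Classical.em p

-- Lists the axioms this theorem depends on; in Lean 4 the list
-- should include `Classical.choice`.
#print axioms em_example

-- A purely constructive fact: swapping the two halves of a conjunction.
theorem and_swap_example (p q : Prop) (h : p ∧ q) : q ∧ p := ⟨h.2, h.1⟩

-- Should report that no axioms are needed.
#print axioms and_swap_example
```

This is far coarser than a real reverse-mathematics analysis, but it shows how a formal system can track axiomatic dependencies alongside the result itself.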
