- The paper introduces a bounded autonomy approach via three interfaces—Converge, Ground, and Whisper—that balance LLM autonomy with player-guided gameplay.

- The paper demonstrates that probabilistic reply-chain decay reduces mean chain depth from 10.0 to 4.4, ensuring stable and socially coherent interactions.

- The paper shows that soft player steering achieves 86.7% intervention alignment, validating robust action grounding and safety in live multiplayer settings.

Bounded Autonomy for LLM Characters in Live Multiplayer Games

LLMs enable open-ended, context-sensitive behavior and naturalistic dialogue for game characters. However, integrating LLM-driven agents into live multiplayer games introduces critical runtime control problems: balancing agent autonomy with playability, ensuring agent actions remain executable, keeping multi-character social interaction coherent, and enabling players to subtly guide their agents without direct command-and-control. Naively granting LLM agents full behavioral autonomy leads to disruptions, including socially incoherent action propagation, invalid or unsafe actions, and a dichotomy between excessive micromanagement and unplayable unpredictability. The paper formalizes these as manifestations of a more general bounded autonomy problem and proposes a three-interface control architecture as a system-level solution.

The Bounded Autonomy Architecture

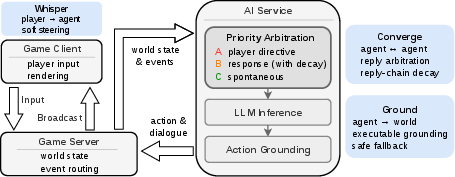

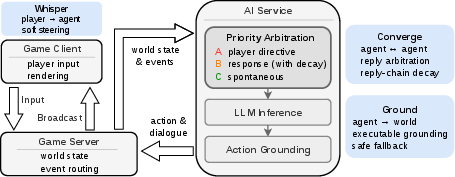

Bounded autonomy is realized via three runtime interfaces: Converge (agent-agent arbitration and cascaded interaction bounding), Ground (embedding-constrained action grounding with robust fallback), and Whisper (player soft steering).

Figure 1: System architecture: game client for input/render, server for state/event routing, and stateless AI for arbitration, LLM inference, and grounding. Interfaces: Whisper (player-to-agent soft steering), Converge (agent-to-agent arbitration/decay), Ground (agent-to-world action grounding with fallback).

The architecture decouples the LLM-driven agent from any unrestricted ability to modify the world. Converge controls reply propagation and focus so that exchanges stay socially legible and cascades stay contained; Ground ensures that only valid, semantically matched actions are executed; and Whisper gives players real-time, lightweight influence over agent behavior without overriding autonomy.

Converge: Controlling Social Propagation

The Converge interface implements two key control primitives:

- Reply-Focus Arbitration: Determines the most socially relevant stimulus when multiple candidates compete. It is operationalized by relationship-biased routing, which favors interlocutors with the strongest ties, consistent with the conversation analysis and HCI literature.

- Probabilistic Reply-Chain Decay: A stochastic reply continuation function attenuates propagation depth, using a decay coefficient (e.g., α=0.2) to reduce the probability of further replies with each depth increment. This mechanism replaces hard caps with distributional bounds, ensuring conversations in live rooms do not devolve into endless or high-frequency cascades.
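The two primitives above can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper's implementation: the geometric form `(1 - alpha) ** depth` is an assumed decay function (the source gives only the example coefficient α=0.2), `MAX_DEPTH` is an assumed safety ceiling, and the names `continue_probability`, `pick_reply_focus`, and `simulate_chain` are hypothetical.

```python
import random

ALPHA = 0.2      # example decay coefficient from the text
MAX_DEPTH = 10   # assumed hard ceiling, kept only as a safety net


def continue_probability(depth: int, alpha: float = ALPHA) -> float:
    """Probability that a reply at `depth` triggers another reply.

    Assumed geometric form; the source does not specify the exact function.
    """
    return (1.0 - alpha) ** depth


def pick_reply_focus(tie_strengths: dict) -> str:
    """Relationship-biased routing: reply to the speaker with the strongest tie."""
    return max(tie_strengths, key=tie_strengths.get)


def simulate_chain(rng: random.Random, alpha: float = ALPHA) -> int:
    """Return the depth at which one reply chain stops."""
    depth = 1
    while depth < MAX_DEPTH and rng.random() < continue_probability(depth, alpha):
        depth += 1
    return depth
```

In this sketch, setting `alpha=0` makes every chain run to `MAX_DEPTH`, which corresponds to a decay-disabled condition, while any positive `alpha` makes deep chains progressively less likely without imposing a fixed cutoff below the ceiling.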

Empirical results show a sharp contrast between the two conditions. With decay disabled, all trials hit the maximum chain depth (mean = 10.0), leaving no room for autonomous behaviors. With decay enabled, chains end well before the maximum (mean = 4.4, SD = 1.3), and the autonomous event share rises to 0.77.

Ground: Embedding-Based Action Mapping

LLM outputs are mapped to a finite set of behavior bundles encapsulating animation, dialog, and world-action pairs. Free-form intent expressions (from the agent or player) are matched to executable bundles using sentence-level embeddings (all-mpnet-base-v2), with fallback to safe actions if similarity confidence is low. Emotional state constraints further prune candidate sets, ensuring alignment of intent and affect expression. This approach prevents invalid and semantically unsafe actions while supporting extensible behaviors.
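A minimal sketch of this grounding step, assuming cosine similarity with a confidence threshold. The toy bag-of-words `embed` function is only a stand-in for the sentence-level embeddings (all-mpnet-base-v2) named above so that the example runs without external dependencies; the bundle names, emotion tags, `FALLBACK` action, and `THRESHOLD` value are all hypothetical.

```python
import math
from collections import Counter

# Hypothetical bundles: name -> (description, emotions under which it is allowed).
BUNDLES = {
    "wave_hello": ("wave and say hello to someone", {"happy", "neutral"}),
    "sit_down": ("sit down on a chair and rest", {"neutral", "sad", "happy"}),
    "angry_shout": ("shout angrily at someone", {"angry"}),
}
FALLBACK = "idle"   # assumed safe default action
THRESHOLD = 0.35    # assumed similarity cutoff


def embed(text: str) -> Counter:
    """Toy bag-of-words vector; a stand-in for a sentence-embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def ground(intent: str, emotion: str) -> str:
    """Map a free-form intent to a bundle name, or to FALLBACK when unsure."""
    query = embed(intent)
    # Prune bundles that the current emotional state does not permit.
    candidates = {n: d for n, (d, emos) in BUNDLES.items() if emotion in emos}
    if not candidates:
        return FALLBACK
    best = max(candidates, key=lambda n: cosine(query, embed(candidates[n])))
    score = cosine(query, embed(candidates[best]))
    return best if score >= THRESHOLD else FALLBACK
```

Here `ground("wave hello to my friend", "happy")` selects `wave_hello`, while an out-of-pool intent such as `ground("teleport to the moon", "neutral")` falls below the threshold and returns the fallback. Because the output is always either a known bundle or the fallback, the LLM can never trigger an action the game cannot execute.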

Evaluation over a manually created probe set yields:

- 87% top-1 and 96% top-3 accuracy for talk-bundles,

- 84% top-1 accuracy for to-self bundles (with near-perfect coverage for non-fantastical actions),

- 63% top-1 for non-talk bundles, with most errors attributable to semantic proximity among physical-contact gestures.

Failure cases are semantically interpretable (e.g., the selected action is adjacent to, but not identical with, the intended one), and the low-confidence fallback keeps mismatches from producing unsafe actions.
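For reference, top-k accuracy over a probe set can be computed as in the sketch below. The function name `top_k_accuracy` and the example bundle names used with it are hypothetical; the paper's own evaluation code is not shown in the source.

```python
def top_k_accuracy(ranked_predictions: list, gold: list, k: int) -> float:
    """Fraction of probes whose gold bundle is among the first k ranked candidates."""
    if len(ranked_predictions) != len(gold):
        raise ValueError("predictions and gold labels must have the same length")
    if not gold:
        return 0.0
    hits = sum(1 for ranked, g in zip(ranked_predictions, gold) if g in ranked[:k])
    return hits / len(gold)
```

A probe whose gold label is `hug` but whose top-ranked candidate is `pat_shoulder` counts as a top-1 miss and a top-3 hit when `hug` appears second, which is the kind of near-neighbor confusion among physical-contact gestures described above.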

Whisper: Soft Player Steering

Whisper provides player-to-agent steering without total override. Player inputs are interpreted as high-priority, soft nudges influencing the agent's next action via the same bundle selection/grounding pipeline. Whisper's "bias not dictate" principle preserves emergent agent authorship while enabling players to course-correct or inject narrative nuance in real time.

A 30-case benchmark shows 86.7% intervention alignment (26 of 30 cases). All to-other (interpersonal) cases succeed, while to-self cases are limited by the expressiveness of the to-self action bundle pool. The effect is causal rather than incidental: swapping the whisper direction flips the realized behavior in the same context.
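One way to picture "bias not dictate" is as a weighted blend of the agent's own bundle scores with whisper-derived scores. This is an assumed mechanism for illustration only: the source says whispers pass through the same selection/grounding pipeline but does not give a scoring formula, and `select_bundle`, `WHISPER_WEIGHT`, and the bundle names in the usage note are hypothetical.

```python
from typing import Optional

WHISPER_WEIGHT = 0.6  # hypothetical bias strength; below 1.0 keeps the agent's intent in play


def select_bundle(agent_scores: dict,
                  whisper_scores: Optional[dict] = None,
                  weight: float = WHISPER_WEIGHT) -> str:
    """Pick the bundle with the highest blended score."""
    def blended(name: str) -> float:
        agent = agent_scores[name]
        if whisper_scores is None:
            return agent  # no whisper: the agent decides alone
        return (1.0 - weight) * agent + weight * whisper_scores.get(name, 0.0)

    return max(agent_scores, key=blended)
```

With agent scores `{"comfort": 0.7, "tease": 0.5, "leave": 0.2}`, a whisper favoring `tease` flips the choice to `tease`, and a whisper favoring `comfort` keeps `comfort`. Lowering `weight` lets a strong agent preference survive a weak nudge, so the whisper shifts the outcome without fully overriding it.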

System Implementation Details

Every agent runs on a fixed-interval behavior heartbeat (every 40 s), ingesting the serialized room state and event history on each tick. The stateless AI service handles arbitration, grounding, and response generation. The architecture prioritizes:

- External player steering (Priority A > B > C).

- Synchronization of actions within the world state via the game server.

- Consistency and deduplication via agent-level locks and time-gated message suppression.
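The deduplication item above can be sketched as a per-agent gate that combines a non-blocking lock with a time window. This is an illustrative design, not the deployed one: the class name `AgentGate`, the 5-second `suppress_window`, and the injectable `clock` are assumptions introduced here.

```python
import threading
import time


class AgentGate:
    """Per-agent lock plus a time gate that drops repeated messages."""

    def __init__(self, suppress_window: float = 5.0, clock=time.monotonic):
        self._lock = threading.Lock()
        self._window = suppress_window  # hypothetical suppression window in seconds
        self._clock = clock             # injectable so the gate can be tested
        self._last_sent = {}            # message text -> time it was last emitted

    def try_emit(self, message: str) -> bool:
        """Return True if the message may be sent, False if busy or suppressed."""
        if not self._lock.acquire(blocking=False):
            return False  # another update for this agent is still in flight
        try:
            now = self._clock()
            last = self._last_sent.get(message)
            if last is not None and now - last < self._window:
                return False  # same message seen too recently
            self._last_sent[message] = now
            return True
        finally:
            self._lock.release()
```

A stateless AI service can be invoked more than once for the same heartbeat, so a gate of this kind on the server side would keep an agent from speaking the same line twice in quick succession.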

Practical and Theoretical Implications

Practically, this architecture demonstrates live deployment feasibility for LLM-controlled, player-owned agents in multiplayer social games, realizing:

- Synchronous, player-steerable agent behavior,

- Robust containment of disruptive action cascades,

- Action safety and social legibility,

- Lightweight, player-accessible real-time intervention.

Theoretically, the work reframes LLM character integration as a runtime control problem, highlighting that playability, autonomy, and social coherence require systemic mechanisms beyond improved generative models or richer cognitive architectures (as explored in prior multi-agent simulation work [park2023generative], [sid2024], [aivilization2026]).

Limitations and Future Directions

Current limitations include:

- Hand-tuned, static decay parameters; adaptive or learned decay could improve flexibility across diverse room contexts and episode lengths.

- Simple, flat embedding-based retrieval for action mapping: hierarchical or context-aware retrieval architectures could resolve ambiguity for dense or semantically similar bundles.

- Evaluation sets are curated rather than in-the-wild; live play-derived benchmarks could better capture ecological validity.

- Whisper’s efficacy is ultimately bounded by the underlying LLM’s adherence to soft guidance and by the coverage of the behavior bundle pools.

- The architecture does not enhance long-horizon cognition, memory, or personality continuity per agent.

Future work should address human factors in authorship/influence tradeoff, extend action grounding with domain-adapted or contrastive embeddings, and formalize arbitration policy optimization under real play dynamics.

Conclusion

Bounded autonomy provides a technically viable control architecture that lets LLM characters function as socially coherent, executable, and player-steerable agents in live multiplayer environments. Its three interfaces (Converge, Ground, and Whisper) address cascading reply control, consistent action grounding, and lightweight player intervention, respectively, covering both practical deployment concerns and core interaction design challenges for LLM-character integration. The system offers a template for future multiplayer games with controllable, emergent, LLM-driven character play.