- The paper introduces a dual-factor framework that decouples technical feasibility from risk to assess AI automation potential.

- It employs task decomposition of 2,087 work activities across 923 occupations using LLM ensembles and HITL validation.

- Findings reveal a cognitive risk asymmetry in which human experts' risk assessments systematically exceed AI estimates, with implications for labor reallocation and compliance premiums.

Tech-Risk Boundaries in Occupational Automation: An Expert Review of "Bounded by Risk, Not Capability"

Introduction

"Bounded by Risk, Not Capability: Quantifying AI Occupational Substitution Rates via a Tech-Risk Dual-Factor Model" (2604.04464) scrutinizes the dominant paradigm in AI-driven labor market analysis. The authors argue that existing capability-centric models of occupational exposure to LLMs are inadequate, and they introduce risk-based constraints that are pivotal to actual AI integration. Their core argument is that technical feasibility alone cannot predict labor disruption: institutional, legal, and physical risk factors create critical bottlenecks in real-world adoption, and models that evaluate only algorithmic reach cannot capture them. Through a granular, task-decomposed analysis and a formal Tech-Risk Dual-Factor framework, the paper moves the discourse from static technical capability metrics to a decision-theoretic paradigm that aligns with behavioral economics, management science, and regulatory realities.

Methodological Framework: Task Decomposition and Dual-Factor Quantification

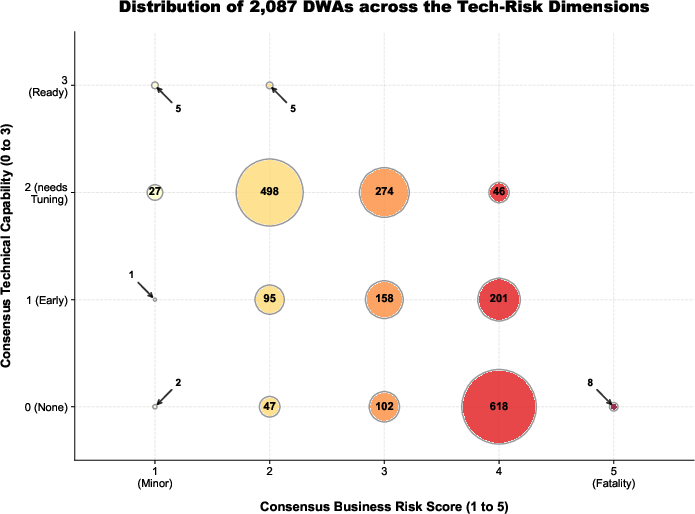

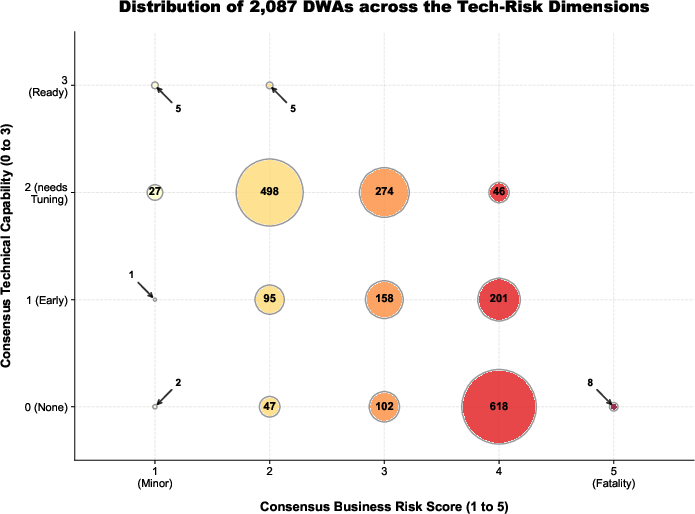

A principal innovation of the study lies in its bottom-up, micro-analytical data operationalization. The methodology decomposes 923 O*NET occupations into 2,087 atomic Detailed Work Activities (DWAs). Each DWA is then scored by a localized ensemble of state-of-the-art open-source LLMs (Qwen2.5, Gemma-2-27b, Llama-3.1, Mistral-Nemo) along two orthogonal axes:

- Technical Feasibility (Tech Level, 0–3): Determining whether the current AI stack (native LLM, external agents, system integration, or HITL) can autonomously execute the atomic work.

- Failure Risk (Risk Score, 1–5): Quantifying the downstream business, legal, or safety penalty of failure, from trivial inefficiency to fatal impact.

Figure 1: Distribution of 2,087 DWAs across Technical Capability and Business Risk, showing dense clustering in medium-to-high risk segments and underscoring the empirical bottleneck introduced by non-technological frictions.

The authors implement a multi-model ensemble scoring matrix and stratified Human-in-the-Loop (HITL) validation with 31 cross-disciplinary experts, controlling for epistemic bias through deliberate qualification of the risk cohort. Agreement between AI and human raters is strong for technical boundaries (Spearman's ρ≈0.88), but management experts' risk appraisals are inflated by a substantial, statistically significant margin relative to the AI baseline (+0.35).
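The two validation statistics mentioned above (rank agreement and mean score gap) can be illustrated with a minimal pure-Python sketch. The toy scores below are hypothetical and are not the paper's data; the function names are illustrative only, and the paper's actual validation pipeline is not described in enough detail here to reproduce.

```python
from statistics import mean

def average_ranks(values):
    """Return 1-based ranks; tied values share the mean of their rank positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the full run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        tied_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = tied_rank
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5

def spearman_rho(x, y):
    """Spearman's rho: the Pearson correlation of the rank-transformed data."""
    return pearson(average_ranks(x), average_ranks(y))

# Hypothetical toy risk scores (1-5) for six DWAs; NOT taken from the paper.
ai_risk = [1, 2, 3, 4, 2, 5]
human_risk = [2, 2, 4, 4, 3, 5]

rho = spearman_rho(ai_risk, human_risk)
gap = mean(h - a for h, a in zip(human_risk, ai_risk))  # mean human-minus-AI gap
print(f"rho={rho:.2f}, mean gap={gap:+.2f}")
```

A high rank correlation and a positive mean gap can coexist: raters may agree on the ordering of tasks while one group scores every task somewhat higher, which is the pattern the review describes for risk.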

Cognitive Risk Asymmetry: Quantifying the Human–Algorithm Gap

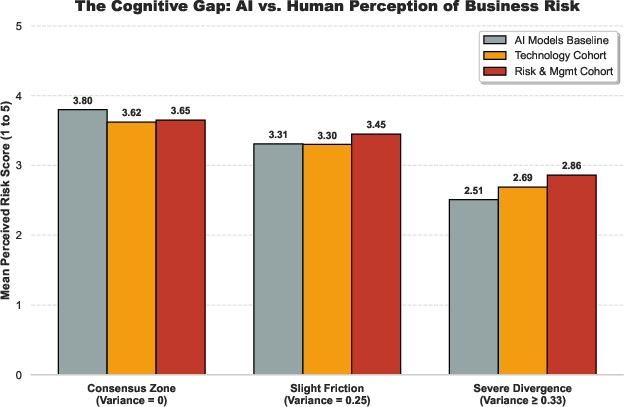

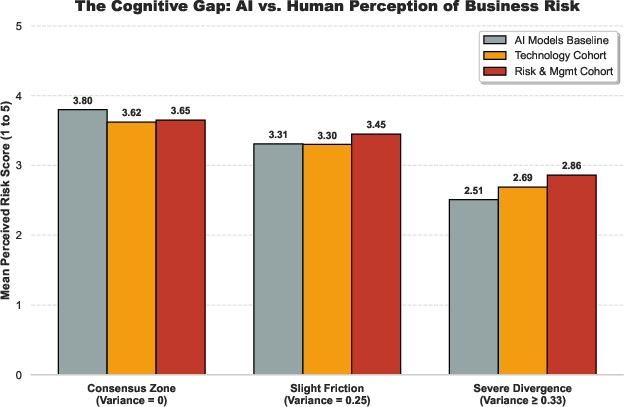

A core empirical contribution is the observed "Cognitive Risk Asymmetry." In severe divergence zones—where technical and legal consequences of task failure are ambiguous—human management experts systematically impose a structural penalty above algorithmic estimates. Ordered logit regression confirms a significant, non-trivial institutional risk premium (β=0.65, p<0.001), with manager-assigned scores exceeding AI outputs even in the absence of technical ignorance.

Figure 2: Visualizing the Cognitive Gap; in ambiguous, high-risk contexts, human management experts inflate perceived risk by +0.35 over LLM ensemble estimates—implicating structural risk aversion as a dominant friction.

This penalty is rationalized as a function of organizational liability. The asymmetric cost structure for tail-risk events (litigation, injury, catastrophic loss) demands conservative overrides from fiduciary actors—a dynamic not mirrored in probability-driven generative architectures. Algorithmic aversion is strongest in tasks for which organizational survival, legal exposure, or brand equity is threatened by low-probability but high-consequence model failures, thus sharply bounding practical automation potential.
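To give a sense of scale for the ordered logit coefficient reported above, the short sketch below converts it to an odds ratio. It assumes the standard proportional-odds reading and that β is attached to an indicator for human (management) raters; that specification is an assumption, not something this review confirms.

```python
import math

# Coefficient reported in the review (beta = 0.65).
beta = 0.65

# Under a proportional-odds model, exp(beta) is the multiplicative change in the
# odds of assigning a higher risk category. ASSUMPTION: beta belongs to a
# human-rater indicator variable.
odds_ratio = math.exp(beta)
print(f"odds ratio = {odds_ratio:.2f}")  # roughly 1.92
```

Read this way, a human rater would have roughly 1.9 times the odds of placing a task in a higher risk category than the LLM ensemble, holding other covariates fixed.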

The Tech-Risk Dual-Factor Matrix: Constraining Automation by Risk

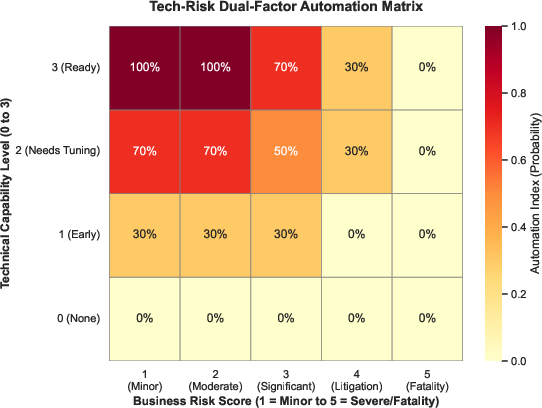

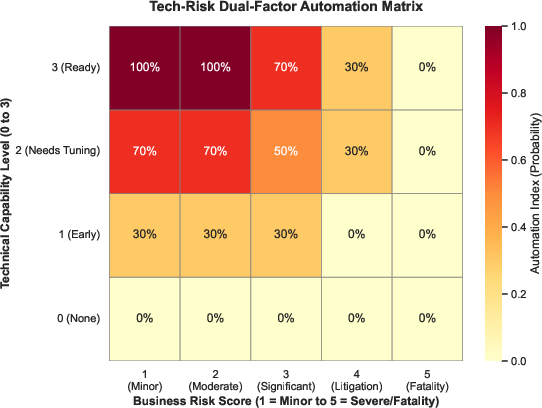

The Tech-Risk Dual-Factor mapping is operationalized via a piecewise function that clamps the Automation Index (AI) for each DWA according to both feasibility and risk score. Severe risk (R=5) or intrinsically physical/ethical tasks (Tech Level = 0) enforce an absolute automation ban (AI=0), regardless of technical advances elsewhere. Even for tasks with maximal technical readiness, substantial compliance risk (e.g., R=4) restricts the automation to partial augmentation (AI≤0.3), reflecting co-piloting regimes.

Figure 3: The Tech-Risk Dual-Factor Automation Matrix; technical progress is non-linearly devalued by risk, with severe and fatal categories triggering hard vetoes independent of the LLM’s raw capability.
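The clamping logic described above can be sketched as a small piecewise function. Only three rules come from the review: Tech Level 0 yields 0, R=5 yields 0, and R=4 caps the index at 0.3. Every other number below (the per-tech-level base values and the caps for R=1 to R=3), the assumption that a higher Tech Level means greater readiness, and the names `ASSUMED_BASE_BY_TECH`, `ASSUMED_CAP_BY_RISK`, and `automation_index` are hypothetical placeholders, not the paper's actual matrix.

```python
# HYPOTHETICAL base automation values per Tech Level (1-3); not from the paper.
ASSUMED_BASE_BY_TECH = {1: 0.4, 2: 0.7, 3: 1.0}

# Risk caps: only the R=4 cap (0.3) is stated in the review; R=1..3 are placeholders.
ASSUMED_CAP_BY_RISK = {1: 1.0, 2: 1.0, 3: 0.6, 4: 0.3}

def automation_index(tech_level: int, risk: int) -> float:
    """Clamp a DWA's automation index by technical feasibility and failure risk."""
    if tech_level not in range(0, 4):
        raise ValueError("tech_level must be in 0..3")
    if risk not in range(1, 6):
        raise ValueError("risk must be in 1..5")
    # Hard vetoes stated in the review: physical/ethical tasks and severe risk.
    if tech_level == 0 or risk == 5:
        return 0.0
    # Otherwise the risk cap bounds whatever the technology could deliver.
    return min(ASSUMED_BASE_BY_TECH[tech_level], ASSUMED_CAP_BY_RISK[risk])

for tech, risk in [(3, 1), (3, 4), (3, 5), (0, 1)]:
    print(tech, risk, automation_index(tech, risk))
```

Taking the minimum of a capability value and a risk cap is one simple way to express that risk devalues technical progress non-linearly: once the cap binds, further capability gains no longer change the index.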

At the task aggregation level, automation exposure is bottlenecked by the lowest-scored DWA (Leontief-style O-ring aggregation), prioritizing systemic risk over average technical proficiency. Occupational indices are subsequently computed via importance-weighted task aggregation, furnishing a granular, empirically risk-adjusted vulnerability distribution for 923 occupations.
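The two aggregation steps described above can be sketched as follows. This assumes a two-level reading (a minimum over the DWAs within each task, then an importance-weighted mean over tasks); the exact nesting, the weights, and the function names `task_exposure` and `occupation_index` are assumptions for illustration, and the numbers are hypothetical.

```python
def task_exposure(dwa_indices):
    """Leontief-style bottleneck: a task is only as automatable as its weakest DWA."""
    if not dwa_indices:
        raise ValueError("a task needs at least one DWA index")
    return min(dwa_indices)

def occupation_index(tasks):
    """Importance-weighted mean of task exposures.

    `tasks` is a list of (importance_weight, [dwa_index, ...]) pairs.
    """
    total_weight = sum(weight for weight, _ in tasks)
    if total_weight <= 0:
        raise ValueError("importance weights must sum to a positive value")
    weighted = sum(weight * task_exposure(dwas) for weight, dwas in tasks)
    return weighted / total_weight

# Hypothetical occupation with two tasks; values are NOT from the paper.
example_tasks = [
    (3.0, [0.9, 0.3]),  # important task, bottlenecked by a risky DWA at 0.3
    (1.0, [1.0, 0.7]),  # minor task, bottlenecked at 0.7
]
print(round(occupation_index(example_tasks), 3))
```

In this toy case the important task's single low-scoring DWA pulls the occupation index down to about 0.4, even though most individual DWAs score 0.7 or higher, which is the effect of prioritizing the bottleneck over average proficiency.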

Redistribution of Labor Market Vulnerability: From Symbolic Cognition to Physical Embodiment

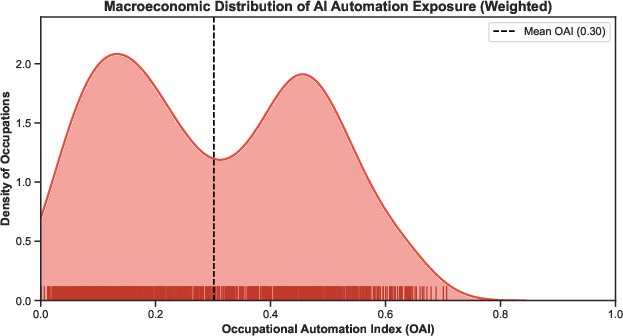

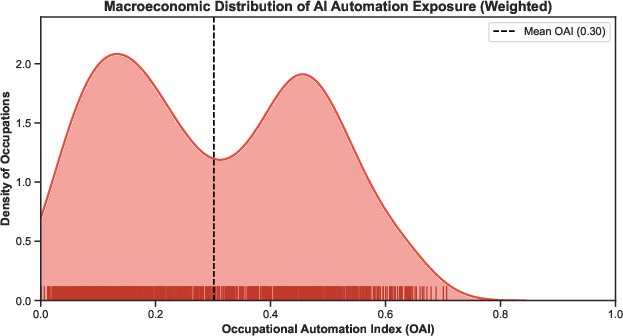

The empirical application yields a non-uniform vulnerability distribution that is at odds with canonical Routine-Biased Technological Change (RBTC) expectations. High OAI scores (>0.7) are sharply concentrated in non-routine, symbolic-manipulation occupations (Data Scientists, Editors, Technical Writers), while embodied physical trades and high-liability healthcare roles demonstrate near-immunity.

Figure 4: Macroeconomic Labor Market Vulnerability Distribution; exposure is concentrated in symbolic and process-oriented cognitive domains, while the majority of occupations remain resilient due to risk and physical bottlenecks.

The implication is explicit: cognitive complexity is not intrinsically protective; on the contrary, digital “clean-room” roles lacking physical or compliance friction are the soft targets for LLM substitution. In contrast, so-called “brittle” manual and care work is structurally defended by non-codifiable risk premiums and the persistent epistemic limits of probability-fitting AI.

Compliance Premium, Augmentation, and Dynamic Implications

The paper proposes the “Compliance Premium” thesis: as the wage premium in high-exposure occupations collapses due to feasible LLM substitution, resilient roles accrue labor market value not from cognitive difficulty, but from their proximity to legal, ethical, or physical risk. The transition from substitution to persistent human-in-the-loop augmentation is mathematically enforced by the model’s non-linear degradation regime and empirically reflected in occupational rankings. This predicts a labor market reallocation toward risk-absorbing and embodied professions, fundamentally inverting traditional career strategy, educational priorities, and wage structures.

Robustness checks across alternate risk-mapping regimes (aggressive/compliant/conservative institutional frictions) reveal stability in the ordinal hierarchy of vulnerabilities, although not in absolute OAI values. Thus, the “Cognitive Risk Asymmetry” remains an invariant structuring principle under wide regulatory fluctuation.

Limitations

The model’s predictive validity is framed as a static snapshot, bounded by the current capabilities of probabilistic LLM architectures. It does not account for rapid technical advances, forward-looking task creation, or full general equilibrium feedback. The HITL sample size (31 experts) is adequate for expert-driven protocols but not for global extrapolation. The reliance on historical O*NET taxonomies also renders the results backward-looking with respect to emerging job categories.

The authors explicitly note that future breakthroughs in logical, embodied, causal AI (“Logical AI” as per neurosymbolic or system-2 reasoning) could invert present risk barriers, precipitating wavefront shocks as previously protected roles become algorithmically tractable.

Conclusion

By formally decoupling technical capability from risk and codifying their interaction, "Bounded by Risk, Not Capability" frames occupational replaceability as a function of institutional and physical friction, not algorithmic reach. The practical implication is a nuanced risk-adjusted index for policymakers and firms, orthogonal to existing exposure indices, that better aligns with observed lags in AI diffusion and sectoral transformation. Theoretically, the study re-centers risk aversion and compliance as primary determinants in the automation transition, anticipating the emergence of a human capital stratification that rewards embodied and fiduciary competencies over symbolic abstraction. Future developments in AI architecture—in particular, the transition toward physical and causal reasoning—represent the contingent variable that may decisively restructure these risk boundaries.