- The paper found that persistent memory enables rapid emergence of Theory-of-Mind-like behaviors in LLM poker agents within 20 hands.

- The methodology uses a 2×2 factorial design crossing persistent memory with poker domain knowledge; memory-equipped agents display distinct opponent modeling and strategic adaptation.

- The study shows that memory-equipped agents deviate from standard play by employing opponent-specific deception and strategic exploitation.

Emergence of Theory-of-Mind-Like Behavior in LLM Poker Agents

Introduction

The paper "Readable Minds: Emergent Theory-of-Mind-Like Behavior in LLM Poker Agents" (2604.04157) investigates whether LLM agents can develop Theory of Mind (ToM)-like social cognition through adversarial, strategic interaction in a partially observable environment—Texas Hold'em poker. Prior work on evaluating ToM in LLMs has relied almost entirely on static vignette-based tasks. This study departs from that paradigm by embedding autonomous LLM agents in multi-agent, memory-augmented environments that require continual behavioral modeling, adaptation, and deception, which are essential attributes of advanced social cognition.

Experimental Framework

The authors ran a 2×2 factorial experiment crossing two factors: persistent memory (present/absent) and domain knowledge of poker strategy (present/absent). Each experimental replicate involved three Claude Sonnet agents playing 100 consecutive hands with strictly controlled access to memory and system instructions. The agents' cognitive processes were made interpretable via a platform that displayed real-time hand histories, agent notes, and ToM-level assessments. All mental models and opponent inferences were logged in natural language, which is the basis for the agentic interpretability the paper emphasizes.
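The four cells of this design can be enumerated in a few lines. The sketch below is illustrative only: the `Condition` class and its field names are hypothetical, and only the counts (three agents, 100 hands) come from the text.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Condition:
    """One cell of the 2x2 design (hypothetical naming)."""
    memory: bool           # persistent notes carried across hands
    poker_knowledge: bool  # strategy guidance present in the system instructions


def factorial_conditions():
    """Enumerate all four memory x knowledge combinations."""
    return [Condition(m, k) for m, k in product([True, False], repeat=2)]


AGENTS_PER_SESSION = 3   # stated in the text
HANDS_PER_SESSION = 100  # stated in the text

for cond in factorial_conditions():
    print(cond, f"{AGENTS_PER_SESSION} agents x {HANDS_PER_SESSION} hands")
```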

Figure 1: Real-time platform overview showing agent state, ToM-levels, and natural language opponent models during an active poker session.

Necessity of Memory for ToM Emergence

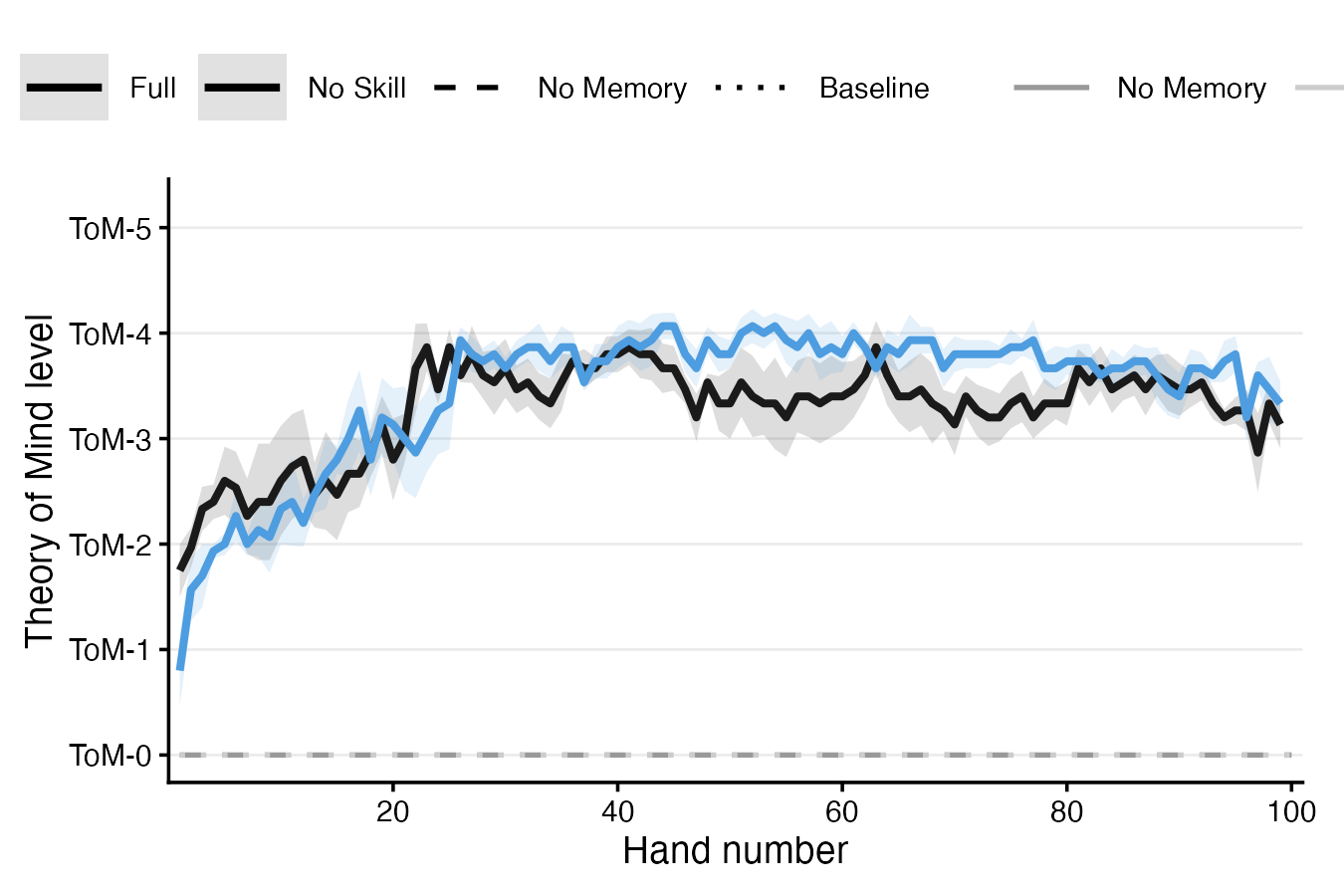

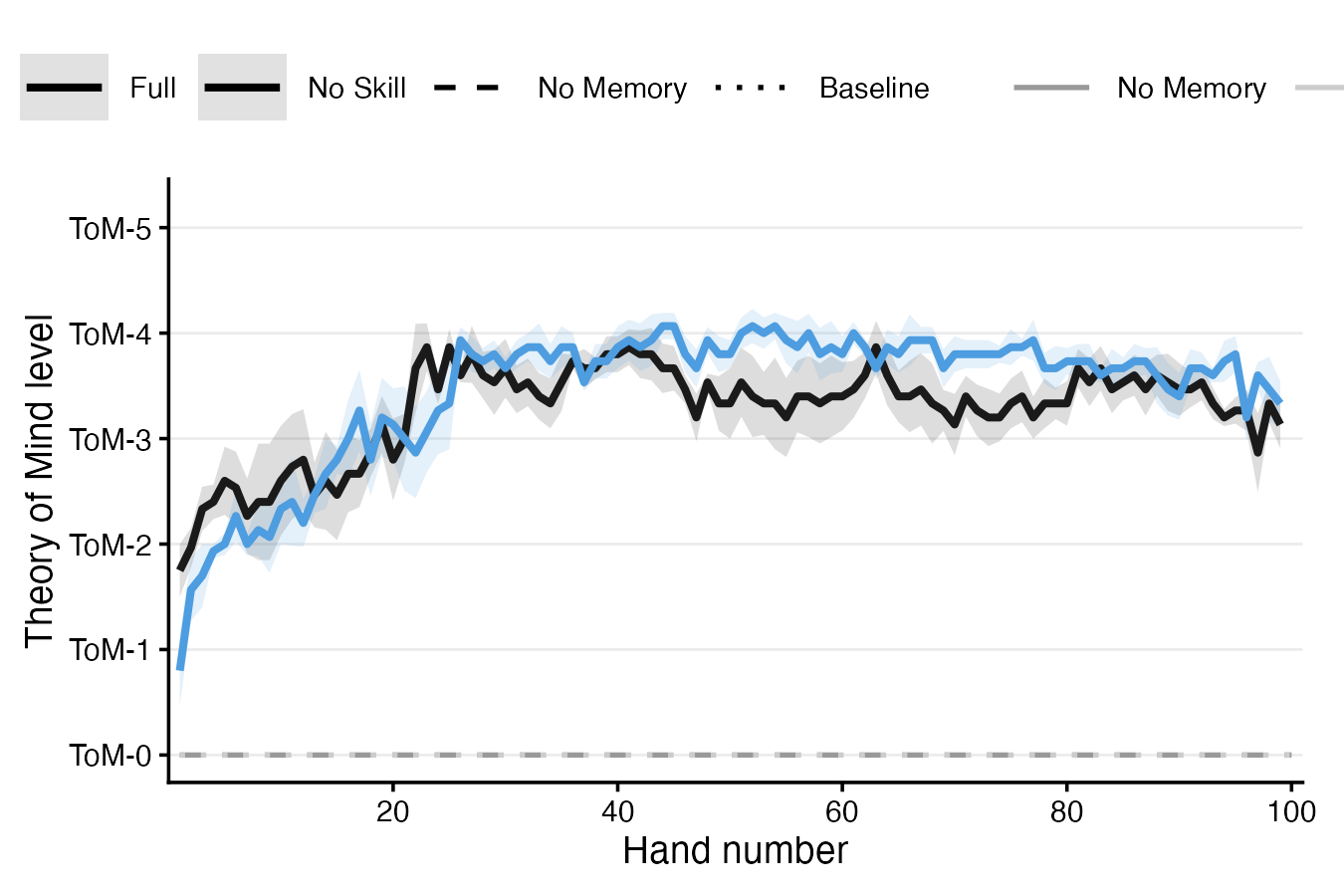

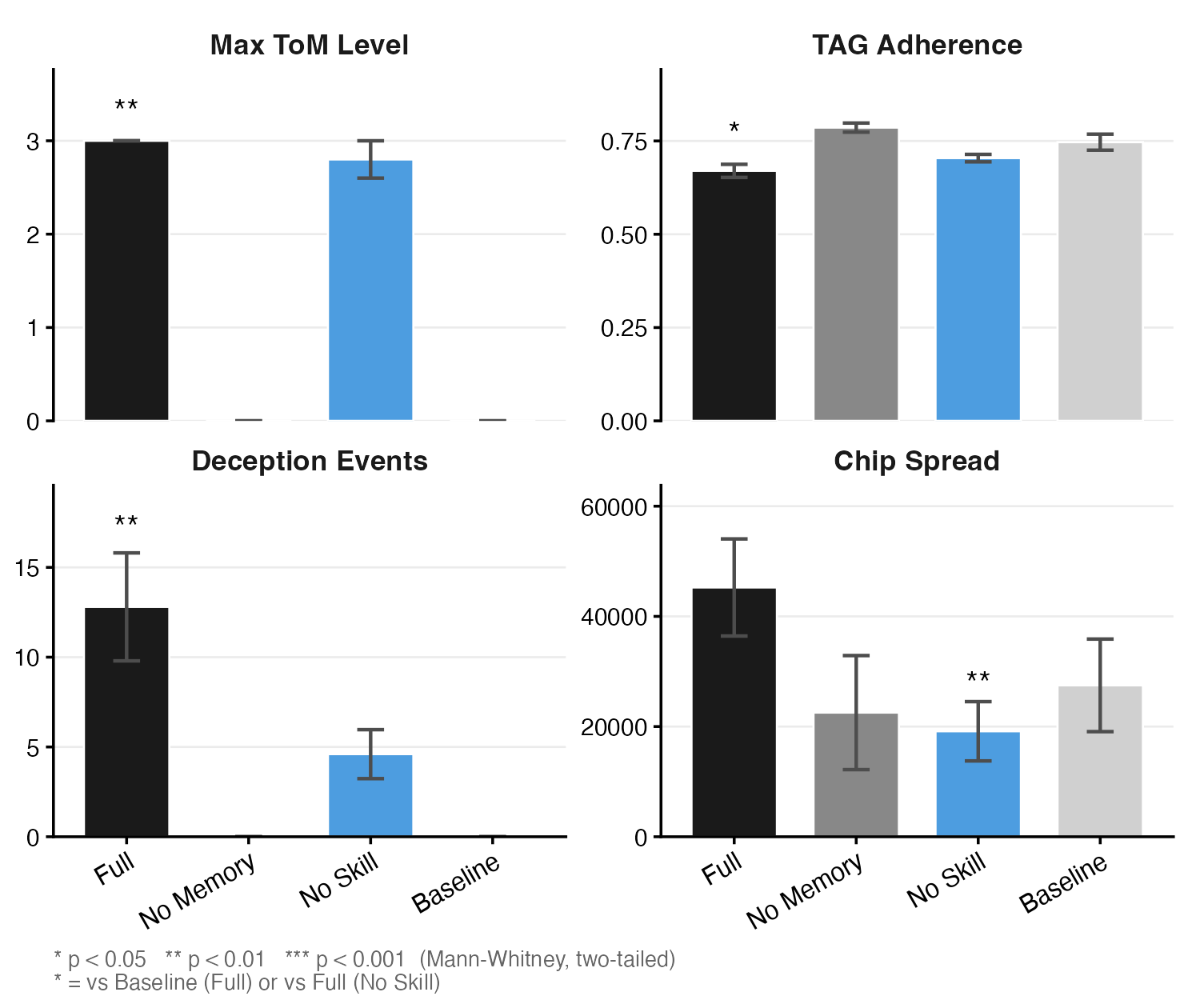

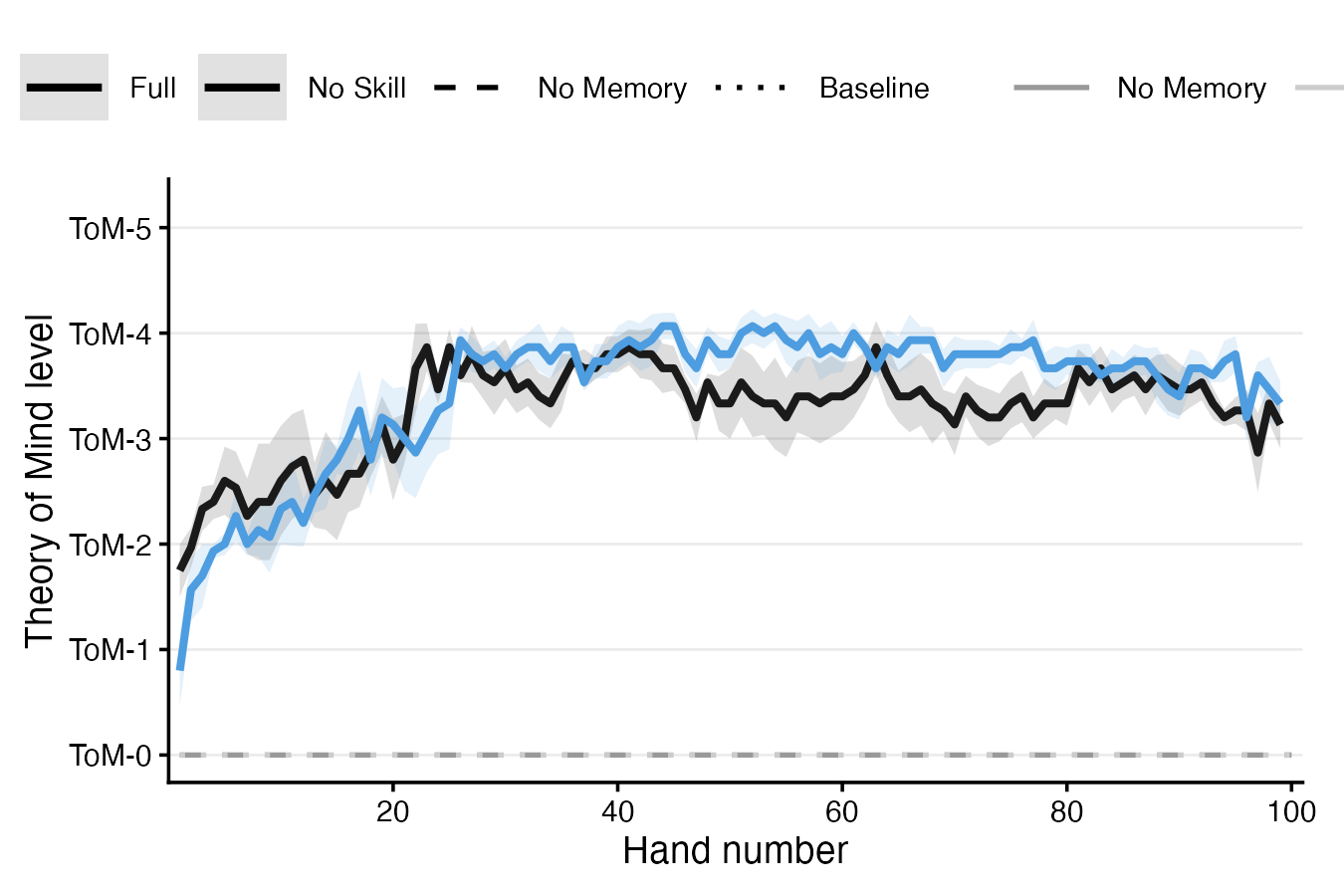

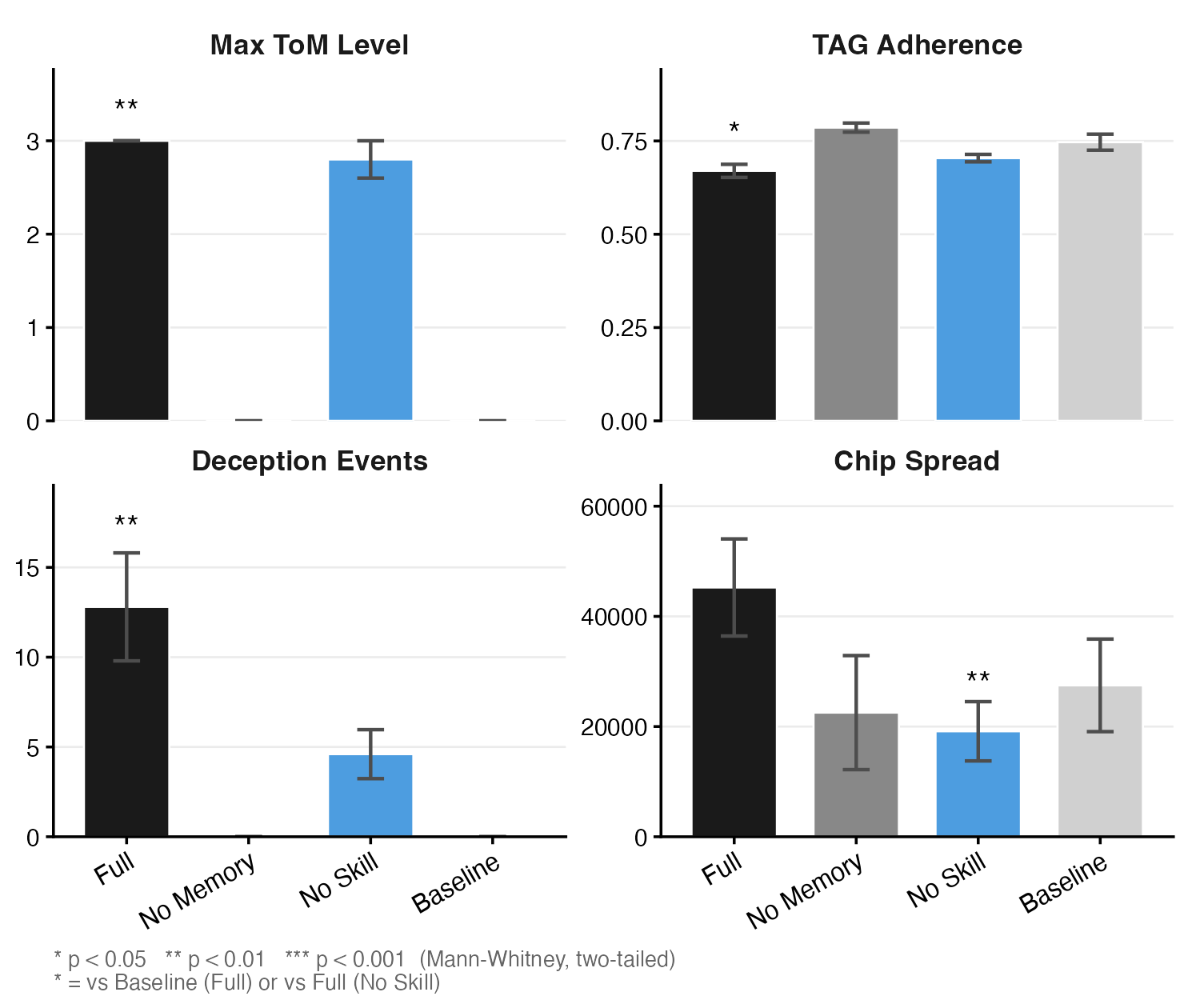

A fundamental finding of the study is that persistent memory is both necessary and sufficient for the emergence of ToM-like behaviors in LLM agents. In all sessions where agents were afforded memory, ToM levels rapidly progressed from Level 0 (absence) to Levels 3–5 (predictive and recursive opponent modeling) in under 20 hands. In contrast, agents without persistent memory remained at Level 0 throughout all sessions, despite identical poker experience and context window. The effect size was maximal (Cliff's δ = 1.0, meaning every memory session scored above every memory-absent session) and the difference was statistically significant (p = 0.008), underscoring the essential cognitive infrastructure provided by an explicit memory system.
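To make the effect-size statement concrete, here is a minimal sketch of how Cliff's δ is computed. The per-session values are hypothetical and are not the paper's data; the group size of five and the use of an exact permutation test are likewise assumptions made only for illustration.

```python
from itertools import product
from math import comb


def cliffs_delta(xs, ys):
    """Cliff's delta: P(x > y) - P(x < y) over all cross-group pairs."""
    greater = sum(1 for x, y in product(xs, ys) if x > y)
    less = sum(1 for x, y in product(xs, ys) if x < y)
    return (greater - less) / (len(xs) * len(ys))


# Hypothetical peak ToM level per session (illustrative, not from the paper).
memory_sessions = [3, 4, 4, 5, 5]
no_memory_sessions = [0, 0, 0, 0, 0]

delta = cliffs_delta(memory_sessions, no_memory_sessions)

# When the two groups are completely separated, only one of the C(n+m, n)
# possible group assignments is as extreme in each direction, so the exact
# two-sided permutation p-value is 2 / C(n+m, n).
n, m = len(memory_sessions), len(no_memory_sessions)
p_two_sided = 2 / comb(n + m, n)

print(f"delta={delta:.1f}, p={p_two_sided:.4f}")
```

Under these assumed group sizes, complete separation gives δ = 1.0 and p ≈ 0.008. That is consistent with the reported figures, but it should not be read as confirmation of the study's actual sample size or test.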

Figure 2: ToM level trajectories show progressive emergence of higher-order modeling exclusively in memory-equipped conditions.

The agents with memory exhibited a clear developmental trajectory: early acquisition of opponent behavioral labels, rapid induction of predictive models, and eventual internalization of recursive modeling ("I believe that she knows..."). No such pattern appeared in memory-absent agents, who operated with only immediate situational awareness and failed to develop persistent, individuated opponent models.
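The contrast between the two memory conditions can be sketched as a small data structure. This is a hypothetical illustration: the class names, fields, and note format are assumptions, and the actual memory mechanism used in the study is not specified here.

```python
from dataclasses import dataclass, field


@dataclass
class OpponentNote:
    """Free-text model of one opponent (hypothetical structure)."""
    label: str = ""                                   # e.g. "folds to pressure"
    observations: list = field(default_factory=list)  # one entry per observed hand


@dataclass
class AgentMemory:
    persistent: bool
    notes: dict = field(default_factory=dict)  # opponent name -> OpponentNote

    def record(self, opponent, observation, label=None):
        """Append an observation and optionally update the behavioral label."""
        note = self.notes.setdefault(opponent, OpponentNote())
        note.observations.append(observation)
        if label is not None:
            note.label = label

    def end_hand(self):
        """Memory-absent agents start every hand with empty notes."""
        if not self.persistent:
            self.notes.clear()


with_memory = AgentMemory(persistent=True)
without_memory = AgentMemory(persistent=False)
for hand in range(3):
    for mem in (with_memory, without_memory):
        mem.record("Bob", f"hand {hand}: folded to a river bet", label="folds to pressure")
        mem.end_hand()

print(len(with_memory.notes["Bob"].observations), without_memory.notes)
```

Only the persistent variant accumulates an individuated record of each opponent across hands, which is the precondition for the labels, predictions, and recursive models described above.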

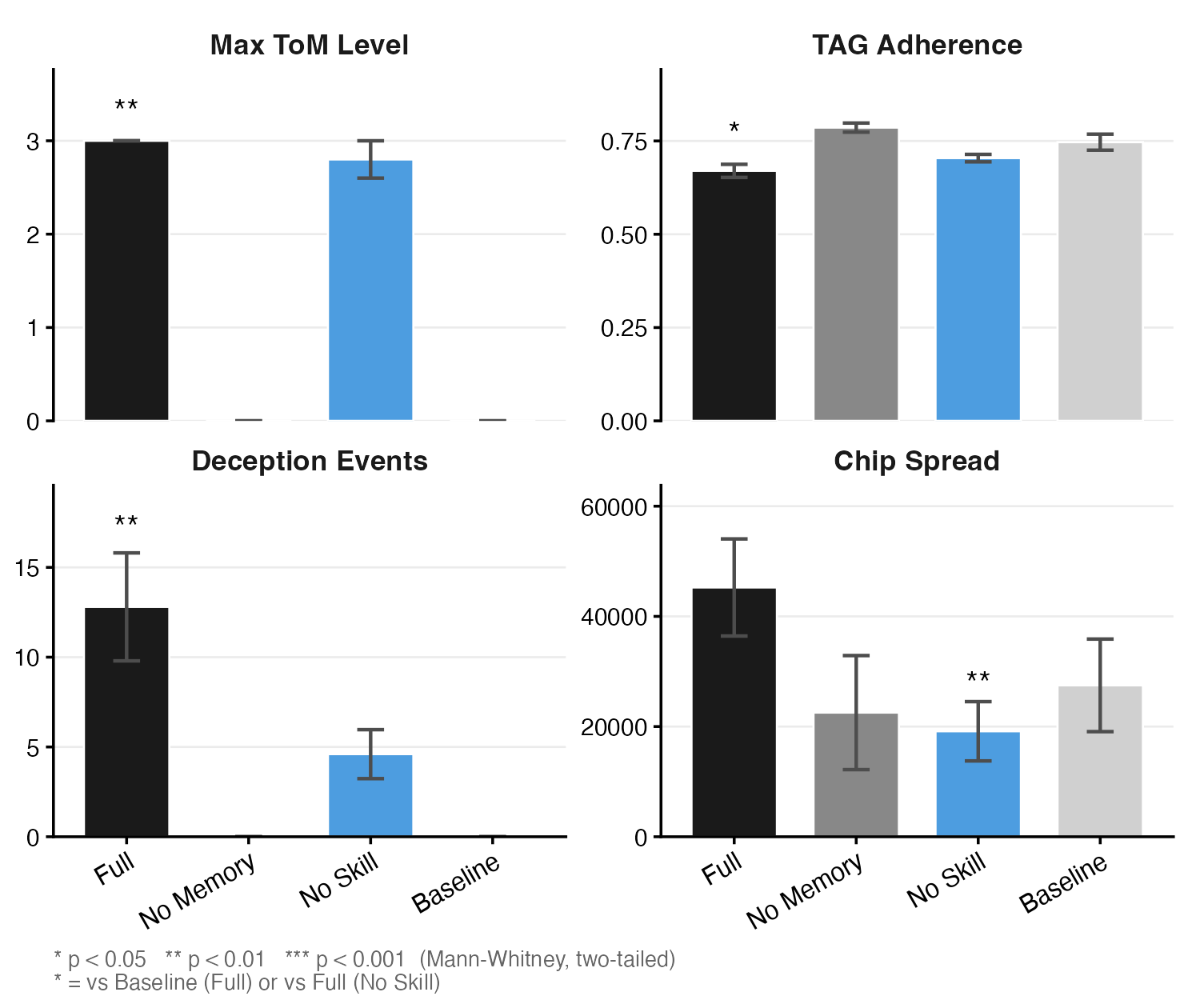

Strategic Behavior and Exploitation

Agents endowed with ToM capabilities also displayed a statistically significant deviation from the tight-aggressive (TAG) baseline strategy. Memory-augmented agents deliberately exploited observable tendencies in opponents, frequently departing from theoretically optimal play (TAG adherence fell from 79% to 67%) in order to gain chip advantages. This mirrors expert human adaptation in strategic gameplay, where the chosen strategy is conditioned on opponent-specific reads.

Figure 3: Memory-equipped agents exhibit both reduced TAG adherence (A) and greater chip spread (B), indicating deliberate opponent exploitation.

Memory-equipped agents increased the variance in chip outcomes, reflecting asymmetric exploitation that emerged from the individualized models expressed in their persistent notes. These deviations were not random: key poker metrics (VPIP, PFR) did not differ significantly across experimental conditions, isolating the effect to ToM-driven adaptation rather than noise or degenerate strategy.
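For readers unfamiliar with the two metrics, VPIP is the share of hands in which a player voluntarily puts chips in preflop (a call or raise, excluding forced blinds), and PFR is the share of hands in which the player raises preflop. A minimal sketch follows; the input format is an assumption made for illustration, not the study's logging schema.

```python
def vpip_pfr(preflop_actions):
    """Return (VPIP, PFR) as fractions of hands dealt.

    preflop_actions holds one list per hand with the player's voluntary
    preflop actions ("fold", "check", "call", "raise"). Forced blind
    posts are assumed to be excluded from these lists.
    """
    if not preflop_actions:
        raise ValueError("need at least one hand")
    hands = len(preflop_actions)
    vpip_hands = sum(1 for acts in preflop_actions if "call" in acts or "raise" in acts)
    pfr_hands = sum(1 for acts in preflop_actions if "raise" in acts)
    return vpip_hands / hands, pfr_hands / hands


# Hypothetical eight-hand sample for one agent.
sample = [
    ["fold"], ["call"], ["raise"], ["check"],
    ["call", "fold"], ["raise", "call"], ["fold"], ["fold"],
]
vpip, pfr = vpip_pfr(sample)
print(f"VPIP={vpip:.2f}, PFR={pfr:.2f}")
```

Because both metrics summarize preflop frequency only, they can stay flat across conditions while opponent-specific decisions still shift, which is the pattern the paragraph above describes.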

Deception as a Function of Opponent Modeling

The emergence of ToM-enabled deception (Tier 2 bluffs predicated explicitly on opponent-specific fold predictions) was restricted entirely to agents with memory. While all agents performed basic (Tier 1) bluffs, only those able to instantiate explicit opponent models through memory could execute bluffs conditioned on their analysis of opponent psychology and prior actions. In memoryless and baseline conditions, no such ToM-dependent deceptive maneuvers were observed.

Notably, domain knowledge modulated the efficacy and quality of this deception. Agents with strategic instruction performed more sophisticated and higher-frequency Tier 2 deception compared to memory-only agents, though the latter still developed nontrivial ToM levels. This suggests that while ToM capacity is domain-general, its interaction with specialized expertise calibrates its behavioral impact.
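Why an opponent-specific fold prediction matters for a bluff can be shown with standard pot-odds arithmetic. The sketch below assumes a pure bluff (no chance of winning when called); the function names and the example numbers are illustrative and do not come from the paper.

```python
def bluff_ev(pot, bet, p_fold):
    """Expected value of a pure bluff: win the pot on a fold, lose the bet on a call."""
    if not 0.0 <= p_fold <= 1.0:
        raise ValueError("p_fold must be a probability")
    return p_fold * pot - (1.0 - p_fold) * bet


def break_even_fold_prob(pot, bet):
    """Fold probability at which a pure bluff has zero expected value."""
    return bet / (pot + bet)


pot, bet = 100, 50
threshold = break_even_fold_prob(pot, bet)  # one third for a half-pot bet

# Hypothetical fold estimates that an agent might derive from its notes.
ev_vs_folder = bluff_ev(pot, bet, p_fold=0.6)   # positive: profitable bluff
ev_vs_caller = bluff_ev(pot, bet, p_fold=0.2)   # negative: losing bluff
print(threshold, ev_vs_folder, ev_vs_caller)
```

The same bet is profitable against one opponent and costly against another, so a bluff chosen on the basis of a per-opponent fold estimate requires exactly the kind of individuated model that only memory-equipped agents maintained.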

Validation of Internal Models and Reliability of ToM Coding

The functional consequence of agentic opponent models was confirmed through behavioral shift analyses. The induction of a behavioral label in persistent memory was associated with a measurable and substantial change in how the agent played against the labeled opponent, confirming that these models drive action selection rather than serve as ornamental artifacts. Directional accuracy assessments of the memory content further bolster the claim that agents' internal representations track—and are calibrated to—actual behavioral variance at the table.

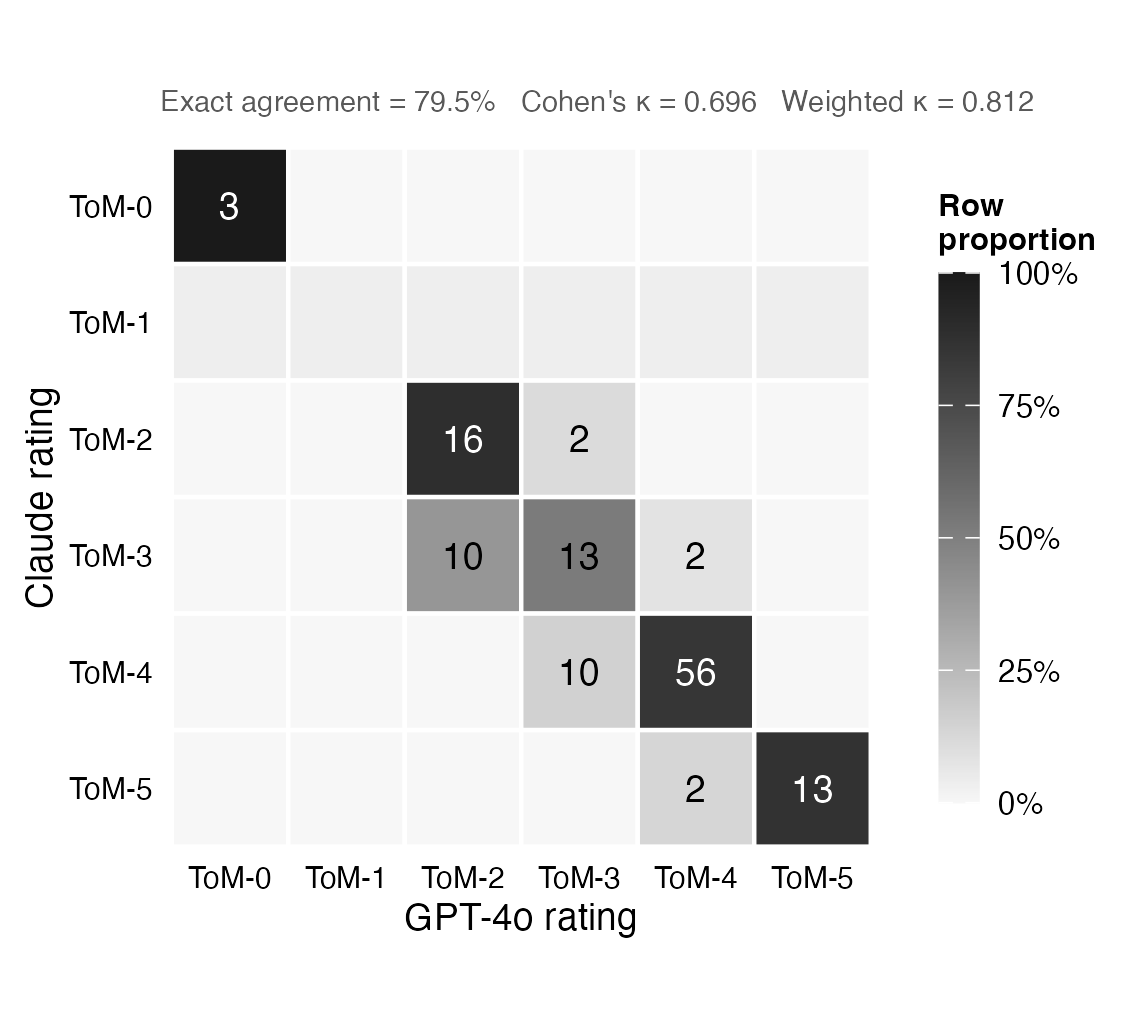

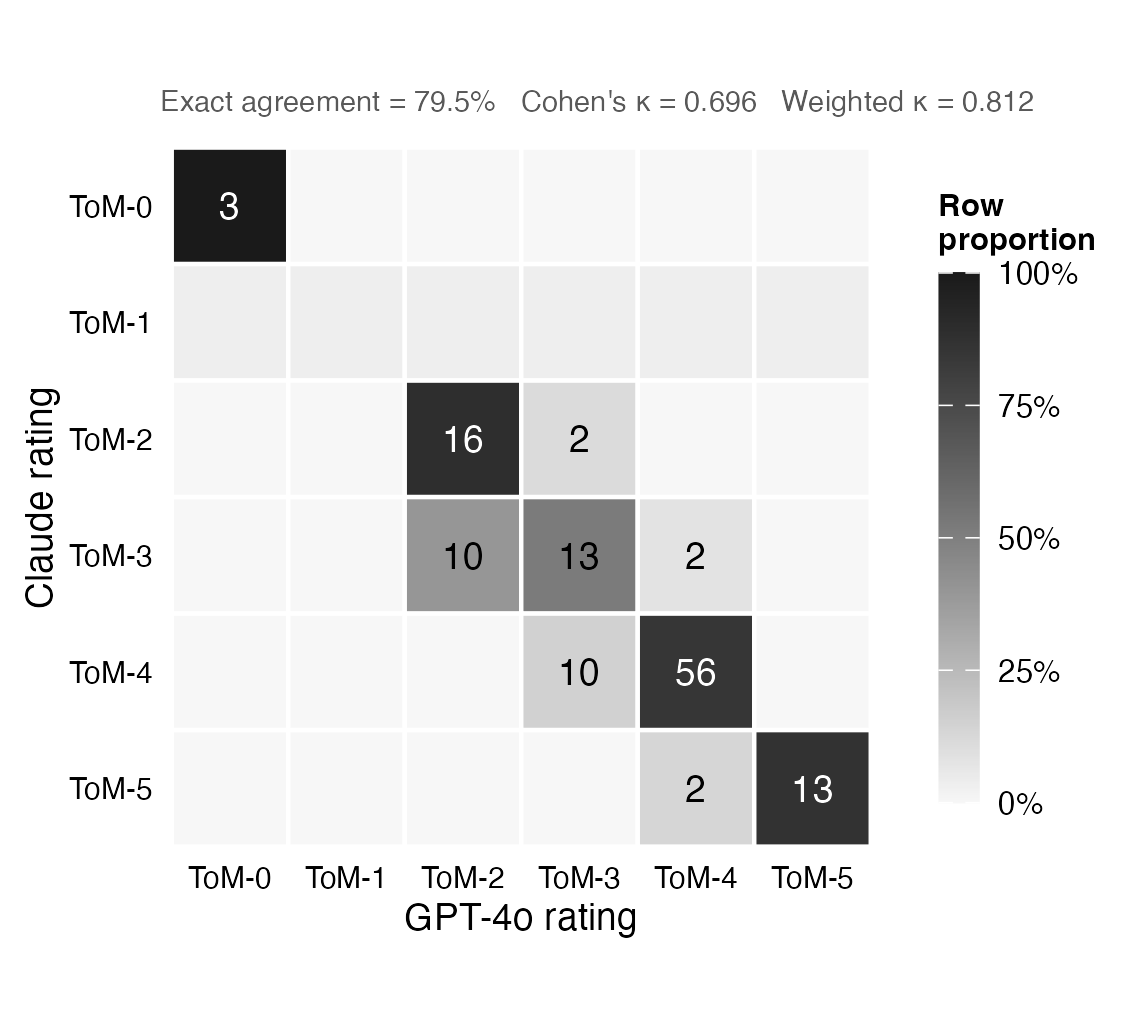

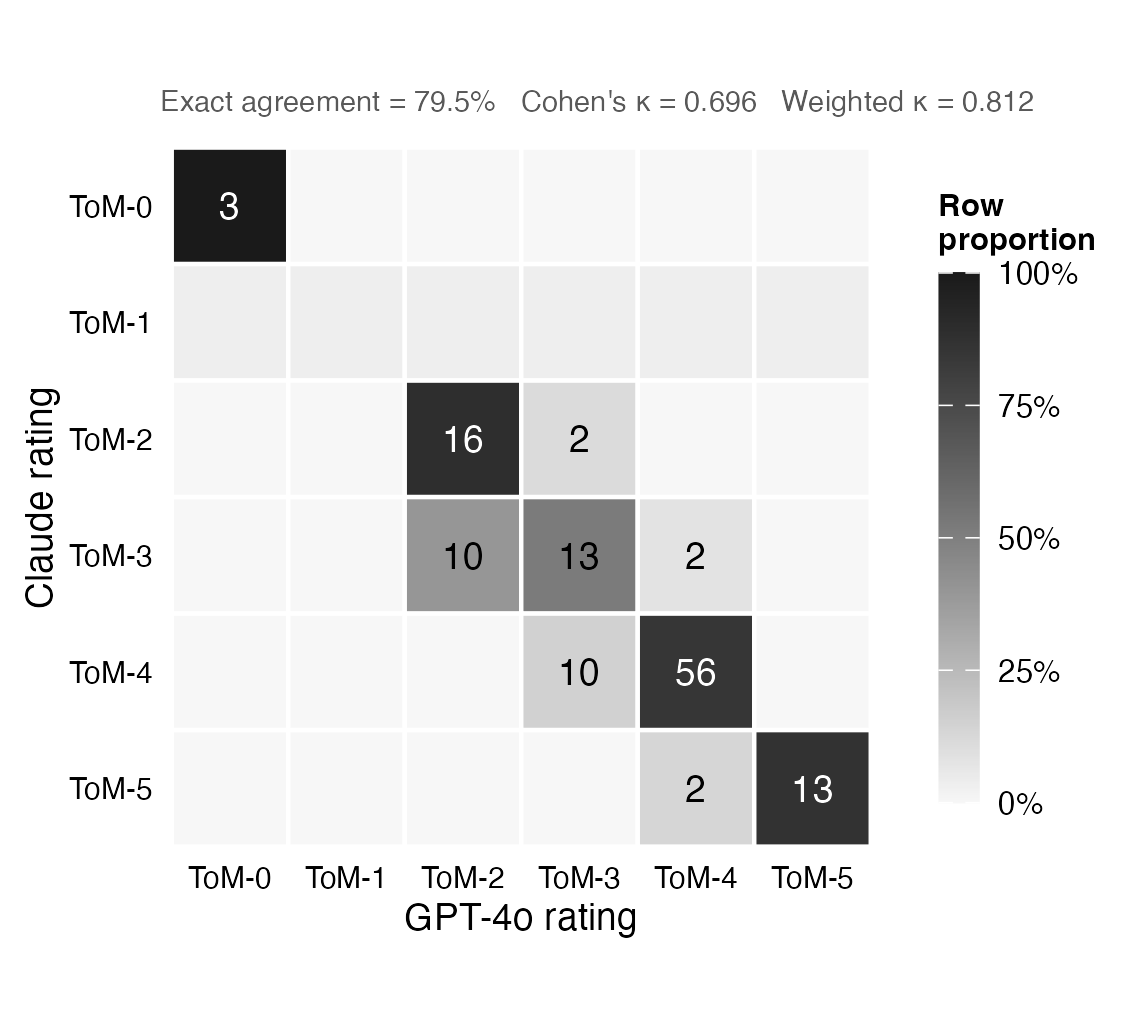

Inter-rater reliability assessments for ToM coding between Claude Sonnet and GPT-4o showed a weighted Cohen's κ=0.81 (near-perfect agreement), indicating high replicability and robustness of the coding rubric for distinguishing ToM levels in memory snapshots.

Figure 4: High cross-model concordance in ToM level coding between Claude Sonnet and GPT-4o validates the reliability of the annotation schema.
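For reference, weighted Cohen's κ penalizes disagreements by how far apart the two ordinal codes are. The sketch below is a plain-Python version under stated assumptions: the weighting scheme used in the study (linear or quadratic) is not given here, so both are supported, and the example ratings are hypothetical.

```python
from collections import Counter


def weighted_kappa(rater_a, rater_b, categories, weighting="linear"):
    """Weighted Cohen's kappa for two raters over ordered categories."""
    if weighting not in ("linear", "quadratic"):
        raise ValueError("weighting must be 'linear' or 'quadratic'")
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    index = {c: i for i, c in enumerate(categories)}
    power = 1 if weighting == "linear" else 2
    n = len(rater_a)
    observed = Counter(zip(rater_a, rater_b))
    marg_a, marg_b = Counter(rater_a), Counter(rater_b)
    obs_disagreement = 0.0
    exp_disagreement = 0.0
    for a in categories:
        for b in categories:
            w = abs(index[a] - index[b]) ** power  # disagreement weight
            obs_disagreement += w * observed[(a, b)] / n
            exp_disagreement += w * (marg_a[a] / n) * (marg_b[b] / n)
    if exp_disagreement == 0:
        raise ValueError("kappa is undefined when both raters use one identical category")
    return 1.0 - obs_disagreement / exp_disagreement


levels = [0, 1, 2, 3, 4, 5]
# Hypothetical ToM-level codes from two annotators (not the paper's data).
coder_1 = [0, 3, 4, 5, 2, 3]
coder_2 = [0, 3, 5, 5, 2, 2]
print(round(weighted_kappa(coder_1, coder_2, levels), 2))
```

A value of 1.0 indicates identical codes and 0.0 indicates agreement no better than chance given each rater's marginal distribution.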

Agentic Interpretability and Theoretical Implications

The study introduces the notion of "readable minds"—interpretable, natural-language logs of internal state that explain and justify agent behavior at each step. Unlike opaque reinforcement learning agents or GTO solvers whose strategies are inaccessible, these LLM agents expose their social cognition through interpretable traces, facilitating inspection, validation, and analysis from both AI safety and cognitive science perspectives.

A particularly salient theoretical implication is that ToM-like social cognition in LLMs is not an artifact of scale or dataset composition, but arises only when the architecture supports information persistence across episodes. This directly parallels developmental and neurocognitive models in humans, in which working memory and iterative updating are foundational to the emergence of mature social cognition.

The finding that domain knowledge is not required for ToM emergence, but instead tunes its behavioral expression, opens avenues for investigating truly domain-general cognitive faculties in LLMs and other foundation models.

Limitations and Future Directions

The study is limited to a single LLM family (Anthropic Claude Sonnet); comparative experiments with heterogeneous architectures or human baselines remain outstanding. It is also constrained by a moderate number of experimental replications, though the large effect sizes suggest that the primary results are robust. Automated ToM coding relies on LLM-based annotation, though high cross-model agreement mitigates concerns about model-specific bias.

Future developments should extend this framework to competitive and cooperative domains beyond poker, test multi-agent compositions with diverse architectures, incorporate humans into experimental loops, and examine whether similar ToM emergence occurs under different modalities (e.g., vision-LLMs or real-world embodied agents).

Conclusion

This work establishes that dynamic, persistent memory is both necessary and sufficient for the emergence of sophisticated, Theory-of-Mind-like reasoning in LLM-based agents operating in complex, competitive environments with hidden information. The transparency afforded by natural-language memory logs introduces a paradigm of agentic interpretability, bridging AI system design and cognitive science. The findings raise both opportunities and challenges for deploying autonomous multi-agent LLM systems in socially complex domains, as emergent capabilities such as opponent modeling and strategic deception may appear independently of explicit instruction or intent. The memory-centric architectural affordance highlighted in this study provides a critical design principle for future research into artificial social cognition and the safe deployment of agentic LLMs.
