- The paper finds that agent-authored pull requests are rarely referenced, and that those involved in references incur significantly higher review and integration costs than isolated ones.

- It analyzes 33,596 agent-authored PRs from repositories with more than 500 GitHub stars, using non-parametric tests to compare linked and isolated PRs and manual card sorting to classify reference intent.

- The study reveals that human developers function as key integrators, while agents predominantly self-reference to issue corrective fixes.

Human-AI Workflows in PR Reference Dynamics: An Empirical Characterization of Agent-Authored Pull Request Integration

Introduction

The proliferation of advanced AI coding agents such as GitHub Copilot, Cursor, Devin, Claude Code, and OpenAI Codex is transforming collaborative software engineering. These agents increasingly act as autonomous contributors, generating and submitting substantial numbers of pull requests (PRs) to large codebases. Despite their rapid adoption, the impact of agent-authored PRs on core coordination activities such as code review, and their actual interaction with human developers, remain poorly understood. "Humans Integrate, Agents Fix: How Agent-Authored Pull Requests Are Referenced in Practice" (2604.04059) provides a large-scale analysis of agent-authored PRs, examining how they are referenced and what those references reveal about the emergent structure of human-agent coordination.

Methodology

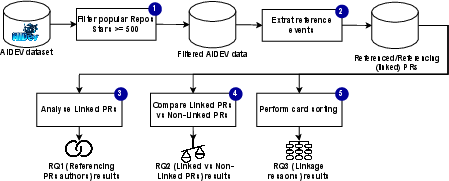

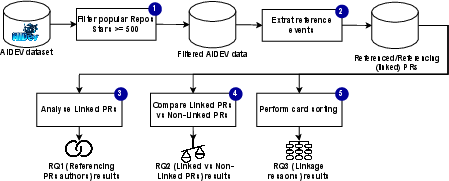

The study draws on the AIDev dataset, which aggregates 932,791 agent-authored PRs from 116,211 repositories. A filtered sample of 33,596 PRs from high-signal repositories (over 500 GitHub stars) forms the empirical basis for detailed reference event analysis. Reference events are extracted from PR timelines and categorized as Agent-to-Agent (self-reference or cross-agent) or Human-to-Agent (solo human or AI-assisted). Three quantitative code review metrics (#Commits, #Comments, and Review Duration) are compared between groups using non-parametric methods (the Mann–Whitney U test and Cliff’s delta). A taxonomy of reference intent is constructed via manual card sorting of 213 sampled reference events, stratified by agent.

Figure 1: Overview of the methodological workflow used to identify, classify, and analyze reference events in agent-authored PRs.
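The two non-parametric statistics named in the methodology can be sketched in a few lines. This is a minimal illustration, not the authors' analysis code: the function names and the sample values are assumptions made up for the example, and the p-value computation is omitted (in practice a library routine such as `scipy.stats.mannwhitneyu` would supply it).

```python
from itertools import product


def mann_whitney_u(xs, ys):
    """U statistic for xs: each (x, y) pair scores 1 if x > y, 0.5 on a tie."""
    return sum(1.0 if x > y else 0.5 if x == y else 0.0
               for x, y in product(xs, ys))


def cliffs_delta(xs, ys):
    """Cliff's delta: P(x > y) - P(x < y) over all cross-group pairs."""
    greater = sum(1 for x, y in product(xs, ys) if x > y)
    less = sum(1 for x, y in product(xs, ys) if x < y)
    return (greater - less) / (len(xs) * len(ys))


# Made-up commit counts, for illustration only (not data from the paper).
linked_commits = [4, 6, 3, 8]
isolated_commits = [2, 3, 1, 2]

u = mann_whitney_u(linked_commits, isolated_commits)
delta = cliffs_delta(linked_commits, isolated_commits)
print(u, delta)  # 15.5 0.9375
```

The two quantities are directly related: with group sizes m and n, delta equals 2U/(mn) - 1, so Cliff's delta can be read as a rescaling of the U statistic onto the range [-1, 1].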

Prevalence and Taxonomy of Agent-Authored PR Referencing

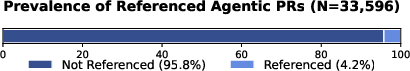

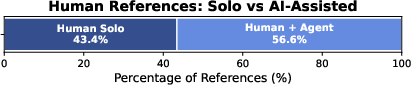

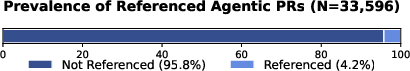

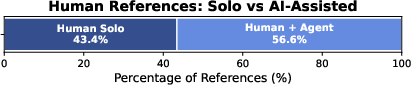

A principal finding is that only 4.2% of agent-authored PRs are referenced, a rate substantially lower than in highly collaborative human contexts (e.g., OpenStack: 25%). Most references to agent-authored PRs are initiated by humans (95.6%), and 43.4% of these human-initiated references are AI-assisted, indicating a significant "meta-collaboration" pattern. Agent-initiated references are rare (4.4%) and, when they occur, are overwhelmingly self-referential (95.5%), suggesting that agents predominantly iterate over their own outputs rather than integrating across multiple agent contributions.

Figure 2: Prevalence distribution of references involving agent-authored PRs.

The split between Solo Human and AI-Assisted referencing shows how deeply agents are embedded in human workflows: they act not only as code producers but also as aids for navigating and extending code history.

Figure 3: Distribution of Human-to-Agent PR references, highlighting the significant role of AI assistance in referencing behavior.
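The reference categories described in this section can be expressed as a small classification routine. The sketch below is hypothetical: the `ReferenceEvent` fields, the label strings, and the way AI assistance is flagged are assumptions for illustration, since the summary does not describe how the paper detects each case.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ReferenceEvent:
    source_actor: str         # account that created the reference (hypothetical field)
    source_is_agent: bool     # True if the referencing actor is a coding agent
    source_ai_assisted: bool  # True if a human made the reference with agent help
    target_agent: str         # agent that authored the referenced PR


def classify(event):
    """Assign an event to one of the four subtypes named in the text."""
    if event.source_is_agent:
        if event.source_actor == event.target_agent:
            return "agent/self-reference"
        return "agent/cross-agent"
    return "human/ai-assisted" if event.source_ai_assisted else "human/solo"


def subtype_shares(events):
    """Return the fraction of events falling into each subtype."""
    counts = Counter(classify(e) for e in events)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


# Toy events, not data from the paper.
events = [
    ReferenceEvent("copilot", True, False, "copilot"),
    ReferenceEvent("devin", True, False, "copilot"),
    ReferenceEvent("alice", False, True, "copilot"),
    ReferenceEvent("bob", False, False, "devin"),
]
print(subtype_shares(events))
```

Keeping the classification separate from the tally makes it easy to swap in a different rule for what counts as AI-assisted without touching the prevalence computation.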

Review and Integration Costs

Statistical analysis shows that linked agent-authored PRs (those that reference others or are referenced) are substantially "heavier" in terms of review overhead. Compared with their isolated counterparts, these PRs have double the number of commits, significantly more review comments, and substantially longer review durations. The differences are statistically significant, with medium to large effect sizes (Cliff's δ up to 0.56). This indicates that PRs involved in referencing networks are loci of greater coordination cost and are subject to more detailed scrutiny and negotiation, underscoring that integration of agent output is non-trivial for both humans and agents.
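To make the "medium to large" wording concrete, the sketch below maps a Cliff's delta value to a magnitude label using thresholds that are widely used in empirical software engineering (0.147, 0.33, 0.474). Whether the paper applies exactly these cut-offs is an assumption; the summary does not state them.

```python
def delta_magnitude(delta):
    """Label |delta| using commonly cited thresholds (assumed, not from the paper)."""
    d = abs(delta)
    if d < 0.147:
        return "negligible"
    if d < 0.33:
        return "small"
    if d < 0.474:
        return "medium"
    return "large"


print(delta_magnitude(0.56))  # large
```

Under these conventions, the largest reported effect (δ = 0.56) falls in the "large" band, which is consistent with the description above.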

Intent and Patterns of Reference: Constructive vs. Corrective

A unified taxonomy of PR reference motivations reveals systematically distinct roles for human and agent actors. Human developers employ references to constructively extend agent code—adding features, updating logic, and providing explicit project context (59% constructive references). Conversely, agents overwhelmingly reference their own prior PRs in a corrective capacity—issuing fixes, reversions, or explicit deprecations (68% corrective references).

This bifurcation indicates that humans function as integrators, weaving agent output into broader software artifacts, while agents are predominantly utilized in self-correcting, isolated refinement loops. Contextual linking and knowledge management via referencing are more prevalent in human-to-agent patterns, implying unique human value in maintaining long-term project traceability and coherence.
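Once reference events have been labeled by hand, the constructive versus corrective split per actor type reduces to a simple tally. The following sketch assumes hypothetical intent labels loosely based on the examples in the text; the paper's actual taxonomy labels are not listed in this summary, and the sample data is invented.

```python
from collections import Counter, defaultdict

# Hypothetical intent labels grouped into the two broad categories in the text.
CATEGORY = {
    "add_feature": "constructive",
    "update_logic": "constructive",
    "provide_context": "constructive",
    "fix": "corrective",
    "revert": "corrective",
    "deprecate": "corrective",
}


def category_shares(labeled_events):
    """Map each actor type to the share of its references in each category."""
    counts = defaultdict(Counter)
    for actor, intent in labeled_events:
        counts[actor][CATEGORY[intent]] += 1
    return {
        actor: {cat: n / sum(c.values()) for cat, n in c.items()}
        for actor, c in counts.items()
    }


# Toy labeled sample of (actor type, intent), not data from the paper.
sample = [
    ("human", "add_feature"), ("human", "provide_context"),
    ("human", "update_logic"), ("human", "fix"),
    ("agent", "fix"), ("agent", "revert"),
    ("agent", "fix"), ("agent", "add_feature"),
]
intent_shares = category_shares(sample)
print(intent_shares)
```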

Implications and Future Directions

The empirical evidence indicates that agent-authored PRs are not assimilated into the software development lifecycle as isolated, interchangeable units. Instead, human developers act as higher-order integrators, using agent output as composable building blocks while relying on the agents themselves primarily for autonomous remediation of defects. The significant review and coordination costs associated with linked agent PRs point to ongoing challenges for both agent capability (generating context-aware, easily integrable changes) and human-agent workflow design.

The prominent presence of AI-assisted human referencing further introduces a recursive, meta-collaborative layer to the software process: developers use agents both for code production and for mediating, curating, and aligning the contributions of other agents. These findings motivate several concrete directions for future research:

- Agent Specialization and Handoffs: Exploration of agent architectures that specialize in constructive extension rather than mere error correction.

- Meta-Collaboration Mechanisms: Development of interfaces and protocols for facilitating higher-level human-AI interaction patterns around code review and integration.

- Traceability and Contextualization: Enhanced agent introspection and context modeling to support richer referencing and more efficient integration into project histories.

Conclusion

This work shows that agent-authored PRs are rarely referenced but, when they are linked to other PRs, receive heavier scrutiny, and that referencing intent clearly differentiates human and agent roles within the collaborative workflow. The emergence of AI-assisted referencing shows that agents are involved in more than code generation: developers also use them to mediate and curate the contributions of other agents. Continued empirical and design research is needed to make these hybrid human-AI workflows scalable and reliable.