- The paper demonstrates that Flow Matching–optimized CNFs dramatically improve unweighting efficiency in complex, high-multiplicity processes compared to traditional methods.

- It leverages an ODE-based approach and helicity conditioning to create a diffeomorphic mapping that transforms latent samples into accurate physical phase-space events.

- Empirical results in e+e- and t-tbar channels show substantial performance gains, cost savings, and enhanced simulation precision for LHC event generation.

Monte Carlo Event Generation with Continuous Normalizing Flows

Introduction and Motivation

High-precision simulations for collider experiments, especially those at the LHC, require the generation of vast numbers of unweighted Monte Carlo (MC) events with accurate kinematic distributions. A central challenge is the sharply decreasing unweighting efficiency ϵ encountered in high-multiplicity processes due to multimodal distributions and strong variable correlations. Traditional methods, including adaptive multi-channel algorithms (such as Vegas) and Coupling-Layer Normalizing Flows (NFs), have reached critical limitations, especially for processes with seven or more final-state particles where ϵ often drops below 0.01%. This bottleneck substantially impacts storage, computation, and ultimately the attainable precision of experimental analyses.

Machine learning methods—including NFs—offer a route to more flexible transformation and sampling schemes. However, their scalability and efficiency have thus far been insufficient for the most computationally intensive processes. This work investigates the potential of Continuous Normalizing Flows (CNFs), optimized via Flow Matching (FM) objectives, for phase-space sampling in lepton-pair and top-quark pair production with up to five and four jets, respectively. Conditioning the CNF on helicity configurations further enables the exploitation of correlations between discrete (helicity) and continuous kinematic features.

Theoretical Framework and Methodology

The primary goal is to construct a diffeomorphic map ψθ that transforms samples from a tractable latent distribution q0 (chosen as a standard normal) into samples from the complex, high-dimensional target p0 defined over the physical phase space. This is achieved by mapping the physical manifold M to a unit hypercube U via a known transformation ϕ, and parameterizing ψθ:U→U either as a Coupling-Layer flow or, as proposed here, as a CNF defined by integrating a learned time-dependent vector field.
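As a rough illustration of what "integrating a vector field" means, the sketch below pushes a point from t=0 to t=1 with a fixed-step midpoint rule. The function `integrate_flow` and the analytic `toy_field` are hypothetical stand-ins chosen for this example; the paper's learned network and ODE solver are not specified here. Integrating the same field backwards in time approximately recovers the starting point, which is the invertibility that makes the map a diffeomorphism.

```python
import math

def integrate_flow(x, vector_field, t_start=0.0, t_end=1.0, n_steps=200):
    """Transport x along dx/dt = vector_field(x, t) using the midpoint rule."""
    dt = (t_end - t_start) / n_steps  # negative when integrating backwards
    x = list(x)
    for i in range(n_steps):
        t = t_start + i * dt
        k1 = vector_field(x, t)
        x_mid = [xi + 0.5 * dt * ki for xi, ki in zip(x, k1)]
        k2 = vector_field(x_mid, t + 0.5 * dt)
        x = [xi + dt * ki for xi, ki in zip(x, k2)]
    return x

def toy_field(x, t):
    # Hypothetical stand-in for the learned network: contraction toward 0.5.
    return [0.5 - xi for xi in x]

x0 = [0.1, 0.9]
x1 = integrate_flow(x0, toy_field)                                # latent -> target direction
x_back = integrate_flow(x1, toy_field, t_start=1.0, t_end=0.0)    # inverse map
```

In a real CNF the toy field would be replaced by a neural network, and an adaptive solver would typically be preferred over fixed steps.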

Instead of maximum-likelihood objectives, which require repeated density evaluations, FM directly regresses the learned vector field onto a target vector field constructed by interpolating between samples from the latent distribution and samples from the target distribution. This simulation-free approach is computationally efficient and provides unique minimizers for the vector field. The CNF model is trained iteratively, initially with samples from baseline generator outputs and progressively refined in subsequent steps.
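The regression idea can be sketched in a few lines. The example below assumes a straight-line interpolation between a latent sample x0 and a target sample x1, so the regression target is simply x1 - x0; the summary only says "interpolating", so this particular path is an assumption. All names (`flow_matching_loss`, `exact_field`, `zero_field`) are hypothetical, and the "target distribution" is a single point so that the optimal field is known in closed form.

```python
import random

def flow_matching_loss(model, latent_batch, target_batch, rng):
    """Mean squared error between model(x_t, t) and the straight-line velocity x1 - x0."""
    total = 0.0
    for x0, x1 in zip(latent_batch, target_batch):
        t = rng.random()  # time drawn uniformly from [0, 1)
        x_t = [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]
        target_velocity = [b - a for a, b in zip(x0, x1)]
        predicted = model(x_t, t)
        total += sum((p - u) ** 2 for p, u in zip(predicted, target_velocity))
    return total / len(latent_batch)

rng = random.Random(0)
center = [0.3, 0.7]  # toy target: every target sample equals this point
latent = [[rng.gauss(0.0, 1.0) for _ in center] for _ in range(256)]
target = [center for _ in latent]

def exact_field(x, t):
    # Closed-form optimum for a point target under straight-line paths.
    return [(c - xi) / (1.0 - t) for c, xi in zip(center, x)]

def zero_field(x, t):
    # An untrained model that predicts no motion at all.
    return [0.0 for _ in x]

loss_exact = flow_matching_loss(exact_field, latent, target, random.Random(1))
loss_zero = flow_matching_loss(zero_field, latent, target, random.Random(1))
```

No ODE is solved anywhere in the loss, which is what "simulation-free" refers to: training needs only samples and a pointwise regression.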

Helicity configurations—discrete indices on which matrix elements depend nontrivially—are introduced as conditioning variables in the network, maximizing the exploitation of all available structure within the data. Models are trained and benchmarked using the Chili phase-space mapping and Pepper matrix-element generator, embedded in standard LHC simulation toolchains.
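One simple way to feed a discrete helicity configuration into a network is a one-hot vector appended to the continuous inputs. The sketch below assumes each particle has helicity +1 or -1 and that the configuration is encoded as a binary index; the paper's actual encoding is not described in this summary, so `helicity_to_index` and `conditioned_input` are purely illustrative.

```python
def helicity_to_index(helicities):
    """Map a tuple of +1/-1 helicities to an integer, first particle most significant."""
    index = 0
    for h in helicities:
        index = 2 * index + (1 if h > 0 else 0)
    return index

def conditioned_input(x, t, helicities):
    """Concatenate phase-space point, time, and a one-hot helicity encoding."""
    one_hot = [0.0] * (2 ** len(helicities))
    one_hot[helicity_to_index(helicities)] = 1.0
    return list(x) + [t] + one_hot

# Two-particle toy case: 4 possible configurations, (+1, -1) maps to index 2.
features = conditioned_input([0.2, 0.7], 0.5, (+1, -1))
```

A one-hot vector grows exponentially with the number of particles, so a learned embedding or a per-particle encoding may be more practical at high multiplicity.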

Empirical benchmarking focuses on lepton-pair plus gluon and top-quark-pair plus gluon production channels, selected for their experimental relevance and computational complexity. The principal metric is a variant of the unweighting efficiency ϵ: the fraction of events retained after unweighting when the maximum weight is chosen such that overweight events contribute at most a fixed fraction of the integral.
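A minimal sketch of such a metric is given below. It assumes strictly positive weights and interprets "contribution of overweight events" as the share of the total weight carried by events above the reference maximum; other conventions exist, and the paper's exact definition and threshold are not reproduced here. The function name and the toy weights are hypothetical.

```python
def unweighting_efficiency(weights, max_overweight_fraction=0.0):
    """Average acceptance probability min(w / w_max, 1) for a reduced maximum w_max.

    w_max is lowered from the true maximum for as long as the events above it
    carry at most max_overweight_fraction of the total weight.
    """
    total = sum(weights)
    ordered = sorted(weights, reverse=True)
    w_max = ordered[0]
    overweight = 0.0
    for k in range(1, len(ordered)):
        overweight += ordered[k - 1]  # weight carried by events above ordered[k]
        if overweight > max_overweight_fraction * total:
            break
        w_max = ordered[k]
    return sum(min(w / w_max, 1.0) for w in weights) / len(weights)

# Toy sample: 99 events of weight 1 and a single outlier of weight 101.
toy_weights = [1.0] * 99 + [101.0]
eff_plain = unweighting_efficiency(toy_weights)          # mean(w) / max(w)
eff_clipped = unweighting_efficiency(toy_weights, 0.55)  # outlier treated as overweight
```

With a threshold of zero the function reduces to the plain ratio of mean to maximum weight, which a single outlier can dominate; allowing a bounded overweight share makes the metric far less sensitive to such outliers.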

Performance comparisons among Vegas, Coupling Flows, and ODE-based CNF Flows (Flow Matching) are summarized below:

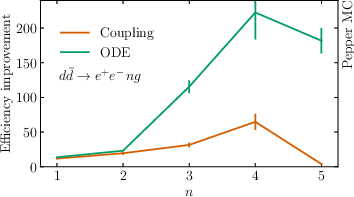

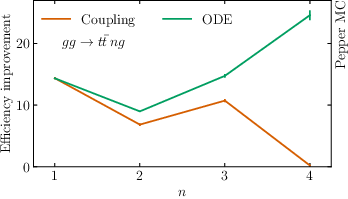

Figure 1: Relative gains in unweighting efficiency ϵ for ODE Flows and Coupling Flows compared to Vegas, as a function of final-state jet multiplicity, for the two benchmark process families, lepton-pair plus gluon and top-quark-pair plus gluon production (one family per panel).

Key numerical findings:

- In one benchmark process, the ODE Flow improves the unweighting efficiency by a factor of 184 over Vegas and by a factor of 43 over Coupling Flows.

- In a second benchmark process, the ODE Flow reaches an efficiency 25 times higher than Vegas and 144 times higher than Coupling Flows.

- In one process family, relative improvements remain strong but decrease at higher jet multiplicity.

At high multiplicities, ODE Flows maintain or increase their advantage, while Coupling Flows' efficiency deteriorates (dropping below Vegas for the most complex case). This demonstrates the superior expressivity and scalability of FM-optimized CNFs in sampling challenging multimodal, high-dimensional distributions.

Furthermore, transferring the expressivity of ODE Flow models to Coupling Flows using RegFlow enables two orders of magnitude faster inference while recovering the majority of the efficiency gain. This approach yields effective walltime speedups in practical event generation workflows.
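Why faster inference can outweigh a somewhat lower efficiency is easiest to see with a simple cost model: the expected time per unweighted event is the time per trial (sampling plus matrix-element evaluation) divided by the unweighting efficiency. This model, the function names, and every number below are illustrative assumptions, not values from the paper.

```python
def time_per_unweighted_event(t_sample, t_matrix_element, efficiency):
    """Expected walltime to obtain one unweighted event (same units as the inputs)."""
    return (t_sample + t_matrix_element) / efficiency

def walltime_speedup(baseline, candidate):
    """Ratio of expected walltimes; values above 1 mean the candidate is faster."""
    return time_per_unweighted_event(*baseline) / time_per_unweighted_event(*candidate)

# Hypothetical (t_sample, t_matrix_element, efficiency) triples in arbitrary units.
vegas = (0.01, 1.0, 0.001)     # cheap sampler, low efficiency
ode_flow = (10.0, 1.0, 0.05)   # expensive ODE solve per sample, high efficiency
distilled = (0.1, 1.0, 0.03)   # fast coupling-flow surrogate, slightly lower efficiency

speedup_ode = walltime_speedup(vegas, ode_flow)
speedup_distilled = walltime_speedup(vegas, distilled)
```

In this toy setting the surrogate wins despite its lower efficiency because the sampling cost no longer dominates each trial; the actual balance depends on the measured timings of the process at hand.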

Implications and Future Prospects

On a practical level, these results strongly suggest that Flow Matching–trained CNFs can dramatically reduce computational resources required for precision LHC event simulation, particularly in contexts demanding hundreds of billions of events. The substantial gain in unweighting efficiency ϵ translates directly into cost savings and enables higher-fidelity exploration of rare or complex final states.

From a theoretical perspective, conditioning on discrete variables (such as helicities) and employing joint optimization for both discrete and continuous aspects of the distribution are powerful techniques that could see broader adoption in machine learning–based integration and sampler algorithms. The demonstrated ability to transfer performance between architectures (ODE Flows to Coupling Flows via RegFlow) points towards hybrid schemes that combine rigorous expressivity with practical inference speed.

Future developments are expected in several directions:

- Extension to multi-channel, conditional models that learn across a variety of partonic processes and multiplicities simultaneously, leveraging inter-channel correlations.

- Public integration of these techniques in widely-used simulation and event generation frameworks (e.g., Pepper, Sherpa, Pythia), streamlining their adoption for experimental and phenomenological studies.

- Further architectural and software optimizations to accelerate ODE Flow models directly.

Conclusion

This paper establishes Flow Matching–optimized Continuous Normalizing Flows as a highly effective method for high-dimensional phase-space sampling in collider physics, yielding improvements in unweighting efficiency by up to two orders of magnitude over the traditional Vegas algorithm for the most complex processes studied. The methodology enables precise, large-scale MC event generation critical for ongoing and future precision physics programs at the LHC and beyond. Extensions toward conditional, multi-process models and fully integrated event generation toolchains are anticipated to further consolidate these gains and broaden the impact on the community.