- The paper introduces a novel hybrid approach combining nested MLMC with preintegration to enhance convergence in discontinuous risk estimation.

- It employs smoothing via preintegration to achieve a variance decay of O(mₗ⁻¹) and bounded higher-order moments even in high-dimensional settings.

- The method reduces computational cost from O(TOL⁻⁵⁄₂) to near-optimal O(TOL⁻² (log(TOL))²), enabling efficient estimation for multi-asset risk metrics.

Nested Multilevel Monte Carlo with Preintegration: Efficient Risk Estimation

Motivation and Problem Statement

Nested Monte Carlo (NMC) is the standard approach for estimating risk measures in finance, especially when one needs to compute expectations involving conditional expectations, such as the probability of extreme losses. A canonical example is estimating θ:=P(E[X∣ω]>c). However, NMC suffers from substantial computational inefficiency, caused in particular by the discontinuity of the indicator function and by the bias-variance trade-off that nesting introduces. Multilevel Monte Carlo (MLMC) is a variance reduction technique that addresses some of these inefficiencies, but its convergence is suboptimal when discontinuous functions (such as indicators) are involved, leading to poor robustness and high kurtosis at deeper MLMC levels.

Preintegration techniques, also known as conditional Monte Carlo or conditional sampling, have emerged as a promising methodology to regularize discontinuities by integrating out one random variable. This smoothing enables better variance decay, and, crucially, is compatible with MLMC without altering the bias profile. The paper proposes a novel integration: nested MLMC combined with preintegration (and its numerical realization), theoretically analyzing its convergence and robustness, then empirically validating it with European option portfolios under the Black-Scholes model.

Methodology

Problem Setup and Simulation

The risk estimation problem is represented as estimating E[g(φ(ω))] where g(⋅)=I{⋅>c} and φ(ω)=E[X∣ω] for some outer random variable ω and inner random variable X. Standard nested MC approximates the inner conditional expectation using m samples, with outer simulation repeated n times. The estimator’s bias decays at rate $1/m$ (Theorem 1) [nested_bias2010].
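As a minimal sketch of the plain nested estimator described above, the following uses a toy model that is an assumption for illustration and not taken from the paper: ω ~ N(0,1) and X∣ω ~ N(ω,1), so that φ(ω)=ω and the exact answer is P(ω>c). The function name `nested_mc` and all parameter values are hypothetical.

```python
import numpy as np

def nested_mc(c, n_outer, m_inner, rng):
    """Plain nested Monte Carlo estimate of P(E[X | omega] > c).

    Toy model (an assumption, not from the paper):
    omega ~ N(0, 1) and X | omega ~ N(omega, 1), so E[X | omega] = omega.
    """
    omega = rng.standard_normal(n_outer)                          # n outer scenarios
    x = omega[:, None] + rng.standard_normal((n_outer, m_inner))  # m inner samples each
    phi_hat = x.mean(axis=1)                                      # inner sample means
    return np.mean(phi_hat > c)                                   # average of indicators

rng = np.random.default_rng(0)
estimate = nested_mc(c=1.0, n_outer=20_000, m_inner=64, rng=rng)
print(f"nested MC estimate of P(phi > 1): {estimate:.4f}")
```

In this toy model the inner sample mean is ω plus noise of variance 1/m, which slightly inflates the exceedance probability; that inflation is the bias that shrinks as m grows.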

Preintegration and Numerical Smoothing

Preintegration analytically (or numerically) integrates over a chosen dimension (e.g., $\omega_1$), yielding a function of the remaining variables that is smoothed with respect to the discontinuity. For cases where a closed form is unavailable, numerical smoothing (as in [bayer2023numerical, bayer2024multilevel]) uses Newton iteration to approximate the location of the discontinuity (the root or roots) and applies Laguerre quadrature for the numerical evaluation. Regularity conditions and monotonicity are critical for guaranteeing that the discontinuity point is unique and can be located accurately.
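The closed-form case can be illustrated with a small sketch. The integrand below, ω1 + h(ω2) with ω1, ω2 independent standard normals and h = sin, is a hypothetical example chosen because it is monotone in ω1; it is not the paper's portfolio model. Integrating out ω1 replaces the indicator I{ω1 + h(ω2) > c} by the smooth function Φ(h(ω2) − c), which has the same mean and a smaller variance.

```python
import math
import numpy as np

# Standard normal CDF built from math.erf, vectorized over NumPy arrays.
norm_cdf = np.vectorize(lambda z: 0.5 * (1.0 + math.erf(z / math.sqrt(2.0))))

def h(w2):
    # Hypothetical smooth dependence on the remaining variable.
    return np.sin(w2)

rng = np.random.default_rng(1)
n, c = 50_000, 0.5
w1 = rng.standard_normal(n)
w2 = rng.standard_normal(n)

raw = (w1 + h(w2) > c).astype(float)  # discontinuous integrand
smooth = norm_cdf(h(w2) - c)          # w1 integrated out: P(w1 > c - h(w2))

raw_mean, smooth_mean = raw.mean(), smooth.mean()
raw_var, smooth_var = raw.var(), smooth.var()
print(f"means: {raw_mean:.4f} vs {smooth_mean:.4f}")
print(f"variances: {raw_var:.4f} vs {smooth_var:.4f}")
```

When no such closed form exists, the same conditional probability would be evaluated numerically by locating the root in ω1 and applying quadrature, as the paragraph above describes.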

MLMC with Preintegration

MLMC constructs a telescoping sum estimator across increasingly fine levels. The smoothed estimator combines MLMC with preintegration, with error decomposition distinguishing bias (weak error), statistical MLMC error, and numerical integration/root-finding error. Theoretical results demonstrate:

- The strong convergence rate improves from $O(m_\ell^{-1/2})$ (standard MLMC) to $O(m_\ell^{-1})$ (smoothed MLMC), i.e., the variance of the level differences decays as $O(m_\ell^{-1})$ (Theorem).

- The kurtosis is uniformly bounded across levels, i.e., $O(1)$, in contrast to the explosive kurtosis of standard MLMC.

- Computational complexity is reduced to $O(\mathrm{TOL}^{-2} (\log \mathrm{TOL})^2)$ (Corollary), which is nearly optimal; antithetic sampling or quasi-Monte Carlo (QMC) can improve it further.
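The effect of smoothing on the level differences can be checked on a toy problem. The sketch below assumes ω = (ω1, ω2) with independent standard normal components, X∣ω ~ N(ω1 + ω2, 1), and inner sample sizes that double with the level, starting from a hypothetical `m0 = 4`; none of these choices come from the paper. Because the inner noise is Gaussian, the means of the two halves of the fine-level inner sample are drawn directly rather than by averaging individual samples.

```python
import math
import numpy as np

norm_cdf = np.vectorize(lambda z: 0.5 * (1.0 + math.erf(z / math.sqrt(2.0))))

def level_variances(level, n, c, rng, m0=4):
    """Variance of one MLMC level difference, without and with smoothing.

    Toy model (assumption): omega = (w1, w2) iid N(0,1), X | omega ~ N(w1 + w2, 1).
    """
    m_coarse = m0 * 2 ** (level - 1)
    w1 = rng.standard_normal(n)
    w2 = rng.standard_normal(n)
    # Means of the two halves of the 2 * m_coarse inner noise samples.
    half_a = rng.standard_normal(n) / math.sqrt(m_coarse)
    half_b = rng.standard_normal(n) / math.sqrt(m_coarse)
    noise_coarse = half_a                    # coarse level reuses the first half
    noise_fine = 0.5 * (half_a + half_b)     # fine level uses all inner samples
    # Standard MLMC: difference of indicators.
    plain = ((w1 + w2 + noise_fine > c).astype(float)
             - (w1 + w2 + noise_coarse > c).astype(float))
    # Smoothed MLMC: w1 integrated out analytically on both levels.
    smooth = norm_cdf(w2 + noise_fine - c) - norm_cdf(w2 + noise_coarse - c)
    return plain.var(), smooth.var()

rng = np.random.default_rng(2)
results = {lvl: level_variances(lvl, 50_000, 1.0, rng) for lvl in (1, 3, 5)}
for lvl, (v_plain, v_smooth) in results.items():
    print(f"level {lvl}: plain {v_plain:.2e}, smoothed {v_smooth:.2e}")
```

In this toy setting the smoothed variances should fall noticeably faster with the level than the indicator-based ones, which is the qualitative behaviour the theoretical results describe.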

Robustness and Further Extensions

High kurtosis and the poor estimation of variances at deep levels are mitigated by smoothing. Antithetic sampling, which is ineffective for discontinuous functions in standard MLMC, becomes effective in the smoothed framework. For high-dimensional problems, inner QMC sampling remedies the accuracy loss and yields faster convergence than inner Monte Carlo sampling; antithetic QMC converges faster still.

Theoretical Results

The paper establishes several key theoretical findings:

- The bias of the nested estimator decays as $O(1/m)$ and is not altered by preintegration.

- The variance of the MLMC level differences with preintegration decays as $O(m_\ell^{-1})$, doubling the convergence exponent relative to standard MLMC.

- The kurtosis remains bounded ($O(1)$), eliminating instability from high-kurtosis spikes.

- Computational complexity is nearly optimal, falling to $O(\mathrm{TOL}^{-2} (\log \mathrm{TOL})^2)$.
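The complexity rates reported in this summary are consistent with the standard MLMC complexity theorem. As a sketch, assume (this is not stated in the summary) geometric inner sample sizes $m_\ell = m_0 2^\ell$, so that the weak error, the level variance, and the cost per outer sample scale as $2^{-\alpha\ell}$, $2^{-\beta\ell}$, and $2^{\gamma\ell}$ respectively. The theorem then gives:

$$
\text{cost}(\mathrm{TOL}) =
\begin{cases}
O(\mathrm{TOL}^{-2}), & \beta > \gamma,\\
O(\mathrm{TOL}^{-2} (\log \mathrm{TOL})^2), & \beta = \gamma,\\
O(\mathrm{TOL}^{-2-(\gamma-\beta)/\alpha}), & \beta < \gamma.
\end{cases}
$$

A bias of order $1/m_\ell$ corresponds to $\alpha = 1$, and a cost proportional to $m_\ell$ corresponds to $\gamma = 1$. Standard MLMC with $\beta = 1/2$ falls in the third case, giving $\mathrm{TOL}^{-2-(1-1/2)/1} = \mathrm{TOL}^{-5/2}$. Smoothed MLMC with $\beta = 1 = \gamma$ falls in the borderline second case, which is where the squared logarithm comes from.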

Empirical Validation

Numerical experiments are conducted with European option portfolios under the Black-Scholes model, assessing expectation, variance, kurtosis, and computational cost as functions of MLMC level and problem dimension.

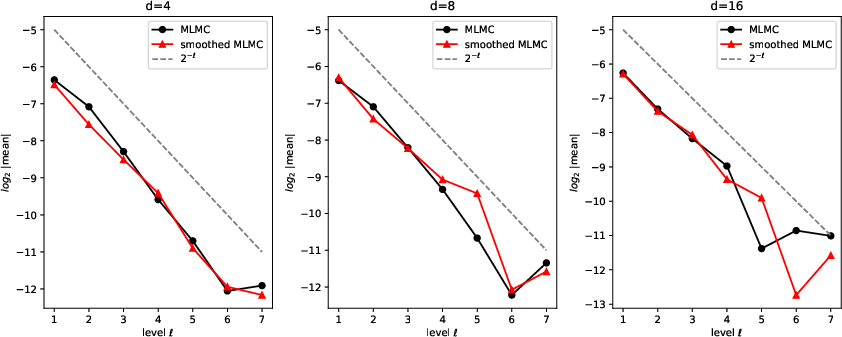

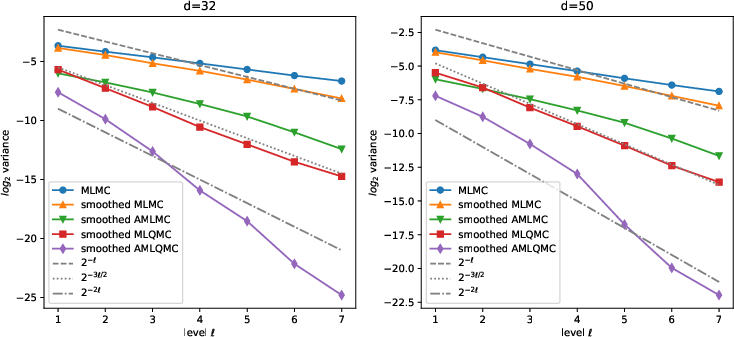

Weak and Strong Convergence

The expectation converges rapidly and stably in smoothed MLMC even as the dimension increases, matching the standard MLMC rate while being more stable.

Figure 1: Expectations for MLMC and smoothed MLMC.

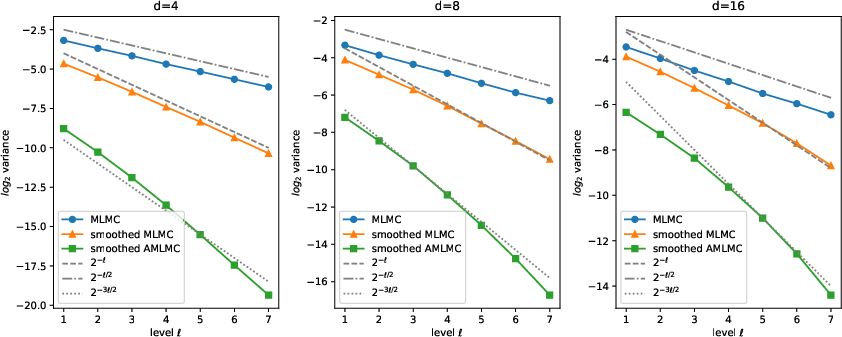

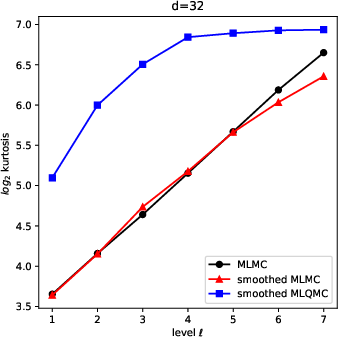

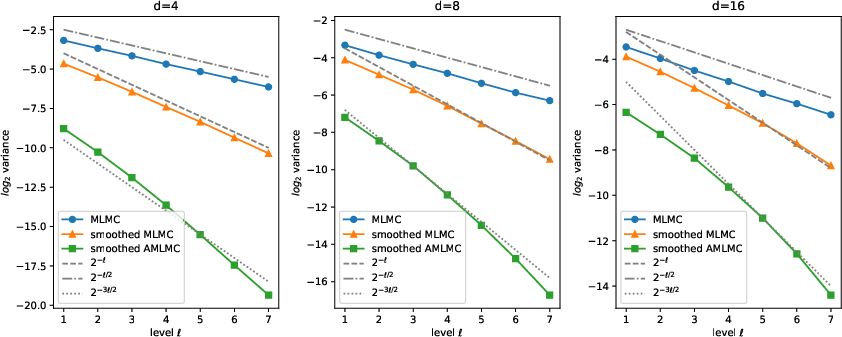

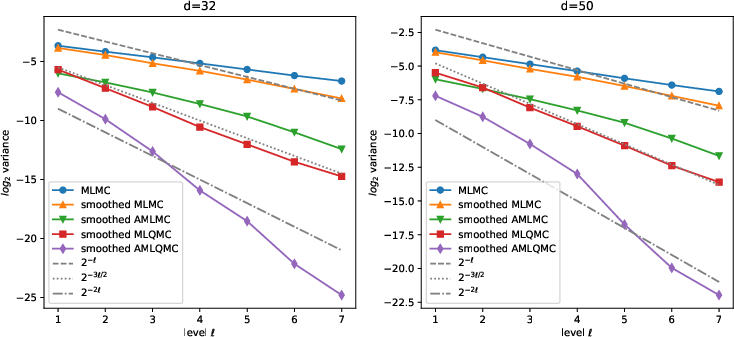

Variance decays much faster in smoothed MLMC ($O(m_\ell^{-1})$) than in standard MLMC ($O(m_\ell^{-1/2})$), and smoothed AMLMC (antithetic) decays faster still.

Figure 2: Variance decay rates for MLMC variants.

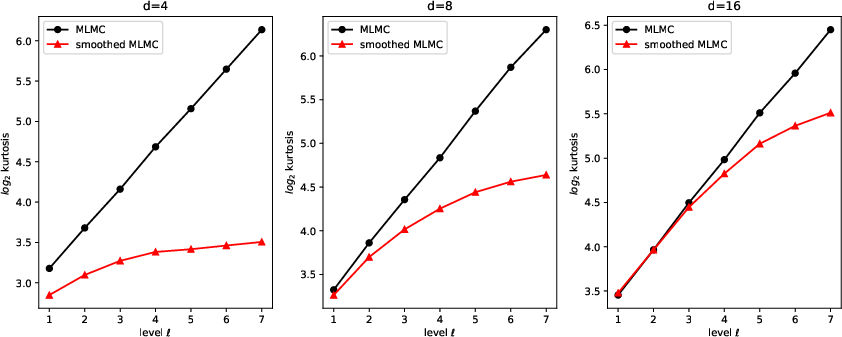

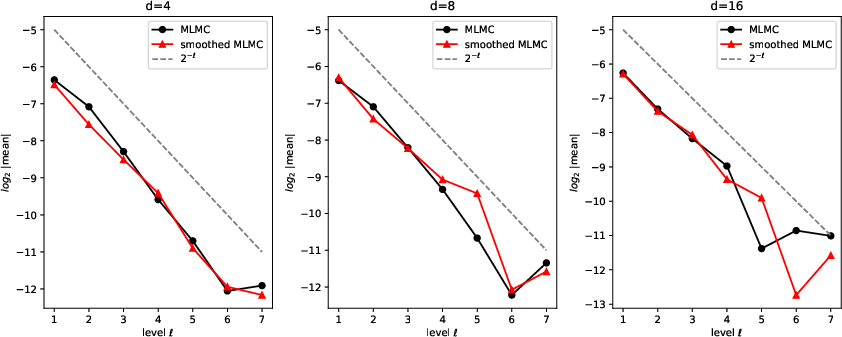

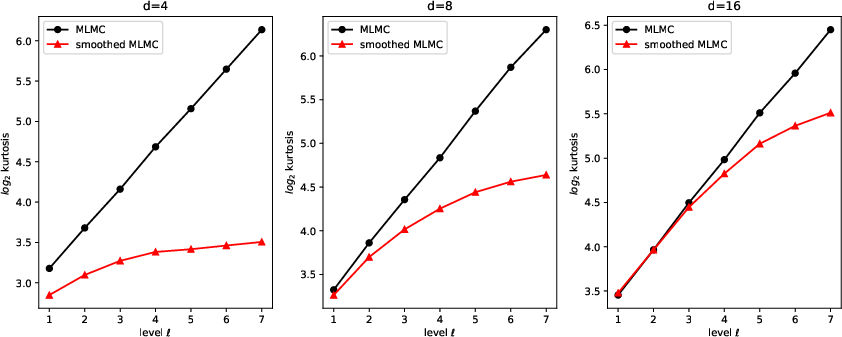

Kurtosis and Robustness

Smoothed MLMC maintains bounded kurtosis across levels, whereas the kurtosis of standard MLMC grows rapidly with the level, which makes variance estimates, and hence sample allocation, unreliable at deep levels.

Figure 3: Kurtosis profiles for MLMC and smoothed MLMC.

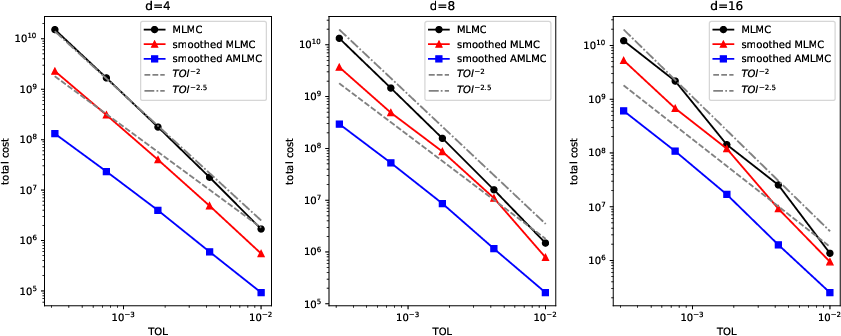

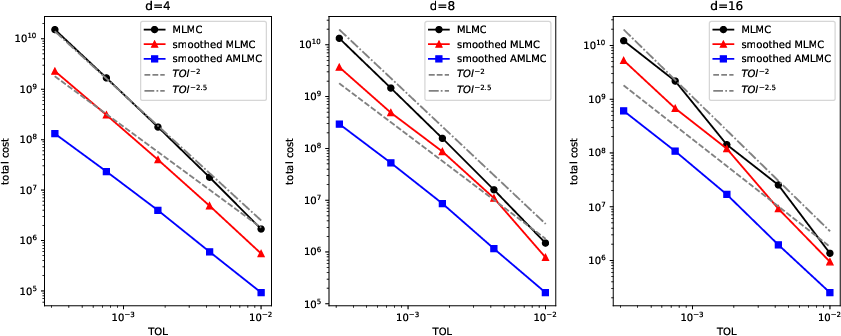
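A heuristic calculation, not taken from the paper, suggests why the kurtosis of standard MLMC grows. For an indicator payoff, the level difference $Y_\ell$ takes values in $\{-1, 0, 1\}$ and is nonzero only when the fine and coarse inner estimates fall on opposite sides of $c$. Call the probability of that event $p_\ell$ (an illustrative symbol). Neglecting the small mean of $Y_\ell$:

$$
\mathbb{E}[Y_\ell^2] = \mathbb{E}[Y_\ell^4] = p_\ell
\quad\Longrightarrow\quad
\kappa_\ell \approx \frac{\mathbb{E}[Y_\ell^4]}{\left(\mathbb{E}[Y_\ell^2]\right)^2} = \frac{1}{p_\ell}.
$$

Since $p_\ell \to 0$ as the level deepens, $\kappa_\ell$ grows without bound. A smoothed level difference is instead a continuous random variable of small magnitude rather than a rare $\pm 1$ event, so this mechanism does not apply to it.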

Computational Complexity

Smoothed MLMC achieves substantially lower computational cost at comparable accuracy, scaling as $O(\mathrm{TOL}^{-2} (\log \mathrm{TOL})^2)$ (or even lower with antithetic sampling), compared to $O(\mathrm{TOL}^{-5/2})$ for standard MLMC.

Figure 4: Total computational cost for various MLMC strategies.

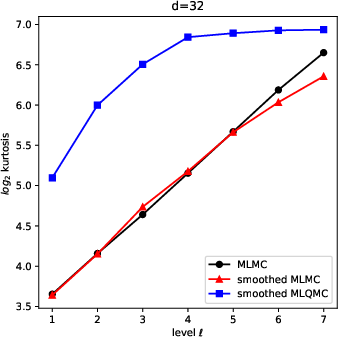

In higher dimensions, smoothed MLMC and smoothed MLQMC maintain favorable variance decay, while smoothed AMLQMC reduces the variance by orders of magnitude, though at the cost of higher kurtosis.

Figure 5: Variance decay in high-dimensional smoothed MLMC and MLQMC.

Figure 6: Kurtosis of smoothed MLQMC in the high-dimensional setting.

Practical and Theoretical Implications

The integration of preintegration with MLMC addresses major inefficiency and instability challenges in nested risk estimation. Practically, this yields:

- Robust risk estimates for portfolios with moderate-to-high dimensionality and complex conditional structures.

- Nearly optimal computational cost for regulatory, portfolio, and option risk measurement applications.

- Stability in sample allocation, facilitating automation in large simulation frameworks.

Theoretically, the work demonstrates how smoothing transformations (preintegration or numerical) can regularize the bias-variance tradeoffs inherent in nested simulation, providing a foundation for further investigation:

- Optimal parameter allocation (quadrature points, Newton tolerances).

- Extension to MLQMC with combined smoothing, with potential for further complexity reduction.

- Adaptation to more general risk measures and stochastic volatility models.

Conclusion

This paper provides a formally analyzed and empirically validated methodology for efficient risk estimation, combining nested MLMC with preintegration to regularize discontinuities and reduce computational complexity. The approach is robust and scalable across dimensions: it attains improved strong convergence rates and bounded kurtosis, and it delivers practical efficiency gains. Its implications extend to risk management, option pricing, and broader financial simulation tasks. Future research can optimize hyperparameter allocation and extend smoothed MLMC techniques to more complex stochastic models and broader classes of risk measures.