- The paper introduces MASF, which constructs the forward process via interpolation between the identity and the measurement operator to obtain an analytic likelihood score.

- It demonstrates superior performance over conventional filters, achieving lower RMSE and enhanced stability in chaotic systems like Lorenz-63, Lorenz-96, and Kolmogorov flow.

- This method eliminates heuristic likelihood approximations, enabling robust and scalable Bayesian updates in high-dimensional, nonlinear data assimilation tasks.

Measurement-Aware Forward Processes for Score-Based Data Assimilation

Introduction

Score-based generative models have introduced scalable, non-Gaussian inference for data assimilation in high-dimensional nonlinear dynamical systems. Traditional ensemble-based Bayesian filters, such as the EnKF and particle filters, suffer from severe limitations in the high-dimensional, nonlinear regimes common in real-world geophysical, meteorological, and physical systems, typically because of Gaussian moment constraints or weight degeneracy. Recent research has leveraged score-based models, notably diffusion-based approaches, to enable explicit likelihood-guided data assimilation. However, existing score-based approaches such as Score-based Filters (SF) and Score-based Sequential Langevin Sampling (SSLS) design their forward processes independently of the measurement operator, so the posterior sampling step relies on heuristic or computationally expensive approximations of the likelihood score.

The paper "Rethinking Forward Processes for Score-Based Data Assimilation in High Dimensions" (2604.02889) addresses this bottleneck by introducing a Measurement-Aware Score-based Filter (MASF). MASF constructs the forward process via explicit interpolation from the identity to the measurement operator, yielding analytic tractability for the likelihood score and enabling theoretically consistent, efficient sequential inference.

Measurement-Aware Forward Process Construction

Standard score-based approaches specify the forward process independently of the measurement mapping, resulting in intractable likelihood evaluation at the perturbed state—necessitating ad hoc approximations or expensive Markov Chain Monte Carlo (MCMC) sampling.

MASF innovates by directly aligning the forward process with the measurement operator, formulating the forward marginal $X_t = A(t) X_0 + \Sigma(t)^{1/2} \epsilon$, where $A(t)$ interpolates from the identity to the measurement operator $A$ as $t$ progresses. This design ensures that, at every intermediate time, the conditional law is analytically tractable and the induced likelihood score admits a closed form.
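As a rough illustration of the forward marginal, the NumPy sketch below draws perturbed samples under assumptions that are not taken from the paper: a linear interpolation A(t) = (1 - t) I + t A, an isotropic noise schedule Σ(t) = σ² t I, and standard Gaussian ϵ. The function names and the example operator are hypothetical.

```python
import numpy as np

def interp_operator(A, t):
    """Assumed linear interpolation A(t) = (1 - t) I + t A, so A(0) = I and A(1) = A."""
    return (1.0 - t) * np.eye(A.shape[0]) + t * A

def sample_forward(x0, A, t, rng, sigma=0.1):
    """Draw X_t = A(t) x0 + Sigma(t)^{1/2} eps for a batch x0 of shape (n, d).

    Assumes Sigma(t) = sigma^2 * t * I, whose square root is sigma * sqrt(t) * I.
    """
    At = interp_operator(A, t)
    eps = rng.standard_normal(x0.shape)  # standard Gaussian noise
    # Row-wise application of A(t): each row x becomes A(t) @ x.
    return x0 @ At.T + sigma * np.sqrt(t) * eps

rng = np.random.default_rng(0)
A = np.diag([1.0, 0.5, 0.25])        # hypothetical measurement operator
x0 = rng.standard_normal((4, 3))     # small batch of clean states
x_half = sample_forward(x0, A, 0.5, rng)
```

With these choices the process starts at the clean state at t = 0 and has mean A x0 at t = 1, which is the sense in which it interpolates from the identity to the measurement operator.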

Figure 1: Schematic comparison of likelihood score: independent- and measurement-aware forward process designs.

With this, the likelihood score $\nabla_{x_t} \log p(z \mid x_t)$ is obtained as an explicit Gaussian (closed-form) expression, which is then combined with the learned prior score to construct the posterior score:

$$\nabla_{x_t} \log p(x_t \mid z) = \nabla_{x_t} \log p(x_t) + \nabla_{x_t} \log p(z \mid x_t).$$
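The additive decomposition is a direct consequence of Bayes' rule. A short derivation in the same notation:

```latex
\begin{aligned}
p(x_t \mid z) &= \frac{p(z \mid x_t)\, p(x_t)}{p(z)}, \\
\log p(x_t \mid z) &= \log p(x_t) + \log p(z \mid x_t) - \log p(z), \\
\nabla_{x_t} \log p(x_t \mid z) &= \nabla_{x_t} \log p(x_t) + \nabla_{x_t} \log p(z \mid x_t).
\end{aligned}
```

The evidence term $\log p(z)$ does not depend on $x_t$, so its gradient vanishes in the last line.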

This tractable formulation eliminates the need for repeated, potentially inaccurate likelihood-score approximations prevalent in prior work.
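To illustrate how a closed-form likelihood score can arise, the sketch below makes assumptions that are not taken from the paper: the forward process is a linear Gaussian Markov process, the measurement z is identified with its terminal state X_1, A(t) = (1 - t) I + t A, and Σ(t) = σ² t I. Under these assumptions p(z | x_t) is Gaussian with mean Φ x_t and covariance Q, where Φ = A A(t)⁻¹ and Q = Σ(1) - Φ Σ(t) Φᵀ. This requires A(t) to be invertible and Q to be positive definite, which holds here for a symmetric A with eigenvalues in (0, 1] and t < 1. The function names are hypothetical, and the expression may differ from the one derived in the paper.

```python
import numpy as np

def interp_operator(A, t):
    """Assumed linear interpolation A(t) = (1 - t) I + t A."""
    return (1.0 - t) * np.eye(A.shape[0]) + t * A

def transition(A, t, sigma):
    """Mean operator Phi and covariance Q of the assumed transition X_1 | X_t."""
    d = A.shape[0]
    Phi = A @ np.linalg.inv(interp_operator(A, t))   # Phi = A(1) A(t)^{-1}
    # Q = Sigma(1) - Phi Sigma(t) Phi^T with Sigma(t) = sigma^2 * t * I.
    Q = sigma ** 2 * (np.eye(d) - t * Phi @ Phi.T)
    return Phi, Q

def likelihood_score(x_t, z, A, t, sigma=0.1):
    """Gradient of log N(z; Phi x_t, Q) with respect to x_t."""
    Phi, Q = transition(A, t, sigma)
    return Phi.T @ np.linalg.solve(Q, z - Phi @ x_t)

def log_likelihood(x_t, z, A, t, sigma=0.1):
    """log p(z | x_t) up to an additive constant; used only to check the gradient."""
    Phi, Q = transition(A, t, sigma)
    r = z - Phi @ x_t
    return -0.5 * r @ np.linalg.solve(Q, r)

A = np.diag([1.0, 0.5])           # hypothetical measurement operator
x_t = np.array([0.3, -0.2])       # perturbed state at time t
z = np.array([0.1, 0.4])          # measurement
score = likelihood_score(x_t, z, A, 0.5)
```

Because the log-likelihood is quadratic in x_t, the analytic gradient can be checked against central finite differences of `log_likelihood`.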

Figure 2: Pipeline of MASF, where the forward process interpolates between the state and measurement, ensuring tractable posterior sampling.

Algorithmic Instantiation and Learning

MASF operates recursively across measurement times. Each filter iteration proceeds as follows:

- Time Update: Evolve the posterior ensemble forward through the system dynamics to the next measurement time.

- Score Model Training: Perturb propagated samples via the measurement-aware forward process and train a denoising score-matching network to estimate the prior score.

- Measurement Update: At each measurement step, reverse-time sampling is performed by integrating the reverse-time SDE parameterized by the sum of the trained prior score and the analytic likelihood score, producing posterior samples.

This approach is summarized in the paper's pseudo-code with dedicated attention to stable score estimation and efficient, consistent sampling.
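The score-model training step can be sketched as a denoising score-matching objective on the perturbed ensemble. The sketch below is not the paper's pseudo-code: it assumes A(t) = (1 - t) I + t A and Σ(t) = σ² t I, evaluates the loss at a single time t, and uses a generic callable `score_fn` where a neural network would be trained in practice. All names are hypothetical.

```python
import numpy as np

def interp_operator(A, t):
    """Assumed linear interpolation A(t) = (1 - t) I + t A."""
    return (1.0 - t) * np.eye(A.shape[0]) + t * A

def dsm_loss(score_fn, x0, A, t, rng, sigma=0.5):
    """Monte Carlo denoising score-matching loss at one time t.

    The regression target is the conditional score
    grad log p(x_t | x_0) = -(x_t - A(t) x_0) / (sigma^2 t) = -eps / (sigma sqrt(t)).
    """
    At = interp_operator(A, t)
    std = sigma * np.sqrt(t)
    eps = rng.standard_normal(x0.shape)
    x_t = x0 @ At.T + std * eps          # measurement-aware perturbation
    target = -eps / std
    return np.mean(np.sum((score_fn(x_t) - target) ** 2, axis=1))

# Toy check with a standard Gaussian ensemble, for which the marginal
# score of X_t is known: X_t ~ N(0, C) with C = A(t) A(t)^T + sigma^2 t I.
A = np.diag([1.0, 0.5])                  # hypothetical measurement operator
t, sigma = 0.5, 0.5
x0 = np.random.default_rng(1).standard_normal((20000, 2))
At = interp_operator(A, t)
C = At @ At.T + sigma ** 2 * t * np.eye(2)
C_inv = np.linalg.inv(C)

def true_score(x):
    return -x @ C_inv                    # C_inv is symmetric

def zero_score(x):
    return np.zeros_like(x)

# Same noise seed for both calls so the two losses are directly comparable.
loss_true = dsm_loss(true_score, x0, A, t, np.random.default_rng(0), sigma)
loss_zero = dsm_loss(zero_score, x0, A, t, np.random.default_rng(0), sigma)
```

In expectation the marginal score minimizes this loss, so `loss_true` should fall below `loss_zero`. A trained network would be fitted by minimizing the same quantity over a range of times t.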

Empirical Results

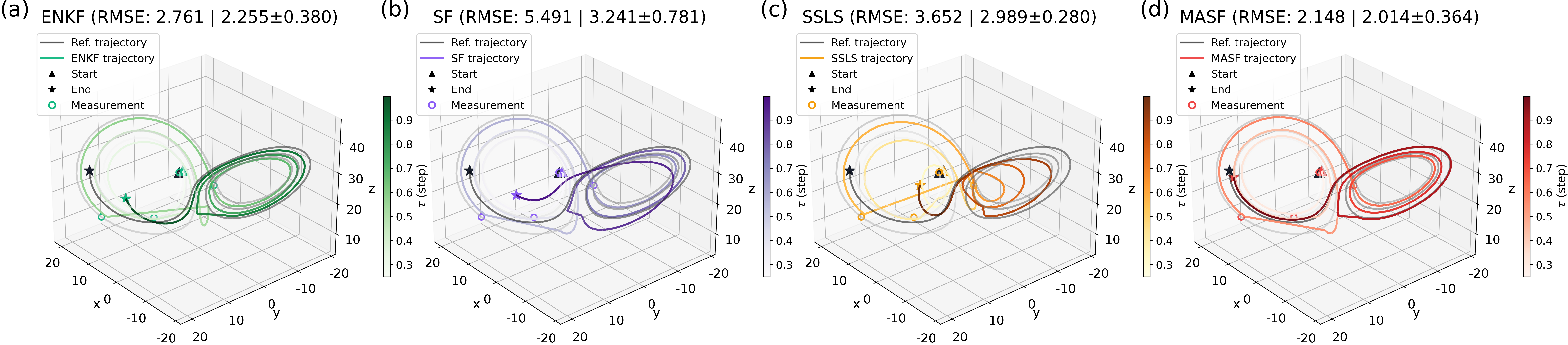

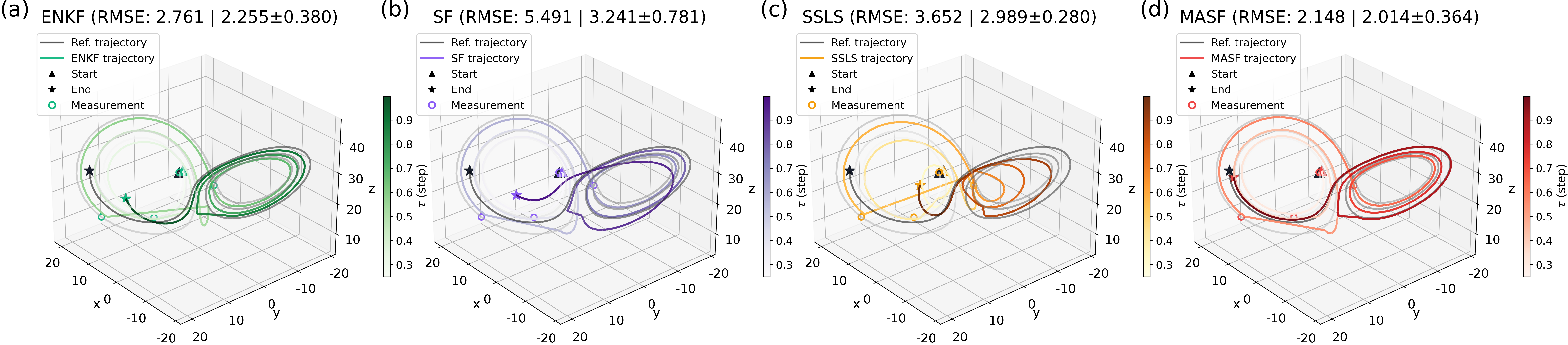

Lorenz–63

Evaluation on the Lorenz–63 chaotic dynamical system confirms the stability and superior accuracy of MASF relative to EnKF, SF, and SSLS. Under sparse measurement regimes (gap = 100), MASF yields consistently lower RMSE and reduced variance across random seeds, indicating improved robustness in transiently chaotic, low-dimensional settings.

Figure 3: State assimilation trajectories for Lorenz–63 with a large measurement gap, demonstrating stronger tracking ability and lower RMSE for MASF.

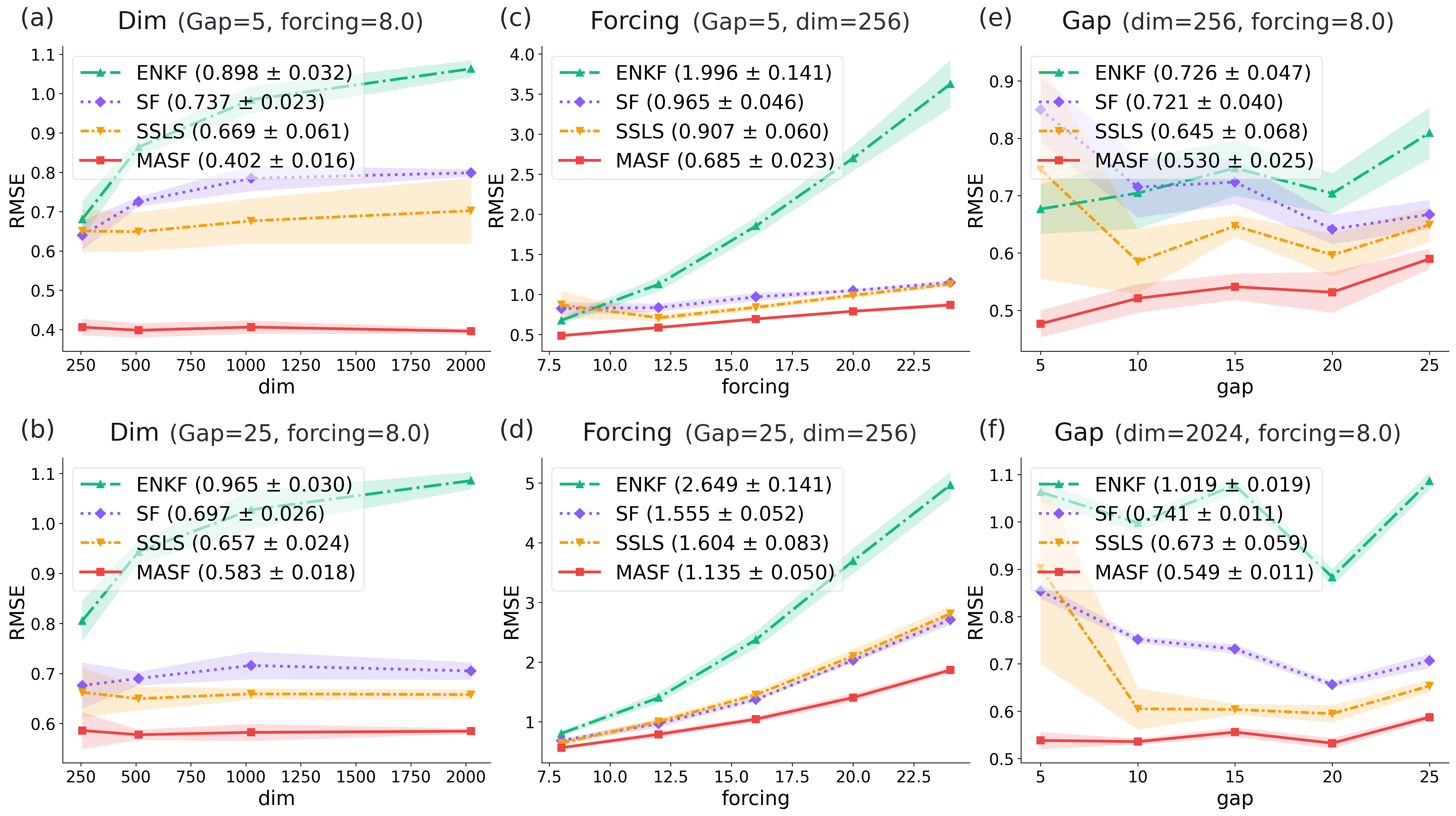

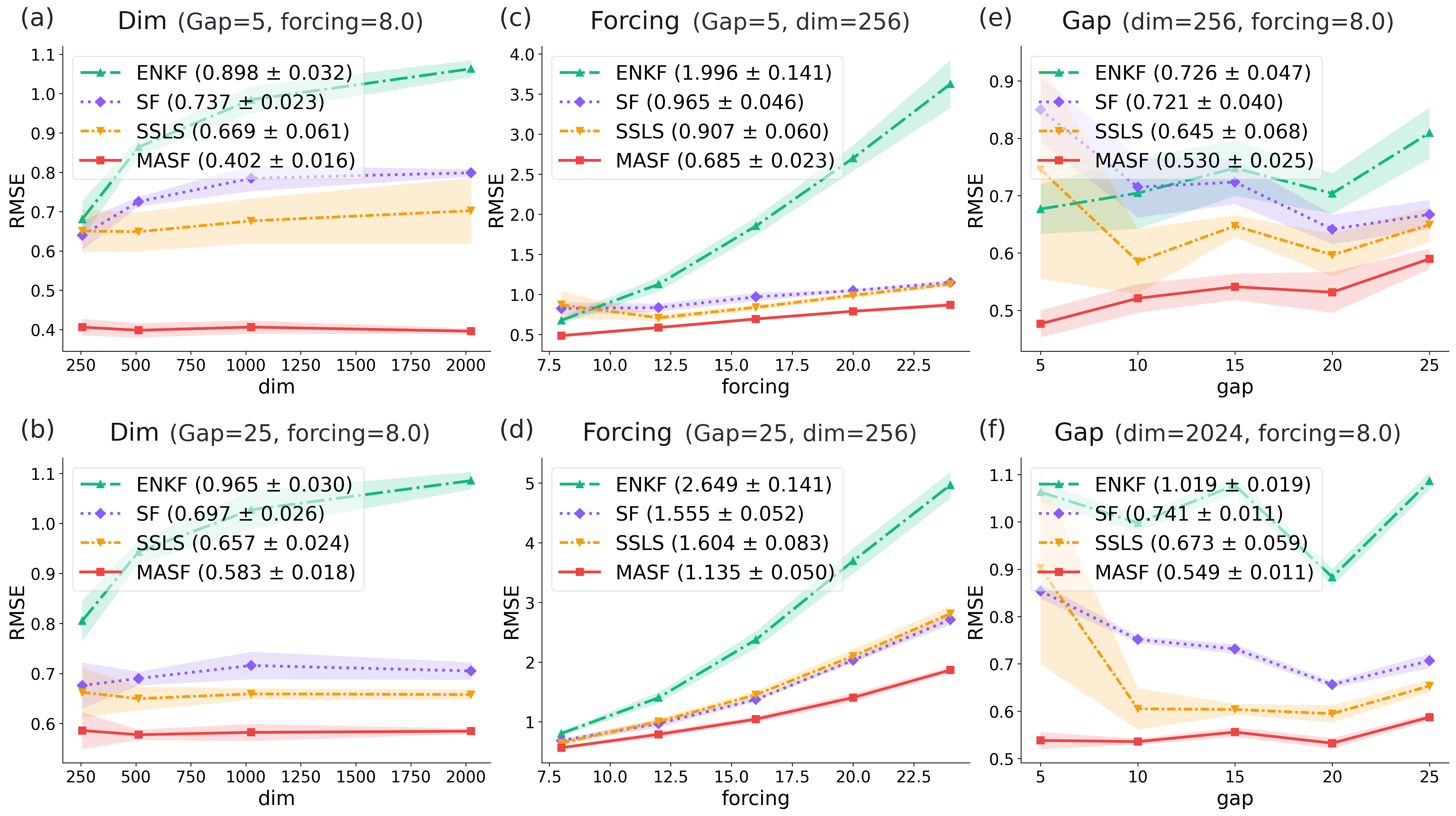

Lorenz–96

The Lorenz–96 system, with scalable state dimension and chaoticity, is used to benchmark performance under extreme high-dimensionality and spatial sparseness. Across sweeps of dimension ($d = 256$ to $2048$), chaoticity (forcing $F$), and measurement gap, MASF attains the lowest RMSE throughout, while remaining stable without recourse to heuristic corrections. Notably, MASF's accuracy is relatively insensitive to increasing nonlinearity or measurement sparsity, in marked contrast to EnKF and SF.

Figure 4: Lorenz–96 results showing that MASF maintains low error and robustness as dimension, chaoticity, and measurement gap increase.

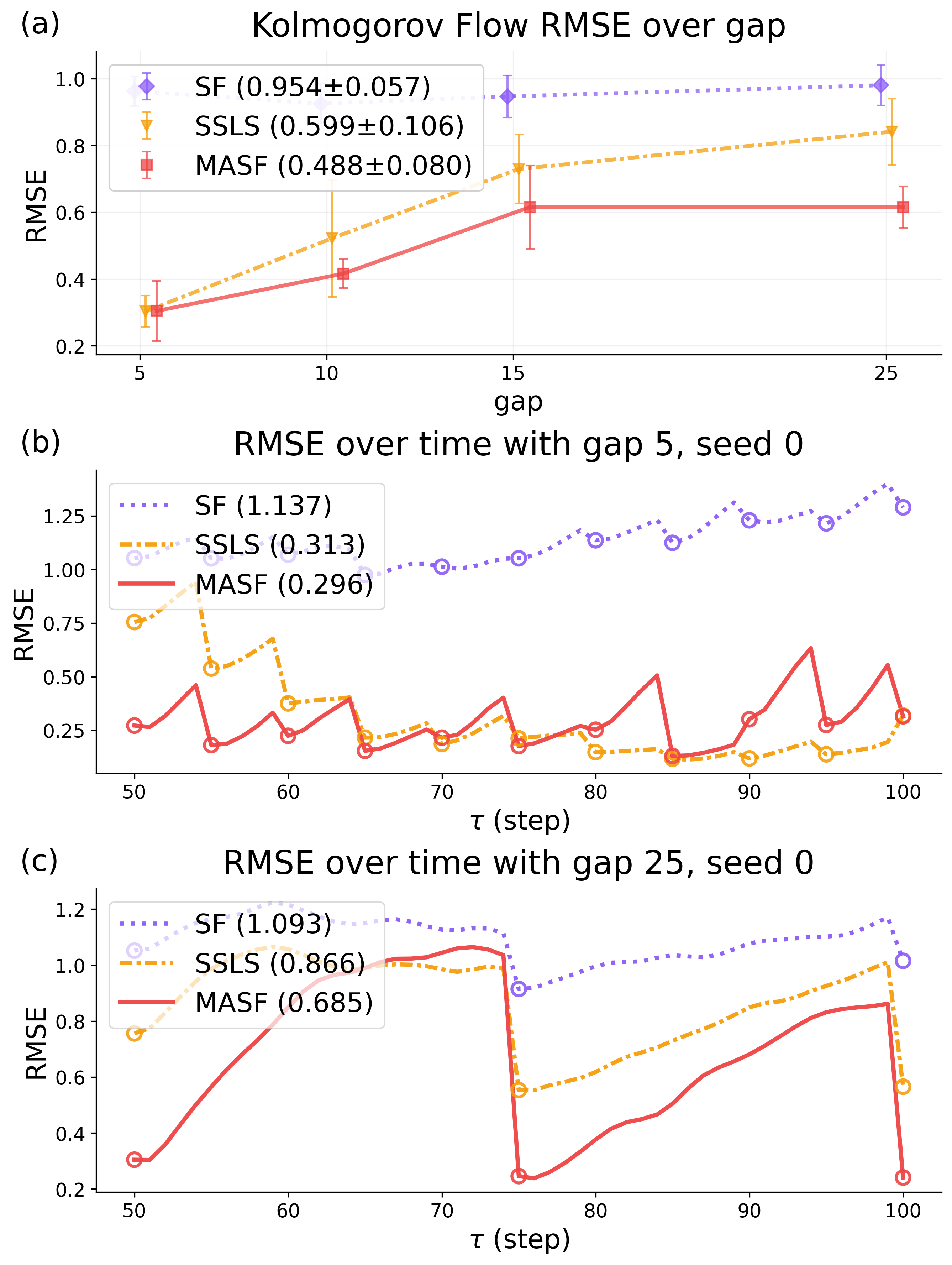

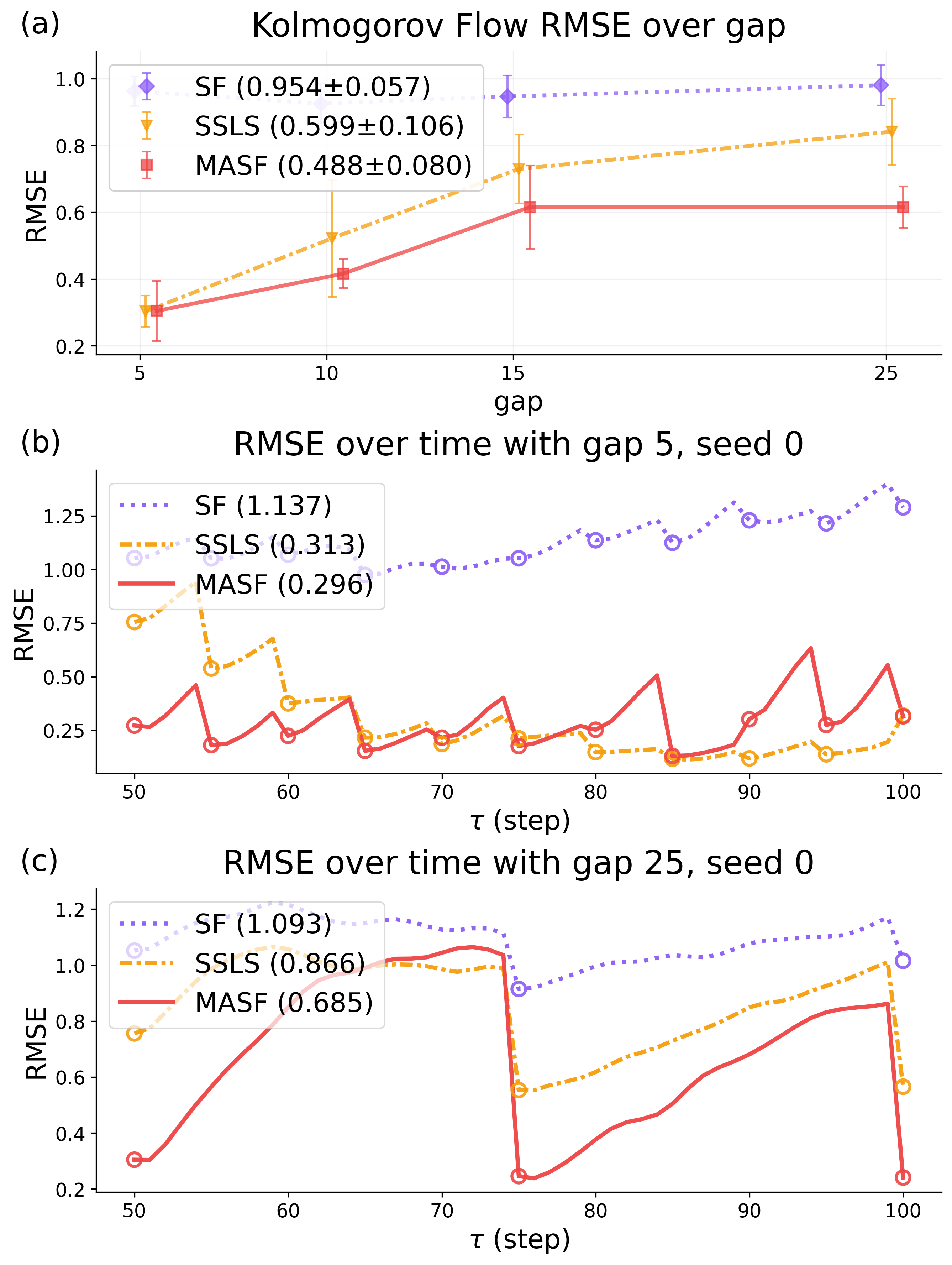

Kolmogorov Flow

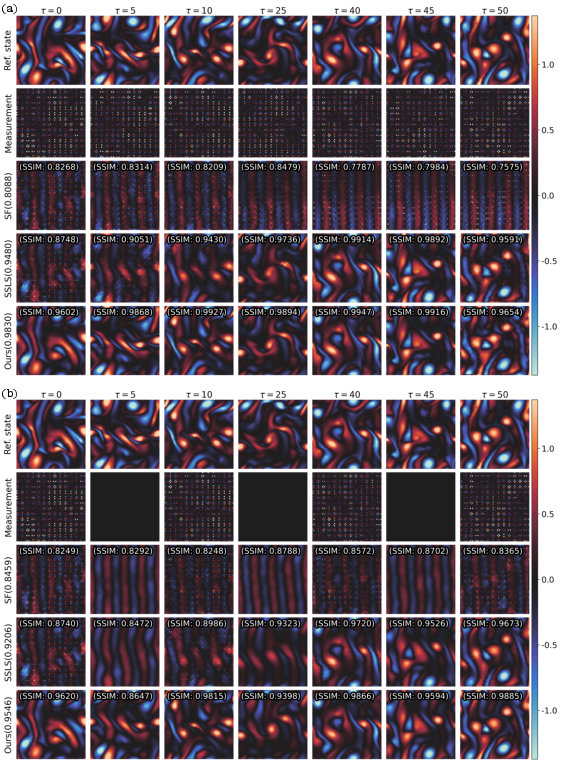

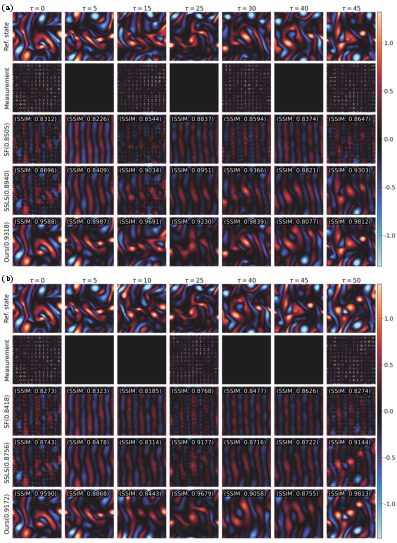

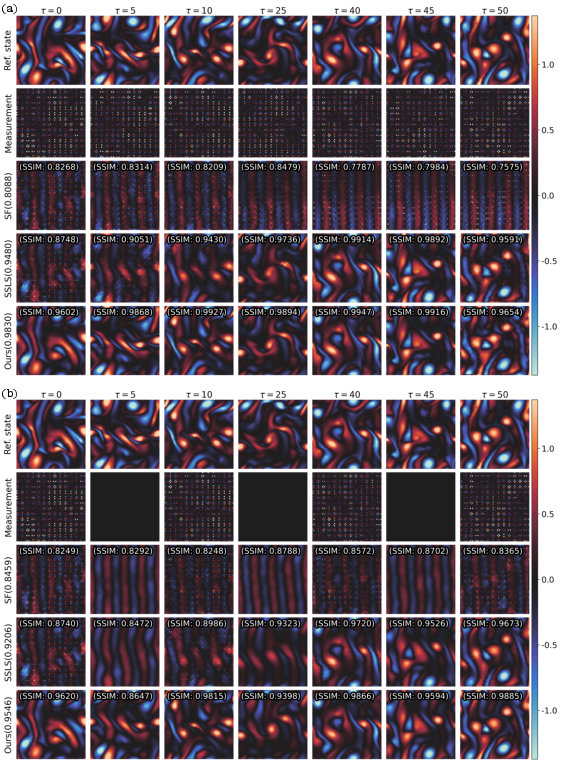

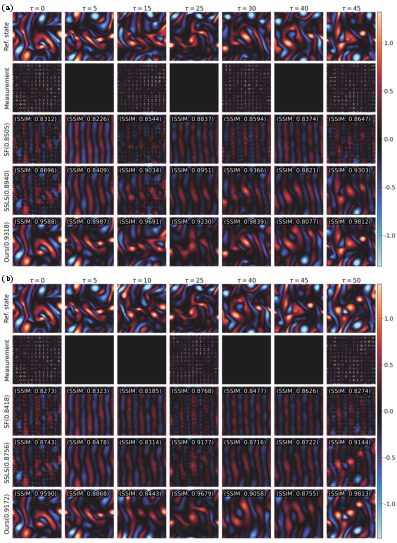

On 2D Navier-Stokes (Kolmogorov flow), MASF attains consistently superior RMSE and SSIM relative to SF and SSLS, especially as the measurement gap widens—a setting where SF notably degrades due to likelihood score approximations and SSLS increasingly suffers from computational expense and blur in reconstructions.

Figure 5: Kolmogorov flow RMSE curves and temporal profiles highlight MASF's resilience to sparse update intervals.

Qualitative analysis shows that MASF reconstructs spatial flow structures with higher structural fidelity and less blurring across temporal windows compared to baselines, which increasingly deviate as supervision becomes sparser.

Figure 6: Visual Kolmogorov flow reconstructions at moderate measurement gaps, highlighting spatial and structural fidelity enhancement in MASF.

Figure 7: Qualitative reconstruction for Kolmogorov flow at large measurement gaps, where MASF maintains high SSIM and spatial accuracy compared to severe artifacts or blurring in SF and SSLS.

Discussion and Implications

A central result of the paper is that correctly aligning the forward diffusion/masking process with the measurement equation directly unlocks analytic likelihood scores and consistent Bayesian updates, even in the presence of high-dimensional, nonlinear system structure and extremely sparse observations. Theoretical implications include the elimination of error-accumulating heuristic approximations, as well as more robust, interpretable Bayesian updates across sequential filtering problems. Practically, MASF enables high-dimensional data assimilation tasks that were previously infeasible for score-based methods and inaccessible to classical ensemble or particle filters, as evidenced in chaotic high-dimensional fluid dynamics or weather prediction models.

Strong numerical results: Across all benchmarks and regime sweeps, MASF achieves the lowest RMSE and, where SSIM is reported (Kolmogorov flow), the best SSIM, and displays order-of-magnitude stability improvements over alternatives.

Notably, the MASF framework can, in principle, generalize to settings with non-square measurement operators, provided the interpolation remains invertible, and can be coupled with latent space approaches to address state-measurement mismatch. Extensions to nonlinear measurement operators represent a natural, albeit nontrivial, direction—requiring either nonlinear moment-matching SDEs or generalized interpolation in latent geometry.

Limitations and Future Directions

MASF currently assumes that the state and measurement spaces have matching dimension and that the measurement operator A has a nonnegative spectrum, so that the interpolation is invertible. Relaxing these restrictions, for example via latent embedding and complex- or symmetric-space interpolation, is an open direction, as is extending the moment-matching SDE formulation to nonlinear observation operators. Additionally, while MASF mitigates error accumulation, it still requires retraining or fine-tuning the prior score network at each measurement step; scalable architectures for amortized or continual score learning would further increase efficiency.

MASF, by demonstrating a theoretically-sound, measurement-aware conditional generative paradigm, opens new intersections between diffusion-based probabilistic inference and state-space filtering—suggesting applications beyond physical assimilation, such as temporal imputation or control in partially observed high-dimensional systems.

Conclusion

The Measurement-Aware Score-based Filter advances score-based data assimilation by aligning the forward process with the measurement operator, enabling exact likelihood-guided posterior sampling at every step. This construction removes key weaknesses of prior score-based methods and enables robust, stable, and accurate inference in both low- and high-dimensional regimes with sparse, noisy measurements. Theoretical and empirical results demonstrate improved performance and stability over established baselines, positioning MASF as a principled path forward for high-dimensional sequential Bayesian inference and state tracking (2604.02889).