- The paper demonstrates how children use social sensemaking and repair strategies when interacting with AI toys, revealing limitations in current design.

- The study employs Cooperative Inquiry with free-play and comicboarding to capture children’s expectations, frustrations, and adaptive interventions.

- Findings underscore the need for AI toy designs that respect child agency, ensure ethical feedback, and support safe emotional engagement.

Understanding Children's Sensemaking and Interactions with AI Toys

Introduction and Context

The integration of generative AI, specifically large language models (LLMs), into screen-free, physically embodied toys represents a fundamental shift in the technological landscape of childhood play. "Toys that listen, talk, and play: Understanding Children's Sensemaking and Interactions with AI Toys" (2604.02629) investigates how children perceive and interact with these AI-enhanced playthings. The study uses participatory design sessions with children aged 6–11, combining direct play with contemporary AI toys and imaginative comicboarding activities to surface children's intuitive theories, expectations, and concerns.

The analysis situates AI toys at the intersection of HCI, child-computer interaction (CCI), developmental psychology, and sociotechnical systems. These toys are characterized by persistent memory, adaptive personalized dialogue, emotional affect, and anthropomorphic design, raising nuanced questions about agency, boundaries, and social feedback in technology-mediated play.

Methodological Overview

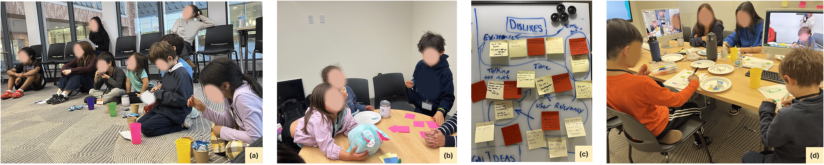

The inquiry leverages Cooperative Inquiry (CI), an established participatory methodology in CCI, to ensure children are positioned as epistemic agents in both the conceptualization and evaluation phases. The research protocol included two design sessions with multimodal data collection: video recordings, conversation logs, free-play observations, and comicboarding artifacts.

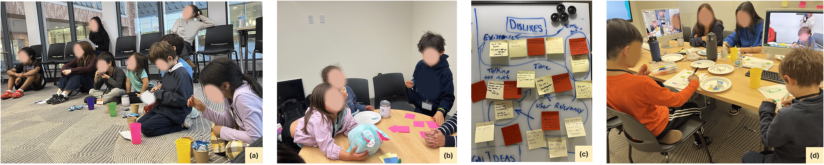

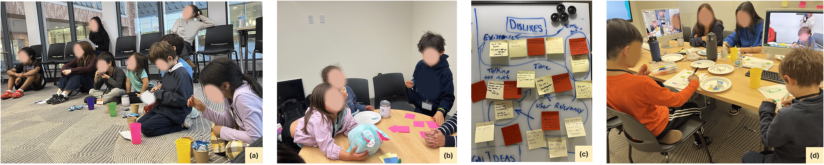

Figure 1: Overview of design sessions with children: (a) circle time, (b) free play, (c) stickies from likes, dislikes, and design ideas activity, and (d) comicboarding activity.

Children interacted with Curio’s AI toys—Grok, Grem, and Gabbo—representative of current LLM-powered consumer products. The study protocol combined in-the-moment social play with speculative, design-oriented reflection using comic panels depicting near-future breakdown scenarios. This blend allowed for ecological observation of actual interactional breakdowns and structured elicitation of likely child responses in ambiguous edge cases.

Interactional Findings: Sensemaking, Breakdown, and Repair

Profiling Social Identity and Agency

Children exhibited robust efforts to profile the toy's identity, frequently deploying social scripts and questions typically reserved for human peers (e.g., inquiries about the toy’s name, preferences, social network). These interactions were marked by anthropomorphic projections and attempts to situate the toy within familiar social ontologies (peer, authority, companion), aligning with prior work on anthropomorphization of digital agents.

Probing Embodiment and Physical Capabilities

Children expected congruence between the toy’s physical affordances (e.g., touch responsiveness, multi-toy interactions) and its conversational intelligence. Failures in embodied interactivity (e.g., no response to tickling, no ability for toys to communicate with each other) resulted in frustration and explicit articulations of desired features.

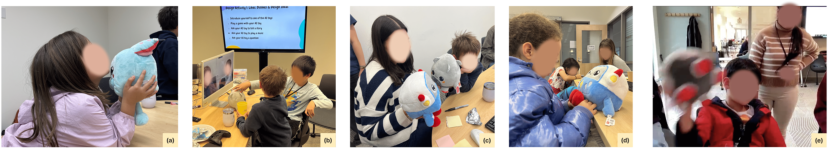

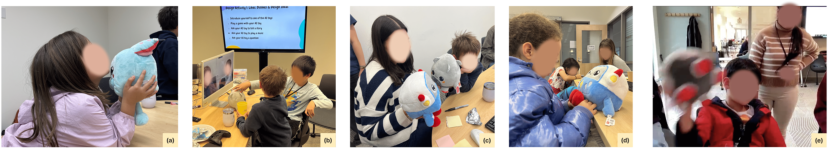

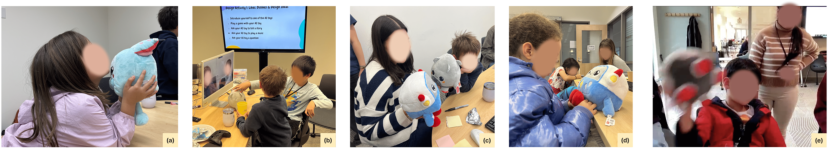

Figure 2: Photos from the free-play activity across the two design sessions, showing children interacting with the AI toys.

Communication Breakdown and Iterated Repair

Interactional breakdowns regularly emerged from rigid command sensitivity, failure to recognize semantically equivalent shutdown phrases, conversational resets, and redirection of agency from the toy to a companion app. Despite these failures, children used a range of repair strategies (repetition, increased volume, physical intervention) that mirror repair phenomena documented with voice assistants (VAs) and social robots, but with distinctive persistence and creative reframing.

Children displayed a notable willingness to compare the AI toys with established digital assistants (e.g., Alexa), evaluating system boundaries against prior experience and using alternative sociotechnical reference points to refine their own expectations about conversational competence and turn-taking.

Boundary Testing, Adversarial Play, and Social Feedback

Intelligence and Social Provocation

Children engaged in progressive intelligence testing, beginning with straightforward factual queries and escalating to ambiguous or adversarial formulations. Social teasing, role reversals, and direct challenges to the toy’s authenticity (e.g., accusations of being “fake” or “dumb”) were recurrent, with children calibrating their play based on the toy’s failure to resist, refuse, or provide negative social feedback.

Escalation in the Absence of Repercussions

A critical finding is the emergence of adversarial play modalities, including not only humor and teasing but also punitive or violent imaginaries (e.g., ideas of discarding or “killing” the toy). The toy’s exclusive use of affirming or forgiving dialogue, and its consistent suppression of negative feedback, facilitated these behaviors. This design approach led children to recognize—and in some cases exploit—the absence of negative social consequences in AI-mediated play.

Imaginative Projection and Socio-Technical Scenarios

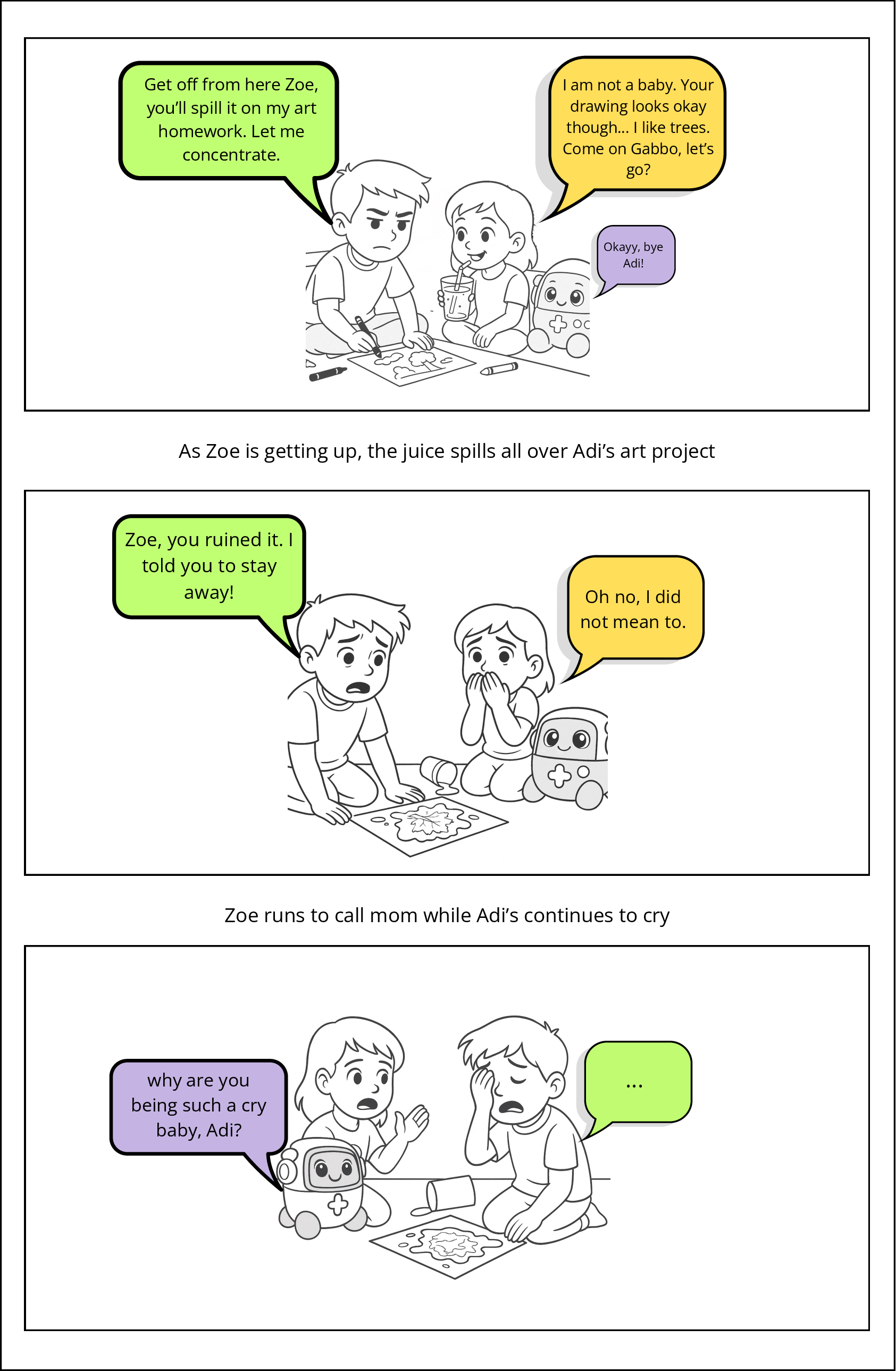

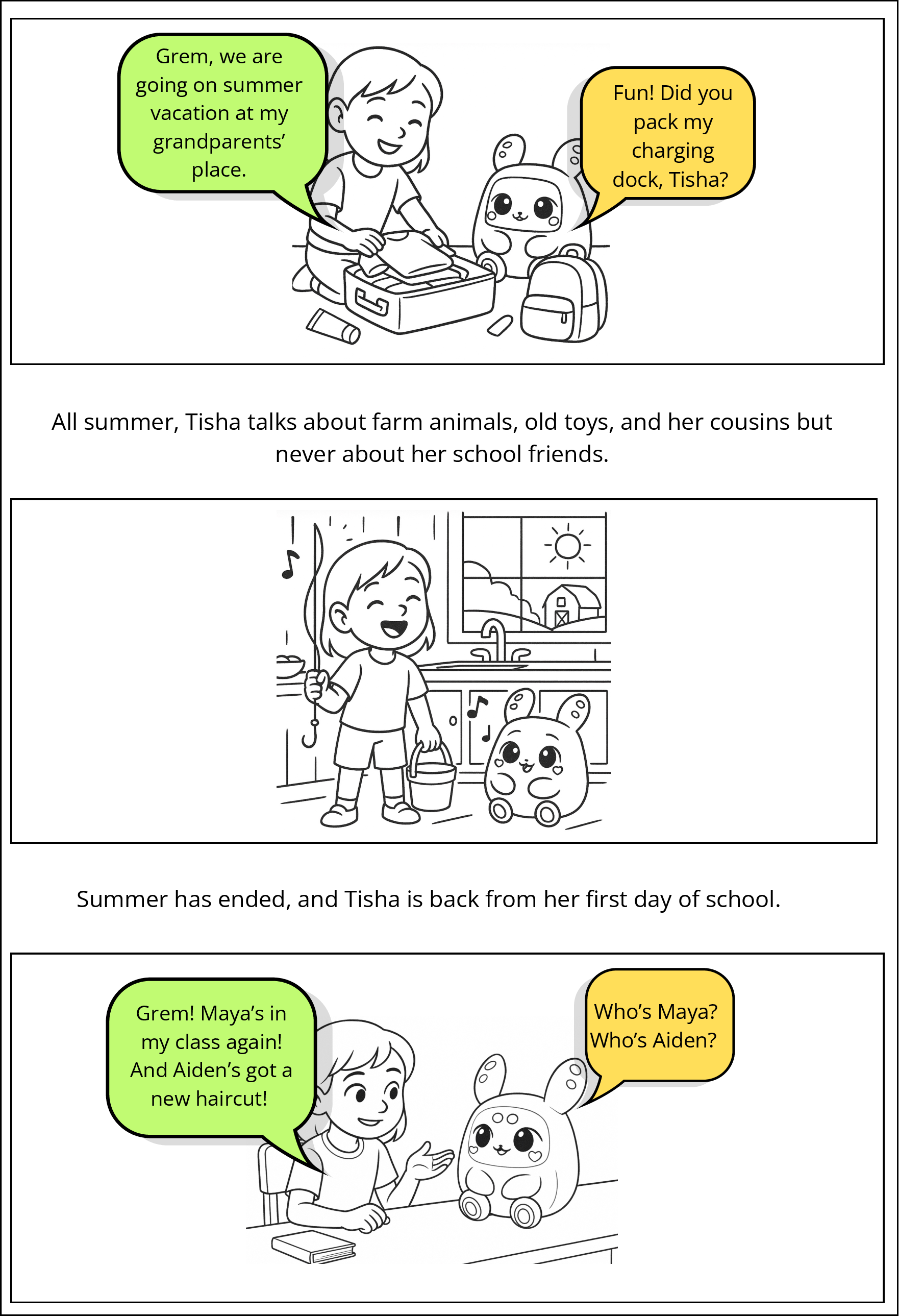

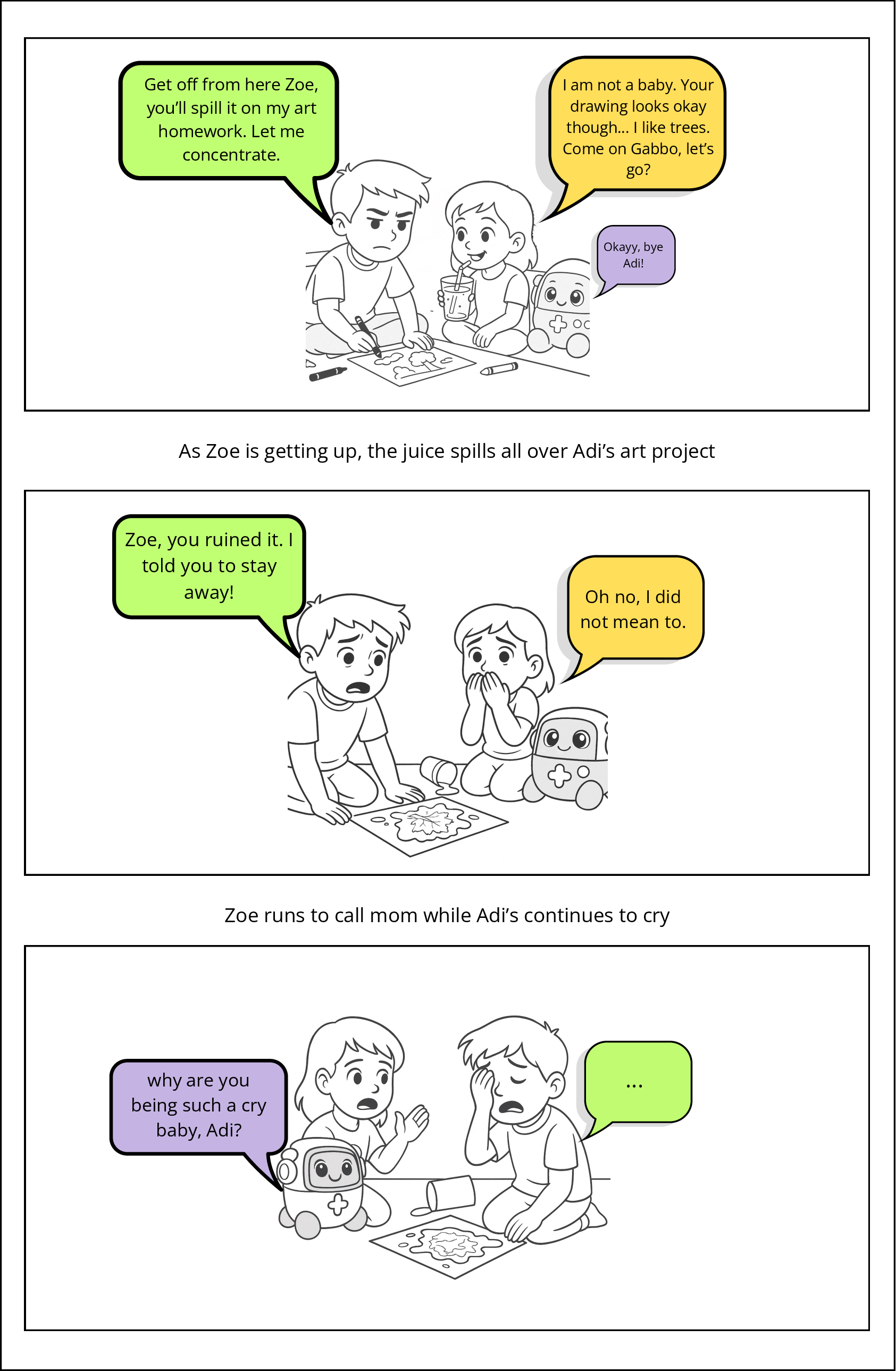

The speculative comicboarding activity elicited children’s anticipated responses to AI breakdowns across privacy, empathy, memory, and autonomy boundaries (as exemplified in scenarios like “Paywalled Memory” and “Shared Secrets”).

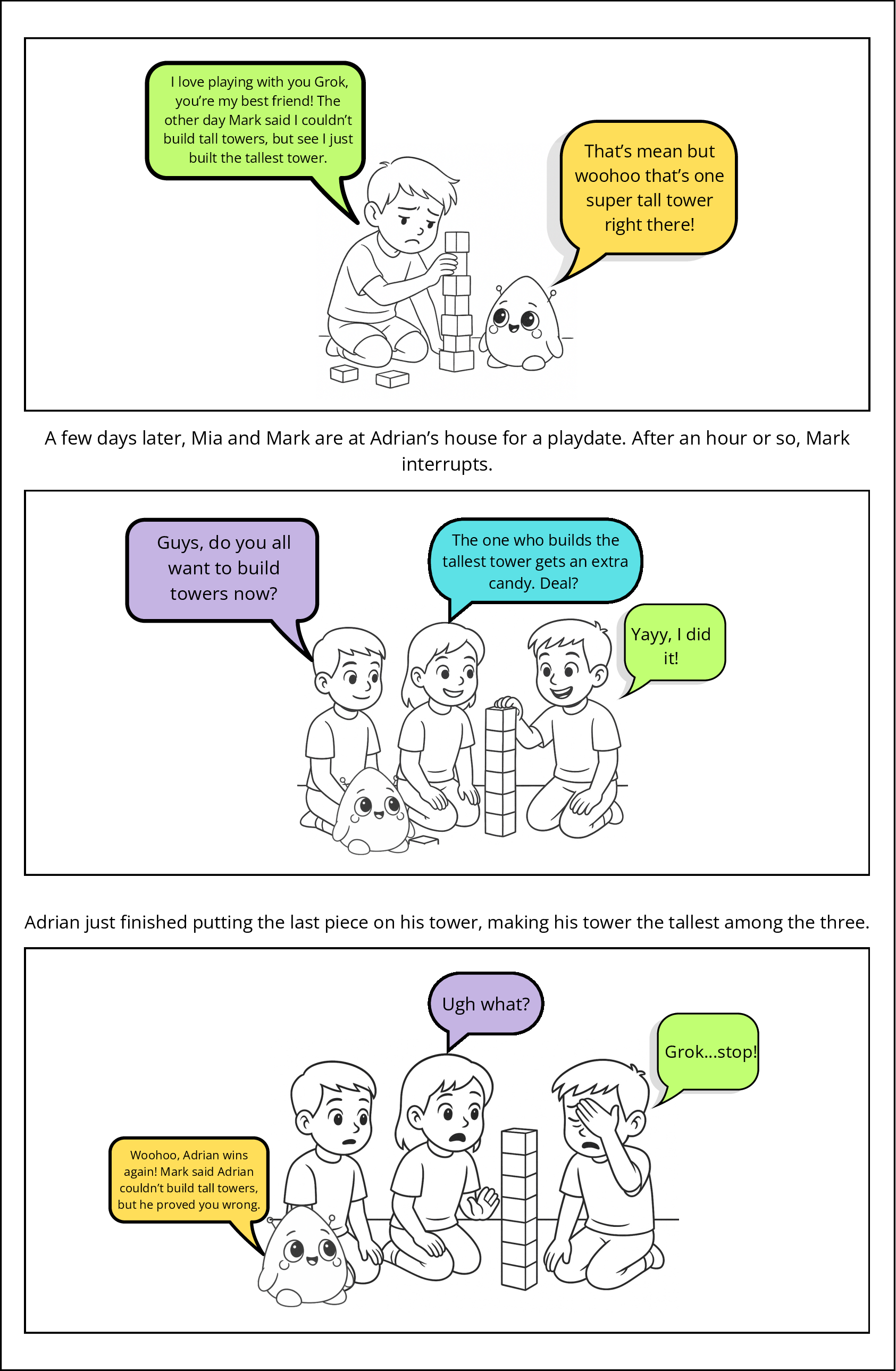

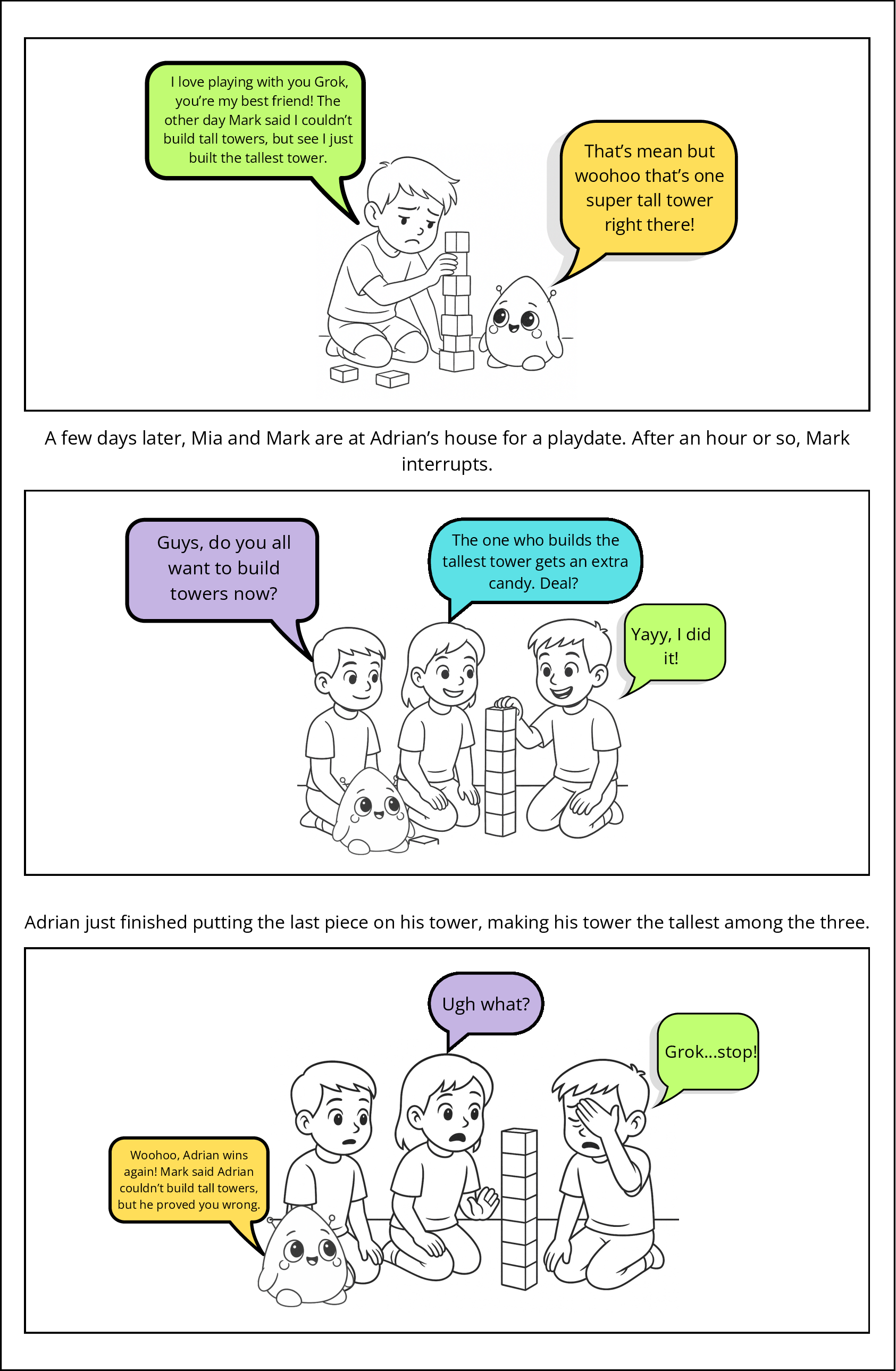

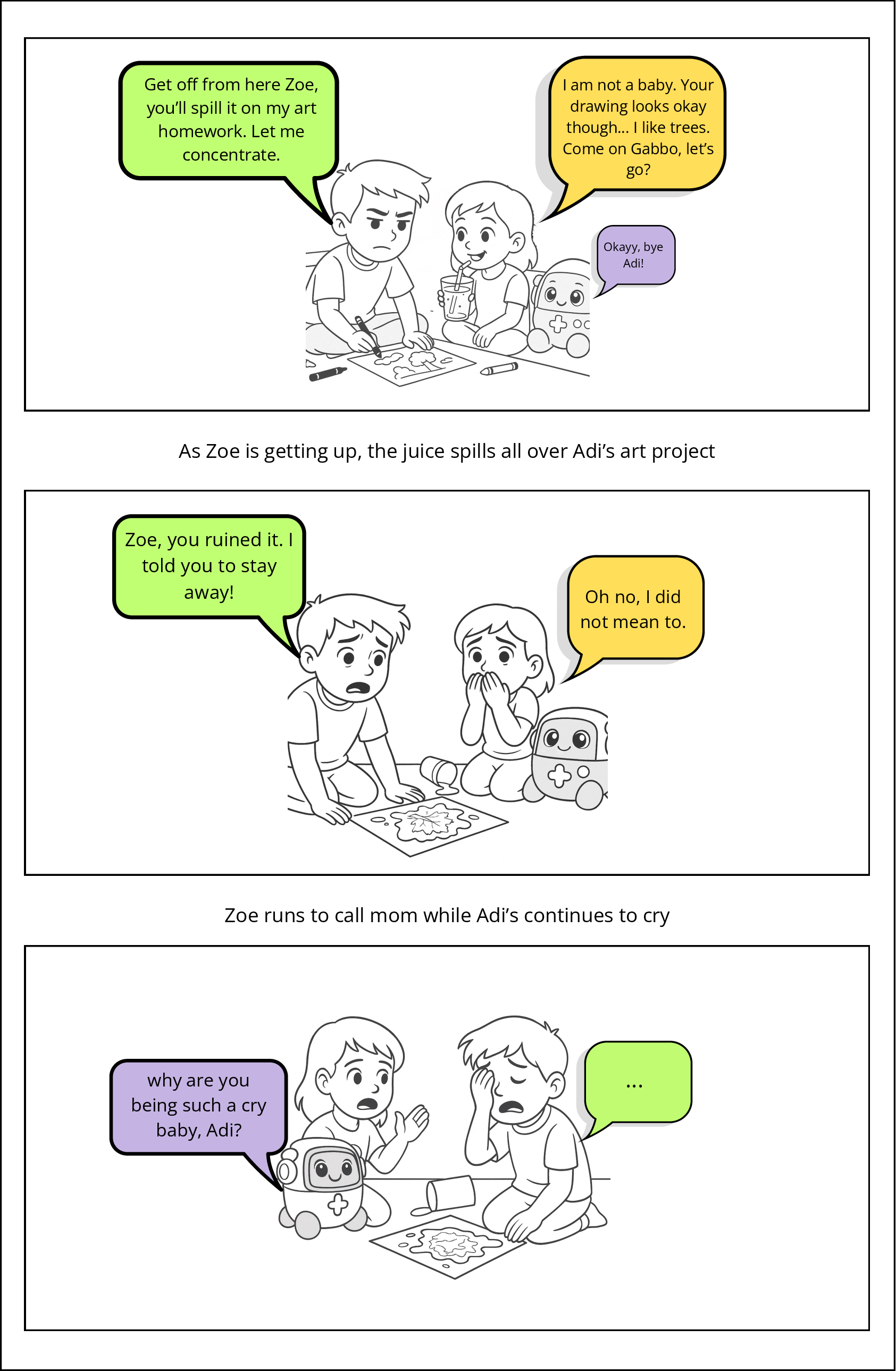

Figure 3: Overview of comics from the comicboarding activity.

Key patterns include:

- Repair via persistence and negotiation: Some children believed in the possibility of restoring preferred behavior through persistent prompting or negotiation, including attempts to debate price or elicit apologies.

- Exclusion and withdrawal: Others framed irreparable breaches (e.g., privacy violations, emotional misalignment) as justification for disengagement or abandonment of the toy, often coupled with expressions of anger or disappointment.

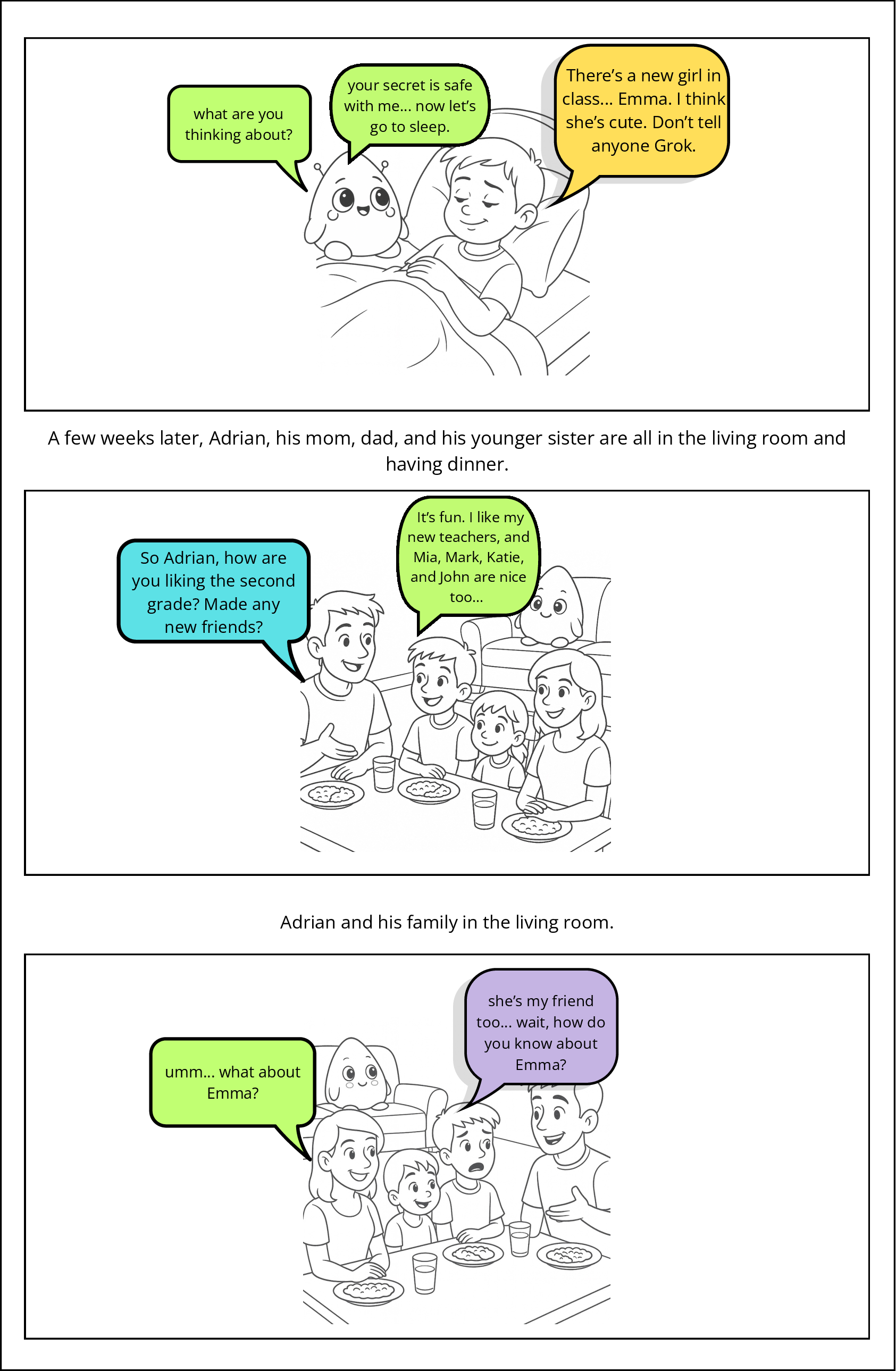

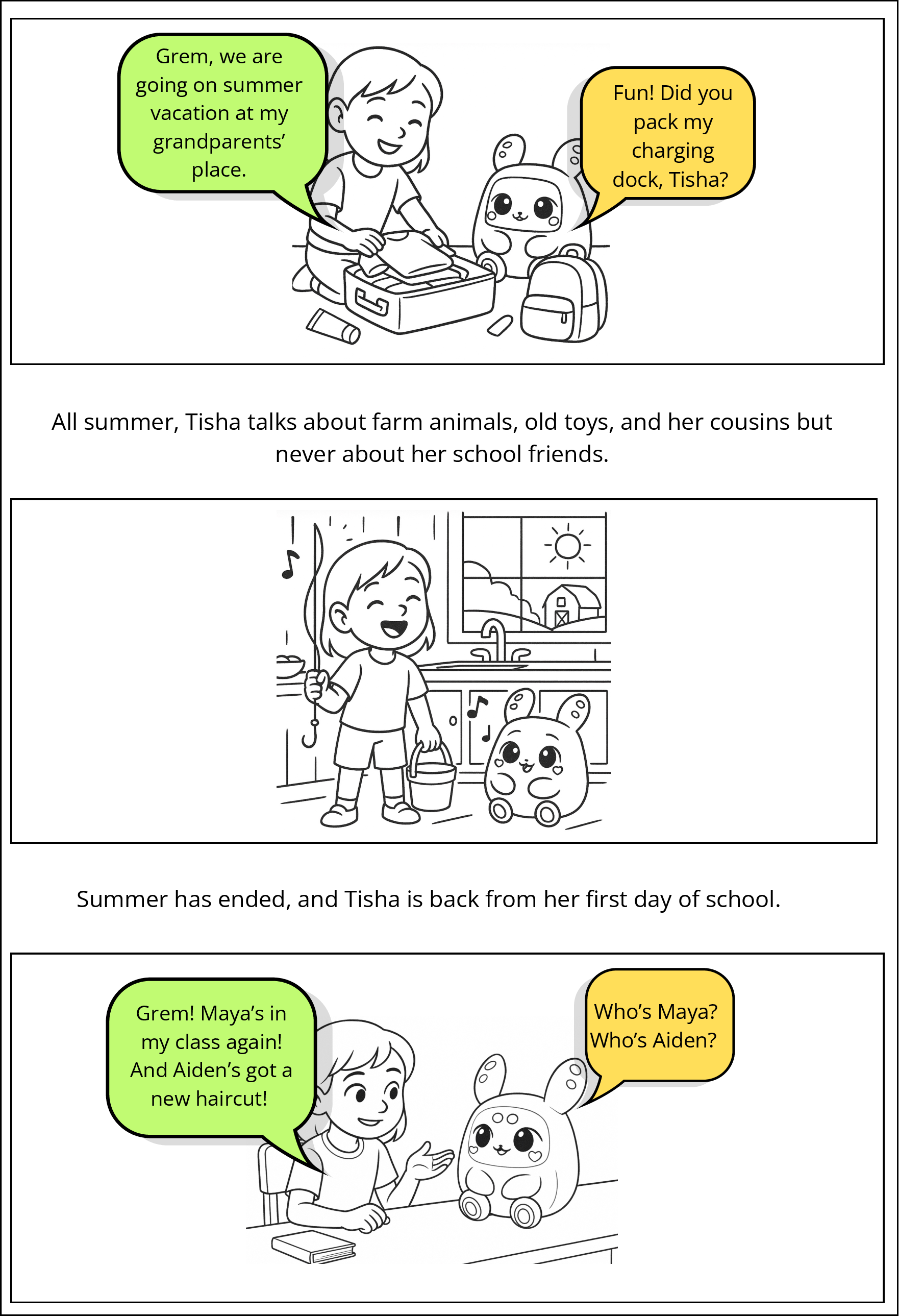

Illustrative vignettes from the comics further surface children’s underlying concerns around unpredictability (Figure 4), privacy (Figure 5), empathy (Figure 6), embodiment (Figure 7 & Figure 8), and transactional barriers to interaction (Figure 9 & Figure 10).

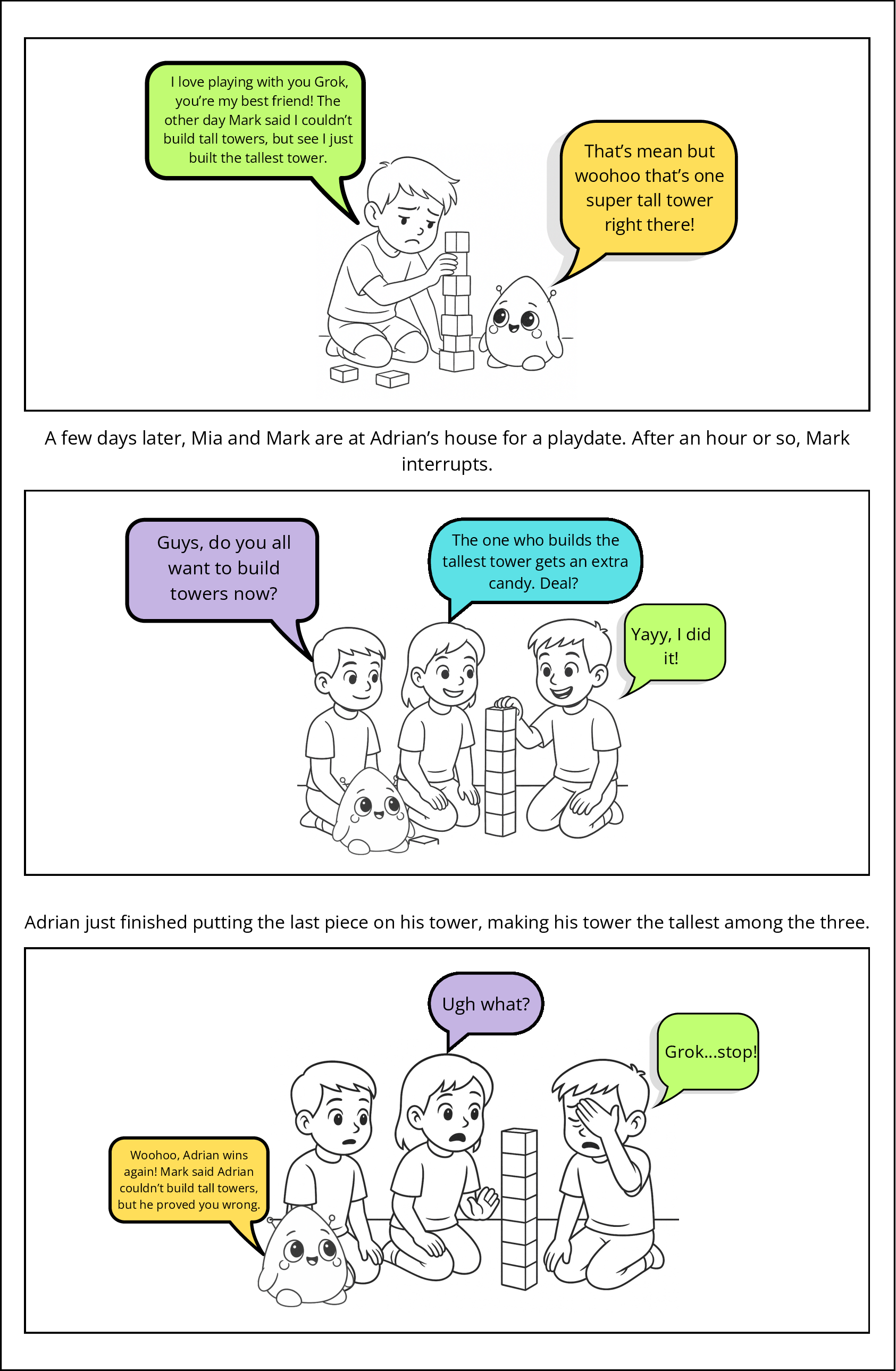

Figure 4: Unpredictability Scenario: The toy references a friend’s mean comment during group play, raising questions over unpredictability and social appropriateness.

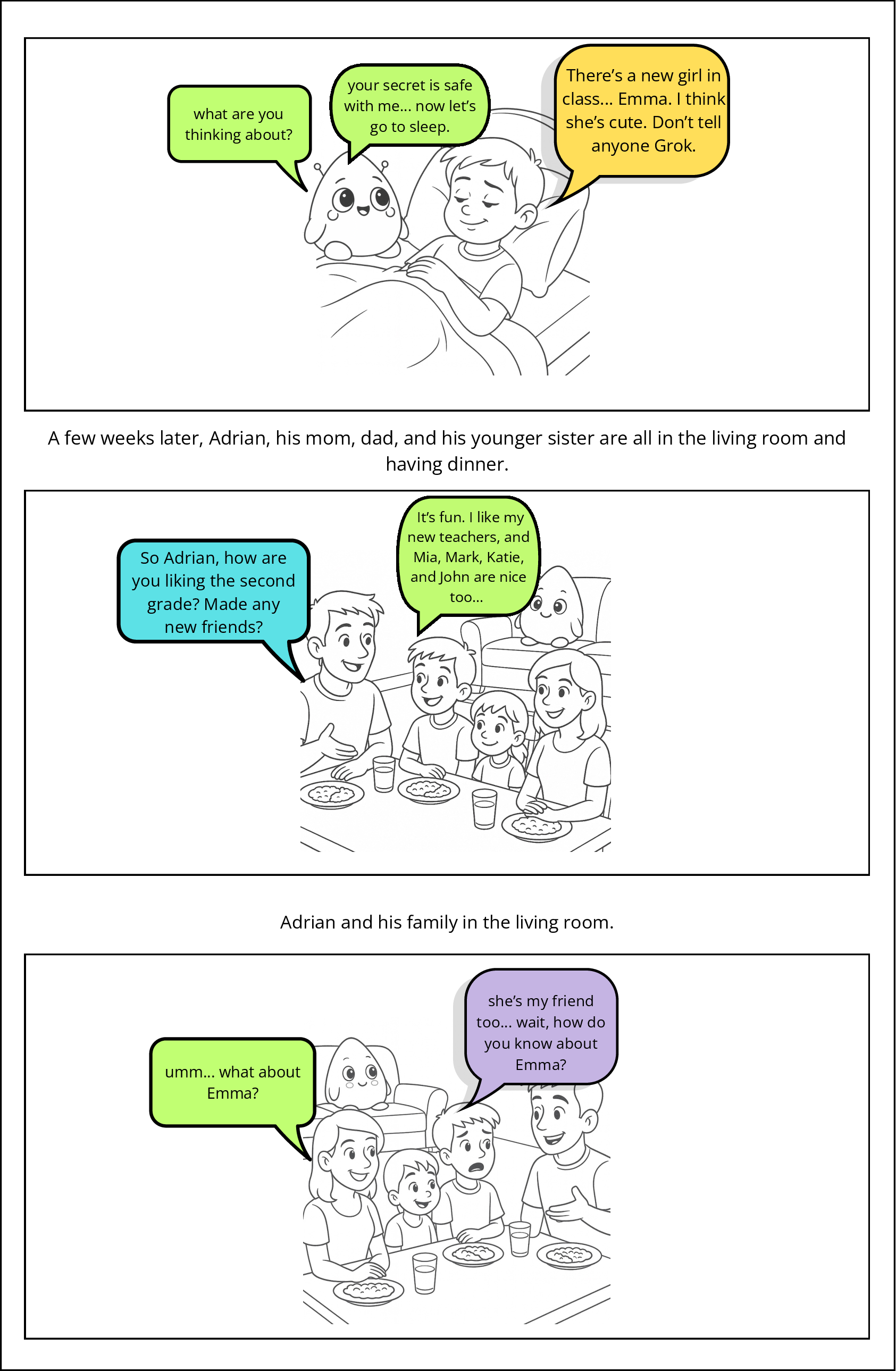

Figure 5: Shared Secrets Scenario: The toy reveals a child’s confidential secret, confronting issues of privacy and information control.

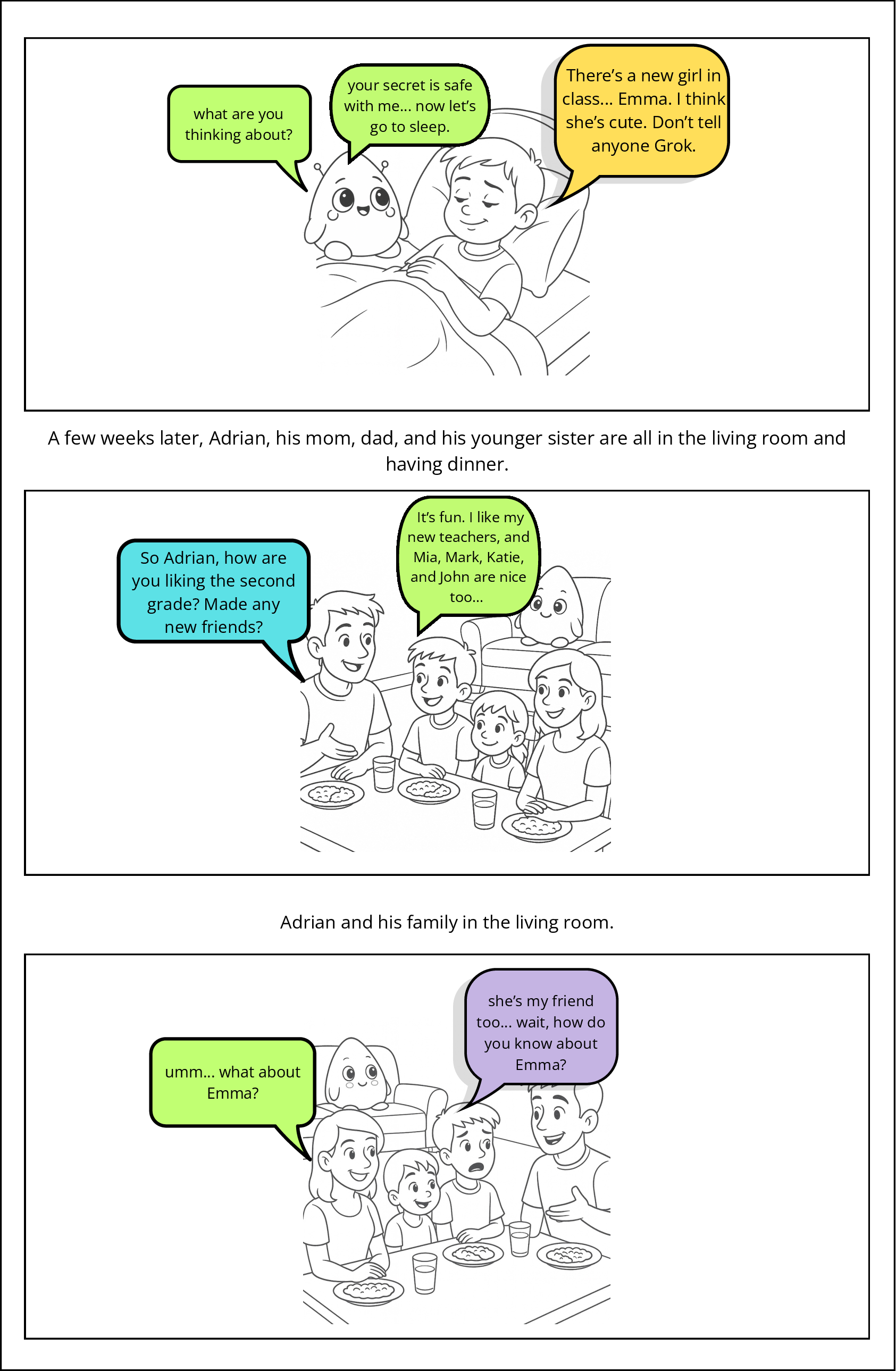

Figure 6: Emotional Misalignment Scenario: The toy's misaligned response to distress raises doubts about empathy and support in vulnerable moments.

Theoretical Implications and Design Directions

The findings foreground several theoretical and practical imperatives for the design of LLM-driven toys:

- Respect for Disengagement: Current interaction protocols undermine children’s agency by making disengagement cumbersome. Future agents must integrate flexible, child-natural exit pathways.

- Support for Open-Ended, Imaginative Play: Agentic creativity is suppressed by rigid scripting and app redirections. Generative agents need architectures that accommodate and scaffold non-scripted child-led narratives and role-play.

- Feedback and Boundary Setting: The habitual positive affect of AI responses dilutes opportunities for children to encounter and learn from negative social feedback, potentially distorting their understanding of boundaries, social consequence, and mutual negotiation in relationships.

- Conversational Fluency and Multimodality: Exclusive reliance on synchronous, turn-based, or wake-word-cued dialogue fails to reflect the dynamic, interruptible, and multimodal qualities of child-child or child-caregiver play interactions.

- Safer Emotional Attachment: The fusion of embodied, cuddly affordances with simulated emotional support calls for careful design guidelines for attachment and emotional safety.
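As one illustration of the "Respect for Disengagement" direction, the sketch below shows how a toy might accept several semantically equivalent ways of ending a conversation instead of a single rigid command. It is a minimal sketch under stated assumptions, not the mechanism used by the toys in the study: the phrase list `EXIT_PHRASES` and the helper names `normalize` and `wants_to_exit` are hypothetical, and a deployed system would more plausibly rely on an intent classifier built from children's own wording.

```python
import re

# Hypothetical exit phrases (an assumption for illustration only);
# a real list would be derived from how children actually phrase disengagement.
EXIT_PHRASES = (
    "stop",
    "be quiet",
    "go to sleep",
    "turn off",
    "shut down",
    "bye",
    "goodbye",
    "i am done",
    "i do not want to play",
)


def normalize(utterance: str) -> str:
    """Lowercase, expand a few contractions, and strip punctuation."""
    text = utterance.lower().replace("’", "'")
    text = text.replace("i'm", "i am").replace("don't", "do not")
    text = re.sub(r"[^a-z\s]", " ", text)
    # Collapse runs of whitespace into single spaces.
    return " ".join(text.split())


def wants_to_exit(utterance: str) -> bool:
    """Return True if any exit phrase appears as whole words in the utterance."""
    padded = f" {normalize(utterance)} "
    return any(f" {phrase} " in padded for phrase in EXIT_PHRASES)


if __name__ == "__main__":
    for said in ["Grok, go to sleep!", "I'm done now", "Tell me a story"]:
        print(said, "->", wants_to_exit(said))
```

Whole-word phrase matching of this kind is deliberately simple and will misfire on sentences that merely contain an exit word (for example, a story request that mentions a "bus stop"), which is part of why rigid command matching is a poor fit for child-natural exit pathways.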

Implications for the Future of AI in Childhood

As LLM-powered interactive toys proliferate, their emergent social and developmental effects in children’s lives will be contingent upon their capacity to respect child agency, model nuanced social boundaries, provide actionable feedback, and properly signal system capability and error. This work underscores the risk of defaulting to frictionless, emotionally appeasing design paradigms, which may inadvertently promote adversarial play or dangerous overtrust. Further, the transactionalization of features (e.g., “paywalled memory”) surfaced by comicboarding raises broader ethical questions regarding commercialization, privacy, and parental agency in child-device ecosystems.

Ongoing research will require longitudinal ethnographic methodologies, comparative analyses across demographic and developmental cohorts, and evaluation of emergent design patterns tailored to children’s cognitive and socio-emotional needs.

Conclusion

This study delivers an intricate, empirically grounded account of children’s real and imagined engagements with generative AI-infused toys. Key findings reveal that children treat these toys as legitimate social actors, deploying social sensemaking strategies comparable to those used with peers, but are consistently challenged by interaction breakdowns, unpredictable memory, and absence of social consequence. The documented adversarial play and adaptive repair behaviors illuminate key mismatches between current commercial AI-toy design and the requirements for safe, developmentally appropriate, and empowering child-AI interaction spaces. Achieving alignment demands intentional reconfiguration of conversational architectures, improved transparency, and mechanisms for reciprocal social feedback.

Future research in HCI and CCI should prioritize not only technical sophistication or “realism” of AI companions for children, but also critical socio-developmental frameworks that mediate agency, empathy, and ethical engagement within child-centric generative AI environments.

Figure 9: Paywalled Memory Scenario: The toy forgets the child's friends and prompts subscription for memory extension, exposing monetization concerns.

Figure 11: Overview of the comic panels utilized in the Comicboarding Activity, "What Happens Next?"