- The paper presents a hybrid neuro-symbolic pipeline that decouples visual abstraction from symbolic rule induction through a structured four-stage process.

- The method achieves 24.4% pass@2 for the neuro-symbolic system and 30.8% with an ensemble, significantly outperforming pure LLM baselines.

- The approach reduces computational costs and enhances interpretability, setting a robust blueprint for future compositional reasoning in AGI.

Compositional Neuro-Symbolic Reasoning for ARC-AGI-2

Motivation and Problem Setting

The paper addresses systematic abstraction and compositional visual reasoning on the ARC-AGI-2 benchmark, focusing on robust inductive generalization from a handful of input–output grid pairs. Large-scale neural models and symbolic program synthesis methods each show significant gaps on their own, in reliable combinatorial generalization or in perceptual grounding, so the work introduces a hybrid neuro-symbolic architecture. The approach targets tasks that cannot be solved by brute-force memorization, that require robust scene decomposition, and that demand non-trivial, multi-level composition of transformations from minimal demonstrations.

Pipeline Architecture

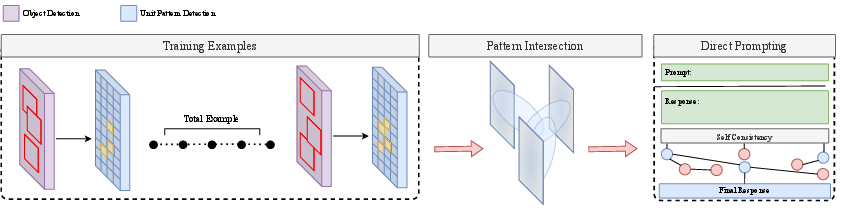

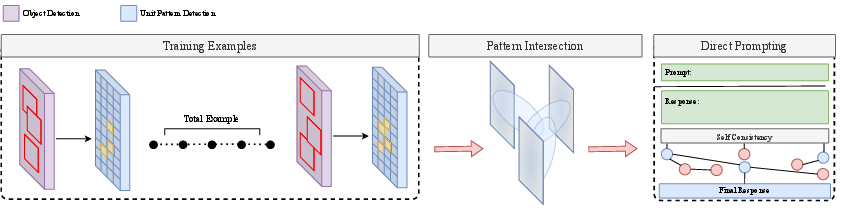

The proposed architecture strictly separates perception from rule induction, integrating neural-guided search with symbolic constraint enforcement. The four-stage pipeline is:

- Structured Object Abstraction: Transforms raw grids into symbolic scene graphs by extracting connected components and rich geometric/color attributes. Low-level statistics (bounding boxes, centroids, color histograms) are algorithmic, while ambiguous cases invoke LLM-assisted enrichment for attributes such as cavity identification or irregular shape classification.

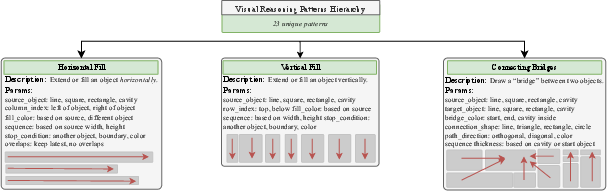

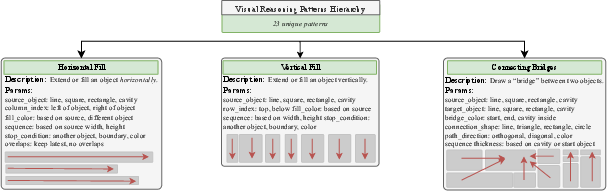

- Neural-Guided Transformation Proposal: Using a fixed DSL of 22 parameterized "Unit Patterns" (atomic visual reasoning operations; see Figure 1), a neural prior ranks candidate transformation programs. The neural network, typically a frontier LLM, operates exclusively over the symbolic domain—proposing sequences of DSL primitives rather than pixel-level edits.

- Cross-Example Consistency Filtering: Each candidate program is executed symbolically on every demonstration, and only programs that reproduce the outputs of all training pairs survive. Among the consistent candidates, the simplest (minimal-complexity) one is selected as the final hypothesis.

- Guided Test-Time Solution Generation: For the unseen input, a structured hint—comprising recurrent Unit Patterns and parameterizations—is passed to the solver (again LLM- or rule-based), guiding output synthesis. Aggregation by cell-wise majority vote (self-consistency) and (optionally) ensemble selection with candidates from an LLM-only system further increase robustness.

Figure 2: End-to-end neuro-symbolic reasoning pipeline illustrating object-centric abstraction, neural pattern proposal, symbolic consistency filtering, and test-time guided solution generation.
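The algorithmic part of the first stage (connected components plus low-level statistics) can be illustrated with a minimal sketch. This is not the paper's implementation: the `SceneObject` record, the `extract_objects` function, the choice of 4-connectivity, the same-color grouping rule, and the background color `0` are all assumptions made for illustration.

```python
from collections import deque
from dataclasses import dataclass

Grid = list[list[int]]


@dataclass
class SceneObject:
    """Hypothetical object record; field names are illustrative only."""
    color: int
    cells: list[tuple[int, int]]          # (row, col) pairs, sorted
    bbox: tuple[int, int, int, int]       # (min_row, min_col, max_row, max_col)
    centroid: tuple[float, float]         # (mean_row, mean_col)


def extract_objects(grid: Grid, background: int = 0) -> list[SceneObject]:
    """Group same-colored, 4-connected, non-background cells into objects."""
    rows, cols = len(grid), len(grid[0])
    seen = [[False] * cols for _ in range(rows)]
    objects: list[SceneObject] = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or grid[r][c] == background:
                continue
            color = grid[r][c]
            queue = deque([(r, c)])
            seen[r][c] = True
            cells = []
            # Breadth-first flood fill over cells of the same color.
            while queue:
                y, x = queue.popleft()
                cells.append((y, x))
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx] and grid[ny][nx] == color):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            ys = [y for y, _ in cells]
            xs = [x for _, x in cells]
            objects.append(SceneObject(
                color=color,
                cells=sorted(cells),
                bbox=(min(ys), min(xs), max(ys), max(xs)),
                centroid=(sum(ys) / len(cells), sum(xs) / len(cells)),
            ))
    return objects


example_grid = [
    [0, 1, 1, 0],
    [0, 1, 0, 0],
    [0, 0, 0, 2],
    [0, 0, 2, 2],
]
for obj in extract_objects(example_grid):
    print(obj.color, obj.bbox, obj.centroid)
```

Attributes that the summary describes as LLM-assisted, such as cavity identification or irregular shape classification, are deliberately left out of this sketch.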

The foundation for compositionality is the transformation library: a hierarchy of visual reasoning patterns encoded as a symbolic DSL, each parameterized by object-level properties. This hierarchy constrains the hypothesis space and facilitates systematic generalization without combinatorial explosion.

Figure 1: The transformation pattern hierarchy enables compositional reasoning via modular, parameterized operations, supporting complex visual manipulations from atomic units.
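To make the interaction between a small DSL and cross-example consistency filtering concrete, the following sketch composes programs from a few toy primitives and keeps only those that reproduce every training pair. The primitives (`flip_horizontal`, `flip_vertical`, `transpose`, `recolor`) are hypothetical stand-ins and are not the paper's 22 Unit Patterns; the hand-written candidate list stands in for the neural proposal step; and using program length as the complexity measure is an assumption.

```python
from typing import Callable, Optional

Grid = list[list[int]]
Primitive = tuple[str, Callable[[Grid], Grid]]   # (name, operation)
Program = list[Primitive]                        # applied left to right


def flip_horizontal(g: Grid) -> Grid:
    return [row[::-1] for row in g]


def flip_vertical(g: Grid) -> Grid:
    return [row[:] for row in g[::-1]]


def transpose(g: Grid) -> Grid:
    return [list(col) for col in zip(*g)]


def recolor(src: int, dst: int) -> Callable[[Grid], Grid]:
    """Parameterized primitive: replace color `src` with `dst`."""
    def apply(g: Grid) -> Grid:
        return [[dst if v == src else v for v in row] for row in g]
    return apply


def run(program: Program, grid: Grid) -> Grid:
    for _, op in program:
        grid = op(grid)
    return grid


def select_program(candidates: list[Program],
                   train_pairs: list[tuple[Grid, Grid]]) -> Optional[Program]:
    """Keep programs consistent with ALL pairs, then pick the shortest."""
    consistent = [p for p in candidates
                  if all(run(p, x) == y for x, y in train_pairs)]
    return min(consistent, key=len) if consistent else None


# Toy task: mirror left-right, then recolor 1 -> 2.
train_pairs = [
    ([[1, 0], [0, 0]], [[0, 2], [0, 0]]),
    ([[0, 1, 1]], [[2, 2, 0]]),
]
FH: Primitive = ("flip_horizontal", flip_horizontal)
FV: Primitive = ("flip_vertical", flip_vertical)
TR: Primitive = ("transpose", transpose)
RC: Primitive = ("recolor_1_to_2", recolor(1, 2))

candidates = [
    [FH],                 # inconsistent: colors do not match
    [TR, RC],             # inconsistent: wrong geometry
    [FV, FV, FH, RC],     # consistent but needlessly long
    [FH, RC],             # consistent and minimal
]
best = select_program(candidates, train_pairs)
print([name for name, _ in best] if best else None)
```

Because filtering is exact symbolic execution, a candidate that fits only some demonstrations is discarded outright rather than down-weighted, which is the strictness the summary describes.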

Empirical Results

The method yields strong empirical performance on ARC-AGI-2:

- Compositional Reasoner (Neuro-symbolic only): 24.4% pass@2 on the public evaluation set.

- Meta-Classifier (Ensemble with ARC Lang Solver): 30.8%—the highest reported at submission.

Both results exceed the LLM-only baselines (18.3% for GPT-5-Pro, 16.0% for Grok-4, all others below 10%), indicating that symbolic constraints deliver measurable systematic generalization that scaling alone has not achieved. Symbolic hints are the largest single source of improvement: removing the structured hints in an ablation drops accuracy by 6.9 points, more than removing sampling-based self-consistency (3.9 points), which supports the view that object-centric restriction of the program space is key.

Notably, the ensemble's gains arise because the two systems solve different subsets of tasks: the neuro-symbolic system excels on tasks requiring explicit cavity reasoning, compositional fills, and structural transformations, while the LLM system (ARC Lang Solver) does better on semantically driven groupings. The meta-classifier's improvement derives from this complementarity, not from increased generative capacity.

Theoretical and Practical Implications

The results empirically reinforce that explicit separation of visual abstraction and symbolic program induction induces a strong inductive bias, improving generalization relative to end-to-end neural architectures that entangle perception and reasoning. The approach substantially reduces the hypothesis entropy passed to the downstream rule-synthesizing or grid-generating LLM, mitigating both error propagation and sampling inefficiency. Symbolic abstraction also yields interpretability and deterministic reproducibility for many tasks.

This architecture also significantly reduces computational costs typically associated with brute-force neuro-symbolic search over program space. Symbolic preprocessing adds only a modest fixed cost, while self-consistency remains the dominant inference bottleneck due to its multiplicative nature.
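The cell-wise majority vote behind self-consistency, and the reason its cost grows with the number of samples, can be seen in a short sketch. The function name `majority_vote`, the rule of discarding samples whose shape differs from the most common shape, and the first-seen tie-breaking are assumptions for illustration; the summary does not specify how the paper handles these cases.

```python
from collections import Counter

Grid = list[list[int]]


def majority_vote(samples: list[Grid]) -> Grid:
    """Aggregate sampled output grids by voting independently per cell.

    Each entry in `samples` costs one full solver call, so inference cost
    scales linearly with len(samples).
    """
    # Assumption: keep only samples that share the most common grid shape.
    shapes = Counter((len(g), len(g[0])) for g in samples)
    (rows, cols), _ = shapes.most_common(1)[0]
    kept = [g for g in samples if (len(g), len(g[0])) == (rows, cols)]
    # Counter.most_common breaks ties by first-seen order.
    return [
        [Counter(g[r][c] for g in kept).most_common(1)[0][0]
         for c in range(cols)]
        for r in range(rows)
    ]


samples = [
    [[1, 2], [3, 4]],
    [[1, 2], [3, 0]],   # disagrees in the bottom-right cell
    [[1, 5], [3, 4]],   # disagrees in the top-right cell
    [[1, 2, 3]],        # wrong shape, dropped before voting
]
print(majority_vote(samples))
```

Note that a cell-wise vote can produce a grid that no single sample proposed, which is one reason the summary lists more principled program verification as a future direction.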

Limitations include the expressivity ceiling of the 22-pattern DSL (certain compositional or latent-grouping tasks remain unsolved), reliance on prompt-engineered LLM calls that must be calibrated for ambiguous perception cases, and residual stochasticity in solution generation. These suggest directions for future research: expanding and refining the atomic pattern set, integrating more global constraint solvers, and replacing sampling-based aggregation with more principled program verification and search.

Broader Impact and Future Directions

The pipeline's separation of perceptual abstraction, neural-guided DSL proposal, and symbolic consistency filtering offers an effective strategy for compositional visual reasoning beyond the ARC benchmark. The architectural principles (object-centric representation, symbolic program induction over object graphs, cross-example consensus filtering) directly inform future research in multi-modal and systematic abstraction for advanced AGI architectures and for neuro-symbolic program synthesis in other domains (language, planning, embodied cognition). Importantly, scaling model size or context window alone is unlikely to yield similar generalization capabilities without such structural constraints.

Future developments may focus on:

- Extending the DSL to capture deeper forms of relational abstraction

- Incorporating amortized or refinement-based program search directly into symbolic filtering

- Formalizing perception modules to further reduce reliance on prompt engineering

- Efficiently eliminating the need for a large number (N_c) of self-consistency samples

Conclusion

Explicit separation of perception, neural hypothesis generation, and symbolic program induction enables architectures to outperform pure LLMs on ARC-AGI-2 by a wide margin, highlighting the limitations of end-to-end neural modeling for systematic compositional reasoning. The observed gains are primarily due to structural bias, and to a lesser extent stochastic stabilization and ensemble diversity, providing a concrete step toward systematic abstraction without task-specific tuning or expensive sampling-based scaling. These principles offer a robust blueprint for advancing neuro-symbolic reasoning in general AI.