- The paper introduces MetaNav, which employs metacognitive reasoning to dynamically monitor and correct navigation strategies, reducing local oscillation and redundant revisiting.

- It integrates spatial memory, history-aware heuristic planning, and LLM-based reflective correction to balance exploration quality against computational cost.

- Empirical results show improved success rate (SR) and Success weighted by Path Length (SPL) across benchmarks, with 20.7% fewer VLM decision calls per episode than 3D-Mem.

Introduction

"Stop Wandering: Efficient Vision-Language Navigation via Metacognitive Reasoning" (2604.02318) addresses significant inefficiencies in training-free Vision-Language Navigation (VLN) agents, particularly those arising from local oscillation, redundant revisiting, and high-latency queries to Vision-Language Models (VLMs). While the integration of foundation models (VLMs, LLMs) enhances multi-modal perception and instruction following, prior methods are hampered by myopic exploration policies and passive memory systems. The paper proposes MetaNav, a navigation framework built to endow agents with metacognitive reasoning: explicit monitoring, diagnosis, and adaptive correction of navigation strategies in 3D environments. This essay presents a technical analysis of MetaNav's architecture, empirical results, and implications for autonomous embodied agents.

Architectural Overview

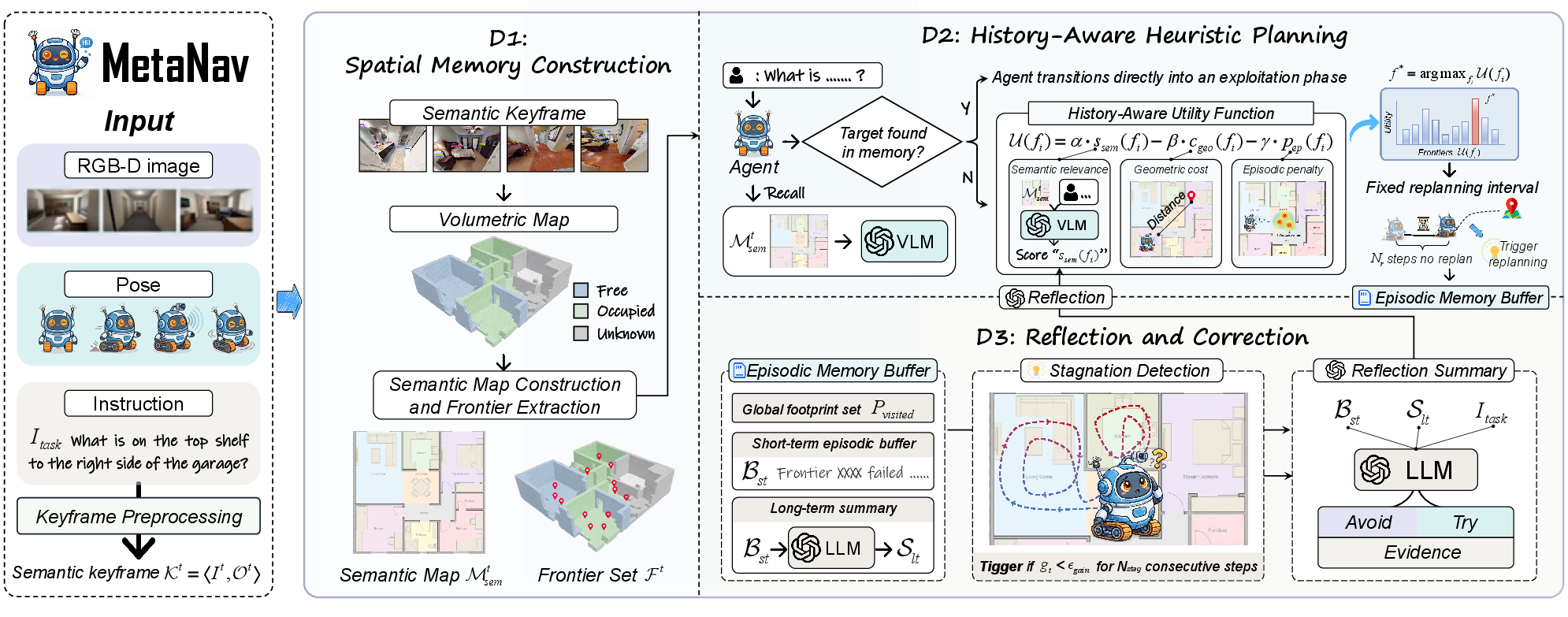

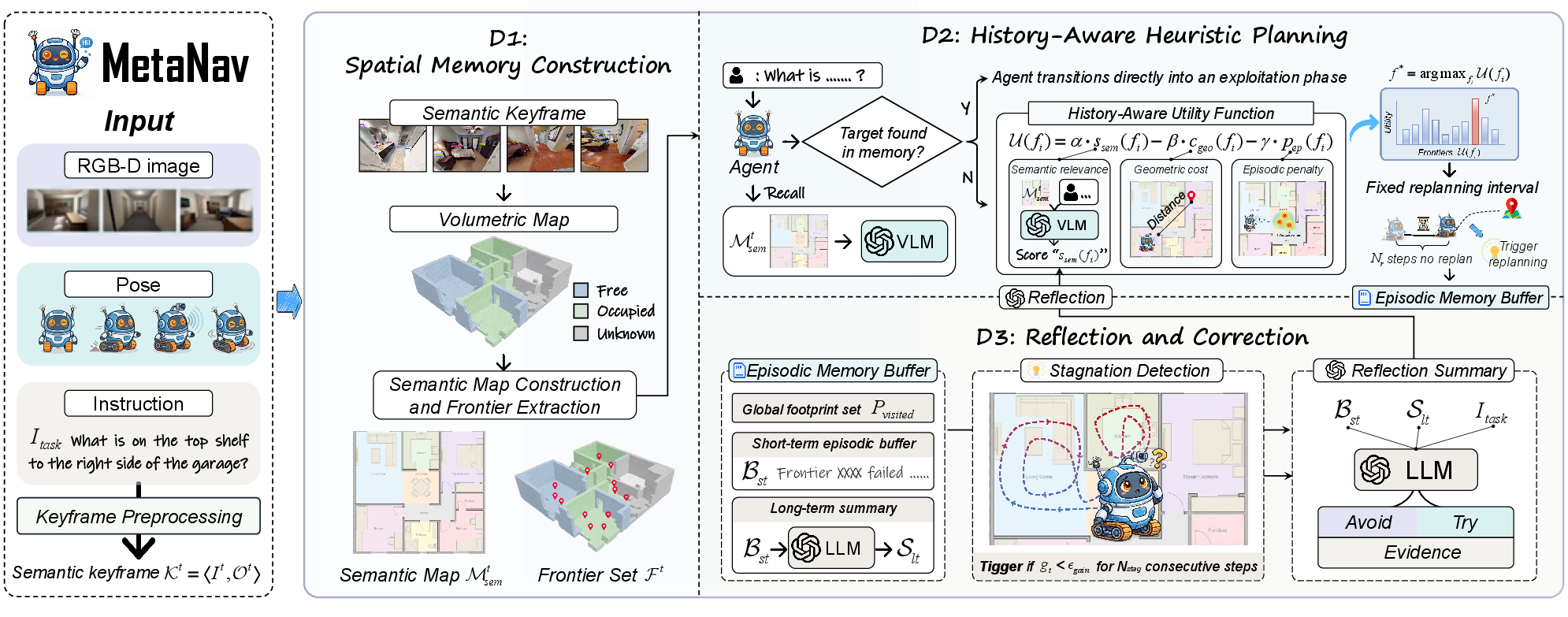

MetaNav integrates three essential mechanisms: Spatial Memory Construction, History-Aware Heuristic Planning, and Reflection and Correction, forming a closed metacognitive perception-planning-reflection loop.

Figure 1: System architecture of MetaNav, highlighting the flow from sensory input to spatial memory, history-aware planning, and LLM-based reflective correction.

Spatial Memory Construction fuses egocentric RGB-D observations using TSDF volumetric integration into a persistent 3D semantic map and tracks frontiers. Objects are detected and localized via a frozen VLM and open-vocabulary segmenter (e.g., SAM). The map supports candidate frontier extraction as boundaries between known-free and unknown volumes, facilitating global situational awareness.
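The frontier definition above (boundaries between known-free and unknown space) can be illustrated with a minimal sketch. It assumes a 2D occupancy grid as a simplification of the paper's 3D TSDF map; the cell labels and the function name `find_frontiers` are hypothetical and are not taken from MetaNav.

```python
from typing import List, Tuple

# Hypothetical cell labels for a simplified 2D map (the paper uses a 3D TSDF volume).
UNKNOWN, FREE, OCCUPIED = -1, 0, 1


def find_frontiers(grid: List[List[int]]) -> List[Tuple[int, int]]:
    """Return free cells that have at least one 4-connected unknown neighbour."""
    rows, cols = len(grid), len(grid[0])
    frontiers = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != FREE:
                continue
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == UNKNOWN:
                    frontiers.append((r, c))
                    break  # one unknown neighbour is enough
    return frontiers


grid = [
    [FREE, FREE, UNKNOWN],
    [FREE, OCCUPIED, UNKNOWN],
    [FREE, FREE, FREE],
]
print(find_frontiers(grid))  # [(0, 1), (2, 2)]
```

In this toy grid only the two free cells touching the unknown column qualify as frontiers; a real system would typically cluster such cells into candidate waypoints.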

History-Aware Heuristic Planning operates by evaluating frontiers not only by semantic relevance but also by geometric cost and a recency-weighted episodic penalty that suppresses revisiting areas traversed recently. VLM queries for semantic frontier scoring are batched and invoked at fixed replanning intervals; in between, the agent executes directly toward selected waypoints, reducing inference overhead.
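One way to picture the three-term frontier score is the sketch below. The linear combination, the exponential recency decay, and every weight, radius, and name are assumptions made for illustration; the summary does not give the paper's exact scoring function.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]
Visit = Tuple[Point, int]  # (position, timestep of the visit)


def episodic_penalty(frontier: Point, visits: List[Visit], now: int,
                     radius: float = 2.0, half_life: float = 20.0) -> float:
    """Sum recency-weighted penalties for past visits near the frontier.

    A visit made `half_life` steps ago counts half as much as one made now.
    """
    penalty = 0.0
    for pos, t in visits:
        if math.dist(frontier, pos) <= radius:
            penalty += 0.5 ** ((now - t) / half_life)
    return penalty


def score_frontier(frontier: Point, semantic: float, agent_pos: Point,
                   visits: List[Visit], now: int,
                   w_sem: float = 1.0, w_geo: float = 0.1,
                   w_hist: float = 0.5) -> float:
    """Assumed score: semantic relevance minus travel cost minus revisit penalty."""
    geometric_cost = math.dist(agent_pos, frontier)
    history = episodic_penalty(frontier, visits, now)
    return w_sem * semantic - w_geo * geometric_cost - w_hist * history


agent = (0.0, 0.0)
# Two frontiers with equal semantic relevance (as if returned by a VLM query).
candidates = {(1.0, 0.0): 0.6, (0.0, 3.0): 0.6}
visits = [((1.0, 0.5), 95)]  # the agent passed near the first frontier 5 steps ago

best = max(candidates,
           key=lambda f: score_frontier(f, candidates[f], agent, visits, now=100))
print(best)  # (0.0, 3.0)
```

With an empty visit history the nearer frontier wins on geometric cost alone; the recent visit is what tips the choice toward the unexplored one.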

Reflection and Correction introduces a structured episodic buffer and stagnation detection logic based on information gain over unexplored volume. Upon detecting deadlocks, an LLM is invoked with recent episodic traces; it generates explicit directives (Avoid/Try/Evidence) that dynamically modulate the scoring function for future frontier selection.
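The stagnation trigger and the effect of a directive on scoring can be sketched as follows. The windowed-sum test, the thresholds, the `Directive` fields, and the additive bonus and malus are all hypothetical; the LLM call itself is omitted, and the directive is constructed by hand in place of a model response.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, List


class StagnationMonitor:
    """Flags stagnation when recent exploration gain stays below a threshold."""

    def __init__(self, window: int = 5, min_gain: float = 1.0) -> None:
        self.gains: Deque[float] = deque(maxlen=window)
        self.min_gain = min_gain

    def update(self, explored_volume_gain: float) -> bool:
        """Record the newly observed volume for one step; return True if stalled."""
        self.gains.append(explored_volume_gain)
        window_full = len(self.gains) == self.gains.maxlen
        return window_full and sum(self.gains) < self.min_gain


@dataclass
class Directive:
    """Hypothetical container for an Avoid/Try/Evidence response."""
    avoid: List[str] = field(default_factory=list)
    try_: List[str] = field(default_factory=list)
    evidence: str = ""


def adjust_score(base_score: float, frontier_label: str, directive: Directive,
                 bonus: float = 0.3, malus: float = 0.3) -> float:
    """Shift a frontier's score according to the directive (assumed additive form)."""
    if frontier_label in directive.avoid:
        base_score -= malus
    if frontier_label in directive.try_:
        base_score += bonus
    return base_score


monitor = StagnationMonitor(window=3, min_gain=0.5)
flags = [monitor.update(g) for g in (2.0, 0.1, 0.1, 0.1)]
print(flags)  # [False, False, False, True]

# Stand-in for what an LLM might return once the trigger fires.
directive = Directive(avoid=["hallway"], try_=["kitchen door"],
                      evidence="hallway revisited without new observations")
print(adjust_score(0.5, "hallway", directive))
print(adjust_score(0.5, "kitchen door", directive))
```

Keeping the trigger separate from the scoring adjustment mirrors the description above: reflection is invoked only on stagnation, while its output persists as a modifier on later frontier selection.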

Analysis of Navigation Behaviors

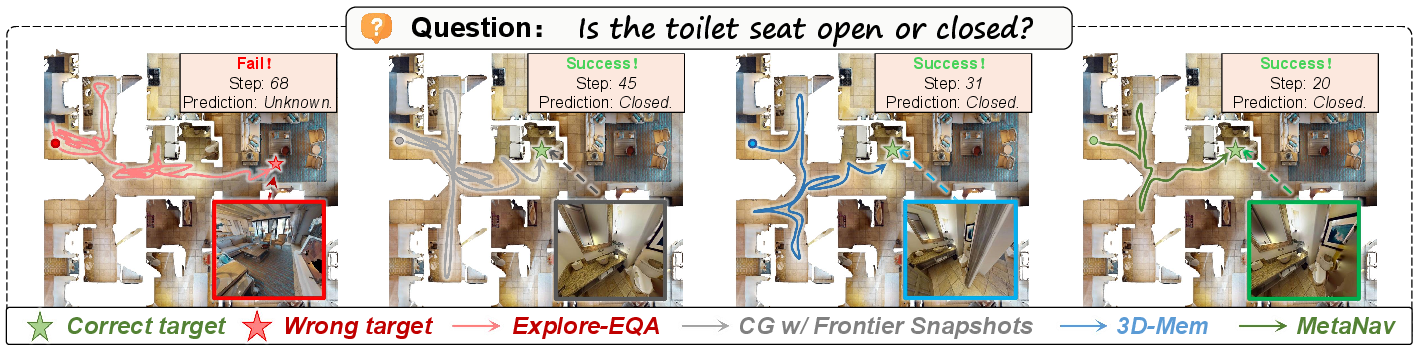

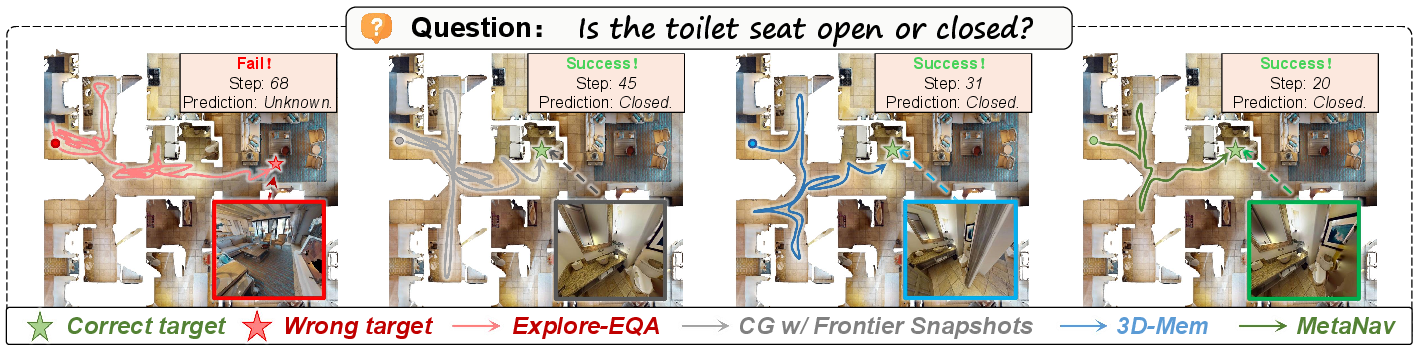

The core claim is that metacognitive reflection, meaning the explicit invocation of LLM-based reasoning at stagnation points, prevents pathological behaviors characteristic of prior methods, such as oscillation and target misidentification.

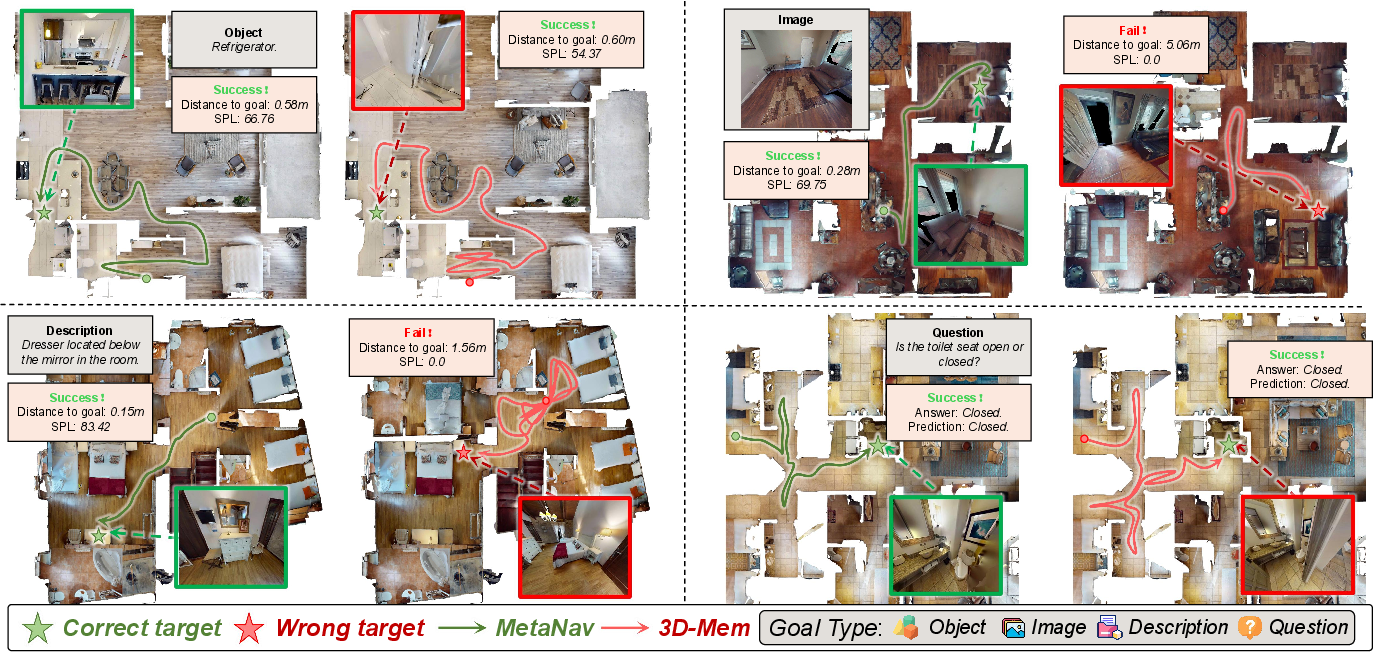

Figure 2: Example navigation trajectories. MetaNav's reflective reasoning enables escape from local minima, while baselines demonstrate oscillatory or distracted behavior.

Qualitative trajectory comparisons demonstrate that MetaNav consistently avoids repetitive cycles and dead ends, due to: (i) the suppression of spatially/temporally proximate dead-end revisits via the episodic penalty, and (ii) LLM-driven adaptive rule injection whenever local progress stalls.

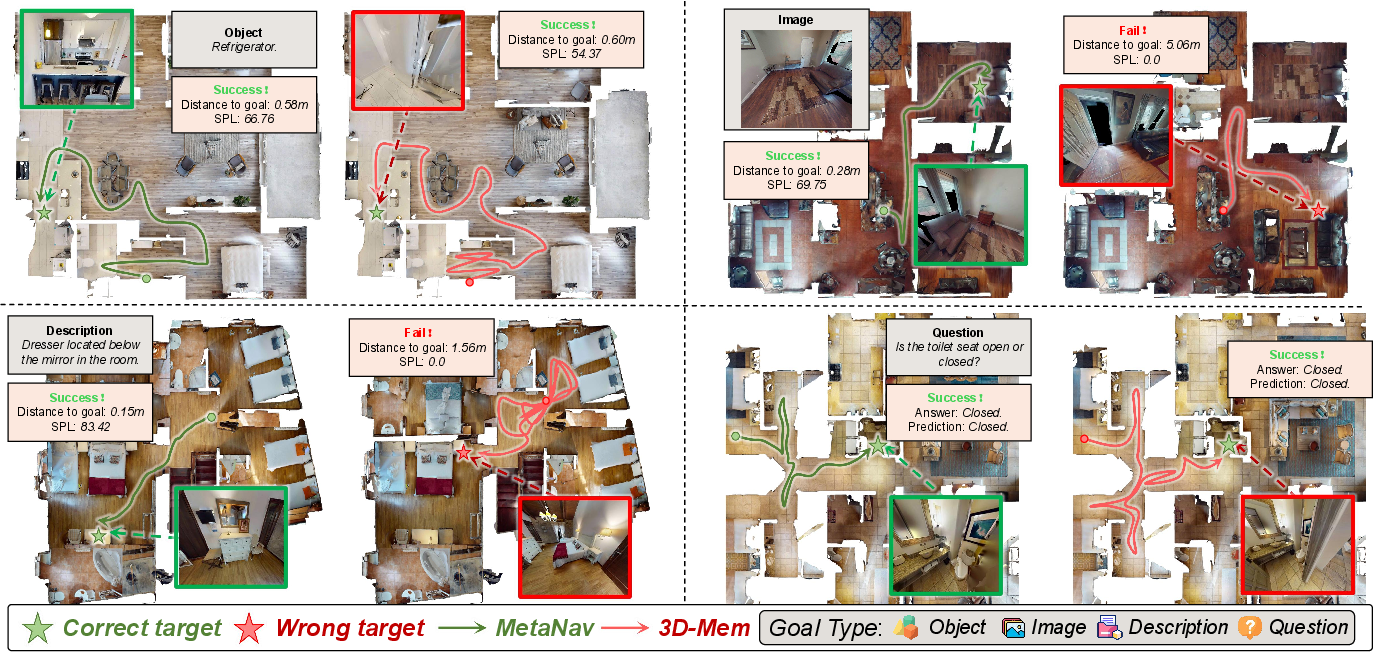

Figure 3: Comparison of navigation paths for various goal modalities, highlighting how MetaNav (green) follows efficient, non-redundant paths as opposed to baseline oscillatory patterns (red).

Empirical Results

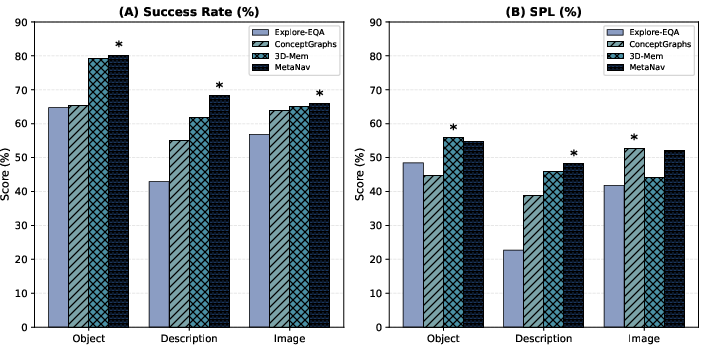

MetaNav exhibits strong quantitative improvements across diverse benchmarks:

Efficiency: The system reduces VLM decision calls per episode by 20.7% relative to 3D-Mem, which is attributed to fixed-interval replanning and to triggering reflection only upon stagnation. Ablation analysis indicates that omitting metacognitive components leads to substantial drops in both SR and SPL.
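For readers unfamiliar with the two metrics, the sketch below computes SR and SPL using the standard definition of Success weighted by Path Length, in which each successful episode contributes the ratio of the shortest-path length to the longer of the shortest and actual path lengths. The summary does not restate this definition, so the formula is supplied here as background; the `Episode` type and the sample values are hypothetical and are not results from the paper.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Episode:
    success: bool
    shortest_path: float  # geodesic distance from start to goal
    agent_path: float     # length of the path the agent actually took


def success_rate(episodes: List[Episode]) -> float:
    """Fraction of episodes that ended in success."""
    return sum(e.success for e in episodes) / len(episodes)


def spl(episodes: List[Episode]) -> float:
    """Success weighted by Path Length, averaged over all episodes."""
    total = 0.0
    for e in episodes:
        denom = max(e.agent_path, e.shortest_path)
        if e.success and denom > 0:
            total += e.shortest_path / denom
    return total / len(episodes)


episodes = [
    Episode(success=True, shortest_path=10.0, agent_path=10.0),   # optimal path
    Episode(success=True, shortest_path=10.0, agent_path=20.0),   # success, but wandering
    Episode(success=False, shortest_path=10.0, agent_path=5.0),   # failure
]
print(round(success_rate(episodes), 3))  # 0.667
print(spl(episodes))                     # 0.5
```

The second episode shows why oscillation and revisiting hurt SPL even when the goal is reached: the success still counts toward SR, but only half of it counts toward SPL.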

Ablation and Sensitivity

Ablation studies reveal several critical points:

- Removing Reflection and Correction results in an SR drop of 5.1 percentage points (from 71.4% to 66.3%).

- Disabling the Episodic Penalty or reverting to pure greedy VLM-based frontier selection degrades efficiency and increases oscillation.

- Spatial memory is essential; its removal yields the largest reported reduction, an SR drop of 12.8 percentage points (from 71.4% to 58.6%).

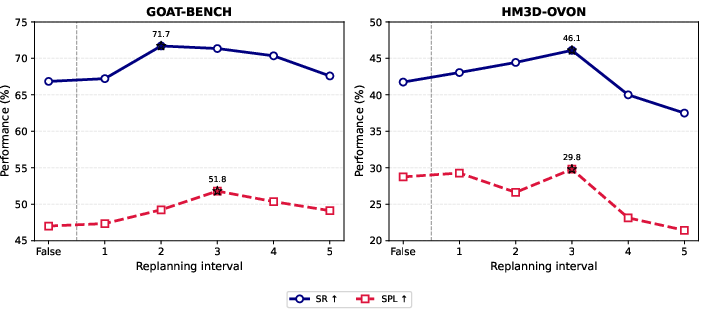

Further, proper calibration of the replanning interval and short-term buffer length is crucial for balancing reactivity and latency.
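The latency side of this trade-off can be made concrete with a toy call-counting loop. It assumes one batched VLM query at every replanning step and one LLM call per stagnation event; the function name, step counts, and stagnation steps are illustrative and are not measurements from the paper.

```python
from typing import FrozenSet, Tuple


def count_model_calls(num_steps: int, replan_interval: int,
                      stagnation_steps: FrozenSet[int] = frozenset()) -> Tuple[int, int]:
    """Count VLM and LLM invocations over one simulated episode.

    The VLM is queried every `replan_interval` steps; the LLM is queried only
    at the steps listed in `stagnation_steps`.
    """
    vlm_calls = 0
    llm_calls = 0
    for step in range(num_steps):
        if step % replan_interval == 0:
            vlm_calls += 1  # batched semantic frontier scoring
        if step in stagnation_steps:
            llm_calls += 1  # reflection triggered by stagnation
    return vlm_calls, llm_calls


print(count_model_calls(100, replan_interval=1))                   # (100, 0)
print(count_model_calls(100, replan_interval=5,
                        stagnation_steps=frozenset({40})))         # (20, 1)
```

A longer interval cuts query cost roughly in proportion, but the agent then commits to each waypoint for more steps before new observations can change the plan, which is the reactivity cost referred to above.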

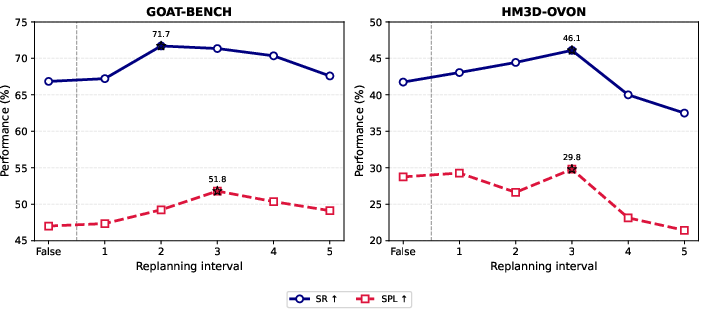

Figure 5: The relationship between replanning interval and navigation performance metrics.

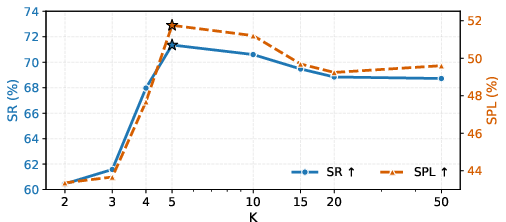

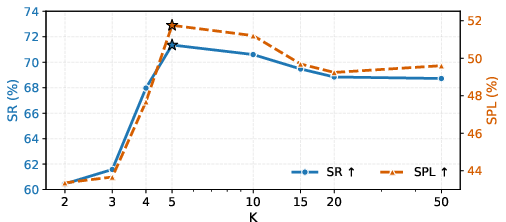

Figure 6: Impact of episodic memory buffer length on success rate and SPL; an appropriately sized context retains recent evidence while avoiding memory bloat.

Implications and Theoretical Considerations

The formal integration of metacognitive mechanisms sets MetaNav apart from classical and contemporary frontier-based VLN agents. By explicitly differentiating between instantaneous sensory guidance and experience-based adaptation, MetaNav represents a shift towards self-correcting embodied systems capable of post hoc strategy revision. The approach demonstrates that LLMs, when provided with structured episodic context and explicit prompts, can effectively generate corrective heuristics that outperform static policy optimization or passive memory utilization.

Practically, this demonstrates that high-latency, high-capacity models (e.g., GPT-4o) can be efficiently amortized over long action sequences using hybrid planning architectures. More broadly, it suggests that self-monitoring and reflection, hallmarks of human cognition, can be operationalized for physical agents navigating highly ambiguous and previously unseen environments.

Future Directions

Theoretical extensions include hierarchical abstraction over episodic memory, active learning for heuristic rule generation, and scaling to multi-agent collaborative tasks. Additionally, integrating learned predictive models within the metacognitive loop may further improve reasoning about unobserved occluded structure and enable even more robust long-horizon planning. Research into quantifying the reliability and interpretability of LLM-generated corrective rules will also accelerate practical deployment.

Conclusion

MetaNav (2604.02318) proposes a new paradigm for VLN: one that combines foundation model perception, structured memory, and explicit metacognitive reasoning. The framework yields state-of-the-art navigation efficiency among training-free systems, reducing oscillatory and redundant behaviors through continuous progress monitoring and adaptive corrective reasoning. This architectural advancement paves the way for robust, autonomous, and self-correcting embodied agents capable of operating in real-world environments under open-set instructions.