- The paper introduces Phyelds, a Python-native framework that embeds field calculus, easing aggregate programming for distributed systems.

- It leverages a modular architecture and dynamic context management to support asynchronous sensor networks, IoT, and robotic swarms.

- Integration with machine learning and simulation tools demonstrates its potential in decentralized federated and reinforcement learning applications.

Phyelds: Enabling Pythonic Aggregate Computing

Context and Paradigm: Aggregate Programming and the Field Calculus

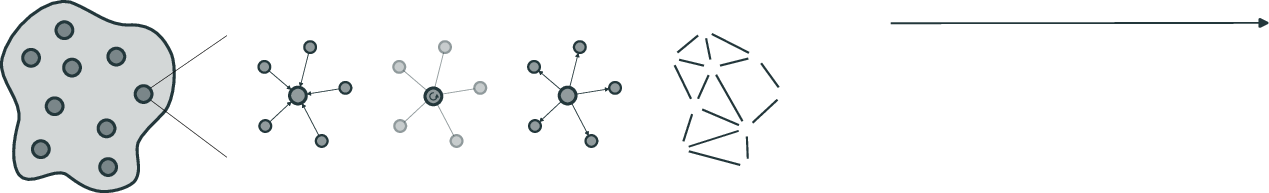

Aggregate programming is a macroprogramming paradigm for engineering large-scale distributed systems (such as sensor networks, IoT infrastructures, and robot swarms) in which the program specifies collective behavior rather than per-device logic. Computations are expressed as operations over computational fields: mappings from (device, time) pairs to values, computed locally by asynchronous, possibly unreliable nodes. The formal foundation of this approach is the field calculus, a minimal functional language that captures the core coordination primitives (state maintenance, neighbor interaction, conditional execution, aggregation) together with an alignment mechanism that ensures devices exchange data only between matching parts of the program.

The system model in field-based aggregate computing organizes device operation into asynchronous rounds comprising three phases: sense (ingest environmental/neighbor data), compute (execute aggregate program), and interact (propagate results). Aligning devices to program structure at runtime is essential for correct information exchange and ensures robust global guarantees such as self-stabilization.

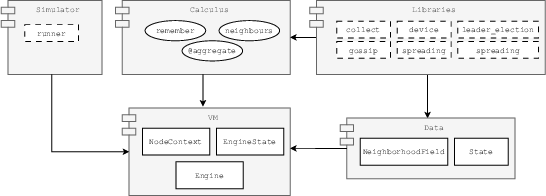

Figure 1: Graphical representation of the aggregate computing system model.
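The sense, compute, interact cycle described above can be sketched in plain Python. The example below is an illustrative toy and not Phyelds code: the names `gradient_round` and `simulate`, the adjacency-dictionary encoding, and the explicit `schedule` list are all assumptions made for this sketch. The aggregate program is a classic distance-to-source gradient, and devices fire one at a time in an arbitrary order to mimic asynchronous rounds.

```python
from typing import Dict, List, Set

INF = float("inf")


def gradient_round(is_source: bool,
                   neighbor_values: Dict[int, float],
                   neighbor_distances: Dict[int, float]) -> float:
    """Compute phase: one device's new distance-to-source estimate."""
    if is_source:
        return 0.0
    candidates = [neighbor_values[n] + neighbor_distances[n]
                  for n in neighbor_values]
    return min(candidates, default=INF)


def simulate(adjacency: Dict[int, Dict[int, float]],
             sources: Set[int],
             schedule: List[int]) -> Dict[int, float]:
    """Fire devices one at a time, in the order given by `schedule`."""
    exported = {device: INF for device in adjacency}  # last value each device sent
    for device in schedule:
        # sense: read the latest values exported by neighbors
        sensed = {n: exported[n] for n in adjacency[device]}
        # compute: run the aggregate program locally
        result = gradient_round(device in sources, sensed, adjacency[device])
        # interact: publish the result so neighbors can sense it later
        exported[device] = result
    return exported


# A line of four devices with unit link lengths; device 0 is the source.
line = {0: {1: 1.0}, 1: {0: 1.0, 2: 1.0}, 2: {1: 1.0, 3: 1.0}, 3: {2: 1.0}}
field = simulate(line, sources={0}, schedule=[3, 2, 1, 0] * 4)
```

Even with a firing order that works against the direction of propagation, the field settles on the true distances after a few passes, which is the kind of self-stabilizing behavior the system model is meant to guarantee.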

Summary of the Phyelds Framework

Phyelds is a lightweight, idiomatic Python implementation of the field calculus and aggregate programming abstractions. Previous frameworks (Protelis, ScaFi, FCPP) are either functional in style or tied to particular platforms and statically typed languages (the JVM, Scala, C++), which creates adoption barriers for data science practitioners. Phyelds closes this gap by embedding aggregate computing into the Python ecosystem through an imperative, object-oriented API designed to integrate with mainstream machine learning and reinforcement learning toolchains.

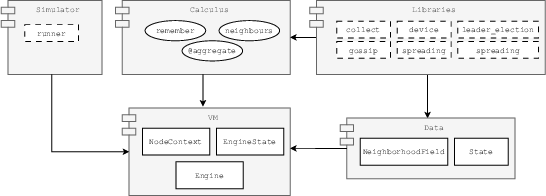

Figure 2: Architecture of Phyelds, showing modules, dependencies, and their key classes/functions.

The architecture is modular, with core compartments including a virtual machine (VM), field/data types, calculus API, algorithmic libraries, and a simulator. The VM encapsulates execution state and context, supporting alignment via function call tracking and dynamic context management utilizing Python's runtime features (e.g., contextvars, AST transformation). The calculus module exposes decorated aggregate code, imperative state persistence via remember, neighbor data access (neighbors), and alignment-aware conditionals. Library modules provide a suite of compositional building blocks for gradients, information diffusion, aggregation, local Voronoi partitioning, robust leader election, and self-organizing communication structures.
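To make the idea of alignment via function call tracking more concrete, here is a minimal sketch built on Python's `contextvars`. It does not reproduce the Phyelds VM or its API: `RoundContext`, `aligned`, `remember_value`, and `run_round` are hypothetical names introduced only for illustration. The assumption is that each piece of persistent state is keyed by the path of calls that reached it, so the same call site finds its own state again in the next round.

```python
import contextvars
import functools
from typing import Any, Callable, Dict, List, Tuple

Path = Tuple[str, ...]


class RoundContext:
    """Hypothetical per-device context: call path plus state kept across rounds."""

    def __init__(self, state: Dict[Path, Any]) -> None:
        self.state = state                    # survives from round to round
        self.path: List[str] = []             # current position in the call tree
        self.counters: Dict[Path, int] = {}   # per-round invocation counters

    def enter(self, name: str) -> None:
        key = tuple(self.path) + (name,)
        index = self.counters.get(key, 0)     # distinguishes repeated calls
        self.counters[key] = index + 1
        self.path.append(f"{name}#{index}")

    def exit(self) -> None:
        self.path.pop()


_current = contextvars.ContextVar("round_context")


def aligned(fn: Callable) -> Callable:
    """Decorator that records each call on the context's path."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        ctx = _current.get()
        ctx.enter(fn.__name__)
        try:
            return fn(*args, **kwargs)
        finally:
            ctx.exit()
    return wrapper


def remember_value(initial: Any, update: Callable[[Any], Any]) -> Any:
    """Store update(previous) under the current call path and return it."""
    ctx = _current.get()
    ctx.enter("remember")
    try:
        key = tuple(ctx.path)
        ctx.state[key] = update(ctx.state.get(key, initial))
        return ctx.state[key]
    finally:
        ctx.exit()


@aligned
def counter() -> int:
    return remember_value(0, lambda x: x + 1)


@aligned
def program() -> Tuple[int, int]:
    return counter(), counter()   # two call sites, two independent states


def run_round(state: Dict[Path, Any]) -> Tuple[int, int]:
    token = _current.set(RoundContext(state))
    try:
        return program()
    finally:
        _current.reset(token)
```

Running `run_round` repeatedly with the same `state` dictionary increments both counters independently, because the two calls to `counter` sit at different paths. In a real aggregate runtime the same path would also serve as the key under which neighbor values are matched.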

Engineering Patterns and Machine Learning Integration

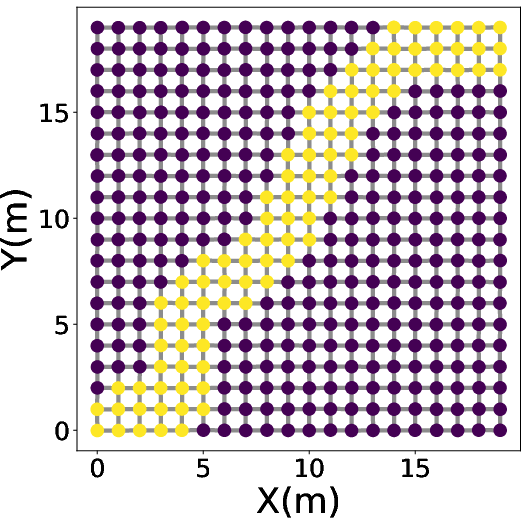

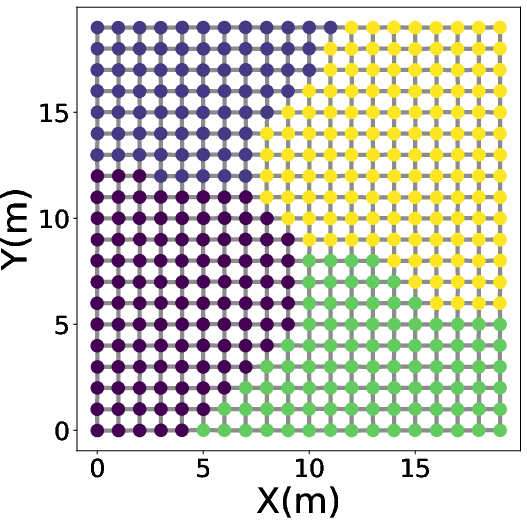

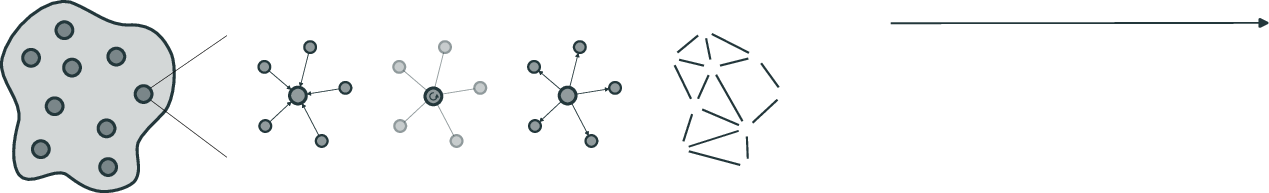

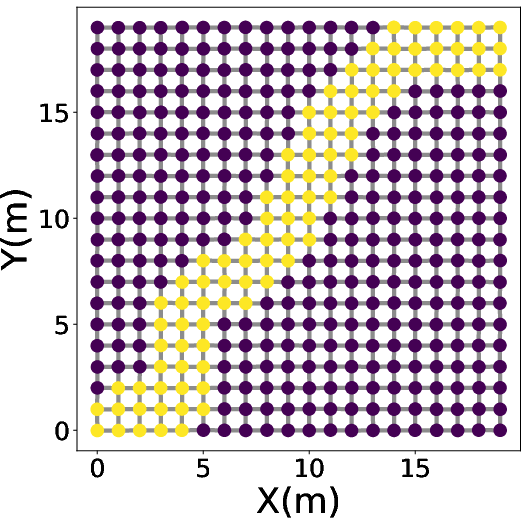

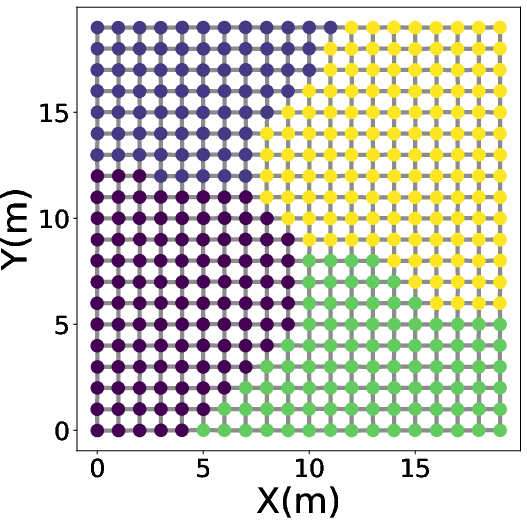

Two canonical field-based coordination patterns are directly enabled in Phyelds: channel construction and self-organizing coordination regions (SCR). Channels are logical paths of specified width between sources and targets, instrumental for spatial routing and flow control; SCR partitions the network into Voronoi-like regions, performing local leader election and regional aggregation for scalable, decentralized coordination.
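The channel pattern is commonly formulated as follows: a device belongs to the channel when its distance to the source plus its distance to the target exceeds the source-to-target distance by no more than the chosen width. The sketch below applies that rule with centralized breadth-first search on a small grid. It is an assumption-laden illustration, not the Phyelds library function: in an aggregate program the distances would come from distributed gradients, and the names `hop_distances`, `channel`, and `grid` are invented here.

```python
from collections import deque
from typing import Dict, Hashable, List, Set

Graph = Dict[Hashable, List[Hashable]]


def hop_distances(graph: Graph, origin: Hashable) -> Dict[Hashable, int]:
    """Breadth-first hop count from `origin` to every reachable node."""
    dist = {origin: 0}
    queue = deque([origin])
    while queue:
        node = queue.popleft()
        for neighbor in graph[node]:
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return dist


def channel(graph: Graph, source: Hashable, target: Hashable,
            width: int) -> Set[Hashable]:
    """Nodes whose detour through them costs at most `width` extra hops."""
    from_source = hop_distances(graph, source)
    from_target = hop_distances(graph, target)
    if target not in from_source:
        return set()  # source and target are disconnected
    shortest = from_source[target]
    return {node for node in graph
            if node in from_source and node in from_target
            and from_source[node] + from_target[node] <= shortest + width}


def grid(columns: int, rows: int) -> Graph:
    """4-connected grid used only as a toy topology."""
    steps = ((1, 0), (-1, 0), (0, 1), (0, -1))
    return {(x, y): [(x + dx, y + dy) for dx, dy in steps
                     if 0 <= x + dx < columns and 0 <= y + dy < rows]
            for x in range(columns) for y in range(rows)}


toy = grid(5, 3)
narrow = channel(toy, source=(0, 1), target=(4, 1), width=0)
wide = channel(toy, source=(0, 1), target=(4, 1), width=2)
```

With width 0 only the middle row joining the two endpoints is selected; widening the channel to 2 pulls in the rows above and below it.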

Figure 3: Channel construction between a source and target device visualized as an aggregate field.

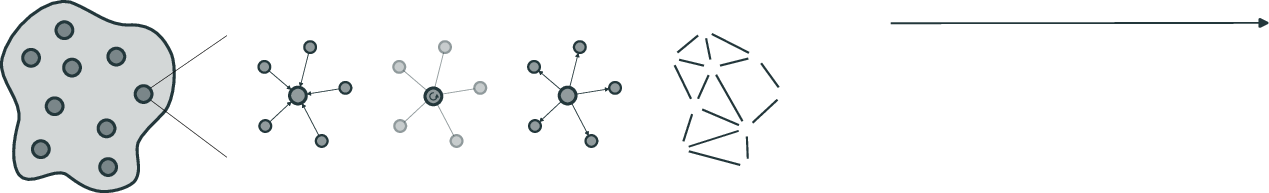

A salient direction enabled by Phyelds is the engineering of collective machine learning processes, notably in decentralized federated learning and multi-agent reinforcement learning (MARL). The framework provides algorithmic primitives for self-organizing federations, where devices interact and dynamically cluster according to data/model similarity, perform in-region model aggregation and broadcast, and coordinate iterative policy improvement without requiring global synchrony or explicit centralization. This supports scenarios with spatially non-i.i.d. data and heterogeneous device ensembles. In the reinforcement learning context, Phyelds integrates with the VMAS vectorized simulator, enabling the succinct implementation of local field-driven agent behaviors as demonstrated by the Vicsek-style flocking model.
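The in-region aggregation and broadcast step can be illustrated with a small sketch. It assumes that region membership has already been decided (for example by an SCR-style leader election) and that each model is a flat list of weights; the function name `aggregate_by_region` and both dictionary encodings are hypothetical and do not come from Phyelds. Averaging is used as the aggregation rule because it is the simplest federated choice, not because the source prescribes it.

```python
from collections import defaultdict
from typing import Dict, List


def aggregate_by_region(models: Dict[int, List[float]],
                        leader_of: Dict[int, int]) -> Dict[int, List[float]]:
    """Average the weights within each region, then hand the average back."""
    # Collect: group every device's weights under its region leader.
    grouped: Dict[int, List[List[float]]] = defaultdict(list)
    for device, weights in models.items():
        grouped[leader_of[device]].append(weights)

    # Aggregate: element-wise mean of the models in each region.
    regional = {
        leader: [sum(column) / len(column) for column in zip(*members)]
        for leader, members in grouped.items()
    }

    # Broadcast: every device receives a copy of its region's model.
    return {device: list(regional[leader_of[device]]) for device in models}


local_models = {0: [1.0, 2.0], 1: [3.0, 4.0], 2: [10.0, 0.0]}
regions = {0: 0, 1: 0, 2: 2}   # devices 0 and 1 share a leader; 2 stands alone
shared = aggregate_by_region(local_models, regions)
```

Because each region is averaged separately, devices with dissimilar data can keep distinct models, which is the point of clustering under spatially non-i.i.d. data.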

Figure 4: Vicsek flocking simulation in VMAS using Phyelds shows agents gradually aligning velocities via aggregate coordination.
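For readers unfamiliar with the Vicsek model, its update rule fits in a few lines. The sketch below is a standalone NumPy-free version written for this summary; it is not the VMAS or Phyelds implementation, and the names `vicsek_step` and `polarization` as well as the parameter choices are assumptions. Each agent adopts the mean heading of all agents within `radius` (itself included), optionally perturbed by uniform noise, and then moves at constant speed.

```python
import math
import random
from typing import List, Optional, Tuple

Vec = Tuple[float, float]


def vicsek_step(positions: List[Vec], headings: List[float],
                radius: float, speed: float, noise: float = 0.0,
                rng: Optional[random.Random] = None
                ) -> Tuple[List[Vec], List[float]]:
    """One synchronous Vicsek update for all agents."""
    rng = rng or random.Random(0)
    new_headings = []
    for xi, yi in positions:
        sum_cos = sum_sin = 0.0
        for (xj, yj), theta_j in zip(positions, headings):
            if math.hypot(xi - xj, yi - yj) <= radius:   # includes the agent itself
                sum_cos += math.cos(theta_j)
                sum_sin += math.sin(theta_j)
        theta = math.atan2(sum_sin, sum_cos)             # mean neighbor direction
        if noise > 0.0:
            theta += rng.uniform(-noise / 2, noise / 2)
        new_headings.append(theta)
    new_positions = [(x + speed * math.cos(t), y + speed * math.sin(t))
                     for (x, y), t in zip(positions, new_headings)]
    return new_positions, new_headings


def polarization(headings: List[float]) -> float:
    """Order parameter in [0, 1]; 1 means all agents share one heading."""
    n = len(headings)
    return math.hypot(sum(math.cos(t) for t in headings) / n,
                      sum(math.sin(t) for t in headings) / n)


start_positions = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
start_headings = [0.0, 0.4, -0.4]
next_positions, next_headings = vicsek_step(
    start_positions, start_headings, radius=10.0, speed=0.1)
```

The `polarization` helper gives a simple scalar for tracking how aligned the flock is over time, which is the quantity the figure depicts qualitatively.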

Design Implications and Future Perspectives

By bridging aggregate programming with Python’s ecosystem, Phyelds facilitates field calculus adoption in domains where Python is the lingua franca: ML workflows, education, and robotics (including ROS integration). This unlocks natural compositions of distributed AI/learning protocols with field-based coordination, enabling hybrid systems that adapt, organize, and specialize collectively at runtime.

From a theoretical perspective, the translation of field calculus concepts to Python's imperative paradigm exposes new challenges and opportunities: runtime AST rewriting for alignment, local-global state mapping, and efficient handling of large-scale, dynamic network topologies within the flexibility and constraints of Python’s execution model. Practically, Phyelds’s modularity and external simulator hooks support cross-validation of collective protocols, foster reproducibility, and lower the threshold for benchmarking innovative distributed learning/coordination approaches across domains (sensor networks, edge computing, swarm robotics).

Ongoing and future work targets systematic benchmarking, improved performance/scalability profiles, deeper ML toolchain integration, and deployment trials in realistic edge and robotic systems. Comparative studies with frameworks such as SimPy or Mesa3 will clarify tradeoffs in expressiveness and efficiency for Python-based agent-oriented macrosystems.

Conclusion

Phyelds provides a complete, Python-native framework for aggregate computing that combines formal coordination constructs with imperative programming idioms and integration hooks for ML and robotics. By supporting the expression and simulation of both classical and data-driven collective patterns, Phyelds enables scalable engineering of distributed intelligence and fosters new research in robust, adaptive macrosystem software architectures (2603.29999).