- The paper introduces a hybrid sampler that integrates NUTS and Gibbs steps with state-space marginalization to efficiently estimate DSEMs with binomial responses.

- It leverages logit/Pólya-Gamma and probit/truncated normal augmentations to handle discrete data, and the Kalman filter-based marginalization accommodates missingness in intensive longitudinal datasets.

- Simulation results demonstrate efficiency gains of up to 6.9x over competing algorithms, making the method practical for complex models fit to large intensive longitudinal datasets.

Hybrid NUTS-Gibbs Sampler for Dynamic Structural Equation Models with Binomial Outcomes

Overview

The paper "A Hybrid NUTS-Gibbs Sampler with State Space Marginalization for Estimation of Dynamic Structural Equation Models with Binomial Outcomes" (2603.29647) develops a novel inference algorithm for Dynamic Structural Equation Models (DSEMs) focused on efficient estimation when outcomes are binomially distributed. The proposed method resolves several bottlenecks related to scalability and flexibility inherent in existing Metropolis-within-Gibbs samplers, particularly for large-scale intensive longitudinal data (ILD) and models with discrete (binomial/Bernoulli) response variables.

By alternating between a No-U-Turn Sampler (NUTS) step that uses Kalman filter-based state-space marginalization and a parallelizable Gibbs step for latent responses, the hybrid NUTS-Gibbs sampler achieves significant improvements in sampling efficiency. The method supports both logit and probit parameterizations and is shown to be practical for ILD datasets with hundreds of participants and timepoints, addressing a gap in estimation routines for complex discrete-response DSEMs.

The paper presents a comprehensive formulation of DSEMs with discrete outcomes, generalizing prior models by supporting both logit and probit links. The modeling framework consists of three hierarchical components:

- Within-level latent states: modeled as autoregressive or VAR processes capturing time-varying participant dynamics.

- Between-participant variation: modeled as latent trait structures, typically with participant-specific means and factor loadings.

- Between-timepoint variation: optional structure for systematic temporal effects.
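The hierarchy above can be made concrete with a small simulation. This is a minimal sketch, not the paper's model: it assumes a single Bernoulli indicator, a probit link, a participant-specific mean for the between level, a scalar AR(1) process for the within level, and no between-timepoint effects. The function name `simulate_bernoulli_ar1` and the parameters `phi`, `sigma`, and `mu_sd` are hypothetical names chosen for illustration.

```python
import math
import random


def simulate_bernoulli_ar1(n_participants, n_timepoints, phi=0.5, sigma=1.0,
                           mu_sd=0.5, seed=0):
    """Simulate 0/1 responses driven by a latent AR(1) state (probit link)."""
    rng = random.Random(seed)
    data = []
    for _ in range(n_participants):
        # Between level: participant-specific mean (assumed Gaussian).
        mu_i = rng.gauss(0.0, mu_sd)
        # Within level: initialise the state from its stationary distribution.
        eta = rng.gauss(0.0, sigma / math.sqrt(1.0 - phi ** 2))
        row = []
        for _ in range(n_timepoints):
            eta = phi * eta + rng.gauss(0.0, sigma)  # AR(1) transition
            # Probit link: P(y = 1) = Phi(mu_i + eta), via the error function.
            p = 0.5 * (1.0 + math.erf((mu_i + eta) / math.sqrt(2.0)))
            row.append(1 if rng.random() < p else 0)
        data.append(row)
    return data


# One list of 0/1 responses per participant.
y = simulate_bernoulli_ar1(n_participants=20, n_timepoints=50)
```

The latent state `eta` is exactly the quantity the sampler later integrates out, which is why the number of sampled parameters does not have to grow with the product of participants and timepoints.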

The responses are binomial (or Bernoulli as a special case), and data augmentation is used for efficient inference:

- Probit link: Bernoulli outcomes are augmented with truncated normal latent variables, allowing conditional conjugacy.

- Logit link: Binomial outcomes utilize Pólya-Gamma augmentation, facilitating a conditional Gaussian structure and efficient Gibbs updates.

These augmentations render the measurement model compatible with Kalman filter-based state-space marginalization, which is typically restricted to the Gaussian case.
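To see why the logit augmentation yields a Gaussian measurement model, the standard Pólya-Gamma identity can be written out for a single binomial response. The notation is assumed here for illustration and may differ from the paper's: y successes out of n trials, linear predictor ψ, auxiliary variable ω, and pseudo-response z.

```latex
\begin{aligned}
\frac{(e^{\psi})^{y}}{(1+e^{\psi})^{n}}
  &= 2^{-n}\, e^{\kappa\psi} \int_{0}^{\infty} e^{-\omega\psi^{2}/2}\, p(\omega \mid n, 0)\, d\omega,
  \qquad \kappa = y - \tfrac{n}{2}, \\
\omega \mid \psi &\sim \mathrm{PG}(n, \psi), \\
e^{\kappa\psi - \omega\psi^{2}/2}
  &\propto \exp\!\left\{-\tfrac{\omega}{2}\,(z - \psi)^{2}\right\},
  \qquad z = \frac{\kappa}{\omega}.
\end{aligned}
```

Conditional on ω, the likelihood in ψ is therefore proportional to a normal density for z with mean ψ and variance 1/ω, which is the linear-Gaussian form a Kalman filter requires. The probit case is analogous, with a latent z equal to ψ plus standard normal noise and y = 1 exactly when z > 0.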

State Space Marginalization and Hybrid Sampling

A core challenge addressed is the scalability of inference: Gibbs samplers must draw O(N⋅T) latent states per iteration, which is prohibitive for modern ILD datasets. The hybrid algorithm combines:

- NUTS step: Marginalizes within-level latent states analytically via the Kalman filter, so NUTS operates on only O(N+T) parameters (population-level and participant-level states) rather than O(N⋅T), substantially reducing the computational burden compared to traditional approaches.

- Gibbs step: Samples latent (pseudo-)responses using parallelized conditional distributions (truncated normal for probit, Pólya-Gamma for logit), efficiently exploiting the independence across participants and timepoints.
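For the probit case, the Gibbs draw of a latent response can be sketched with an inverse-CDF truncated normal sampler. This is an illustrative sketch under assumptions rather than the paper's implementation: the function name `draw_latent_response` is hypothetical, the latent variance is fixed at 1, and the inverse-CDF approach is only reliable when the absolute value of the mean is moderate (roughly below 6). A dedicated tail sampler would be needed beyond that.

```python
import random
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal, used for cdf and inverse cdf


def draw_latent_response(y, mean, rng):
    """Draw z ~ N(mean, 1) truncated to z > 0 if y == 1, or z <= 0 if y == 0."""
    p_nonpositive = _STD_NORMAL.cdf(-mean)  # P(z <= 0) before truncation
    if y == 1:
        u = rng.uniform(p_nonpositive, 1.0)
    else:
        u = rng.uniform(0.0, p_nonpositive)
    # Keep u strictly inside (0, 1) so that inv_cdf is defined.
    u = min(max(u, 1e-15), 1.0 - 1e-15)
    return mean + _STD_NORMAL.inv_cdf(u)


rng = random.Random(0)
# Each draw depends only on its own (y, mean) pair, so draws for different
# participants and timepoints are independent and could run in parallel.
z = [draw_latent_response(y_t, m_t, rng) for y_t, m_t in [(1, 0.3), (0, -0.8), (1, -1.2)]]
```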

This alternation targets the true posterior distribution and leverages modern automatic differentiation tools (e.g., NumPyro with JAX), which exploit cross-chain and participant-level parallelism.
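The marginalization used in the NUTS step can be illustrated with a scalar Kalman filter that returns the log-likelihood of the Gaussian pseudo-responses with the latent states integrated out. This is a minimal sketch under assumptions: one latent AR(1) state per participant, a stationary initial state, and missing observations encoded as `None`. The function name `kalman_loglik` and its arguments are hypothetical. The per-timepoint `obs_var` would be 1 under the probit augmentation and 1/ω under the Pólya-Gamma augmentation.

```python
import math


def kalman_loglik(z, obs_var, phi, q, mu=0.0):
    """Marginal log-likelihood of pseudo-responses z under
    eta_t = phi * eta_{t-1} + N(0, q),  z_t = mu + eta_t + N(0, obs_var[t])."""
    m = 0.0                      # predicted state mean
    p = q / (1.0 - phi ** 2)     # predicted state variance (stationary start)
    loglik = 0.0
    for z_t, r_t in zip(z, obs_var):
        if z_t is not None:
            # Update step: only performed when the observation is present.
            s = p + r_t                      # predictive variance of z_t
            resid = z_t - (mu + m)
            loglik += -0.5 * (math.log(2.0 * math.pi * s) + resid ** 2 / s)
            gain = p / s
            m = m + gain * resid
            p = (1.0 - gain) * p
        # Predict step: always performed, so gaps simply widen the variance.
        m = phi * m
        p = phi ** 2 * p + q
    return loglik


z_obs = [0.3, None, -0.2, 1.1]   # second timepoint is missing
print(kalman_loglik(z_obs, obs_var=[1.0] * 4, phi=0.5, q=1.0))
```

Skipping the update at a missing timepoint is all that is needed to handle missingness here, and the function depends only on `phi`, `q`, and `mu`, so a gradient-based sampler never has to represent the individual states.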

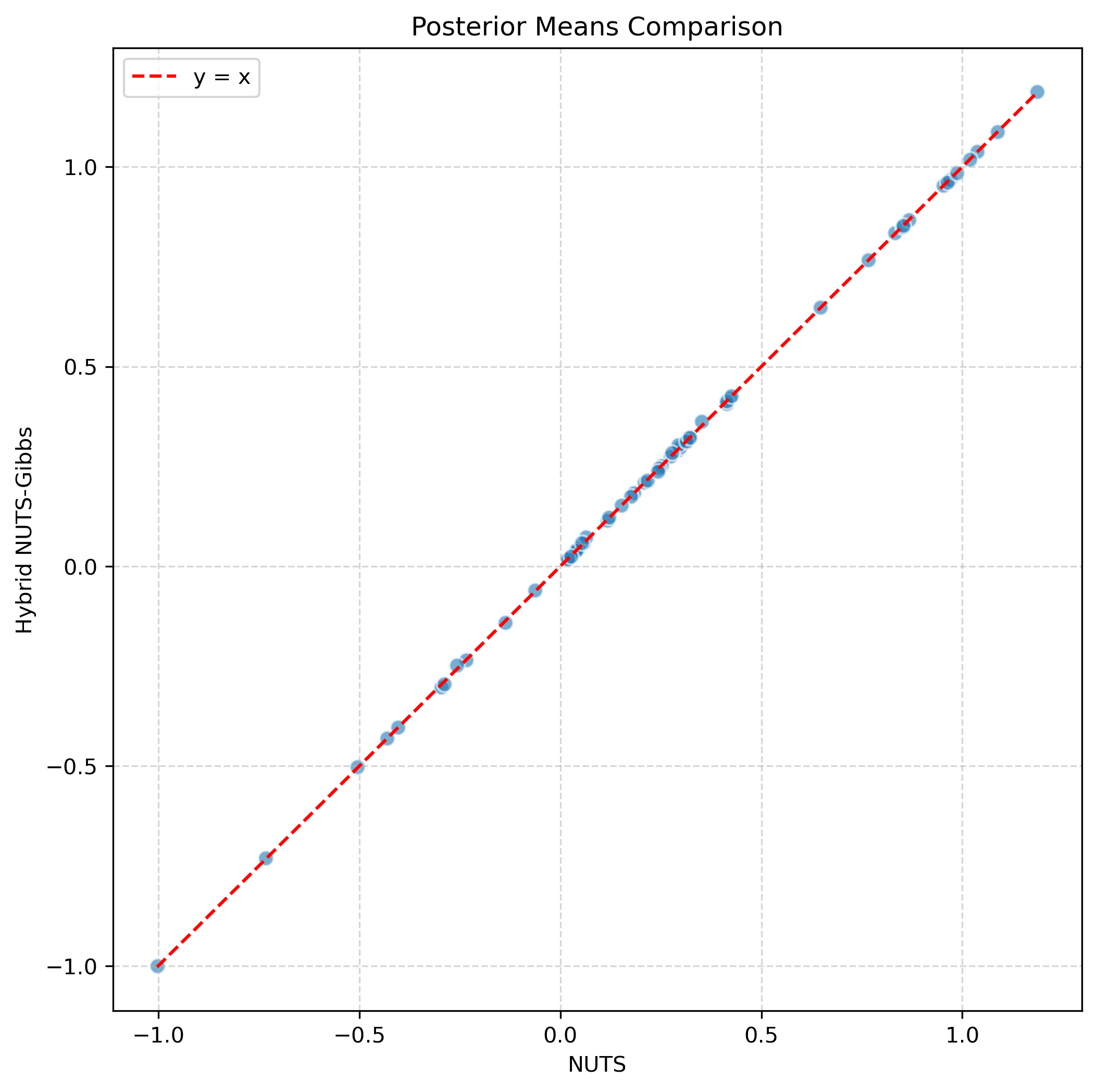

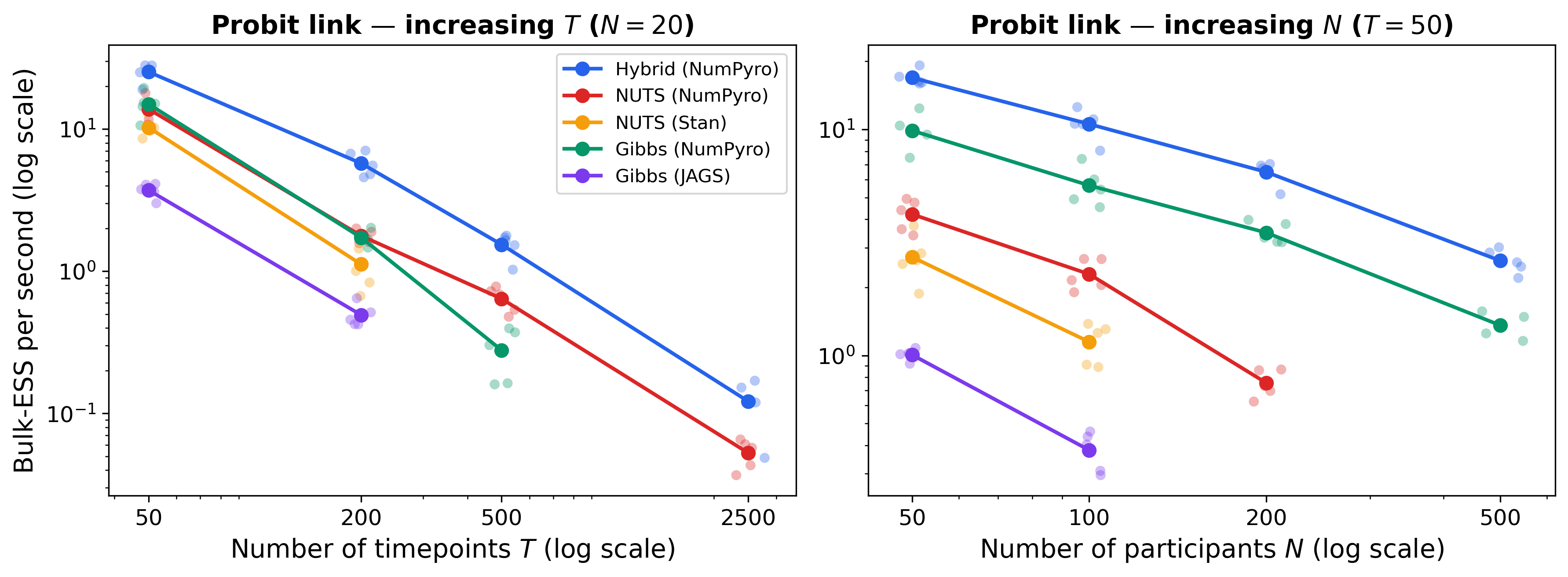

Simulation experiments across a range of model specifications show the following results for the hybrid sampler:

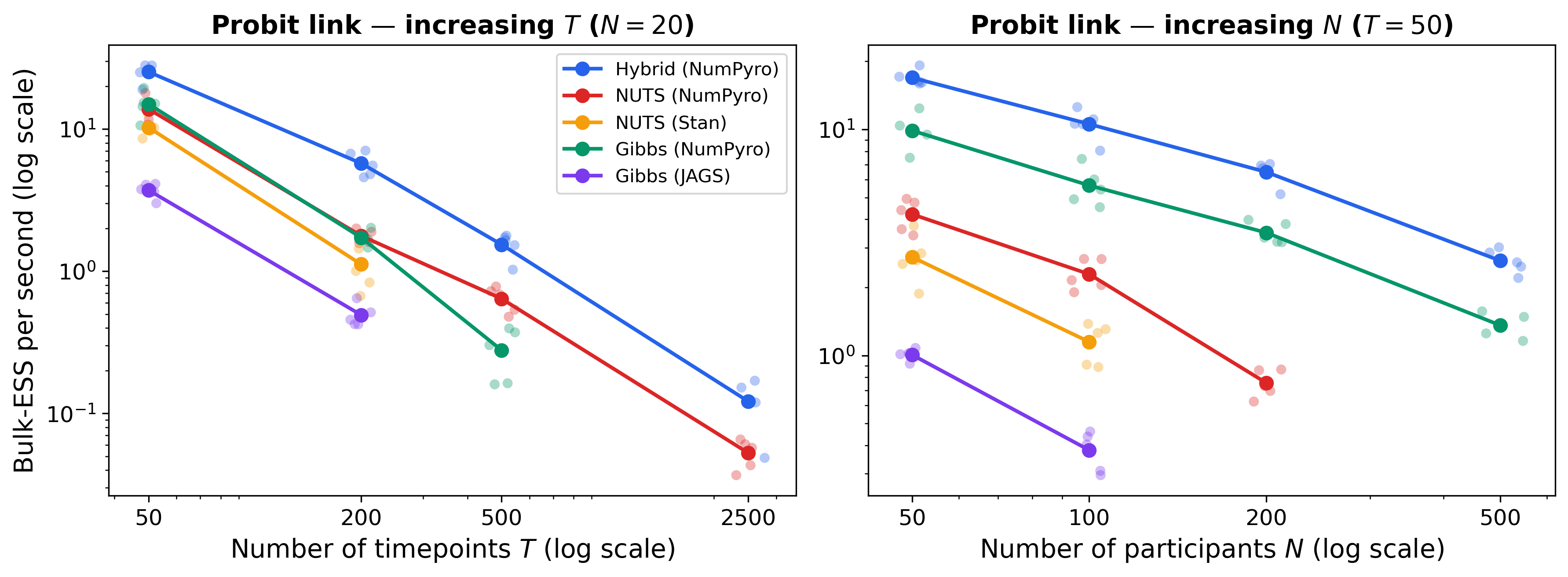

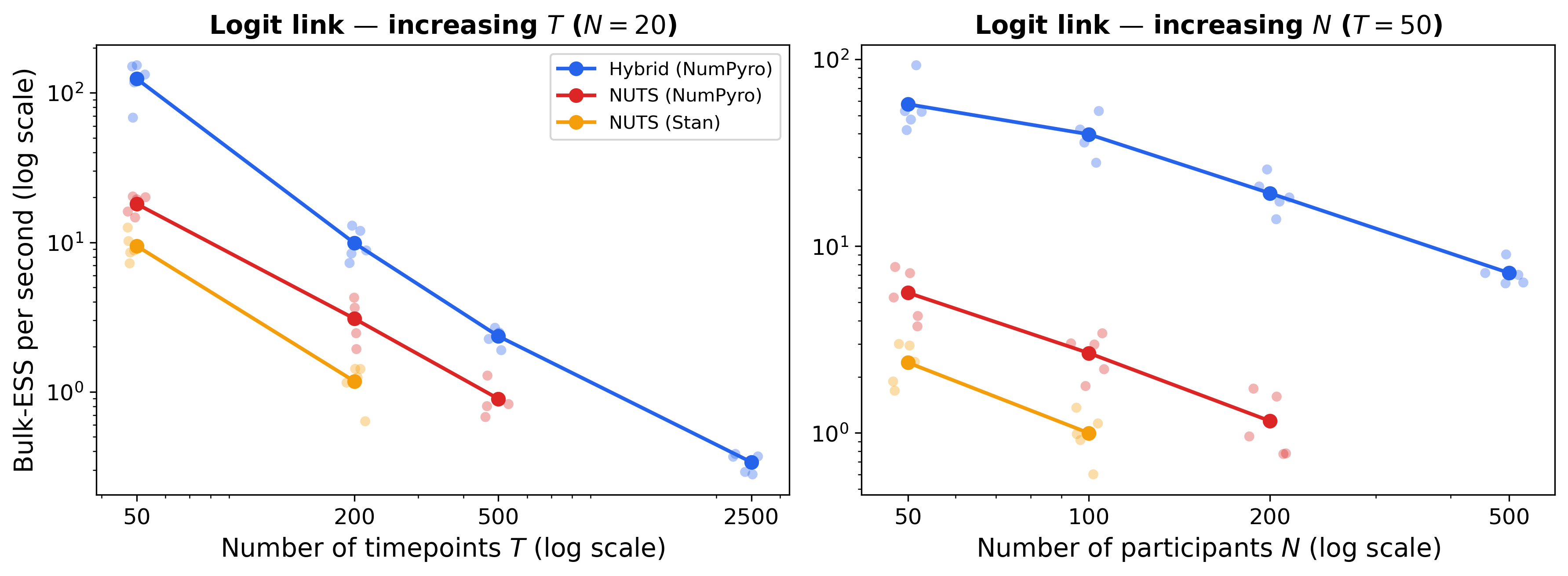

- Bernoulli AR(1) Model with Participant-Invariant Dynamics: For fixed participants and increasing timepoints, the hybrid sampler consistently outperformed competing algorithms, showing efficiency gains ranging from 1.7x to 6.9x and making previously infeasible parameter regimes tractable (e.g., N=20, T=2500).

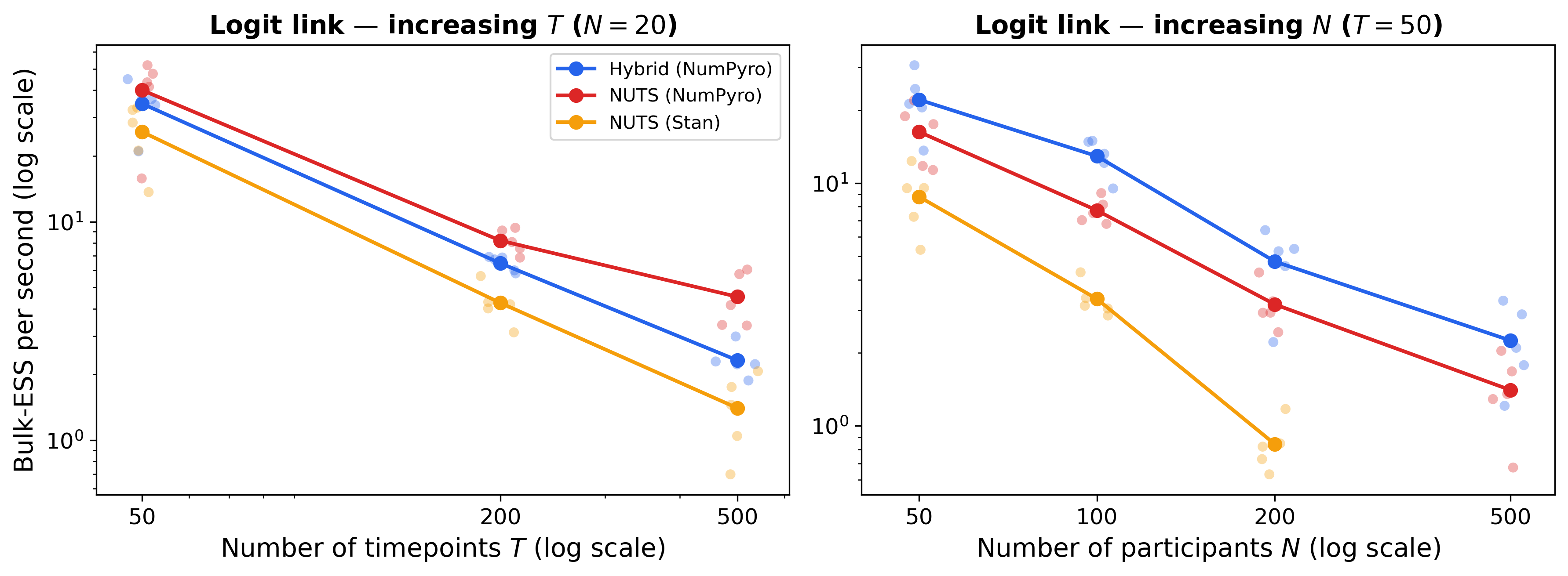

Figure 1: Bulk efficiency for the five-indicator AR(1) model with participant-invariant dynamics, illustrating superior effective sample sizes across algorithms and simulation settings.

- Bernoulli AR(1) Model with Participant-Varying Dynamics: The hybrid algorithm performed best in the large-N regime (many participants, moderate timepoints), achieving 1.5x–1.7x efficiency gains over pure NUTS. For large T, pure NUTS was occasionally more efficient, consistent with theoretical expectations regarding parallelism.

Figure 2: Bulk efficiency for the five-indicator AR(1) model with participant-varying dynamics, highlighting scaling advantages in participant-rich settings.

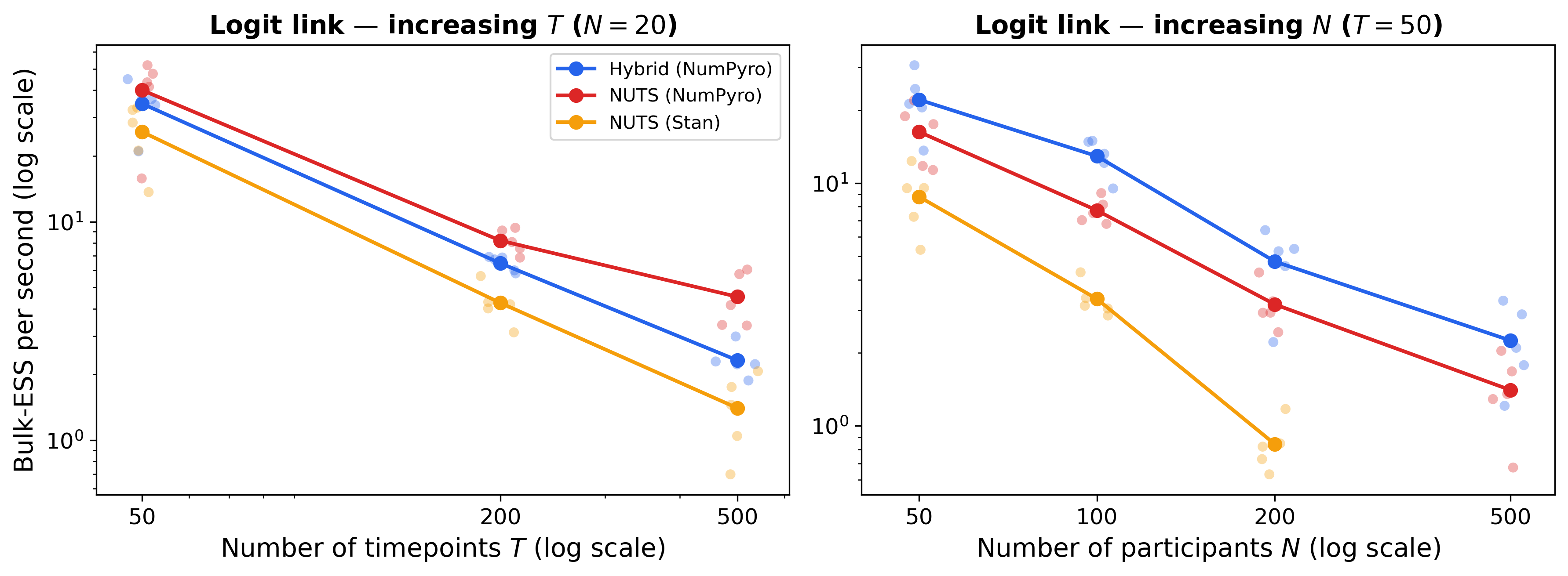

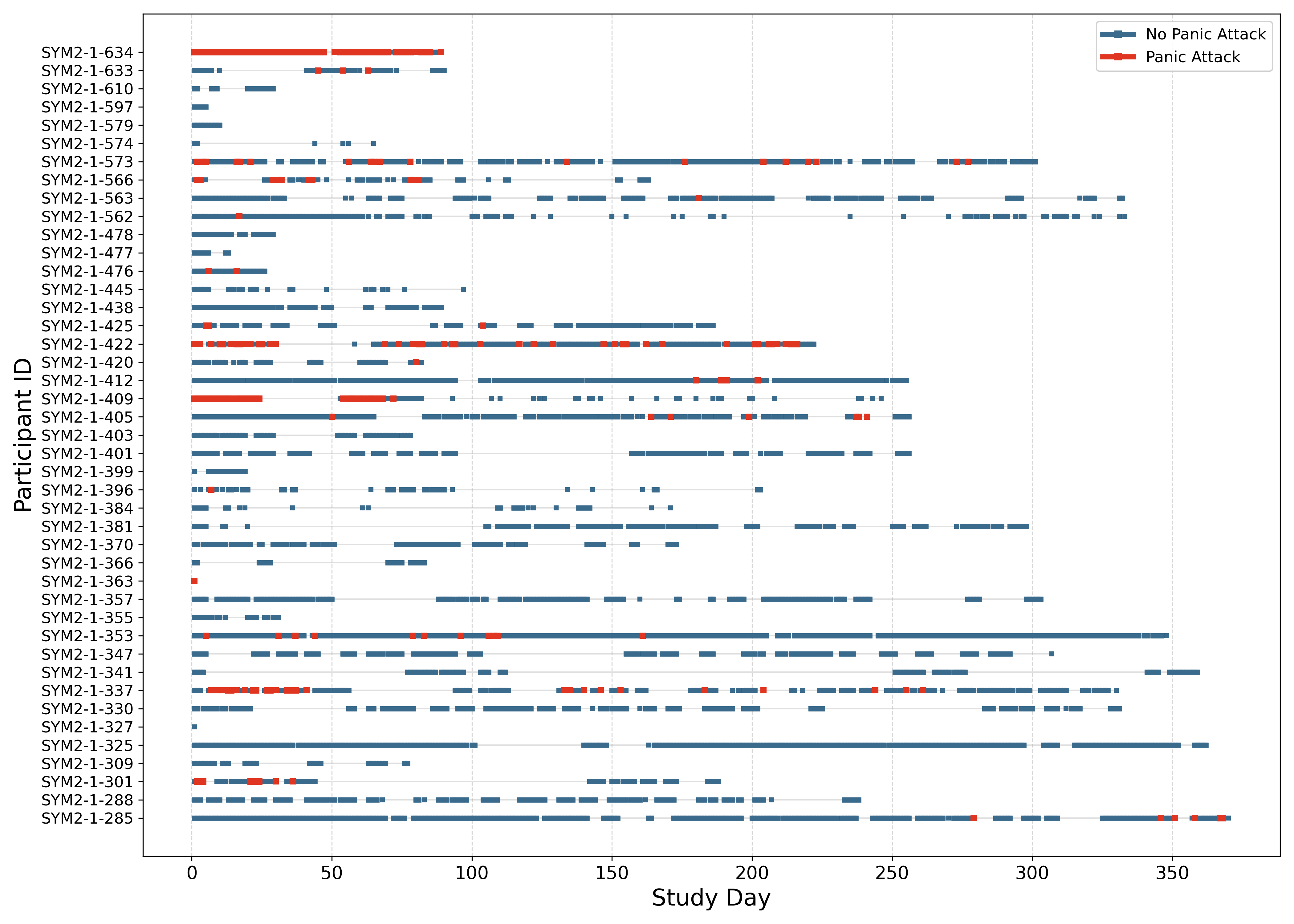

- Missing Data Handling: Kalman filter-based marginalization naturally accommodates missingness patterns without sacrificing efficiency or statistical validity.

Figure 3: Missingness pattern in the EMA data, with panic attack occurrences indicated in red, exemplifying robust handling of irregular data.

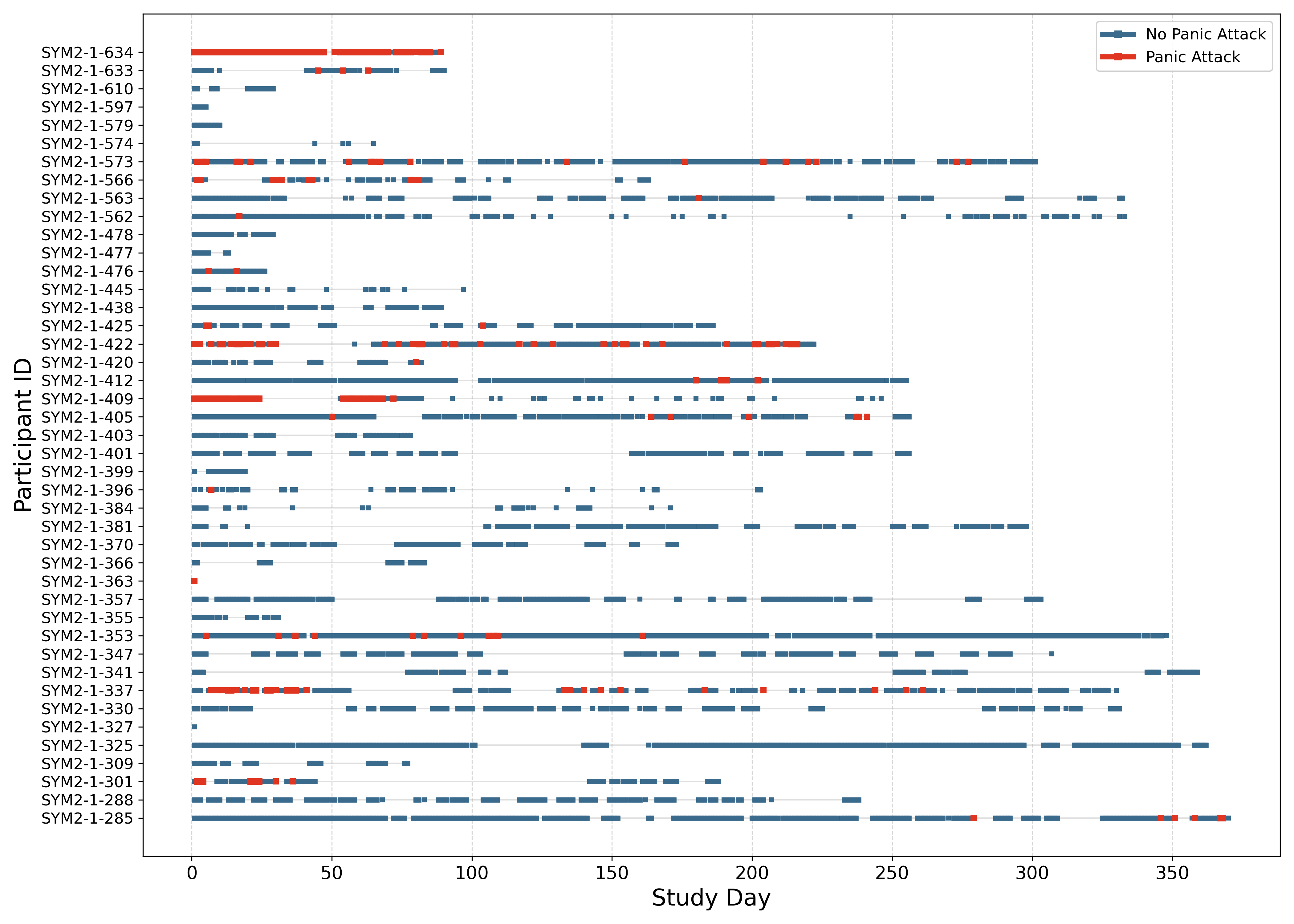

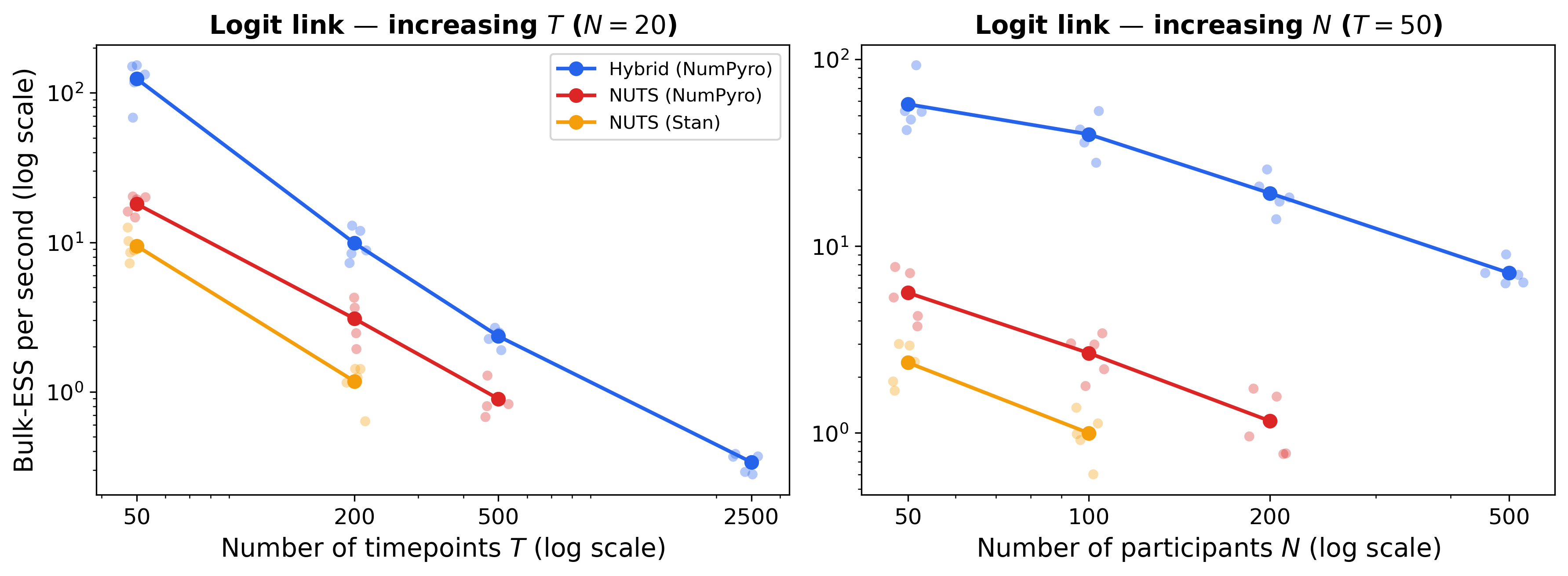

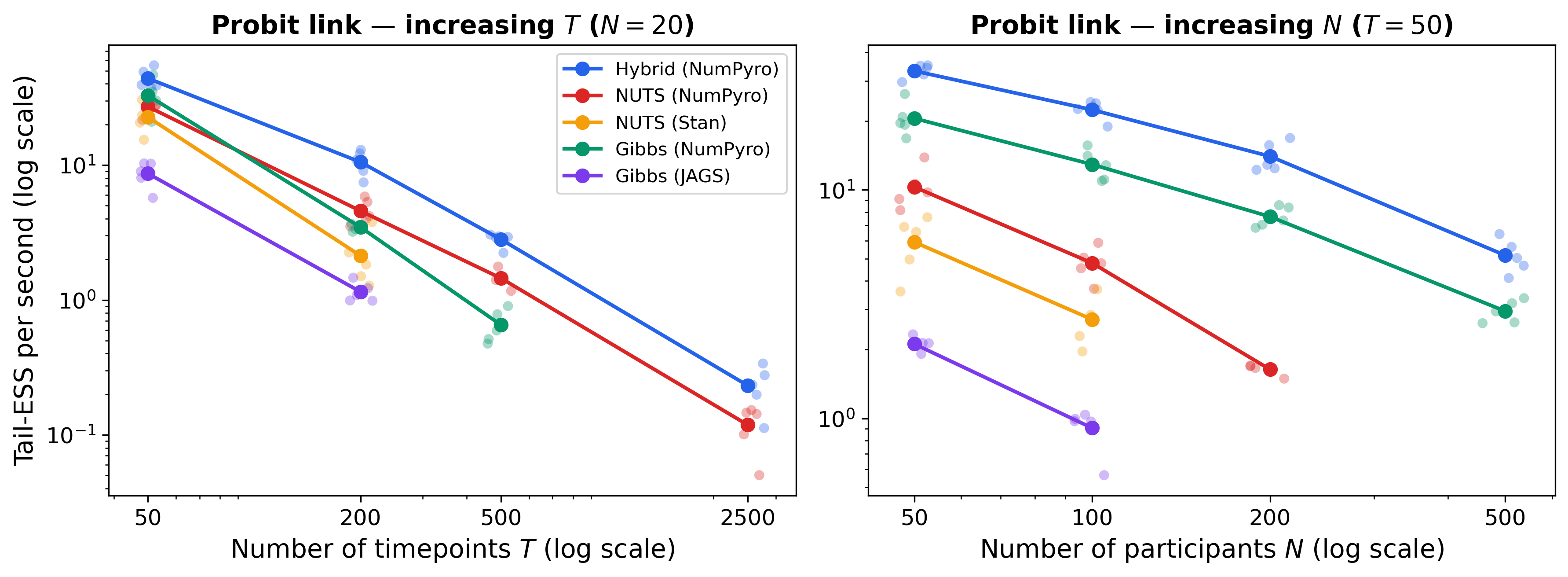

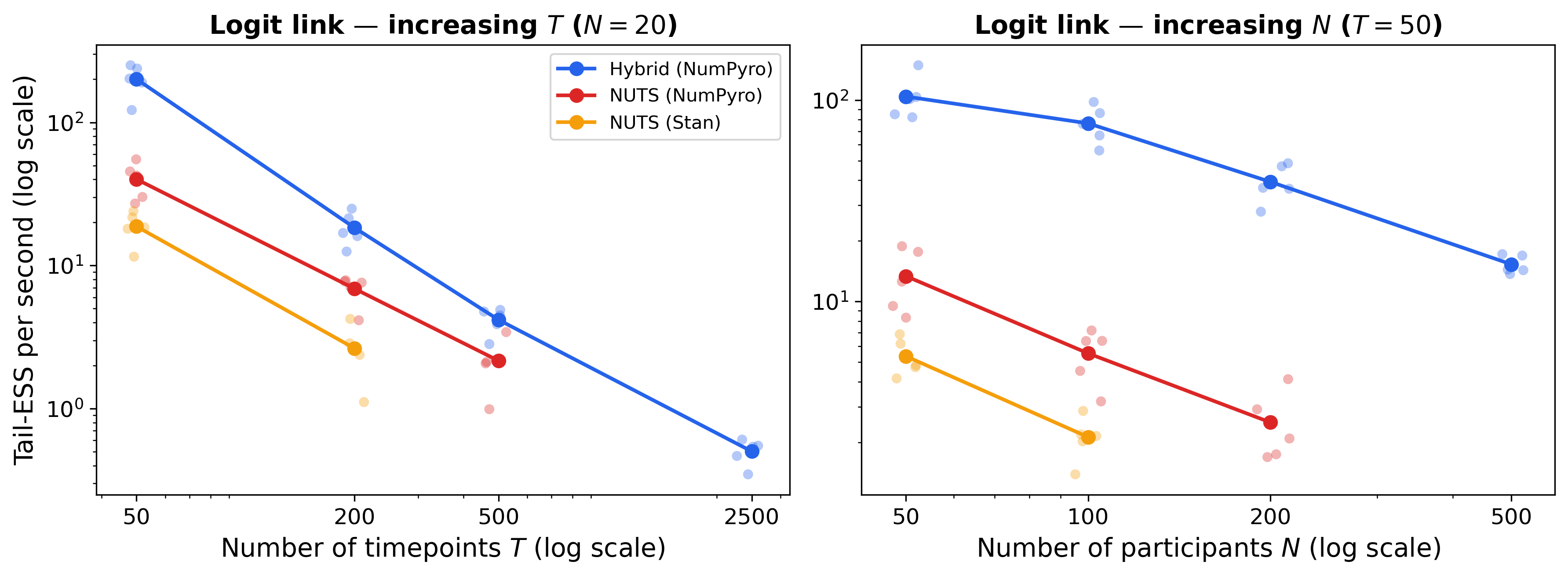

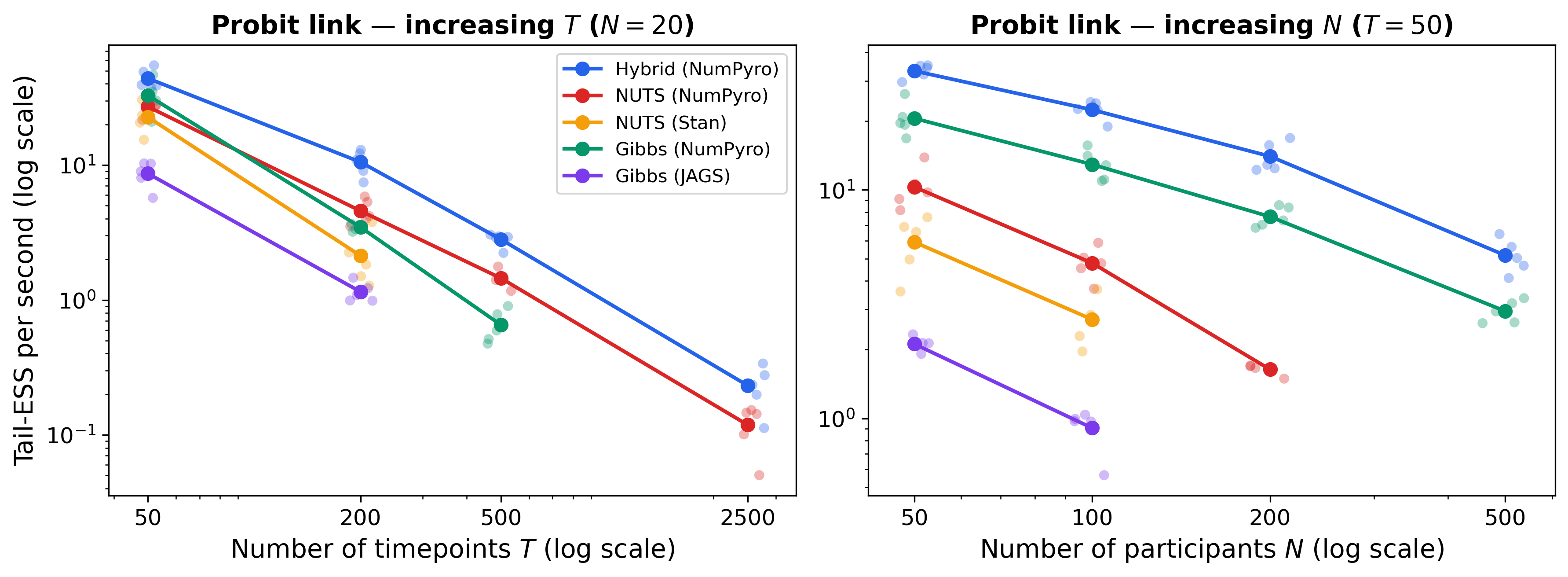

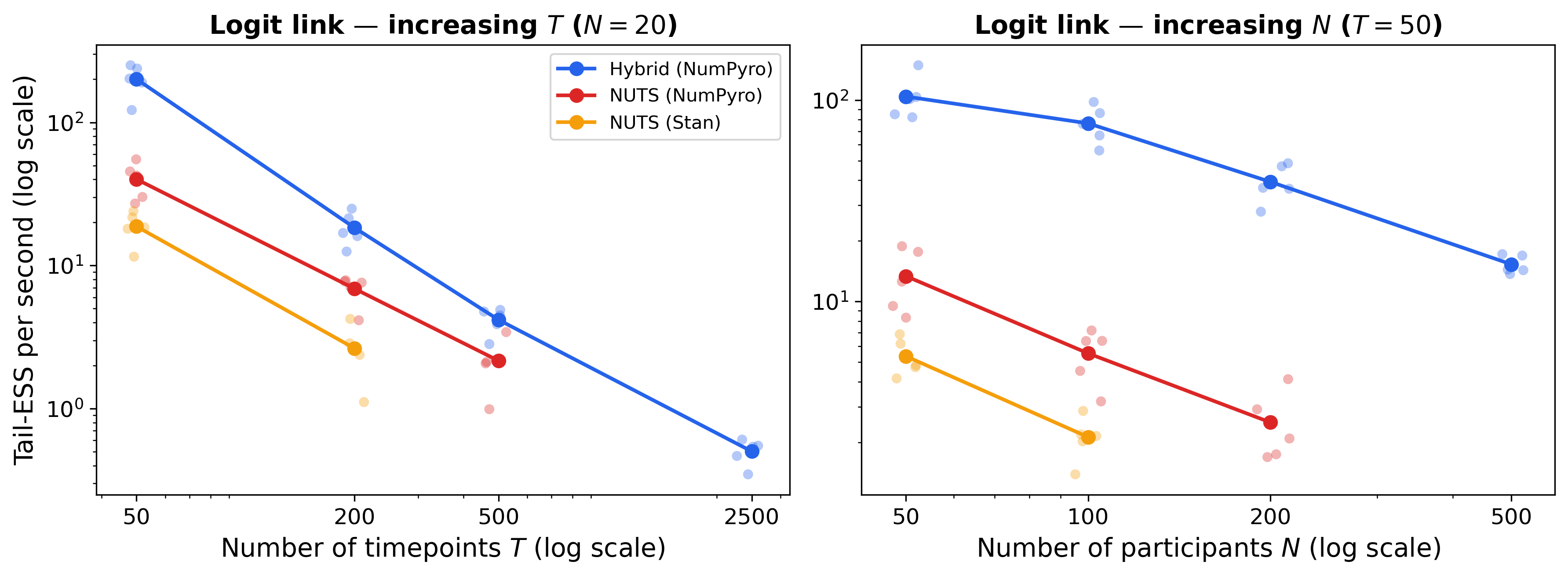

- Logit and Probit Link Comparisons: The hybrid sampler produced comparable or superior effective sample sizes for both link functions, with logit models benefiting substantially from the Pólya-Gamma augmentation.

Figure 4: Bulk efficiency with logit link for participant-invariant dynamics, demonstrating efficient inference across data scales.

Figure 5: Tail efficiency with probit link for participant-invariant dynamics, evidencing robust posterior quantile estimation.

Figure 6: Tail efficiency with logit link for participant-invariant dynamics.

Figures 7–9: Bulk and tail efficiency plots for participant-varying dynamics under probit and logit links.

Limitations and Scope of Applicability

The hybrid NUTS-Gibbs method demonstrates significant efficiency and scalability improvements for DSEMs with binomial responses. However, extensions to ordinal and negative binomial outcomes are nontrivial and run into computational bottlenecks:

- Ordinal Responses: Constrained threshold parameters lead to narrow acceptance regions in NUTS, severely limiting proposal step sizes and causing low sampler efficiency. Hybrid approaches perform worse than pure NUTS, indicating the need for alternative strategies (e.g., Metropolis updates for joint latent state/threshold proposals).

- Negative Binomial Responses: Pólya-Gamma augmentation relies on integer dispersion parameters, which is incompatible with NUTS because NUTS is restricted to continuous parameter spaces. Approximate sampling via a truncated infinite series may offer a pragmatic solution but remains an open challenge.
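The truncated-series idea can be sketched using the standard representation of a Pólya-Gamma variable as an infinite weighted sum of independent Gamma(b, 1) draws, which remains valid for non-integer b. This is an approximation written for illustration, not a method evaluated in the paper: the function name `sample_polya_gamma` and the truncation level `n_terms` are assumptions, and truncating the sum introduces a small downward bias.

```python
import math
import random


def sample_polya_gamma(b, c, rng, n_terms=200):
    """Approximate draw from PG(b, c) using the first n_terms of the series
    (1 / (2 pi^2)) * sum_k g_k / ((k - 1/2)^2 + c^2 / (4 pi^2)), g_k ~ Gamma(b, 1)."""
    total = 0.0
    for k in range(1, n_terms + 1):
        g_k = rng.gammavariate(b, 1.0)  # shape b may be non-integer
        total += g_k / ((k - 0.5) ** 2 + c ** 2 / (4.0 * math.pi ** 2))
    return total / (2.0 * math.pi ** 2)


rng = random.Random(0)
omega = sample_polya_gamma(b=2.5, c=1.0, rng=rng)  # non-integer shape parameter
```

A simple sanity check is to compare the sample mean of many draws with the known mean of PG(b, c), which is b / (2c) · tanh(c / 2).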

Practical and Theoretical Implications

The hybrid NUTS-Gibbs sampler substantially expands the feasible scope of DSEM estimation for ILD datasets with discrete outcomes, addressing both statistical and computational considerations. The method supports model flexibility (probit/logit links, binomial data), accommodates missingness, and exploits parallelism intrinsic to modern hardware (CPUs/GPUs/TPUs).

Practically, this enables:

- Complex longitudinal modeling (e.g., digital phenotyping, cognitive testing, educational technology data) with hundreds of participants and timepoints.

- Applications with efficient inference for latent dynamics, discrete measurements, and participant heterogeneity.

- Scalability for high-dimensional models, including multivariate state structures and extended autoregressive dynamics.

Theoretically, the approach sets a foundation for further development of state-space marginalization techniques in Bayesian inference—potentially generalizing to unscented Kalman filters for non-Gaussian outcomes and extending to particle MCMC for exponential family responses.

Future Directions

- GPU Optimization: The method is primed for GPU/TPU acceleration, particularly the Kalman filter steps, though Pólya-Gamma rejection sampling presents parallelization challenges warranting empirical assessment and possible distributional approximations.

- Model Generalization: Extending efficient marginalization to ordinal outcomes, negative binomial responses, and broader exponential family models is vital for advancing DSEM applicability.

- Algorithmic Extensions: Incorporation of unscented Kalman filters or pseudo-marginal MCMC schemes could further generalize inference routines, balancing computational feasibility with posterior accuracy.

Conclusion

This paper provides a technically rigorous, practically efficient solution for estimating DSEMs with binomial outcomes, leveraging hybrid NUTS-Gibbs sampling and state-space marginalization. The method achieves substantial gains in computational efficiency, opens new avenues for scalable statistical modeling in psychology, education, and digital health, and lays groundwork for further innovations in Bayesian inference for complex longitudinal data.