Securing Elliptic Curve Cryptocurrencies against Quantum Vulnerabilities: Resource Estimates and Mitigations

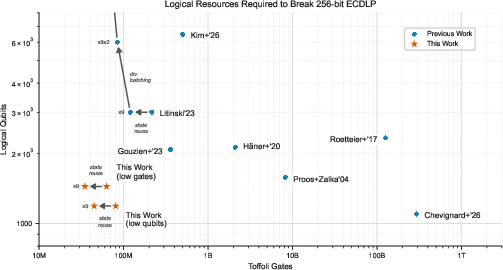

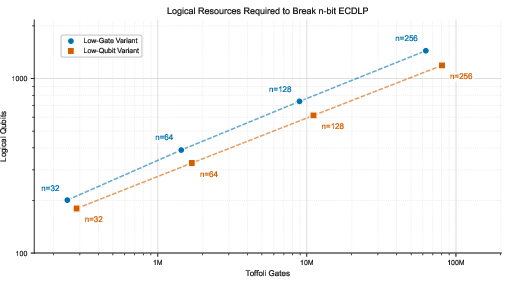

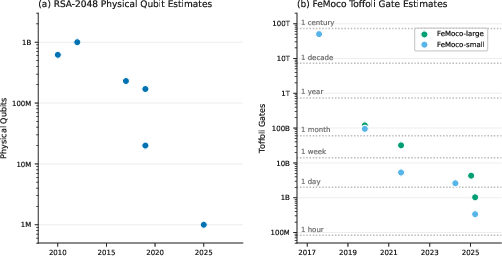

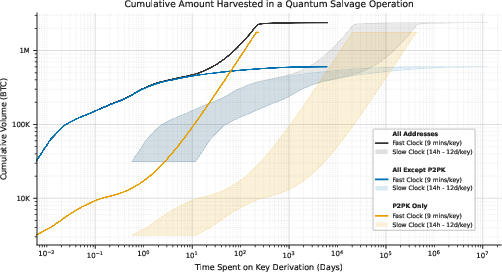

Abstract: This whitepaper seeks to elucidate implications that the capabilities of developing quantum architectures have on blockchain vulnerabilities and mitigation strategies. First, we provide new resource estimates for breaking the 256-bit Elliptic Curve Discrete Logarithm Problem, the core of modern blockchain cryptography. We demonstrate that Shor's algorithm for this problem can execute with either <1200 logical qubits and <90 million Toffoli gates or <1450 logical qubits and <70 million Toffoli gates. In the interest of responsible disclosure, we use a zero-knowledge proof to validate these results without disclosing attack vectors. On superconducting architectures with 1e-3 physical error rates and planar connectivity, those circuits can execute in minutes using fewer than half a million physical qubits. We introduce a critical distinction between fast-clock (such as superconducting and photonic) and slow-clock (such as neutral atom and ion trap) architectures. Our analysis reveals that the first fast-clock CRQCs would enable on-spend attacks on public mempool transactions of some cryptocurrencies. We survey major cryptocurrency vulnerabilities through this lens, identifying systemic risks associated with advanced features in some blockchains such as smart contracts, Proof-of-Stake consensus, and Data Availability Sampling, as well as the enduring concern of abandoned assets. We argue that technical solutions would benefit from accompanying public policy and discuss various frameworks of digital salvage to regulate the recovery or destruction of dormant assets while preventing adversarial seizure. We also discuss implications for other digital assets and tokenization as well as challenges and successful examples of the ongoing transition to Post-Quantum Cryptography (PQC). Finally, we urge all vulnerable cryptocurrency communities to join the ongoing migration to PQC without delay.

Explain it Like I'm 14

What this paper is about

This paper explains how future quantum computers could break the cryptography that secures many cryptocurrencies today (like Bitcoin and Ethereum), and what we can do about it. The authors give updated, realistic estimates of how big and how fast a quantum computer would need to be to crack the main kind of math problem these systems rely on. They also suggest technical and policy steps to keep digital money safe.

The main questions the paper asks

- How many “quantum resources” (think: the size and speed of a quantum computer) are needed to break the specific math used by most cryptocurrencies, called elliptic curve cryptography on the secp256k1 curve?

- Could early quantum computers attack transactions while they’re being sent (not just old wallets), and which quantum hardware is most dangerous for that?

- How can researchers warn the world with trustworthy numbers without revealing a step‑by‑step attack guide?

- What parts of modern blockchains beyond basic payments (like smart contracts, proof‑of‑stake, or privacy systems) might be at risk?

- What practical defenses and policies should be adopted now, especially for “dormant” or abandoned assets that can’t be upgraded?

How they approached the problem

Estimating quantum “cost” in plain terms

Breaking a cryptocurrency key on a quantum computer uses an algorithm called Shor’s algorithm. To describe how hard that is, the authors count:

- Logical qubits: the “useful” quantum memory the program needs.

- Toffoli gates: a type of quantum step that, in practice, dominates how long the program takes to run.

- Physical qubits: the much larger number of real qubits needed once you include error correction (because today’s qubits are noisy and need lots of backups and checking).

You can think of this like:

- Logical qubits = how many files you need open.

- Toffoli gates = how many keystrokes you must type.

- Physical qubits = how many extra computers you need to avoid typos and crashes.
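To make the three cost measures concrete, here is a minimal sketch that records the two circuit variants quoted in the abstract and compares them. The variant names, the `spacetime_volume` metric (qubits times gates), and the overhead ratio are illustrative assumptions added here, not quantities defined by the paper.

```python
from dataclasses import dataclass

# Upper bound on physical qubits quoted in the abstract (superconducting, 1e-3 error rate).
PHYSICAL_QUBIT_BOUND = 500_000


@dataclass(frozen=True)
class CircuitEstimate:
    name: str             # hypothetical label, not from the paper
    logical_qubits: int   # upper bound quoted in the abstract
    toffoli_gates: int    # upper bound quoted in the abstract

    def spacetime_volume(self) -> int:
        """Illustrative metric: logical qubits multiplied by Toffoli count."""
        return self.logical_qubits * self.toffoli_gates

    def physical_per_logical(self) -> float:
        """Rough ratio only: the physical bound also covers factories and routing."""
        return PHYSICAL_QUBIT_BOUND / self.logical_qubits


LOW_QUBIT = CircuitEstimate("low-qubit", 1200, 90_000_000)
LOW_GATE = CircuitEstimate("low-gate", 1450, 70_000_000)

for c in (LOW_QUBIT, LOW_GATE):
    print(f"{c.name}: volume={c.spacetime_volume():.3e}, "
          f"physical/logical<={c.physical_per_logical():.0f}")
```

The two variants trade memory for time: the second uses more logical qubits but fewer Toffoli gates, so it should finish sooner on the same hardware.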

Proving results without leaking a “how‑to”

Publishing a full attack blueprint could help bad actors. Instead, the team uses a zero‑knowledge proof (ZK proof). That’s like a cooking contest where the chef proves they can bake a cake by letting judges verify the cake’s quality—without revealing the secret recipe. Here, they cryptographically prove their circuits really achieve the claimed sizes and speeds, without revealing the actual attack circuits.
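The Knowledge Gaps section of this page describes the proof as checking the point addition subroutine on random inputs seeded by a hash. The sketch below illustrates only that sampling idea in ordinary Python: test inputs are derived from a hash so the prover cannot pick easy cases, and a candidate implementation is compared against textbook secp256k1 point addition. It is not a zero-knowledge proof and not the authors' code; `candidate_add`, the seed string, and the sample count are hypothetical.

```python
import hashlib

P = 2**256 - 2**32 - 977   # secp256k1 field prime
B = 7                      # curve equation: y^2 = x^3 + 7 (mod P)


def on_curve(pt):
    x, y = pt
    return (y * y - (x * x * x + B)) % P == 0


def reference_add(p1, p2):
    """Textbook affine addition for two points with different x-coordinates."""
    (x1, y1), (x2, y2) = p1, p2
    lam = (y2 - y1) * pow((x2 - x1) % P, -1, P) % P
    x3 = (lam * lam - x1 - x2) % P
    y3 = (lam * (x1 - x3) - y1) % P
    return (x3, y3)


def sample_point(seed: bytes, index: int):
    """Derive a curve point deterministically from the seed (try-and-increment)."""
    counter = 0
    while True:
        data = seed + index.to_bytes(4, "big") + counter.to_bytes(4, "big")
        x = int.from_bytes(hashlib.sha256(data).digest(), "big") % P
        rhs = (pow(x, 3, P) + B) % P
        y = pow(rhs, (P + 1) // 4, P)   # square-root candidate; works because P % 4 == 3
        if y * y % P == rhs:
            return (x, y)
        counter += 1


def count_failures(candidate_add, seed: bytes, samples: int) -> int:
    """Count sampled input pairs where the candidate disagrees with the reference."""
    failures = 0
    for i in range(samples):
        p1, p2 = sample_point(seed, 2 * i), sample_point(seed, 2 * i + 1)
        if p1[0] == p2[0]:
            continue  # doubling and inverse pairs are not handled in this sketch
        if candidate_add(p1, p2) != reference_add(p1, p2):
            failures += 1
    return failures


# Hypothetical seed: in a real protocol it would be bound to a commitment to the circuit.
seed = hashlib.sha256(b"hypothetical commitment to the secret circuit").digest()
print(count_failures(reference_add, seed, samples=100))
```

In the real setting the candidate would be the secret quantum circuit, simulated inside a proof system so that verifiers learn only the pass/fail result.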

Attack types and hardware “speed classes”

The paper explains three kinds of quantum attacks:

- On‑spend: crack a key while a transaction is being broadcast and steal the funds before it’s finalized. Timing: seconds to minutes.

- At‑rest: crack keys that have been visible for a long time (like old or reused public keys). Timing: hours to days.

- On‑setup: do a one‑time quantum computation to recover hidden setup secrets in certain protocols, then use that result to cheat later with just a regular computer.

They also split quantum hardware into:

- Fast‑clock machines (superconducting, photonic, silicon spin qubits): very fast operations. These could do on‑spend attacks.

- Slow‑clock machines (ion traps, neutral atoms): slower operations. These may start with at‑rest attacks only.

The main findings

- Smaller, faster quantum attacks than previously thought

- They show Shor’s algorithm for the 256‑bit secp256k1 curve (used by Bitcoin, Ethereum, and others) can run with either:

- Fewer than 1200 logical qubits and fewer than 90 million Toffoli gates, or

- Fewer than 1450 logical qubits and fewer than 70 million Toffoli gates.

- With standard assumptions for superconducting quantum hardware (error rates around 1 in 1000 and planar connections), this could be done with fewer than 500,000 physical qubits and finish in minutes.

- On‑spend attacks could become possible on fast machines

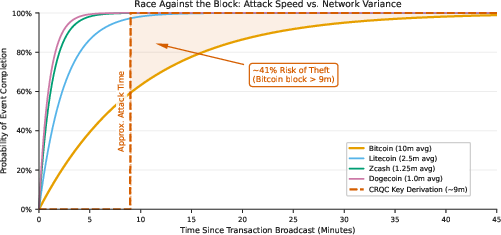

- Because some quantum computers can “pre‑compute” half the work and wait, the remaining time after seeing a public key could be about 9–12 minutes—close to Bitcoin’s average 10‑minute block time.

- That means early fast‑clock quantum computers might be able to grab transactions from the public “mempool” right as they’re being processed in some cryptocurrencies.

- Slow‑clock machines likely can’t do that at first; they would mainly threaten long‑exposed keys.

- Not all parts of crypto are equally at risk

- Proof‑of‑Work mining (like Bitcoin’s hashing) isn’t meaningfully helped by quantum algorithms in a way that would break consensus.

- Many coins are safer if public keys stay hidden until spending, but reuse or long exposure of a public key increases risk.

- Modern features introduce new risks:

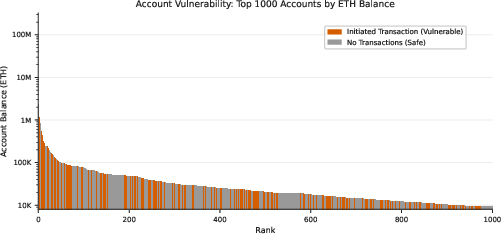

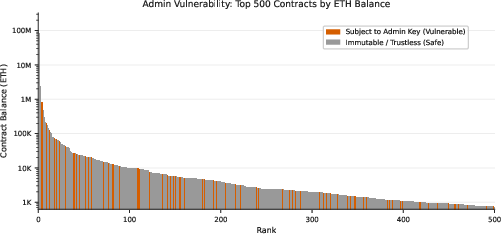

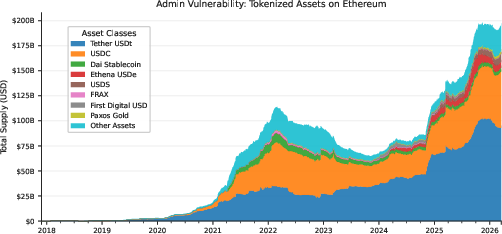

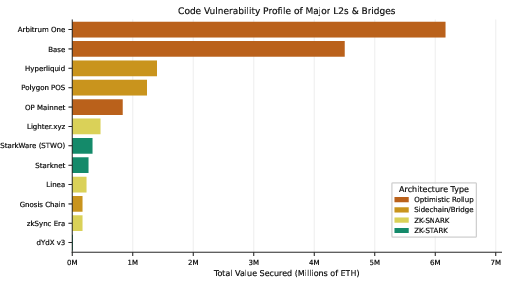

- Smart contracts and account‑based systems can expose public keys in different ways.

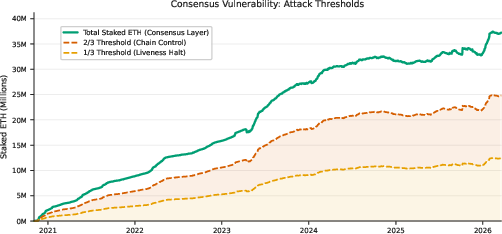

- Proof‑of‑Stake (validators’ keys), data availability sampling, and some privacy systems that rely on “trusted setups” can be vulnerable, including to on‑setup attacks that create reusable classical exploits.

- A responsible‑disclosure path for quantum cryptanalysis

- The authors argue it’s time to stop publishing full attack circuits but continue publishing solid resource estimates.

- They back their claims with a cryptographic ZK proof so others can verify the numbers without learning how to run the attack.

- Dormant assets are a special danger

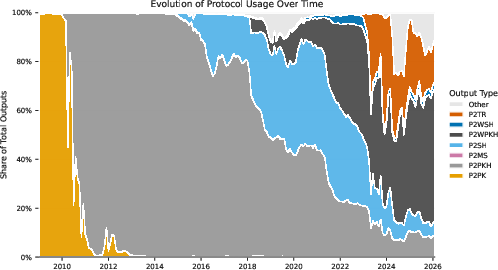

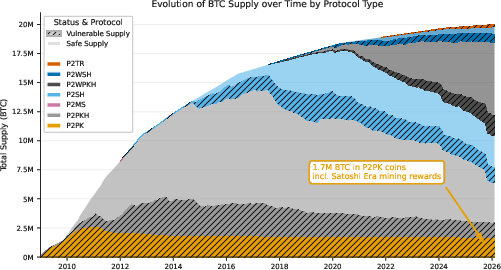

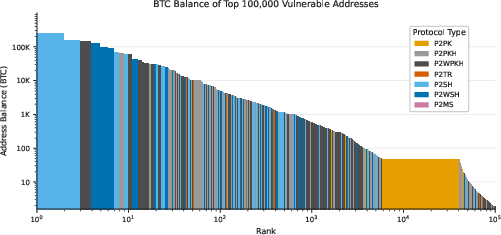

- Large pools of “abandoned” or long‑held assets (like old Bitcoin addresses that directly reveal public keys, including Pay‑to‑Public‑Key coins) could eventually be taken by quantum attackers.

- Because these wallets can’t update themselves, this creates a long‑term, fixed target worth tens or hundreds of billions of dollars.
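Returning to the timing finding in the list above, a back-of-envelope calculation shows how Toffoli counts turn into minutes. The sketch assumes the Toffoli gates run strictly one after another, each costing one control reaction time plus a fixed overhead. The 10 µs reaction time and 50% overhead are the figures mentioned in the Knowledge Gaps section of this page; the sequential model and the function name are simplifying assumptions made here, not the paper's scheduling analysis.

```python
REACTION_TIME_S = 10e-6   # assumed control reaction time: 10 microseconds
CONTROL_OVERHEAD = 0.5    # assumed extra 50% per Toffoli


def reaction_limited_minutes(toffoli_gates: int, primed_fraction: float = 0.0) -> float:
    """Minutes of remaining work if gates run one at a time.

    primed_fraction is the share of the circuit precomputed before the
    public key is known (0.5 models a "primed" machine doing half in advance).
    """
    seconds_per_toffoli = REACTION_TIME_S * (1.0 + CONTROL_OVERHEAD)
    remaining_gates = toffoli_gates * (1.0 - primed_fraction)
    return remaining_gates * seconds_per_toffoli / 60.0


for toffolis in (70_000_000, 90_000_000):
    full = reaction_limited_minutes(toffolis)
    primed = reaction_limited_minutes(toffolis, primed_fraction=0.5)
    print(f"{toffolis:,} Toffolis: full run {full:.2f} min, primed {primed:.2f} min")
```

Under these assumptions the primed figures fall in the same ballpark as the 9–12 minute window quoted above, though the paper's own derivation may differ.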

Why these results matter

- The window for safe operation of today’s elliptic‑curve‑based cryptocurrencies is shorter than many expected. Early fast‑clock quantum computers could threaten live transactions, not just old wallets.

- The threat is broader than basic payments. Smart contracts, staking, and certain scaling/privacy tools can be exposed in new ways.

- Confidence matters: even rumors can destabilize markets. Clear, verifiable estimates help communities act in time.
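One way to see why a window of about ten minutes matters is a toy race model. It assumes proof-of-work block arrivals are memoryless with a 10-minute mean (a standard approximation), that the victim's transaction would be confirmed in the very next block, and that the attacker wins whenever key recovery finishes first. These are simplifications introduced here; the paper does not provide this model, and real outcomes also depend on fees, propagation, and miner behavior.

```python
import math


def prob_attacker_finishes_first(attack_minutes: float,
                                 mean_block_minutes: float = 10.0) -> float:
    """P(no block arrives within attack_minutes) for exponential block intervals."""
    return math.exp(-attack_minutes / mean_block_minutes)


for minutes in (9.0, 12.0):
    p = prob_attacker_finishes_first(minutes)
    print(f"key recovery in {minutes:.0f} min -> attacker beats next block with p={p:.2f}")
```

In this toy model, a 9-minute attack beats the next block roughly 41% of the time and a 12-minute attack roughly 30% of the time, which is why even a borderline on-spend capability is a serious concern.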

What the paper recommends

Technical steps to start now

- Move to post‑quantum cryptography (PQC) as soon as possible. PQC algorithms already exist and are being used on the internet and in some blockchains.

- Use intermediate defenses while migrating:

- Avoid reusing public keys and minimize how long keys are publicly exposed.

- Use private mempools or commit‑reveal schemes so attackers can’t “see and snipe” transactions in real time.

- Upgrade validator and smart‑contract key practices in proof‑of‑stake systems.

- Continue rigorous, transparent resource reporting—but without publishing attack blueprints—and consider ZK proofs to build trust.
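The commit‑reveal idea in the list above can be sketched with a salted hash commitment. The sender first publishes only the commitment, waits until it is safely ordered on chain, and then reveals the transaction (which exposes the public key). This is a minimal illustration of the pattern, not a protocol from the paper; the function names and payload format are hypothetical, and a real design would also need replay protection and fee handling.

```python
import hashlib
import hmac
import secrets


def commit(payload: bytes) -> tuple[bytes, bytes]:
    """Return (commitment, salt). Only the commitment is published at first."""
    salt = secrets.token_bytes(32)   # random salt hides low-entropy payloads
    commitment = hashlib.sha256(salt + payload).digest()
    return commitment, salt


def verify_reveal(commitment: bytes, salt: bytes, payload: bytes) -> bool:
    """Check that a revealed (salt, payload) pair matches the earlier commitment."""
    expected = hashlib.sha256(salt + payload).digest()
    return hmac.compare_digest(commitment, expected)


# Hypothetical payload: a serialized transaction including the public key.
tx = b"pay 1 coin to Bob; pubkey=<hypothetical bytes>"
commitment, salt = commit(tx)

print(verify_reveal(commitment, salt, tx))                     # honest reveal
print(verify_reveal(commitment, salt, b"pay 1 coin to Eve"))   # tampered payload
```

An attacker who recovers the private key after the reveal cannot go back in time to register an earlier commitment, so the honest transaction keeps priority as long as the chain enforces commitment order.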

Policy and ecosystem steps

- Plan for “digital salvage” rules for dormant assets: laws and procedures to manage recovery, taxation, or destruction of abandoned, quantum‑vulnerable holdings in a controlled, legal way—so criminals or hostile states can’t scoop them up first.

- Coordinate standards and timelines for PQC migration across chains, including wallets, exchanges, and tokenized assets.

Bottom line

Quantum computers won’t break everything overnight, but credible, well‑supported estimates show that early fast‑clock machines could steal some transactions within minutes and unlock long‑exposed keys. The fix—migrating to post‑quantum cryptography—is known but hard. Starting now, using both technical and policy tools, gives the best chance to keep digital money secure as quantum technology matures.

Knowledge Gaps

Unresolved gaps, limitations, and open questions

The paper advances resource estimates and a disclosure model for quantum attacks on ECDLP-based cryptocurrencies, but it leaves several concrete issues unresolved that merit further research:

- Algorithm-level success probability and repetition overhead: The estimates do not quantify the per-run success probability of Shor’s algorithm (given nonzero logical error rates) or the expected number of repetitions needed to reach high-confidence key recovery; impacts on Toffoli counts and wall-clock time are not provided.

- Subroutine-level resource breakdown: There is no detailed allocation of logical qubits, T/Toffoli counts, and depths across major subroutines (e.g., point addition/multiplication pipelines, modular arithmetic, QFT/phase estimation, uncomputation), limiting independent validation and targeted optimization.

- Parameterization of windowed arithmetic: The chosen window size(s) and related tradeoffs (space vs time, precomputation tables, routing) are not specified; sensitivity of qubit and gate counts to these parameters remains unclear.

- Applicability beyond secp256k1: Resource estimates for other widely deployed curves (e.g., ed25519, NIST P-256, SM2) and pairing-friendly curves relevant to ZK systems (e.g., BLS12-381, BW6, BN254) are not quantified; relative difficulty across curves is not analyzed.

- On-setup attacks in practice: No resource estimates are given for recovering “toxic waste” in powers-of-tau ceremonies (or similar setups) for specific parameter choices in widely used zkSNARK ecosystems; does Shor on the relevant elliptic curves/finite fields make such backdoors feasible earlier than on-spend ECDLP attacks?

- Success criteria for “most inputs”: The ZK proof checks point addition correctness on a random sample and asserts sufficiency of “most inputs,” but does not quantify the tolerated failure rate for Shor’s algorithm nor bound the soundness error introduced by this approximation.

- Completeness and soundness of the ZK proof: The zkVM-based proof validates only the point addition subroutine on 9,000 random inputs seeded by a hash; it does not attest to the full circuit (e.g., scalar multiplication orchestration, phase estimation control logic), to worst-case correctness, or to end-to-end reversibility; the security assumptions (e.g., Fiat–Shamir style instantiation, zkVM correctness) are not formalized for this use case.

- Magic-state factory assumptions: The T-state production rate (e.g., 50,000 qubit-rounds per T state) and the resulting factory footprint assume specific distillation protocols and target error rates without detailing factory topology, code distances, factory counts, and their integration with scheduling/routing; robustness to hardware variation is not analyzed.

- Error-correction parameterization: Concrete code distances, logical error budgets per Toffoli/QFT, decoder throughput requirements, and layout/surgery schedules (including “yoked” storage and movement overhead) are not provided, preventing independent recomputation of the “<0.5M physical qubits” claim.

- Reaction-limited execution assumptions: The 10 μs control reaction time and 50% per-Toffoli control overhead are assumed but not stress-tested; sensitivity to slower classical control, decoder latencies, and feedback-control bottlenecks is not evaluated.

- Fast-clock vs slow-clock breakpoints: Quantitative break-even analyses (cycle time, gate times, factory round times) that separate feasibility of on-spend vs at-rest attacks across architectures are not provided; thresholds for when slow-clock platforms could reach on-spend capability (e.g., via improved factories) remain unspecified.

- “Primed machine” practicality: The approach to halve on-spend latency assumes indefinite maintenance of primed phase-estimation states; the required logical memory, idle error rates, code distance increases during wait, and opportunity cost (number of primed machines vs latency targets) are not analyzed.

- Parallelization and amortization in practice: Beyond noting state reuse and Montgomery’s trick, the paper does not quantify real-world throughput gains (e.g., multi-instance batching, merging inversions) for single- vs multi-target attacks and their implications for attack economics.

- Full-network on-spend viability: The feasibility of racing the network (mempool propagation, fee-bumping, latency, MEV competition, miner/validator behavior, private mempools) is not modeled; probabilities of successfully front-running/overwriting a victim transaction per chain are not quantified.

- Countermeasure efficacy modeling: The real-world effectiveness and latency impact of mitigations (e.g., private mempools, commit–reveal, adaptor/threshold signatures, ephemeral keys, hybrid PQC) against on-spend attackers are not quantified for major chains.

- Architecture-specific physical estimates: While superconducting estimates are provided, comparable numbers for photonic and spin-qubit platforms (and lower bounds for ion-trap/neutral-atom devices) are not detailed; cross-architecture resource/timeline comparisons are lacking.

- Hardware-level constraints and non-idealities: Practical issues such as crosstalk, calibration drift, cryo I/O bandwidth, wiring density, non-planarity penalties, long-range connectivity implementation costs, and thermal budgets are not factored into the physical-qubit and runtime claims.

- Scalability from 1.2k to 1.45k logical qubits: The assertion that scaling between these sizes poses “no meaningful challenges” is not supported with concrete layout, fabrication, and control-system analyses that capture non-linear overheads.

- Classical post-processing overheads: The classical post-processing workload (continued fractions, modular reductions, collision resolution) and its integration with control loop timing in reaction-limited execution are not analyzed for end-to-end latency.

- Exposure patterns across chains: The rate and distribution of public-key exposure (e.g., key reuse, script types, EOA usage, compressed keys, address-type distributions) per blockchain are not quantified, limiting risk estimates for at-rest vs on-spend attacks.

- Coverage of non-ECDLP cryptographic components: Many ecosystems rely on multiple primitives (e.g., hash-based commitments, Merkle trees, SNARK verifiers, BLS signatures); the paper does not systematically map which components become vulnerable via on-setup or ECDLP-related avenues and when.

- PQC migration costs at protocol level: Concrete on-chain costs (signature sizes, verification gas, throughput, latency, bandwidth/storage impacts for nodes, fee dynamics) and migration timelines for Bitcoin, Ethereum, Solana, and others are not computed.

- Hybrid and incremental transitions: The viability and performance of hybrid ECDLP+PQC schemes (e.g., Schnorr+Dilithium, threshold+PQC), their deployability in existing script/VMs, and the residual security during transition are not evaluated.

- Effects of algorithmic improvements: No sensitivity analysis is provided for potential future reductions in Toffoli counts, qubit counts, or T-factory overheads; risk windows under plausible improvement trajectories remain unbounded.

- Dormant asset policy design: The “digital salvage” concept lacks operational/legal design details (custody, adjudication, taxation, anti-abuse controls), cross-jurisdictional alignment, and analysis of game-theoretic impacts (market confidence, fork incentives, miner/validator responses).

- Cross-chain dormant asset quantification: While Bitcoin P2PK exposure is discussed, comparable measurements for Ethereum (e.g., lost EOAs), Solana, and other chains (including multisig/DAOs/bridges) are not presented.

- Governance feasibility: The social-layer feasibility of protocol changes (e.g., burns, forced migrations, script deprecations), their consensus requirements, and contingency plans if consensus fails (fork economics) are not analyzed.

- Standards for responsible quantum-disclosure: Beyond a single zkVM proof, a general framework for reproducible, auditable, and safe disclosure of quantum cryptanalysis (e.g., statement formats, third-party attesters, test coverage, formal soundness) is not proposed.

- Thresholds for “safe” mempool/settlement times: The paper does not translate resource estimates into actionable guidance for minimum confirmation times, batching windows, or commit–reveal delays that would neutralize first-generation fast-clock on-spend attacks.

- Impact on non-crypto digital assets: While tokenization and RWAs are mentioned, concrete mappings of custodial flows, key-management patterns, and contract/gateway vulnerabilities to the ECDLP threat model and migration pathways are not provided.

- Formal modeling of attacker economics: No quantitative model of attacker cost (CAPEX/OPEX, machine count, amortization over many keys, expected revenue under network conditions) vs defender cost for countermeasures and migration is provided.

- Verification tooling and datasets: Reproducible artifacts beyond the zkVM statement (e.g., abstracted resource models, schedulers, open benchmarks for elliptic-curve subroutines) are not provided to enable independent replication under varied assumptions.

- Explicit thresholds for slow-clock on-spend feasibility: The paper speculates that improved magic-production may enable slow-clock on-spend attacks but does not define concrete performance targets (round times, factory throughput, qubit counts) that would suffice.

- Compressed/hashed key pathways: The effect of compressed keys and hashed public-key encodings (e.g., P2PKH, P2WPKH) on timing windows and precomputation strategies for on-spend attacks is not quantified.

- Chain-specific consensus impacts: For PoS systems (e.g., Ethereum), the paper references validator risks but does not quantify the fraction of exposed keys, plausible reorg depths, or the resource threshold for majority compromise in on-spend/on-setup modes.
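The parallelization item in the list above mentions Montgomery's trick. For readers unfamiliar with it, here is the classical version: it replaces many modular inversions with a single inversion plus a few multiplications per element. The sketch works over the secp256k1 field prime and says nothing about how the paper applies the idea inside a quantum circuit; the function name is an assumption.

```python
P = 2**256 - 2**32 - 977   # secp256k1 field prime


def batch_inverse(values, p=P):
    """Invert every element of `values` modulo p using one modular inversion.

    All inputs must be nonzero modulo p.
    """
    n = len(values)
    # prefix[i] holds the product of the first i values.
    prefix = [1] * (n + 1)
    for i, v in enumerate(values):
        prefix[i + 1] = prefix[i] * v % p

    # Single expensive step: invert the product of everything.
    inv_running = pow(prefix[n], -1, p)

    inverses = [0] * n
    for i in range(n - 1, -1, -1):
        # inv_running is currently the inverse of values[0] * ... * values[i].
        inverses[i] = inv_running * prefix[i] % p
        inv_running = inv_running * values[i] % p
    return inverses


values = [3, 5, 7, 2**200 + 1]
for v, inv in zip(values, batch_inverse(values)):
    print((v * inv) % P)
```

Because inversion is far more expensive than multiplication, sharing one inversion across many targets is what makes multi-key attacks cheaper per key than attacking each key in isolation.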

Practical Applications

Immediate Applications

The following items translate the paper’s findings into deployable actions, tools, and workflows across industry, academia, policy, and day-to-day use.

- Boldly prioritize PQC migration using new, substantiated risk timelines

- Sectors: finance, software, cybersecurity, blockchain

- What: Update risk registers and roadmaps using the paper’s ECDLP-breaking estimates (fewer than 1200 logical qubits with fewer than 90M Toffolis, or fewer than 1450 logical qubits with fewer than 70M Toffolis; a ≈9–12 min “on-spend” window on fast-clock platforms with fewer than 500k physical qubits). Re-rank PQC initiatives for wallets, exchanges, custodians, and L1/L2s.

- Assumptions/Dependencies: Surface code with ~1e-3 physical error rates, 10 µs reaction times, and planar connectivity; community governance to approve upgrades.

- Adopt private orderflow/mempools and commit–reveal for transactions to block “on-spend” attacks

- Sectors: blockchain (Ethereum, Solana), software/infrastructure

- What: Route transactions via private relays (e.g., MEV-Boost/OFAs on Ethereum) and add commit–reveal or encrypted-then-reveal flows to reduce public key exposure before inclusion. For Bitcoin-like chains, explore private relay agreements and transaction package mechanisms that minimize pre-inclusion key disclosure.

- Assumptions/Dependencies: Relay/operator trust models, network incentive alignment (MEV dynamics), latency budgets, protocol support for commit–reveal patterns.

- Enforce strict “no key reuse” and minimize public key exposure by default in wallets

- Sectors: consumer finance, wallets, exchange/custody

- What: Ship wallet updates that auto-rotate addresses, discourage reuse, and delay revealing public keys until absolutely necessary. For UTXO chains, default to script types that keep public keys hashed until spend.

- Assumptions/Dependencies: UX acceptance (address changes), developer effort, fee overhead from more outputs/addresses.

- Inventory and proactively mitigate “at-risk” onchain assets (e.g., P2PK, reused keys)

- Sectors: finance, analytics, custodians, exchanges

- What: Build scanners/dashboards to quantify dormant/reused-key exposure (e.g., Bitcoin P2PK, reused P2PKH, vanity addresses). Proactively sweep or rebind assets to safer locking scripts or PQC-compatible upgradeable contracts where governance allows.

- Assumptions/Dependencies: Onchain analytics accuracy, legal authority to move organizationally held assets, fee budgets.

- Implement trusted-setup hygiene and auditing for zk systems; prefer transparent setups

- Sectors: software/security, L2 rollups, privacy apps (e.g., Tornado-like), data availability sampling (DAS)

- What: Audit powers-of-tau and ceremony artifacts; rotate parameters; adopt transparent-proof systems (e.g., STARKs) or MPC with verifiable randomness to remove “on-setup” toxic-waste backdoors.

- Assumptions/Dependencies: Performance/fee trade-offs of transparent systems; circuit- and proof-system migration costs.

- Launch internal red-team exercises using the paper’s ZK-verified resource estimates

- Sectors: software/security, exchanges, L1/L2 core teams, auditors

- What: Tabletop scenarios for both fast-clock and slow-clock timelines; model “primed-machine” attackers (halved on-spend window). Use the ZK proof method to verify claims without disclosing attack circuits.

- Assumptions/Dependencies: Staff training, simulation tools, executive attention.

- Harden validator operations in PoS systems against ECC-compromise risks

- Sectors: blockchain (Ethereum and others using ECDSA/BLS), infrastructure

- What: Reduce pre-inclusion exposure of validator signatures; adopt threshold/multi-operator signing to limit single-key compromise impact; prepare emergency key rotation/exit procedures; stress-test slashing and reorg scenarios.

- Assumptions/Dependencies: Consensus/governance approval, client compatibility, validator incentives.

- Communicate clearly to reduce FUD while mobilizing action

- Sectors: policy, communications, finance

- What: Publish guidance that (a) clarifies PoW is not practically breakable by Grover in this context and (b) explains the real ECC risks and timelines; provide ongoing status dashboards on PQC migration progress.

- Assumptions/Dependencies: Cross-community coordination; consistent messaging.

- Update vendor/security requirements for firmware, boot, and crypto libraries

- Sectors: hardware wallets, HSMs, secure boot, cloud infra

- What: Require PQC readiness for signatures/attestation and define timelines for hybrid or post-quantum firmware updates, secure boot chains, and SSH/TLS dependencies that touch crypto backends serving blockchain infra.

- Assumptions/Dependencies: Supply-chain coordination; PQC implementation maturity.

- Make PQC-capable, upgradeable contract patterns the default for new deployments

- Sectors: DeFi, stablecoins, tokenization (RWA), enterprise

- What: Deploy upgradeable or multi-algorithm signature gates (hybrid ECDSA+PQC) for token contracts, multisigs, and bridges; enable admin rotations to PQC keys without pausing asset operations.

- Assumptions/Dependencies: Governance and user trust in upgradeability; gas/size overhead.

- Introduce “quantum risk” dashboards and alerts at chain and app levels

- Sectors: analytics, security, finance

- What: Real-time monitoring of mempool public key exposure windows; flagged dormant assets; zk parameter exposure; validator key hygiene; rollup/DAS parameter audit status.

- Assumptions/Dependencies: Data availability and indexing, community-agreed risk metrics.

- Adopt responsible-disclosure workflows anchored in ZK proofs

- Sectors: academia, security research, software vendors

- What: Publish ZK-verified claims about vulnerabilities/resource bounds while withholding exploit specifics; standardize tooling (e.g., zkVMs) and peer-review norms for cryptanalytic disclosures.

- Assumptions/Dependencies: Editorial/publisher acceptance; reproducible zk stacks; legal guidance.

- Pause/sequence high-risk cryptographic features until mitigations are in place

- Sectors: protocol R&D, L2s, privacy apps

- What: Gate launches of features that expand ECC exposure surface (new zk gadgets, DA sampling variants) behind mitigations (transparent setups, shorter exposure windows, hybrid signatures).

- Assumptions/Dependencies: Product roadmaps, user demand, governance procedures.

- User-level hygiene: rotate addresses and prefer wallets with private orderflow support

- Sectors: daily life, consumer finance

- What: Use per-payment addresses, avoid address reuse, opt into wallets that support private relays/commit–reveal and that can migrate to PQC when available.

- Assumptions/Dependencies: Wallet feature availability; user education.
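The inventory item in the list above (scanners for P2PK and reused-key exposure) can be sketched as a simple classification over unspent outputs. The record layout, field names, and sample data below are hypothetical; a real scanner would parse actual chain data and handle many more script types.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TxOutput:
    script_type: str   # e.g. "P2PK" or "P2PKH" (hypothetical labels)
    address: str       # hypothetical identifier for the locking key
    value_sats: int
    spent: bool


def classify_exposure(outputs):
    """Sum unspent value by whether the public key is already visible on chain."""
    # Any address that has spent before has revealed its public key.
    revealed = {o.address for o in outputs if o.spent}
    totals = {"exposed_p2pk": 0, "exposed_reuse": 0, "hidden": 0}
    for o in outputs:
        if o.spent:
            continue
        if o.script_type == "P2PK":
            totals["exposed_p2pk"] += o.value_sats    # key sits in the script itself
        elif o.address in revealed:
            totals["exposed_reuse"] += o.value_sats   # key leaked by an earlier spend
        else:
            totals["hidden"] += o.value_sats          # key still behind a hash
    return totals


# Made-up sample data for illustration only.
sample = [
    TxOutput("P2PK", "old-miner", 5_000, spent=False),
    TxOutput("P2PKH", "alice", 1_000, spent=True),
    TxOutput("P2PKH", "alice", 2_000, spent=False),
    TxOutput("P2PKH", "bob", 3_000, spent=False),
]
print(classify_exposure(sample))
```

Outputs in the two exposed buckets are candidates for at-rest attacks, while hidden outputs only become vulnerable during the on-spend window.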

Long-Term Applications

The following items require further R&D, standardization, scaling, or governance decisions before broad deployment.

- Full PQC migration for digital signatures and key exchange on blockchains

- Sectors: blockchain, software, finance

- What: Introduce PQC address/script types (e.g., Dilithium/Falcon/SPHINCS+) and hybrid transitions; redesign validator signing (e.g., BLS→PQC or hybrid). Replace ECDH with Kyber-like KEMs where applicable.

- Assumptions/Dependencies: Onchain cost increases (larger keys/signatures), throughput trade-offs, audited implementations, user acceptance.

- Protocol-level commit–reveal or encrypted-transaction pipelines by default

- Sectors: blockchain infra

- What: Embed commit–reveal into transaction lifecycles (e.g., pre-commit hash, reveal at inclusion) or adopt mempool encryption protocols. Aim to minimize any public key exposure ahead of finality.

- Assumptions/Dependencies: Consensus changes, compatibility with MEV/PBS designs, latency impact.

- Replace trusted setups with transparent or post-quantum-secure parameter generation

- Sectors: L2 rollups, privacy protocols, DAS

- What: Move to transparent proof systems (e.g., STARKs) or hardened MPC ceremonies with verifiably random beacons and PQC security. Build ceremony-proof registries and continuous parameter-rotation frameworks.

- Assumptions/Dependencies: Proof size/time overheads, developer tooling, onchain verifier costs.

- Governance, economic, and legal frameworks for “digital salvage”

- Sectors: policy, finance, judiciary, tax

- What: Define lawful, regulated processes for handling dormant, quantum-vulnerable assets (e.g., P2PK BTC): salvage licensing, tax treatment, optional “burn on salvage” community policies, or fork-based quarantines.

- Assumptions/Dependencies: Jurisdictional harmonization, political consensus, enforceability; community preference for immutability vs stability.

- Quantum-aware consensus and validator designs

- Sectors: blockchain core devs

- What: Architect validator duties and signatures to be resilient to short on-spend windows (e.g., aggregation with commit–reveal; PQC threshold schemes; reduced pre-inclusion exposure). Incorporate emergency quorums for rapid key rotation.

- Assumptions/Dependencies: Protocol complexity, liveness/safety proofs under new assumptions.

- Standardized “quantum risk” reporting, audits, and insurance markets

- Sectors: finance, insurance, auditors

- What: Define disclosure standards (e.g., % exposure to ECC by script type, zk parameter provenance), audit guidelines for PQC readiness, and insurance products that price quantum theft/compromise risks.

- Assumptions/Dependencies: Historical loss modeling scarcity, actuarial acceptance, regulatory oversight.

- Network-level accelerations to shrink exposure windows

- Sectors: blockchain infra

- What: Reduce mempool dwell times via proposer–builder separation optimizations, faster slots/blocks (where safe), or inclusion commitments that limit the period between key reveal and finality.

- Assumptions/Dependencies: Security margins against reorgs/DoS; hardware/network capabilities.

- PQC-native multisig/bridge/DAO designs and standards

- Sectors: DeFi, enterprise, tokenization

- What: Standardize PQC multisig primitives, threshold schemes, and bridge attestations; upgrade DAO voting signatures to PQC; provide migration paths for existing deployments.

- Assumptions/Dependencies: Interoperability, verifiability costs, user governance acceptance.

- Tooling for chain-wide ECC→PQC migrations with minimal user friction

- Sectors: software, wallets, exchanges

- What: Migration toolkits/SDKs that automate key regeneration, hybrid signature issuance, and safe state transitions; bulk-sweep workflows for custodians.

- Assumptions/Dependencies: Backwards compatibility, robust failure modes, UI/UX changes.

- Quantum-architecture-aware threat modeling across critical ECC deployments

- Sectors: cybersecurity, academia, standards bodies

- What: Institutionalize fast-clock vs slow-clock scenarios in standards and risk frameworks (TLS, SSH, e-passports, IoT signatures), mirroring on-spend vs at-rest taxonomy for non-blockchain use.

- Assumptions/Dependencies: Consensus in standards groups, empirical hardware progress tracking.

- Standardize ZK-based responsible disclosure for high-impact cryptanalysis

- Sectors: academia, publishers, software vendors

- What: Journals and vendors accept ZK-verified claims for embargoed vulnerabilities; develop reproducible zkVM templates and peer-review criteria.

- Assumptions/Dependencies: Legal/IP policies, reproducibility at scale, community trust.

- Research and adoption of high-rate QEC (e.g., qLDPC) to refine timelines

- Sectors: academia, quantum hardware

- What: If higher-rate codes (consistent with engineering constraints) mature, revise attack timelines and defenses; conversely, plan mitigations that remain robust under faster-than-expected quantum progress.

- Assumptions/Dependencies: Decoder performance, hardware connectivity advances, stable error models.

- Chain-level “quantum posture” registries and gating for critical features

- Sectors: blockchain governance, regulators

- What: Maintain registries of chain/app PQC readiness; require PQC for certain RWA/stablecoin issuance tiers; gate new high-risk features behind demonstrated mitigations.

- Assumptions/Dependencies: Regulatory clarity, issuer cooperation, global coordination.

- Educational and UX programs for end-users and developers

- Sectors: education, consumer finance, dev tooling

- What: Curriculum and in-wallet prompts that explain address reuse risks, mempool exposure, and migration steps; developer guidelines for safe-by-default cryptography in smart contracts.

- Assumptions/Dependencies: Funding, integration into wallet ecosystems, measurable outcomes.

- Continuous, community-driven attack-surface minimization

- Sectors: open-source, protocol R&D

- What: Ongoing review to retire ECC-heavy features, refactor protocols toward PQC and transparent proofs, and adopt “defense-in-depth” patterns that assume adversaries with primed, fast-clock CRQCs.

- Assumptions/Dependencies: Sustained maintainer engagement, grant support, governance flexibility.

Collectively, these applications translate the paper's advances (precise quantum attack resource estimates, the fast-clock vs slow-clock threat model, the ZK proof approach to responsible disclosure, and concrete blockchain vulnerability analyses) into a prioritized, actionable migration path toward post-quantum resilience that also addresses policy, governance, and real-world operational constraints.
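The on-spend versus at-rest distinction that runs through these applications comes down to comparing the time an attacker needs to derive a private key with the time a public key stays exposed. The sketch below illustrates that comparison in Python. The names `Exposure` and `classify_exposure`, the feasibility rule, and every numeric input are hypothetical illustrations rather than figures or methods taken from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Exposure:
    """How long a public key is visible before the funds it guards are safe."""
    label: str
    window_seconds: float  # float("inf") models a key that stays exposed indefinitely


def classify_exposure(attack_seconds: float, exposure: Exposure) -> str:
    """Return which attack class (if any) is feasible for this exposure.

    Assumed rule of thumb: an attack is feasible when the time needed to
    derive the private key does not exceed the exposure window.
    """
    if attack_seconds <= 0:
        raise ValueError("attack_seconds must be positive")
    if exposure.window_seconds == float("inf"):
        return "at-rest"  # any finite attack time eventually succeeds
    if attack_seconds <= exposure.window_seconds:
        return "on-spend"  # key derived before the transaction confirms
    return "not feasible in window"


# Assumed inputs: a 10-minute mempool dwell time, a minutes-scale fast-clock
# attack, and a days-scale slow-clock attack.
pending_tx = Exposure("pending transaction", window_seconds=600.0)
reused_address = Exposure("reused address", window_seconds=float("inf"))
fast_clock_attack = 9 * 60.0
slow_clock_attack = 5 * 86400.0

for attack in (fast_clock_attack, slow_clock_attack):
    for target in (pending_tx, reused_address):
        print(f"{attack:>9.0f}s vs {target.label}: {classify_exposure(attack, target)}")
```

Under these assumed numbers, only the fast-clock attack fits inside the pending-transaction window, while both attack speeds reach the indefinitely exposed key. This is why shortening dwell times helps against on-spend attacks but does nothing for at-rest exposure.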

Glossary

- Abandoned assets: Dormant cryptocurrency holdings (e.g., lost keys, inactive wallets) that cannot migrate and may be vulnerable to future quantum attacks. "the enduring concern of 'abandoned' assets."

- Account model: A blockchain state model that tracks balances by account addresses rather than UTXOs, typical of Ethereum-like systems. "identifying distinct risks to its account model, smart contract governance"

- At-Rest Attacks: Quantum attacks that target public keys exposed for long periods, allowing ample time to derive private keys. "At-Rest Attacks: Attacks targeting public keys that remain exposed onchain or offchain for long periods of time"

- Bicycle architecture: A hardware/encoding layout used with certain qLDPC codes that leverages specific connectivity patterns to reduce qubit costs. "the 'bicycle' architecture used for 2-gross qLDPC codes"

- commit-reveal schemes: Protocols where a party first commits to a value and reveals it later to prevent front-running or timing attacks. "commit-reveal schemes"

- Coordinated Vulnerability Disclosure (CVD): A security practice where vulnerabilities are reported privately and disclosed publicly after remediation or an agreed window. "Responsible Disclosure or Coordinated Vulnerability Disclosure (CVD)"

- cryptographically-relevant quantum computers (CRQCs): Quantum computers large and reliable enough to threaten deployed cryptosystems like RSA and ECC. "cryptographically-relevant quantum computers (CRQCs)"

- CSPRNG: A cryptographically secure pseudo-random number generator used to produce unpredictable, secure randomness. "cryptographically secure pseudo-random number generator (CSPRNG)"

- Data Availability Sampling mechanism: A protocol in which nodes check that transaction or rollup data is available by sampling random pieces of it rather than downloading all of it; its security can be undermined if the underlying parameters are compromised. "Data Availability Sampling mechanism"

- defense-in-depth principle: A security strategy that layers multiple independent protections to reduce risk. "defense-in-depth principle"

- digital salvage: A proposed regulated framework for recovering dormant, vulnerable digital assets to prevent illicit seizure. "various frameworks of 'digital salvage'"

- div batching: A circuit optimization that batches modular inversions across parallel instances to reduce overall cost. "div batching"

- Elliptic Curve Discrete Logarithm Problem (ECDLP): Given a point and its scalar multiple on an elliptic curve, the problem of finding the scalar; basis of ECC security. "the 256-bit Elliptic Curve Discrete Logarithm Problem"

- encoding rate: The ratio of logical to physical qubits in an error-correcting code; higher rate implies more efficient use of qubits. "logical-to-physical qubit ratio also known as the code's encoding rate"

- fast-clock (architecture): Quantum hardware with fast gates and short error-correction cycles (e.g., superconducting, photonic) enabling quick attacks. "'fast-clock' (such as superconducting and photonic)"

- Fear, Uncertainty and Doubt (FUD): Communication tactics that undermine confidence in systems by spreading anxiety or ambiguity. "Fear, Uncertainty and Doubt (FUD)"

- Fiat-Shamir heuristic: A method to turn interactive proofs into non-interactive ones using hash functions to simulate challenges. "the Fiat-Shamir heuristic"

- Grover's algorithm: A quantum search algorithm offering a quadratic speedup over classical unstructured search; discussed in connection with Proof-of-Work's resistance to quantum attack. "Grover's algorithm"

- Kerckhoffs's Principle: The doctrine that a system’s security should rely on key secrecy, not secrecy of its design. "Kerckhoff's Principle"

- Montgomery's trick: A technique to compute multiple modular inversions efficiently by batching, reducing overall inversion cost. "Montgomery's trick"

- On-Setup Attacks: One-time quantum attacks on protocol setup parameters that create reusable classical backdoors. "On-Setup Attacks: Attacks targeting fixed public protocol parameters that produce a universal reusable backdoor"

- On-Spend Attacks: Quantum attacks executed within a transaction’s settlement window by deriving the key before confirmation. "On-Spend Attacks: Attacks targeting transactions in transit."

- Pinnacle architecture: A proposed high-rate code architecture with non-planar, higher-degree connectivity aiming to reduce qubit counts further. "the recently introduced 'Pinnacle' architecture"

- planar connectivity: A hardware layout constraint where qubits connect locally on a plane, common in superconducting devices. "planar connectivity"

- planar degree-four connectivity: A planar hardware graph where each qubit connects to up to four neighbors, reflecting typical chip layouts. "planar degree-four connectivity"

- Post-Quantum Cryptography (PQC): Cryptographic schemes believed secure against quantum adversaries, intended to replace RSA/ECC. "Post-Quantum Cryptography (PQC)"

- powers-of-tau trusted setup ceremony: A protocol to generate public parameters for certain ZK systems; compromising it reveals “toxic waste.” "powers-of-tau trusted setup ceremony"

- Proof-of-Stake consensus: A consensus mechanism where validators stake assets to propose/validate blocks; validator keys are a quantum target. "Proof-of-Stake consensus"

- public mempool: The public queue of pending transactions broadcast to the network before confirmation. "public mempool transactions"

- qLDPC codes: Quantum Low-Density Parity-Check codes that promise higher rates by leveraging sparse parity checks (often with long-range links). "quantum Low-Density Parity-Check (qLDPC) codes"

- qubit-rounds: A space-time resource unit, the number of qubits multiplied by the number of error-correction rounds they are used for, e.g., in magic-state distillation costs. "50,000 qubit-rounds"

- reaction-limited fashion: An execution regime where run time is dominated by classical control reaction latency rather than gate depth. "reaction-limited fashion"

- Responsible Disclosure: The practice of reporting vulnerabilities privately and publishing details after remediation or a set timeline. "Responsible Disclosure"

- secp256k1: The specific 256-bit elliptic curve used by Bitcoin/Ethereum for ECDSA, target of the ECDLP attack. "secp256k1"

- SHA-256 cryptographic hash: A widely used 256-bit hash function for commitments and fingerprints in proofs. "SHA-256 cryptographic hash"

- SHAKE256 extendable-output function (XOF): A Keccak-based hash variant that can output arbitrary-length pseudorandom bits. "SHAKE256 extendable-output function (XOF)"

- slow-clock (architecture): Quantum hardware with slower gates/cycles (e.g., neutral atoms, ion traps), limiting on-spend feasibility. "'slow-clock' (such as neutral atom and ion trap)"

- SP1 zero-knowledge virtual machine (zkVM): A proving system that verifies program execution in zero knowledge. "the SP1 zero-knowledge virtual machine (zkVM)"

- state reuse: An optimization reusing the phase-estimation state to derive multiple keys per run. "state reuse"

- surface code: A topological quantum error-correcting code on a 2D lattice with high thresholds and local connectivity. "surface code error correction"

- T state: A magic state enabling fault-tolerant implementation of non-Clifford T gates via distillation. "A T state of sufficiently low error can be produced in 50,000 qubit-rounds."

- Toffoli gates: Three-qubit controlled-controlled-NOT gates that dominate fault-tolerant resource counts in these circuits. "70 million Toffoli gates"

- toxic waste: Secret parameters discarded after a trusted setup; recovering them enables catastrophic forgery. "the so-called 'toxic waste'"

- windowed arithmetic: A method to speed scalar multiplication or exponentiation by grouping bits into windows. "'windowed arithmetic' technique"

- yokes: A layout technique for densely storing logical qubits in surface code with protective structures. "protected by 'yokes'"

- zero-knowledge proof (ZK proof): A cryptographic proof revealing nothing beyond the truth of a statement, used here to validate resource claims. "zero-knowledge (ZK) proof"

- zk-rollup: A Layer 2 scaling approach that uses zero-knowledge proofs to compress transactions; vulnerable if setup parameters are compromised. "a vulnerable zk-rollup of Ethereum"
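To make the "Montgomery's trick" and "div batching" entries concrete, here is a minimal classical sketch of batched modular inversion in Python. It trades n inversions for a single inversion plus roughly 3n multiplications. The function name `batch_inverse` is a hypothetical label, and the sketch shows only the classical form of the trick; how the paper applies batching inside its quantum circuits is not reproduced here.

```python
def batch_inverse(values: list[int], p: int) -> list[int]:
    """Invert every element of `values` modulo prime `p` with one modular inversion."""
    if not values:
        return []
    if any(v % p == 0 for v in values):
        raise ZeroDivisionError("0 has no inverse modulo p")

    # prefix[i] = values[0] * ... * values[i] (mod p)
    prefix = []
    acc = 1
    for v in values:
        acc = acc * v % p
        prefix.append(acc)

    # The single inversion: the inverse of the product of all inputs.
    inv_acc = pow(acc, -1, p)  # three-argument pow with -1 needs Python 3.8+

    inverses = [0] * len(values)
    for i in range(len(values) - 1, 0, -1):
        # Here inv_acc equals (values[0] * ... * values[i])^-1 mod p.
        inverses[i] = inv_acc * prefix[i - 1] % p
        inv_acc = inv_acc * values[i] % p
    inverses[0] = inv_acc
    return inverses


# Usage with the secp256k1 base-field prime; the inputs are arbitrary examples.
P = 2**256 - 2**32 - 977
xs = [3, 7, 2**200 + 5, P - 1]
invs = batch_inverse(xs, P)
print(all(x * inv % P == 1 for x, inv in zip(xs, invs)))
```

The backward pass peels one factor off the running inverse at each step, which is why a single call to `pow(acc, -1, p)` suffices no matter how many values are batched.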
