- The paper introduces ObjectMorpher, a framework using high-fidelity 3D Gaussian Splatting for photorealistic and non-rigid image editing.

- The method combines ARAP-constrained deformable graphs with linear blend skinning to ensure geometric consistency and preserve object identity.

- Experimental results show near-instant drag interaction (10 ms latency), total edit times on the order of tens of seconds, and superior human preference scores, outperforming 2D and other 3D-aware systems.

Introduction and Motivation

Traditional image editing methods predominantly operate in 2D pixel space, leading to limited controllability and geometric ambiguity, especially for object-level manipulations that involve non-rigid deformations or pose changes. Existing frameworks—ranging from prompt-driven diffusion models to drag-based interactive methods—lack explicit 3D awareness, making it challenging to preserve object identity, maintain geometric plausibility, or reintegrate edited objects harmoniously into complex scenes. Prior 3D-aware systems either require heavy per-image optimization or depend on incomplete and low-fidelity monocular reconstructions, resulting in slow, artifact-prone, and inflexible editing capabilities.

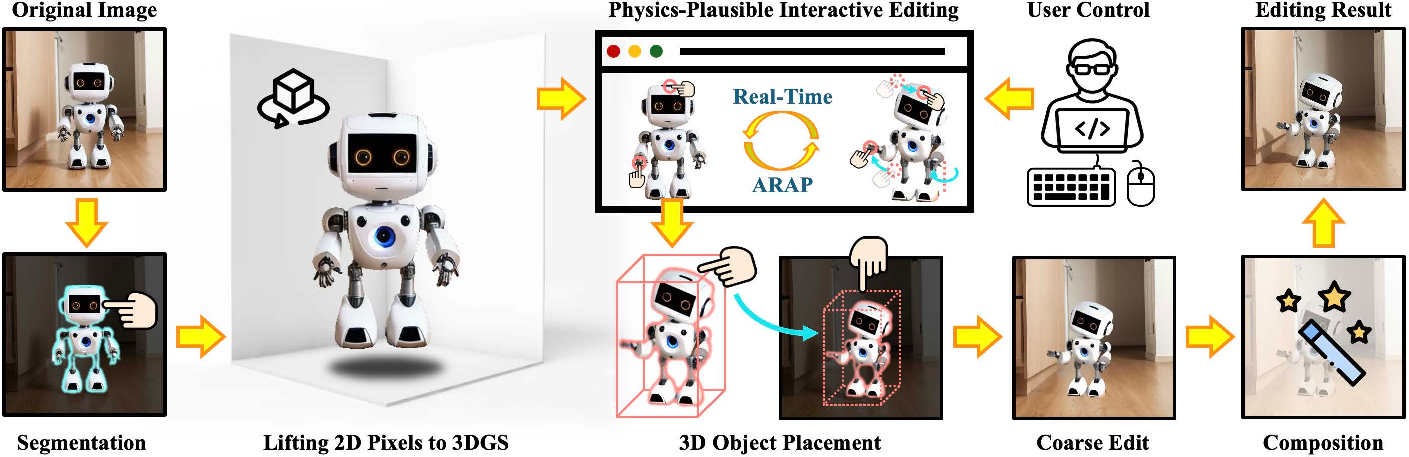

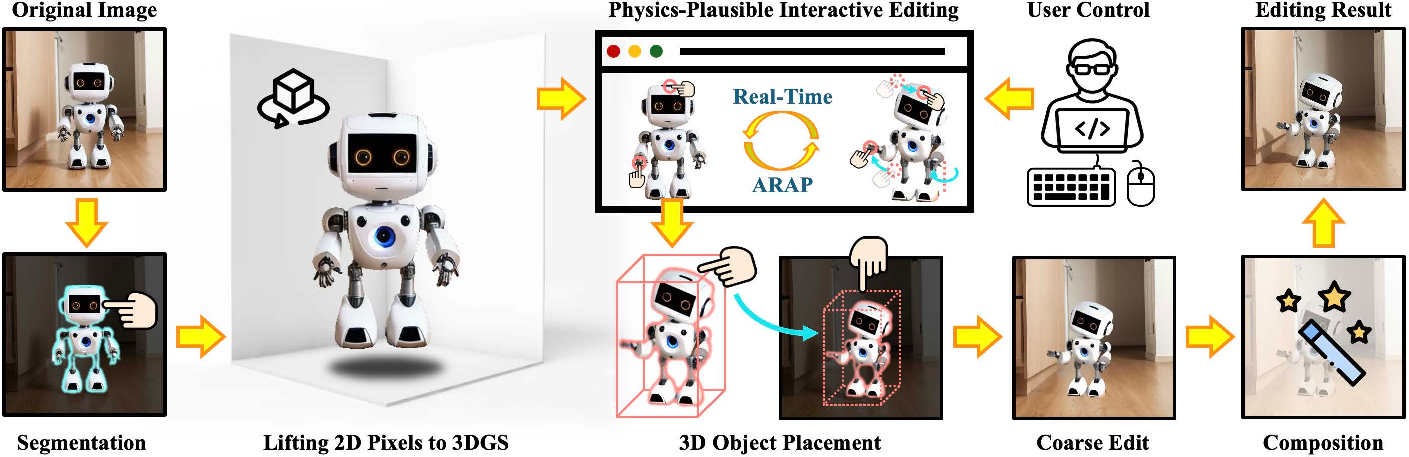

ObjectMorpher addresses these bottlenecks with an interactive, geometry-grounded framework that uses high-fidelity 3D Gaussian Splatting (3DGS) as an editable object representation. Its pipeline lifts the object to 3D, applies non-rigid control under physical constraints, and uses a composite diffusion module to blend the edited object back into the scene, yielding fine-grained, efficient, and photorealistic edits with strong user controllability.

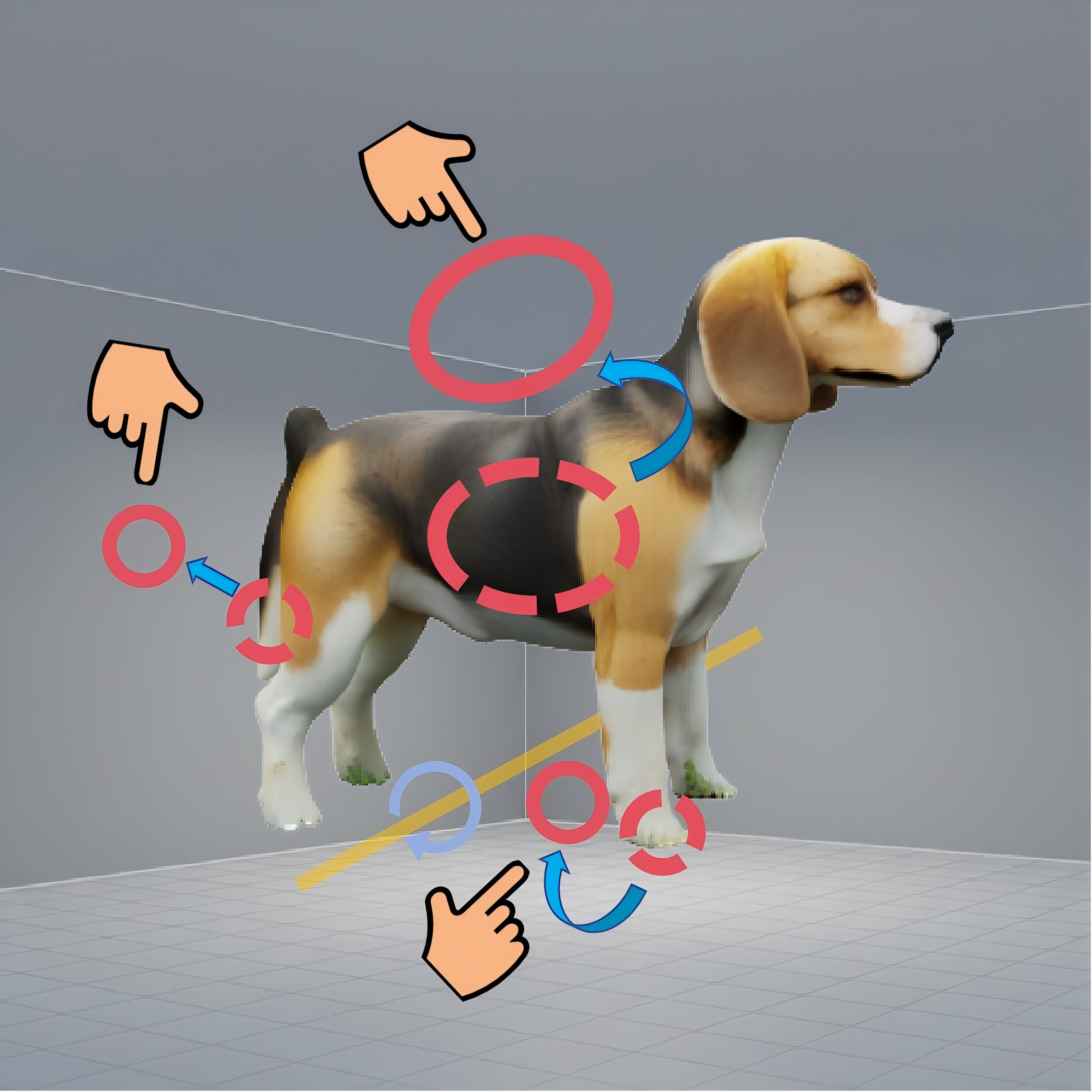

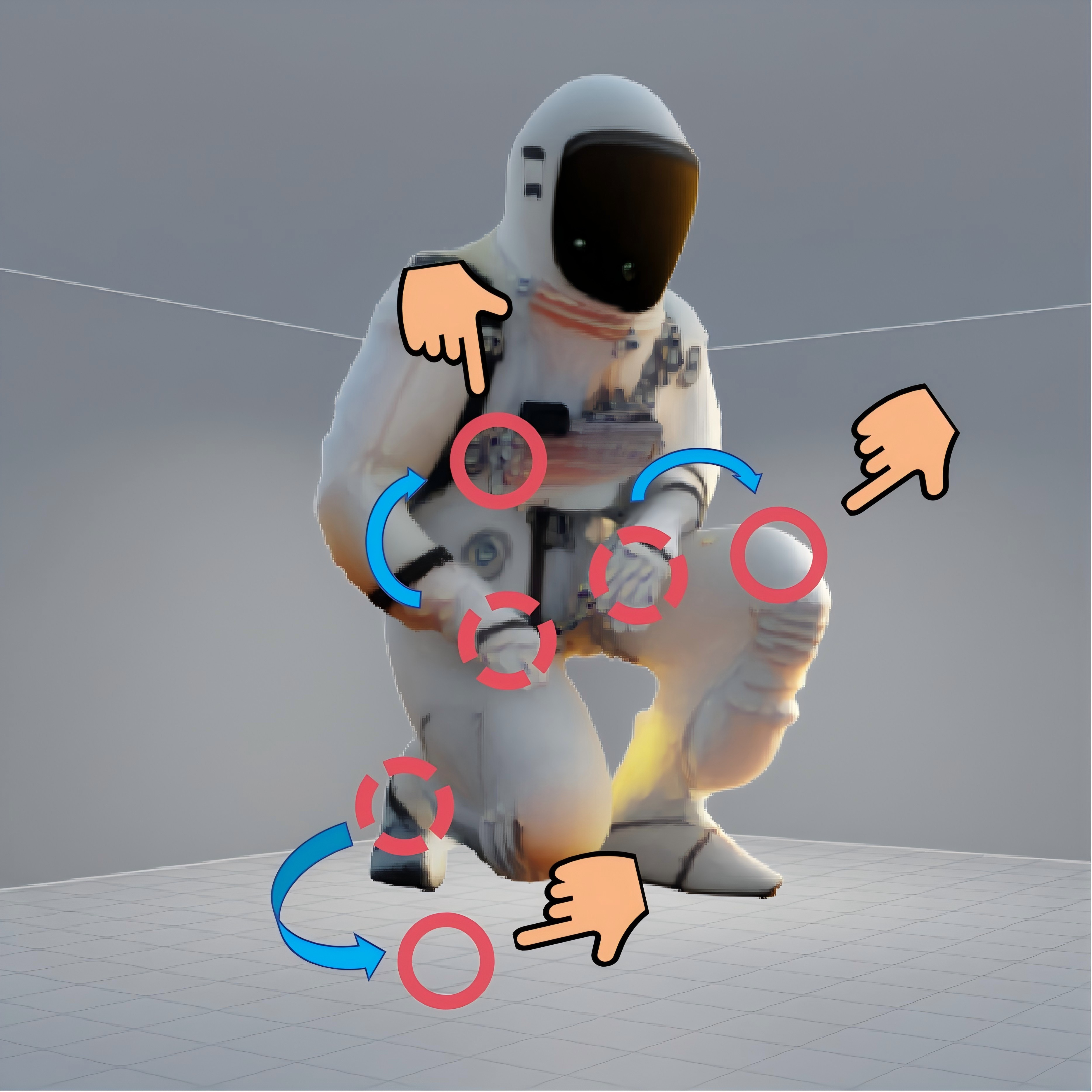

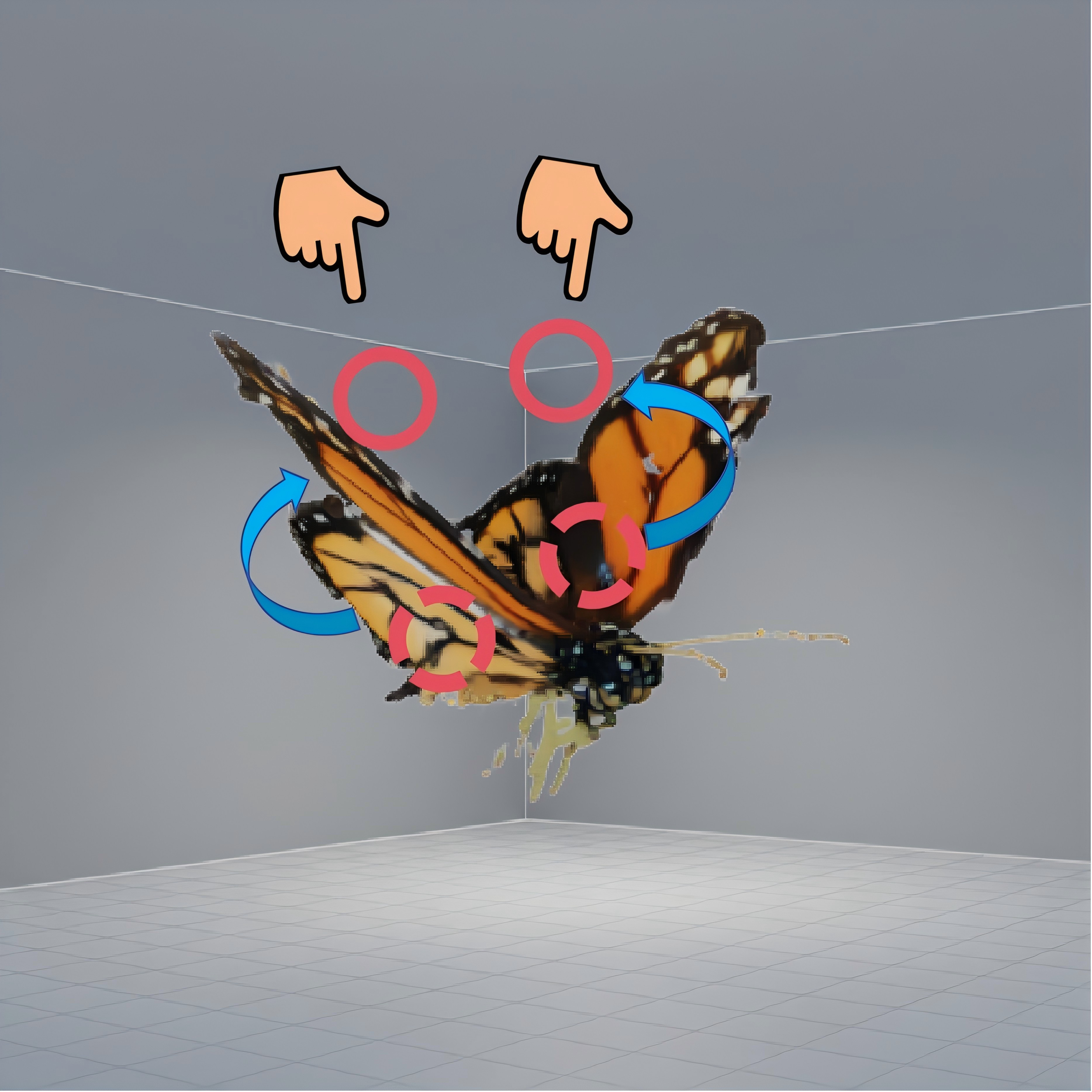

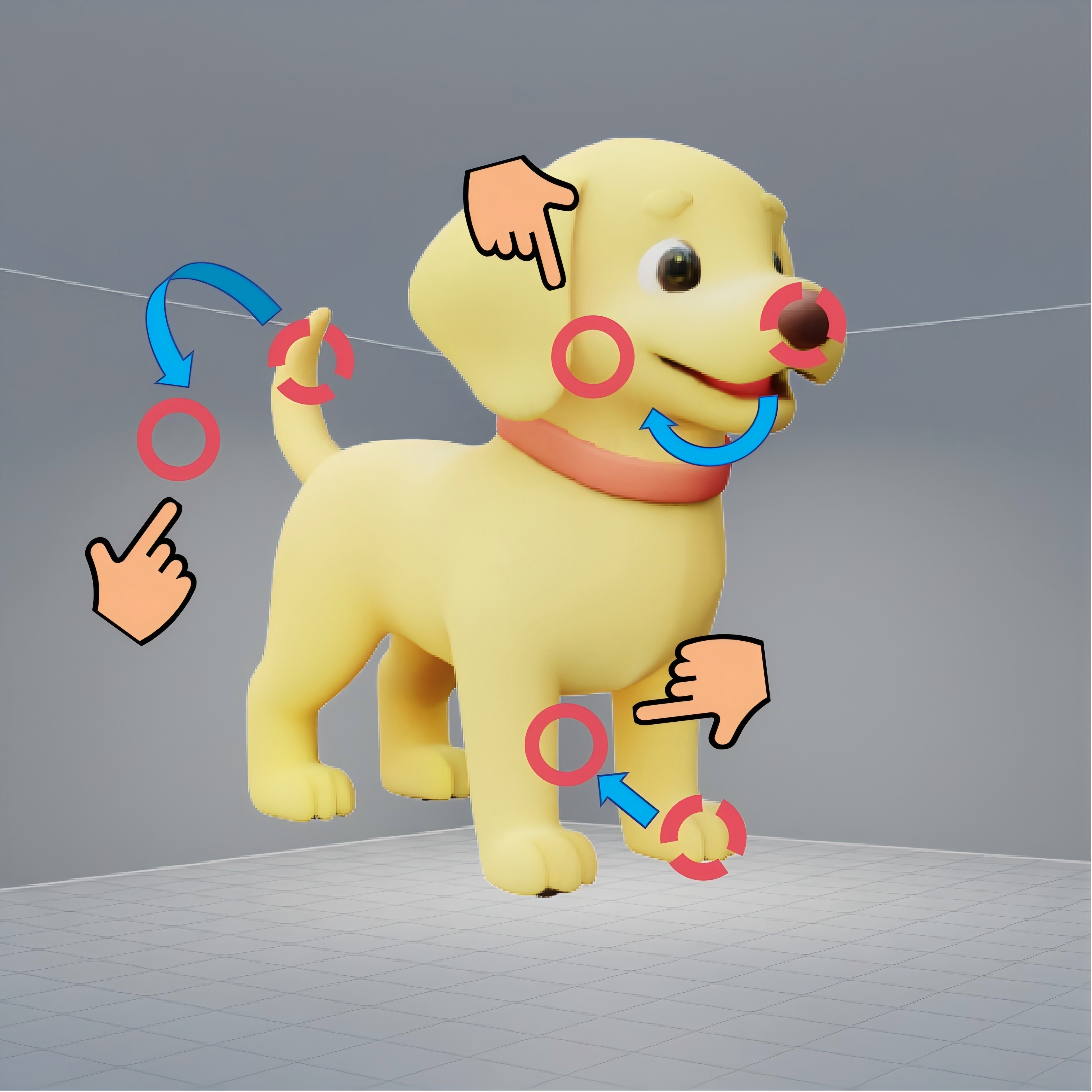

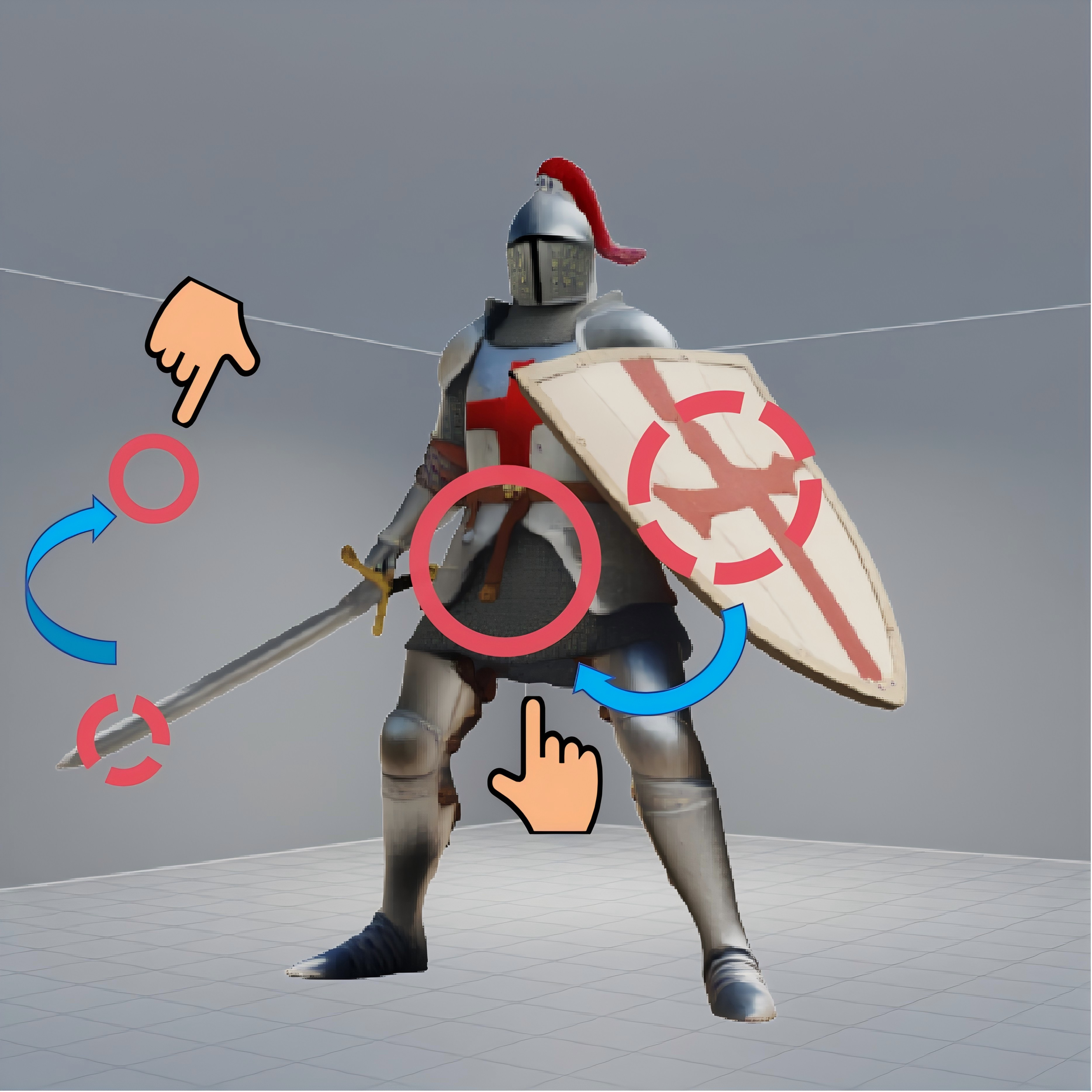

Figure 1: Overview of the ObjectMorpher image editing pipeline, demonstrating 2D-to-3D lifting, interactive manipulation, and generative composition for harmonious results.

Methodology

2D-to-3D Lifting and Representation

Object regions in the source image are selected via user interaction and segmented with SAM. The key innovation lies in lifting these 2D regions to a high-quality 3DGS representation using a diffusion-based 3D generation backend (TRELLIS). Compared to mesh representations, 3DGS is optimized for both geometry and photorealistic appearance via a volumetric rendering loss, which makes the representation well suited to dense, detailed, and local edits.

The resulting 3DGS proxy consists of a set of Gaussians, each parameterized by position, scale, rotation, color, and opacity. The Gaussians are mapped to the image plane with EWA projection, which supports high-fidelity forward and inverse projection.
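The parameterization above can be made concrete with a small sketch. It assumes the standard 3DGS convention, where a Gaussian's covariance is built from its scale and rotation as Σ = R S Sᵀ Rᵀ, and an EWA-style projection Σ' = J Σ Jᵀ with J the Jacobian of a pinhole projection. The function names, the (w, x, y, z) quaternion order, and the simplification that the Gaussian is already expressed in camera coordinates are assumptions for illustration, not details taken from the paper.

```python
import numpy as np

def quat_to_rotmat(q):
    """Convert a quaternion in (w, x, y, z) order to a 3x3 rotation matrix."""
    w, x, y, z = np.asarray(q, dtype=float) / np.linalg.norm(q)
    return np.array([
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z),     2 * (x * z + w * y)],
        [2 * (x * y + w * z),     1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y),     2 * (y * z + w * x),     1 - 2 * (x * x + y * y)],
    ])

def covariance_3d(scale, quat):
    """Build the 3D covariance Sigma = R S S^T R^T from per-axis scale and rotation."""
    M = quat_to_rotmat(quat) @ np.diag(np.asarray(scale, dtype=float))
    return M @ M.T

def project_covariance(cov3d, mean_cam, focal):
    """EWA-style projection of a 3D covariance to a 2D image-plane covariance.

    Assumes the Gaussian mean is given in camera coordinates (hypothetical
    simplification: the world-to-camera rotation is the identity).
    """
    x, y, z = mean_cam
    # Jacobian of the pinhole projection (u, v) = focal * (x / z, y / z).
    J = np.array([
        [focal / z, 0.0, -focal * x / z**2],
        [0.0, focal / z, -focal * y / z**2],
    ])
    return J @ cov3d @ J.T

# Example: an axis-aligned Gaussian two units in front of the camera.
cov = covariance_3d(scale=[1.0, 2.0, 3.0], quat=[1.0, 0.0, 0.0, 0.0])
cov2d = project_covariance(cov, mean_cam=[0.0, 0.0, 2.0], focal=2.0)
```

Storing scale and rotation separately, rather than a raw covariance matrix, keeps the covariance positive semi-definite by construction, which is one reason this factorization is common in 3DGS implementations.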

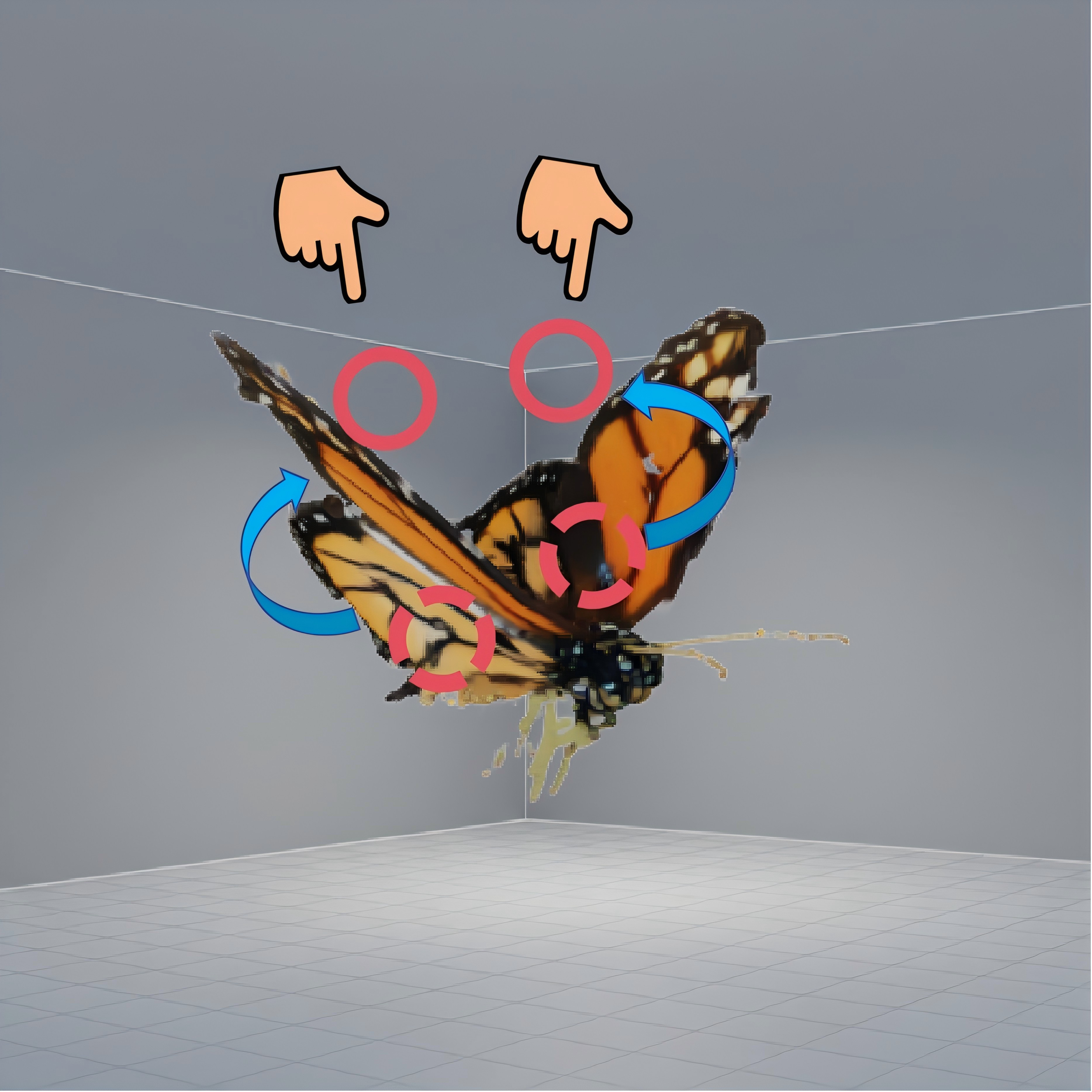

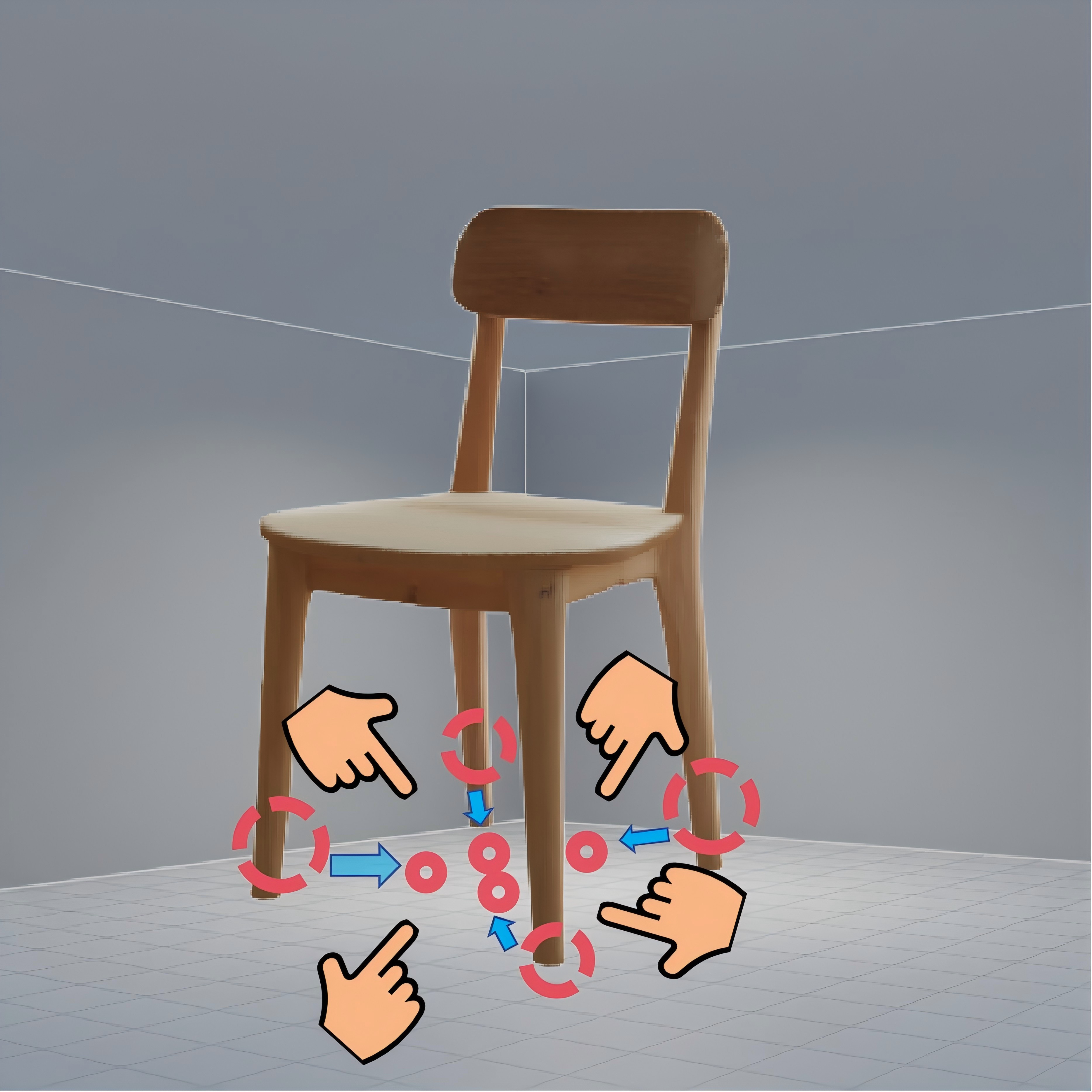

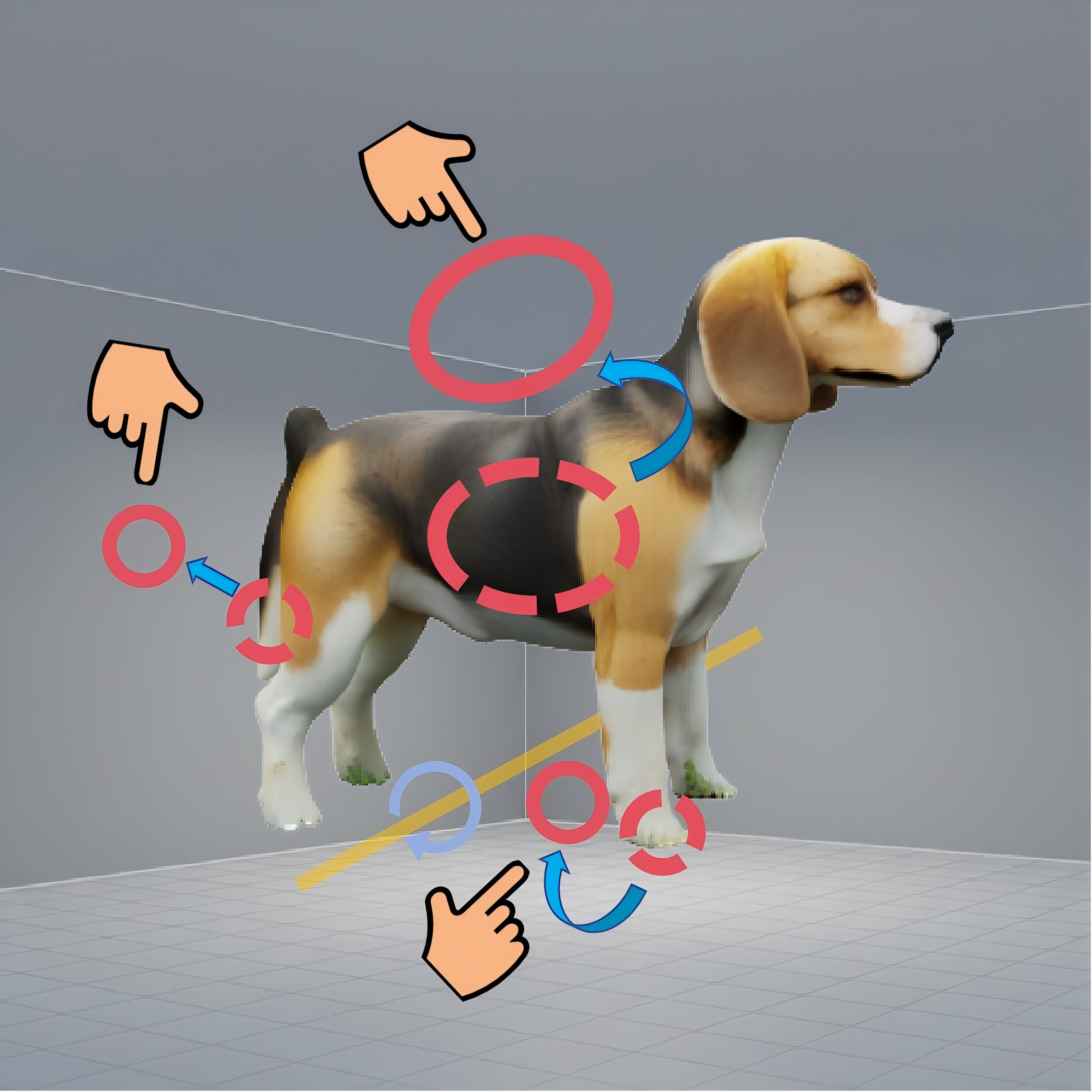

Interactive Non-Rigid Editing with Physical Priors

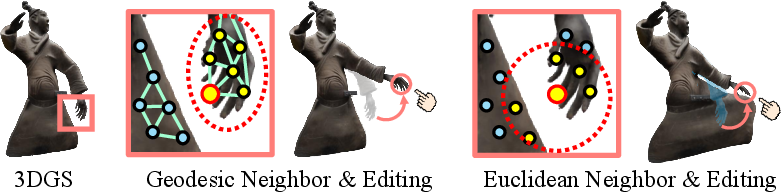

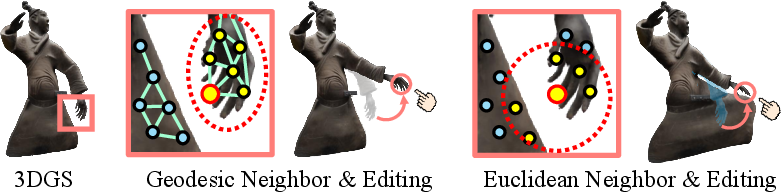

Direct editing of dense 3DGS (often millions of primitives) is computationally prohibitive in interactive scenarios. ObjectMorpher addresses this by introducing a sparse control proxy sampled from the 3DGS, forming a geodesically-aware deformable graph (Figure 2). User manipulations are captured as control point drags; the underlying ARAP (As-Rigid-As-Possible) energy ensures deformation remains locally rigid, transferring rotations and translations through iterative SVD-based optimization.
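The ARAP step can be illustrated with the classic local/global alternation: a local step fits one rotation per control node via SVD, and a global step solves a linear system for the free node positions. The sketch below assumes uniform edge weights, a symmetric neighbor list, and a dense solver, all of which are simplifications chosen for readability; the names `fit_rotations` and `arap_solve` are hypothetical and the paper's actual solver may differ.

```python
import numpy as np

def fit_rotations(rest, cur, neighbors):
    """Local step: best-fit rotation per node from rest edges to current edges."""
    R = np.empty((len(rest), 3, 3))
    for i, nbrs in enumerate(neighbors):
        P = rest[nbrs] - rest[i]           # rest-pose edges, shape (k, 3)
        Q = cur[nbrs] - cur[i]             # deformed edges, shape (k, 3)
        U, _, Vt = np.linalg.svd(P.T @ Q)  # SVD of the 3x3 edge covariance
        # Flip the last axis if needed so the result is a rotation, not a reflection.
        d = -1.0 if np.linalg.det(Vt.T @ U.T) < 0 else 1.0
        R[i] = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    return R

def arap_solve(rest, neighbors, handles, iters=10):
    """Alternate local and global steps; `handles` maps node index -> target position."""
    n = len(rest)
    # Uniform-weight graph Laplacian (assumes `neighbors` is symmetric).
    L = np.zeros((n, n))
    for i, nbrs in enumerate(neighbors):
        for j in nbrs:
            L[i, i] += 1.0
            L[i, j] -= 1.0
    fixed = list(handles)
    free = [i for i in range(n) if i not in handles]
    cur = rest.copy()
    for i, pos in handles.items():
        cur[i] = pos
    for _ in range(iters):
        R = fit_rotations(rest, cur, neighbors)
        # Right-hand side of the global step, built from the rotated rest edges.
        b = np.zeros((n, 3))
        for i, nbrs in enumerate(neighbors):
            for j in nbrs:
                b[i] += 0.5 * (R[i] + R[j]) @ (rest[i] - rest[j])
        rhs = b[free] - L[np.ix_(free, fixed)] @ cur[fixed]
        cur[free] = np.linalg.solve(L[np.ix_(free, free)], rhs)
    return cur

# Example: a fully connected tetrahedron; pin node 0 and drag node 3 upward.
rest = np.array([[0, 0, 0], [1, 0, 0], [0, 1, 0], [0, 0, 1]], dtype=float)
neighbors = [[1, 2, 3], [0, 2, 3], [0, 1, 3], [0, 1, 2]]
deformed = arap_solve(rest, neighbors, {0: rest[0], 3: rest[3] + [0.0, 0.0, 0.5]})
```

Because the Laplacian depends only on graph connectivity, it could be factorized once and reused across drags. With a sparse proxy of modest size, that reuse is one plausible way to keep per-drag cost low.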

Figure 2: Comparison of naive Euclidean and manifold-respecting geodesic graph connections for the deformable proxy, impacting propagation of deformations.
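The difference between Euclidean and geodesic connectivity can be sketched with a k-nearest-neighbor graph and Dijkstra's algorithm. This is a generic approximation of geodesic distance over sampled control points, offered as an assumption about how such a graph might be built; `knn_graph`, `geodesic_distances`, and the choice of `k` are hypothetical and not taken from the paper.

```python
import heapq
import numpy as np

def knn_graph(points, k):
    """Connect each point to its k nearest neighbors with Euclidean edge lengths."""
    d = np.linalg.norm(points[:, None] - points[None], axis=-1)
    adj = {i: [] for i in range(len(points))}
    for i in range(len(points)):
        for j in np.argsort(d[i])[1:k + 1]:  # index 0 is the point itself
            adj[i].append((int(j), float(d[i, j])))
            adj[int(j)].append((i, float(d[i, j])))  # keep the graph undirected
    return adj

def geodesic_distances(adj, source):
    """Shortest path lengths along graph edges (Dijkstra) from `source`."""
    dist = {i: float("inf") for i in adj}
    dist[source] = 0.0
    heap = [(0.0, source)]
    while heap:
        d, u = heapq.heappop(heap)
        if d > dist[u]:
            continue  # stale heap entry
        for v, w in adj[u]:
            nd = d + w
            if nd < dist[v]:
                dist[v] = nd
                heapq.heappush(heap, (nd, v))
    return dist

# Example: points on a three-quarter circle, so the two tips are close in
# space but far apart along the curve.
angles = np.deg2rad(np.arange(0, 271, 30))
pts = np.stack([np.cos(angles), np.sin(angles)], axis=1)
adj = knn_graph(pts, k=2)
geo_tip_to_tip = geodesic_distances(adj, 0)[len(pts) - 1]
euclid_tip_to_tip = float(np.linalg.norm(pts[0] - pts[-1]))
```

In this toy shape the tip-to-tip geodesic distance is much larger than the straight-line distance. A deformation weighted by geodesic distance therefore would not leak from one tip to the other, which is the behavior the figure contrasts with naive Euclidean connections.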

Deformations from the sparse proxy are transferred to the dense 3DGS via Linear Blend Skinning, leveraging local affine transformations with geodesic continuity for natural and structure-preserving edits.
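A minimal sketch of the skinning step follows. Each dense point is bound to its nearest control nodes and moved by a weighted blend of those nodes' rigid transforms. For brevity the weights here come from Euclidean distance with a Gaussian falloff, whereas the text describes geodesic continuity; the function names, `k`, and `sigma` are assumptions for illustration, and only Gaussian centers are deformed (not their rotations or scales).

```python
import numpy as np

def skinning_weights(points, nodes, k=2, sigma=1.0):
    """Bind each point to its k nearest nodes with normalized Gaussian weights."""
    d = np.linalg.norm(points[:, None] - nodes[None], axis=-1)  # (N, M)
    idx = np.argsort(d, axis=1)[:, :k]                          # (N, k)
    w = np.exp(-np.take_along_axis(d, idx, axis=1) ** 2 / (2 * sigma**2))
    w /= w.sum(axis=1, keepdims=True)
    return idx, w

def linear_blend_skinning(points, nodes, rotations, translations, idx, w):
    """Blend per-node transforms: x' = sum_j w_j * (R_j (x - c_j) + c_j + t_j)."""
    out = np.zeros_like(points)
    for slot in range(idx.shape[1]):
        j = idx[:, slot]
        local = points - nodes[j]  # point expressed relative to node j
        moved = np.einsum("nij,nj->ni", rotations[j], local) + nodes[j] + translations[j]
        out += w[:, slot:slot + 1] * moved
    return out

# Example: three control nodes, all translated by the same offset.
nodes = np.array([[0, 0, 0], [1, 0, 0], [0, 1, 0]], dtype=float)
points = np.array([[0.2, 0.0, 0.0], [0.8, 0.1, 0.0], [0.5, 0.5, 0.3]])
idx, w = skinning_weights(points, nodes, k=2, sigma=0.5)
rotations = np.tile(np.eye(3), (len(nodes), 1, 1))
translations = np.tile([0.1, -0.2, 0.3], (len(nodes), 1))
deformed = linear_blend_skinning(points, nodes, rotations, translations, idx, w)
```

Since the binding (`idx`, `w`) depends only on the rest pose, it can be computed once per object. Each drag then reduces to a weighted sum over a few nodes per Gaussian.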

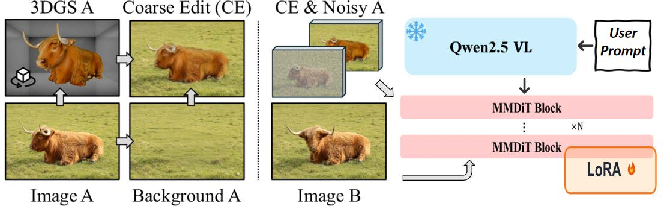

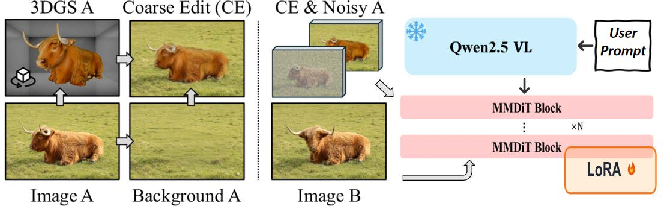

Generative Composite Editing

Following geometry manipulation, the edited 3DGS is projected and composited onto a cleaned background, generated via neural inpainting. ObjectMorpher then applies a specialized composite diffusion module, trained to harmonize boundaries, color, and lighting between the edited object and its scene context, overcoming typical artifacts from direct pasting. The module is conditioned both on the original image and task-specific textual instructions for improved realism and consistency (Figure 3).
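The compositing step that precedes diffusion-based harmonization can be sketched as a standard alpha "over" operation. This covers only the direct paste that the composite diffusion module is then meant to refine. The function name and array layout (float images in [0, 1], alpha as a separate H×W map) are assumptions for illustration.

```python
import numpy as np

def composite_over(fg_rgb, fg_alpha, bg_rgb):
    """Alpha-composite a rendered object over a background: C = a*F + (1 - a)*B."""
    a = fg_alpha[..., None]  # broadcast alpha over the color channels
    return a * fg_rgb + (1.0 - a) * bg_rgb

# Example: a 2x2 image with opaque, half-transparent, and empty object pixels.
fg = np.ones((2, 2, 3)) * [1.0, 0.0, 0.0]   # red rendered object
bg = np.ones((2, 2, 3)) * [0.0, 0.0, 1.0]   # blue inpainted background
alpha = np.array([[1.0, 0.5], [0.0, 0.0]])
pasted = composite_over(fg, alpha, bg)
```

A paste like this keeps the object's original lighting and leaves hard alpha edges. These are the kinds of boundary, color, and lighting mismatches the harmonization stage is described as correcting.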

Figure 3: Illustration of training data preparation and the diffusion-based pipeline for compositional refinement.

Experimental Evaluation

Qualitative and Quantitative Comparisons

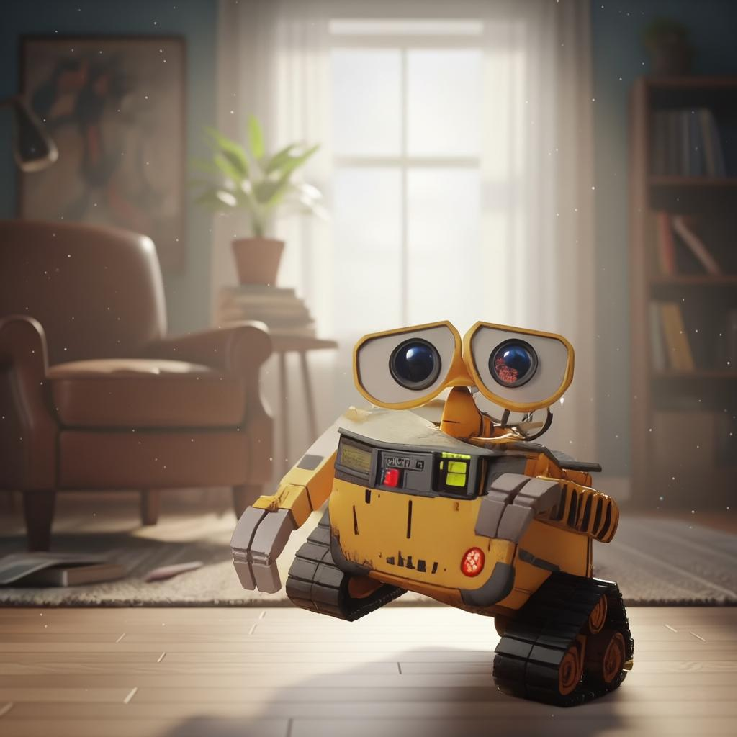

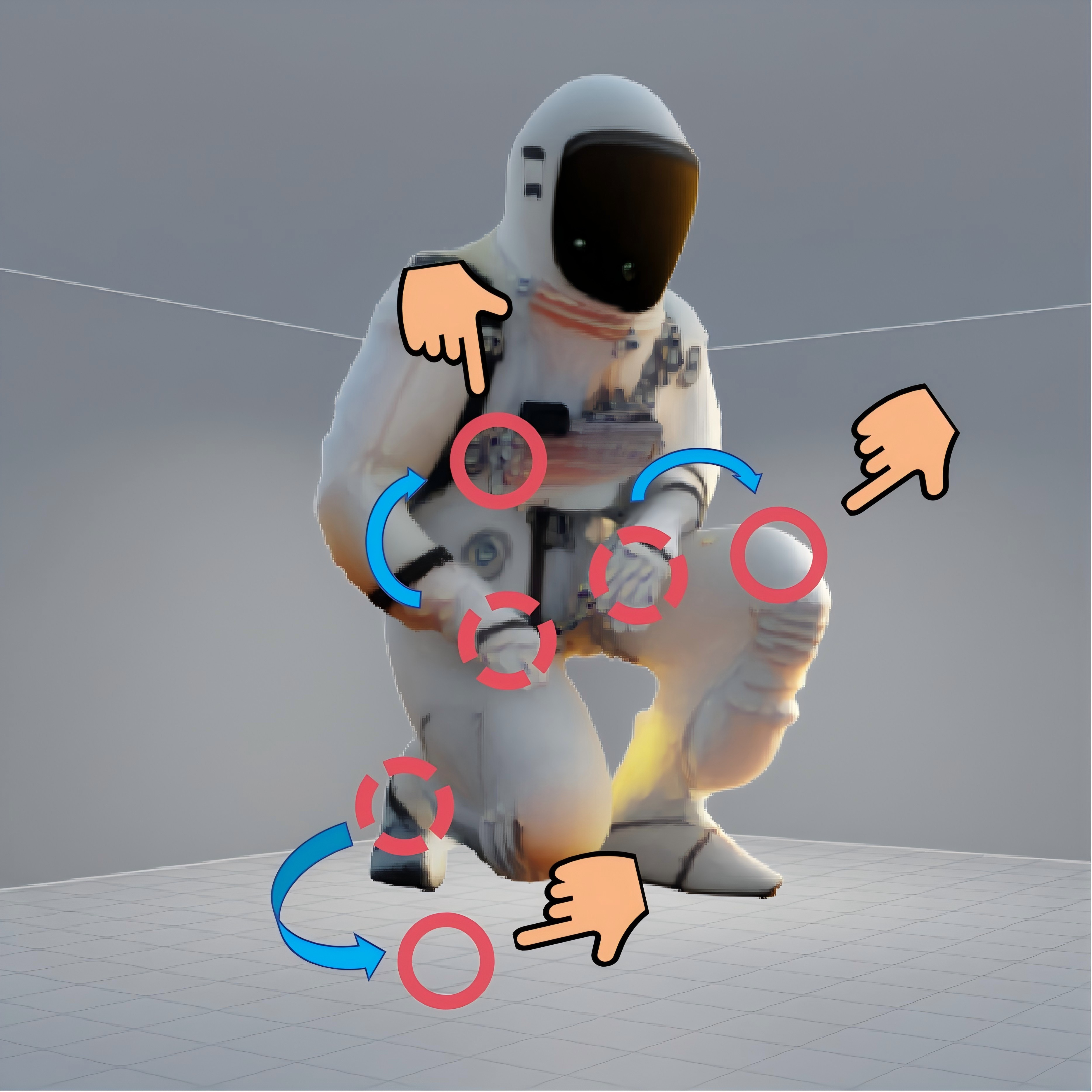

ObjectMorpher is evaluated on a curated benchmark with diverse subjects (animals, humanoids, inanimate objects, animated characters) and compared with both 2D drag-based and 3D-aware editing baselines. Across a range of editing challenges (non-rigid guidance, pose changes, and articulated manipulations), it is the only method that follows the guidance faithfully while maintaining photorealism (Figure 4). In contrast, 2D counterparts either fail to interpret 3D transformations or generate implausible results.

Figure 4: Qualitative comparisons showing that ObjectMorpher is the only method faithfully executing non-rigid guidance while maintaining photorealism across subjects.

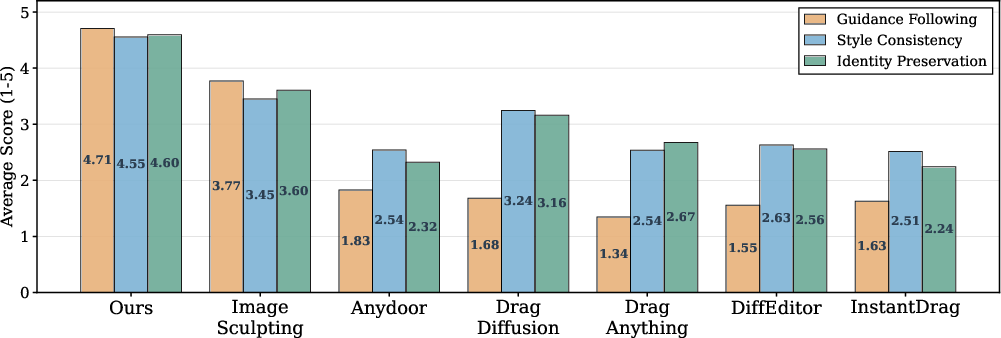

User Study and Numerical Results

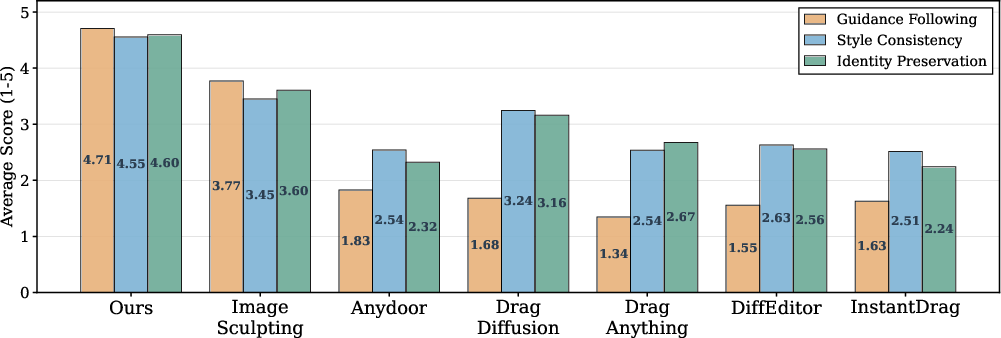

Automatic metrics such as LPIPS and SIFID, commonly used in generative evaluation, prove inadequate for controllable editing: they favor methods that change little over those that carry out the correct manipulation. ObjectMorpher leads in human preference, achieving an average Guidance Following score of 4.71 out of 5 and surpassing baselines on Style Consistency and Identity Preservation (Figure 5).
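The bias of source-referenced metrics can be seen in a toy example. Here mean squared error stands in for a perceptual metric such as LPIPS (a deliberate simplification, since LPIPS requires a pretrained network), and the images are synthetic squares. None of the values below come from the paper.

```python
import numpy as np

def square_image(size, top, left, side):
    """Return a size x size image that is 0 everywhere except a filled square."""
    img = np.zeros((size, size))
    img[top:top + side, left:left + side] = 1.0
    return img

def mse(a, b):
    """Mean squared error, used here as a crude stand-in for a perceptual distance."""
    return float(np.mean((a - b) ** 2))

source = square_image(32, top=4, left=4, side=8)
outputs = {
    "no_op": source.copy(),                                     # ignores the edit
    "timid_edit": square_image(32, top=4, left=6, side=8),      # moves too little
    "correct_edit": square_image(32, top=4, left=20, side=8),   # moves as requested
}
# Lower is "better" under the metric, so the ranking rewards doing nothing.
distance_to_source = {name: mse(source, img) for name, img in outputs.items()}
ranking = sorted(distance_to_source, key=distance_to_source.get)
```

Under this proxy the output that ignores the instruction ranks first and the correct edit ranks last. This mirrors why the evaluation relies on human judgments of guidance following.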

Figure 5: Visual results of the user study, consistently favoring ObjectMorpher across all key metrics against strong baselines.

Interaction latency is near-instantaneous for the drag-editing stage (10 ms), and total edit time is on the order of tens of seconds, outperforming methods that require minutes to hours for similar tasks.

Ablations

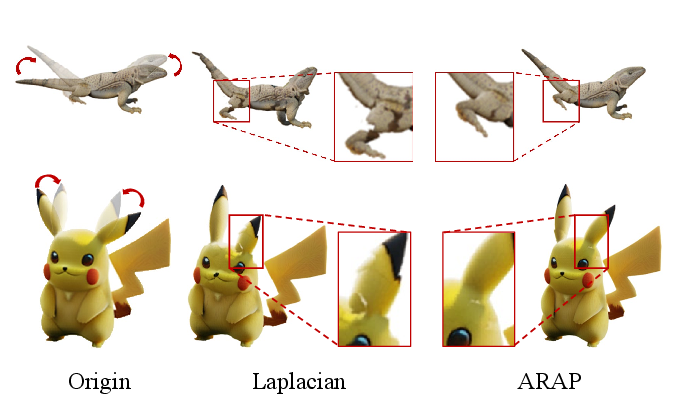

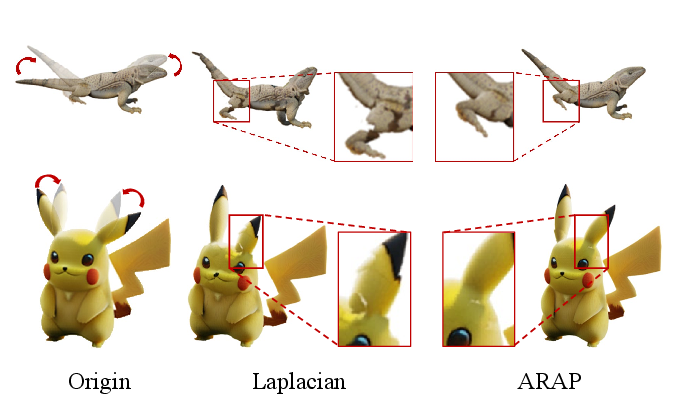

Ablations demonstrate that (1) 3DGS outperforms a mesh representation in both rigidity and photorealistic integration, (2) generative composition is essential for seamless blending of object and scene (see Figure 3), and (3) ARAP constraints are necessary to avoid unnatural distortions during large, non-rigid transformations (Figure 6).

Figure 6: Ablation on deformation constraints, validating the necessity of ARAP for non-rigid yet visually plausible edits.

Implications and Future Directions

ObjectMorpher establishes a robust paradigm for interactive, geometry-aware editing with identity preservation and compositional harmony. Its architectural fusion—grounded 3DGS modeling, efficient proxy-based ARAP manipulation, and generative diffusion refinement—results in a flexible, high-fidelity, and user-friendly editing toolchain.

Practically, this method enables interactive visual design applications, advanced photography tools, in-game object customization, digital asset postprocessing, and downstream generative tasks bridging 2D/3D domains. The pipeline showcases an explicit interface for disambiguating 2D operations into 3D changes, which sets a scalable precedent for future mixed-modality editing systems.

Theoretically, the work suggests that dense, differentiable 3D representations (particularly Gaussian splats) serve as superior intermediates not only for rendering and view synthesis but also for user-driven, real-time image manipulation. The ARAP-based local constraint formulation can be generalized to other dense intermediate representations and even to video.

Future directions should target (i) closing the residual gap in fidelity for challenging materials (e.g., transparency, fine hair), (ii) fully-automated multi-object handling, (iii) end-to-end learning of the deformation–composition cycle, and (iv) leveraging foundation models for richer, higher-dimensional semantic control.

Conclusion

ObjectMorpher delivers an efficient and physically consistent 3D-aware image editing framework, coupling editable 3DGS proxies with ARAP-constrained deformation and generative compositional diffusion. Benchmark evaluation and the user study indicate clear gains in controllability and photorealism over both 2D and existing 3D-aware systems. The approach marks a significant step toward flexible, geometry-grounded, and harmonized visual content editing, with substantial potential to shape future cross-modal generative and editing pipelines.

(2603.28152)