- The paper introduces an agentic paradigm that redefines recommendation as a multi-agent decision process, replacing static pipelines with modular agents.

- It demonstrates how layered architectures and RL- and LLM-driven optimization enable autonomous evolution and scalable adaptability in heterogeneous environments.

- The approach provides explicit modularity and reward mechanisms to reconcile local agent performance with global business objectives for continual improvement.

From Static Pipelines to Agentic Recommender Systems

Technological Progression and Motivation

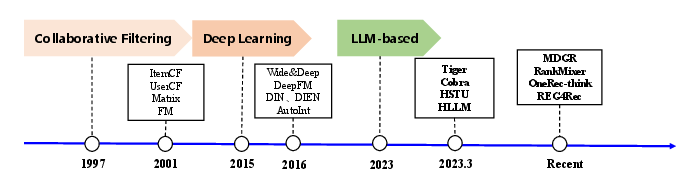

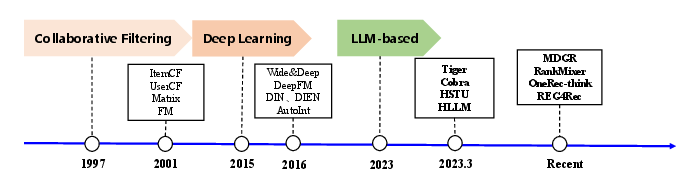

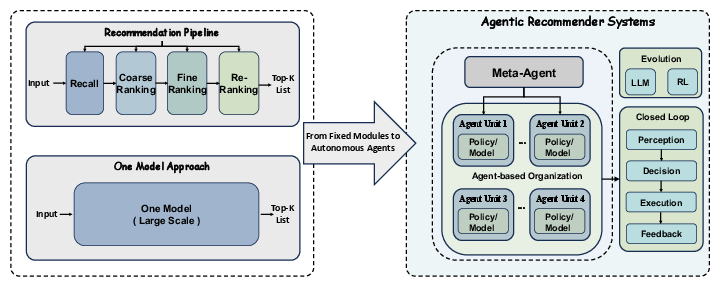

Current industrial recommender systems predominantly employ rigid, multi-stage pipelines (recall, ranking, re-ranking) that have improved incrementally as the underlying methods progressed from collaborative filtering and matrix factorization to deep learning and LLM-based architectures. Although these methods have improved scalability and performance, the pipelines themselves remain static and lack autonomous adaptability. Their modules function as opaque black boxes, and system improvement relies heavily on expert-driven hypothesis formulation, manual configuration, and labor-intensive iteration. This approach becomes increasingly inadequate as user and item distributions grow more heterogeneous and as business objectives and operational constraints grow more complex.

Figure 1: Technological evolution in recommendation systems, highlighting the transition from collaborative filtering to deep models and generative frameworks.

The Agentic Recommender Systems Paradigm

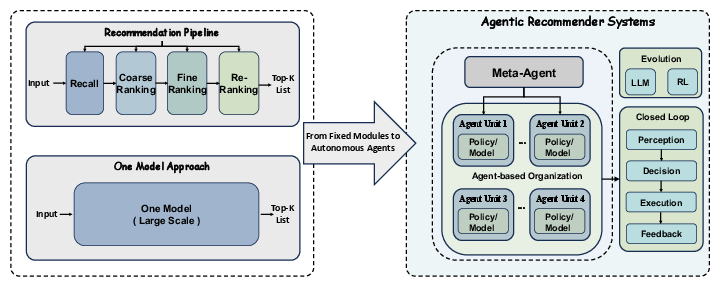

The paper proposes a paradigm shift: recommender systems are conceptualized as agentic, multi-agent decision processes rather than static pipelines. In this Agentic Recommender Systems (AgenticRS) framework, discrete system components such as recall, ranking, and diversity enforcement are promoted to agents if they satisfy three criteria: functional closure, independent evaluability, and an evolvable decision space. Each agent operates in a perception–decision–execution–feedback loop with well-defined interfaces, so it can be improved in isolation without destabilizing the rest of the stack.

Figure 2: Illustration of the Agentic Recommender Systems paradigm, emphasizing the transition to agent-driven composition and evolution.
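The promotion criteria and the per-agent loop described in this section can be sketched as a small Python interface. This is a minimal illustration under our own assumptions, not the paper's implementation: `ModuleProfile`, `qualifies_as_agent`, `Agent`, `run_loop`, and the toy `TopKRanker` are hypothetical names, and the boolean flags stand in for what would in practice be engineering judgments.

```python
from dataclasses import dataclass
from typing import Any, Callable, Protocol


@dataclass
class ModuleProfile:
    """Hypothetical description of a pipeline module considered for promotion."""
    name: str
    functionally_closed: bool       # owns a complete sub-task end to end
    independently_evaluable: bool   # can be scored on its own metric
    evolvable_decision_space: bool  # exposes choices that can be searched or learned


def qualifies_as_agent(m: ModuleProfile) -> bool:
    """A module is promoted to an agent only if all three criteria hold."""
    return m.functionally_closed and m.independently_evaluable and m.evolvable_decision_space


class Agent(Protocol):
    """Hypothetical interface mirroring the perception-decision-execution-feedback loop."""
    def perceive(self, state: dict[str, Any]) -> Any: ...
    def decide(self, observation: Any) -> Any: ...
    def execute(self, action: Any) -> Any: ...
    def feedback(self, reward: float) -> None: ...


def run_loop(agent: Agent, state: dict[str, Any], reward_fn: Callable[[Any], float]) -> Any:
    """One pass through the loop; reward_fn stands in for logged user feedback."""
    observation = agent.perceive(state)
    action = agent.decide(observation)
    result = agent.execute(action)
    agent.feedback(reward_fn(result))
    return result


class TopKRanker:
    """Toy ranking agent: returns the k highest-scored items and records rewards."""
    def __init__(self, k: int = 3) -> None:
        self.k = k
        self.rewards: list[float] = []

    def perceive(self, state: dict[str, Any]) -> dict[str, float]:
        return state["scores"]  # item id -> model score

    def decide(self, scores: dict[str, float]) -> list[str]:
        return sorted(scores, key=scores.get, reverse=True)[: self.k]

    def execute(self, action: list[str]) -> list[str]:
        return action  # a real agent would publish the list downstream

    def feedback(self, reward: float) -> None:
        self.rewards.append(reward)


if __name__ == "__main__":
    print(qualifies_as_agent(ModuleProfile("ranking", True, True, True)))  # True
    ranker = TopKRanker(k=2)
    scores = {"a": 0.1, "b": 0.9, "c": 0.5}
    print(run_loop(ranker, {"scores": scores}, lambda r: float(len(r))))  # ['b', 'c']
```

Using a `Protocol` keeps the interface structural: any module that exposes the four methods can be plugged into the loop without inheriting from a shared base class.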

Motivating Factors

- Heterogeneity: Static models struggle to address rich variation in users, items, and entry points. AgenticRS enables specialized agents for sub-distributions, reducing mode collapse.

- Multi-objective Optimization: Current approaches entangle business objectives inside monolithic models or apply ad hoc rule layers. AgenticRS enables explicit objective delegation to agents, coupling local competence with global constraints.

- Complexity and Iteration Bottlenecks: Each additional recall route, feature route, or advanced model increases system complexity, while manual, expert-driven improvement does not scale with it. Agentic design supports autonomous evolution and continual improvement.

- Adaptivity: Static pipelines resemble fixed production lines, lacking mechanisms for ongoing self-improvement and reconfiguration. AgenticRS provides explicit loci for adaptive behaviors.

Architectural Overview

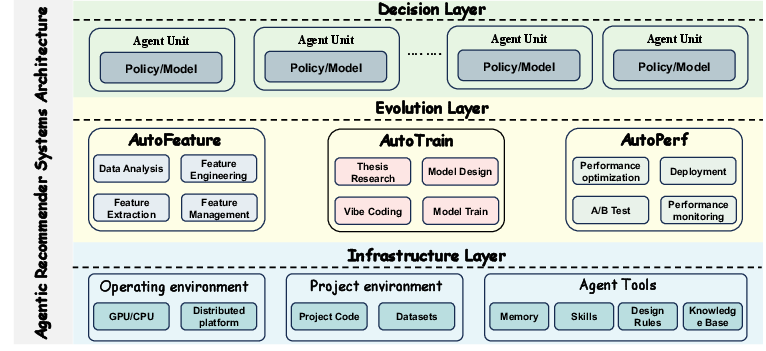

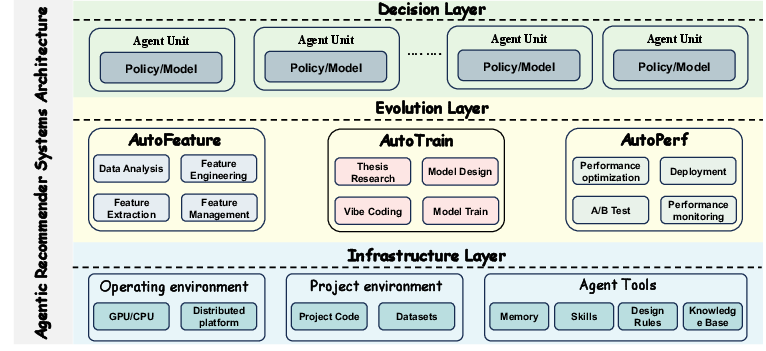

AgenticRS replaces stage-wise pipelines with a graph of interacting agents, promoting only those modules that meet the agent criteria. These agents are organized into three layers:

Figure 3: The architecture of agentic recommender systems, exhibiting layered agent composition and inter-agent orchestration.

Decision Layer

Agents in this layer make the core recommendation decisions, such as recall selection, ranking, and policy enforcement, and their internal structure is not fixed. Each agent produces actions (candidate sets, ranked lists, strategy adjustments) based on a shared state.
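A decision layer built from such agents can be sketched as functions that each read the shared state and write their action back into it. The sketch assumes a hypothetical state schema (`catalog`, `user_categories`, `scores`) and hypothetical agent names, and it runs the agents as a simple chain, whereas the paper describes a general graph of interacting agents.

```python
from typing import Any

State = dict[str, Any]  # hypothetical shared-state schema


def recall_agent(state: State) -> State:
    """Recall: keep catalog items whose category the user has engaged with."""
    liked = set(state["user_categories"])
    candidates = [item for item, cat in state["catalog"].items() if cat in liked]
    return {**state, "candidates": candidates}


def ranking_agent(state: State) -> State:
    """Ranking: order candidates by a precomputed model score (missing scores count as 0)."""
    scores = state["scores"]
    ranked = sorted(state["candidates"], key=lambda item: scores.get(item, 0.0), reverse=True)
    return {**state, "ranked": ranked}


def diversity_policy_agent(state: State, max_per_category: int = 1) -> State:
    """Policy enforcement: cap how many items from one category reach the final list."""
    counts: dict[str, int] = {}
    final: list[str] = []
    for item in state["ranked"]:
        cat = state["catalog"][item]
        if counts.get(cat, 0) < max_per_category:
            final.append(item)
            counts[cat] = counts.get(cat, 0) + 1
    return {**state, "final": final}


DECISION_AGENTS = [recall_agent, ranking_agent, diversity_policy_agent]


def run_decision_layer(state: State) -> State:
    # A linear chain for simplicity; each agent sees everything earlier agents wrote.
    for agent in DECISION_AGENTS:
        state = agent(state)
    return state


if __name__ == "__main__":
    out = run_decision_layer({
        "catalog": {"a": "news", "b": "news", "c": "sports", "d": "music"},
        "user_categories": ["news", "sports"],
        "scores": {"a": 0.9, "b": 0.8, "c": 0.7, "d": 0.99},
    })
    print(out["final"])  # ['a', 'c']
```

Because every agent returns a new state rather than mutating a private copy, any agent can be swapped for an evolved version as long as it reads and writes the same keys.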

Evolution Layer

Agents analyze feedback, logs, and rewards, proposing, training, and deploying new architectures and policies. Outputs span revised models, hyperparameters, and routing strategies, validated via controlled experiments.
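For a click-through metric, the "validated via controlled experiments" step could be a simple A/B gate like the one below. This is an assumption for illustration: the paper does not specify the test, and the two-proportion z-test, the `should_deploy` helper, and the 1.96 threshold (roughly a one-sided 2.5% level) are our choices.

```python
import math


def two_proportion_z(clicks_a: int, n_a: int, clicks_b: int, n_b: int) -> float:
    """z statistic for the CTR difference (B minus A) using a pooled variance estimate."""
    p_a, p_b = clicks_a / n_a, clicks_b / n_b
    p_pool = (clicks_a + clicks_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    return (p_b - p_a) / se


def should_deploy(control: tuple[int, int], treatment: tuple[int, int],
                  z_threshold: float = 1.96) -> bool:
    """Deploy a candidate from the evolution layer only if it beats control significantly.

    control and treatment are (clicks, impressions) pairs from a randomized traffic split.
    """
    return two_proportion_z(*control, *treatment) > z_threshold


if __name__ == "__main__":
    print(should_deploy(control=(1000, 20000), treatment=(1150, 20000)))  # True
    print(should_deploy(control=(1000, 20000), treatment=(1010, 20000)))  # False
```

A real evolution layer would typically guard several metrics at once (engagement, revenue, latency), but the shape stays the same: a candidate is promoted only when the experiment supports it.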

Infrastructure Layer

This layer provides unified state and experience repositories that maintain profiles, interaction histories, and meta-knowledge, and it orchestrates task scheduling and conflict resolution among agents.
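The infrastructure layer's shared state and conflict resolution can be illustrated with a small in-memory store. Priority-based arbitration is only one possible policy and is our assumption; `Write`, `SharedStateStore`, and the `priority` field are hypothetical, and a production system would need a persistent, concurrency-safe store.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Write:
    """A proposed state update from one agent (hypothetical schema)."""
    agent: str
    key: str
    value: Any
    priority: int  # higher wins when two agents write the same key


@dataclass
class SharedStateStore:
    state: dict[str, Any] = field(default_factory=dict)
    history: list[Write] = field(default_factory=list)  # experience log the evolution layer can mine

    def commit(self, writes: list[Write]) -> dict[str, str]:
        """Apply one round of writes and return which agent won each key.

        On a conflict the highest-priority write wins; ties keep the earliest write.
        """
        winners: dict[str, Write] = {}
        for w in writes:
            current = winners.get(w.key)
            if current is None or w.priority > current.priority:
                winners[w.key] = w
        for key, w in winners.items():
            self.state[key] = w.value
        self.history.extend(writes)  # losing writes are logged too
        return {key: w.agent for key, w in winners.items()}


if __name__ == "__main__":
    store = SharedStateStore()
    print(store.commit([
        Write("ranker", "slate", ["a", "c"], priority=1),
        Write("policy", "slate", ["c", "a"], priority=5),
        Write("recall", "candidates", ["a", "b", "c"], priority=1),
    ]))  # {'slate': 'policy', 'candidates': 'recall'}
```

Logging every proposed write, including the ones that lost arbitration, gives the evolution layer a record of where agents disagree, which is exactly the kind of signal it could use to propose new routing or priorities.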

Mechanisms for Agent Evolution

AgenticRS facilitates two broad axes of evolution—methodological (RL and LLM-based) and structural (individual vs. compositional).

RL and Search-Based Optimization

When an agent's design space is of moderate size (e.g., architecture choices, hyperparameters), evolution is cast as reinforcement learning (RL) or black-box search. Agents generate configurations, execute them, and receive reward signals that drive iterative improvement with minimal manual intervention.
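As a simple stand-in for the RL or black-box search described above, the sketch below runs an epsilon-greedy bandit (a single-step RL formulation) over a small grid of configurations. The search space, the `reward_fn` interface, and all parameter values are assumptions; in a live system each reward would come from an online or offline evaluation rather than a closed-form function.

```python
import itertools
import random
from typing import Any, Callable

Config = dict[str, Any]

# Hypothetical design space for one agent.
SEARCH_SPACE: dict[str, list[Any]] = {
    "embedding_dim": [32, 64, 128],
    "num_layers": [1, 2, 3],
}


def all_configs(space: dict[str, list[Any]]) -> list[Config]:
    """Enumerate the full grid of configurations."""
    keys = sorted(space)
    return [dict(zip(keys, values)) for values in itertools.product(*(space[k] for k in keys))]


def epsilon_greedy_search(reward_fn: Callable[[Config], float], space: dict[str, list[Any]],
                          rounds: int = 200, epsilon: float = 0.2, seed: int = 0) -> Config:
    """Treat each configuration as a bandit arm and return the arm with the best mean reward."""
    rng = random.Random(seed)
    arms = all_configs(space)
    totals = [0.0] * len(arms)
    counts = [0] * len(arms)

    def mean(j: int) -> float:
        return totals[j] / counts[j] if counts[j] else float("-inf")

    for t in range(rounds):
        if t < len(arms):
            i = t  # try every configuration once
        elif rng.random() < epsilon:
            i = rng.randrange(len(arms))  # explore
        else:
            i = max(range(len(arms)), key=mean)  # exploit the best mean so far
        totals[i] += reward_fn(arms[i])  # in practice: the result of an experiment
        counts[i] += 1
    return arms[max(range(len(arms)), key=mean)]


if __name__ == "__main__":
    # Toy deterministic reward that peaks at (64, 2); real rewards are noisy.
    def toy_reward(cfg: Config) -> float:
        return -abs(cfg["embedding_dim"] - 64) - abs(cfg["num_layers"] - 2)

    print(epsilon_greedy_search(toy_reward, SEARCH_SPACE))  # {'embedding_dim': 64, 'num_layers': 2}
```

When each evaluation is expensive, Bayesian optimization or a sequential RL policy would be natural replacements; the agent-facing contract (propose a configuration, receive a reward) stays the same.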

LLM-Driven Innovation

For agents with high-dimensional and heterogeneous action spaces (e.g., multi-tower architectures, complex routing), LLMs act as design assistants. Given agent descriptions and historical data, LLMs generate candidate architectures and training schemes, which are systematically evaluated and integrated only if they succeed.
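The propose, evaluate, and integrate cycle for LLM-driven design can be written independently of any particular model API. In the sketch below, `propose` is a hypothetical callable that would wrap an LLM prompt built from the agent description and the evaluation history; `fake_llm_propose` is a deterministic stub so the example runs offline, and the candidate schema (`towers`) is illustrative only.

```python
from typing import Callable

Candidate = dict[str, int]
History = list[tuple[Candidate, float]]


def llm_design_loop(
    agent_description: str,
    baseline: Candidate,
    propose: Callable[[str, History], list[Candidate]],
    evaluate: Callable[[Candidate], float],
    rounds: int = 3,
    min_gain: float = 0.0,
) -> tuple[Candidate, History]:
    """Request candidates, evaluate each, and adopt one only if it beats the incumbent."""
    history: History = [(baseline, evaluate(baseline))]
    best, best_score = history[0]
    for _ in range(rounds):
        for cand in propose(agent_description, history):
            score = evaluate(cand)
            history.append((cand, score))  # failures stay in the history as context
            if score > best_score + min_gain:
                best, best_score = cand, score
    return best, history


def fake_llm_propose(description: str, history: History) -> list[Candidate]:
    """Offline stand-in for an LLM call: suggest one more tower than the best design so far."""
    best = max(history, key=lambda h: h[1])[0]
    return [{**best, "towers": best["towers"] + 1}]


if __name__ == "__main__":
    def toy_eval(c: Candidate) -> float:
        return -abs(c["towers"] - 3)  # hypothetical offline metric that peaks at 3 towers

    best, history = llm_design_loop("multi-tower ranking agent", {"towers": 1},
                                    fake_llm_propose, toy_eval)
    print(best, len(history))  # {'towers': 3} 4
```

Keeping the full history, including rejected designs, is what lets the proposer condition on past failures; `min_gain` gives a simple way to demand a margin before a new design replaces the incumbent.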

Individual vs. Compositional Evolution

- Individual: Agents improve their structure or policy within a fixed system graph, using RL/search or LLM-driven edits.

- Compositional: The system may alter agent compositions, selecting agents, reconfiguring ensembles, or adjusting interconnectivity to optimize for global business metrics and resource allocation.

The two structural modes alternate: the system first performs individual optimization, then explores compositional changes once local gains saturate.
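The alternation between individual and compositional evolution amounts to a plateau-detection loop. The sketch assumes that "saturation" means the global metric improved by less than `tol` for `patience` consecutive rounds; both thresholds, the callable interfaces, and the toy system in the usage example are hypothetical.

```python
from typing import Callable


def alternate_evolution(
    individual_step: Callable[[], None],
    compositional_step: Callable[[], None],
    global_metric: Callable[[], float],
    rounds: int = 10,
    tol: float = 5e-3,
    patience: int = 2,
) -> list[str]:
    """Run individual optimization until gains plateau, then try a compositional change."""
    log: list[str] = []
    stalls = 0
    score = global_metric()
    for _ in range(rounds):
        if stalls >= patience:
            compositional_step()  # e.g. add/remove agents or rewire the ensemble
            log.append("compositional")
        else:
            individual_step()  # e.g. tune one agent inside the fixed graph
            log.append("individual")
        new_score = global_metric()
        stalls = stalls + 1 if new_score - score < tol else 0
        score = new_score
    return log


if __name__ == "__main__":
    toy = {"score": 0.0, "gain": 0.1}

    def tune_agent() -> None:
        toy["score"] += toy["gain"]
        toy["gain"] /= 10  # diminishing returns within a fixed composition

    def recompose() -> None:
        toy["score"] += 0.05
        toy["gain"] = 0.1  # a new composition reopens headroom for tuning

    print(alternate_evolution(tune_agent, recompose, lambda: toy["score"], rounds=6))
    # ['individual', 'individual', 'individual', 'individual', 'compositional', 'individual']
```

If a compositional change also fails to help, the stall counter keeps growing and the loop tries another compositional step, which matches the idea of exploring structure only while local tuning is exhausted.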

Implications and Prospects

The AgenticRS framework introduces explicit modularity and autonomous evolution into recommender systems, targeting practical bottlenecks in adaptability, scalability, and multi-objective optimization. By turning core modules into independently evolvable agents with stable interfaces, the system can assign specific data subspaces and objectives to individual agents, improve continually at both the local and global level, and reduce engineering overhead. Layered reward mechanisms reconcile each agent's local competence with overarching business objectives.
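One way to read "layered reward" is as a blend of an agent's own metric with a weighted global business score, minus a penalty for violated constraints. The linear form, the weights, and the function names below are our assumptions for illustration; the paper's exact reward design may differ.

```python
def global_reward(metrics: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted sum of system-level business metrics; metric names and weights are illustrative."""
    return sum(w * metrics[name] for name, w in weights.items())


def layered_reward(local: float, metrics: dict[str, float], weights: dict[str, float],
                   violations: int = 0, alpha: float = 0.5, penalty: float = 1.0) -> float:
    """Blend an agent's local metric with the global score.

    alpha weights local against global; each violated constraint (e.g. a latency or
    diversity budget) subtracts a fixed penalty.
    """
    return alpha * local + (1 - alpha) * global_reward(metrics, weights) - penalty * violations


if __name__ == "__main__":
    weights = {"ctr": 0.5, "gmv": 0.5}
    # A ranking agent that scores well locally but hurts global metrics...
    greedy = layered_reward(0.9, {"ctr": 0.2, "gmv": 0.2}, weights)
    # ...versus one that is slightly worse locally but better for the business.
    aligned = layered_reward(0.7, {"ctr": 0.6, "gmv": 0.5}, weights)
    print(greedy < aligned)  # True
```

The single `alpha` knob makes the trade-off explicit: at `alpha = 1` each agent optimizes only its own metric, while lower values increasingly tie its reward to system-level outcomes.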

Practically, this approach can enable rapid adaptation to distributional shifts, facilitate sustainable scaling across heterogeneous data, and streamline maintenance cycles. Theoretically, AgenticRS opens avenues in lifelong learning, evolving agent networks, and decision orchestration in composite systems. Future developments may encompass advanced agent composition strategies, meta-learning across agent pools, and automated discovery of new agent types driven by emergent behaviors and shifting constraints.

Conclusion

Agentic Recommender Systems offer a structured framework for decentralizing intelligence and adaptability in recommender architectures. By combining modular agent design, RL-driven optimization, and LLM-based innovation, the framework aims at scalable, sustainable evolution toward continually improving ecosystem behavior. The proposed layered organization and reward coupling balance local optimization against global objectives, moving recommendation paradigms toward adaptive, multi-agent systems (2603.26100).