MAGICIAN: Efficient Long-Term Planning with Imagined Gaussians for Active Mapping

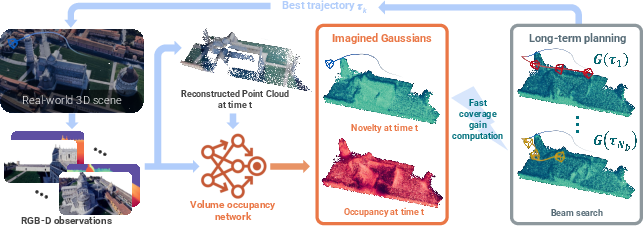

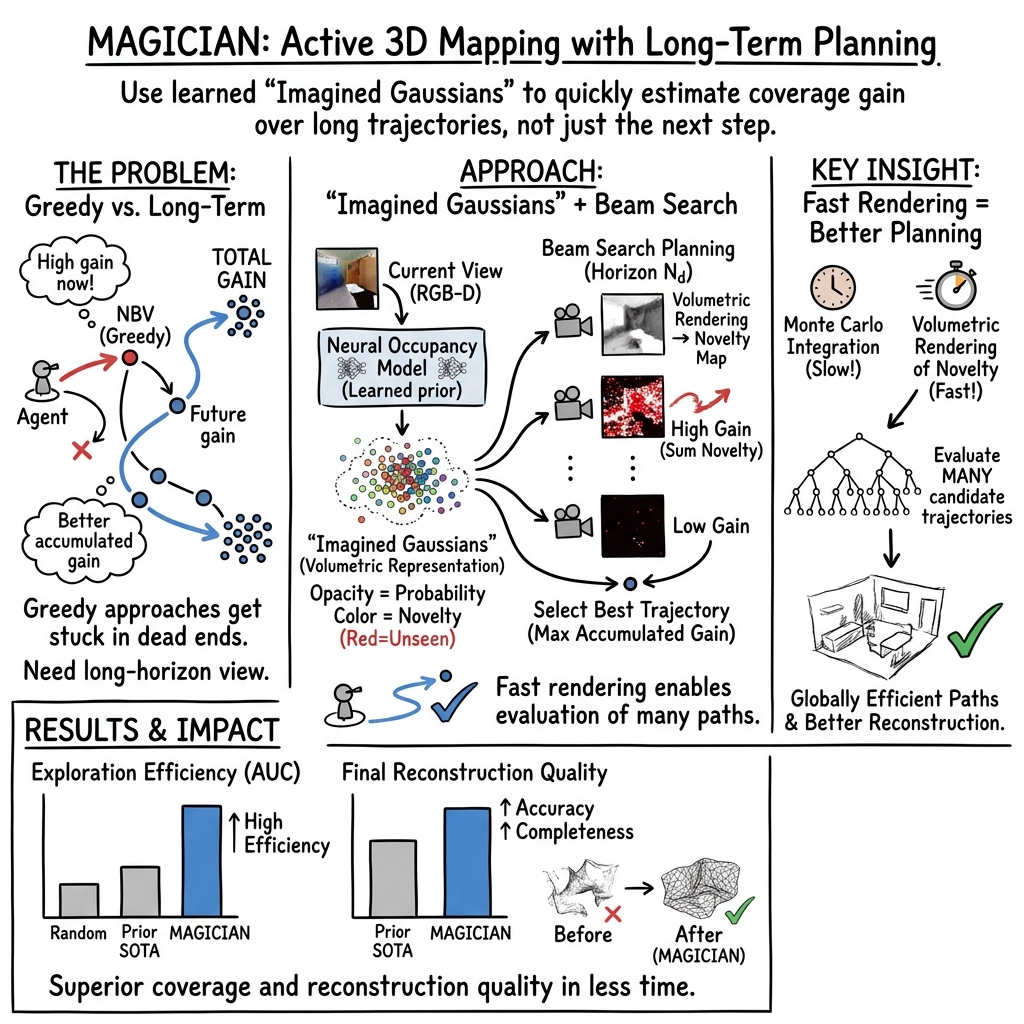

Abstract: Active mapping aims to determine how an agent should move to efficiently reconstruct an unknown environment. Most existing approaches rely on greedy next-best-view prediction, resulting in inefficient exploration and incomplete scene reconstruction. To address this limitation, we introduce MAGICIAN, a novel long-term planning framework that maximizes accumulated surface coverage gain through Imagined Gaussians, a scene representation derived from a pre-trained occupancy network with strong structural priors. This representation enables efficient computation of coverage gain for any novel viewpoint via fast volumetric rendering, allowing its integration into a tree-search algorithm for long-horizon planning. We update Imagined Gaussians and refine the planned trajectory in a closed-loop manner. Our method achieves state-of-the-art performance across indoor and outdoor benchmarks with varying action spaces, demonstrating the critical advantage of long-term planning in active mapping.

Explain it Like I'm 14

What this paper is about (big picture)

The paper introduces MAGICIAN, a way for a robot (like a drone or a wheeled bot) to explore an unknown place and build a good 3D map faster and more completely. Instead of only picking the “next best step” each time, MAGICIAN plans several steps ahead. It does this by “imagining” what the unseen parts of the world might look like, then choosing a route that will reveal the most new surfaces overall.

What questions the researchers asked

They set out to solve three simple-sounding but hard problems:

- How can a robot plan a long route that uncovers the most new parts of a scene, not just the next step?

- How can it quickly guess how much “new stuff” it would see from many possible future camera positions?

- How can it keep improving its plan as it moves and sees more?

How MAGICIAN works (in everyday terms)

Think of the robot as an explorer in a foggy building or outdoor site, trying to create a clean 3D model. It needs to pick places to go that will show it the most new surfaces (walls, floors, statues, trees, etc.).

MAGICIAN has three key ideas:

1) A world model that “imagines” what’s likely there

- The system uses a learned model (an “occupancy network”) that, from the robot’s past photos, predicts which parts of space are probably filled (occupied) and which are empty.

- You can think of this like a smart guess: based on what it has already seen and what it has learned from many 3D examples, it estimates where objects probably are, even if they’re currently hidden.

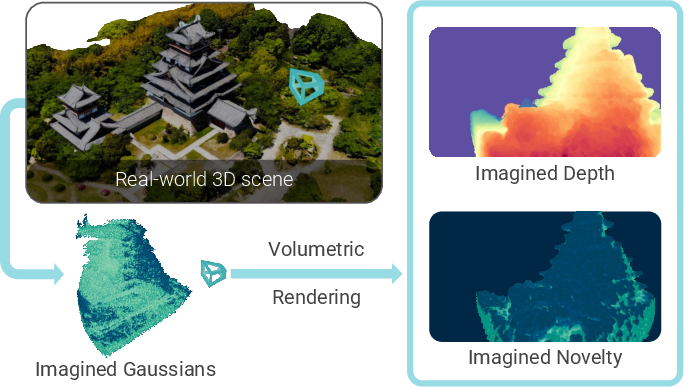

2) Imagined Gaussians: soft 3D dots that stand in for the scene

- To make planning fast, MAGICIAN represents the world as lots of small, soft 3D blobs (Gaussians). Each blob has:

  - An “opacity” (how solid it seems), which equals the probability that space is actually occupied there.

  - A “novelty” flag (new vs. already seen).

- Why “imagined”? Because many blobs sit in places the robot hasn’t seen yet; their opacities come from the model’s best guess.

- With this blob world, MAGICIAN can do “volumetric rendering,” which is like shining rays through fog to see what would be visible from a camera spot. This lets it quickly estimate how much new surface a camera would reveal (the “coverage gain”) without the slow sampling-based calculations that earlier methods rely on.

In simple terms: put lots of soft dots where things might be, then simulate what the camera would see from different positions. Add up “how much new stuff” appears in each simulated view to score that position.
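The scoring idea can be sketched for a single camera ray. This is a simplified illustration, not the paper's renderer (which rasterizes 3D Gaussians): it assumes the blobs along the ray are already sorted front to back and that each blob's opacity is its occupancy probability. The function name `ray_coverage_gain` is made up for this example.

```python
def ray_coverage_gain(opacities, novelties):
    """Expected amount of new surface seen along one camera ray.

    opacities: occupancy probability of each blob, ordered front to back.
    novelties: True for blobs not yet observed, False for blobs already seen.
    """
    transmittance = 1.0  # chance the ray has not been blocked so far
    gain = 0.0
    for alpha, is_new in zip(opacities, novelties):
        # A blob adds to the score only if the ray reaches it,
        # it is probably solid, and it has not been seen before.
        if is_new:
            gain += transmittance * alpha
        # Whatever this blob blocks cannot reach the blobs behind it.
        transmittance *= 1.0 - alpha
    return gain

# Two half-solid new blobs: the second is only half as likely to be reached.
two_new = ray_coverage_gain([0.5, 0.5], [True, True])

# An already-seen solid wall hides the new blob behind it completely.
hidden = ray_coverage_gain([1.0, 0.9], [False, True])
```

Summing this value over all rays (pixels) of a simulated camera gives a score for that viewpoint under these assumptions.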

3) Long-term planning with beam search (keeping the best few options)

- Instead of picking only the very next view, MAGICIAN explores many possible short future routes (like branches of a tree).

- At each step, it keeps only the top few most promising routes (the “beams”)—the ones that are predicted to reveal the most total new surface over the next several moves.

- Each route carries its own copy of the imagined world, updating blobs’ novelty from “new” to “seen” as if the robot had actually gone there. That way, the plan doesn’t double-count places already covered by that route.

- After choosing the best route, the robot executes just the first move, captures real images, updates its imagined blobs (making guesses more accurate), and replans. This is a tight loop: see, imagine, plan, move.
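The planning steps above can be sketched as a small beam search. This is a toy version under stated assumptions: the world is a hand-made graph, "coverage gain" is just the number of newly seen cells, and the names `beam_search`, `NEIGHBORS`, and `VISIBLE` are hypothetical. The real system scores views by rendering Imagined Gaussians instead.

```python
def beam_search(start, neighbors, visible, beam_width=2, horizon=2):
    """Return (total_gain, route) for the best route found.

    neighbors(p) lists the positions reachable from p in one move.
    visible(p) returns the set of surface cells seen from p.
    """
    # Each beam carries its own private copy of what has been "seen" so far.
    beams = [(0, [start], frozenset(visible(start)))]
    for _ in range(horizon):
        candidates = []
        for total, route, seen in beams:
            for nxt in neighbors(route[-1]):
                # Only cells this route has not covered yet count as gain.
                new_cells = frozenset(visible(nxt)) - seen
                candidates.append(
                    (total + len(new_cells), route + [nxt], seen | new_cells)
                )
        if not candidates:
            break  # every beam hit a dead end
        # Keep only the most promising partial routes.
        candidates.sort(key=lambda c: c[0], reverse=True)
        beams = candidates[:beam_width]
    best_total, best_route, _ = max(beams, key=lambda b: b[0])
    return best_total, best_route

# Hypothetical toy world: B looks better at first, but C leads on to E.
NEIGHBORS = {"A": ["B", "C"], "B": ["D"], "C": ["E"], "D": [], "E": []}
VISIBLE = {"A": {1}, "B": {2, 3}, "C": {4}, "D": {3}, "E": {5, 6, 7}}

greedy = beam_search("A", NEIGHBORS.__getitem__, VISIBLE.__getitem__, beam_width=1)
planned = beam_search("A", NEIGHBORS.__getitem__, VISIBLE.__getitem__, beam_width=2)
```

With a single beam the search behaves like a greedy planner and ends on the route through B, where D adds nothing new. With two beams it keeps the route through C alive long enough to discover E. In a closed loop, only the first move of the chosen route (`planned[1][1]`) would be executed before planning again.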

A small but important detail: this blob-based simulation is fast. The paper reports about a 25× speed-up for scoring a single viewpoint compared to an older approach, which is what makes long-term planning practical.

What they found and why it matters

Across both indoor and outdoor tests, MAGICIAN covered more of the scene and did it more efficiently than previous methods.

Highlights:

- On a challenging outdoor/indoor benchmark (Macarons++), MAGICIAN increased final surface coverage from about 82% to about 92%, and improved mapping speed (AUC) as well.

- On the popular indoor MP3D set, it beat strong baselines for both a wheeled robot and a drone, achieving higher completeness and lower error.

- Using only 100 images gathered by each method’s exploration route, MAGICIAN’s routes led to better 3D reconstructions and nicer novel-view renderings (sharper, fewer holes), showing that better planning really does produce better 3D models.
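The AUC number mentioned in the highlights rewards covering the scene early, not just eventually. A minimal sketch of how such a score could be computed from a coverage-over-time curve follows. The trapezoid rule and the normalization by the number of steps are assumptions made here, and the two curves are made-up numbers, not results from the paper.

```python
def coverage_auc(coverage):
    """Trapezoidal area under a coverage-vs-step curve, scaled to [0, 1].

    coverage: fraction of surface covered after each step, e.g. [0.0, 0.4, 0.7].
    """
    if len(coverage) < 2:
        raise ValueError("need at least two coverage samples")
    # Average each pair of neighbouring samples (unit step width).
    area = sum((a + b) / 2.0 for a, b in zip(coverage, coverage[1:]))
    # Divide by the number of steps so a perfect run scores 1.0.
    return area / (len(coverage) - 1)

# Both hypothetical runs end at 90% coverage, but one gets there sooner.
fast_run = coverage_auc([0.0, 0.6, 0.8, 0.9])
slow_run = coverage_auc([0.0, 0.2, 0.5, 0.9])
```

Here `fast_run` is larger than `slow_run` even though the final coverage is identical, which is why the summary reports both final coverage and AUC.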

Ablation (what makes it tick):

- Planning farther ahead and considering more candidate routes improves results.

- Even if MAGICIAN is forced to be “greedy” (only 1 step look-ahead), its fast “Imagined Gaussians” scoring still beats older greedy methods.

- Replanning often helps, but even replanning every few steps still works well.

- The system did not need heavy fine-tuning on new indoor data to work well, showing good generalization.

Why this is useful (impact and future directions)

Robots that can map quickly and thoroughly are valuable in many places:

- Search and rescue (finding safe paths and understanding damaged buildings)

- Inspection (bridges, factories, heritage sites)

- AR/VR content capture and filmmaking

- Delivery and warehouse robots

The main advance is that MAGICIAN makes “thinking ahead” practical by imagining the world in a simple, fast format (soft 3D blobs) and using that to plan routes that reveal the most new surface. That means less wandering, fewer dead ends, and better maps sooner.

Looking forward, the same idea—imagine, simulate, plan—could work with only regular RGB images (no depth), and could include “semantic” understanding (knowing what objects are), so robots could not only map faster but also seek out meaningful things (exits, machines, artifacts) on purpose.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, concrete list of unresolved issues that future work could address:

- Assumed perfect state estimation and depth sensing: the method presumes known, noise-free poses and accurate depth for novelty updates; how to jointly plan under pose uncertainty, sensor noise, calibration errors, or RGB-only sensing remains open.

- Static-scene assumption: no mechanism for handling dynamic elements (moving objects/people) or time-varying occupancy; how to maintain and plan with a temporally consistent, dynamic world model is unexplored.

- Dependence on a specific occupancy network: sensitivity to the quality, calibration, and biases of the chosen occupancy model (pre-trained on ShapeNet and fine-tuned on select scenes) is not characterized; robustness to out-of-distribution environments and alternative occupancy predictors needs evaluation.

- Lack of online model adaptation: the occupancy network is re-queried but not clearly adapted online to new observations; how to perform continual/online learning or Bayesian updating of occupancy to correct priors is not specified.

- Imagined Gaussians design choices: isotropic Gaussians with radius set to half of the nearest-neighbor distance are used by default; the impact on thin structures, anisotropic surfaces, and fine geometry is unquantified; exploring anisotropic covariances or adaptive radii is an open direction.

- Sampling strategy for Gaussians: proxy points are “randomly sampled with higher density inside the exploration bounding box,” but the number, distribution, and adaptivity over time are not analyzed; how sampling density/placement trades off coverage accuracy vs computation remains unclear.

- Novelty modeling is binary and per-Gaussian center: view quality (e.g., angle of incidence, depth accuracy, pixel footprint), partial observations, and multi-view fusion are not modeled; designing continuous, quality-weighted novelty and avoiding double-counting or premature novelty depletion is open.

- Occlusion/transmittance calibration: using occupancy probabilities as opacities to approximate transmittance introduces modeling mismatch; how to calibrate opacity/transmittance so rendered novelty aligns with true occlusion is unstudied.

- Objective ignores travel/time/energy costs: the planner maximizes accumulated coverage without explicit motion cost terms; balancing coverage gain with path length, time, energy, and actuation limits (multi-objective optimization) is not addressed.

- Scalability of beam search: each beam maintains an independent Gaussian state, which increases memory and compute with beam width and depth; scaling to larger, continuous action spaces or longer horizons, and comparing with alternatives like MCTS or heuristic DP, is not explored.

- Real-time feasibility on embedded platforms: while per-view gain is fast, the total planning loop cost (rendering per candidate × branching factor × horizon × beams) and memory footprint are not reported; profiling on resource-constrained hardware is missing.

- Collision safety under uncertainty: collision avoidance relies on predicted occupancy without explicit uncertainty margins or risk-aware constraints; methods to ensure safety guarantees or robust planning under probabilistic occupancy are not provided.

- Handling sensing imperfections: the novelty update relies on a depth tolerance ε_d, but sensitivity to ε_d, depth noise/outliers, missing depth, and reflective/translucent surfaces is not analyzed.

- Integration with SLAM/localization: the framework assumes known poses; how to couple with SLAM (pose graph, loop closure) and plan while accounting for map and pose uncertainty (active SLAM) is open.

- Large-scale environments and memory: the number of Gaussians and per-beam state duplication may not scale to kilometer-scale outdoor scenes; out-of-core/streaming representations, hierarchical tiling, or culling strategies are not considered.

- Multi-agent extension: coordinating multiple agents with shared or partially shared Imagined Gaussians and distributed planning is not explored.

- Task-driven semantics: current objective is purely geometric coverage; incorporating semantics, task goals, or category-specific priors to guide exploration remains future work (noted by the authors).

- Reconstruction-quality-aware planning: coverage is used as a proxy for downstream reconstruction, but the utility does not directly reflect mesh/texture fidelity; integrating reconstruction uncertainty or differentiable reconstruction feedback into the planning objective is an open problem.

- Robustness to prior hallucinations: occupancy priors might hallucinate non-existent structures or miss thin obstacles, potentially causing inefficient or unsafe plans; detecting and correcting such prior errors during exploration is unaddressed.

- Hyperparameter sensitivity: key parameters (beam width N_b, horizon N_d, replanning frequency N_f, number of Gaussians, sampling density, ε_d) lack systematic sensitivity analysis beyond limited ablations; guidelines for tuning across environments are missing.

- Termination and stopping criteria: the method runs for a fixed budget; designing data-driven stopping rules when marginal coverage gain saturates is unexplored.

- Evaluation limitations: results are on simulated/scan-based benchmarks with ground-truth meshes; real-robot trials, robustness to real-world sensing imperfections, and comparisons under identical on-board compute constraints are absent.

- Theoretical properties: there are no optimality or approximation guarantees for the beam search or for the rendered-coverage surrogate; analyzing submodularity/monotonicity of the gain and providing bounds remains an open theoretical question.

- Generalization of the framework: although claimed to be occupancy-model agnostic, the approach is only demonstrated with one architecture; benchmarking with different occupancy predictors and 3D foundation models, including RGB-only pipelines, is pending.
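The scalability and real-time items above come down to how many candidate views must be scored per planning round. The rough count below assumes one coverage-gain render per candidate view. The function names and the example numbers (10 actions, 3 beams, 5 steps) are hypothetical and not taken from the paper.

```python
def renders_per_plan(branching, beam_width, horizon):
    """Count candidate views scored in one beam-search planning round."""
    active = 1  # planning starts from the single current pose
    total = 0
    for _ in range(horizon):
        candidates = active * branching
        total += candidates
        # After pruning, at most beam_width routes are expanded further.
        active = min(beam_width, candidates)
    return total

def renders_exhaustive(branching, horizon):
    """Count candidate views if every possible route were expanded."""
    return sum(branching ** depth for depth in range(1, horizon + 1))

beam_cost = renders_per_plan(branching=10, beam_width=3, horizon=5)
full_cost = renders_exhaustive(branching=10, horizon=5)
```

Under these assumptions the beam search scores 130 views where an exhaustive tree would need 111110, which shows why pruning matters. Memory still grows with beam width, since each beam keeps its own copy of the novelty state.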

Practical Applications

Immediate Applications

Below are deployable use cases that leverage MAGICIAN’s long-term planning and “Imagined Gaussians” for fast coverage-gain computation. Each item notes sector(s), potential product/workflow concepts, and key dependencies/assumptions.

- Autonomous infrastructure and utility inspection

- Sectors: energy, utilities, civil infrastructure, telecom

- What: Coverage-optimized drone/UGV routes to rapidly scan bridges, substations, power plants, wind turbines, solar farms, or telecom towers for full-surface inspection and 3D reconstruction with minimal battery/time.

- Tools/workflows: “Coverage-Optimized Flight Planner” integrated into ground-control stations (e.g., QGroundControl), ROS 2 module that exposes a coverage-gain service, post-flight pipeline for 3D Gaussian Splatting and mesh extraction (e.g., MILo).

- Assumptions/dependencies: RGB-D (or depth-from-stereo/LiDAR) and pose estimates available (via SLAM or RTK-GNSS), static or slow-changing scene, regulatory approval for drone operations (especially BVLOS), onboard or edge GPU for fast volumetric rendering.

- Construction progress capture and as-built digital twins

- Sectors: AEC (architecture, engineering, construction), proptech

- What: Weekly site scans planned to maximize new-surface coverage, reducing wasted vantage points and accelerating as-built model updates for BIM coordination and QA/QC.

- Tools/workflows: “Digital Twin Update Planner” that ingests previous scans and coverage maps to plan next missions; BIM integration to overlay coverage heatmaps; automated ROI re-targeting.

- Assumptions/dependencies: Frequent layout changes require frequent replanning; site safety protocols for robots/drones; reliable localization (indoor SLAM) in GPS-denied areas.

- Facility management and indoor mapping for operations

- Sectors: facilities, commercial real estate, logistics

- What: Service robots perform high-coverage scans to maintain up-to-date indoor maps for maintenance planning, navigation, and asset tracking.

- Tools/workflows: ROS 2 “Active Mapping Planner” plug-in that replaces greedy NBV; facility dashboard tracking AUC/final coverage across floors.

- Assumptions/dependencies: Indoor localization, RGB-D or LiDAR sensors, building access schedules, mostly static environments during scan windows.

- Warehouse and retail inventory environment capture

- Sectors: logistics, retail

- What: Ground robots plan long-horizon routes to efficiently capture shelf exteriors and aisle geometry for digital layout verification and scan-to-SKU workflows.

- Tools/workflows: Integration with WMS to prioritize high-novelty zones; coverage KPI dashboards.

- Assumptions/dependencies: Narrow aisles and dynamic human traffic require collision-aware planning and frequent replanning; availability of depth/pose.

- Cultural heritage and museum scanning

- Sectors: cultural preservation, tourism, media

- What: Time-constrained high-fidelity scans of complex artefacts and interiors (vaults, columns, sculptures) with minimized holes in reconstructed surfaces.

- Tools/workflows: “Curator Scan Scheduler” to plan short access windows; mesh-ready outputs via MILo.

- Assumptions/dependencies: Limited access times, fragile environments; accurate indoor localization; consistent lighting is beneficial.

- Search-and-rescue (rapid situational mapping)

- Sectors: public safety, defense

- What: Quickly generate high-coverage maps of unfamiliar structures to guide responders; prioritize novel regions to avoid redundant exploration.

- Tools/workflows: “Coverage Navigator” that produces waypoints for responders’ drones/UGVs; on-the-fly replan as areas become accessible.

- Assumptions/dependencies: Dynamic environments and hazards; robust localization in smoke/dust; partial observability; conservative collision margins.

- VFX/VR/AR scene capture and NeRF/3DGS dataset collection

- Sectors: media, gaming, metaverse

- What: Autopilot camera paths that prioritize coverage for downstream NeRF/3DGS training, improving completeness and reducing capture time.

- Tools/workflows: “NeRF Capture Autopilot” that exports routes to handheld gimbals/drones; automatic novelty-aware re-shoot suggestions.

- Assumptions/dependencies: Stable lighting preferred; accurate pose estimation; depth available (or good stereo).

- Academic research and teaching

- Sectors: academia, R&D

- What: A reproducible baseline for long-horizon active mapping; benchmarking and curriculum modules comparing greedy NBV vs. beam-search planning with Imagined Gaussians.

- Tools/workflows: Habitat/Matterport integration; open-source ROS 2 node; standardized AUC/final-coverage metrics for courses and labs.

- Assumptions/dependencies: GPU resources for batched volumetric rendering; pre-trained occupancy models (e.g., ShapeNet + scene fine-tuning, if needed).

Long-Term Applications

These use cases are promising but require further research, scaling, or integration with emerging models and systems.

- Multi-robot coordinated exploration with shared Imagined Gaussians

- Sectors: robotics, logistics, public safety, energy

- What: Teams of robots collaboratively maximize coverage while minimizing overlap; cloud map server merges occupancy/novelty and assigns sub-goals.

- Tools/workflows: “Imagined Gaussians Map Server” for synchronized novelty fields; fleet-level beam search with communication-aware constraints.

- Assumptions/dependencies: Robust map/pose fusion, low-latency comms, conflict resolution, scalable memory for large Gaussian sets.

- RGB-only exploration via 3D foundation models

- Sectors: software, consumer devices, robotics

- What: Replace depth sensors with RGB-only geometry proxies (e.g., DUSt3R/VGGT) to plan coverage on smartphones, wearables, or lightweight robots.

- Tools/workflows: “Depthless Active Mapping” mode using foundation models to produce pseudo-depth/occupancy for Imagined Gaussians.

- Assumptions/dependencies: Maturity of RGB-only geometry under varied lighting/texture; drift control; computational budgets on edge devices.

- Semantic- and goal-aware exploration

- Sectors: healthcare, facilities, manufacturing, public safety

- What: Augment coverage objective with semantics to prioritize critical assets (e.g., exits, valves, equipment), or task-specific regions (sterile zones in hospitals).

- Tools/workflows: Joint novelty–semantic gain; “Targeted Exploration Planner” that balances coverage with task utility.

- Assumptions/dependencies: Reliable real-time semantic perception; policy-compliant handling of sensitive areas; dataset/domain generalization.

- Dynamic and human-populated environments

- Sectors: retail, airports, hospitals, smart buildings

- What: Robust long-term planning where novelty/occlusion changes due to moving people/objects; suppress redundant revisits and maintain safety margins.

- Tools/workflows: Time-decayed novelty fields; predictive occlusion models; human-aware motion constraints.

- Assumptions/dependencies: Tracking and prediction of dynamic agents; safe interaction policies; continual SLAM in dynamic scenes.

- Planetary, subterranean, and underwater exploration

- Sectors: space, mining, oceanography

- What: High-coverage mapping in GPS-denied, extreme environments (lava tubes, caves, subsea structures) with strict energy and comms constraints.

- Tools/workflows: Onboard beam search with tight power budgets; delayed or intermittent map sharing; ruggedized occupancy estimation.

- Assumptions/dependencies: Extreme localization challenges, limited sensing, radiation/pressure effects, high autonomy requirements.

- Insurance and finance: rapid post-disaster assessment

- Sectors: insurance, reinsurance, public sector

- What: Efficient, coverage-maximizing scans to assess structural damage quickly for claims and risk modeling.

- Tools/workflows: “Coverage Budget Optimizer” that adapts to limited flight windows; standardized AUC/coverage reporting for audit.

- Assumptions/dependencies: Changing, hazardous environments; flight restrictions; data governance and privacy.

- Utility-scale asset networks (wind/solar farms, transmission corridors)

- Sectors: energy, utilities

- What: Fleet-level planning to update digital twins across large, distributed assets; focus on under-covered or recently modified components.

- Tools/workflows: Coverage dashboards; rolling novelty maps to schedule maintenance scans; integration with CMMS/EAM systems.

- Assumptions/dependencies: BVLOS regulatory approvals; long-range comms; large-scale map storage and synchronization.

- Consumer home robotics and AR mapping

- Sectors: consumer electronics, smart home

- What: Home robots or AR devices use coverage-aware exploration to maintain accurate apartment/house maps for navigation and AR placement.

- Tools/workflows: “Active Mapping Module” on embedded GPUs; incremental novelty decay to manage regular re-scans.

- Assumptions/dependencies: Privacy controls; compact compute; robust visual-inertial odometry and loop closure.

- Standardized procurement and policy guidelines for public digital twins

- Sectors: government, urban planning

- What: Adoption of coverage/AUC metrics to evaluate vendor scan plans and digital twin maintenance; policy templates for efficient, safe data capture.

- Tools/workflows: Public RFP checklists including coverage efficiency and replanning frequency; compliance dashboards.

- Assumptions/dependencies: Consensus on metrics; data standards; alignment with privacy and accessibility regulations.

Cross-Cutting Dependencies and Assumptions

- Sensing and localization: Requires depth (RGB-D, LiDAR, or reliable depth-from-RGB) and reasonably accurate poses (SLAM/odometry/RTK). RGB-only variants depend on robust 3D foundation models.

- Compute: Real-time or near-real-time GPU rendering for Imagined Gaussians (onboard or edge). Memory scales with Gaussian count; tiling or streaming may be needed for very large scenes.

- Scene characteristics: Best performance in mostly static environments; dynamic scenes need additional modeling and frequent replanning.

- Safety and compliance: Collision-aware planning, geofencing, and regulatory approvals for drones; privacy-preserving capture in public/indoor spaces.

- Model generalization: Occupancy network is pre-trained (e.g., on ShapeNet) and may need fine-tuning for unusual geometries or sensor domains; domain shift can affect novelty/occupancy predictions.

- System integration: Requires low-level motion controllers, fail-safes, and possibly traditional global planners for long-distance transits; tight loops for perception–planning–action with configurable beam width and horizon.

Glossary

- 3D foundation models: Large pre-trained models that provide general-purpose 3D geometry priors usable across tasks. "3D foundation models~\cite{wang2024dust3r, wang2025vggt}"

- 3D Gaussian Splatting (3D GS): A rendering and scene representation technique that uses collections of 3D Gaussians for efficient radiance-field-like rendering. "3D Gaussian Splatting (3D GS)~\cite{kerbl3Dgaussians, guedon2024sugar, 2dgs}"

- 6 DoF: Six degrees of freedom describing free 3D motion (three translations and three rotations). "6 DoF"

- A*: The A* graph search algorithm for shortest-path planning using heuristics. "A*~\cite{astar}"

- Active mapping: The problem of deciding how an agent should move to efficiently reconstruct an unknown environment. "Active mapping aims to determine how an agent should move to efficiently reconstruct an unknown environment."

- Area Under the Curve (AUC): A scalar evaluation metric computed as the area under the curve of a quantity over time; here, coverage over time to measure efficiency. "AUC, which evaluates the efficiency of the reconstruction process as the area under the curve of coverage over time."

- Beam search: A heuristic search that expands a fixed number of top-scoring partial solutions at each step to plan sequences. "apply beam search to plan candidate trajectories"

- Combinatorial explosion: The rapid growth in the number of possible solutions (e.g., trajectories) that makes exhaustive search infeasible. "combinatorial explosion of possible trajectories"

- Depth tolerance: A small allowable difference between rendered and measured depths used for visibility/observation checks. "within a depth tolerance ε_d."

- Differentiable Gaussian rasterizer: A renderer that rasterizes Gaussians with gradients, enabling depth and image generation while supporting optimization. "we use the differentiable Gaussian rasterizer from RaDe-GS~\cite{zhang2024rade}"

- Fisher information: A measure of how much information an observable random variable carries about an unknown parameter; used for view scoring. "the Fisher information~\cite{jiang2024fisherrf}"

- Frontier-based exploration: A strategy that directs exploration to boundaries between known free space and unknown space. "relies heavily on frontier-based exploration"

- Imagined Gaussians: A volumetric scene representation consisting of Gaussians whose opacities are predicted from an occupancy model to estimate future coverage. "We propose Imagined Gaussians derived from a neural occupancy field"

- Isotropic Gaussians: Gaussians with identical variance in all directions, used here as simple 3D primitives. "We use isotropic Gaussians with radius equal to half the distance to the nearest neighbor."

- Mesh-In-the-Loop Gaussian Splatting (MILo): A reconstruction approach that integrates mesh supervision into Gaussian Splatting optimization. "Mesh-In-the-Loop Gaussian Splatting~\cite{guedon2025milo}"

- Monte Carlo integral: An integral approximated via random sampling; here, used (and avoided) for volumetric coverage estimation. "volumetric Monte Carlo integral"

- Monte Carlo sampling: Random sampling used to approximate integrals or expectations; computationally heavy for dense 3D queries. "Monte Carlo sampling requires repeatedly querying"

- NeRF: Neural Radiance Fields, a neural volumetric rendering method modeling view-dependent color and density. "NeRF~\cite{mildenhall2020nerf}"

- Next-best-view (NBV): A greedy strategy that selects the single next camera pose expected to maximize information or coverage. "next-best-view (NBV)~\cite{banta2000next, nbvp, 20next}"

- Novel view synthesis: Rendering images from viewpoints not seen during training or data capture. "novel view synthesis"

- Novelty indicator: A binary variable that flags whether a surface point has been previously observed. "referred to as the novelty indicator"

- Occupancy field: A function mapping 3D points to probabilities of being occupied. "predicts the probabilistic occupancy field"

- Occupancy network: A neural model that predicts whether 3D locations are occupied, providing geometry priors for planning. "pre-trained occupancy network"

- Opacity (Gaussian opacities): The per-Gaussian parameter determining how much a primitive contributes to density/visibility in rendering. "Gaussian opacities encode occupancy probabilities"

- Planning horizon: The number of future steps considered during planning. "the planning horizon steps"

- Rapidly-exploring Random Trees (RRT): A sampling-based motion planning algorithm that efficiently explores high-dimensional spaces. "RRT~\cite{rrt}"

- SE(3): The Lie group of 3D rigid-body transformations (rotations and translations). "\mathrm{SE}(3)"

- Submodular optimization: Optimization leveraging the diminishing-returns property to select informative subsets efficiently. "generated through submodular optimization"

- Transmittance: The accumulated probability that a ray has not encountered occluding matter up to a point, used in volumetric rendering. "Transmittance equals 1 in empty space"

- Tree search: A search method that explores branches of possible action sequences to find high-value plans. "performing a tree search of the possible future moves."

- View frustum: The pyramidal volume defined by a camera’s field of view within which objects are visible. "the camera view frustum "

- Volumetric rendering: Rendering that integrates density and color along rays through a volume to produce images or other maps. "fast volumetric rendering"

- Volumetric rendering equation: The integral equation governing how emitted color and transmittance accumulate along a ray. "the volumetric rendering equation~\cite{mildenhall2020nerf}"

- World model: An internal predictive model of the environment used to plan actions in unobserved regions. "serving as a world model for planning"
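The "Isotropic Gaussians" entry above quotes a concrete rule: each blob's radius is half the distance to its nearest neighbor. A minimal sketch of that rule is shown here. It uses a brute-force search for clarity, and the function name `isotropic_radii` is an assumption for illustration. A real system with many points would more likely use a spatial index such as a k-d tree.

```python
import math

def isotropic_radii(points):
    """Radius for each point: half the distance to its nearest neighbor.

    points: list of (x, y, z) tuples; at least two points are required.
    """
    if len(points) < 2:
        raise ValueError("need at least two points to find a nearest neighbor")
    radii = []
    for i, p in enumerate(points):
        # Brute-force nearest neighbor: compare against every other point.
        nearest = min(math.dist(p, q) for j, q in enumerate(points) if j != i)
        radii.append(0.5 * nearest)
    return radii

# Two close points and one farther away: the isolated point gets a bigger blob.
radii = isotropic_radii([(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (2.0, 4.0, 0.0)])
```

With this rule, neighboring blobs roughly touch without overlapping much, so sparse regions get larger blobs and dense regions get smaller ones.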
